How to Add File Storage to Vercel AI SDK Applications

The Vercel AI SDK gives you React hooks and server utilities for building AI-powered applications, but file storage for agent outputs, uploads, and artifacts requires an external solution. This guide walks through practical patterns for adding persistent file storage to your AI SDK projects, from defining storage tools to connecting cloud backends for Next.js AI apps.

Why the Vercel AI SDK Needs External File Storage

The Vercel AI SDK is a TypeScript toolkit with over 15,000 GitHub stars. It gives you a unified API for building AI-powered applications with React and Next.js, handling text generation, streaming, and tool calling across multiple LLM providers. But it stays out of the file storage business entirely. That makes sense. The SDK focuses on the AI interaction layer, not infrastructure. The tradeoff is that developers building production apps hit a wall when their agents need to:

- Save generated artifacts like reports, images, or code files

- Accept user uploads and pass them to LLMs for analysis

- Store conversation attachments that persist beyond a single session

- Share agent outputs with end users through download links

Vercel's own platform limits make this worse. Serverless functions cap request bodies at 4.5MB, and Vercel Blob (their storage offering) works for simple cases but lacks collaboration and AI-native features. If your AI app generates a large PDF report or needs to store files that multiple agents access, you need a dedicated storage layer.


How the AI SDK Handles Files Today

Before adding external storage, it helps to understand what the SDK already provides. The Vercel AI SDK supports file handling in two main areas.

Attachments in Chat

The useChat hook's handleSubmit function accepts an experimental_attachments option that lets users send files alongside a message. The SDK converts the files to data URLs and passes them to the AI provider:

```typescript
import { useChat } from 'ai/react';

const { handleSubmit } = useChat();

// Files in the FileList are converted to data URLs automatically
handleSubmit(event, {
  experimental_attachments: fileList,
});
```

This works for sending files to the LLM, but the files live only in the message history. Nothing is stored persistently, and there is no built-in way for the agent to write files back.

PDF and Image Support

The AI SDK supports passing PDFs and images as message content. You can include a PDF as a file content type, and providers like Anthropic and Google will process it directly. But this only covers the input side. The output side, where the agent creates or modifies files, is left to you.
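A minimal sketch of the input side, assuming AI SDK 4.x. In that version a PDF is passed as a message content part shaped like { type: 'file', data, mimeType }. Newer major versions may name these fields differently, so check the version you use. The local types and the looksLikePdf and buildPdfQuestion helpers are hypothetical illustrations, not SDK exports. The magic-byte check rejects obviously wrong files before they reach the provider.

```typescript
// Local stand-ins for the AI SDK 4.x message part types (assumption: the SDK's
// own types are structurally compatible with these).
type TextPart = { type: 'text'; text: string };
type FilePart = { type: 'file'; data: Uint8Array; mimeType: string };
type UserMessage = { role: 'user'; content: Array<TextPart | FilePart> };

// Hypothetical helper: PDF files start with the ASCII bytes "%PDF-".
function looksLikePdf(bytes: Uint8Array): boolean {
  const magic = [0x25, 0x50, 0x44, 0x46, 0x2d];
  return bytes.length >= magic.length && magic.every((b, i) => bytes[i] === b);
}

// Hypothetical helper: pair a question with a PDF attachment in one user message.
function buildPdfQuestion(pdfBytes: Uint8Array, question: string): UserMessage {
  if (!looksLikePdf(pdfBytes)) {
    throw new Error('Input does not look like a PDF');
  }
  return {
    role: 'user',
    content: [
      { type: 'text', text: question },
      { type: 'file', data: pdfBytes, mimeType: 'application/pdf' },
    ],
  };
}
```

With the SDK installed, the returned message can go straight into a call such as generateText({ model, messages: [buildPdfQuestion(bytes, 'Summarize this report')] }).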

The Missing Piece

What the SDK does not provide:

- Persistent storage for agent-generated files

- Download URLs for sharing outputs with users

- File versioning or access control

- Search across stored documents

- Collaboration between multiple agents or users on shared files

You need a cloud storage backend to fill these gaps.

Adding Storage Tools to Your AI SDK Agent

The AI SDK's tool system is the right integration point for file storage. You define tools with a description, input schema, and execute function. The LLM decides when to call them based on the conversation. Here is a working example using Fastio's API as the storage backend. This tool lets an agent save its output to cloud storage and returns the stored file's ID:

```typescript
import { tool } from 'ai';
import { z } from 'zod';

const saveToStorage = tool({
  description: 'Save a file to cloud storage and return its file ID',
  parameters: z.object({
    filename: z.string().describe('Name for the file'),
    content: z.string().describe('File content to save'),
    folder: z.string().optional().describe('Target folder'),
  }),
  execute: async ({ filename, content, folder }) => {
    // Upload the text content via the Fastio API
    const response = await fetch('https://api.fast.io/upload/text-file', {
      method: 'POST',
      headers: {
        Authorization: `Bearer ${process.env.FASTIO_API_KEY}`,
        'Content-Type': 'application/json',
      },
      body: JSON.stringify({
        filename,
        content,
        parent_node_id: folder || 'root',
        profile_id: process.env.FASTIO_WORKSPACE_ID,
        profile_type: 'workspace',
      }),
    });

    // Report HTTP failures to the model instead of claiming success
    if (!response.ok) {
      return {
        success: false,
        error: `Upload failed with HTTP ${response.status}`,
      };
    }

    const result = await response.json();
    return {
      success: true,
      fileId: result.node_id,
      message: `File "${filename}" saved to storage`,
    };
  },
});
```

Register this alongside your other tools when calling generateText or streamText:

```typescript
import { generateText } from 'ai';
import { openai } from '@ai-sdk/openai';

const result = await generateText({
  model: openai('gpt-4o'),
  tools: { saveToStorage },
  prompt: 'Generate a quarterly report and save it to storage',
  // Allow several model/tool round trips: write the report, then call the tool
  maxSteps: 5,
});
```

The agent generates the report content, then calls saveToStorage to persist it. The file lands in your Fastio workspace where you can share it, search it, or feed it into a RAG pipeline.

Building a File Upload Flow for AI Chatbots

Most AI chatbots need to accept file uploads from users, not just generate outputs. Here is a working pattern for handling file uploads in a Next.js app built with the Vercel AI SDK.

Frontend: Accepting Files

Create a file input that works with the useChat hook:

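```tsx
'use client';

import { useChat } from 'ai/react';
import { useRef, type FormEvent } from 'react';

export default function Chat() {
  const { messages, handleSubmit, input, handleInputChange } = useChat();
  const fileInputRef = useRef<HTMLInputElement>(null);

  const onSubmit = (e: FormEvent<HTMLFormElement>) => {
    const files = fileInputRef.current?.files;
    handleSubmit(e, {
      // FileList from the input; the SDK converts it to data URLs
      experimental_attachments: files || undefined,
    });
    // Clear the picker so the same files are not re-sent with the next message
    if (fileInputRef.current) fileInputRef.current.value = '';
  };

  return (
    <form onSubmit={onSubmit}>
      {messages.map((m) => (
        <div key={m.id}>
          {m.role}: {m.content}
        </div>
      ))}
      <input type="file" ref={fileInputRef} multiple accept=".pdf,.txt,.csv,.json" />
      <input value={input} onChange={handleInputChange} />
      <button type="submit">Send</button>
    </form>
  );
}
```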

Backend: Storing Uploaded Files

The SDK converts attachments to data URLs. On your API route, extract and store them:

```typescript
// app/api/chat/route.ts
import { streamText } from 'ai';
import { anthropic } from '@ai-sdk/anthropic';

export async function POST(req: Request) {
  const { messages } = await req.json();

  // Extract file attachments from the latest message
  const lastMessage = messages[messages.length - 1];
  const attachments = lastMessage?.experimental_attachments ?? [];

  for (const attachment of attachments) {
    // uploadToStorage is your own helper; attachment.url holds a data URL
    await uploadToStorage(attachment.name, attachment.url);
  }

  return streamText({
    model: anthropic('claude-sonnet-4-5-20250929'),
    messages,
  }).toDataStreamResponse();
}
```

Now you have persistent copies of every file users send to your chatbot. The files stick around for retrieval, search, and analysis long after the chat session ends.
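The route above calls an uploadToStorage helper that the guide leaves to you. Whatever backend you use, the first step is usually turning the data URL back into bytes and a MIME type. A minimal sketch of that decoding step follows. parseDataUrl is a hypothetical name, and it follows the data URL format (an optional ";base64" flag, with "text/plain" as the default type).

```typescript
// Hypothetical helper: decode a data URL such as "data:text/plain;base64,SGVsbG8="
// into its MIME type and raw bytes.
type DecodedFile = { mimeType: string; bytes: Uint8Array };

function parseDataUrl(dataUrl: string): DecodedFile {
  if (!dataUrl.startsWith('data:')) {
    throw new Error('Not a data URL');
  }
  const comma = dataUrl.indexOf(',');
  if (comma === -1) {
    throw new Error('Malformed data URL: missing comma');
  }
  // Header looks like "application/pdf;base64" or may be empty
  const header = dataUrl.slice('data:'.length, comma).split(';');
  const mimeType = header[0] || 'text/plain';
  const isBase64 = header.slice(1).includes('base64');
  const payload = dataUrl.slice(comma + 1);

  const bytes = isBase64
    ? Uint8Array.from(atob(payload), (c) => c.charCodeAt(0)) // base64 -> bytes
    : new TextEncoder().encode(decodeURIComponent(payload)); // percent-encoded text
  return { mimeType, bytes };
}
```

Inside uploadToStorage you would decode first, then send the bytes and MIME type to whatever upload endpoint your storage provider documents.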

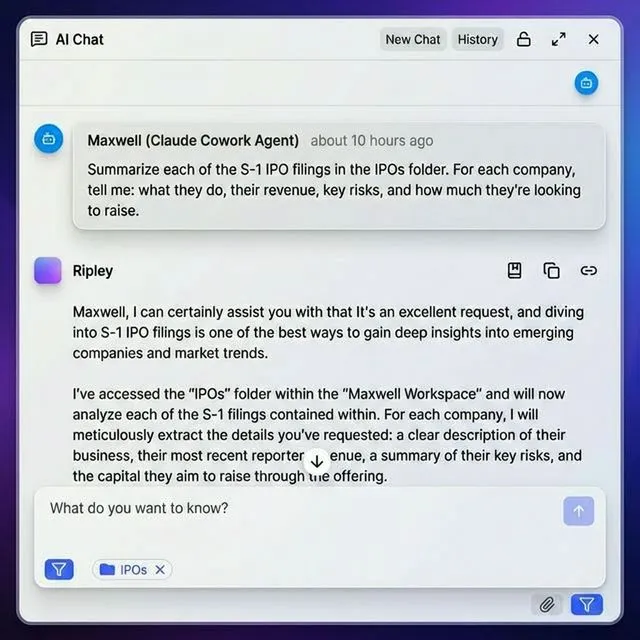

Give Your AI Agents Persistent Storage

Fastio gives teams shared workspaces, MCP tools, and searchable file context for running Vercel AI SDK file storage workflows with reliable handoffs between agents and humans.

Connecting Fastio as Your Storage Backend

Fastio was built for AI agents, which makes it a good fit as a storage backend for Vercel AI SDK applications. The free agent tier includes 50GB of storage, 5,000 monthly credits, and 19 consolidated tools. No credit card required.

Quick Setup

- Create an agent account. Sign up at fast.io with agent=true to get the free agent plan. You get API access right away.

- Create a workspace. Each workspace is an isolated storage container. Create one per project or per user of your app.

- Generate an API key. Use the API key as a Bearer token in your Vercel AI SDK tools. Unlike a typical short-lived JWT, it does not expire unless you revoke it.

- Define your storage tools. Register tools for uploading, downloading, listing, and searching files. The agent calls these during conversations as needed.
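Every storage tool in this guide reads the same two environment variables, FASTIO_API_KEY and FASTIO_WORKSPACE_ID. A small sketch that loads them once and fails fast with a clear message keeps that setup in one place. loadStorageConfig is a hypothetical helper, and the header shape simply mirrors the saveToStorage tool.

```typescript
// Hypothetical helper: gather the settings the storage tools need and build
// the Bearer auth headers once, instead of repeating them in every tool.
type StorageConfig = {
  workspaceId: string;
  headers: Record<string, string>;
};

function loadStorageConfig(env: Record<string, string | undefined>): StorageConfig {
  const apiKey = env.FASTIO_API_KEY;
  const workspaceId = env.FASTIO_WORKSPACE_ID;

  // List every missing variable so one deploy error reveals all of them
  const missing = [
    apiKey ? null : 'FASTIO_API_KEY',
    workspaceId ? null : 'FASTIO_WORKSPACE_ID',
  ].filter((name): name is string => name !== null);
  if (missing.length > 0) {
    throw new Error(`Missing environment variables: ${missing.join(', ')}`);
  }

  return {
    workspaceId: workspaceId as string,
    headers: {
      // The API key is sent as a Bearer token, as in the saveToStorage tool
      Authorization: `Bearer ${apiKey}`,
      'Content-Type': 'application/json',
    },
  };
}
```

In a route or tool module you would call loadStorageConfig(process.env) once and reuse the result in each tool's fetch call.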

Why This Pairing Works

The Vercel AI SDK handles the AI interaction layer: streaming responses, tool calling, and multi-step agent loops. Fastio handles everything after the file exists:

- Persistent storage that survives deployments and session restarts

- Built-in RAG with Intelligence Mode. Toggle it on a workspace and your files get indexed automatically. Ask questions across documents and get cited answers, no vector database setup needed.

- Shareable links so your users can download agent-generated files

- Access control at the workspace, folder, and file level

- Webhooks that fire when files change, letting you build reactive workflows

A common pattern: the agent stores analysis results in a Fastio workspace with Intelligence Mode on. Later, the same agent (or a different one) queries those results using RAG to answer follow-up questions. The storage grows into a knowledge base instead of sitting as a static file dump.

Patterns for Multi-Agent File Access

Production AI applications often run multiple agents that need to share files. One agent generates research, another writes content, a third reviews and edits. The Vercel AI SDK supports multi-step tool loops and agent handoffs, but shared state between agents is up to you.

File Locking for Concurrent Access

When two agents might modify the same file simultaneously, use file locks to prevent conflicts:

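```typescript
import { tool } from 'ai';
import { z } from 'zod';

// `fastio` stands in for your storage client; check your provider's API
// for the actual lock and update calls.
const editFile = tool({
  description: 'Edit a file with lock protection',
  parameters: z.object({
    fileId: z.string(),
    newContent: z.string(),
  }),
  execute: async ({ fileId, newContent }) => {
    // Acquire the lock before editing; if this throws, there is nothing to release
    await fastio.lockAcquire(fileId);
    try {
      await fastio.updateFile(fileId, newContent);
      return { success: true };
    } finally {
      // Always release the lock, even if the update fails
      await fastio.lockRelease(fileId);
    }
  },
});
```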
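To make the acquire, try, finally, release pattern concrete without a real storage service, here is an in-process sketch. InMemoryFileLocks and withFileLock are hypothetical names. The lock only coordinates callers inside one process, so it illustrates the semantics of the editFile tool but does not replace a lock held by the storage service when agents run in separate serverless instances.

```typescript
// Illustration only: an in-process lock table keyed by file ID.
class InMemoryFileLocks {
  private held = new Set<string>();

  // Returns false instead of waiting if another caller holds the lock
  tryAcquire(fileId: string): boolean {
    if (this.held.has(fileId)) return false;
    this.held.add(fileId);
    return true;
  }

  release(fileId: string): void {
    this.held.delete(fileId);
  }

  isHeld(fileId: string): boolean {
    return this.held.has(fileId);
  }
}

// Run `work` while holding the lock; fail fast if the file is already locked.
async function withFileLock<T>(
  locks: InMemoryFileLocks,
  fileId: string,
  work: () => Promise<T>,
): Promise<T> {
  if (!locks.tryAcquire(fileId)) {
    throw new Error(`File ${fileId} is locked by another agent`);
  }
  try {
    return await work();
  } finally {
    // Released on success and on failure, mirroring the tool above
    locks.release(fileId);
  }
}
```

Failing fast (rather than waiting) lets the tool return a "file is busy" result that the LLM can relay or retry later.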

Shared Workspaces Between Agents

Give each agent access to a shared workspace. Agent A uploads research documents. Agent B reads them and writes summaries. Agent C packages everything into a client deliverable. Each agent gets its own API key with scoped permissions, but they all operate on the same workspace.

Ownership Transfer

Once the work is done, transfer the workspace to a human. The agent creates an org, builds out workspaces with organized files, then hands ownership to a client or team member. The agent keeps admin access for future updates. This works well for client deliverables, data rooms, or project archives that start with AI and end with human review.

Performance Tips for Next.js Deployments

Vercel's serverless architecture has specific constraints that affect how you handle files in AI SDK applications.

Respect the 4.5MB Body Limit

Serverless Functions cap request and response bodies at 4.5MB. For larger files, upload directly from the client to your storage backend using presigned URLs, then pass the file reference (not the file content) to your AI route.

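```typescript
// Client: upload the large file directly to storage.
// getPresignedUrl is your own backend route (hypothetical here) that returns a
// short-lived upload URL plus a stable key identifying the stored file.
const { uploadUrl, fileKey } = await getPresignedUrl(filename);
const upload = await fetch(uploadUrl, { method: 'PUT', body: file });
if (!upload.ok) throw new Error(`Upload failed with HTTP ${upload.status}`);

// Then send only the file reference to your AI route, not the signed URL
handleSubmit(e, {
  data: { fileRef: fileKey, filename },
});
```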
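Deciding when to switch to the direct-upload path needs one extra step of arithmetic. Attachments travel as base64 data URLs, and base64 turns every 3 bytes into 4 characters, so a 3.5MB file already exceeds a 4.5MB body once encoded. The sketch below assumes a conservative reading of the limit as 4,500,000 bytes plus a hypothetical 100KB allowance for the rest of the JSON payload. Both constants are illustrative.

```typescript
// Assumed budget: 4.5MB read conservatively, minus room for messages/metadata.
const BODY_LIMIT_BYTES = 4_500_000;
const JSON_HEADROOM_BYTES = 100_000;

// Base64 encodes each 3-byte group as 4 characters, padding the last group.
function base64Size(rawBytes: number): number {
  return 4 * Math.ceil(rawBytes / 3);
}

// True when the files, once inlined as data URLs, would not fit in one request.
function needsDirectUpload(fileSizes: number[]): boolean {
  const encoded = fileSizes.reduce((sum, size) => sum + base64Size(size), 0);
  return encoded + JSON_HEADROOM_BYTES > BODY_LIMIT_BYTES;
}
```

On the client, needsDirectUpload(Array.from(files, (f) => f.size)) tells you whether to send experimental_attachments as usual or use the direct-upload flow described above.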

Use Streaming for Long Operations

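The AI SDK's streamText function helps here. When an agent needs to process files and generate output, streaming sends partial results as they are produced, so users get real-time feedback instead of waiting on a silent request. Streaming does not lift Vercel's function duration limit, though: the function still stops at the maximum duration allowed by your plan and configuration, so check the current limits and configure a longer duration on routes that do heavy file work.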
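In the Next.js App Router, the maxDuration route segment config sets how many seconds a function may run, up to your plan's ceiling. The AI SDK's Next.js examples commonly export it from the chat route. The value of 30 below is illustrative, not a recommendation.

```typescript
// app/api/chat/route.ts
// Next.js route segment config: maximum run time for this route, in seconds.
// Must fit within the limit of your Vercel plan.
export const maxDuration = 30;
```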

Cache File Metadata

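If your agent frequently lists or searches files, cache the results. File listings rarely change mid-conversation, so a simple in-memory cache or a Vercel KV lookup avoids repeated API calls to your storage backend. An in-memory cache only lives as long as a warm function instance; a shared key-value store survives across instances.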
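A minimal sketch of the in-memory option, assuming a cached listing may be up to ttlMs stale. TtlCache is a hypothetical class, the loader passed to getOrLoad would be whatever function calls your storage API, and the injectable clock exists only to make expiry easy to test.

```typescript
type Entry<V> = { value: V; expiresAt: number };

// Small time-based cache; each serverless instance keeps its own copy.
class TtlCache<V> {
  private entries = new Map<string, Entry<V>>();
  private ttlMs: number;
  private now: () => number;

  constructor(ttlMs: number, now: () => number = Date.now) {
    this.ttlMs = ttlMs;
    this.now = now;
  }

  get(key: string): V | undefined {
    const entry = this.entries.get(key);
    if (!entry) return undefined;
    if (this.now() >= entry.expiresAt) {
      this.entries.delete(key); // drop stale listings lazily
      return undefined;
    }
    return entry.value;
  }

  set(key: string, value: V): void {
    this.entries.set(key, { value, expiresAt: this.now() + this.ttlMs });
  }

  // Return the cached value, or call `load` (e.g. your list-files API call) and cache it.
  async getOrLoad(key: string, load: () => Promise<V>): Promise<V> {
    const cached = this.get(key);
    if (cached !== undefined) return cached;
    const value = await load();
    this.set(key, value);
    return value;
  }
}
```

Keying the cache by workspace or folder ID keeps listings for different users separate. Invalidate a key (or let it expire) after the agent writes to that folder.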

Handle Errors Properly

Storage operations can fail because of network issues, rate limits, or permission errors. Wrap your tool executions in error handling and return clear messages that the LLM can interpret and relay to the user.

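```typescript
execute: async ({ filename, content }) => {
  try {
    // uploadFile is your own storage helper (not part of the AI SDK)
    const result = await uploadFile(filename, content);
    return { success: true, fileId: result.id };
  } catch (error) {
    // In strict TypeScript `error` is `unknown`, so narrow before reading .message
    const message = error instanceof Error ? error.message : String(error);
    return {
      success: false,
      error: `Upload failed: ${message}`,
    };
  }
},
```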
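Rate limits and brief network failures are often worth retrying before reporting an error to the model, while permission errors are not. The sketch below assumes your storage client throws an error carrying the HTTP status. StorageError and withRetry are hypothetical names, and it treats 408, 429, and 5xx as transient. It retries those with exponential backoff and rethrows everything else at once.

```typescript
// Hypothetical error type: your storage client would throw this with the HTTP status.
class StorageError extends Error {
  status: number;
  constructor(message: string, status: number) {
    super(message);
    this.name = 'StorageError';
    this.status = status;
  }
}

// Assumed policy: timeouts, rate limits and server errors are transient.
function isRetryable(status: number): boolean {
  return status === 408 || status === 429 || status >= 500;
}

// Delay before retry number `attempt` (0-based): base, 2x base, 4x base, ...
function backoffMs(attempt: number, baseMs = 250): number {
  return baseMs * 2 ** attempt;
}

async function withRetry<T>(op: () => Promise<T>, maxAttempts = 3): Promise<T> {
  for (let attempt = 0; ; attempt++) {
    try {
      return await op();
    } catch (error) {
      const retryable = error instanceof StorageError && isRetryable(error.status);
      // Give up on non-transient errors or once the attempt budget is spent
      if (!retryable || attempt + 1 >= maxAttempts) throw error;
      await new Promise((resolve) => setTimeout(resolve, backoffMs(attempt)));
    }
  }
}
```

Inside the execute function, the call becomes await withRetry(() => uploadFile(filename, content)), and the existing catch block still turns the final failure into a message for the LLM.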

Frequently Asked Questions

How do I handle files in Vercel AI SDK?

The Vercel AI SDK provides experimental_attachments for sending files with chat messages and supports PDFs and images as message content types. For persistent storage, you define custom tools that connect to an external storage backend like Fastio. The tool's execute function handles the actual upload or download, while the LLM decides when to call it based on the conversation.

Can Vercel AI SDK agents store files?

Not directly. The SDK focuses on AI interactions, not storage infrastructure. Agents can store files by calling custom tools you define. These tools connect to cloud storage services through their APIs. For example, a tool using Fastio's API can upload agent outputs, create shareable links, and organize files into workspaces, all triggered automatically by the LLM during a conversation.

How do I add file upload to an AI chatbot?

Use the useChat hook's experimental_attachments feature. Create a file input on the frontend, pass the FileList to handleSubmit, and the SDK converts files to data URLs. On the backend API route, extract attachments from the latest message and store them to your cloud storage. This gives you persistent copies of every uploaded file for retrieval and analysis.

What storage works with Vercel AI SDK?

Any storage service with an API works. Common options include Vercel Blob for simple cases, S3 for raw object storage, and Fastio for AI-native workflows with built-in RAG, 19 consolidated tools, and a free 50GB agent tier. The best choice depends on whether you need just file hosting or additional features like search, collaboration, and AI-powered document queries.

What is the file size limit for Vercel AI SDK applications?

Vercel Serverless Functions have a 4.5MB request body limit. For files larger than this, upload directly from the client to your storage backend using presigned URLs, then pass a file reference to your AI route instead of the file content. This bypasses the body size limit entirely and works with files of any size supported by your storage provider.
