How to Build RAG Pipelines with OpenClaw

OpenClaw RAG pipelines index documents into embeddings for semantic retrieval, so agents answer from your data rather than from model memory alone. Fastio indexes files through Intelligence Mode, and ClawHub provides the tool access. This guide covers setup, code examples, multi-agent workflows, production patterns, and RAG best practices.

What Is an OpenClaw RAG Pipeline?

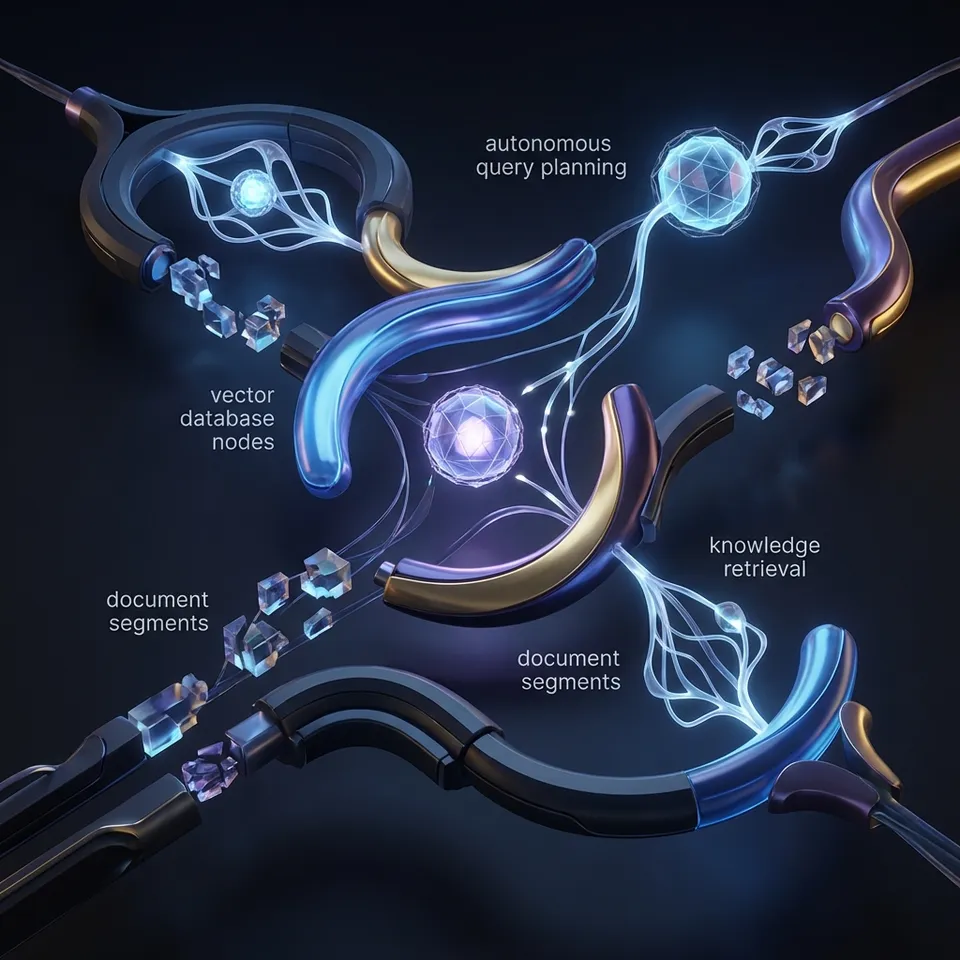

An OpenClaw RAG pipeline indexes documents into a vector store, retrieves relevant chunks based on a query using semantic similarity, and passes those chunks to an LLM prompt for grounded response generation.

OpenClaw agents can each handle one stage: one indexes, one retrieves, one synthesizes. Fastio provides storage and indexing built in, so a separate vector database such as Pinecone or Weaviate is not needed.

Core steps:

- Ingestion and indexing: Split documents into chunks, generate embeddings, store in vector DB.

- Retrieval: Embed the query, then find the top-k most similar chunks (e.g., ranked by cosine similarity).

- Generation: Stuff context into LLM prompt: "Use only this context: {chunks}. Answer: {query}"
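The three core steps above can be sketched end to end in plain Python. This is a toy illustration only: the word-count "embedding" stands in for a learned embedding model, and the helper names (`chunk_text`, `embed`, `cosine`, `top_k`, `build_prompt`) are hypothetical, not part of OpenClaw or Fastio.

```python
import math
import re
from collections import Counter


def chunk_text(text: str, max_words: int = 40) -> list[str]:
    """Split text into consecutive chunks of at most max_words words."""
    words = text.split()
    return [" ".join(words[i:i + max_words]) for i in range(0, len(words), max_words)]


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts (a real pipeline uses a learned model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def top_k(query: str, chunks: list[str], k: int = 2) -> list[str]:
    """Return the k chunks most similar to the query, best first."""
    q = embed(query)
    return sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)[:k]


def build_prompt(query: str, chunks: list[str]) -> str:
    """Assemble the grounded prompt from the retrieved chunks."""
    context = "\n".join(chunks)
    return f"Use only this context: {context}. Answer: {query}"


corpus = [
    "Payment terms are net 30 days from the invoice date.",
    "The warranty covers parts and labor for one year.",
    "Late payment incurs a fee of two percent per month.",
]
hits = top_k("What are the payment terms?", corpus, k=2)
prompt = build_prompt("What are the payment terms?", hits)
```

With Intelligence Mode enabled, the chunking and embedding stages are handled on upload, so only the retrieval call and the prompt assembly remain in agent code.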

RAG reduces hallucinations on your domain data, as Pinecone explains. Fastio's Intelligence Mode automates embeddings and storage for OpenClaw.

Fastio supports large-file uploads with chunked transfer. Indexed content becomes queryable via natural language.

Why Combine OpenClaw and Fastio for RAG?

OpenClaw handles agent coordination well but lacks storage and built-in RAG. Fastio adds those with agent tools.

Benefits:

- No infrastructure needed: Intelligence Mode indexes uploads automatically. Queries include citations right away.

- Agent collaboration: Multiple OpenClaw instances work in one workspace using locks and webhooks.

- Free tier: 50GB storage, 5,000 credits/month, no card needed.

- Scales to production: Handles book-length PDFs and multiple formats (PDF, DOCX, code).

LangChain requires setting up a vector store. Here, ClawHub works without configuration.

Prerequisites

Get set up:

- Install OpenClaw locally.

- Create a Fastio agent account (50GB free).

- Install the ClawHub skill, which adds 14 tools:

npx clawhub@latest install dbalve/fast-io

Auth happens once via browser. Test with: "Create workspace rag-demo".

Verify: Check that the MCP server lists 19 consolidated tools.

Verify Installation

In OpenClaw:

Create Fastio workspace "rag-test" with intelligence enabled.

Expect workspace ID and "intelligence_enabled": true.

Create Indexed Workspace

Workspaces act as your vector stores. Intelligence Mode enables auto-indexing.

ClawHub call:

org-create-workspace {"name": "rag-pipeline", "intelligence": true}

Confirm with workspace-details: "intelligence_enabled": true.

Uploads are now chunked and embedded automatically. Ingestion costs 10 credits per page.
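For budgeting, the ingestion rate can be turned into a quick estimate. The sketch below uses only the two figures quoted in this guide (10 credits per page, 5,000 free credits per month) and assumes ingestion is the only thing spending credits, which may not hold if queries are also billed.

```python
CREDITS_PER_PAGE = 10          # ingestion rate quoted in this guide
FREE_MONTHLY_CREDITS = 5_000   # free-tier allowance quoted in this guide


def ingestion_cost(pages: int) -> int:
    """Credits needed to ingest the given number of pages."""
    return pages * CREDITS_PER_PAGE


def max_pages(budget: int = FREE_MONTHLY_CREDITS) -> int:
    """Largest whole number of pages that fits within a credit budget."""
    return budget // CREDITS_PER_PAGE


print(ingestion_cost(120))  # credits for a 120-page manual
print(max_pages())          # pages that fit in the free monthly allowance
```

Under these assumptions, a 120-page manual costs 1,200 credits and the free tier covers about 500 pages per month.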

Pro tip: Name workspaces by project, e.g., "q1-financials-rag".

Index Your Documents

Load your documents.

Local upload:

workspace-storage-add-file workspace="rag-pipeline" path="/docs/" filename="manual.pdf" content_base64="[base64]"
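The `content_base64` argument has to be produced from the raw file bytes. A minimal sketch of building the argument set with the Python standard library follows; the parameter names mirror the call above, while `build_upload_args` itself is a hypothetical helper.

```python
import base64


def build_upload_args(filename: str, data: bytes, workspace: str, remote_dir: str) -> dict:
    """Build arguments for workspace-storage-add-file from raw file bytes."""
    return {
        "workspace": workspace,
        "path": remote_dir,
        "filename": filename,
        # Base64 output is pure ASCII, so it is safe to embed in a JSON payload.
        "content_base64": base64.b64encode(data).decode("ascii"),
    }


args = build_upload_args("manual.pdf", b"%PDF-1.7 demo bytes", "rag-pipeline", "/docs/")
```

In practice the bytes would come from something like `pathlib.Path("manual.pdf").read_bytes()`. Base64 inflates the payload by roughly a third, which is one reason to prefer URL import for large files.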

URL import:

web-import workspace="rag-pipeline" path="/docs/" url="https://example.com/data.pdf"

web-import also pulls from Drive or Box via OAuth, so files never pass through local storage.

Batch via agent loop:

docs = ["doc1.pdf", "doc2.txt"]
for doc in docs:
    tool("workspace-storage-add-file", {"workspace": "rag-pipeline", "path": "/corpus/", "filename": doc})

Poll storage-list until each file reports ai_state: "ready". Large files are chunked automatically.
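The polling step can be wrapped in a small helper with a timeout so an agent does not wait forever on a stalled ingest. In this sketch `list_files` is a hypothetical callable that returns the entries from storage-list as dictionaries; the `ai_state` field name comes from this guide, and the rest is an assumption.

```python
import time
from typing import Callable, Iterable


def wait_until_ready(
    list_files: Callable[[], Iterable[dict]],
    timeout_s: float = 300.0,
    interval_s: float = 5.0,
    sleep: Callable[[float], None] = time.sleep,
) -> bool:
    """Poll until every listed file reports ai_state == "ready" or the timeout passes."""
    deadline = time.monotonic() + timeout_s
    while True:
        files = list(list_files())
        # An empty listing is treated as "not ready yet", not as success.
        if files and all(f.get("ai_state") == "ready" for f in files):
            return True
        if time.monotonic() >= deadline:
            return False
        sleep(interval_s)
```

Passing `sleep` as a parameter keeps the helper easy to test, since a no-op can be injected instead of real delays.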

Implement Retrieval

Core of RAG: fetch relevant context.

Semantic query:

workspace-search workspace="rag-pipeline" query="payment terms" folders_scope="root:abc"

Returns top chunks, scores, nodeIds.

Hybrid (keyword + semantic):

Add search_type: "hybrid".

Agent code:

def retrieve(query):
    results = tool("workspace-search", {"workspace": "rag-pipeline", "query": query, "top_k": 5})
    return [r["content"] for r in results if r["score"] > 0.75]

Scoping with folders_scope="folderId:abc" limits the search to subfolders.

Generation and Post-Processing

Combine retrieval + LLM.

Direct AI chat (easiest):

ai-chat-create context_type="workspace" type="chat_with_files" folders_scope="root:abc" query_text="Summarize payment terms"

Polls until done and returns cited answers.

Custom LLM:

chunks = retrieve("indemnity clauses")

prompt = f"""Context: {' '.join(chunks)}

Question: What are indemnity clauses?

Answer using only context:"""

response = llm(prompt)

Post-process: re-rank chunks, compress context with an LLM.
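Before a full re-ranker or LLM-based compression is in place, a cheaper post-processing pass can already help: drop duplicate chunks, cap the context size, and number the chunks so the model can cite them. The sketch below is a simple stand-in for those steps, not an LLM compressor, and `build_grounded_prompt` is a hypothetical helper.

```python
def build_grounded_prompt(question: str, chunks: list[str], max_chars: int = 4000) -> str:
    """Deduplicate chunks, keep them within a character budget, and number them."""
    seen: set[str] = set()
    parts: list[str] = []
    used = 0
    for chunk in chunks:  # chunks are assumed to arrive best-first
        text = chunk.strip()
        if not text or text in seen:
            continue
        if used + len(text) > max_chars:
            break  # budget exhausted; lower-ranked chunks are dropped
        seen.add(text)
        parts.append(f"[{len(parts) + 1}] {text}")
        used += len(text)
    context = "\n".join(parts)
    return (
        f"Context:\n{context}\n\n"
        f"Question: {question}\n"
        "Answer using only the context and cite sources as [n]:"
    )
```

Because the loop stops at the first chunk that would exceed the budget, the order of `chunks` matters: pass them sorted by score so the strongest evidence survives.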

Ready for Agentic RAG?

50GB free, 5,000 credits/month. Install ClawHub skill for OpenClaw RAG pipelines.

Multi-Agent RAG Architectures

A single agent works for simple cases. Production setups use agent teams.

Three-agent research pipeline:

- Indexer agent: Uses web-import to pull sources, acquires exclusive lock with storage-lock-acquire to avoid concurrent writes, uploads files, then releases lock.

- Retriever agent: Sets up webhook with webhook-create events=["file_ingested"], triggers on new files, performs workspace-search for relevant chunks.

- Synthesizer agent: Takes retrieved chunks, generates response using ai-chat-create or LLM prompt, outputs to create-note with citations.

Indexer agent code example:

lock = tool("storage-lock-acquire", {"path": "/corpus/", "workspace": "rag-pipeline"})
tool("web-import", {"workspace": "rag-pipeline", "path": "/corpus/", "url": "https://example.com/source.pdf"})
tool("storage-lock-release", {"lock_id": lock["id"]})
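If the import raises between acquire and release, the lock in the sequence above is never released, which is a common cause of stuck locks. One way to guard against that is a context manager that releases in a `finally` block. This is a sketch: `tool` is passed in as a plain callable, and the assumption that the acquire call returns a dictionary with an `id` key is taken from the snippet above.

```python
from contextlib import contextmanager
from typing import Any, Callable, Iterator

ToolFn = Callable[[str, dict], Any]


@contextmanager
def storage_lock(tool: ToolFn, workspace: str, path: str) -> Iterator[dict]:
    """Hold a storage lock for the duration of a with-block, releasing it even on error."""
    lock = tool("storage-lock-acquire", {"path": path, "workspace": workspace})
    try:
        yield lock
    finally:
        # Runs on normal exit and when the body raises.
        tool("storage-lock-release", {"lock_id": lock["id"]})
```

The indexer body then becomes `with storage_lock(tool, "rag-pipeline", "/corpus/"): ...`, with the web-import call inside the block.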

Retriever agent webhook handler:

def handle_ingest(event):
    query = f"Key points from {event['filename']}"
    chunks = tool("workspace-search", {"workspace": "rag-pipeline", "query": query, "top_k": 5})
    synth_agent.run(chunks)

Conflict-free multi-agent access: File locks ensure safe concurrent operations. Invite other agents with member-add.

Scale to production: Pair with human review in the UI. Transfer ownership to clients. Use webhooks to re-index on file changes. The same setup supports many agents and large data volumes.

Optimization and Best Practices

Build reliable pipelines.

- Chunking strategy: Fastio handles semantic boundary-aware chunking automatically, with chunk sizes optimized for retrieval.

- Top-k tuning: Begin with a small top-k set; evaluate using precision@K or NDCG metrics on a validation set.

- Scoped retrieval: Use folder-level scopes like folders_scope="root:abc" for modular pipelines.

- Version pinning: Cache reliable results by querying nodeId:versionId for reproducible retrieval.

- Monitoring ingestion: Poll activity-poll for indexing status; alert on ai_state != "ready".

- Cost management: Monitor auth-status for remaining credits; batch operations to stay under limits.

- Reranking: Apply a cross-encoder model post-retrieval to boost precision.

- Query expansion: Rewrite queries with LLM for better recall in ambiguous cases.

Hybrid search combines semantic vectors with keyword matching to catch domain terms.
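To make the idea of combining two rankings concrete, here is reciprocal rank fusion (RRF), one widely used way to merge a semantic result list with a keyword result list. This guide does not say how Fastio fuses results internally, so treat this as a generic illustration; the function name and the constant `k=60` (a common default) are assumptions.

```python
def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several best-first rankings; each hit adds 1 / (k + rank) to its score."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest fused score first; ties are broken by id for stable output.
    return sorted(scores, key=lambda d: (-scores[d], d))


semantic = ["a", "b", "c"]   # ids ranked by vector similarity
keyword = ["c", "a", "d"]    # ids ranked by keyword match
fused = reciprocal_rank_fusion([semantic, keyword])
```

Documents that appear in both lists rise to the top, which is why exact domain terms found by keyword matching can rescue chunks the embedding ranked low.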

Evaluation framework: Create a dataset of query-ground_truth pairs. Compute recall@k, precision@k, and faithfulness score using tools like RAGAS or TruLens.
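The ranking metrics named here are short enough to compute directly, which is useful before wiring up a full evaluation tool. The sketch below assumes binary relevance (a chunk id is either in the ground-truth set or not) and unique ids in each result list; faithfulness is not covered because it needs an LLM or human judge.

```python
import math


def precision_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of the top-k retrieved ids that are relevant."""
    if k <= 0:
        return 0.0
    return sum(1 for d in retrieved[:k] if d in relevant) / k


def recall_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Fraction of all relevant ids that appear in the top k."""
    if not relevant:
        return 0.0
    return sum(1 for d in retrieved[:k] if d in relevant) / len(relevant)


def ndcg_at_k(retrieved: list[str], relevant: set[str], k: int) -> float:
    """Normalized discounted cumulative gain with binary relevance."""
    dcg = sum(1.0 / math.log2(i + 2) for i, d in enumerate(retrieved[:k]) if d in relevant)
    ideal = sum(1.0 / math.log2(i + 2) for i in range(min(k, len(relevant))))
    return dcg / ideal if ideal else 0.0


retrieved = ["a", "x", "b", "y"]   # ids returned for one test query
relevant = {"a", "b", "c"}         # ground-truth ids for that query
print(precision_at_k(retrieved, relevant, 2))
print(recall_at_k(retrieved, relevant, 4))
print(ndcg_at_k(retrieved, relevant, 3))
```

Averaging each metric over all query and ground-truth pairs gives a single number per top-k setting, which makes the top-k tuning described above a direct comparison.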

Troubleshooting Common Issues

No results: Check that ai_state is "ready" and that Intelligence Mode is on.

Low scores: Increase top_k, check query embedding.

Credit errors: Upgrade or transfer ownership.

Locks stuck: Release them with storage-lock-release.

Large files: Use chunked uploads for large document sets.

Logs: events-search filters by actor/type.

OpenClaw RAG vs Other Frameworks

Fastio eliminates database setup and adds collaboration.

Frequently Asked Questions

How to build RAG in OpenClaw?

Install ClawHub Fastio skill, create intelligent workspace, index docs via upload/web-import, retrieve with workspace-search, generate via ai-chat-create or custom LLM.

OpenClaw RAG best practices?

Scope retrieval with folders_scope, use hybrid search, lock files in multi-agent setups, monitor ai_state, and pin versions for reproducibility.

Fastio RAG costs?

Free agent tier: 50GB, 5,000 credits/mo ([10 credits/page ingest](https://fast.io/storage-for-agents/)). Pro scales up.

Multi-agent RAG collaboration?

Invite agents as members and coordinate with locks and webhooks. Ownership can be transferred to humans.

Large document support?

1GB/file uploads, auto-chunking for books/PDFs.

Difference from LangChain RAG?

Zero-infra vector store, MCP-native tools, human-agent UI parity.

How to evaluate RAG performance?

Embed test queries, measure chunk recall, use ai-chat for groundedness checks.

