How to Load Balance MCP Servers for Production Scale

Scaling Model Context Protocol (MCP) servers isn't as simple as adding more instances. When you move from a few users to thousands of concurrent agent requests, you have to manage session states and connection persistence. This guide breaks down the architecture patterns you need for a reliable, high-availability MCP setup.

Why Load Balancing Matters for MCP

Load balancing spreads tool requests across multiple servers so that no single instance buckles under load. Most MCP servers handle a few hundred concurrent connections before they start to struggle, and more users or complex RAG tasks push those limits quickly. When a node maxes out, CPU spikes and tool execution slows down, so your AI agent waits too long for a response and the user experience suffers. Without a load balancer, agents may time out or stop working entirely if a server crashes.

Spreading traffic keeps agents responsive as you scale, and it simplifies maintenance: you can upgrade one node at a time without taking the whole service offline.

Core Concepts: Stateless vs. Stateful MCP

Check how your tools manage state before picking a load balancer. The choice between stateless and stateful designs will dictate how you scale.

Stateless MCP Servers are simple to scale because every request stands on its own. If an agent needs to read a file, the server does it and moves on. Since it doesn't save context between calls, any instance can handle the next request. To scale up, just spin up more containers and add them to the pool. This approach is a core part of modern AI agent architecture patterns.

Stateful MCP Servers are more complex. They track context like ongoing code analysis or multi-step chats. This requires "sticky sessions" (session affinity). Your load balancer must identify the mcp-session-id or a cookie to route every request from that session to the same server. If a request lands on the wrong server, the context disappears and the tool call fails. You have two ways to scale these:

- Sticky Sessions: The load balancer hashes the session ID to map users to specific backends.

- Shared State: This is the better move for cloud setups. By moving session data into a central store like Redis, any server can handle any request. This makes your whole system more resilient.
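Both options can be sketched in a few lines of Python. This is a minimal illustration rather than a production router: the backend addresses are placeholders, `pick_backend` and `SharedSessionStore` are hypothetical names, and the in-memory dictionary only stands in for an external store such as Redis.

```python
from __future__ import annotations

import hashlib

BACKENDS = ["10.0.0.1:8080", "10.0.0.2:8080", "10.0.0.3:8080"]  # placeholder pool


def pick_backend(session_id: str, backends: list[str] = BACKENDS) -> str:
    """Sticky sessions: hash the session ID so it always maps to the same backend."""
    digest = hashlib.sha256(session_id.encode("utf-8")).digest()
    index = int.from_bytes(digest[:8], "big") % len(backends)
    return backends[index]


class SharedSessionStore:
    """Shared state: a dict standing in for an external store such as Redis."""

    def __init__(self) -> None:
        self._data: dict[str, dict] = {}

    def load(self, session_id: str) -> dict:
        # Return a copy so callers must save explicitly, as with a remote store.
        return dict(self._data.get(session_id, {}))

    def save(self, session_id: str, context: dict) -> None:
        self._data[session_id] = dict(context)


# Sticky routing is deterministic: the same session always lands on the same node.
assert pick_backend("session-abc") == pick_backend("session-abc")

# With shared state, one server saves context and any other server can load it.
store = SharedSessionStore()
store.save("session-abc", {"step": 2})
assert store.load("session-abc") == {"step": 2}
```

Note that plain modulo hashing remaps most sessions whenever the pool size changes. A consistent-hashing scheme limits that churn, which is one more reason shared state tends to be the more resilient option.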

Load Balancing Strategies and Patterns

Your transport layer defines your strategy. In production, you'll want to move beyond local pipes to network-based transports.

1. HTTP/SSE and Layer 7

Most production environments use Server-Sent Events (SSE) over HTTP. Since SSE connections stay open, Layer 7 balancers like NGINX or AWS ALB work best. They look at HTTP headers to decide where to route traffic.

- Least Connections: This strategy sends new requests to the server with the fewest active SSE streams. It's often the best choice for MCP since some tool calls take much longer than others.

- Header-Based Routing: You can route traffic based on custom headers. For example, you might send all requests with an x-mcp-priority: high header to a dedicated high-performance pool.
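To make the two strategies concrete, here is a rough Python sketch of the decision a Layer 7 balancer makes for each new request. The pool names, addresses, and connection counts are invented for illustration, and a real balancer would track active streams itself instead of reading them from a dictionary.

```python
from __future__ import annotations

# Hypothetical pools: backend address -> number of active SSE streams.
POOLS = {
    "default": {"10.0.0.1:8080": 12, "10.0.0.2:8080": 7, "10.0.0.3:8080": 9},
    "high": {"10.0.1.1:8080": 3, "10.0.1.2:8080": 1},
}


def choose_pool(headers: dict[str, str]) -> str:
    """Header-based routing: send 'x-mcp-priority: high' to the dedicated pool."""
    normalized = {k.lower(): v.strip().lower() for k, v in headers.items()}
    return "high" if normalized.get("x-mcp-priority") == "high" else "default"


def least_connections(pool: dict[str, int]) -> str:
    """Least connections: pick the backend with the fewest active streams."""
    return min(pool, key=pool.get)


def route(headers: dict[str, str]) -> str:
    """Combine both strategies and count the new stream as active."""
    pool_name = choose_pool(headers)
    backend = least_connections(POOLS[pool_name])
    POOLS[pool_name][backend] += 1
    return backend
```

With the counts above, a request without the priority header goes to 10.0.0.2:8080, while one carrying the header goes to 10.0.1.2:8080.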

2. Stdio (The Scaling Bottleneck)

The standard input/output (stdio) transport is built for local, 1:1 connections between a host (like Claude Desktop) and a server. It can't be load balanced effectively because it relies on a direct process pipe. To scale, you have to wrap stdio servers in an HTTP/SSE adapter or use a native HTTP MCP implementation. This change is necessary if you're moving beyond a single developer's machine.

3. Tool-Based Routing

Advanced setups use "smart" routing. A gateway looks at the requested tool (like github_create_issue vs. slack_post_message) and sends the traffic to a specific pool of servers. This lets you scale resource-heavy tools, like image generation, independently from lighter ones like database lookups. This "micro-tool" architecture prevents one heavy tool from slowing down everything else.
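A gateway can make this decision by peeking at the request body. The sketch below assumes requests arrive as JSON-RPC messages where tool invocations use the `tools/call` method with the tool name in `params.name`; the pool names and the `generate_image` tool are hypothetical.

```python
from __future__ import annotations

import json

# Hypothetical mapping from tool name to backend pool.
TOOL_POOLS = {
    "generate_image": "gpu-pool",
    "github_create_issue": "integrations-pool",
    "slack_post_message": "integrations-pool",
}
DEFAULT_POOL = "general-pool"


def pool_for_request(body: str) -> str:
    """Inspect a JSON-RPC message and return the pool that should handle it."""
    message = json.loads(body)
    if message.get("method") != "tools/call":
        return DEFAULT_POOL  # anything that is not a tool invocation
    tool_name = message.get("params", {}).get("name", "")
    return TOOL_POOLS.get(tool_name, DEFAULT_POOL)


request = json.dumps({
    "jsonrpc": "2.0",
    "id": 1,
    "method": "tools/call",
    "params": {"name": "generate_image", "arguments": {"prompt": "a lighthouse"}},
})
assert pool_for_request(request) == "gpu-pool"
```

Unknown tools fall through to the default pool, so adding a new tool never requires a gateway change before it can serve traffic.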

Give Your AI Agents Persistent Storage

Stop worrying about load balancers. Get a production-ready, auto-scaling MCP server with 19 consolidated tools and 50GB of free storage.

Step-by-Step: Setting Up NGINX for MCP

NGINX is a great choice because it handles long-lived HTTP and SSE connections without extra plugins.

Step 1: Define Your Upstream Pool

In your NGINX configuration, start by defining the servers that will handle the MCP traffic. Use the upstream block to list your server instances.

    upstream mcp_servers {
        # Use hash for sticky sessions if stateful
        # hash $http_mcp_session_id;

        server 10.0.0.1:8080;
        server 10.0.0.2:8080;
        server 10.0.0.3:8080;
    }

Step 2: Configure the Server Block

Next, create a server block that listens for incoming agent requests. You must disable buffering for SSE to work; otherwise, delivery of tool outputs to the agent will be delayed.

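    server {
        listen 80;
        server_name mcp.example.com;

        location / {
            proxy_pass http://mcp_servers;
            proxy_http_version 1.1;

            # Essential for SSE
            proxy_set_header Connection "";
            proxy_buffering off;
            proxy_cache off;

            # The default read timeout is 60s; raise it so quiet SSE streams
            # are not closed during long tool calls (the value is an example).
            proxy_read_timeout 3600s;

            proxy_set_header Host $host;
            proxy_set_header X-Real-IP $remote_addr;
        }
    }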

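Step 3: Implement Health Checks

Add a dedicated location inside the server block that external monitors or an upstream cloud load balancer can poll to confirm NGINX itself is up. This endpoint is answered by NGINX, not by the MCP backends. Open-source NGINX detects failed backends passively through the max_fails and fail_timeout parameters on each server line, while active health_check probes require NGINX Plus.

    location /health {
        access_log off;
        return 200 'OK';
    }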

Scaling Architecture Patterns

To reach production scale, you can use a few different deployment patterns depending on your infrastructure.

The Sidecar Pattern

Running an MCP server as a sidecar alongside your app is simple. It creates a 1:1 mapping that scales automatically with your app pods. However, it can waste resources if the MCP server sits idle while your app does other work. This is common in Kubernetes when tools are tightly coupled to the main logic.

The Centralized Fleet

Running a dedicated group of MCP servers behind a load balancer is the best approach for larger teams.

- Autoscaling: Trigger scaling based on active SSE connections or CPU usage during tool execution. When the average CPU across the fleet exceeds 70%, spin up a new instance.

- Global Load Balancing: For low-latency agents, deploy MCP fleets in multiple regions and use a global load balancer to route agents to the nearest pool.
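The CPU-based trigger can be expressed as a small function. Instead of adding exactly one instance, this sketch uses the proportional rule documented for the Kubernetes Horizontal Pod Autoscaler, which scales the replica count by the ratio of observed to target utilization. The function name and the `max_replicas` cap are assumptions for illustration.

```python
import math


def desired_replicas(current_replicas: int, avg_cpu_percent: float,
                     target_cpu_percent: float = 70.0,
                     max_replicas: int = 20) -> int:
    """Scale the fleet in proportion to observed versus target CPU."""
    if current_replicas < 1:
        raise ValueError("current_replicas must be at least 1")
    wanted = math.ceil(current_replicas * avg_cpu_percent / target_cpu_percent)
    # Never drop below one instance or exceed the configured ceiling.
    return max(1, min(max_replicas, wanted))


assert desired_replicas(4, 85.0) == 5   # above target: add capacity
assert desired_replicas(4, 35.0) == 2   # well below target: scale in
```

The same function works for connection-based scaling if you pass active SSE streams per instance and a target stream count in place of the CPU figures.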

Queue-Based Scaling

For heavy tools like long-running data exports, consider a worker pattern. The load balancer places requests into a message queue like RabbitMQ, and a fleet of workers pulls jobs as they have capacity. This provides the best resilience against massive traffic spikes.
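The worker pattern looks roughly like this in Python. Here the standard library's `queue.Queue` stands in for a broker such as RabbitMQ and threads stand in for separate worker processes; `run_workers` and its parameters are hypothetical names.

```python
import queue
import threading


def run_workers(jobs, handler, worker_count=3):
    """Process (job_id, payload) pairs with workers pulling from a shared queue."""
    job_queue = queue.Queue()
    results = {}
    lock = threading.Lock()

    def worker():
        while True:
            item = job_queue.get()
            try:
                if item is None:  # sentinel: shut this worker down
                    return
                job_id, payload = item
                try:
                    outcome = handler(payload)
                except Exception as exc:  # record the failure, keep the worker alive
                    outcome = exc
                with lock:
                    results[job_id] = outcome
            finally:
                job_queue.task_done()

    threads = [threading.Thread(target=worker) for _ in range(worker_count)]
    for thread in threads:
        thread.start()
    for job in jobs:
        job_queue.put(job)
    for _ in threads:
        job_queue.put(None)  # one sentinel per worker
    job_queue.join()
    for thread in threads:
        thread.join()
    return results


print(run_workers([("export-1", 2), ("export-2", 3)], lambda n: n * n))
```

Because workers pull jobs only when they have capacity, a burst of requests lengthens the queue rather than overloading any single server.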

Monitoring and Observability at Scale

If you can't measure it, you can't manage it. In a distributed MCP environment, monitoring is tricky because tool calls are often asynchronous and stateful.

Key Metrics to Track

- Tool Execution Latency: Measure the time from the request arriving at the load balancer to the tool result being sent back. Watch for p95 and p99 spikes.

- Connection Count: Track the number of active SSE streams per server. This helps you tune your autoscaling thresholds.

- Error Rates by Tool: If one specific tool is failing while the others work, you likely have a dependency issue rather than a load balancer problem.

- Resource Utilization: Monitor memory and CPU per instance. Some MCP tools are memory-intensive, especially those handling large file parses or complex RAG operations.
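For the latency metric, percentiles are simple to compute from raw samples. The sketch below uses the nearest-rank definition, which is one of several common conventions; monitoring systems may interpolate differently, so treat the exact values as illustrative.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a list of latency samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in the range (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank
    return ordered[rank - 1]


latencies_ms = list(range(1, 101))  # hypothetical samples: 1 ms to 100 ms
assert percentile(latencies_ms, 95) == 95
assert percentile(latencies_ms, 99) == 99
```

Tracking p95 and p99 next to the median shows whether slowdowns affect every call or only a slow tail, which an average alone would hide.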

Centralized Logging

Aggregate logs from all MCP instances into a single system like ELK or Datadog. Every tool call should include a request_id that is passed through the load balancer, making it possible to trace an agent's action across your entire setup.
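One way to carry a request ID through the stack is shown below. The `x-request-id` header name is an assumption (use whatever your load balancer forwards), and `ensure_request_id` and `log_line` are hypothetical helpers.

```python
import json
import uuid

REQUEST_ID_HEADER = "x-request-id"  # assumed header name


def ensure_request_id(headers):
    """Return a lowercase-keyed copy of the headers that always has a request ID."""
    normalized = {key.lower(): value for key, value in headers.items()}
    if not normalized.get(REQUEST_ID_HEADER):
        normalized[REQUEST_ID_HEADER] = uuid.uuid4().hex
    return normalized


def log_line(headers, tool, status):
    """Build one structured log record that can be indexed by request_id."""
    return json.dumps({
        "request_id": headers[REQUEST_ID_HEADER],
        "tool": tool,
        "status": status,
    }, sort_keys=True)


incoming = ensure_request_id({"X-Request-ID": "abc123"})
print(log_line(incoming, "read_file", "ok"))
```

If every component logs the same ID, a single search in your log system reconstructs the full path of one tool call.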

Security Considerations for Scaled MCP

Scaling your MCP infrastructure also increases your attack surface. You need to integrate security into your strategy from the start.

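TLS and Encryption

Never run MCP over unencrypted HTTP in production. Terminate TLS at your load balancer so traffic between agents and your edge is always encrypted, and either re-encrypt the hop to the backends or keep it on a private network. Prefer TLS 1.3, whose shorter handshake keeps connection setup fast.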

Authentication and Authorization

The load balancer is a great place to enforce authentication. Before a request reaches an MCP server, verify the API key or JWT. You can also implement secure MCP server authentication at the gateway level, so only certain agents can call sensitive tools.
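A gateway-level check can be as small as the sketch below. The keys, agent names, and tool names are invented, and a real deployment would store hashed keys or validate JWTs rather than keep plaintext keys in a dictionary.

```python
import hmac

# Hypothetical registry: API key -> (agent ID, tools that agent may call).
API_KEYS = {
    "key-analyst-123": ("analyst-agent", {"search_files", "read_file"}),
    "key-admin-456": ("admin-agent", {"search_files", "read_file", "delete_file"}),
}


def authenticate(presented_key):
    """Return (agent_id, allowed_tools) for a valid key, or None."""
    for known_key, identity in API_KEYS.items():
        # compare_digest avoids leaking how much of the key matched via timing.
        if hmac.compare_digest(presented_key.encode(), known_key.encode()):
            return identity
    return None


def authorize(presented_key, tool_name):
    """Return the HTTP status the gateway should answer with."""
    identity = authenticate(presented_key)
    if identity is None:
        return 401  # unknown caller
    _agent_id, allowed_tools = identity
    return 200 if tool_name in allowed_tools else 403


assert authorize("key-analyst-123", "read_file") == 200
assert authorize("key-analyst-123", "delete_file") == 403
assert authorize("wrong-key", "read_file") == 401
```

Keeping this check at the gateway means the MCP servers behind it only ever see requests that have already been authenticated and authorized.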

Rate Limiting

Protect your backend servers from "noisy neighbor" problems. Set rate limits at the load balancer level based on the agent's ID or IP address. For example, you might limit an agent to 10 tool calls per second to prevent accidental loops or abuse.
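A token bucket is one common way to implement a limit like 10 calls per second. The sketch below is a single-process illustration with hypothetical class names; a limiter shared across several gateway instances would need its counters in a shared store.

```python
import time


class TokenBucket:
    """Allow `rate` calls per second on average, with bursts up to `capacity`."""

    def __init__(self, rate=10.0, capacity=10.0, clock=time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity
        self.updated = clock()

    def allow(self):
        now = self.clock()
        # Refill in proportion to elapsed time, never above capacity.
        self.tokens = min(self.capacity,
                          self.tokens + (now - self.updated) * self.rate)
        self.updated = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


class AgentRateLimiter:
    """Keeps one bucket per agent ID so agents cannot starve each other."""

    def __init__(self, rate=10.0, capacity=10.0, clock=time.monotonic):
        self._settings = (rate, capacity, clock)
        self._buckets = {}

    def allow(self, agent_id):
        if agent_id not in self._buckets:
            self._buckets[agent_id] = TokenBucket(*self._settings)
        return self._buckets[agent_id].allow()
```

The `clock` parameter exists so the limiter can be tested with a fake clock. In normal use you would call `AgentRateLimiter().allow(agent_id)` per request and reject the request, typically with HTTP 429, when it returns False.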

High Availability and Failover

Reliability matters for autonomous agents. If a server dies while an agent is waiting for a result, the agent might crash or give a wrong answer. You need a solid failover plan.

Circuit Breakers: Add these to your gateway. If a server starts timing out or throwing errors, the breaker trips and stops sending traffic there. This gives the instance a chance to recover or lets Kubernetes replace it.
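A minimal circuit breaker for one backend might look like this. The threshold and cool-down values are arbitrary examples, and the class is a simplified sketch: it reopens after a single failure following the cool-down but does not limit how many trial requests pass in the meantime.

```python
import time


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after=30.0, clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None  # None means the circuit is closed

    def allow_request(self):
        if self.opened_at is None:
            return True
        # Once the cool-down has passed, let traffic through again as a trial.
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self):
        self.failures = 0
        self.opened_at = None

    def record_failure(self):
        self.failures += 1
        if self.failures >= self.failure_threshold:
            self.opened_at = self.clock()  # trip, or re-trip after a failed trial
```

The gateway calls `allow_request()` before forwarding to a backend and reports the outcome with `record_success()` or `record_failure()`, so a struggling instance stops receiving traffic after three consecutive failures.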

Graceful Shutdowns: When you scale down, make sure servers handle SIGTERM signals correctly. They should stop taking new requests but keep existing SSE connections open until current tool calls finish. This prevents cutting off agents in the middle of a task.
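The draining logic can be sketched as follows. `DrainingServer` is a hypothetical helper that only tracks in-flight calls; wiring it into a real HTTP or SSE server is left out.

```python
import signal
import threading


class DrainingServer:
    """Tracks in-flight tool calls and refuses new ones once shutdown begins."""

    def __init__(self):
        self._lock = threading.Lock()
        self._in_flight = 0
        self._shutting_down = False
        self.drained = threading.Event()  # set when it is safe to exit

    def handle_sigterm(self, signum=None, frame=None):
        with self._lock:
            self._shutting_down = True
            if self._in_flight == 0:
                self.drained.set()

    def try_start_call(self):
        """Return True if a new tool call may begin."""
        with self._lock:
            if self._shutting_down:
                return False
            self._in_flight += 1
            return True

    def finish_call(self):
        with self._lock:
            self._in_flight -= 1
            if self._shutting_down and self._in_flight == 0:
                self.drained.set()


def install(server):
    """Register the SIGTERM handler; must be called from the main thread."""
    signal.signal(signal.SIGTERM, server.handle_sigterm)
```

After `install(server)`, the main thread can block on `server.drained.wait(timeout=...)` and exit once it returns. Choose a timeout shorter than your orchestrator's termination grace period so the process is not killed mid-drain.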

Active Health Checks: Go beyond simple "port open" checks. Your load balancer should run "deep" health checks that verify the MCP server can actually execute a simple tool, like ping, before it's considered healthy.
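A deep check along these lines could be written as shown below. `call_tool` is a hypothetical callable that invokes a tool by name on the server under test, and the two-second timeout is an arbitrary example.

```python
import concurrent.futures


def deep_health_check(call_tool, timeout_seconds=2.0):
    """Return True only if the server executes a trivial tool call in time."""
    executor = concurrent.futures.ThreadPoolExecutor(max_workers=1)
    try:
        future = executor.submit(call_tool, "ping")
        try:
            future.result(timeout=timeout_seconds)
            return True
        except Exception:  # timeout or tool error both count as unhealthy
            return False
    finally:
        # Do not block the health checker on a hung call.
        executor.shutdown(wait=False)


def failing_tool(name):
    raise RuntimeError("backend dependency is down")


assert deep_health_check(lambda name: "pong") is True
assert deep_health_check(failing_tool) is False
```

Treating slow responses as failures matters here: a server that accepts connections but takes too long to run a tool is just as unusable to an agent as one that is down.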

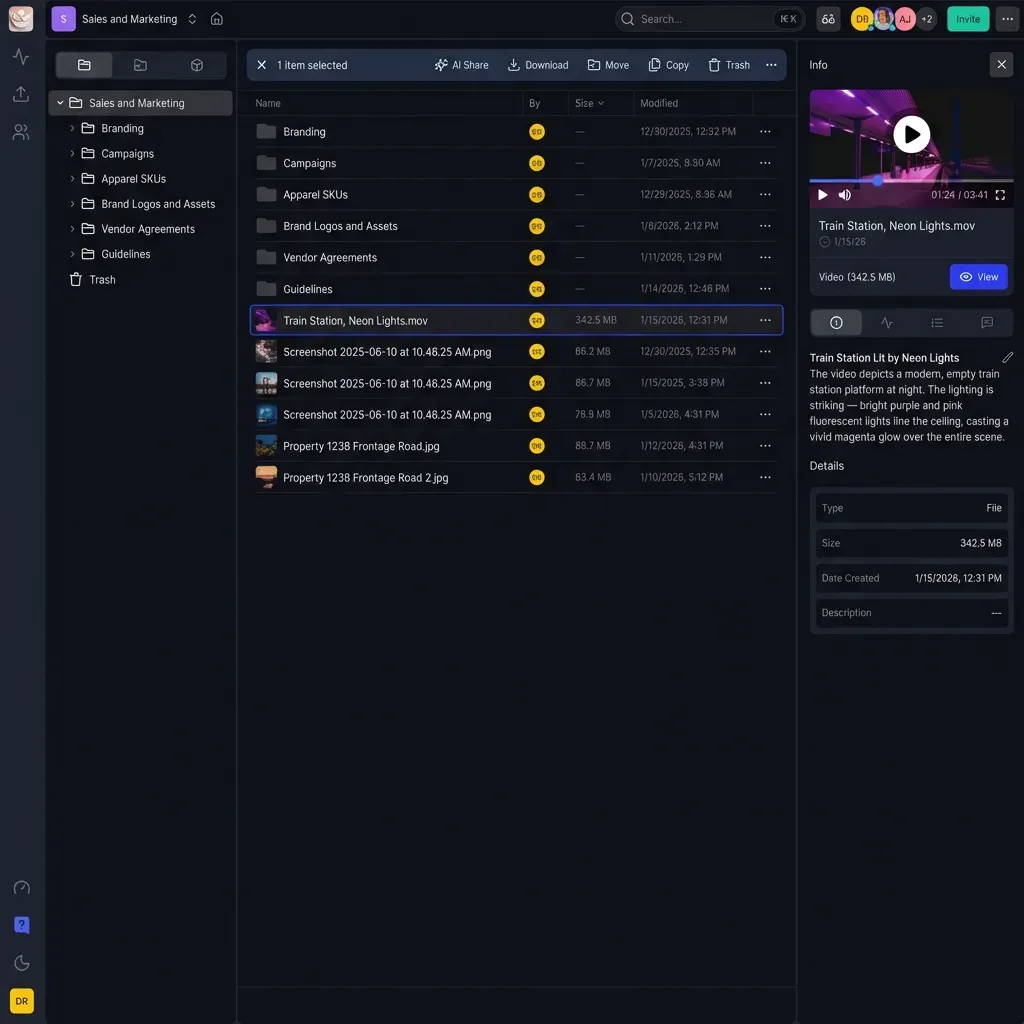

How Fastio Helps Scale Your Agents

Configuring load balancers and Redis clusters is a lot of work. Fastio gives you a managed, serverless setup designed for AI agents and MCP workflows.

- 19 Consolidated Tools, Ready to Go: Get a full library of file and data tools via a high-performance, load-balanced MCP server.

- Scaling That Just Works: We handle the spikes. Our platform supports thousands of concurrent requests over SSE with zero configuration.

- Persistent State: We use Durable Objects to track sessions. You get the benefits of shared state without managing Redis yourself.

- Intelligence Mode: Built-in RAG indexes your files automatically. Agents query data through our infrastructure, ensuring fast responses no matter how many files you have.

- Security by Default: We handle TLS, authentication, and rate limiting for you, so your tools stay secure as you scale from one agent to a million.

Fastio takes care of scaling, monitoring, and security so you can focus on building agents that get the job done.

Frequently Asked Questions

How do I scale an MCP server?

Run multiple instances behind a Layer 7 load balancer like NGINX or AWS ALB. Use the SSE transport and, for stateful tools, turn on session stickiness so requests from the same agent always hit the same instance.

Can I run multiple MCP server instances?

Yes, running multiple instances is the best way to ensure your tools stay available. You'll need to use the HTTP/SSE transport, since stdio only works for local, single-process connections.

What load balancer works with MCP?

Any load balancer that can hold long-lived HTTP connections open and stream Server-Sent Events (SSE) without buffering will work. Popular choices include NGINX, HAProxy, Traefik, and cloud options like AWS Application Load Balancer (ALB).

How do I handle MCP server failures?

Use health checks and automatic failover in your load balancer. Moving session state to a store like Redis also helps, because it allows any server to take over a session if another one fails.

