How to Design Serverless AI Agent Architecture

Serverless AI agent architecture lets agents run on demand on FaaS platforms like AWS Lambda, with external services for state and coordination. This design scales automatically for bursty AI workloads and can cut costs compared to always-on servers, because you pay only while functions execute. You'll learn the components, single- and multi-agent patterns, state strategies, and how tools like Fastio provide persistent storage for agents.

What Is Serverless AI Agent Architecture?

Serverless AI agent architecture runs AI agents on-demand using function-as-a-service (FaaS) platforms. Agents execute in short-lived functions triggered by events, with no server management. Traditional agents run on persistent VMs, incurring idle costs. Serverless shifts compute to providers like AWS Lambda or Cloudflare Workers, billing only for execution time. State lives externally in databases or object storage. This fits AI agents that process queries sporadically. For example, a customer support agent activates on new tickets, calls an LLM, fetches data, and responds, all without provisioning servers.

Helpful references: Fastio Workspaces, Fastio Collaboration, and Fastio AI.

Core Components of Serverless AI Agent Architecture

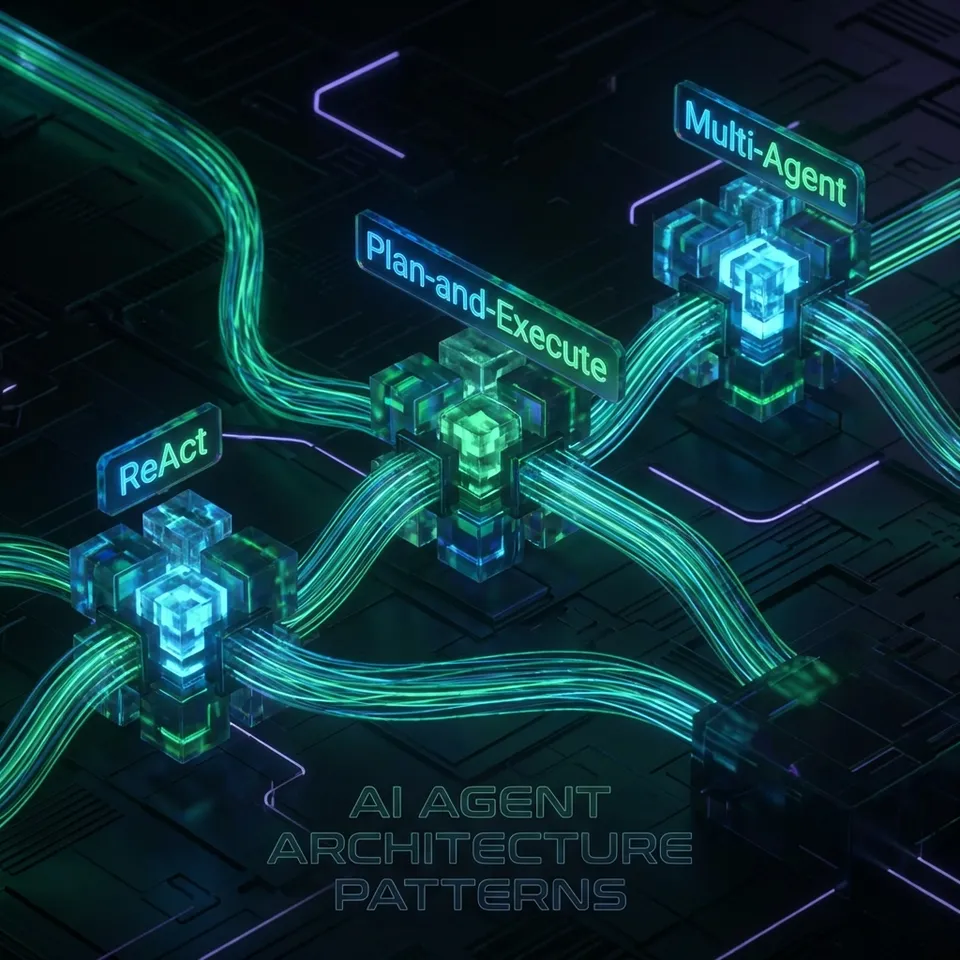

Serverless AI agent systems include these parts:

Event Triggers: HTTP requests, queues (SQS), or schedules start functions. Webhooks from tools like Fastio notify on file changes.

FaaS Compute: Lambda or equivalent runs agent logic: LLM calls, tool execution, decision loops.

LLM Provider: External API like Claude or OpenAI handles reasoning.

Tools: External services for actions. Fastio's MCP server offers multiple tools for file ops via Streamable HTTP.

State Store: Databases (DynamoDB) or files track conversation history and agent memory.

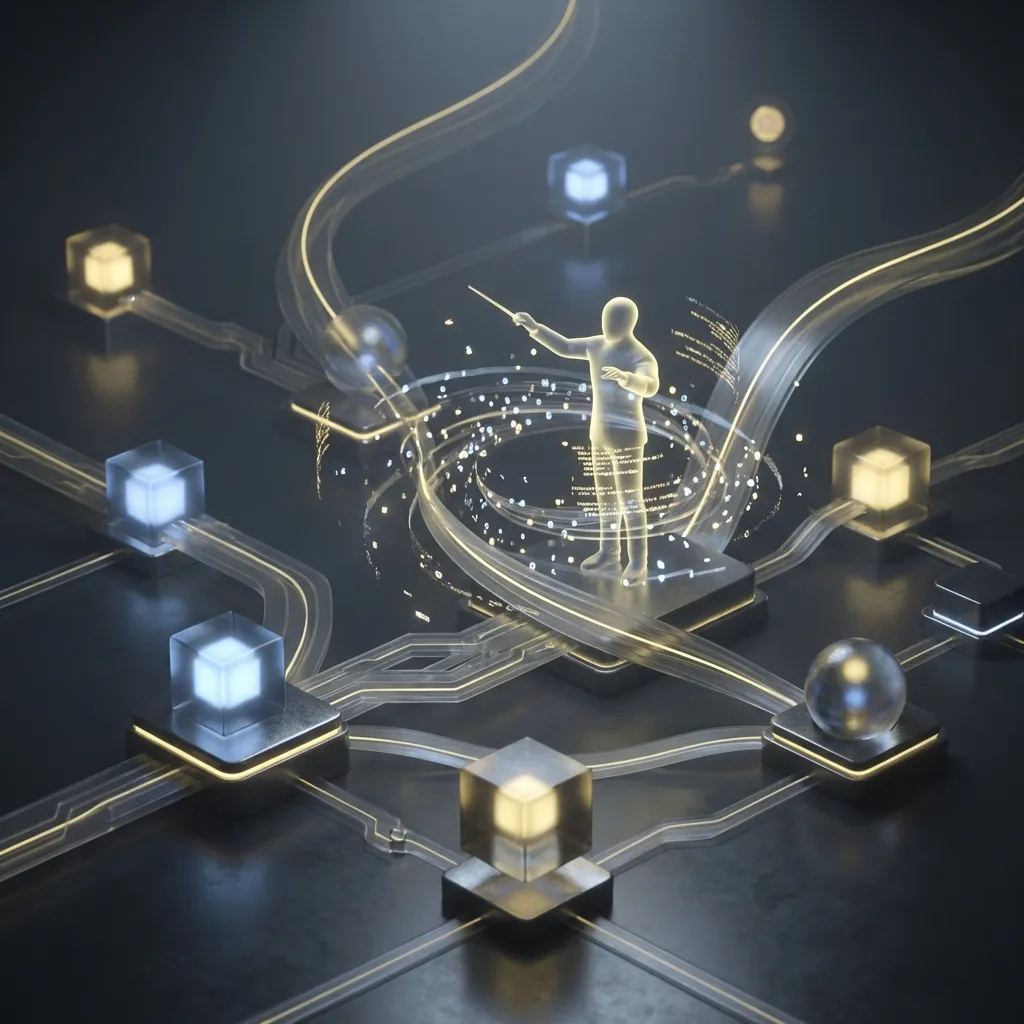

Orchestrator: For multi-agent, a supervisor routes tasks.

The diagram shows flow: trigger → function → LLM/tools → state update → response.
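The flow above can be sketched in a few lines of Python. This is a minimal illustration, not a production handler: `STATE` is an in-memory stand-in for an external store such as DynamoDB, and `fake_llm` and `handle_event` are hypothetical names introduced here.

```python
from typing import Callable, Dict, List

# In-memory stand-in for an external state store such as DynamoDB (assumption).
STATE: Dict[str, List[str]] = {}


def fake_llm(prompt: str) -> str:
    """Placeholder for a real LLM API call; it echoes the last prompt line."""
    return "echo: " + prompt.splitlines()[-1]


def handle_event(event: dict, llm: Callable[[str], str] = fake_llm) -> dict:
    """One invocation: trigger -> function -> LLM -> state update -> response."""
    session_id = event["session_id"]
    history = STATE.get(session_id, [])                      # load state
    prompt = "\n".join(history + [event["query"]])           # build context
    answer = llm(prompt)                                     # LLM/tools step
    STATE[session_id] = history + [event["query"], answer]   # state update
    return {"session_id": session_id, "answer": answer}      # response
```

In a real deployment the trigger would be an HTTP request or queue message, and the state store would live outside the function so that any instance can pick up the session.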

Single-Agent Serverless Patterns

Start with simple single-agent designs.

Stateless Pattern: Each invocation is independent. Use prompt engineering for context. Good for one-shot tasks like summarization.
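As a rough sketch of the stateless pattern, the handler below builds everything it needs from the incoming event and stores nothing. The names `build_prompt` and `stateless_handler`, the event fields, and the placeholder LLM are assumptions made for illustration.

```python
import json
from typing import Callable, Optional


def build_prompt(text: str, max_words: int = 50) -> str:
    """All context travels in the prompt, since nothing is stored between calls."""
    return (
        f"Summarize the following text in at most {max_words} words.\n\n"
        f"Text:\n{text.strip()}"
    )


def stateless_handler(event: dict, llm: Optional[Callable[[str], str]] = None) -> dict:
    """Handle a one-shot summarization request with no external state."""
    # The default LLM is a placeholder; pass a real client call in practice.
    llm = llm or (lambda prompt: "[summary placeholder]")
    prompt = build_prompt(event["text"], event.get("max_words", 50))
    return {"statusCode": 200, "body": json.dumps({"summary": llm(prompt)})}
```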

Stateful with External DB: Save/load state from DynamoDB or Redis. Agent resumes from last checkpoint.

Example AWS Lambda agent:

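    import boto3

    dynamodb = boto3.resource('dynamodb')
    table = dynamodb.Table('agent-state')

    def lambda_handler(event, context):
        # Load prior state; get_item omits 'Item' when the key is not found
        item = table.get_item(Key={'session_id': event['session_id']}).get('Item', {})
        history = item.get('history', [])

        # LLM call (llm_call is a placeholder for your provider's client), then update state
        response = llm_call(history + [event['query']])
        updated_history = history + [event['query'], response]

        table.put_item(Item={'session_id': event['session_id'], 'history': updated_history})
        return response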

Because the function holds no state of its own, this pattern scales with invocation volume, and you pay only for requests and execution time.

Multi-Agent Coordination in Serverless

Multi-agent systems divide tasks: researcher, writer, reviewer. Serverless adds challenges: stateless functions, cold starts, coordination.

Shared State: Use centralized stores like Fastio workspaces. Agents read/write files with locks to avoid conflicts.

Supervisor Pattern: Central Lambda routes tasks via queues. Agent A outputs to queue, supervisor dispatches to B.
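One way to picture the supervisor pattern is the sketch below. It uses an in-process `deque` as a stand-in for a managed queue such as SQS, and the `NEXT_ROLE` routing table and `supervise` function are hypothetical names chosen for this example.

```python
from collections import deque
from typing import Callable, Dict, Optional

# Hypothetical routing table: which role receives a task after the current one.
NEXT_ROLE: Dict[str, Optional[str]] = {
    "researcher": "writer",
    "writer": "reviewer",
    "reviewer": None,
}


def supervise(task: dict, agents: Dict[str, Callable[[dict], dict]]) -> dict:
    """Route a task through the agents in order, one queue message at a time."""
    queue = deque([("researcher", task)])  # stand-in for a real queue such as SQS
    while queue:
        role, payload = queue.popleft()
        result = agents[role](payload)     # in production: invoke that agent's function
        next_role = NEXT_ROLE[role]
        if next_role is None:
            return result                  # last agent finished
        queue.append((next_role, result))  # dispatch output to the next agent
    raise RuntimeError("queue drained without a final result")
```

With real queues, each agent would run as its own function and the supervisor would only read results and enqueue the next message.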

File-Based Handoff: Agents write JSON artifacts to shared storage. Fastio supports ownership transfer: agent builds workspace, hands to human.
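A file-based handoff might look like the following sketch, which uses a local directory as a stand-in for shared storage. It does not use any Fastio API; `write_artifact` and `read_artifact` are hypothetical helpers, and the write-then-rename step is one common way to keep readers from seeing a half-written file.

```python
import json
import os
import tempfile
from pathlib import Path


def write_artifact(shared_dir: Path, name: str, payload: dict) -> Path:
    """Write a JSON artifact via a temp file and rename, so readers never see partial data."""
    shared_dir.mkdir(parents=True, exist_ok=True)
    fd, tmp_path = tempfile.mkstemp(dir=shared_dir, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as f:
        json.dump(payload, f)
    target = shared_dir / f"{name}.json"
    os.replace(tmp_path, target)  # atomic rename on the same filesystem
    return target


def read_artifact(shared_dir: Path, name: str) -> dict:
    """Load the artifact that a previous agent left behind."""
    return json.loads((shared_dir / f"{name}.json").read_text(encoding="utf-8"))
```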

Event-Driven: Webhooks trigger next agent. Fastio notifies on file changes, perfect for reactive workflows.
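The sketch below shows one possible shape for a webhook-triggered handler. The payload fields (`type`, `path`), the `file.changed` event name, and the HMAC-SHA256 signature scheme are assumptions for illustration only; check your storage provider's webhook documentation for the actual format.

```python
import hashlib
import hmac
import json
from typing import Callable


def verify_signature(secret: bytes, body: bytes, signature_hex: str) -> bool:
    """Compare the sender's signature with one computed locally (assumed HMAC-SHA256)."""
    expected = hmac.new(secret, body, hashlib.sha256).hexdigest()
    return hmac.compare_digest(expected, signature_hex)


def on_webhook(secret: bytes, body: bytes, signature_hex: str,
               dispatch: Callable[[str], None]) -> int:
    """Return an HTTP status code; trigger the next agent on file-change events."""
    if not verify_signature(secret, body, signature_hex):
        return 401
    event = json.loads(body)
    if event.get("type") == "file.changed":  # hypothetical event name
        dispatch(event["path"])              # e.g. enqueue work for the next agent
    return 200
```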

Coordination is easy to overlook. When several agents touch the same files, use file locks to serialize concurrent access.
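To illustrate the locking idea, here is a minimal lock built on exclusive file creation in a local directory. It is a sketch of the general technique, not Fastio's lock mechanism, and `file_lock` is a hypothetical helper. A production lock would also need an expiry so a crashed agent cannot hold it forever.

```python
import os
from contextlib import contextmanager


@contextmanager
def file_lock(lock_path: str):
    """Hold an exclusive lock by creating a lock file; fail fast if it already exists."""
    # O_CREAT | O_EXCL makes creation atomic: it raises FileExistsError if held.
    fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    try:
        yield
    finally:
        os.close(fd)
        os.remove(lock_path)  # release the lock
```

An agent that hits `FileExistsError` can back off and retry, or hand the task back to the queue.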

State Management and Persistence

Serverless functions are ephemeral. Persist state externally.

Memory Types:

- Short-term: In-memory cache that lives only for the current invocation, so it is rarely relied on.

- Long-term: Object storage (S3), files. Fastio free agent tier: 50GB, 1GB max file, 5k credits/month.

Strategies:

- Checkpointing: Save after each step.

- Vector stores for RAG: Fastio Intelligence Mode auto-indexes files.

- Durable execution: Use workflows like Step Functions. Fastio example: Agents use MCP tools for URL import, locks, webhooks, no local I/O.
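The checkpointing strategy can be sketched as follows. The example saves progress to a local JSON file after each step and resumes from the last saved step on the next invocation; the file stands in for external storage, and `run_with_checkpoints` is a hypothetical helper.

```python
import json
from pathlib import Path
from typing import Callable, List, Optional


def run_with_checkpoints(steps: List[Callable[[dict], dict]],
                         checkpoint: Path,
                         state: Optional[dict] = None) -> dict:
    """Run steps in order, saving after each one so a retry resumes mid-way."""
    if checkpoint.exists():
        saved = json.loads(checkpoint.read_text(encoding="utf-8"))
    else:
        saved = {"next_step": 0, "state": state or {}}
    for i in range(saved["next_step"], len(steps)):
        saved["state"] = steps[i](saved["state"])
        saved["next_step"] = i + 1
        # Plain write for brevity; real storage should make this write atomic.
        checkpoint.write_text(json.dumps(saved), encoding="utf-8")
    return saved["state"]
```

If a step fails or the function times out, the next invocation skips the steps that already completed.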

Build Serverless Agents with Persistent Storage

Fastio gives agents 50GB free storage, 251 MCP tools, built-in RAG. No credit card, works with any LLM. Built for serverless agent architecture workflows.

Deployment Platforms and Examples

AWS Lambda: Mature, integrates Bedrock Agents. Cold starts ~100ms.

Vercel/Edge: Fast for web agents.

Cloudflare Workers: Global edge, low latency.

OpenClaw integration: clawhub install dbalve/fast-io for Fastio tools.

Production tips: Idempotency, retries, monitoring.
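Two of these tips, idempotency and retries, can be sketched briefly. The `_processed` dict stands in for a durable idempotency table, and `idempotent` and `retry` are hypothetical helpers. Event sources may deliver the same event more than once, so the handler records results by event key.

```python
import time
from typing import Any, Callable, Dict

# Stand-in for a durable idempotency table (e.g. a database keyed by event ID).
_processed: Dict[str, Any] = {}


def idempotent(key: str, fn: Callable[[], Any]) -> Any:
    """Run fn once per key; repeated deliveries return the stored result."""
    if key in _processed:
        return _processed[key]
    result = fn()
    _processed[key] = result
    return result


def retry(fn: Callable[[], Any], attempts: int = 3, base_delay: float = 0.1,
          sleep: Callable[[float], None] = time.sleep) -> Any:
    """Call fn, retrying on any exception with exponential backoff."""
    for attempt in range(attempts):
        try:
            return fn()
        except Exception:
            if attempt == attempts - 1:
                raise  # out of attempts: surface the error
            sleep(base_delay * 2 ** attempt)
```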

Pros, Cons, and Cost Analysis

Pros:

- Auto-scale: Handles bursts.

- No ops: Provider manages infra.

Cons:

- Cold starts: delays of up to about 500ms on the first invocation.

- Limits: 15-minute timeout on AWS Lambda, capped memory per function.

- Vendor lock-in.

Costs: Lambda bills per request plus execution duration. Bursty agents save compared with always-on EC2 instances.
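The request-plus-duration model can be written as a small formula. The function below is a sketch with all rates passed in as parameters, because prices vary by provider, region, and architecture; `lambda_monthly_cost` and its parameter names are hypothetical, and the rates in the usage note are made-up round numbers, not real prices.

```python
def lambda_monthly_cost(requests: int,
                        avg_duration_ms: float,
                        memory_gb: float,
                        price_per_million_requests: float,
                        price_per_gb_second: float) -> float:
    """Estimate cost as a request charge plus a compute (GB-second) charge."""
    request_cost = requests / 1_000_000 * price_per_million_requests
    gb_seconds = requests * (avg_duration_ms / 1000) * memory_gb
    return request_cost + gb_seconds * price_per_gb_second
```

For example, with illustrative rates of 0.20 per million requests and 0.00001 per GB-second, one million one-second invocations at 1 GB come to about 10.20. Substitute your provider's current published rates before comparing against an always-on instance.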

Frequently Asked Questions

What is serverless AI agent architecture?

Serverless AI agent architecture runs agents in FaaS functions triggered by events, with external state management for scalability. No servers to manage, ideal for sporadic workloads.

Pros and cons of serverless agents?

Pros: auto-scaling, low cost for bursts, no DevOps. Cons: cold starts, execution limits, state complexity. Best for event-driven tasks.

How to handle state in serverless AI agents?

Use external databases or file storage for persistence. Services like Fastio provide agent-native storage with locks and webhooks.

Best platforms for serverless AI agents?

AWS Lambda for maturity, Cloudflare Workers for edge, Vercel for web. Choose based on ecosystem.

Can serverless handle multi-agent systems?

Yes, via supervisors, queues, shared state. File-based handoffs with coordination prevent conflicts.
