How to Implement Multi-Camera Semantic Video Indexing for AI Analysis

Multi-camera semantic video indexing uses AI to link visual data across several feeds at once, creating a unified, searchable timeline. This process replaces manual scrubbing with sub-second retrieval. It allows teams to track subjects across different fields of view without losing track of who is who.

The Challenge of Manual Multi-Camera Correlation

Reviewing footage from one camera is tedious, but managing a network of ten or fifty cameras is overwhelming for most teams. When a subject moves from the lobby to a stairwell and then to a parking garage, traditional systems treat these as isolated video files. Security professionals usually have to open each file, sync the timestamps, and manually confirm the subject is the same person. This fragmentation creates a massive visibility gap where key details are lost in the transition between feeds.

Industry benchmarks show that correlating multi-camera feeds manually takes about 10x the real-time duration of the footage. Tracing a subject's path through multiple minutes of activity across multiple cameras could take a human operator five hours to piece together. This delay makes real-time response impossible and turns forensic investigations into multi-day projects. In a high-stakes environment like a hospital or a large transit hub, those lost hours translate to increased risk and massive labor costs.

Beyond the time investment, manual review is prone to cognitive fatigue. Studies in visual attention suggest that human operators begin to miss important events after just twenty minutes of monitoring. When multiple screens are involved, the error rate climbs even faster because the brain struggles to map spatial relationships between disconnected viewpoints. Semantic indexing fixes this by capturing the "meaning" of the video as it records, allowing the system to handle the heavy lifting of correlation.

Helpful references: Fastio Workspaces, Fastio Collaboration, and Fastio AI.

How Semantic Indexing Creates a Unified Timeline

Semantic video indexing works by turning raw pixels into high-dimensional vector embeddings. Instead of searching for "File_001.mp4," the system recognizes concepts like "person in a blue jacket" or "white van turning left." Deep learning models analyze frames and extract feature vectors to make this work. These vectors represent the visual and temporal essence of the footage in a mathematical space where similar concepts are grouped together.

Multi-camera environments rely on three key technical layers to make this work. First, the system needs sub-millisecond time synchronization. Without perfectly aligned timestamps, the AI cannot tell if an event in Camera A happened before an event in Camera B. A central Network Time Protocol (NTP) server usually handles this, keeping every recording device in the network in perfect lockstep.

The indexing engine then generates "re-identification" (Re-ID) signatures. These are unique mathematical descriptions of a subject's appearance that stay consistent even when camera angles or lighting change. A Re-ID signature focuses on stable features like clothing patterns, height ratios, and gait rather than transient factors like shadows or glare. A vector database then stores these embeddings for sub-second retrieval across thousands of hours of footage. When you search for a specific person, the system compares your query to the stored vectors and pulls every matching frame from every camera in the network.

This unified approach eliminates the need to manually stitch together timelines. By treating the entire camera network as a single data source, semantic indexing provides a continuous view of events. If a subject disappears from one camera, the index already knows which cameras share a boundary and where the subject is most likely to reappear. This spatial awareness is what separates true multi-camera indexing from basic file search.

Stream and Share Video on Fastio

Turn your multi-camera video archives into a searchable knowledge base. Index thousands of hours of footage and find any moment in seconds with Fastio's Intelligence Mode. Built for multi camera semantic video indexing workflows.

Implementing Cross-Camera Tracking Persistence

The hardest part of multi-camera analysis is tracking someone through "blind spots" where no cameras are present. Modern AI handles this using spatial and temporal reasoning. Instead of just looking at pixels, the system builds a spatial graph that represents the physical layout of your facility. This graph tells the AI which camera feeds are adjacent and what the typical travel time is between them.

When a subject exits the frame of Camera A, the system does not just stop tracking. It predicts where the subject should reappear based on their last known speed and direction. For example, if a subject enters a hallway from the North, the semantic index focuses on matches on the South exit camera within a specific time window. This localized search window reduces the computational load and makes the automated timeline much more accurate. It prevents the system from accidentally matching the subject with a similar-looking person on the other side of the building.

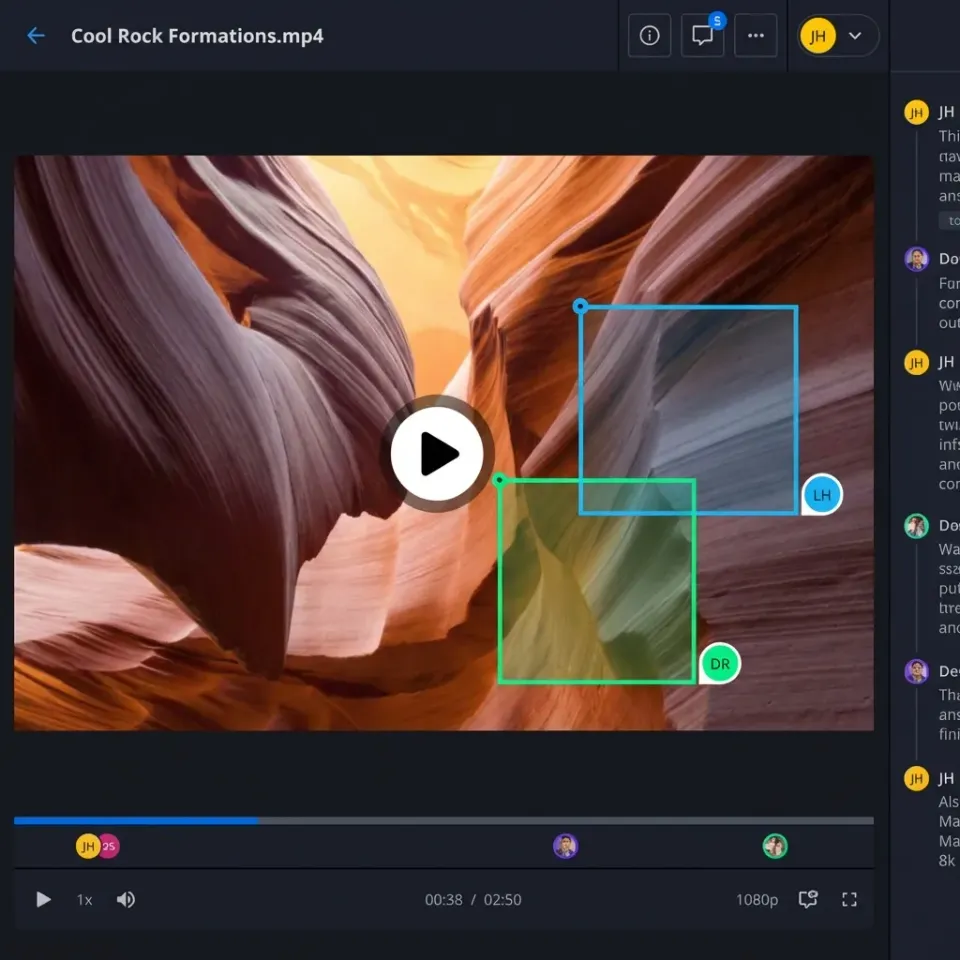

Handling variations in lighting and camera quality is another important part of persistence. A subject might look different under fluorescent office lights compared to bright sunlight in a parking lot. Advanced Re-ID models are trained to be "invariant" to these changes, focusing on structural features that remain constant. Fastio workspaces manage this by grouping related camera streams into one environment where Intelligence Mode indexes them as a single unit. This gives the AI access to the full context of the subject's journey, which leads to much higher confidence scores in subject matching.

This lets teams follow a "digital breadcrumb trail" without ever losing the subject. Whether the person is moving through a warehouse, a retail store, or an office complex, the semantic index maintains the connection. This level of persistence is essential for modern forensic workflows where speed and accuracy are the top priorities. Without it, the "multi-camera" aspect is just a collection of separate views rather than a unified system.

A Step-by-Step Implementation Guide

Building a multi-camera semantic indexing pipeline involves balancing local data ingestion and cloud processing. A successful setup requires careful preparation of your hardware and a clear understanding of your search goals. By following a structured approach, you can ensure that your video data is not only stored but also fully actionable for AI analysis.

This process is designed to be scalable, meaning you can start with a few key cameras and expand as your needs grow. The focus remains on creating a high-quality index that provides reliable results during high-pressure investigations. Keep a record of your settings to help maintain the system as it grows.

1. Synchronize Internal Clocks

Set all cameras and recording servers to a unified Network Time Protocol (NTP) source. Even a five-second drift can break the logic the AI uses to track subjects moving between feeds. For better results, use a local NTP stratum server to minimize latency between devices on your network.

2. Configure Stream Aggregation

Upload your multi-cam streams to a Fastio workspace. Use the "URL Import" tool to pull directly from your cloud storage or S3 buckets. This avoids local I/O bottlenecks and keeps your data ready for indexing. If you are working with large volumes of high-resolution footage, use a staged upload to manage bandwidth.

3. Activate Intelligence Mode

Turn on Intelligence Mode in the workspace. Fastio will start generating semantic embeddings for your video files. This process pulls out metadata, recognizes objects, and builds the vector index for natural language search. The system does the heavy work in the background, so your files become searchable without manual tagging.

4. Establish Spatial Rules

Map out the relationships between your cameras in your workspace configuration. Identify which feeds are next to each other and document the expected transit times between them. This information helps the Re-ID algorithm maintain tracks as subjects move through the area, especially in layouts with complex hallways or multiple floor levels.

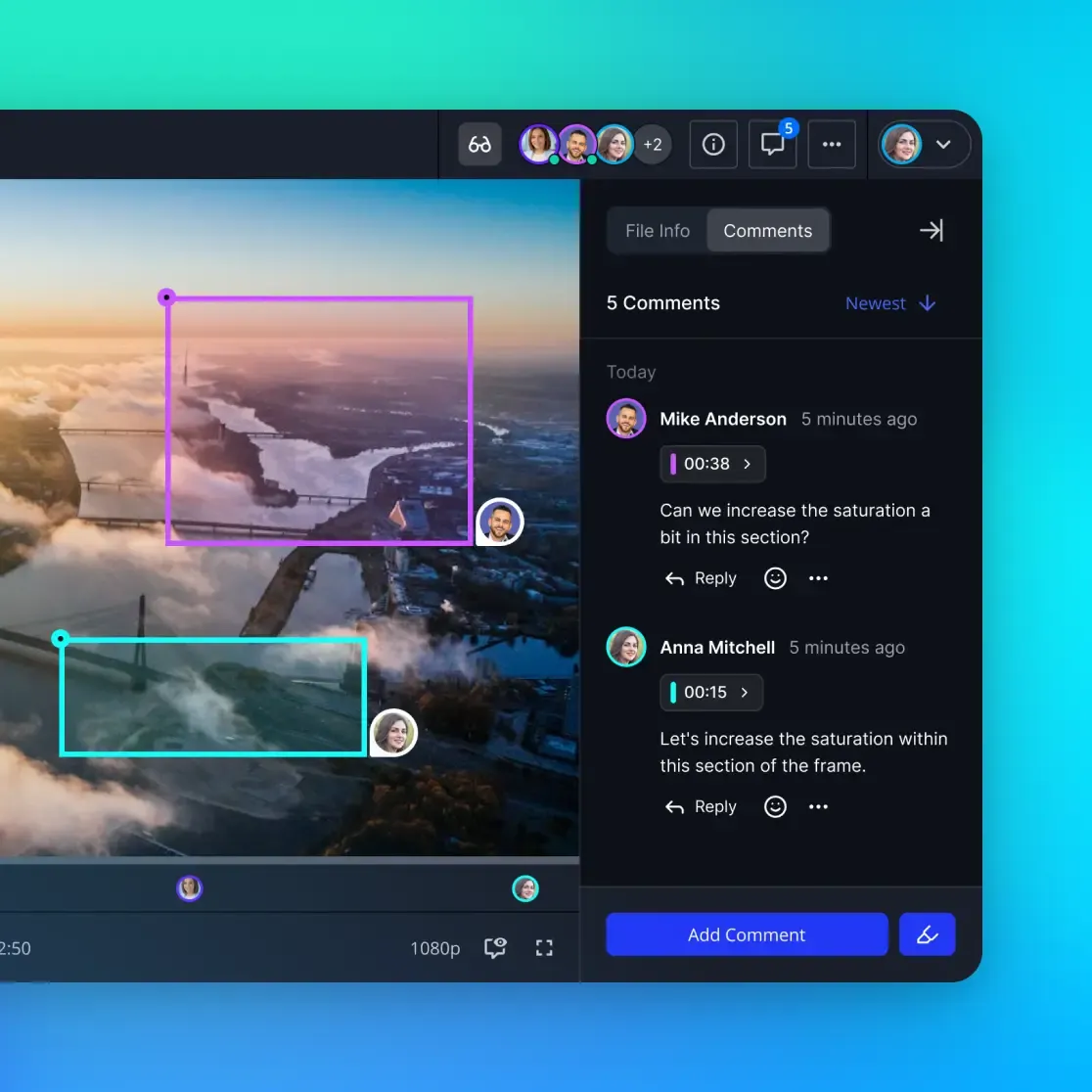

5. Query via Natural Language

Use the chat interface to find specific moments. Instead of scrubbing through timelines, just ask: "Show me the person in the red hat moving from the entrance to the elevator." The system will scan the multi-camera index and present a unified timeline of the events. You can then export these clips as a single evidence package for further review.

Best Practices for Large-Scale Video Indexing

Managing multi-camera indexing at scale requires more than just powerful software. It requires a disciplined approach to data management and camera placement. To get the most out of your semantic index, focus on these operational best practices.

First, fine-tune your camera angles for feature extraction. While a wide-angle "bird's eye" view is good for general awareness, it often lacks the detail needed for high-confidence Re-identification. Positioning cameras at eye level at key "choke points," such as doorways or narrow corridors, gives the AI better data to build a subject's visual signature.

Second, consider the balance between video resolution and processing speed. While multiple video offers high-resolution detail, it also requires much more computational power and storage bandwidth to index. For many semantic search applications, 1080p is the "sweet spot" that provides enough detail for accurate object recognition while keeping the indexing process fast and efficient.

Finally, maintain a consistent lighting environment where you can. Sudden changes in brightness or color temperature can sometimes confuse Re-ID models. Using infrared-capable cameras for low-light areas or using consistent indoor lighting helps the system maintain a stable track as subjects move between different zones.

Evidence and Performance Benchmarks

Switching to a semantic indexing model provides clear improvements in both speed and accuracy. Manual video search is limited by human attention spans, which drop off quickly after just multiple minutes of monitoring. AI-driven systems don't get tired and can process massive amounts of data at the same time, so no detail is missed.

Research into semantic search performance shows that AI indexing can cut search time by up to 90% compared to traditional manual methods. While a person might take hours to find a specific event, a vector-based search engine can find the exact frames in under multiple milliseconds. In retail environments, this technology has been shown to reduce the time spent on loss prevention investigations by over multiple%, allowing teams to resolve cases in minutes rather than days.

Accuracy for cross-camera tracking has also improved. Modern Re-ID algorithms can maintain subject tracks with over multiple% accuracy in controlled environments. Even in crowded public spaces, these systems outperform human operators who often lose track of subjects in high-traffic areas. By combining these benchmarks with the simplicity of a unified workspace, teams can work at a level that was not possible before.

This data confirms that semantic indexing is not just a convenience; it is a major change in how we handle video intelligence. The ability to treat thousands of hours of footage as a searchable database changes the economics of security and media management, making it feasible to analyze data that would otherwise be left untouched on a hard drive.

Frequently Asked Questions

What is the difference between keyword and semantic video search?

Keyword search relies on manual tags like 'Entrance' or 'multiple:multiple PM.' Semantic search understands the actual content of the video, so you can search for visual concepts like 'man carrying a box' or 'car with a broken taillight' without needing manual labels. This allows for much more flexible and powerful querying of your video archives.

How do you track a person across multiple cameras?

The system uses Re-identification (Re-ID) algorithms to create a visual signature of the person. This signature is compared across different camera feeds. Even if the person changes their angle or moves into different lighting, the AI recognizes the mathematical pattern of their appearance to maintain a single track. This process is much faster and more reliable than manual tracking by a human operator.

Can I use existing surveillance cameras for semantic indexing?

Yes, as long as you can export the video files or provide an RTSP stream. The semantic indexing happens at the software level, so you do not need 'AI cameras' at the edge; you can process standard footage through a cloud-based workspace like Fastio. This means you can upgrade your existing security infrastructure with modern AI capabilities without replacing your hardware.

How long does it take to index a video feed?

Fastio processes video files quickly once they are uploaded to a workspace with Intelligence Mode enabled. Most files are indexed in a fraction of their total duration, making them searchable shortly after the upload finishes. The exact time depends on the video resolution and the complexity of the content, but the system is designed for high-throughput processing.

Does semantic indexing work in low-light or night-vision conditions?

Modern semantic indexing models are trained on a wide variety of lighting conditions, including infrared and low-light footage. While clarity is always better for accuracy, the system can still extract structural features and gait patterns even in challenging environments. This ensures that your search capabilities remain active multiple/multiple, regardless of the time of day.

Is multi-camera indexing limited to security applications?

No, this technology is also widely used in sports analysis, retail analytics, and media production. For example, a sports team might use it to track players across multiple stadium cameras, or a retail store might use it to analyze customer flow through different departments. Any environment with multiple camera feeds can benefit from a unified, searchable index.

How does the system handle subjects who change their appearance?

Re-ID models focus on features that are difficult to change quickly, such as height, build, and movement patterns. While a subject putting on a jacket might lower the matching confidence, the system often uses temporal context, such as knowing that the subject was just seen in an adjacent room, to maintain the track. This combination of visual and spatial logic provides a high level of reliability.

Can I search for specific objects besides people and vehicles?

Yes, the semantic index can be configured to recognize a wide range of objects, including bags, tools, or specific types of equipment. This makes it a powerful tool for industrial safety audits or operational monitoring where you need to find specific items across a large facility. You can ask the system to "find the person carrying a ladder" to see all relevant footage.

Related Resources

Stream and Share Video on Fastio

Turn your multi-camera video archives into a searchable knowledge base. Index thousands of hours of footage and find any moment in seconds with Fastio's Intelligence Mode. Built for multi camera semantic video indexing workflows.