How to Set Up a Llama Agent Workspace

Llama agent workspaces combine persistent storage, RAG, and collaboration for AI agents running Llama models. Fastio's free plan gives you 50 GB storage, 5,000 monthly credits, and 5 workspaces. No credit card needed. This step-by-step guide walks you through setup from account creation to multi-agent workflows.

What Is a Llama Agent Workspace?

Llama agent workspaces address a common problem when building AI agents with Llama models. Models like Llama 3.1 handle tool use and long reasoning chains well, but they need persistent storage to keep track of state between runs. Without it, agents forget everything after each session, which makes long or multi-session tasks impractical.

Llama models are well suited to agentic workflows. On HumanEval, Llama 3.1 70B scores 80.5% pass@1, which makes it a solid choice for code generation, and Llama 3.1 also supports tool calling for multi-step processes. With 711k downloads on Hugging Face in the month before this guide was written, Llama 3.1 is one of the most popular choices for agent development.

Frameworks like LlamaIndex provide agent tools and storage examples. Local setups suit solo developers, but teams need cloud storage to share files across agents and humans.

Fastio workspaces solve this by combining persistent storage with built-in RAG. Upload documents, enable Intelligence Mode, and query contents with citations. For example, ask "Summarize Q4 sales from the CSVs I uploaded" to get cited insights across all files.

The next section compares Fastio with other storage options for Llama agents.

Reliable storage supports multi-step agent workflows. Without it, agents lose context and fail on longer tasks like research or reports.

Why Fastio for Llama Model Agent Collaboration?

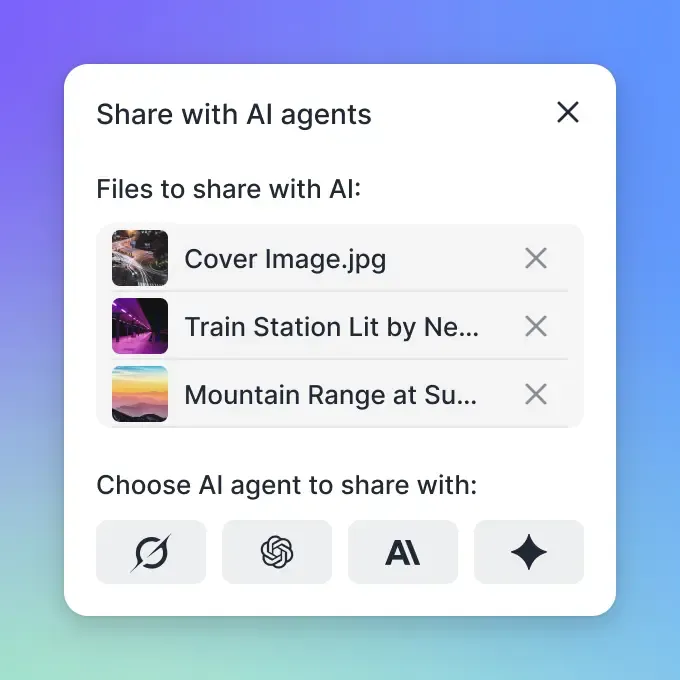

Fastio lets Llama agents share workspaces with humans. Agents reach every UI feature through 19 consolidated MCP tools, while humans use the web interface.

Free agent tier: 50GB storage and 5,000 credits per month, with no credit card required. Credits are consumed at these rates:
- Storage: 100 credits per GB
- Bandwidth: 212 credits per GB
- AI usage: 1 credit per 100 tokens
- Document ingestion: 10 credits per page

The tier also includes 5 workspaces, 50 shares, and 5 members per workspace.
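The credit rates make it easy to check a monthly budget before you deploy. The sketch below is a rough estimator that uses only the rates listed for the free tier. It assumes storage is charged per GB held during the month, since the tier description does not state the billing period. The usage figures are made up for illustration.

```python
# Credit rates from the free agent tier, stored as (credits, per N units).
# Assumption: storage is charged per GB held during the month; the guide does not say.
MONTHLY_CREDITS = 5_000
RATES = {
    "storage_gb": (100, 1),      # 100 credits per GB stored
    "bandwidth_gb": (212, 1),    # 212 credits per GB transferred
    "ai_tokens": (1, 100),       # 1 credit per 100 AI tokens
    "ingest_pages": (10, 1),     # 10 credits per ingested page
}


def estimate_credits(usage: dict) -> float:
    """Sum the credits for one month of usage; keys must match RATES."""
    total = 0.0
    for key, amount in usage.items():
        credits, per = RATES[key]
        total += credits * amount / per
    return total


# Illustrative month: 10 GB stored, 2 GB served, 150k tokens, 100 pages ingested.
usage = {"storage_gb": 10, "bandwidth_gb": 2, "ai_tokens": 150_000, "ingest_pages": 100}
total = estimate_credits(usage)
print(f"{total:.0f} of {MONTHLY_CREDITS} credits")  # 3924 of 5000 credits
```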

Fastio benefits for Llama agents:
- Native MCP support: 19 consolidated tools covering every UI action, streamed over HTTP or SSE.
- Zero-config RAG: toggle Intelligence Mode, and files are indexed automatically for semantic search and chat.
- Multi-agent ready: file locks prevent conflicts, and webhooks support reactive flows.
- Human handoff: the ownership transfer API lets the agent keep admin access after transferring ownership.

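MCP client example. This is a sketch that uses the official MCP Python SDK. The endpoint URL is a placeholder, and the tool names are the ones this guide uses:

import asyncio
from mcp import ClientSession
from mcp.client.streamable_http import streamablehttp_client
MCP_URL = "https://YOUR-FASTIO-MCP-ENDPOINT"  # placeholder; see /storage-for-agents/ for the real endpoint
HEADERS = {"Authorization": "Bearer your_token"}

async def main():
    async with streamablehttp_client(MCP_URL, headers=HEADERS) as (read, write, _):
        async with ClientSession(read, write) as session:
            await session.initialize()  # MCP handshake before any tool call
            files = await session.call_tool("list_files", {"workspace_id": "ws_123"})
            summary = await session.call_tool("ai_chat_create", {"workspace_id": "ws_123", "query": "Key insights from recent uploads?"})

asyncio.run(main())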

Cons and alternatives:
- Lacks built-in compute (pair with Replicate or RunPod for Llama inference).
- Vs. S3: more agent-friendly, with no egress fees on the free tier.

| Platform | Free Tier | MCP Tools | RAG | Locks | Ownership Transfer |
|----------|-----------|-----------|-----|-------|--------------------|
| Fastio | 50GB | 19 | Built-in | Yes | Yes |
| OpenAI Files | None | Limited | No | No | No |
| S3 | No | None | Manual | Manual | N/A |
| Pinecone | Vectors only | No | Yes | No | No |

Start Your Llama Agent Workspace Today

Fastio gives teams shared workspaces, MCP tools, and searchable file context for running Llama agent workflows with reliable handoffs between agents and humans.

Step 1: Create a Free Agent Account and Workspace

You'll need Python or Node, curl, jq, and an API token from the dashboard.

Sign up for a free account, copy your API key from the dashboard, and export it as TOKEN.

Create an organization and a workspace:

ORG_ID=$(curl -s -X POST https://api.fast.io/v1/orgs -H "Authorization: Bearer $TOKEN" -H "Content-Type: application/json" -d '{"name": "llama-team"}' | jq -r .id)

WS_ID=$(curl -s -X POST https://api.fast.io/v1/workspaces -H "Authorization: Bearer $TOKEN" -H "Content-Type: application/json" -d "{\"org_id\": \"$ORG_ID\", \"name\": \"llama-agent-prod\"}" | jq -r .id)

- Enable RAG:

curl -X PATCH https://api.fast.io/v1/workspaces/$WS_ID -H "Authorization: Bearer $TOKEN" -H "Content-Type: application/json" -d '{"intelligence_mode": true}'

- Upload test doc:

curl -X POST https://api.fast.io/v1/files -H "Authorization: Bearer $TOKEN" -F "file=@report.pdf" -F "workspace_id=$WS_ID"

- Test a RAG query in MCP chat: "Summarize the uploaded report."
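If your agent runs in Python rather than a shell, you can build the same REST calls with the standard library. This minimal sketch reuses the endpoints and JSON bodies from the curl commands in this step. It assumes standard `Authorization: Bearer` authentication and a JSON `Content-Type`. It only builds the requests, so you can inspect them before sending anything.

```python
import json
import urllib.request
from typing import Optional

API_BASE = "https://api.fast.io/v1"  # base URL used by the curl commands in this guide


def build_request(method: str, path: str, token: str, body: Optional[dict] = None) -> urllib.request.Request:
    """Build, but do not send, a JSON request mirroring one of the curl calls."""
    data = json.dumps(body).encode("utf-8") if body is not None else None
    req = urllib.request.Request(API_BASE + path, data=data, method=method)
    req.add_header("Authorization", f"Bearer {token}")  # assumed standard bearer auth
    if data is not None:
        req.add_header("Content-Type", "application/json")
    return req


create_org = build_request("POST", "/orgs", "YOUR_TOKEN", {"name": "llama-team"})
enable_rag = build_request("PATCH", "/workspaces/ws_123", "YOUR_TOKEN", {"intelligence_mode": True})

# Sending is one more call, e.g.: org_id = json.load(urllib.request.urlopen(create_org))["id"]
```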

Define clear tool contracts and fallback behavior so agents fail safely when dependencies are unavailable. This improves reliability in production workflows.
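One way to apply this advice is to give each tool a small contract: the arguments it requires and a safe fallback result. Every call then goes through a wrapper that never raises into the agent loop. The sketch below shows a generic pattern, not a Fastio API. `ToolContract`, `call_tool_safely`, and `unavailable_search` are hypothetical names.

```python
from dataclasses import dataclass
from typing import Any, Callable, Tuple


@dataclass(frozen=True)
class ToolContract:
    """What a tool needs (required argument names) and what to return if it fails."""
    name: str
    required: Tuple[str, ...]
    fallback: Any


def call_tool_safely(contract: ToolContract, fn: Callable[..., Any], args: dict) -> dict:
    """Call fn(**args) and return a uniform result dict instead of raising."""
    missing = [key for key in contract.required if key not in args]
    if missing:
        # Reject malformed calls before they reach the remote service.
        return {"ok": False, "result": contract.fallback, "error": f"missing args: {missing}"}
    try:
        return {"ok": True, "result": fn(**args), "error": None}
    except Exception as exc:  # e.g. network error, timeout, or service outage
        return {"ok": False, "result": contract.fallback, "error": f"{contract.name} failed: {exc}"}


def unavailable_search(workspace_id: str, query: str) -> list:
    # Hypothetical stand-in for a search tool whose backend is down.
    raise ConnectionError("search service unavailable")


search = ToolContract(name="search", required=("workspace_id", "query"), fallback=[])
outcome = call_tool_safely(search, unavailable_search, {"workspace_id": "ws_123", "query": "Q4 sales"})
print(outcome)
```

Because the agent always gets a dict with `ok`, `result`, and `error`, it can decide to retry, fall back, or tell the user, rather than crashing mid-task.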

Verify and Troubleshoot

Check indexing status in the dashboard under workspace settings; large files can take a few minutes to index. To view logs, call the events_search tool with {"workspace_id": WS_ID}. The most common errors are token rate limits and files that are too large (over 1GB).
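You can handle both common errors on the client side. This sketch checks file size before uploading and retries rate-limited calls with exponential backoff plus jitter. It treats the 1GB limit as 1 GiB, which is an assumption. `RateLimited` is a hypothetical exception standing in for however your client reports a rate-limit response.

```python
import os
import random
import time

# Assumption: reading the guide's 1GB limit as 1 GiB; check the actual limit for your plan.
MAX_UPLOAD_BYTES = 1024 ** 3


def check_upload_size(path: str) -> None:
    """Fail fast locally instead of waiting for a 'file too large' error from the API."""
    size = os.path.getsize(path)
    if size > MAX_UPLOAD_BYTES:
        raise ValueError(f"{path} is {size} bytes; the limit is {MAX_UPLOAD_BYTES} bytes")


class RateLimited(Exception):
    """Hypothetical exception your client raises when a token is rate-limited (e.g. HTTP 429)."""


def with_backoff(fn, retries: int = 5, base_delay: float = 0.5, sleep=time.sleep):
    """Call fn(), retrying on RateLimited with exponential backoff plus random jitter."""
    for attempt in range(retries):
        try:
            return fn()
        except RateLimited:
            if attempt == retries - 1:
                raise  # out of retries: surface the error to the caller
            sleep(base_delay * 2 ** attempt + random.uniform(0, base_delay))


attempts = {"count": 0}


def flaky_upload() -> str:
    # Hypothetical upload that is rate-limited twice before succeeding.
    attempts["count"] += 1
    if attempts["count"] < 3:
        raise RateLimited("429 Too Many Requests")
    return "uploaded"


result = with_backoff(flaky_upload, sleep=lambda seconds: None)  # skip real waiting in this demo
```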

Test RAG Query

Use MCP chat or the API: call ai_chat_create with {"workspace_id": WS_ID, "query": "List key metrics from uploaded files"}. The answer should include citations to specific files and pages.

Step 2: Install OpenClaw for Llama Integration

OpenClaw lets Llama models call MCP tools in natural language.

Install:

pip install clawhub

clawhub install dbalve/fast-io

The skill includes 14 tools, such as upload, list, search, and lock.

Llama code example (via Ollama):

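import ollama

# Note: a system prompt alone does not expose tools to the model; the upload only
# happens when OpenClaw (or your own code) executes the tool call the model requests.
response = ollama.chat(model="llama3.2", messages=[
    {"role": "system", "content": "Use Fastio OpenClaw tools."},
    {"role": "user", "content": "Upload sales-report.pdf to llama-agent-prod/reports/"},
])
print(response["message"]["content"])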

The upload tool handles chunked uploads of files up to 1GB automatically.
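With the `ollama` Python package, you pass tools through the `tools=` argument of `ollama.chat`. When the model decides to use one, `response["message"]["tool_calls"]` lists each call's `function.name` and `function.arguments`. Something, either OpenClaw or your own code, then has to execute those calls. The sketch below shows that dispatch step. The `upload` and `list_files` functions are hypothetical local stand-ins for the Fastio tools.

```python
from typing import Any, Callable


# Hypothetical local stand-ins for Fastio tools; a real setup calls the tool via OpenClaw or MCP.
def upload(path: str, destination: str) -> str:
    return f"queued {path} -> {destination}"


def list_files(folder: str) -> list:
    return []


TOOLS: dict = {"upload": upload, "list_files": list_files}


def dispatch(tool_calls: list) -> list:
    """Execute each requested tool call and build 'tool' messages to send back to the model."""
    replies = []
    for call in tool_calls:
        name = call["function"]["name"]
        args = call["function"]["arguments"]  # Ollama returns arguments as a dict
        fn: Callable[..., Any] = TOOLS.get(name)
        content = str(fn(**args)) if fn else f"unknown tool: {name}"
        replies.append({"role": "tool", "content": content})
    return replies


# Illustrative tool_calls in the shape Ollama returns under response["message"]["tool_calls"].
example_calls = [
    {"function": {"name": "upload",
                  "arguments": {"path": "sales-report.pdf", "destination": "llama-agent-prod/reports/"}}}
]
replies = dispatch(example_calls)
print(replies)
```

Each reply is appended to `messages` for the next `ollama.chat` call, so the model can report the result back to the user.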

Step 3: Configure Shared Storage for Multiple Llama Agents

Add members for collaboration:

curl -X POST https://api.fast.io/v1/workspaces/$WS_ID/members -H "Authorization: Bearer $TOKEN" -H "Content-Type: application/json" -d '{"user_id": "agent2", "role": "editor"}'

Use locks to avoid conflicts:

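# Llama Agent 1: acquire the lock before editing the shared file.
# `client` is a hypothetical Fastio client wrapper; acquire_lock/release_lock are the names this guide uses.
lock = client.acquire_lock(file_id="shared-plan.xlsx")
try:
    ...  # edit the file while holding the lock
finally:
    client.release_lock(lock.id)  # always release, even if the edit fails, so other agents aren't blocked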
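A lock only prevents conflicts if every agent honors it. To make the semantics concrete, here is a toy, in-process model of file locks. It allows one holder per file, lets only the holder release, and guarantees release through a context manager. It illustrates the pattern under those assumptions and is not how Fastio implements locks.

```python
import threading
from contextlib import contextmanager


class LockConflict(Exception):
    """Raised when another agent already holds the lock."""


class FileLockRegistry:
    """Toy in-process model of workspace file locks: at most one holder per file."""

    def __init__(self) -> None:
        self._holders: dict = {}
        self._mutex = threading.Lock()  # protects _holders across threads

    def acquire(self, file_id: str, agent: str) -> None:
        with self._mutex:
            holder = self._holders.get(file_id)
            if holder is not None and holder != agent:
                raise LockConflict(f"{file_id} is locked by {holder}")
            self._holders[file_id] = agent

    def release(self, file_id: str, agent: str) -> None:
        with self._mutex:
            # Only the holder may release; a stray release from another agent is ignored.
            if self._holders.get(file_id) == agent:
                del self._holders[file_id]

    @contextmanager
    def locked(self, file_id: str, agent: str):
        """Hold the lock for the duration of a with-block, releasing it even on errors."""
        self.acquire(file_id, agent)
        try:
            yield
        finally:
            self.release(file_id, agent)


locks = FileLockRegistry()
conflict = None
with locks.locked("shared-plan.xlsx", "agent-1"):
    try:
        locks.acquire("shared-plan.xlsx", "agent-2")  # a second agent tries to edit concurrently
    except LockConflict as err:
        conflict = str(err)
locks.acquire("shared-plan.xlsx", "agent-2")  # succeeds: agent-1 released on leaving the block
```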

Register a webhook to react to new uploads:

curl -X POST https://api.fast.io/v1/webhooks \
  -H "Authorization: Bearer $TOKEN" \
  -H "Content-Type: application/json" \
  -d @- <<EOF
{
  "workspace_id": "$WS_ID",
  "events": ["file_uploaded"],
  "url": "https://your-llama-server/webhook"
}
EOF
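On the receiving side, the webhook URL needs a small HTTP endpoint that reads each event and decides whether to start an agent run. This standard-library sketch assumes a JSON payload with the event name under `event` and the file under `file_id`. Those keys are hypothetical, so check the actual payload before relying on them.

```python
import json
from http.server import BaseHTTPRequestHandler, HTTPServer


def handle_event(event: dict) -> str:
    """Decide what to do with one webhook payload."""
    # Assumption: the payload names the event under "event" and the file under "file_id";
    # confirm the real payload shape before relying on these keys.
    if event.get("event") == "file_uploaded":
        return f"start agent run for {event.get('file_id', 'unknown file')}"
    return "ignored"


class WebhookHandler(BaseHTTPRequestHandler):
    def do_POST(self) -> None:
        length = int(self.headers.get("Content-Length", 0))
        event = json.loads(self.rfile.read(length) or b"{}")
        action = handle_event(event)
        # Acknowledge quickly; a real server would run long agent work outside the request.
        self.send_response(200)
        self.send_header("Content-Type", "text/plain")
        self.end_headers()
        self.wfile.write(action.encode("utf-8"))


def serve(port: int = 8080) -> None:
    """Start the receiver (blocks forever); the registered webhook URL should point here."""
    HTTPServer(("0.0.0.0", port), WebhookHandler).serve_forever()
```

Keeping the routing in `handle_event` makes it testable without starting a server.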

LlamaIndex: Connect Fastio as a custom retriever via REST API.
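A retriever turns a query into ranked chunks that an answer can cite. The sketch below does that normalization in plain Python around a hypothetical `search_fn`, which wraps whichever Fastio REST search call you use. The hit keys (`text`, `score`, `file`) are assumptions.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class RetrievedChunk:
    text: str
    score: float
    source: str  # file the chunk came from, kept so answers can cite it


# Hypothetical: wraps whichever Fastio REST search call you use and returns raw hit dicts.
SearchFn = Callable[[str], List[dict]]


def retrieve(query: str, search_fn: SearchFn, top_k: int = 3) -> List[RetrievedChunk]:
    """Normalize raw search hits and return the top_k highest-scoring chunks."""
    chunks = [
        RetrievedChunk(text=hit["text"], score=float(hit.get("score", 0.0)), source=hit.get("file", "unknown"))
        for hit in search_fn(query)
    ]
    return sorted(chunks, key=lambda chunk: chunk.score, reverse=True)[:top_k]


def fake_search(query: str) -> List[dict]:
    # Illustrative hits in an assumed shape; a real search_fn would call the API.
    return [
        {"text": "Q4 revenue rose in the west region.", "score": 0.42, "file": "q4-sales.csv"},
        {"text": "Q4 targets and owners.", "score": 0.87, "file": "q4-plan.pdf"},
    ]


top = retrieve("Q4 sales", fake_search, top_k=1)
print(top[0].source)  # q4-plan.pdf
```

To use this from LlamaIndex, subclass `BaseRetriever` from `llama_index.core.retrievers`. In its `_retrieve(self, query_bundle)` method, call `retrieve(query_bundle.query_str, search_fn)` and wrap each chunk as a `NodeWithScore` around a `TextNode`, keeping `source` in the node's metadata for citations.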


Advanced: Multi-LLM Agent Collaboration

Agents running different LLMs, such as Llama alongside other models, can share one workspace.

Ownership transfer:

curl -X POST https://api.fast.io/v1/orgs/$ORG_ID/transfer -H "Authorization: Bearer $TOKEN" -H "Content-Type: application/json" -d '{"to_user_id": "human@example.com"}'

Handoff example: a Llama agent sets up a data room, creates a share link, and emails it to a human teammate.

Multi-agent work: File locks prevent overwrites in parallel edits.

Scaling: Webhooks trigger re-indexing on file changes.


Frequently Asked Questions

Which workspace works best for Llama agents?

Fastio offers 50GB free storage, built-in RAG, 19 consolidated tools, and human collaboration. Works directly with Llama 3.x.

Can Llama agents share storage on Fastio?

Yes. Fastio supports unlimited guests, file locks for concurrent access, and role-based permissions.

What's in the free tier for agent workspaces?

50GB storage, 5,000 credits/month, and 5 workspaces, which is enough for many small agent workflows.

Can LlamaIndex agents use Fastio storage?

Yes, via REST API or MCP tools. Intelligence Mode indexes files automatically.

What MCP tools work with Llama agents?

All 19 consolidated tools, which cover UI features such as upload, search, locks, and webhooks. They work with any LLM whose client supports MCP.
