How to Integrate Fastio API with Cloudflare Workers

Integrating the Fastio API with Cloudflare Workers lets developers handle file routing, authentication, and webhook processing directly at the edge. Running serverless functions close to your users cuts latency and offloads heavy I/O tasks from your primary backend. This guide covers setting up the integration, managing large file streams, and using edge intelligence.

Understanding Edge File Processing and Latency

Running code closer to the user cuts latency. Instead of making a user in Tokyo wait for a server in New York to authorize a file upload, you can process that request in a Tokyo data center, so the authorization round trip stays within the user's region.

Cloudflare Workers run on V8 isolates instead of standard containers. This means your serverless functions boot almost instantly. According to Cloudflare Docs, Cloudflare Workers offer 0ms cold starts for edge execution. You avoid the slow boot times common with older serverless platforms. Pairing this fast environment with the Fastio API gives you a quick way to intercept uploads. You can validate security tokens and apply business logic before any files touch your main servers.

This setup helps when building data-intensive applications. Bad or unauthenticated file uploads eat up bandwidth and memory. Catching these invalid requests at the edge protects your core application and keeps it responsive. Processing files at the edge also gives your users the lowest possible latency. They get faster upload speeds and a better experience.

Why Combine Fastio API with Cloudflare Workers?

Using the Fastio API in Cloudflare Workers removes the need for a standard middleware server. In a typical setup, you have to route file uploads through a backend framework like Node.js, Express, Django, or Ruby on Rails. You do this just to authenticate the session, generate a presigned upload URL, or check the payload. That old method adds extra network hops, increases latency, and struggles to scale with large files.

Moving this logic to the edge lets the Cloudflare Worker catch the client request right away. The Worker can check the user's JWT or session token on its own. It then makes a secure server-to-server fetch call to the Fastio API to get a temporary upload token. Finally, it proxies the incoming file stream straight to Fastio storage. This direct path cuts transfer times and lowers the load on your main servers.

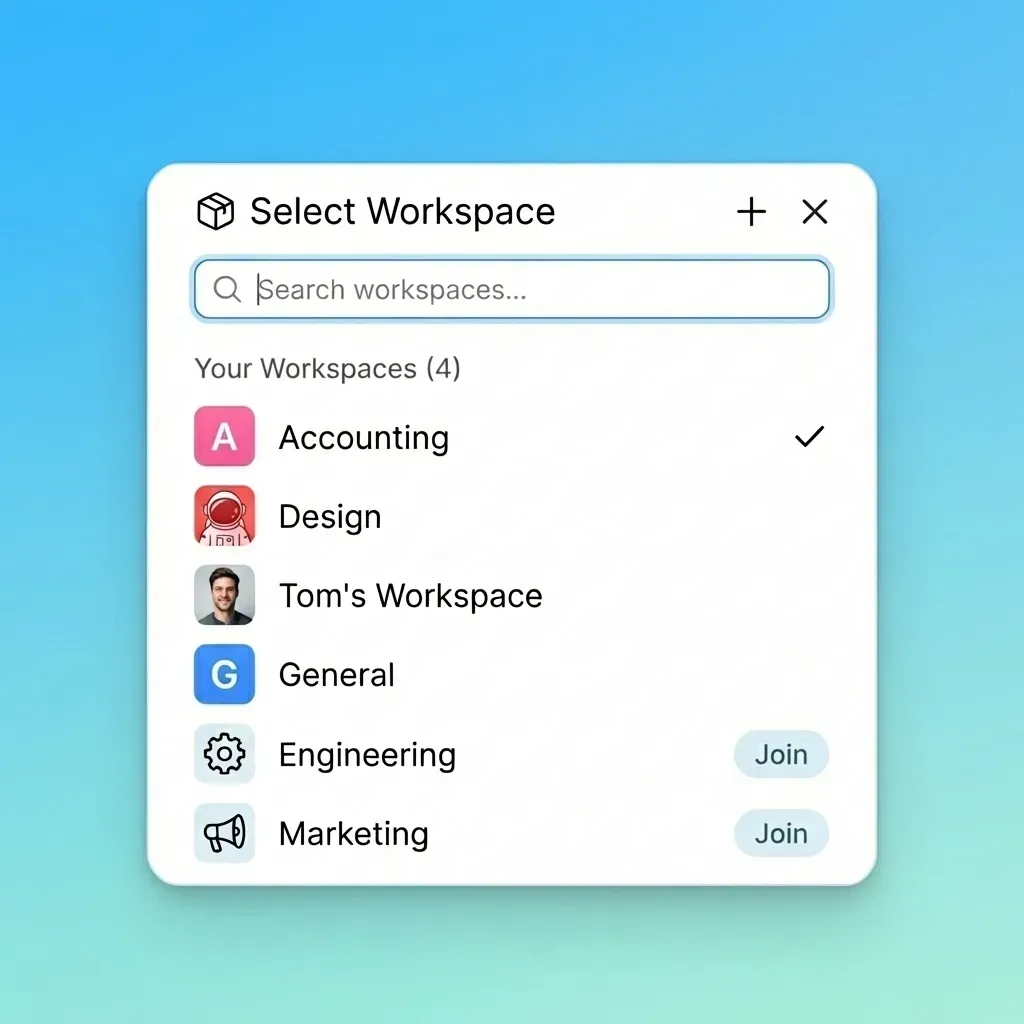

The operational benefits also stand out. The Fastio free agent tier provides 50GB of free storage and access to Fastio's MCP tools. This makes it easy for developers to build edge-native workflows without big upfront costs. You get reliable storage backed by Cloudflare's global network, while keeping strict control over your data routing.

Architecture: How Fastio Integrates at the Edge

An edge-first file integration separates the work into three parts: the client application, the Cloudflare Worker, and the Fastio API infrastructure. Knowing how data flows between them helps you build better integrations.

When a user starts a file upload, the client application sends an HTTP POST request with the file data to your Cloudflare Worker endpoint. The Worker checks the incoming request headers. It validates the authentication credentials and confirms the request passes basic security checks. After that, the Worker makes a secure API call to the Fastio endpoints to request an upload destination or presigned URL.

The Worker then streams the file payload directly to Fastio using standard web streams. This step avoids buffering the whole file in the Worker's memory. Streaming matters because V8 isolates have strict memory limits, usually around 128MB. Trying to load a large file into memory will crash the Worker. Using the Streams API, specifically the TransformStream interface, lets the Worker inspect file bytes as they pass through. You can reject bad file signatures or calculate checksums on the fly before the upload to Fastio finishes.

Prerequisites for Setting Up Your Edge Worker

Before writing any code, you need to set up your development environment and secure your credentials. This prep work prevents security issues down the line.

Start by creating a Cloudflare account if you don't have one. Then install the Wrangler CLI via npm. Wrangler is the official tool for building, testing, and deploying Cloudflare Workers. Run the init command to generate a new Worker project. This step creates your wrangler.toml config file and your main script.

You also need an active Fastio account. You can sign up for the free agent tier. Free accounts are subject to a per-file size limit, but Fastio Documentation states that the system supports files up to 250GB for premium tiers. This makes it a good fit for heavy multimedia workloads. Go to your Fastio developer dashboard and create a new API key with permissions to manage uploads and workspaces.

Keep your keys safe. Store your Fastio API key as an encrypted secret in your Cloudflare Worker environment. Never put your API credentials in your wrangler.toml file or commit them to version control. Use the Wrangler CLI to bind the secret to your Worker so it only loads during runtime.
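To make the runtime binding concrete, here is a minimal sketch of how a Worker might read the secret after it has been stored with `wrangler secret put`. The binding name `FASTIO_API_KEY`, the `Env` interface, the `fastioAuthHeaders` helper, and the Bearer scheme are all assumptions for illustration; check the Fastio documentation for the header format it actually expects.

```typescript
// Shape of the bindings this Worker expects. FASTIO_API_KEY is a hypothetical
// secret name, stored with: npx wrangler secret put FASTIO_API_KEY
interface Env {
  FASTIO_API_KEY?: string;
}

// Builds the auth headers from the secret and fails loudly if it is missing.
function fastioAuthHeaders(env: Env): Headers {
  if (!env.FASTIO_API_KEY) {
    throw new Error("FASTIO_API_KEY secret is not bound to this Worker");
  }
  // Assumption: the API accepts a Bearer token in the Authorization header.
  return new Headers({ Authorization: `Bearer ${env.FASTIO_API_KEY}` });
}
```

Because the key only arrives through the `env` argument at request time, nothing sensitive needs to appear in wrangler.toml or in the repository.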

Implementing the Fastio API Integration

Let's look at the implementation steps. Cloudflare Workers run on the standard Fetch API, which pairs well with Fastio's REST endpoints. In your Worker's index.js or index.ts file, export a default fetch handler to catch incoming network traffic.

When a POST request hits your Worker, extract the metadata from the headers. Pull out the filename, content type, and user ID. Then, make an authenticated fetch call to the https://api.fast.io/v1/uploads endpoint. Pass your API key in the authorization header. Fastio will return an upload URL or session token.
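The request for an upload destination could look roughly like the sketch below. Only the endpoint URL comes from this guide. The header names `X-Filename` and `X-User-Id`, the JSON request body, the Bearer scheme, and the `upload_url` response field are assumptions, so adjust them to the schema in the Fastio documentation. The optional `fetchFn` parameter exists only so the function can be exercised without network access.

```typescript
// Metadata the Worker pulls from the client request.
interface UploadMeta {
  filename: string;
  contentType: string;
  userId: string;
}

// Hypothetical header names; use whatever your client actually sends.
function readUploadMeta(request: Request): UploadMeta {
  return {
    filename: request.headers.get("X-Filename") ?? "upload.bin",
    contentType: request.headers.get("Content-Type") ?? "application/octet-stream",
    userId: request.headers.get("X-User-Id") ?? "anonymous",
  };
}

// Asks Fastio for an upload destination. The JSON body and the `upload_url`
// response field are assumptions, not the documented schema.
async function requestUploadUrl(
  meta: UploadMeta,
  apiKey: string,
  fetchFn: typeof fetch = fetch,
): Promise<string> {
  const res = await fetchFn("https://api.fast.io/v1/uploads", {
    method: "POST",
    headers: {
      Authorization: `Bearer ${apiKey}`,
      "Content-Type": "application/json",
    },
    body: JSON.stringify(meta),
  });
  if (!res.ok) throw new Error(`Fastio responded with status ${res.status}`);

  const data = (await res.json()) as { upload_url?: string };
  if (!data.upload_url) throw new Error("Response did not include an upload URL");
  return data.upload_url;
}
```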

After you get the destination URL, proxy the client's request.body straight to it. The body of a Fetch request is a ReadableStream, so Cloudflare automatically streams the data to Fastio in chunks. This method avoids buffering and keeps the Worker under its memory limits. Remember to wrap your external API calls in try/catch blocks. This helps you handle network timeouts, API rate limits, or dropped connections, so you can send proper HTTP status codes back to the client.
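A minimal sketch of the proxy step with error handling might look like this. The `proxyUpload` helper, the use of PUT against the destination URL, and the specific status codes returned to the client are assumptions chosen for illustration. The injected `fetchFn` parameter is there only to make the function easy to test.

```typescript
// Streams the client's body to the upload destination and maps failures
// to HTTP status codes. `uploadUrl` is the URL returned by Fastio.
async function proxyUpload(
  request: Request,
  uploadUrl: string,
  fetchFn: typeof fetch = fetch,
): Promise<Response> {
  if (request.method !== "POST" || !request.body) {
    return new Response("Expected a POST request with a body", { status: 400 });
  }

  try {
    // request.body is a ReadableStream, so it is forwarded in chunks
    // instead of being collected in memory first.
    const upstream = await fetchFn(uploadUrl, {
      method: "PUT", // assumption: the destination accepts PUT
      headers: {
        "Content-Type": request.headers.get("Content-Type") ?? "application/octet-stream",
      },
      body: request.body,
    });

    if (upstream.status === 429) {
      return new Response("Storage API rate limit reached", { status: 429 });
    }
    if (!upstream.ok) {
      return new Response("Storage API rejected the upload", { status: 502 });
    }
    return new Response("Uploaded", { status: 201 });
  } catch {
    // Thrown for network timeouts and dropped connections.
    return new Response("Could not reach the storage API", { status: 504 });
  }
}

// In a Worker, the default export's fetch handler would call proxyUpload
// once it has obtained the upload URL.
```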

Ready to build intelligent edge workflows?

Start integrating Fastio with your Cloudflare Workers today. Get 50GB of free storage and 19 consolidated tools with no credit card required.

Handling Large File Uploads and Streaming Data

Streaming data correctly is a core part of edge file processing. Cloudflare Workers cannot buffer large payloads. If a user uploads a very large video, loading that file into a JavaScript variable will crash the V8 isolate because of memory limits.

You need to pipe the incoming request stream directly to the Fastio API. Fastio supports streaming uploads out of the box. Beyond basic proxying, you can add a TransformStream to your Worker. This setup lets your Worker inspect data chunks as they move through the edge node. You can calculate SHA-256 file hashes, check for bad byte patterns, or remove metadata on the fly without holding the whole file in memory.
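As a rough illustration of inspecting chunks in flight, the sketch below passes bytes through a TransformStream and errors the stream if the leading bytes do not match an expected file signature. The `requireSignature` helper and the choice of the PNG signature are assumptions made for this example and are not part of the Fastio API.

```typescript
// The eight bytes every PNG file starts with (used here only as an example).
const PNG_SIGNATURE = new Uint8Array([0x89, 0x50, 0x4e, 0x47, 0x0d, 0x0a, 0x1a, 0x0a]);

// Hypothetical helper: returns a pass-through stream that errors when the
// leading bytes differ from `signature`.
function requireSignature(signature: Uint8Array): TransformStream<Uint8Array, Uint8Array> {
  let checked = 0; // number of signature bytes verified so far

  return new TransformStream<Uint8Array, Uint8Array>({
    transform(chunk, controller) {
      // The signature may be split across chunks, so keep a running offset.
      for (let i = 0; i < chunk.length && checked < signature.length; i++, checked++) {
        if (chunk[i] !== signature[checked]) {
          controller.error(new Error("Unexpected file signature"));
          return;
        }
      }
      controller.enqueue(chunk); // forward the bytes unchanged
    },
    flush(controller) {
      // The stream ended before the full signature was seen.
      if (checked < signature.length) {
        controller.error(new Error("Stream ended before the signature was complete"));
      }
    },
  });
}
```

In a Worker, `request.body.pipeThrough(requireSignature(PNG_SIGNATURE))` would yield a stream that can be passed as the body of the upstream `fetch` call, so only one chunk at a time is held in memory.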

For massive files that break standard streaming limits, try a different approach. Use the Cloudflare Worker as an orchestration layer. The Worker authenticates the user, gets a Fastio presigned direct-upload URL, and sends that URL back to the client. The browser or client app then uploads the file directly to Fastio servers. This bypasses the Worker for the actual data transfer. The Worker still manages the authentication, but Fastio's network handles the heavy lifting.
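The orchestration pattern can be sketched as a handler that authenticates the caller and hands back an upload URL without ever touching the file bytes. Everything named here is hypothetical: `handleUploadTicket`, the `verifyToken` and `getUploadUrl` callbacks, the `filename` query parameter, and the `uploadUrl` response field. In practice `getUploadUrl` would wrap the call to the Fastio API.

```typescript
// Small helper for JSON responses.
function jsonResponse(body: unknown, status: number): Response {
  return new Response(JSON.stringify(body), {
    status,
    headers: { "Content-Type": "application/json" },
  });
}

// Authenticates the caller, then returns a direct-upload URL for the client
// to use. The file itself never passes through the Worker.
async function handleUploadTicket(
  request: Request,
  verifyToken: (token: string) => Promise<boolean>,
  getUploadUrl: (filename: string) => Promise<string>,
): Promise<Response> {
  const auth = request.headers.get("Authorization") ?? "";
  const token = auth.startsWith("Bearer ") ? auth.slice("Bearer ".length) : "";
  if (!token || !(await verifyToken(token))) {
    return jsonResponse({ error: "unauthorized" }, 401);
  }

  const filename = new URL(request.url).searchParams.get("filename");
  if (!filename) {
    return jsonResponse({ error: "filename is required" }, 400);
  }

  try {
    const uploadUrl = await getUploadUrl(filename);
    return jsonResponse({ uploadUrl }, 200);
  } catch {
    return jsonResponse({ error: "could not obtain an upload URL" }, 502);
  }
}
```

The trade-off is that the Worker can no longer inspect the bytes in transit, so any content checks have to happen after the upload, for example in a webhook handler.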

Processing Fastio Webhooks at the Edge

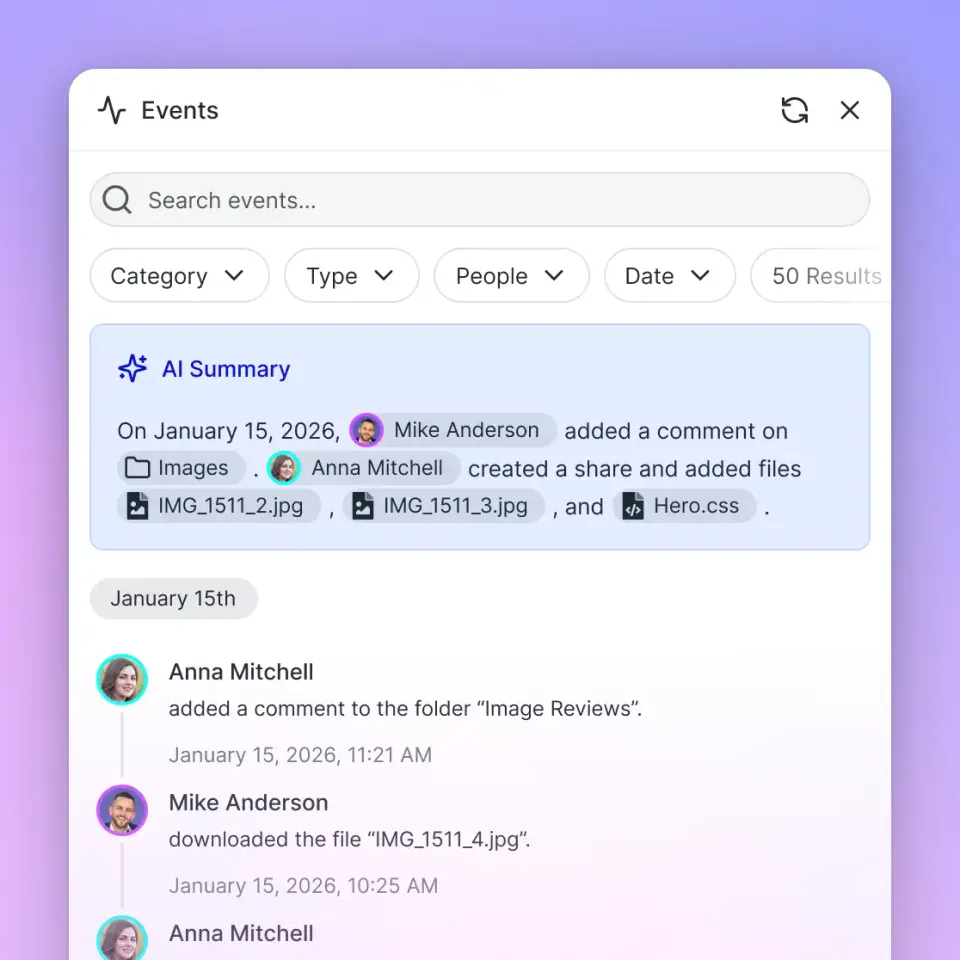

Webhooks let your application react to file events as they happen. When a file is uploaded, modified, or deleted in a Fastio workspace, the platform sends an HTTP webhook payload to your chosen destination. Cloudflare Workers make great webhook receivers because of their high uptime and low latency.

To set this up, add a webhook in your Fastio dashboard that points to a route on your Cloudflare Worker. When the Worker gets the webhook payload, it first needs to check the cryptographic signature in the request headers. This step confirms the request actually came from Fastio and blocks anyone trying to spoof file events.
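Signature checking at the edge can be sketched with the Web Crypto API. This example assumes the signature is a hex-encoded HMAC-SHA256 of the raw request body computed with a shared secret. That scheme, along with the `verifyWebhookSignature` and `hexToBytes` helper names, is an assumption; the actual algorithm, encoding, and header name must come from the Fastio webhook documentation.

```typescript
// Decodes a hex string into bytes, or returns null if it is malformed.
function hexToBytes(hex: string) {
  if (hex.length % 2 !== 0 || /[^0-9a-f]/i.test(hex)) return null;
  const bytes = new Uint8Array(hex.length / 2);
  for (let i = 0; i < bytes.length; i++) {
    bytes[i] = parseInt(hex.slice(i * 2, i * 2 + 2), 16);
  }
  return bytes;
}

// Returns true only if `signatureHex` is the HMAC-SHA256 of `rawBody`
// under `secret`. Assumed scheme; not confirmed by the Fastio docs.
async function verifyWebhookSignature(
  rawBody: string,
  signatureHex: string,
  secret: string,
): Promise<boolean> {
  const signature = hexToBytes(signatureHex);
  if (!signature) return false;

  const encoder = new TextEncoder();
  const key = await crypto.subtle.importKey(
    "raw",
    encoder.encode(secret),
    { name: "HMAC", hash: "SHA-256" },
    false,
    ["verify"],
  );
  // subtle.verify recomputes the MAC and compares it for us, which avoids
  // writing a hand-rolled string comparison.
  return crypto.subtle.verify("HMAC", key, signature, encoder.encode(rawBody));
}
```

Read the body with `await request.text()` before parsing it as JSON, since the signature has to be computed over the exact bytes that were sent.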

After verifying the signature, the Worker can run your business logic. It might update an SQL database, send a Slack notification, or call an AI agent via OpenClaw to process the new file. This event-driven setup keeps your systems in sync and saves you from polling the API over and over.

Integrating Edge Intelligence and AI Agents

Fastio acts as an intelligent workspace, not just a plain storage bucket. When you route files through Cloudflare Workers into Fastio, the platform indexes the content. Turning on Intelligence Mode for a workspace lets AI agents query the files using built-in RAG (Retrieval-Augmented Generation) capabilities.

If you build with the OpenClaw framework, adding this integration takes one step. Run clawhub install dbalve/fast-io in your terminal. This gives your edge functions access to multiple natural language tools built for file management and analysis.

One useful pattern starts with a Cloudflare Worker receiving an upload. It streams the file to Fastio, then sends a request to an LLM. The LLM can write a summary, pull out tabular data, or run sentiment analysis via Fastio's intelligence features. This turns a basic file upload into an automated data pipeline running from the edge.

Troubleshooting Common API Integration Errors

While integrating the Fastio API with Cloudflare Workers, you might run into errors linked to the serverless environment. The most frequent issue is the CPU Time Exceeded error. Cloudflare Workers place strict limits on how much synchronous CPU time your code can use per request.

To prevent CPU timeouts, avoid running heavy synchronous tasks, like hashing large files with pure-JavaScript libraries. Instead, use the native Web Crypto API built into the edge runtime. Its operations are promise-based and implemented natively, so they typically finish much faster than an equivalent JavaScript implementation.
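A minimal sketch of hashing with the Web Crypto API follows. The `sha256Hex` helper name is hypothetical. Note that `crypto.subtle.digest` takes its whole input at once, so this suits small payloads or individual buffers rather than an entire large file.

```typescript
// Hashes a buffer with the runtime's native SHA-256 implementation and
// returns the digest as a lowercase hex string.
async function sha256Hex(data: ArrayBuffer): Promise<string> {
  const digest = await crypto.subtle.digest("SHA-256", data);
  return Array.from(new Uint8Array(digest), (byte) =>
    byte.toString(16).padStart(2, "0"),
  ).join("");
}
```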

CORS (Cross-Origin Resource Sharing) issues also trip up many developers. If a web browser calls your Worker directly, your response must include the correct Access-Control-Allow-Origin and Access-Control-Allow-Methods headers. Also, keep an eye on your Worker's performance using the Cloudflare analytics dashboard. Watching latency metrics, checking error rates by region, and reading logs keeps your edge API integration running well.
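One way to handle the CORS headers is a small wrapper around the main handler, sketched below. The allowed origin, the permitted methods and headers, and the `corsHeaders` and `withCors` helper names are assumptions to adapt to your own front end.

```typescript
// Hypothetical allow-list; replace with the origins your front end uses.
const ALLOWED_ORIGINS = new Set(["https://app.example.com"]);

// Builds the CORS headers for a given Origin header value.
function corsHeaders(origin: string | null): Headers {
  const headers = new Headers({
    "Access-Control-Allow-Methods": "POST, OPTIONS",
    "Access-Control-Allow-Headers": "Authorization, Content-Type",
    Vary: "Origin",
  });
  // Echo the origin back only when it is on the allow-list.
  if (origin && ALLOWED_ORIGINS.has(origin)) {
    headers.set("Access-Control-Allow-Origin", origin);
  }
  return headers;
}

// Answers preflight requests directly and adds CORS headers to every
// other response produced by `handler`.
async function withCors(
  request: Request,
  handler: (req: Request) => Promise<Response>,
): Promise<Response> {
  const cors = corsHeaders(request.headers.get("Origin"));
  if (request.method === "OPTIONS") {
    return new Response(null, { status: 204, headers: cors });
  }

  const response = await handler(request);
  const merged = new Headers(response.headers);
  cors.forEach((value, name) => merged.set(name, value));
  return new Response(response.body, { status: response.status, headers: merged });
}
```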

Frequently Asked Questions

How do I use Fastio API in Cloudflare Workers?

You can use the Fastio API in Cloudflare Workers by using the standard Fetch API to make HTTP requests to Fastio endpoints. Store your Fastio API key as an encrypted secret in your Worker environment and stream file uploads using ReadableStreams to stay under memory limits.

Can Cloudflare Workers handle file uploads?

Yes, Cloudflare Workers handle file uploads by streaming the incoming request body directly to a destination like Fastio. Workers support the Streams API, so you can proxy large files without hitting the strict memory limits of V8 isolates.

What is the memory limit for Cloudflare Workers?

Cloudflare Workers usually have a hard memory limit of 128MB per execution context. Due to this cap, developers need to stream large file uploads instead of buffering the whole file into memory when calling storage APIs.

How do I secure my Fastio API keys in Cloudflare?

Secure your Fastio API keys using Cloudflare Worker Secrets. Run the Wrangler CLI to bind your API key as an encrypted environment variable. This keeps it out of your source code and makes it accessible only during runtime.
