How to Manage Google AI Studio Files

Google AI Studio lets developers upload documents, images, and video into Gemini's large context window. However, uploaded files expire after 48 hours, which complicates production workflows.

What is Google AI Studio File Management?

Google AI Studio file management feeds data into Gemini models. Unlike standard cloud storage, it focuses on "context caching" and quick inference. It supports many file types including PDF, CSV, spreadsheets, and multimedia. This lets you build multimodal agents that can "see" and "hear" your data.

The Files API behind AI Studio caps storage at 20 GB per project and 2 GB per individual file. That capacity is enough to use Gemini 1.5 Pro's massive context window to analyze hour-long videos or large codebases in a single prompt.

The main advantage is bypassing complex vector database setups. With a 2 million token window, you can upload entire books, code repositories, or quarterly reports directly. The system tokenizes this content, and the model can then perform "in-context learning" across the whole dataset. This differs from RAG (Retrieval-Augmented Generation) pipelines, which chunk documents and retrieve only small snippets of text. Here, the model sees everything at once, so it can find connections and patterns that chunked data might hide.

The File Expiration Rule Explained

The biggest limitation of Google AI Studio is file retention.

Files uploaded to Google AI Studio expire automatically after 48 hours. Google designed this storage for quick testing and prototyping, not long-term retention.

If you build permanent agents or production apps, you cannot rely on AI Studio as your primary database. You must re-upload files or use the Files API to refresh content dynamically. Both approaches consume bandwidth and API quota.

This temporary nature often trips up developers moving from the "playground" phase to production. Imagine building a customer service agent that needs a product manual. If you upload that manual on Monday, your agent loses access by Wednesday. You need a "hydration" strategy in which your app checks file availability and re-uploads missing resources before each inference request. This adds latency for the user and complicates your backend with extra state management logic.
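The hydration idea can be sketched as a small wrapper that remembers when each file was last uploaded and re-uploads it shortly before the 48-hour window closes. This is a minimal illustration, not a production design: `FileHydrator`, the one-hour safety margin, and the injected `upload` callable are all hypothetical names and values chosen for the example.

```python
import time

FILE_TTL_SECONDS = 48 * 60 * 60   # expiry window described in this article
SAFETY_MARGIN_SECONDS = 60 * 60   # hypothetical buffer: refresh one hour early


class FileHydrator:
    """Tracks upload times and re-uploads files that are close to expiring."""

    def __init__(self, upload, clock=time.time):
        self._upload = upload   # callable: local_path -> remote file handle
        self._clock = clock     # injectable so the logic can be tested
        self._cache = {}        # local_path -> (remote_handle, uploaded_at)

    def ensure(self, local_path):
        """Return a remote handle that should still be valid, uploading if needed."""
        now = self._clock()
        entry = self._cache.get(local_path)
        if entry is not None:
            handle, uploaded_at = entry
            if now - uploaded_at < FILE_TTL_SECONDS - SAFETY_MARGIN_SECONDS:
                return handle   # still fresh, reuse the existing upload
        handle = self._upload(local_path)
        self._cache[local_path] = (handle, now)
        return handle
```

With the google-generativeai SDK, the `upload` callable could be `genai.upload_file`. The in-memory dictionary is lost on restart, so a real service would likely persist this state somewhere durable.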

Supported File Types and Limits

Gemini is multimodal, so it understands more than just text. Google AI Studio supports direct uploads for:

- Documents: PDF, CSV, RTF, TXT, HTML, Markdown

- Images: PNG, JPEG, WEBP, HEIC (size limits apply)

- Audio: MP3, WAV, AAC, FLAC

- Video: MP4, MOV, MPEG, AVI (size limits apply)

Pro Tip: For large video files, Gemini processes audio and visual frames separately. This allows for accurate transcription and scene understanding without manual preprocessing.

Knowing the token cost helps you plan limits. For instance:

- Video: A video file consumes about 258 tokens per second. A 10-minute clip (600 seconds) uses roughly 155,000 tokens. This is well within the 2 million limit but worth tracking.

- Images: On Gemini 1.5 models, each image accounts for 258 tokens, regardless of resolution or aspect ratio. Newer models may count large images differently.

- Audio: Audio is tokenized at roughly 32 tokens per second.

These numbers help you plan how much data fits into a single context window without truncating valuable information.
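The per-unit figures above can be turned into a quick budget check. The sketch below simply multiplies out the approximate rates quoted in this article; the constant and function names are illustrative, and the rates are estimates rather than values read from the API.

```python
# Approximate rates quoted in this article (estimates, not API constants).
VIDEO_TOKENS_PER_SECOND = 258
AUDIO_TOKENS_PER_SECOND = 32
TOKENS_PER_IMAGE = 258
CONTEXT_WINDOW_TOKENS = 2_000_000


def estimate_tokens(video_seconds=0, audio_seconds=0, image_count=0):
    """Return a rough token estimate for a mix of media inputs."""
    return (
        video_seconds * VIDEO_TOKENS_PER_SECOND
        + audio_seconds * AUDIO_TOKENS_PER_SECOND
        + image_count * TOKENS_PER_IMAGE
    )


def fits_in_context(tokens, reserved_for_prompt=0):
    """True if the media plus a reserved prompt budget fits in the window."""
    return tokens + reserved_for_prompt <= CONTEXT_WINDOW_TOKENS


ten_minute_clip = estimate_tokens(video_seconds=10 * 60)
print(ten_minute_clip)                   # 154800
print(fits_in_context(ten_minute_clip))  # True
```

For an exact count on a specific model, the SDK's token counting call is a better source than these estimates.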

How to Upload Files to Google AI Studio

There are two main ways to get your data into Gemini's context window:

1. Manual Upload (Web Interface)

In the AI Studio prompt interface, click the "+" icon to upload local files or select from Google Drive. This works well for one-off tests or checking how Gemini interprets a specific document.

2. The Gemini Files API

For automated workflows, use the media.upload method in the Gemini API. This lets your agent programmatically send files to the temporary storage bucket. However, you still need logic to re-upload files once they expire.

When using the google-generativeai Python SDK, the process involves two steps: uploading the file to the Files API and passing the file URI to the generation method. Note that file upload is asynchronous for larger files. Your code must verify the file's state changes from PROCESSING to ACTIVE before you use it in a prompt. Otherwise, the request will fail. This state management is key for reliable file handling in the Gemini ecosystem.
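The upload-then-wait flow described above might look like the following sketch. It assumes the `google-generativeai` package is installed and that `genai.configure(api_key=...)` has already been called. The helper names, polling interval, timeout, and model name are illustrative choices, not values prescribed by the SDK.

```python
import time


def wait_until_active(get_file, name, poll_seconds=2.0, timeout_seconds=300.0,
                      sleep=time.sleep, clock=time.monotonic):
    """Poll a file until it leaves PROCESSING; return it once it is ACTIVE."""
    deadline = clock() + timeout_seconds
    while True:
        file = get_file(name)
        state = file.state.name
        if state == "ACTIVE":
            return file
        if state != "PROCESSING":
            # Any other state (for example FAILED) will not recover by waiting.
            raise RuntimeError(f"File {name} ended in state {state}")
        if clock() >= deadline:
            raise TimeoutError(f"File {name} was still PROCESSING at the deadline")
        sleep(poll_seconds)


def upload_and_ask(path, prompt, model_name="gemini-1.5-pro"):
    """Upload a file, wait for it to become ACTIVE, then query the model."""
    import google.generativeai as genai  # imported here so the helper above has no SDK dependency

    uploaded = genai.upload_file(path=path)
    ready = wait_until_active(genai.get_file, uploaded.name)
    model = genai.GenerativeModel(model_name)
    return model.generate_content([ready, prompt]).text
```

Keeping the polling loop separate from the SDK calls makes it easy to test with a fake `get_file` and to reuse for files uploaded elsewhere.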

Give Your AI Agents Persistent Storage

Stop re-uploading files every 48 hours. Get 50GB of persistent, high-speed storage for your AI agents. Free forever.

Solving Persistence with Fastio

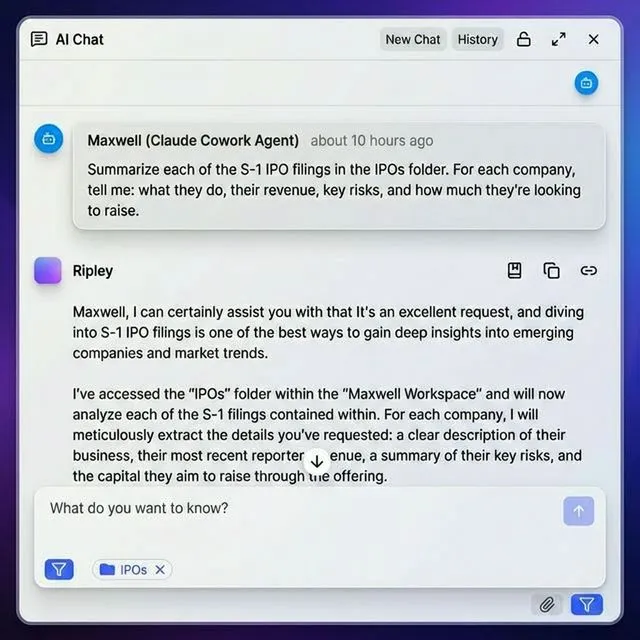

To build real-world agents that remember data forever, you need an external storage layer. Fastio provides a persistent file system that integrates directly with AI agents via the Model Context Protocol (MCP).

Instead of relying on temporary AI Studio storage, your agent can use Fastio to store terabytes of data permanently. When a file is needed for a prompt, the agent uses the Fastio MCP server to get the content or generate a signed URL for Gemini. This architecture bypasses the 48-hour limit and keeps costs low with a free 50GB tier.

The workflow looks like this:

- Ingest: Upload your corporate knowledge base (PDFs, videos, docs) to a secure Fastio workspace.

- Connect: Your AI agent connects to Fastio using the standard MCP server.

- Retrieve: When a user asks a question, the agent searches Fastio, finds the file, and uploads it to the current Gemini session (or passes text content directly if small).

- Forget: Once the session ends, the temporary copy in Gemini expires. The master copy remains safe and version-controlled in Fastio.

This delivery method ensures your agent always has the latest data without bloating your API costs or managing complex re-upload scripts.

Best Practices for Gemini File Context

For the best performance from Gemini's long context window:

- Clean Your Data: Remove extra whitespace and formatting from text files to save tokens.

- Use Context Caching: For files you reference often within the 48-hour window, enable context caching to reduce latency and cost.

- Separate Reference Material: Keep "system instructions" files separate from user-uploaded data to prevent prompt injection or confusion.

- Monitor Usage: Watch your storage limits. While individual files expire, many active uploads can hit the project cap quickly.
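As one way to act on the "Clean Your Data" tip, the sketch below collapses redundant whitespace in a text file before upload while keeping paragraph breaks. The function name `compact_text` is illustrative. It is meant for prose only, since source code and other whitespace-sensitive formats would be damaged by this kind of normalization.

```python
import re


def compact_text(text):
    """Collapse redundant whitespace while keeping paragraph breaks."""
    text = text.replace("\r\n", "\n")        # normalize Windows line endings
    text = re.sub(r"[ \t]+", " ", text)      # runs of spaces or tabs become one space
    text = re.sub(r" ?\n ?", "\n", text)     # trim spaces around line breaks
    text = re.sub(r"\n{3,}", "\n\n", text)   # allow at most one blank line in a row
    return text.strip()


print(repr(compact_text("Hello   world \n\n\n\n  Next\tline  ")))
# 'Hello world\n\nNext line'
```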

About Context Caching

If the same large file (like a 500-page policy document) is queried repeatedly, standard file uploading is inefficient. Gemini's Context Caching feature lets you "pin" these tokens in the model's memory. While this costs money, it cuts the input token cost for future queries and speeds up response times. Identify high-traffic static assets and move them to cached storage to get better value.
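Whether caching pays off depends on how often the file is queried and how long the cache is kept. The sketch below compares the two options under a deliberately simplified cost model: one full-price pass to build the cache, discounted reads afterwards, and a per-token hourly storage fee. The function names and the model itself are assumptions made for illustration. All prices are caller-supplied, so take real values from the current Gemini pricing page.

```python
def cost_without_cache(file_tokens, queries, input_price):
    """Every query pays the full input price for the whole file."""
    return file_tokens * queries * input_price


def cost_with_cache(file_tokens, queries, input_price, cached_price,
                    storage_price_per_hour, hours_cached):
    """One full-price pass to build the cache, then discounted reads plus storage."""
    build = file_tokens * input_price
    reads = file_tokens * queries * cached_price
    storage = file_tokens * storage_price_per_hour * hours_cached
    return build + reads + storage


def caching_pays_off(file_tokens, queries, input_price, cached_price,
                     storage_price_per_hour, hours_cached):
    """True when the simplified cached cost is lower than the uncached cost."""
    cached = cost_with_cache(file_tokens, queries, input_price, cached_price,
                             storage_price_per_hour, hours_cached)
    return cached < cost_without_cache(file_tokens, queries, input_price)
```

Under this model a file queried only once never benefits from caching, because the build pass already costs as much as a single uncached query.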

Frequently Asked Questions

Does Google AI Studio store files permanently?

No. Files uploaded to Google AI Studio are temporary and expire after 48 hours. For permanent storage, use an external solution like Google Cloud Storage or Fastio.

What is the file size limit for Google AI Studio?

Each file uploaded through the Files API can be up to 2 GB, and a project can hold up to 20 GB in total. Individual media types such as images may have additional size limits.

Can I upload videos to Gemini?

Yes, Gemini is multimodal and accepts video formats like MP4, MOV, and AVI. It can analyze both visual frames and audio for full understanding.

How do I increase the Google AI Studio storage limit?

The storage limit is fixed for the standard tier. For higher limits and long-term retention, you would typically migrate to Vertex AI on Google Cloud Platform.
