How to Implement Fastio API Large File Chunked Uploads

Chunked uploading in the Fastio API splits large files into manageable segments, ensuring reliable transmission over unstable networks without exhausting agent memory. This guide covers how to initialize, upload, and complete parallel chunked sessions for massive payloads.

What to check before scaling Fastio API large file chunked uploads

Uploading large assets over standard HTTP requests often leads to timeouts, memory exhaustion, and interrupted connections. Instead of sending a massive payload in a single stream, the client divides the file into smaller blocks (usually several megabytes each) and uploads them sequentially or concurrently.

This architectural approach provides significant advantages for developers building reliable integrations. If a network drop occurs during a transfer, the system only needs to retransmit the specific failed block rather than starting the entire process over. This targeted retry mechanism is the main idea behind the Fastio resumable upload API. It allows long-running operations to survive intermittent connectivity issues, which are common in mobile environments or remote production sets.

Chunking also enables parallel execution. By opening multiple concurrent connections, an application can upload different segments of the same file simultaneously, speeding up transfers on high-bandwidth links. The server stores these individual pieces and reassembles them into the original file once all parts are confirmed. This method underpins Fastio API large file chunked uploads, supporting everything from simple script automation to complex multi-agent workflows.


Architecture Patterns for Large File Streaming

When designing an application around Fastio API resumable uploads, selecting the right architecture pattern is important. The simplest approach is sequential chunking. In this model, the client reads a block of data, sends it to the server, waits for a successful response, and then proceeds to the next block. While straightforward to implement and easy to debug, sequential uploading does not fully use available network bandwidth. It is also highly sensitive to latency, because the round-trip time for each request adds up over thousands of segments.

To achieve the best performance, developers should implement a parallel chunked architecture. This involves a worker pool or asynchronous task queue that dispatches multiple upload requests concurrently. As the file is read from disk, segments are immediately handed off to available network threads. This approach maxes out the network connection, minimizing total transfer time. However, it requires careful memory management. Reading too many blocks into memory simultaneously can cause out-of-memory errors, especially when working within the constrained environments of containerized AI agents.

A hybrid approach often gives the best results. Developers can implement a sliding window protocol, where a fixed number of segments are kept in flight at any given time. As one segment completes, the next is read from disk and dispatched. This maintains high throughput while limiting memory consumption. Regardless of the chosen pattern, reliable state tracking is mandatory. The application must record which segments have been successfully acknowledged by the server, allowing the process to resume if the application crashes or the host machine reboots.
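The sliding window described above can be sketched in a few lines. This is a minimal illustration, not a Fastio SDK function: `send_chunk` is a hypothetical callable that performs the actual upload, and `chunks` is any lazy iterable of `(index, data)` pairs so that blocks are only read when a slot is free.

```python
import concurrent.futures as cf
from typing import Callable, Dict, Iterable, Set, Tuple


def sliding_window_upload(
    chunks: Iterable[Tuple[int, bytes]],
    send_chunk: Callable[[int, bytes], None],  # hypothetical uploader
    window: int = 4,
) -> Set[int]:
    """Keep at most `window` chunks in flight; return acknowledged indices."""
    acknowledged: Set[int] = set()
    in_flight: Dict[cf.Future, int] = {}
    source = iter(chunks)
    with cf.ThreadPoolExecutor(max_workers=window) as pool:
        while True:
            # Wait for a free slot BEFORE reading the next chunk from the source.
            if len(in_flight) >= window:
                done, _ = cf.wait(list(in_flight), return_when=cf.FIRST_COMPLETED)
                for future in done:
                    future.result()  # re-raises any upload error
                    acknowledged.add(in_flight.pop(future))
            item = next(source, None)
            if item is None:
                break
            index, data = item
            in_flight[pool.submit(send_chunk, index, data)] = index
        # Drain whatever is still in flight once the source is exhausted.
        for future in cf.as_completed(list(in_flight)):
            future.result()
            acknowledged.add(in_flight[future])
    return acknowledged
```

The returned set is the state the application would persist in order to resume later. Retrying a failed chunk is deliberately left out here; in this sketch the first upload error simply propagates to the caller.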

Step-by-Step: Implementing Resumable Uploads

Implementing Fastio resumable uploads requires a specific sequence of API calls. The process begins with an initialization request, followed by the actual data transfer, and concludes with a finalization step. Here is the workflow required to execute a session successfully.

Step 1: Initialize the Upload Session

Before sending any data, the client must notify the server of its intent to upload a file. This is done by making an authenticated POST request to the /v1/uploads/sessions endpoint. The request payload must include metadata about the file, such as its name, total size, and MIME type. In response, the server returns a unique Session ID and an array of upload URLs or a base endpoint for the chunks.
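As a rough sketch of the initialization call, the function below only builds the request object and does not send it. The endpoint path comes from the text above; the JSON field names (`name`, `size`, `mime_type`), the Bearer authentication scheme, and the `base_url` value are assumptions that should be checked against the actual API reference.

```python
import json
import mimetypes
import os
import urllib.request


def build_init_request(base_url: str, token: str, path: str) -> urllib.request.Request:
    """Build (but do not send) the session initialization request."""
    mime_type, _ = mimetypes.guess_type(path)
    payload = {
        # Field names are assumptions, not a confirmed Fastio schema.
        "name": os.path.basename(path),
        "size": os.path.getsize(path),
        "mime_type": mime_type or "application/octet-stream",
    }
    return urllib.request.Request(
        url=f"{base_url}/v1/uploads/sessions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {token}",  # auth scheme is assumed
            "Content-Type": "application/json",
        },
        method="POST",
    )
```

Passing the result to `urllib.request.urlopen` would perform the call; the Session ID would then be read from the JSON response, whose exact shape is not specified here.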

Step 2: Calculate Chunk Boundaries

The client must determine the appropriate segment size. While the API supports various sizes, a common best practice is to use blocks of a few megabytes. The client calculates the total number of segments by dividing the total file size by the block size and rounding up, so the final block may be smaller than the rest. Each block is assigned a sequential index starting from zero.
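The boundary arithmetic is easy to get wrong by one, so a small worked example may help. The helper name `chunk_ranges` is illustrative; it uses integer ceiling division so that a partial final block is counted.

```python
from typing import List, Tuple


def chunk_ranges(file_size: int, chunk_size: int) -> List[Tuple[int, int, int]]:
    """Return (index, offset, length) for every chunk, with zero-based indices."""
    if file_size < 0 or chunk_size <= 0:
        raise ValueError("file_size must be >= 0 and chunk_size must be > 0")
    # Ceiling division without floats, safe for very large files.
    count = (file_size + chunk_size - 1) // chunk_size
    ranges = []
    for index in range(count):
        offset = index * chunk_size
        length = min(chunk_size, file_size - offset)  # last chunk may be short
        ranges.append((index, offset, length))
    return ranges
```

For a 10-byte file and a 4-byte block size this yields three chunks: `(0, 0, 4)`, `(1, 4, 4)`, and `(2, 8, 2)`.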

Step 3: Upload Individual Segments

For each block, the client reads the corresponding byte range from the source file. It then makes a PUT request to the designated chunk endpoint, including the Session ID and the block index in the URL or headers. The server processes the chunk and returns a success status code. If a request fails due to a network error, the client should wait briefly and retry that specific request.
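A sketch of the per-block work might look like the following. `read_chunk` reads only the requested byte range, and `build_chunk_request` prepares the PUT without sending it. The chunk URL layout (`.../chunks/{index}`) and the headers are assumptions for illustration; the real endpoint is whatever the initialization response designates.

```python
import urllib.request


def read_chunk(path: str, offset: int, length: int) -> bytes:
    """Read exactly one byte range from disk instead of the whole file."""
    with open(path, "rb") as handle:
        handle.seek(offset)
        return handle.read(length)


def build_chunk_request(
    base_url: str, token: str, session_id: str, index: int, data: bytes
) -> urllib.request.Request:
    """Build (but do not send) the PUT request for one chunk."""
    # Hypothetical URL layout; use the endpoint returned by the session call.
    url = f"{base_url}/v1/uploads/sessions/{session_id}/chunks/{index}"
    return urllib.request.Request(
        url=url,
        data=data,
        headers={
            "Authorization": f"Bearer {token}",  # auth scheme is assumed
            "Content-Type": "application/octet-stream",
        },
        method="PUT",
    )
```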

Step 4: Complete the Session

Once all blocks have been transmitted, the client must instruct the server to reassemble the file. This is accomplished via a POST request to the /v1/uploads/sessions/{session_id}/complete endpoint. The server verifies that all expected segments have been received, concatenates them in the correct order, and finalizes the file record in the storage system. The response contains the final file metadata and access URLs.
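The completion call is worth guarding on the client side, since sending it early would only produce a server-side failure. In the sketch below the endpoint path is taken from the text above, while the empty request body and the Bearer header are assumptions.

```python
import urllib.request
from typing import Iterable


def build_complete_request(
    base_url: str,
    token: str,
    session_id: str,
    acknowledged: Iterable[int],
    total_chunks: int,
) -> urllib.request.Request:
    """Build the completion request, refusing if any chunk is unacknowledged."""
    missing = sorted(set(range(total_chunks)) - set(acknowledged))
    if missing:
        raise RuntimeError(f"cannot complete session; missing chunks: {missing}")
    return urllib.request.Request(
        url=f"{base_url}/v1/uploads/sessions/{session_id}/complete",
        data=b"",  # assumed: no body is required
        headers={"Authorization": f"Bearer {token}"},  # auth scheme is assumed
        method="POST",
    )
```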

Following these steps keeps large file streaming to Fastio reliable, even under poor network conditions.

Give Your AI Agents Persistent Storage

Access an extensive library of MCP tools with our free agent tier. Built for Fastio API large file chunked upload workflows.

Handling Failures and Retry Logic

Network volatility is a fact of life when transferring massive payloads. A production-grade implementation of the Fastio multipart upload API must anticipate and handle failures gracefully. Without proper retry mechanisms, a single dropped connection could break a multi-hour transfer. The most effective strategy for managing transient errors is exponential backoff with jitter.

When a chunk upload fails, the client should not immediately retry the request. Immediate retries can worsen network congestion or overwhelm an overloaded server. Instead, the application should wait a short period before attempting again. If the second attempt fails, the wait time should be doubled, and so on. To prevent multiple clients from synchronizing their retries and creating thundering herd problems, a random amount of time (known as jitter) should be added to each delay interval. This spreads out the retry attempts and balances the load on the infrastructure.
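The retry policy above can be expressed compactly. This is a minimal sketch with illustrative defaults (0.5 s base delay, 30 s cap, five attempts), none of which are Fastio recommendations. It treats any `OSError` as transient, which covers `ConnectionError`, `TimeoutError`, and `urllib.error.URLError`; a real client would likely also inspect HTTP status codes.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def backoff_delay(attempt: int, base: float = 0.5, cap: float = 30.0) -> float:
    """Delay before retry `attempt` (0-based): doubles each time, plus jitter."""
    exponential = min(cap, base * (2 ** attempt))
    # Jitter: add a random extra of up to one more interval.
    return exponential + random.uniform(0, exponential)


def retry_with_backoff(
    operation: Callable[[], T],
    max_attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
    base: float = 0.5,
) -> T:
    """Run `operation`, retrying network-level errors with backoff and jitter."""
    for attempt in range(max_attempts):
        try:
            return operation()
        except OSError:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(backoff_delay(attempt, base))
    raise ValueError("max_attempts must be at least 1")
```

The `sleep` parameter exists so the policy can be tested without real waiting, for example by passing `sleep=delays.append` and inspecting the recorded values.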

Beyond network errors, developers must handle session expiration. Upload sessions typically have a limited lifespan. If a transfer is paused for a long time, the session may become invalid. The client must be prepared to catch expiration errors and, if necessary, initiate a new session. However, because the system tracks completed blocks, a new session can often resume from where the previous one left off, as long as the original file has not been modified. These defensive techniques keep large file upload integrations with Fastio reliable.
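One way to make resumption safe on the client side is to persist progress together with a fingerprint of the source file, and to discard that progress if the file has changed. The sketch below is an assumption about how a client might do this; the state file layout and the size-plus-modification-time fingerprint are illustrative choices, and whether the server accepts previously uploaded blocks in a new session depends on the API.

```python
import json
import os
from typing import Dict, Iterable, List


def file_fingerprint(path: str) -> Dict[str, int]:
    """Cheap change detector: size and modification time of the source file."""
    info = os.stat(path)
    return {"size": info.st_size, "mtime_ns": info.st_mtime_ns}


def save_state(state_path: str, source_path: str, session_id: str,
               acknowledged: Iterable[int]) -> None:
    """Atomically record which chunk indices the server has acknowledged."""
    state = {
        "session_id": session_id,
        "fingerprint": file_fingerprint(source_path),
        "acknowledged": sorted(acknowledged),
    }
    tmp_path = state_path + ".tmp"
    with open(tmp_path, "w", encoding="utf-8") as handle:
        json.dump(state, handle)
    os.replace(tmp_path, state_path)  # avoids a half-written state file


def pending_chunks(state_path: str, source_path: str, total_chunks: int) -> List[int]:
    """Return the indices still to upload; start over if the file changed."""
    try:
        with open(state_path, encoding="utf-8") as handle:
            state = json.load(handle)
    except FileNotFoundError:
        return list(range(total_chunks))
    if state["fingerprint"] != file_fingerprint(source_path):
        return list(range(total_chunks))
    done = set(state["acknowledged"])
    return [i for i in range(total_chunks) if i not in done]
```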

Parallel Chunk Uploading for Maximum Speed

To fully use high-bandwidth connections, developers must transition from sequential processing to parallel execution. Concurrent uploading reduces the total time required to move massive files, but it introduces complexity in synchronization and resource management. Fastio API chunked uploads are designed to support highly concurrent access patterns, allowing clients to push data as fast as their local network interfaces permit.

When building a concurrent uploader, the first consideration is the size of the worker pool. Spawning too many threads can lead to context-switching overhead and exhaust local system resources, while too few threads will leave network capacity unused. A common best practice is to dynamically size the worker pool based on the available CPU cores and network latency. As each worker becomes available, it claims the next unassigned block from a thread-safe queue, reads the data from disk, and executes the HTTP PUT request.
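A worker pool fed by a thread-safe queue can be sketched with the standard library alone. `upload_one` is a hypothetical callable that reads and uploads the block with the given index. The sizing rule here (twice the CPU count, capped at 16) is only a placeholder heuristic; tuning by measured network latency, as suggested above, is not shown.

```python
import os
import queue
import threading
from typing import Callable, Iterable, List, Optional


def default_pool_size(limit: int = 16) -> int:
    """Placeholder heuristic: two workers per CPU core, capped at `limit`."""
    return max(1, min(limit, (os.cpu_count() or 1) * 2))


def run_worker_pool(
    indices: Iterable[int],
    upload_one: Callable[[int], None],  # hypothetical per-chunk uploader
    workers: Optional[int] = None,
) -> List[int]:
    """Upload every index using a fixed pool; return the completed indices."""
    todo: "queue.Queue[int]" = queue.Queue()
    for index in indices:
        todo.put(index)
    done: List[int] = []
    errors: List[BaseException] = []
    lock = threading.Lock()

    def worker() -> None:
        while True:
            try:
                index = todo.get_nowait()  # claim the next unassigned block
            except queue.Empty:
                return
            try:
                upload_one(index)
                with lock:
                    done.append(index)
            except Exception as exc:
                with lock:
                    errors.append(exc)

    threads = [threading.Thread(target=worker)
               for _ in range(workers or default_pool_size())]
    for thread in threads:
        thread.start()
    for thread in threads:
        thread.join()
    if errors:
        raise errors[0]
    return sorted(done)
```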

Memory management becomes important in this architecture. Applications must avoid reading the entire file into memory before splitting it. Instead, workers should use memory-mapped files or stream the data directly from disk to the network socket. This keeps the application's memory footprint small and constant, regardless of the overall file size. The system must carefully track the completion status of out-of-order blocks. Since concurrent requests will finish at unpredictable times, the finalization step must only be triggered once every dispatched block has been acknowledged by the server. This coordination is what makes parallel chunk uploading fast and efficient.
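Because acknowledgements arrive out of order, it helps to keep completion state in one small thread-safe object and let the finalization step wait on it. The class below is an illustrative sketch; its name and methods are not part of any Fastio tooling.

```python
import threading
from typing import List, Optional


class CompletionTracker:
    """Thread-safe record of which chunk indices are still unacknowledged."""

    def __init__(self, total_chunks: int) -> None:
        self._pending = set(range(total_chunks))
        self._lock = threading.Lock()
        self._all_done = threading.Event()
        if not self._pending:
            self._all_done.set()

    def acknowledge(self, index: int) -> None:
        """Mark one chunk as confirmed; safe to call from any worker thread."""
        with self._lock:
            self._pending.discard(index)
            if not self._pending:
                self._all_done.set()

    def missing(self) -> List[int]:
        """Indices not yet acknowledged, in ascending order."""
        with self._lock:
            return sorted(self._pending)

    def wait(self, timeout: Optional[float] = None) -> bool:
        """Block until every chunk is acknowledged; False on timeout."""
        return self._all_done.wait(timeout)
```

Workers call `acknowledge(index)` after each successful response, and the coordinating thread calls `wait()` before issuing the completion request.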

Working with AI Agents and the MCP Server

In the context of the Fastio ecosystem, large file transfers are not just for human users. They are important for AI agents operating within shared workspaces. Agents frequently need to ingest massive datasets, analyze extensive log archives, or process high-resolution video files. Resumable uploads are especially relevant when configuring agents via the Model Context Protocol (MCP) server, as they allow these automated systems to handle heavy I/O operations without crashing or timing out.

When an agent is tasked with uploading a file, it uses the designated MCP tools to interact with the API. The free agent tier provides plenty of storage, which is great for many operations, but handling massive files still requires chunking to respect memory limits. The MCP server translates the agent's high-level intent into the necessary sequence of HTTP requests. Because agents operate autonomously, solid error handling is even more important. If a chunk fails, the agent's workflow must not stop completely. The underlying tooling must silently manage the retries and exponential backoff, only surfacing an error to the agent if the transfer fails completely after maximum attempts.

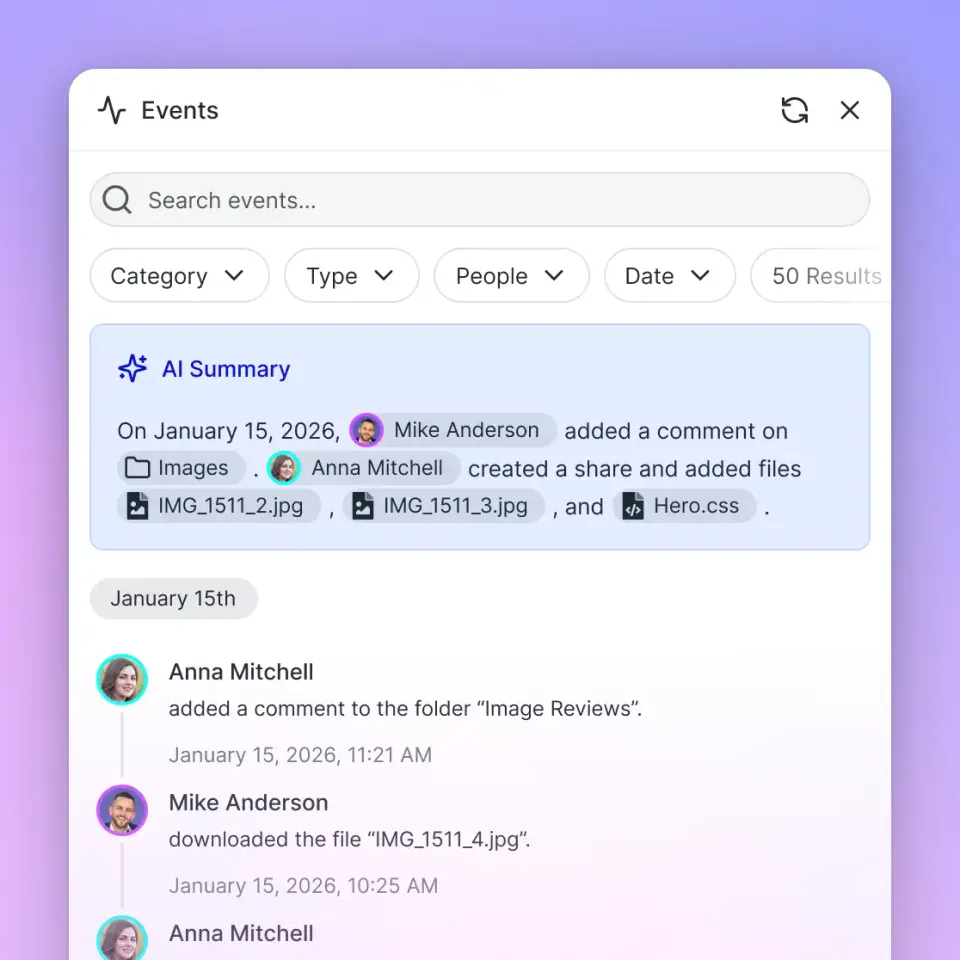

Uploaded files are quickly added to the workspace's intelligence layer. Once the chunked upload session is completed, Intelligence Mode automatically indexes the content. This means an agent can upload a massive data dump using the chunked API, and moments later, another agent or a human user can begin querying that data using natural language. This transition from raw storage to queryable intelligence shows the difference between legacy file hosting and a modern, agent-centric workspace. By mastering the Fastio chunked upload methods, developers ensure their agents can operate on enterprise-scale data smoothly.

Common Troubleshooting and Edge Cases

Even with solid code, developers will occasionally encounter issues when implementing the Fastio resumable upload API. Understanding common edge cases and troubleshooting techniques is important for maintaining a stable integration. One common problem is file changes during the transfer. If the source file on the local disk is modified after the upload session has begun, the chunks will not align correctly, and the final reassembled file will be corrupted. Applications must obtain an exclusive read lock on the file or create a read-only snapshot before initiating the chunking process.
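The snapshot option can be sketched as a context manager that copies the source to a temporary read-only file and uploads from the copy. This is one possible approach under the assumption that enough local disk space is available for a second copy; exclusive file locking is platform-specific and is not shown.

```python
import contextlib
import os
import shutil
import tempfile
from typing import Iterator


@contextlib.contextmanager
def read_only_snapshot(source_path: str) -> Iterator[str]:
    """Yield the path of a read-only copy that later edits cannot affect."""
    fd, snapshot_path = tempfile.mkstemp(suffix=".snapshot")
    os.close(fd)
    try:
        shutil.copyfile(source_path, snapshot_path)
        os.chmod(snapshot_path, 0o444)  # guard against accidental writes
        yield snapshot_path
    finally:
        os.chmod(snapshot_path, 0o644)  # restore write bit so removal works everywhere
        os.remove(snapshot_path)
```

All chunk reads inside the `with` block should use the yielded path rather than the original file.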

Another common issue involves incorrect chunk indexing. The API expects chunk indices to be sequential and zero-based. Sending a chunk with an out-of-bounds index or skipping an index will cause the finalization step to fail, as the server will detect missing segments. Developers must ensure their loop logic correctly calculates offsets and assigns the proper index to each HTTP request. Thorough logging of the block index, byte range, and HTTP response code for every request is helpful for diagnosing these off-by-one errors.
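A quick self-check of the chunk plan before uploading, combined with one log line per request, catches most indexing mistakes early. The helpers below are illustrative; the plan format `(index, offset, length)` and the logger name are assumptions of this sketch.

```python
import logging
from typing import List, Sequence, Tuple

log = logging.getLogger("chunk_upload")


def check_chunk_plan(plan: Sequence[Tuple[int, int, int]], file_size: int) -> List[str]:
    """Return human-readable problems; an empty list means the plan is sound."""
    problems: List[str] = []
    expected_offset = 0
    for position, (index, offset, length) in enumerate(plan):
        if index != position:
            problems.append(f"position {position}: index {index} is not sequential")
        if offset != expected_offset:
            problems.append(f"chunk {index}: offset {offset}, expected {expected_offset}")
        if length <= 0:
            problems.append(f"chunk {index}: non-positive length {length}")
        expected_offset = offset + length
    if expected_offset != file_size:
        problems.append(f"plan covers {expected_offset} bytes, file has {file_size}")
    return problems


def log_chunk_result(index: int, offset: int, length: int, status: int) -> None:
    """Record block index, inclusive byte range, and HTTP status for one request."""
    log.info("chunk=%d bytes=%d-%d status=%d",
             index, offset, offset + length - 1, status)
```

A plan that starts at index 1 instead of 0, for instance, is reported immediately instead of surfacing later as a failed finalization.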

Finally, developers must account for firewall and proxy restrictions. Some enterprise networks drop long-running connections or inspect deep packet contents, which can block chunked streams. When troubleshooting connection resets or unexplained timeouts, it is important to test the upload process from outside the corporate network to isolate the issue. If proxy servers are stripping necessary headers or altering the payload, the application may need to fall back to smaller chunk sizes or adjust its transport configuration. By anticipating these challenges, development teams can build reliable integrations that handle massive data transfers in any environment.

Frequently Asked Questions

How do I upload large files to Fastio API?

To upload large files, use the Fastio multipart upload API. First, initialize a session to get a Session ID. Then, split your file into smaller blocks and upload them sequentially or concurrently. Finally, call the complete endpoint to reassemble the file on the server.

What is the maximum file size for Fastio uploads?

Fastio supports large files. Using chunked uploads bypasses the standard single-request limits, letting you break massive assets into manageable streams.

Can I resume an interrupted chunked upload?

Yes, the main benefit of the chunked upload system is resumability. If a transfer drops, you only need to re-upload the specific segments that failed. The server retains the successfully completed blocks until the session expires.

Why are my parallel uploads failing?

Parallel uploads often fail due to local resource exhaustion. Ensure you are not loading the entire file into memory before chunking. Use file streams, limit your concurrent thread pool size, and verify that your chunk indices are correctly calculated.

How does chunking work with AI agents?

AI agents use chunked uploads via the MCP server to handle massive datasets without exceeding memory constraints. Once the chunked upload completes, the file is automatically indexed by Intelligence Mode for immediate querying.
