Best Web Scraping Tools for AI Agents

AI agents need web scraping tools that output token-efficient formats like Markdown instead of raw HTML. We tested leading solutions, including Firecrawl, Jina Reader, and Crawl4AI, to help you build better data pipelines for your LLMs.

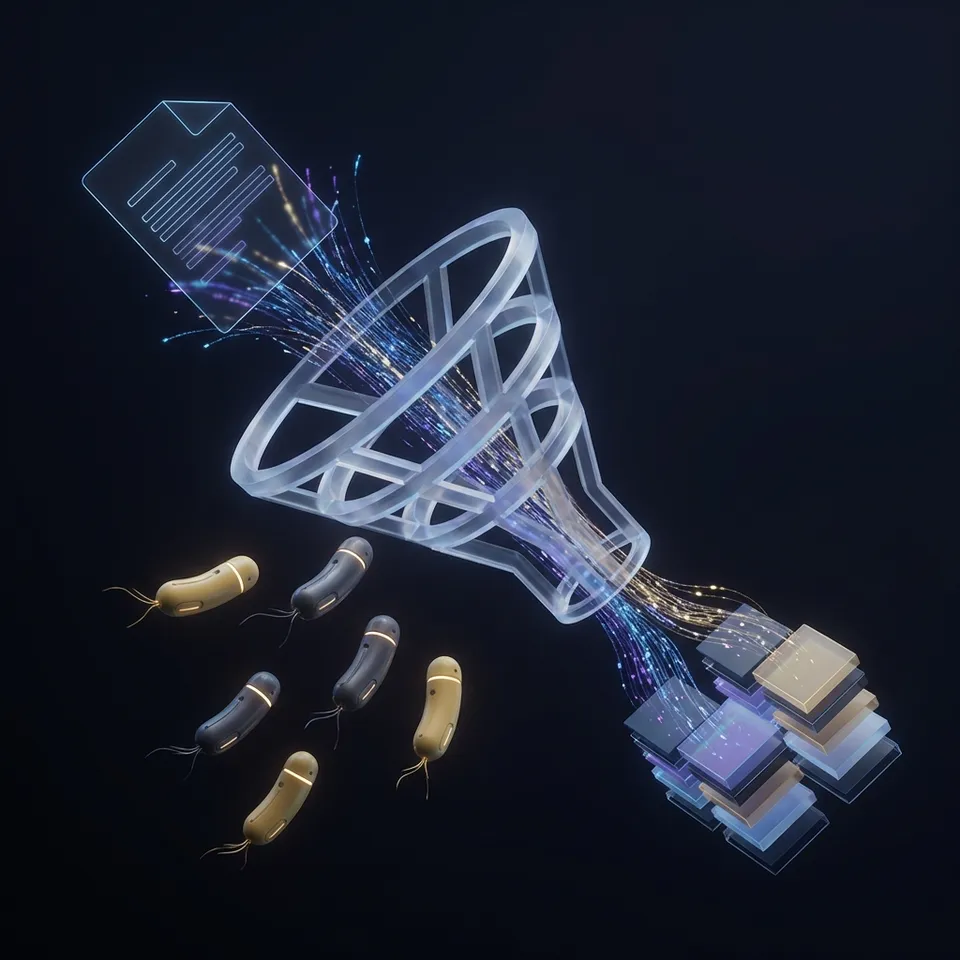

Why AI Agents Need Specialized Scrapers

Traditional web scraping tools were built to help humans extract specific fields into spreadsheets.

AI-optimized scraping tools convert messy HTML into Markdown or JSON that LLMs can process without extra work.

For an AI agent, the format matters as much as the data itself. Raw HTML is token-heavy and full of noise (scripts, styles, navigation) that distracts models and inflates API costs. The best tools for agents strip away this clutter and return semantic content that fits into a context window. When evaluating these tools, look for "LLM-readiness": the ability to output structured Markdown or JSON-LD without complex post-processing scripts.
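The sketch below shows roughly what "LLM-readiness" means in practice. It uses only Python's standard `html.parser` to drop noisy elements, keep the visible text, and map headings and list items to Markdown markers. The skip list, class name, and function name are illustrative assumptions. Production tools go further and also handle tables, links, and boilerplate detection.

```python
from html.parser import HTMLParser

# Assumed noise list; real tools use richer heuristics.
SKIP_TAGS = {"script", "style", "nav", "header", "footer", "noscript"}
HEADINGS = {f"h{level}": level for level in range(1, 7)}
BLOCK_TAGS = {"p", "div", "li"}


class MarkdownishExtractor(HTMLParser):
    """Keep visible text; map headings and list items to Markdown markers."""

    def __init__(self):
        super().__init__()
        self.out_lines = []   # finished Markdown lines
        self.buf = []         # text fragments for the line being built
        self.marker = ""      # Markdown prefix for the current line ("# ", "- ")
        self.skip_depth = 0   # > 0 while inside a SKIP_TAGS element

    def flush(self):
        text = " ".join("".join(self.buf).split())  # collapse whitespace
        if text:
            self.out_lines.append(self.marker + text)
        self.buf, self.marker = [], ""

    def handle_starttag(self, tag, attrs):
        if tag in SKIP_TAGS:
            self.skip_depth += 1
        elif self.skip_depth == 0 and (tag in HEADINGS or tag in BLOCK_TAGS or tag == "br"):
            self.flush()  # a new block starts a new output line
            if tag in HEADINGS:
                self.marker = "#" * HEADINGS[tag] + " "
            elif tag == "li":
                self.marker = "- "

    def handle_endtag(self, tag):
        if tag in SKIP_TAGS:
            self.skip_depth = max(0, self.skip_depth - 1)
        elif self.skip_depth == 0 and (tag in HEADINGS or tag in BLOCK_TAGS):
            self.flush()

    def handle_data(self, data):
        if self.skip_depth == 0:  # ignore text inside scripts, styles, nav, etc.
            self.buf.append(data)


def html_to_markdownish(html: str) -> str:
    parser = MarkdownishExtractor()
    parser.feed(html)
    parser.close()
    parser.flush()  # emit any trailing text
    return "\n".join(parser.out_lines)
```

Given a page with a `<style>` block, a `<nav>` bar, an `<h1>`, a paragraph, and a list, only the heading, paragraph text, and list items survive. That output is the semantic content a model actually needs.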


What to Check Before Scaling Web Scraping for AI Agents

We tested the leading options to find which tools offer the best balance of clean output, reliability, and ease of integration for autonomous agents.

Quick Comparison

1. Firecrawl

Firecrawl has become a go-to tool for AI engineers building RAG (Retrieval-Augmented Generation) pipelines. It transforms any website into well-structured Markdown that preserves headings and hierarchy, making it ideal for chunking and embedding.

Pros:

- LLM-Native Output: Delivers Markdown by default, saving token costs.

- Crawl Capability: Can map and scrape entire subdomains, not just single pages.

- LangChain Integration: First-class support in popular agent frameworks.

Cons:

- Cost: Can get expensive for high-volume crawling compared to self-hosted options.

- Latency: Full site crawls can take time to process.

Verdict: Strong choice for developers who need high-quality data for RAG applications right away. Consider how this fits into your broader workflow and what matters most for your team. The right choice depends on your specific requirements: output format, crawl volume, the sites you target, and your budget. Testing with a free account is the fastest way to know whether a tool works for you.
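Calling Firecrawl from an agent can take a single HTTP request. This sketch makes several assumptions that you should verify against the current API reference, because endpoints change between versions:

- it targets the hosted v1 `/v1/scrape` endpoint;
- a `formats` field requests Markdown;
- authentication uses a bearer token;
- the response nests the Markdown under `data.markdown`.

The `FIRECRAWL_API_KEY` environment variable and the helper names are our own choices.

```python
import json
import os
import urllib.request

# Assumption: Firecrawl's hosted v1 REST endpoint; check the current docs,
# since paths and request fields change between API versions.
FIRECRAWL_SCRAPE_URL = "https://api.firecrawl.dev/v1/scrape"


def build_scrape_request(url: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request asking for Markdown output."""
    body = json.dumps({"url": url, "formats": ["markdown"]}).encode("utf-8")
    return urllib.request.Request(
        FIRECRAWL_SCRAPE_URL,
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def scrape_markdown(url: str) -> str:
    """Send the request; assumes the Markdown is returned under data.markdown."""
    req = build_scrape_request(url, os.environ["FIRECRAWL_API_KEY"])
    with urllib.request.urlopen(req, timeout=60) as resp:
        payload = json.load(resp)
    return payload["data"]["markdown"]
```

Building the request separately from sending it makes the payload easy to inspect and test without spending API credits.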

2. Jina Reader (Jina AI)

Jina Reader offers a simple "Reader API" that turns any URL into LLM-friendly text: prepend `https://r.jina.ai/` to a URL and you get a stripped-down version of the page. This simplicity makes it popular for lightweight agents that need to browse the web in real time.
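The Reader API is plain HTTP, so the prefix trick needs nothing beyond the standard library. This is a minimal sketch. The helper names are hypothetical, and we assume (without confirming) that you can send an API key as a bearer token to raise the rate limits; check Jina's documentation.

```python
import urllib.request
from typing import Optional

READER_PREFIX = "https://r.jina.ai/"


def reader_url(target: str) -> str:
    """Prepend the Reader endpoint to the page URL."""
    return READER_PREFIX + target


def fetch_llm_text(target: str, api_key: Optional[str] = None) -> str:
    """Fetch the LLM-friendly version of a page over plain HTTP (no SDK)."""
    headers = {}
    if api_key:
        # Assumption: an optional bearer key lifts free-tier rate limits.
        headers["Authorization"] = f"Bearer {api_key}"
    req = urllib.request.Request(reader_url(target), headers=headers)
    with urllib.request.urlopen(req, timeout=30) as resp:
        return resp.read().decode("utf-8")
```

For example, `reader_url("https://example.com/blog")` yields `https://r.jina.ai/https://example.com/blog`.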

Pros:

- Zero Setup: No SDK required, just standard HTTP requests.

- Live Data: Good for grounding LLM responses with current web data.

- Image Captioning: Can automatically caption images for multimodal context.

Cons:

- Rate Limits: The free tier has strict limits.

- Less Control: Fewer options for handling complex interactions like logins or scrolling.

Verdict: A fast way to give your agent "eyes" on a specific web page.

3. Crawl4AI

For developers building local agents or who want full control, Crawl4AI is a powerful open-source library based on Playwright. It runs locally or in your own cloud, giving you complete ownership of the scraping pipeline without per-page fees.

Pros:

- Cost Efficiency: Free to use (you only pay for your own compute).

- Privacy: Data never leaves your infrastructure.

- Performance: Optimized for speed with local execution.

Cons:

- Maintenance: You must manage the infrastructure and handle anti-bot measures yourself.

- Setup: Requires more initial configuration than API-based services.

Verdict: Top option for heavy users and those who need data privacy.

4. Fastio Agent Storage

While not a scraper itself, Fastio solves a common problem for scraping agents: Where do you put the data once you've scraped it? Storing scraped datasets in ephemeral containers risks data loss, while databases can be overkill for raw files. Fastio provides persistent, secure cloud storage that agents can access via the Model Context Protocol (MCP). Agents can dump scraped Markdown, JSON, or images directly into a Fastio workspace, where they become searchable and shareable with human team members.
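MCP clients invoke server tools with JSON-RPC 2.0 `tools/call` messages. The sketch below shows how an agent might package a scraped file for a storage server. The tool name `upload_file` and its argument shape are hypothetical placeholders, not Fastio's actual tool definitions. A real server advertises its tools and input schemas in its `tools/list` response, and an MCP client library normally handles the transport.

```python
import json
from itertools import count

_request_ids = count(1)  # JSON-RPC requests need unique ids per session


def mcp_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    })


# Hypothetical tool name and arguments; use whatever the server lists.
message = mcp_tool_call(
    "upload_file",
    {"path": "scrapes/example-com.md", "content": "# Example Domain\nScraped text."},
)
```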

Why Agents Choose Fastio:

- MCP Integration: Native tools for agents to write, read, and search files.

- Persistence: Data survives agent restarts and container rebuilds.

- Free Tier: 50GB of storage and 100GB of bandwidth for free, with no credit card required.

- Human-in-the-Loop: Humans can review scraped files via the web interface.

Verdict: Strong backend storage solution for scraping agents.

Give Your AI Agents Persistent Storage

Give your AI agents a persistent memory. Store up to 50GB of scraped data, logs, and artifacts with Fastio's high-performance cloud storage.

5. ScrapingBee

If your agents face constant CAPTCHAs or IP blocks, ScrapingBee can help. It handles headless browsers and proxy rotation automatically, so your agent gets the data it requests.

Pros:

- Anti-Bot Evasion: Specializes in bypassing Cloudflare and other protections.

- Headless Mode: Renders JavaScript to capture dynamic content.

- Screenshot API: Useful for multimodal agents that need visual context.

Cons:

- Pricing: Premium pricing reflects the infrastructure complexity.

- HTML Output: Primarily returns HTML, requiring a parsing step (like BeautifulSoup) to get Markdown.
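A request to a rendering proxy like ScrapingBee is usually a GET with the target URL passed as a query parameter. This sketch assumes the `https://app.scrapingbee.com/api/v1/` endpoint and the `api_key`, `url`, and `render_js` parameter names; confirm them against the current docs. The helper names are hypothetical.

```python
import urllib.parse
import urllib.request

# Assumption: ScrapingBee's HTML API endpoint and parameter names.
SCRAPINGBEE_ENDPOINT = "https://app.scrapingbee.com/api/v1/"


def scrapingbee_url(api_key: str, target: str, render_js: bool = True) -> str:
    """Build the GET URL; urlencode escapes the target page URL."""
    query = urllib.parse.urlencode({
        "api_key": api_key,
        "url": target,
        "render_js": "true" if render_js else "false",
    })
    return f"{SCRAPINGBEE_ENDPOINT}?{query}"


def fetch_rendered_html(api_key: str, target: str) -> str:
    """Return the rendered page as raw HTML."""
    with urllib.request.urlopen(scrapingbee_url(api_key, target), timeout=90) as resp:
        return resp.read().decode("utf-8")
```

The response is raw HTML. It still needs the parsing step noted above before it reaches the model.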

Verdict: Good for scraping "difficult" sites that actively block bots.

6. Bright Data

Bright Data is the enterprise heavyweight, offering a large proxy network and a suite of scraping tools. For large-scale data collection where reliability and compliance matter, it is one of the most established options.

Pros:

- Scale: Can handle millions of requests.

- Datasets: Offers pre-scraped datasets so you might not need to scrape at all.

Cons:

- Complexity: The platform is vast and has a steeper learning curve.

- Cost: Geared towards enterprise budgets.

Verdict: Good choice for corporate AI initiatives that need massive scale.

7. Browse AI

Browse AI is a no-code platform that lets you train a "robot" to scrape specific websites just by pointing and clicking. It works well for setting up monitoring agents that trigger workflows when data changes.
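Browse AI provides change detection out of the box. A code-based agent can approximate the idea by fingerprinting the extracted content and comparing it with the previous run. This is a minimal sketch that assumes the page text has already been extracted. It is not how Browse AI implements monitoring, and the function names are hypothetical.

```python
import hashlib
from typing import Optional, Tuple


def content_fingerprint(text: str) -> str:
    """Hash whitespace-normalized text so cosmetic reflows don't count as changes."""
    normalized = " ".join(text.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def has_changed(previous: Optional[str], new_text: str) -> Tuple[bool, str]:
    """Return (changed?, new fingerprint); the first observation counts as a change."""
    fingerprint = content_fingerprint(new_text)
    return fingerprint != previous, fingerprint
```

Store the returned fingerprint between runs, and trigger the downstream workflow only when `changed` is true.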

Pros:

- No Code: Train scrapers visually in minutes.

- Monitoring: Built-in change detection and alerts.

- Integrations: Connects to Zapier, Airtable, and Google Sheets.

Cons:

- Rigidity: Less flexible than code-based solutions for dynamic agentic workflows.

- Token Usage: Output can be verbose if not carefully configured.

Verdict: Good for quick, recurring extraction tasks without writing code.

Frequently Asked Questions

How do I scrape data for RAG pipelines?

For RAG, use a tool like Firecrawl or Jina Reader that outputs Markdown. Markdown preserves the semantic structure (headings, lists) of the content, which helps the embedding model understand the context and relationships between data chunks better than raw text or HTML.
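Heading-aware chunking is one common way to take advantage of that structure. The text is split at each Markdown heading, and the heading path is kept alongside each chunk so that every embedding carries its context. This is a minimal sketch. The " > " path format is an arbitrary choice, and the regex does not skip `#` lines inside fenced code.

```python
import re
from typing import List, Tuple

HEADING_RE = re.compile(r"^(#{1,6})\s+(.*)$")


def chunk_by_headings(markdown: str) -> List[Tuple[str, str]]:
    """Split Markdown into (heading path, body) chunks at each heading."""
    chunks: List[Tuple[str, str]] = []
    path: List[str] = []   # current heading stack, one entry per level
    body: List[str] = []

    def emit() -> None:
        text = "\n".join(body).strip()
        if text:
            chunks.append((" > ".join(path), text))
        body.clear()

    for line in markdown.splitlines():
        match = HEADING_RE.match(line)
        if match:
            emit()                          # close the previous section
            level = len(match.group(1))
            del path[level - 1:]            # drop headings at this depth or deeper
            path.append(match.group(2).strip())
        else:
            body.append(line)
    emit()
    return chunks
```

With this approach, a "Run pip." line under `## Install` inside `# Guide` becomes the chunk `("Guide > Install", "Run pip.")`.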

What is the best scraper for OpenAI agents?

OpenAI agents work best with tools that have native function calling support or MCP integrations. Firecrawl is widely supported in the LangChain and OpenAI ecosystems. Alternatively, using Fastio as a storage layer allows any scraper to save data that the agent can then access via standard file tools.
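With function calling, you describe a scraper to the model as a tool, and your code runs it when the model asks. This sketch uses the Chat Completions `tools` envelope, which is `type: "function"` plus JSON Schema `parameters`. The tool name `scrape_url` and the `scraper` callable are hypothetical; plug in any scraper from the list above.

```python
import json
from typing import Callable

# Hypothetical tool; the envelope follows the Chat Completions "tools" format.
SCRAPE_TOOL = {
    "type": "function",
    "function": {
        "name": "scrape_url",
        "description": "Fetch a web page and return its main content as Markdown.",
        "parameters": {
            "type": "object",
            "properties": {
                "url": {"type": "string", "description": "Absolute http(s) URL to fetch."}
            },
            "required": ["url"],
        },
    },
}


def handle_tool_call(name: str, arguments_json: str, scraper: Callable[[str], str]) -> str:
    """Route a model-issued tool call to a scraper (url -> Markdown).

    The model sends tool arguments as a JSON string, so they are decoded here.
    """
    if name != SCRAPE_TOOL["function"]["name"]:
        raise ValueError(f"unknown tool: {name}")
    args = json.loads(arguments_json)
    return scraper(args["url"])
```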

Is web scraping legal for AI training?

Web scraping legality is complex and varies by jurisdiction. Scraping publicly available data is often considered permissible, but you must respect `robots.txt` files, terms of service, and copyright laws. Tools like Bright Data offer compliance features to help navigate these legal requirements.
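You can automate `robots.txt` checks with Python's standard `urllib.robotparser`. This minimal sketch assumes the `robots.txt` body has already been downloaded, which keeps it runnable offline, and the helper name is hypothetical. It covers `robots.txt` only; terms of service and copyright still need separate review.

```python
from urllib.robotparser import RobotFileParser


def allowed_by_robots(robots_txt: str, user_agent: str, url: str) -> bool:
    """Check a URL against an already-downloaded robots.txt body."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)
```

For example, with `Disallow: /private/` under `User-agent: *`, the function rejects `https://example.com/private/data` and allows `https://example.com/blog/post`.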
