Best Synthetic Data Generation Tools for AI Training (2026)

Synthetic data tools use AI to create labeled datasets for training other AI models. This helps solve data shortages and privacy issues. We review the best platforms for generating tabular, text, and image data to speed up your machine learning pipelines.

Why Synthetic Data Is Taking Over AI Training

Data shortages and privacy laws slow down modern AI development. Synthetic data uses AI to make artificial datasets. These datasets copy the patterns of real-world data but contain no sensitive information.

Gartner predicted that by 2024, 60% of the data used for developing AI and analytics projects would be synthetically generated. Developers also need to train models on rare events, such as autonomous vehicle accidents or financial fraud patterns, without waiting for them to happen in the real world.

Key Benefits of Synthetic Data

- Privacy Compliance: Create datasets that comply with privacy regulations. Keep the value of production data without the risk of revealing identities.

- Lower Costs: Labeling real data is expensive and slow. Synthetic data comes labeled by design.

- Reduce Bias: Balance datasets by generating more examples of underrepresented groups. This helps models perform more fairly across those groups.

- Test Rare Events: Make scenarios that are dangerous or impossible to capture in the real world, like extreme weather for self-driving cars.
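Generating extra examples for an underrepresented class is often done by interpolation, as in the well-known SMOTE technique. The sketch below is a minimal SMOTE-style illustration in plain Python, assuming numeric feature vectors; the function name and sample points are hypothetical, and libraries such as imbalanced-learn handle edge cases this omits.

```python
import random

def smote_like_oversample(minority, n_new, k=3, seed=0):
    """Create n_new synthetic minority-class points, SMOTE-style.

    Each new point lies on the line segment between a randomly chosen
    minority sample and one of its k nearest minority-class neighbours.
    """
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    rng = random.Random(seed)

    def dist2(a, b):
        # Squared Euclidean distance between two feature vectors
        return sum((x - y) ** 2 for x, y in zip(a, b))

    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [p for j, p in enumerate(minority) if j != i]
        neighbours = sorted(others, key=lambda p: dist2(base, p))[:k]
        other = rng.choice(neighbours)
        gap = rng.random()  # 0.0 = base point, 1.0 = neighbour
        synthetic.append(tuple(b + gap * (o - b) for b, o in zip(base, other)))
    return synthetic

# Hypothetical two-feature samples from an underrepresented class
minority = [(1.0, 2.0), (1.5, 1.8), (1.2, 2.4), (0.9, 2.1)]
new_points = smote_like_oversample(minority, n_new=6)
print(len(new_points), new_points[0])
```

Because every new point is an interpolation between two real minority samples, it stays inside the region the minority class already occupies rather than inventing values far outside it.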


How to Choose the Right Synthetic Data Tool

Before looking at the tool list, understand the differences between these platforms. Not all synthetic data is the same. A tool built for tabular financial data will fail at generating computer vision assets.

1. Data Modality

The main factor is the type of data you need.

- Tabular/Structured: Rows and columns (SQL databases, CSVs). The goal is keeping correlations (e.g., ensuring "Salary" matches "Years of Experience").

- Unstructured Text: Natural language for chatbots, sentiment analysis, or PII redaction. The goal is clear meaning and context.

- Computer Vision: Images and video. The goal is realistic looks and accurate pixel-level labeling.

2. Privacy

For regulated fields, "looking real" isn't enough. You need proof that the data cannot be reversed to reveal the original individuals. Look for tools that offer Differential Privacy, a standard for measuring privacy loss.
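Differential privacy is easiest to see on a single query. The sketch below shows the textbook Laplace mechanism for a counting query: noise with scale sensitivity/ε is added to the true answer, so a smaller ε (a tighter privacy budget) means more noise. The data and function names are illustrative; DP-enabled synthetic data generators typically apply the guarantee during model training rather than per query, but the privacy/utility trade-off works the same way.

```python
import random

def laplace_noise(scale, rng):
    # The difference of two i.i.d. exponentials with mean `scale` is Laplace(0, scale)
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)

def dp_count(records, predicate, epsilon, rng):
    """Answer "how many records match?" with epsilon-differential privacy.

    Adding or removing one person changes a count by at most 1 (sensitivity 1),
    so Laplace noise with scale 1 / epsilon is sufficient.
    """
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1 / epsilon, rng)

rng = random.Random(7)
ages = [23, 35, 41, 52, 29, 67, 38, 45]  # toy data; the true count of ages >= 40 is 4
for eps in (0.1, 1.0, 10.0):
    print(f"epsilon={eps}: {dp_count(ages, lambda a: a >= 40, eps, rng):.1f}")
```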

3. Dev Tools vs. UI

Do you want a dashboard where you upload a CSV and click "Generate"? Or do you need a Python SDK to add to your build pipeline? Tools like Gretel and Fastio focus on the developer/API side. Others offer traditional enterprise dashboards.

Best Synthetic Data Tools at a Glance

We checked the top platforms based on their ability to handle different data types, privacy guarantees, and developer experience.

1. Gretel.ai

Best For: Developers needing a versatile, API-first platform for all data types.

Gretel.ai is a popular choice in the synthetic data space. It offers a developer-friendly platform that handles tabular data, unstructured text, and images. Its "privacy engineering as a service" approach allows data scientists to generate safe versions of sensitive datasets with a simple API call.

One of Gretel's main features is generating synthetic text using large language models (LLMs). This is good for training NLP models without exposing customer chats or emails.

Key Features

- Gretel Navigator: A generative AI system that creates high-quality tabular data from natural language prompts.

- Privacy Filters: Built-in PII detection and redaction that automatically scrubs sensitive fields before generation.

- Differential Privacy: Configurable privacy budgets to balance data utility with anonymity.
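The sketch below does not use Gretel's API; it is a minimal regex-based illustration of what "detect and redact before generation" means. The patterns and placeholder labels are assumptions and would miss many real-world formats; production detectors combine patterns with named-entity recognition models.

```python
import re

# Illustrative patterns only (US-style phone and SSN formats assumed)
PII_PATTERNS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    "PHONE": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}

def redact(text):
    """Replace each detected PII span with a typed placeholder such as <EMAIL>."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"<{label}>", text)
    return text

print(redact("Contact jane.doe@example.com or 555-867-5309, SSN 123-45-6789."))
```

Typed placeholders (rather than blanking the text) keep the sentence structure intact, so a text generator trained on the redacted corpus still learns where such fields appear.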

Ideal Use Case

A healthcare startup wants to share patient data with external researchers. They use Gretel's Python SDK to ingest their sensitive CSVs, train a synthetic model, and output a dataset that preserves the statistical properties of the original, meets their security requirements, and is safe to share.

- Strengths: Supports multi-modal data (text, image, tabular), excellent developer documentation, strong privacy guarantees.

- Limitations: Can get expensive for large scale generation tasks.

- Pricing: Free tier available with a monthly credit allowance; paid Team plan available (see Gretel's published pricing).

2. Mostly AI

Best For: Enterprises focused on structured data privacy and compliance.

Mostly AI focuses on generating accurate synthetic versions of complex, structured tabular data. It is popular in banking and insurance, where it unlocks data that privacy regulations would otherwise prevent from being shared. The platform preserves the statistical correlations between tables in a relational database.

If your main goal is to create a safe sandbox environment for analytics or software testing using production-like data, Mostly AI offers some of the strongest privacy metrics in the industry.

Key Features

- Multi-Table Support: Preserves referential integrity across complex database schemas (e.g., keeping "Customer ID" consistent across Orders and Payments tables).

- Smart Imputation: Automatically fills in missing values in your original data during the generation process.

- Privacy Reports: Generates detailed PDF reports proving the privacy compliance of the output data for auditors.
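Whatever tool produces a multi-table synthetic dataset, referential integrity is easy to verify afterwards. A minimal sketch, assuming tables arrive as lists of dicts and that `customer_id` is the shared key; table and column names are illustrative.

```python
def orphaned_keys(parent_rows, child_rows, key):
    """Return child key values that have no matching row in the parent table."""
    parent_keys = {row[key] for row in parent_rows}
    return sorted({row[key] for row in child_rows} - parent_keys)

# Hypothetical synthetic output for three related tables
customers = [{"customer_id": 1}, {"customer_id": 2}, {"customer_id": 3}]
orders = [
    {"order_id": 10, "customer_id": 1},
    {"order_id": 11, "customer_id": 3},
    {"order_id": 12, "customer_id": 7},  # dangling reference: no customer 7
]
payments = [{"payment_id": 100, "customer_id": 2}]

for name, table in (("orders", orders), ("payments", payments)):
    print(name, "orphans:", orphaned_keys(customers, table, "customer_id"))
```

An empty orphan list for every child table is a quick sanity check that the generator kept "Customer ID" consistent across Orders and Payments.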

Ideal Use Case

A large bank needs to give a third-party analytics vendor access to transaction data to build a fraud detection model. Instead of going through a months-long compliance review to share real data, they generate a synthetic twin of their transaction database using Mostly AI.

- Strengths: High-fidelity tabular data generation, strong focus on privacy requirements, easy-to-use interface.

- Limitations: Primarily focused on structured data; less suitable for unstructured image or video generation.

- Pricing: Free tier available (with a daily record limit); Enterprise pricing for larger volumes.

3. Synthesis AI

Best For: Computer vision and human-centric simulation data.

Synthesis AI is a platform for generating photorealistic image and video data for computer vision. Unlike tools that modify existing images, Synthesis AI builds data from scratch using cinematic CGI and generative AI. This lets you create "Synthesis Humans": diverse digital avatars with customizable attributes such as age, ethnicity, emotion, and accessories.

The tool is well suited to training facial recognition, driver monitoring, and pedestrian detection systems, where collecting diverse real-world data is difficult and prone to bias.

Key Features

- Synthesis Humans: Generate thousands of unique digital humans with varied biometrics.

- Perfect Labels: Every pixel comes pre-labeled (segmentation maps, depth maps, surface normals), eliminating manual annotation costs.

- Scenario Simulation: Place digital humans in complex environments (cars, offices, streets) with controlled lighting and camera angles.
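Why synthetic vision data comes "perfectly labeled" is easiest to see in a toy renderer. This is not how Synthesis AI works (it uses CGI pipelines); it is a hypothetical pure-Python stand-in that draws a bright rectangle on a noisy grayscale grid and returns the segmentation mask and bounding box as a by-product of drawing.

```python
import random

def render_scene(width, height, rng):
    """Draw one random rectangle on a noisy background.

    Because the generator places the object itself, it emits the
    segmentation mask at the same time as the image: no annotation needed.
    """
    x0, y0 = rng.randrange(width // 2), rng.randrange(height // 2)
    x1, y1 = x0 + rng.randint(2, width // 2), y0 + rng.randint(2, height // 2)
    image, mask = [], []
    for y in range(height):
        img_row, mask_row = [], []
        for x in range(width):
            inside = x0 <= x < x1 and y0 <= y < y1
            # Object pixels are bright, background pixels are dim noise
            img_row.append(rng.randint(180, 255) if inside else rng.randint(0, 60))
            mask_row.append(1 if inside else 0)
        image.append(img_row)
        mask.append(mask_row)
    return image, mask, (x0, y0, x1, y1)

rng = random.Random(3)
image, mask, box = render_scene(16, 12, rng)
print("bounding box:", box, "object pixels:", sum(map(sum, mask)))
```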

Ideal Use Case

A smart home security company is building a facial recognition system. They realize their real-world training data is biased towards well-lit environments. They use Synthesis AI to generate multiple images of diverse faces in low-light, high-contrast, and obscured conditions to improve model quality.

- Strengths: Photorealistic human generation, perfect pixel-level labels, granular control over environmental conditions.

- Limitations: Niche focus on computer vision; not for tabular or text data.

- Pricing: Custom enterprise pricing.

4. Tonic.ai

Best For: DevOps teams creating staging environments.

Tonic.ai focuses on the software development lifecycle, specifically creating realistic test databases for developers. Instead of generating data from scratch, Tonic often works from a copy of your production database, creating a de-identified replica that maintains referential integrity across tables. This makes it a strong choice for engineering teams that need to debug issues in a staging environment that looks like production but contains no sensitive customer data.

Key Features

- Universal Connectors: Connects natively to Postgres, MySQL, Oracle, SQL Server, and Snowflake.

- Format Preservation: Generated data keeps the same format as the original (e.g., valid email addresses, credit card checksums), so application logic doesn't break.
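Format preservation is what keeps application validation logic from rejecting de-identified values. A minimal sketch for one case mentioned above, credit card checksums: generate random digits and append a Luhn check digit so the result passes the same validation as a real card number. This illustrates the idea and is not Tonic's implementation; the prefix and length are arbitrary example values.

```python
import random

def luhn_check_digit(partial):
    """Compute the Luhn check digit for a string of digits."""
    total = 0
    # Walk right to left; the rightmost digit of the partial number sits next
    # to the check digit, so it (and every second digit after it) is doubled.
    for i, ch in enumerate(reversed(partial)):
        d = int(ch)
        if i % 2 == 0:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return str((10 - total % 10) % 10)

def is_luhn_valid(number):
    return luhn_check_digit(number[:-1]) == number[-1]

def fake_card_number(prefix, length, rng):
    """Random digits after `prefix`, finished with a valid Luhn check digit."""
    n_random = length - len(prefix) - 1
    body = prefix + "".join(str(rng.randrange(10)) for _ in range(n_random))
    return body + luhn_check_digit(body)

rng = random.Random(0)
card = fake_card_number("4", 16, rng)
print(card, is_luhn_valid(card))
```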

Ideal Use Case

A fintech engineering team needs to reproduce a bug that only happens with specific user data. Developers cannot access production DBs. They use Tonic to create a de-identified subset of the production DB in their staging environment, allowing them to fix the bug without ever seeing real PII.

- Strengths: Excellent database subsetting, maintains complex relationships across schemas.

- Limitations: More of a data de-identification/management tool than a pure generative AI research tool.

- Pricing: Enterprise pricing based on data volume.

5. Hazy

Best For: Financial services and complex time-series data.

Hazy has found a place in the financial sector, where data is sensitive and temporal (time-series). Generating synthetic transaction logs that look realistic is hard, but Hazy's models are built to capture these temporal dependencies.

The platform is enterprise-ready, often deployed on-premise or in private clouds to ensure that the original sensitive data never leaves the bank's secure perimeter.

Key Features

- Sequential Data Modeling: Specialized in preserving the time-dependent structure of financial transactions.

- Differential Privacy: Mathematical bounds on how much any single individual's record can influence the output, limiting the risk of re-identification.

- On-Prem Deployment: Designed to run entirely within air-gapped enterprise environments.

Ideal Use Case

A global bank wants to train a new anti-money laundering (AML) model. They cannot move customer data to the cloud due to data residency laws. They install Hazy on-premise to generate a synthetic version of their transaction history, which can then be safely moved to the cloud for model training.

- Strengths: Specialized in financial and time-series data, enterprise-grade security features.

- Limitations: Complex setup compared to self-serve SaaS tools.

- Pricing: Custom pricing.

6. NVIDIA Omniverse Replicator

Best For: Robotics and physically accurate simulation.

For physical AI applications like robotics and autonomous vehicles, you need more than just images: you need physics. NVIDIA Omniverse Replicator allows developers to build physically accurate 3D worlds where agents can be trained. It generates synthetic data that follows physics, including lighting, gravity, and material properties.

Key Features

- Physically Based Rendering (PBR): Light, materials, and sensors are modeled to behave as they do in the real world.

- Domain Randomization: Automatically vary texture, lighting, and camera position to create varied, robust datasets.

- ROS Integration: Connects with the Robot Operating System (ROS) for end-to-end robotics workflows.
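Domain randomization boils down to sampling scene parameters from ranges for every rendered frame; the idea is that a model trained on enough variation treats real-world conditions as just another sample. The framework-agnostic sketch below does not use the Omniverse Replicator API, and every parameter name and range is a hypothetical placeholder. Each sampled configuration would be handed to a renderer.

```python
import random
from dataclasses import dataclass

@dataclass
class SceneParams:
    texture: str
    light_intensity: float  # arbitrary units
    light_azimuth_deg: float
    camera_height_m: float
    camera_pitch_deg: float

# Hypothetical options and ranges; in practice they are tuned to cover
# the conditions the robot will meet in deployment
TEXTURES = ["cardboard", "plastic_wrap", "glossy_label", "matte_label"]

def randomize_scene(rng):
    """Sample one randomized scene configuration (one frame of training data)."""
    return SceneParams(
        texture=rng.choice(TEXTURES),
        light_intensity=rng.uniform(200.0, 2000.0),
        light_azimuth_deg=rng.uniform(0.0, 360.0),
        camera_height_m=rng.uniform(0.5, 2.5),
        camera_pitch_deg=rng.uniform(-60.0, -15.0),
    )

rng = random.Random(5)
frames = [randomize_scene(rng) for _ in range(1000)]
print(frames[0])
```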

Ideal Use Case

A warehouse robotics company trains its robots to pick up packages. Instead of running physical robots for thousands of hours (which causes wear and tear), they train the robot's vision system in Omniverse, simulating millions of picking scenarios with different package sizes and lighting conditions.

- Strengths: Industry standard for simulation, integration with NVIDIA's hardware ecosystem, physically accurate rendering.

- Limitations: High learning curve; requires substantial hardware resources.

- Pricing: Enterprise licensing (Omniverse Enterprise).

7. Fastio

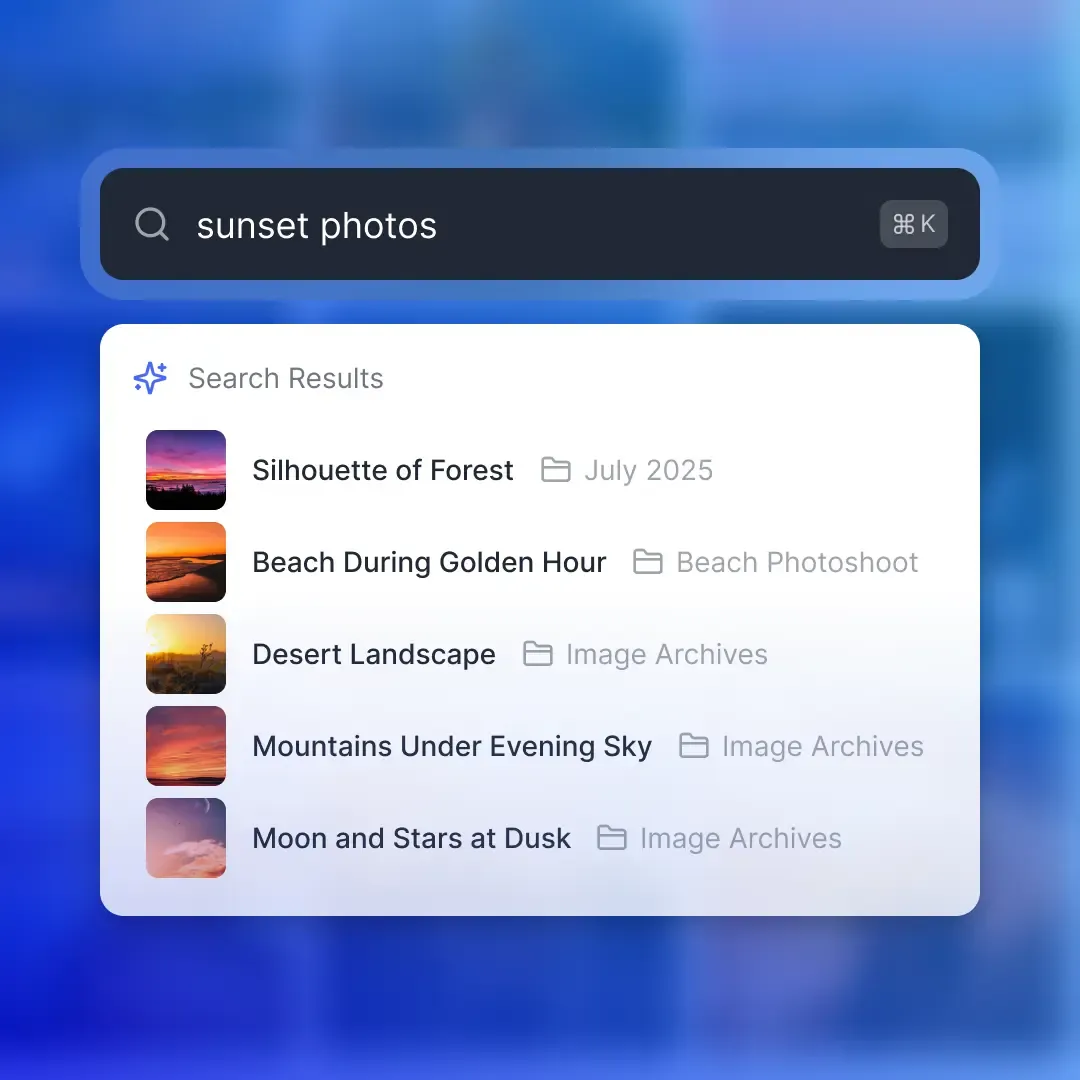

Best For: Managing synthetic data workflows and AI agents.

While Fastio isn't a synthetic data generator itself, it serves as infrastructure for teams building AI. Synthetic datasets can be huge: terabytes of images, video logs, or text corpora. Fastio provides a high-performance, smart workspace where this data lives and where your AI agents operate.

With Fastio, you can use specialized agents (like a Python agent running a Gretel SDK or a custom GAN script) to generate data directly into shared workspaces. Features like Intelligence Mode automatically index this new data, making it searchable by meaning ("Find all synthetic images of pedestrians at night") without manual tagging.

Workflow: Building a Synthetic Data Pipeline

- Agent Integration: Connect a Python agent to your Fastio workspace using the MCP server.

- Generation: The agent runs a script (e.g., using OpenAI's API or Gretel) to generate multiple synthetic JSON records.

- Direct Upload: The agent streams the files directly to /fast-io/synthetic-data/ without filling up your local disk.

- Auto-Indexing: Fastio's Intelligence Mode instantly processes the files, making them searchable and queryable by other agents or human reviewers.

- Review: A human reviewer uses the Semantic Search to spot-check the data ("Show me records with high fraud scores") before approving it for training.
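The Generation and Review steps can be prototyped without any external service. In this sketch, a rule-based generator built on Python's `random` module stands in for the OpenAI or Gretel call mentioned above, and the schema (`id`, `amount`, `merchant_category`, `fraud_score`) and the 0.5 review threshold are hypothetical. The upload step is omitted because the sketch does not assume any particular Fastio or MCP API.

```python
import json
import random

def make_record(i, rng):
    """One hypothetical synthetic transaction record."""
    return {
        "id": f"syn-{i:05d}",
        "amount": round(rng.lognormvariate(3.5, 1.0), 2),
        "merchant_category": rng.choice(["grocery", "travel", "electronics", "fuel"]),
        "fraud_score": round(rng.betavariate(1.5, 8.0), 3),  # skewed toward low scores
    }

rng = random.Random(2026)
records = [make_record(i, rng) for i in range(500)]

# Serialize as JSON Lines (one record per line), a convenient format to stream
jsonl = "\n".join(json.dumps(r) for r in records)

# Spot-check step: pull the records a reviewer should look at first
flagged = [r for r in records if r["fraud_score"] > 0.5]
print(f"{len(records)} records, {len(flagged)} flagged for review")
```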

- Strengths: 50GB free storage for agents, 19+ consolidated tools for agent integration, auto-indexing of generated assets.

- Limitations: Requires external agents or scripts to perform the actual generation.

- Pricing: Free tier includes 50GB storage and 5,000 monthly credits; Pro plans start at usage-based rates.

Build Your AI Agents on Fastio

Give your AI agents a home with 50GB free storage, instant file indexing, and 19+ consolidated tools. Built for synthetic data generation workflows.

The Future of Synthetic Data: Generative AI & Digital Twins

The line between "synthetic data" and "generative AI" is blurring. While traditional synthetic data focused on privacy-preserving tabular data, the explosion of tools like Midjourney, Stable Diffusion, and Sora is changing the field. We are moving towards a future of Digital Twins: complete, dynamic virtual replicas of physical systems (factories, cities, human bodies). In this future, AI agents won't just learn from static datasets; they will "live" and learn inside these dynamic simulations.

For developers, this means the skill set is shifting. It's no longer just about collecting data; it's about curating data and prompting its generation. The ability to design the right simulation parameters, or the right prompts to generate a balanced dataset, will become as valuable as the ability to design the model architecture itself.

Frequently Asked Questions

How do I generate synthetic training data?

You can generate synthetic training data by using a platform like Gretel.ai or Mostly AI. Upload a sample of your real data (or a schema definition), select a generation model, and the tool will learn the statistical patterns to output a new, artificial dataset.

What is the best tool for synthetic text generation?

For synthetic text generation, Gretel.ai is a top choice due to its specialized LLM-based models. Alternatively, a custom script that calls OpenAI's API can be highly effective for generating diverse natural language datasets for specific domains.

Is synthetic data as good as real data?

In many cases, yes. Synthetic data can be better than real data because it is perfectly labeled and can include rare edge cases that are hard to capture in the wild. However, it requires careful validation to ensure it doesn't hallucinate impossible scenarios or drift too far from reality.

Can I use synthetic data for computer vision?

Yes, synthetic data is widely used in computer vision. Tools like Synthesis AI and NVIDIA Omniverse generate photorealistic 3D scenes to train models for autonomous driving, robotics, and facial recognition, often outperforming models trained solely on small real-world datasets.

How do I validate the quality of synthetic data?

Validation typically involves two steps: Privacy checks (ensuring no real PII leaked) and Utility checks (comparing statistical distributions like histograms and correlations against the original data). Most platforms provide automated reports for this.
