Best Self-Hosted AI Agent Platforms (2025 Guide)

Self-hosted AI agent platforms let teams run agents on their own infrastructure — keeping data on-premise and avoiding vendor lock-in. This guide compares the leading frameworks, from code-first libraries to drag-and-drop visual builders.

Why Teams Choose Self-Hosted Agents

Cloud-hosted agents are convenient, but privacy requirements and the risk of vendor lock-in rule them out in many regulated industries.

Keeping agents on your own hardware or VPC means sensitive data stays on infrastructure you control. For organizations with data residency requirements — financial services, healthcare, defense contractors — this is usually the primary driver, not a nice-to-have.

There are practical cost considerations too. Processing data on the same network where it lives can cut round-trip latency, and at high volume self-hosting can cost less than managed platforms that add a markup on API usage.


LangChain / LangGraph

LangChain is one of the most widely used frameworks for building LLM applications, with Python and JavaScript libraries. LangGraph is its orchestration layer for stateful agents: workflows with multiple steps, branching logic, and memory.

Good for: teams that want to control every detail of how agents reason and use tools. LangGraph gives you fine-grained control over state machines, and a large ecosystem of integrations (document loaders, vector stores, tool wrappers) means you rarely have to write connectors from scratch. LangServe handles turning chains and agents into REST APIs.
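To make "control over state machines" concrete, here is a minimal, library-free sketch of the idea: nodes are functions that update shared state and return the name of the next node. This is not LangGraph's API; the node names, the `AgentState` fields, and the `run` helper are all hypothetical and exist only for illustration.

```python
from typing import Callable, Dict, List, TypedDict


class AgentState(TypedDict):
    question: str
    notes: List[str]
    answer: str


def plan(state: AgentState) -> str:
    state["notes"].append("planned")
    # Branching logic: only questions that mention "search" take the tool step.
    return "use_tool" if "search" in state["question"].lower() else "respond"


def use_tool(state: AgentState) -> str:
    # A real agent would call a tool here; this stub only records the step.
    state["notes"].append("tool:search")
    return "respond"


def respond(state: AgentState) -> str:
    state["answer"] = "Answered after steps: " + ", ".join(state["notes"])
    return "END"


# Hypothetical node registry: node name -> function returning the next node name.
NODES: Dict[str, Callable[[AgentState], str]] = {
    "plan": plan,
    "use_tool": use_tool,
    "respond": respond,
}


def run(question: str, max_steps: int = 10) -> AgentState:
    state: AgentState = {"question": question, "notes": [], "answer": ""}
    node = "plan"
    # The step cap guards against graphs that never reach END.
    for _ in range(max_steps):
        if node == "END":
            break
        node = NODES[node](state)
    return state
```

Calling `run("search the docs")` routes through the tool node, while `run("hello")` goes straight from planning to the response. A framework adds persistence, retries, and streaming on top of this basic shape.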

Hard part: steep learning curve if your team doesn't write Python or JavaScript regularly, and you own the runtime and infrastructure maintenance.

Best For: Engineering teams building custom, production-grade agent applications.

Pricing: Open source (MIT license).

Flowise (Self-Hosted)

Flowise is a low-code builder for LLM applications. You wire agents together in a node-based visual editor, and the result deploys as an API. It runs on LangChain under the hood, so you get that ecosystem without writing Python.

The Docker setup is quick. Most teams have it running in an afternoon. The tradeoff is that debugging complex loops is harder than reading a stack trace, and you're limited to the available node types unless you write custom JavaScript nodes.

Best For: Prototyping and internal tools where getting to a working demo quickly matters.

Pricing: Open source (Apache 2.0 — community edition).

n8n (Self-Hosted)

n8n started as a workflow automation tool and has grown into a solid option for AI agents, particularly for operations teams that need agents wired into real business systems. It has 400+ native connectors, so you can mix deterministic automation (send an email, update a spreadsheet) with AI agent nodes in the same workflow.

Resource requirements go up with workflow complexity, so plan your infrastructure accordingly. The Sustainable Use License is worth reading: internal business use is free, but commercial redistribution requires a separate enterprise agreement.

Best For: Operations teams who need AI agents talking to dozens of external services.

Pricing: Free for internal use; enterprise license for commercial distribution.

Give Your Self-Hosted Agents Durable Storage

Stop losing data when your containers restart. Get 50GB of free, persistent cloud storage built for AI agents.

Dify (Self-Hosted)

Dify bundles everything you'd otherwise wire together manually: RAG pipeline, prompt orchestration, agent runtime, and built-in monitoring. You deploy it with Docker Compose and get a working dashboard. It's model-agnostic — switch between OpenAI, Claude, Llama, or local models running via Ollama without changing your app logic.
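The "switch models without changing your app logic" idea can be sketched as a small interface that application code depends on, with the backend chosen by name. This is an illustration of the pattern only, not Dify's internals; `ChatModel`, `HostedStub`, `LocalStub`, and `build_model` are hypothetical names, and the stubs make no network calls.

```python
from typing import Dict, Protocol, Type


class ChatModel(Protocol):
    def complete(self, prompt: str) -> str: ...


class HostedStub:
    """Stand-in for a hosted provider client (hypothetical, no network call)."""

    def complete(self, prompt: str) -> str:
        return f"[hosted] {prompt}"


class LocalStub:
    """Stand-in for a locally served model (hypothetical, no network call)."""

    def complete(self, prompt: str) -> str:
        return f"[local] {prompt}"


# Backend selection lives in configuration, not in application code.
BACKENDS: Dict[str, Type] = {"hosted": HostedStub, "local": LocalStub}


def build_model(name: str) -> ChatModel:
    if name not in BACKENDS:
        raise ValueError(f"unknown backend: {name!r}")
    return BACKENDS[name]()


def summarize(model: ChatModel, text: str) -> str:
    # App logic only sees the ChatModel interface, so swapping backends
    # does not require touching this function.
    return model.complete(f"Summarize: {text}")
```

With this shape, moving from a hosted model to a local one is a one-word change at the call site: `summarize(build_model("local"), report_text)`.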

The downside is that it's opinionated. If your architecture doesn't fit how Dify expects agents to work, you'll hit walls that raw code wouldn't impose.

Best For: Teams shipping internal AI apps — chatbots, document assistants, internal copilots — without a dedicated ML infrastructure team.

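Pricing: Open source under a license based on Apache 2.0 with additional conditions (read them before offering Dify as a multi-tenant service); self-hosting is free.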

AutoGPT Platform

AutoGPT has moved away from its original "give it a goal and watch it loop forever" approach toward a more controlled low-code platform. The Forge toolkit handles boilerplate so you can focus on agent logic. It connects to OpenAI, Anthropic, Groq, and local Llama models.

It's not as mature as Dify or n8n for production enterprise workloads and requires more configuration to get started. But if you're specifically interested in goal-directed agents that run autonomously through multi-step tasks, it's worth exploring.

Best For: Developers experimenting with autonomous, goal-directed agents.

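Pricing: Open source. Most of the repository is MIT-licensed; the platform code in the autogpt_platform folder is under the Polyform Shield License.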

Fastio: Persistent Storage for Self-Hosted Agents

Here's a problem that comes up with every self-hosted agent deployment: container file systems are ephemeral. When your Docker or Kubernetes container restarts, whatever the agent wrote locally is gone. This isn't a theoretical edge case — it's the first thing teams run into when they move from "it works on my machine" to production.
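One common mitigation is to have the agent write state only under a directory that is backed by a mounted volume. Below is a minimal sketch assuming the mount path is passed in through an environment variable. `AGENT_DATA_DIR` is a hypothetical name, and the code only survives restarts if that path actually points at persistent storage rather than the container's own file system.

```python
import json
import os
import tempfile
from pathlib import Path


def data_dir() -> Path:
    """Resolve the state directory from AGENT_DATA_DIR (hypothetical variable)."""
    # The temp-dir fallback is for local experiments only; it is NOT durable.
    default = Path(tempfile.gettempdir()) / "agent-data"
    root = Path(os.environ.get("AGENT_DATA_DIR", str(default)))
    root.mkdir(parents=True, exist_ok=True)
    return root


def save_state(name: str, state: dict) -> Path:
    target = data_dir() / f"{name}.json"
    # Write to a temp file in the same directory, then atomically swap it in,
    # so a crash mid-write never leaves a half-written state file behind.
    fd, tmp_path = tempfile.mkstemp(dir=str(target.parent), suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as handle:
        json.dump(state, handle)
        handle.flush()
        os.fsync(handle.fileno())
    os.replace(tmp_path, target)
    return target


def load_state(name: str) -> dict:
    target = data_dir() / f"{name}.json"
    if not target.exists():
        return {}
    return json.loads(target.read_text(encoding="utf-8"))
```

In a Docker or Kubernetes deployment, the variable would be set to wherever the volume is mounted, so `save_state("run1", {"step": 3})` followed by a restart and `load_state("run1")` returns the same dictionary.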

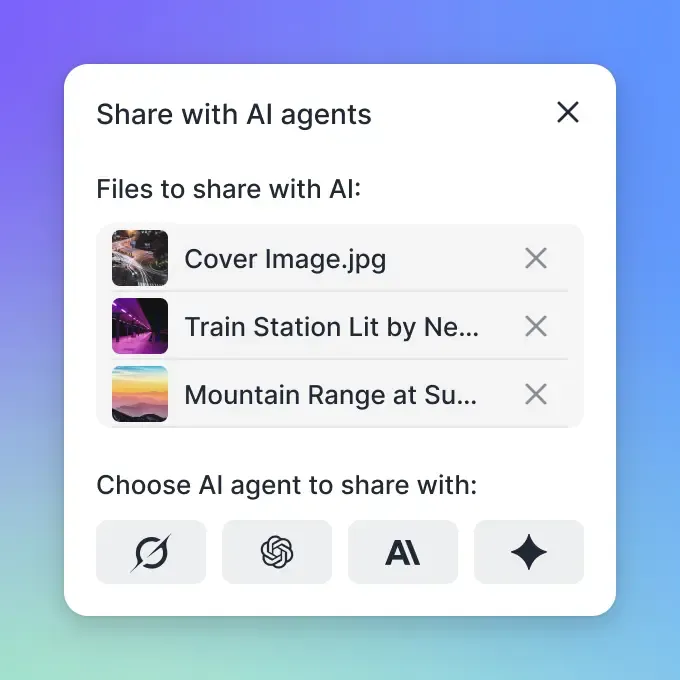

The standard solutions are vector databases (Weaviate, Qdrant) for semantic retrieval, or S3-compatible object storage for raw file persistence. Fastio combines both — file storage with built-in indexing, exposed to agents via an MCP interface.
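For readers new to semantic retrieval, the core operation is ranking stored documents by vector similarity to a query. The toy sketch below uses word counts as a stand-in for learned embeddings, so it matches on shared words rather than meaning. It does not represent the API of Weaviate, Qdrant, or Fastio; `embed`, `cosine`, and `search` are hypothetical helpers.

```python
import math
import re
from collections import Counter
from typing import Dict, List


def embed(text: str) -> Counter:
    # Stand-in "embedding": a bag of lowercase word counts.
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[token] * b[token] for token in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def search(query: str, docs: Dict[str, str], top_k: int = 1) -> List[str]:
    query_vec = embed(query)
    # Rank document names by similarity to the query, highest first.
    ranked = sorted(
        docs,
        key=lambda name: cosine(query_vec, embed(docs[name])),
        reverse=True,
    )
    return ranked[:top_k]
```

A vector database performs the same ranking over model-generated embeddings, with an index so the search stays fast as the collection grows.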

Connect via the Fastio MCP server and agents can read, write, and search files without any custom middleware. Toggle "Intelligence Mode" and it auto-indexes documents for semantic search. The free agent tier includes 50GB of storage and 5,000 monthly credits with no credit card required.

This setup keeps your compute self-hosted and privacy-controlled while handing storage off to a managed service that won't lose files on restart.

Which Platform Should You Choose?

Short answer:

- Writing Python and want full control? Go with LangChain/LangGraph.

- Shipping an internal tool and need something running fast? Dify or Flowise are both reasonable starting points.

- Operations team needing agents connected to Salesforce, Slack, and twelve other services? n8n.

- Experimenting with autonomous goal-directed agents? AutoGPT Platform.

Whatever you pick: don't rely on container-local storage for anything you care about. Ephemeral file systems lose data on restart. Use a vector DB, object store, or something like Fastio to give agents storage that outlasts the container.

Frequently Asked Questions

What are the best self-hosted AI agent platforms?

The leading self-hosted platforms include LangChain/LangGraph (code-first development), Flowise and Dify (low-code visual building), and n8n (workflow automation). All support Docker-based deployment for full data control.

Can you run AI agents on-premise?

Yes. Open-source frameworks like LangChain, Dify, and n8n can be deployed on-premise via Docker or Kubernetes. This is common in regulated industries with strict data residency requirements.

How do self-hosted agents handle long-term memory?

Self-hosted agents typically use vector databases like Weaviate or Qdrant for semantic retrieval, or object storage for raw file persistence. Platforms like Fastio combine both, providing file storage with built-in indexing for agent use via MCP.

What is the difference between SaaS and self-hosted agents?

SaaS agents run on vendor infrastructure, which is convenient but limits data control. Self-hosted agents run on your own servers, giving you control over where data lives, more room for customization, and often lower costs at scale by avoiding per-token markup.

Do self-hosted agents require a GPU?

Not necessarily. Agent orchestration logic runs on standard CPUs. If you also self-host LLM inference using tools like Ollama or vLLM, you will generally want GPU resources for anything beyond small models. Many teams self-host the agent logic but call external APIs for inference to avoid GPU costs.
