Best RAG Deployment Platforms for Production AI Agents

The best RAG deployment platforms for production AI agents pair LLMs with vector search and knowledge bases. Grounding answers in retrieved data is reported to cut hallucinations by 40-60%, which improves accuracy. We picked seven leading platforms based on scalability, cost, latency, MCP support, and multi-agent features.

Best RAG Deployment Platforms: What Is RAG?

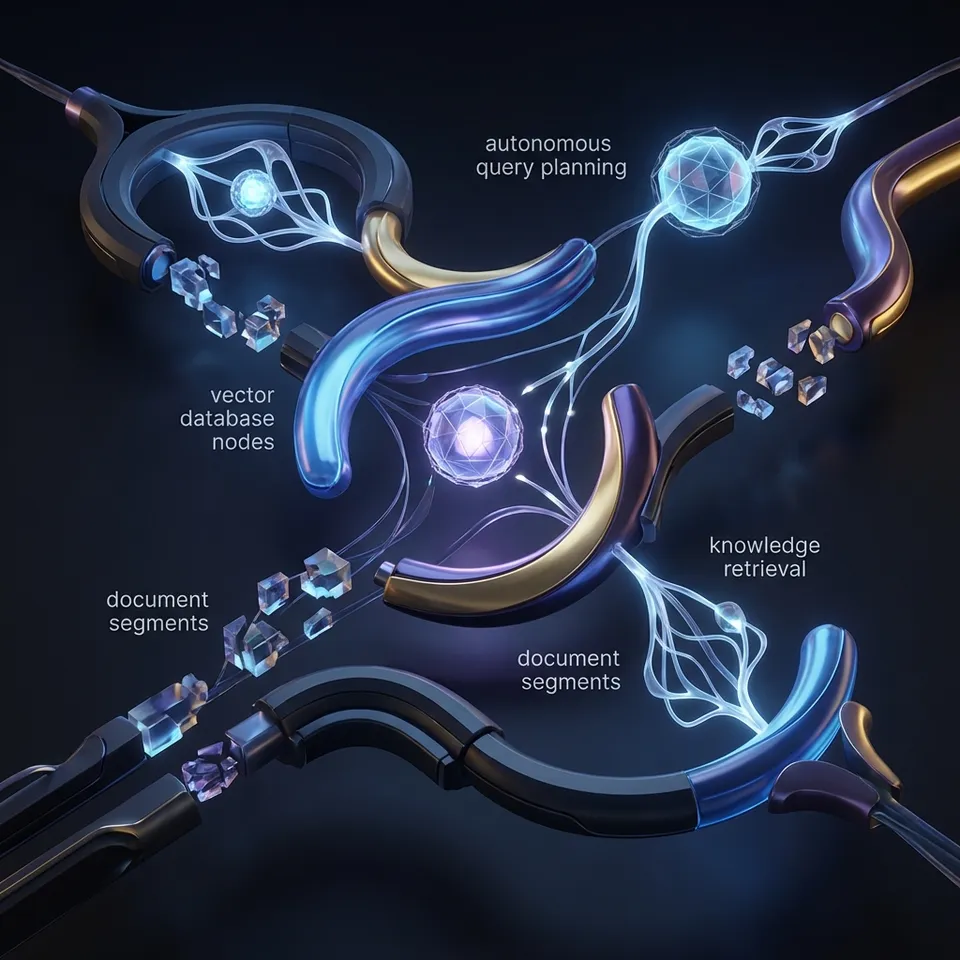

Retrieval-augmented generation (RAG) pulls data from knowledge bases to ground LLM outputs, so answers rest on retrieved facts rather than on the model's memory alone.

Production AI agents run agentic RAG: multi-step retrieval, tool calls, fast vector search. Good platforms handle indexing, queries, and scaling.

RAG typically involves four steps: ingestion where documents are chunked and embedded; storage in a vector database; retrieval using similarity search; and generation where retrieved context augments the LLM prompt for accurate outputs.
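The four steps above can be sketched end to end in plain Python. This is a minimal illustration, not any platform's implementation: the bag-of-words `embed` function stands in for a real embedding model, the in-memory list stands in for a vector database, and all function names and sample documents are made up for the example.

```python
import math
import re
from collections import Counter

def chunk(text: str, size: int = 12) -> list[str]:
    """Ingestion: split a document into chunks of at most `size` words."""
    words = text.split()
    return [" ".join(words[i:i + size]) for i in range(0, len(words), size)]

def embed(text: str) -> Counter:
    """Toy embedding: word counts (assumption: a real system calls an embedding model)."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))

def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def build_index(docs: list[str]) -> list[tuple[str, Counter]]:
    """Storage: keep (chunk, vector) pairs in a list standing in for a vector DB."""
    return [(c, embed(c)) for d in docs for c in chunk(d)]

def retrieve(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    """Retrieval: rank stored chunks by similarity to the query, keep the top k."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]

def build_prompt(query: str, context: list[str]) -> str:
    """Generation: prepend retrieved context to the question sent to the LLM."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Answer using only this context:\n{joined}\n\nQuestion: {query}"

docs = [
    "Refunds are processed within five business days of the return request.",
    "Standard shipping takes three to seven days depending on the region.",
]
index = build_index(docs)
top = retrieve(index, "How long do refunds take?", k=1)
prompt = build_prompt("How long do refunds take?", top)
```

In a production setup, `prompt` would be sent to the LLM of your choice; that call is left out here because it depends on the provider.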

How We Selected These Platforms

We evaluated platforms using key criteria for production AI agents in agentic RAG setups:

- Latency: Agentic RAG demands fast vector search to support real-time tool calls and multi-hop reasoning without delays.

- Scalability: Must handle high QPS with auto-scaling for agent fleets processing millions of queries daily.

- Pricing: Prefer free tiers for prototyping and transparent pay-per-use at scale to control costs.

- Deployment Ease: Serverless options with quick index creation and managed scaling.

- Agent Features: Native support for MCP protocols, file locks for multi-agent coordination, persistent storage beyond ephemeral caches.

- LLM Integrations: Supports Claude, GPT-4o, Gemini via LangChain, LlamaIndex, or direct APIs.

- Production Readiness: Uptime SLAs, monitoring, security (RBAC, encryption), and hybrid search (vector + keyword).

Most vector DBs excel at search but lack agent-specific workflow tools like concurrent access controls or built-in RAG pipelines.

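Quick Comparison Table

| Platform | Pricing noted in this guide | Best for |
| --- | --- | --- |
| Pinecone | $0.33/GB stored | Vector retrieval in your own pipelines |
| Amazon Bedrock Knowledge Bases | ~$0.25/1k tokens, pay-per-use | AWS-native production RAG apps |
| Weaviate | Free sandbox, cloud from $45/mo | Knowledge graphs and hybrid search |
| Google Vertex AI | $0.0001/query | Pipelines on Google Cloud |
| Qdrant | $0.05/hr per pod | Apps where speed matters most |
| Azure AI Search | Free tier, $0.336/1k indexed docs | Azure and Microsoft teams |
| Fastio Intelligent Workspaces | Free agent plan (50GB storage, 5,000 credits/mo) | Agent teams sharing knowledge bases via MCP |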

1. Pinecone

Pinecone runs serverless vector databases for RAG workflows.

Strengths:

- Hybrid search (vector + keyword)

- Auto-scaling pods

- $0.33/GB stored

Limitations: Costs grow at scale. No full RAG hosting. Lacks native multi-agent coordination features like file locks.

Pinecone supports metadata filtering, hybrid search, and serverless scaling, making it suitable for real-time agent queries.
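To make metadata filtering and hybrid search concrete, here is a small library-free sketch. It is not Pinecone's API: the record layout, the `alpha` weighting, and the simple term-overlap keyword score are assumptions chosen for illustration. The idea is to filter candidates by metadata first, then blend a vector similarity score with a keyword score.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two dense vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def keyword_score(query: str, text: str) -> float:
    """Fraction of query terms that appear in the text (toy keyword signal)."""
    terms = query.lower().split()
    words = set(text.lower().split())
    return sum(t in words for t in terms) / len(terms) if terms else 0.0

def hybrid_search(records, query_vec, query_text, where, alpha=0.5, k=3):
    """Filter by metadata, then rank by alpha * vector + (1 - alpha) * keyword."""
    candidates = [
        r for r in records
        if all(r["meta"].get(field) == value for field, value in where.items())
    ]
    scored = [
        (alpha * cosine(query_vec, r["vec"])
         + (1 - alpha) * keyword_score(query_text, r["text"]), r["id"])
        for r in candidates
    ]
    return [rid for _, rid in sorted(scored, reverse=True)[:k]]

# Hypothetical records: two English documents and one German one.
records = [
    {"id": "a", "vec": [1.0, 0.0], "text": "reset your password", "meta": {"lang": "en"}},
    {"id": "b", "vec": [0.8, 0.6], "text": "password policy overview", "meta": {"lang": "en"}},
    {"id": "c", "vec": [1.0, 0.0], "text": "reset your password", "meta": {"lang": "de"}},
]
results = hybrid_search(records, [1.0, 0.0], "reset password", {"lang": "en"})
```

Record `c` matches the query exactly but is excluded by the `lang` filter, which is the behavior that lets an agent scope retrieval to one tenant, language, or document type.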

Best for: Vector retrieval in your own pipelines.

2. Amazon Bedrock Knowledge Bases

Amazon Bedrock Knowledge Bases offer managed RAG pipelines backed by S3 for enterprise agent applications.

Strengths:

- Integrates multiple LLMs (Claude, Llama, Titan) in one platform

- Enterprise security with VPC, encryption, audit logs

- Pay-per-use ~$0.25/1k tokens for ingestion and query

- Auto-syncs data from S3, Confluence, SharePoint

Limitations: AWS lock-in, complex setup for custom agents, and no native MCP or multi-agent locks.

Bedrock also supports customizable guardrails for safer agent outputs.

Agents can use Bedrock Agents with Knowledge Bases for tool calling:

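    import boto3

    # Runtime client used to invoke a deployed Bedrock agent
    bedrock_agent_runtime = boto3.client('bedrock-agent-runtime')

    response = bedrock_agent_runtime.invoke_agent(
        agentId='your-agent-id',
        agentAliasId='your-alias',
        sessionId='session-id',
        inputText='Query with RAG context'
    )
    # The answer arrives as a stream of chunk events
    answer = ''.join(e['chunk']['bytes'].decode() for e in response['completion'] if 'chunk' in e)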

Best for: AWS-native production RAG apps with built-in agent orchestration.

3. Weaviate

Weaviate combines vector search with graph capabilities for advanced agentic RAG.

Strengths:

- GraphRAG for multi-hop retrieval in agents

- GraphQL API for complex queries

- Free sandbox, cloud from $45/mo

- Modules for hybrid search and auto-classification

Limitations: Steeper learning curve for graph features; limited agent-specific primitives like locks.

GraphRAG enables complex multi-hop reasoning over connected data.
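Multi-hop retrieval over connected data can be pictured as a bounded graph walk. The sketch below is not Weaviate's query engine; it is a plain breadth-first expansion over a hypothetical adjacency map, with made-up node names, that shows how a hop limit controls how far retrieval follows links from the initial hits.

```python
from collections import deque

def multi_hop(graph: dict[str, list[str]], seeds: list[str], max_hops: int = 2) -> dict[str, int]:
    """Breadth-first expansion from the seed nodes; returns node -> hop distance."""
    dist = {s: 0 for s in seeds}
    queue = deque(seeds)
    while queue:
        node = queue.popleft()
        if dist[node] == max_hops:
            continue  # do not expand past the hop limit
        for nxt in graph.get(node, []):
            if nxt not in dist:
                dist[nxt] = dist[node] + 1
                queue.append(nxt)
    return dist

# Hypothetical linked records: an invoice points to a customer, and so on.
graph = {
    "invoice-1042": ["customer-7"],
    "customer-7": ["contract-3"],
    "contract-3": ["clause-9"],
}
reached = multi_hop(graph, ["invoice-1042"], max_hops=2)
```

With `max_hops=2` the walk collects the customer and the contract but stops before `clause-9`, which keeps the context passed to the LLM bounded.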

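Weaviate works alongside LangChain for agent retrieval:

    import weaviate  # assumes the weaviate-client v3 API
    from langchain_community.vectorstores import Weaviate
    client = weaviate.Client("http://localhost:8080")  # placeholder URL
    vector_store = Weaviate(client=client, index_name="Document", text_key="text")  # placeholder names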

Best for: Knowledge graphs and hybrid search in agent workflows.

4. Google Vertex AI

Vertex AI Vector Search (formerly Matching Engine) provides vector search for RAG.

Strengths:

- Scales for enterprises

- BigQuery integration

- $0.0001/query

Limitations: Google Cloud only.

Vertex AI Vector Search offers filtered ANN indexes for billion-scale datasets, low-latency serving, and integration with BigQuery for data pipelines.

Best for: Pipelines on Google Cloud.

5. Qdrant

Qdrant Cloud focuses on fast vector search.

Strengths:

- Filtering and payloads

- $0.05/hr per pod

- On-prem option

Limitations: Fewer RAG-specific tools. No native agent orchestration or multi-agent features.

Qdrant provides efficient on-disk storage, advanced payload filtering, and quantization for cost-effective scaling.
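Quantization saves memory by storing each vector component in fewer bits. The sketch below shows the general idea of scalar quantization to 8-bit codes; it is an assumption-laden illustration with made-up function names, not Qdrant's implementation, and it uses a simple per-vector min/max range.

```python
def quantize(vec: list[float]) -> tuple[list[int], float, float]:
    """Map floats onto integer codes 0..255 using the vector's own min/max range."""
    lo, hi = min(vec), max(vec)
    scale = (hi - lo) / 255 or 1.0  # fall back to 1.0 for constant vectors
    return [round((x - lo) / scale) for x in vec], lo, scale

def dequantize(codes: list[int], lo: float, scale: float) -> list[float]:
    """Approximate the original floats from the stored codes."""
    return [lo + c * scale for c in codes]

vec = [-1.0, -0.5, 0.0, 0.25, 1.0]
codes, lo, scale = quantize(vec)
restored = dequantize(codes, lo, scale)
max_error = max(abs(a - b) for a, b in zip(vec, restored))
```

Each component now fits in one byte instead of four (for float32), and the reconstruction error stays within half a quantization step, which is the trade-off behind the cost savings.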

Best for: Apps where speed matters most.

6. Azure AI Search

Azure AI Search supports vector and hybrid search.

Strengths:

- Free tier

- $0.336/1k indexed docs

- Fits Microsoft stack

Limitations: Indexing costs add up.

Supports semantic reranking, integrated vectorization, and Azure OpenAI compatibility for full RAG pipelines.

Best for: Azure and Microsoft teams.

7. Fastio Intelligent Workspaces

Fastio workspaces have built-in RAG for agents.

Strengths:

- Intelligence Mode auto-indexes files

- 19 consolidated tools for Claude/GPT agents

- File locks for multi-agent coordination

- Free agent plan: 50GB storage, 5,000 credits/mo, no credit card

Fastio Intelligence Mode auto-indexes files for built-in RAG, semantic search, and AI chat with citations.
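The file locks listed among the strengths address a common multi-agent problem: two agents editing the same file at once. The sketch below is not Fastio's API. It is a hypothetical in-memory lock table, with made-up class and method names, showing the lease-style semantics such a feature typically needs: one holder per file, and expiry so a crashed agent cannot block others forever.

```python
import time

class FileLockTable:
    """In-memory advisory locks keyed by file path, with lease expiry.

    Illustration only: single-process and not thread-safe.
    """

    def __init__(self, clock=time.monotonic):
        self._clock = clock
        self._locks: dict[str, tuple[str, float]] = {}  # path -> (agent, expires_at)

    def acquire(self, path: str, agent: str, ttl: float = 30.0) -> bool:
        """Grant the lock unless another agent holds an unexpired lease."""
        now = self._clock()
        holder = self._locks.get(path)
        if holder and holder[0] != agent and holder[1] > now:
            return False
        self._locks[path] = (agent, now + ttl)
        return True

    def release(self, path: str, agent: str) -> bool:
        """Release the lock only if this agent is the current holder."""
        holder = self._locks.get(path)
        if holder and holder[0] == agent:
            del self._locks[path]
            return True
        return False

locks = FileLockTable()
first = locks.acquire("reports/q3.md", "agent-a")   # granted
second = locks.acquire("reports/q3.md", "agent-b")  # refused while agent-a holds it
locks.release("reports/q3.md", "agent-a")
third = locks.acquire("reports/q3.md", "agent-b")   # granted after release
```

A hosted service would keep this state on the server so that agents on different machines see the same locks.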

Limitations: Best for workspace RAG, not standalone vector DB.

Best for: Agent teams sharing knowledge bases via MCP. See storage for agents and MCP docs.

Give Your AI Agents Persistent Storage

Fastio gives teams shared workspaces, MCP tools, and searchable file context to run RAG workflows with reliable handoffs between agents and humans.

Which RAG Platform Should You Choose?

Consider your cloud provider and agent needs. Pick Pinecone or Qdrant for top vector search speed. Bedrock or Vertex suit large enterprises with integrated cloud services. Fastio excels for MCP-native agent teams with shared workspaces, file locks, and built-in RAG. Test free tiers for latency, cost, and feature fit before scaling.

Frequently Asked Questions

What are the best RAG deployment platforms?

Top picks: Pinecone, Amazon Bedrock, Weaviate, Vertex AI, Qdrant, Azure AI Search, Fastio.

How to deploy RAG at scale?

Choose a platform, upload documents, build the index, connect a retriever to your agent code, then monitor latency and costs. Serverless platforms scale automatically.
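For the monitoring step, tail latency is usually more informative than the average. Here is a minimal sketch that times retriever calls and reports a nearest-rank p95. The `fake_retriever` function is a stand-in for your platform's query call, and all names are assumptions for illustration.

```python
import math
import time

def percentile(samples: list[float], pct: float) -> float:
    """Nearest-rank percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]

def timed(fn, *args):
    """Call fn(*args) and return (result, elapsed milliseconds)."""
    start = time.perf_counter()
    result = fn(*args)
    return result, (time.perf_counter() - start) * 1000

def fake_retriever(query: str) -> list[str]:
    """Placeholder for a real vector search call."""
    return [query.upper()]

latencies = []
for q in ["refund policy", "shipping times", "reset password"]:
    _, ms = timed(fake_retriever, q)
    latencies.append(ms)

p95 = percentile(latencies, 95)
```

Running the same harness against each candidate platform's free tier gives comparable p95 numbers before you commit to one.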

What is agentic RAG?

Agentic RAG builds on standard RAG by letting an AI agent drive retrieval: it performs multi-hop retrieval, makes tool calls, and corrects its own errors along the way.
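A rough sketch of that loop follows. The agent retrieves, checks whether the evidence is sufficient, and retries with a rewritten query if not. In a real system the `rewrite` and `is_sufficient` steps would be LLM calls; here they are hard-coded toy functions over a made-up corpus, so treat every name as hypothetical.

```python
def agentic_retrieve(question, search, rewrite, is_sufficient, max_steps=3):
    """Retrieve, check the evidence, and retry with a rewritten query if needed."""
    query, evidence = question, []
    for step in range(1, max_steps + 1):
        # Add only hits we have not seen yet
        evidence.extend(hit for hit in search(query) if hit not in evidence)
        if is_sufficient(question, evidence):
            return evidence, step
        query = rewrite(question, evidence)  # follow-up query for the next hop
    return evidence, max_steps

# Toy stand-ins for a search tool and LLM-driven decisions.
corpus = {
    "who owns project atlas": ["Project Atlas is owned by the platform team."],
    "platform team lead": ["The platform team is led by Dana."],
}

def search(query):
    return corpus.get(query.lower(), [])

def rewrite(question, evidence):
    return "platform team lead"

def is_sufficient(question, evidence):
    return any("Dana" in e for e in evidence)

evidence, steps = agentic_retrieve("Who owns project Atlas", search, rewrite, is_sufficient)
```

The first search finds the owning team but not a person, so the loop issues a second query and stops once the evidence names one. The `max_steps` cap keeps a confused agent from looping indefinitely.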

Do RAG platforms support MCP?

Most don't. Fastio runs a full MCP server with 19 consolidated tools for agent integration.

Which RAG platforms have the lowest latency?

Qdrant and Pinecone achieve low latencies suitable for real-time agents.

What RAG platforms support MCP for agents?

Fastio offers full MCP compatibility with 19 consolidated tools. Others lack native support.
