Best Code Execution Sandboxes for AI Agents in 2026

Code execution sandboxes let AI agents run generated code in isolated environments without risking your host system. This guide compares 10 platforms by isolation technology, session duration, language support, and pricing — with notes on where each fits in a production agent stack.

Why AI Agents Need Code Sandboxes

AI agents generate and execute code for data analysis, file manipulation, API integration, and workflow automation. Running that code directly on your production system is a bad idea — agents can generate buggy or malicious code, consume unbounded resources, or accidentally touch files they shouldn't. Sandboxes contain the damage.

The practical reasons to sandbox:

- Security: agents can't access sensitive data or modify system files outside the sandbox

- Resource isolation: CPU, memory, and network access are capped per execution

- Predictability: consistent environments eliminate "works on my machine" failures

- Session persistence: long-running tasks survive network interruptions

- Multi-tenancy: multiple isolated agent workloads on shared infrastructure

The tradeoff is always security strength vs. startup speed. Firecracker microVMs give you kernel-level isolation but add 150ms–2s to cold starts. Browser isolates start in under 50ms but support fewer languages. gVisor lands in the middle: user-space kernel protection with broad compatibility and reasonable cold starts.

Comparison Table: Top Sandboxes at a Glance

1. Northflank

Northflank is a full developer platform that happens to support multiple isolation technologies: Firecracker, Kata Containers, Cloud Hypervisor, and gVisor. You choose the security vs. performance tradeoff per workload. Sandboxes persist until you terminate them, and you can deploy to your own AWS, GCP, or Azure account (BYOC).

The downside is complexity. Northflank is not a purpose-built sandbox tool — it's a full infrastructure platform with CI/CD, networking, and storage. Cold starts run around 2 seconds, longer than specialized options.

Best for: Teams that need enterprise infrastructure with full deployment control.

Pricing: Usage-based with a calculator on their site.

2. E2B

E2B was built specifically for AI agent developers — not general compute, not CI/CD. The SDK is designed around agent workflows, and it shows. Firecracker microVMs give kernel-level isolation, and cold starts hit 150ms, which is fast for the security level you're getting.

The limits: 24-hour maximum sessions (long tasks need checkpointing), no GPU support, and no BYOC — it runs on E2B's infrastructure only.

Best for: Agent developers who want a purpose-built product without managing infrastructure.

Pricing: Free hobby tier (one-time $100 credit, up to 1-hour sessions). Pro plan at $150/month with 24-hour sessions.

3. Modal

Modal is a serverless platform for ML and data workloads that recently added Python sandbox support. The main draw is GPU access: A100 and H100 are available, and autoscaling handles burst workloads without configuration. No session time limits.

The tradeoffs: isolation defaults to gVisor, which is weaker than Firecracker (Kata is available as an opt-in); there is no BYOC; and Node.js support is limited. It's Python-first by design.

Best for: ML teams running GPU-intensive agent workloads.

Pricing: Free tier includes $30/month in compute credits. Usage-based after that.

4. Daytona

Daytona's headline number is sub-90ms cold starts from code to execution. It uses Docker by default with optional Kata or Sysbox for stronger isolation. Sessions can run indefinitely.

Worth noting: Docker-by-default means weaker isolation unless you explicitly configure Kata. And pricing is enterprise-only — contact sales, no self-serve tier.

Best for: Developer teams that need fast iteration and can configure stronger isolation when required.

Pricing: Contact sales.

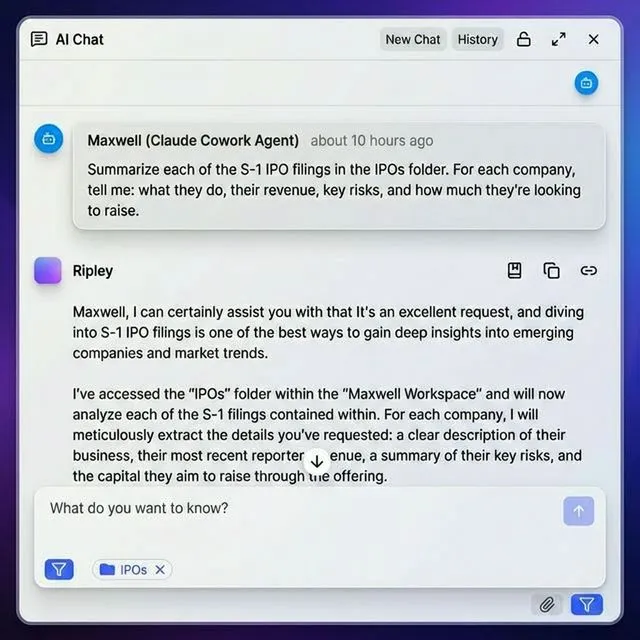

Pair your code sandbox with persistent agent storage

Fastio gives AI agents 50GB free storage with 19 consolidated tools for file operations, built-in RAG, and ownership transfer. Complement your code sandbox with organized workspaces for inputs, outputs, and client delivery.

5. Vercel Sandbox

Vercel Sandbox is still in beta. It provides ephemeral Firecracker microVMs — good security, tight Vercel integration, support for Python and Node.js. Currently free during the beta period.

The 45-minute session cap is the real constraint. It rules out any agent workflow that runs longer than a short task. The ceiling of 8 vCPUs, with 2GB of memory per vCPU, is also restrictive. Worth watching, but not production-ready yet.

Best for: Teams already on Vercel who want sandboxed agent execution for playgrounds and demos.

Pricing: Free during beta. Production pricing not announced.

6. Cloudflare Sandboxes

Cloudflare Sandboxes runs on Cloudflare's edge network using browser isolates — the same isolation model Chrome uses. Cold starts are under 50ms, which is the fastest in this comparison. Works with Workers, R2, and KV if you're already in the Cloudflare ecosystem.

The 30-minute session cap is short, and it's designed for lightweight tasks. This is not the right tool for long-running agent jobs or GPU workloads.

Best for: Edge-first applications needing global distribution and instant startup.

Pricing: Part of Cloudflare Workers. Free tier available; paid from $5/month plus usage.

7. Blaxel

Blaxel's model is different from most sandboxes: perpetual environments that auto-shutdown after 1 second of idle and resume in under 25ms. You pay nothing during standby. This makes it cost-effective for workloads that run in bursts rather than continuously.

The downside is a smaller ecosystem and contact-sales-only pricing.

Best for: Intermittent workloads where instant resume matters more than continuous availability.

Pricing: Contact sales.

8. Depot

Depot targets CI/CD workflows. You get Docker-based sandboxes with build caching, layer sharing, and 8-hour session limits — long enough for most build pipelines. Standard Dockerfiles work without modification.

The isolation is Docker-only (no microVMs), there's no GPU support, and 8 hours is a hard cap for anything that needs to run longer.

Best for: CI/CD pipelines where agents generate or test code.

Pricing: $0.01/minute billed per second, no minimum. Check depot.dev for current plan options.

9. Beam Cloud

Beam Cloud is an open-source alternative to E2B with Docker-based sandboxing. It supports GPU workloads, both Python and Node.js, and has no session time limits. The self-hostable option gives teams full control over infrastructure and data residency.

The tradeoff: Docker isolation is weaker than microVMs, and Beam's community and integration ecosystem are smaller than E2B or Modal.

Best for: Teams that need GPU access and want to self-host or use open-source tooling.

Pricing: Usage-based with a free tier.

10. Fastio

Fastio is not a code execution sandbox — it belongs in this list because most agent stacks need somewhere to put the results. It provides persistent file storage with 19 consolidated tools, built-in RAG indexing, file locking for multi-agent coordination, and ownership transfer so agents can hand off completed work to humans.

A typical pattern: execute Python code in E2B or Modal, write results to a Fastio workspace, let a human review and trigger follow-up tasks. The 50GB free tier with 5,000 monthly credits covers most development workloads without a credit card.

Limitation: No code execution. Agents run compute elsewhere and use Fastio for storage, organization, and handoff.

Pricing: Free tier (50GB, 5,000 credits, no credit card). Usage-based after that.

How we evaluated these sandboxes

We tested each platform across six key factors for AI agent code execution:

1. Isolation strength

Security matters when running untrusted code. We ranked platforms by isolation technology:

- Firecracker/Kata microVMs: Kernel-level isolation (strongest)

- gVisor: User-space kernel protection (strong)

- Browser isolates: Chrome-based sandboxing (strong for specific workloads)

- Docker: Container isolation (weaker, but fast)

2. Session duration

Long-running agent tasks need sandboxes that don't cut off execution. We noted maximum session lengths and whether platforms support unlimited sessions.

3. Cold start performance

Agent responsiveness depends on how fast a sandbox goes from API call to running code. We recorded startup times across all platforms.

4. Language support

Python dominates agent code. Node.js is common for API integrations. We verified which platforms support both natively.

5. GPU access

ML inference and training require GPU support. We identified platforms with A100/H100 access for compute-heavy workloads.

6. Pricing model

Free tiers, usage-based pricing, and seat-based models all have different cost profiles at scale. We compared them to help you estimate production costs.

Which sandbox should you choose?

- Maximum security: Northflank or E2B. Both offer Firecracker microVMs for kernel-level isolation (on Northflank it is one of several isolation options you select per workload).

- GPU workloads: Modal or Beam Cloud. Both offer A100/H100 access for ML inference and training.

- Fastest cold starts: Cloudflare Sandboxes (sub-50ms) or Blaxel (sub-25ms resume from standby).

- Long-running sessions: Northflank, Modal, Daytona, or Beam Cloud. None of them cap session duration.

- Edge distribution: Cloudflare Sandboxes, to run code close to users on a global network.

- CI/CD pipelines: Depot, with build caching and Docker compatibility.

- File storage and handoff: Fastio alongside whichever compute sandbox you pick.

Most production setups combine at least two of these. A common pattern: execute in E2B or Modal, store results in Fastio, distribute via Cloudflare if latency matters.
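The selection rules above can be sketched as a small filter. This is only an illustration: the `Sandbox` record and `shortlist` function are hypothetical names, and the values are the figures stated in this guide, not data pulled from any vendor API. Check each vendor's documentation before relying on them.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Sandbox:
    name: str
    max_session_minutes: Optional[int]  # None = no session cap stated in this guide
    gpu: bool


# Figures as stated in this guide; Fastio is omitted because it does not execute code.
PLATFORMS = [
    Sandbox("Northflank", None, True),
    Sandbox("E2B", 24 * 60, False),
    Sandbox("Modal", None, True),
    Sandbox("Daytona", None, True),
    Sandbox("Vercel Sandbox", 45, False),
    Sandbox("Cloudflare Sandboxes", 30, False),
    Sandbox("Blaxel", None, False),
    Sandbox("Depot", 8 * 60, False),
    Sandbox("Beam Cloud", None, True),
]


def shortlist(min_session_minutes: int, needs_gpu: bool) -> list:
    """Return platform names that satisfy the session and GPU requirements."""
    names = []
    for p in PLATFORMS:
        long_enough = (
            p.max_session_minutes is None
            or p.max_session_minutes >= min_session_minutes
        )
        if long_enough and (p.gpu or not needs_gpu):
            names.append(p.name)
    return names


# A 10-hour GPU job leaves only the platforms without a session cap.
print(shortlist(min_session_minutes=600, needs_gpu=True))
```

Isolation strength, cold start time, and pricing would be further columns in the same record; they are left out here to keep the sketch short.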

State persistence and multi-agent coordination

Execution alone isn't enough. You also need to think about how agents maintain state and coordinate without clobbering each other.

Session checkpointing matters when your sandbox has time limits. E2B caps at 24 hours, Depot at 8 hours, Vercel Sandbox at 45 minutes, and Cloudflare Sandboxes at 30 minutes. For long tasks, you need to save intermediate state before the session ends. Platforms with unlimited sessions (Northflank, Modal, Daytona, Beam Cloud) avoid this problem.
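One way to checkpoint, sketched with the Python standard library only: write progress to a file after each unit of work, and resume from that file when a new session starts. The function names and the JSON layout are assumptions made for this example, and in practice the checkpoint path would need to point at storage that outlives the sandbox.

```python
import json
import os
import tempfile
from pathlib import Path


def save_checkpoint(path: Path, state: dict) -> None:
    """Write state atomically: dump to a temp file, then rename over the target."""
    fd, tmp = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
        f.flush()
        os.fsync(f.fileno())
    os.replace(tmp, path)  # atomic on the same filesystem


def load_checkpoint(path: Path) -> dict:
    """Return saved state, or a fresh state if no checkpoint exists yet."""
    if path.exists():
        return json.loads(path.read_text())
    return {"next_index": 0, "results": []}


def process_items(items: list, path: Path, budget: int) -> dict:
    """Process at most `budget` items this session, checkpointing after each one."""
    state = load_checkpoint(path)
    end = min(len(items), state["next_index"] + budget)
    for i in range(state["next_index"], end):
        state["results"].append(items[i] * 2)  # stand-in for the real work
        state["next_index"] = i + 1
        save_checkpoint(path, state)
    return state
```

Calling `process_items` again with the same checkpoint path picks up at `next_index`, so a session that hits its time limit loses at most the item in flight.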

Filesystem persistence varies a lot. Some sandboxes give you ephemeral filesystems that wipe on restart. Others maintain state across invocations. Know which you're working with before building a workflow that depends on files surviving between runs.

Multi-agent coordination gets complicated when agents share storage. Without file locks, two agents can overwrite the same file simultaneously. Fastio provides file locks via MCP for safe concurrent access. Alternatively, give each agent its own sandbox and use external storage for shared state.
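To make the locking idea concrete, here is a minimal advisory lock built on atomic exclusive file creation. It is a generic sketch for agents that share a local or mounted filesystem, not the Fastio MCP locking mechanism, and `file_lock` is a hypothetical helper name. A holder that crashes leaves a stale lock file behind, which a real implementation would have to detect and clean up.

```python
import os
import time
from contextlib import contextmanager


@contextmanager
def file_lock(lock_path: str, timeout: float = 5.0, poll: float = 0.05):
    """Hold an advisory lock for the duration of the with-block."""
    deadline = time.monotonic() + timeout
    while True:
        try:
            # O_CREAT | O_EXCL fails if the file already exists, so only
            # one process can create the lock file at a time.
            fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
            break
        except FileExistsError:
            if time.monotonic() >= deadline:
                raise TimeoutError(f"could not acquire {lock_path}")
            time.sleep(poll)
    try:
        os.write(fd, str(os.getpid()).encode())  # record the holder for debugging
        yield
    finally:
        os.close(fd)
        os.remove(lock_path)
```

An agent would wrap each write in `with file_lock("report.csv.lock"):` so that a second agent waits, or times out, instead of overwriting the file mid-write.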

Output management matters at scale. Where do results go after the sandbox session ends? Most teams write outputs to object storage (S3, R2) or to structured storage like Fastio workspaces, where humans can review work and trigger follow-up tasks.

Security considerations for production deployments

A few things that matter more than most people realize until something goes wrong:

Network isolation: Agents shouldn't be able to call arbitrary external services. Configure outbound firewall rules to whitelist only approved APIs. Cloudflare Sandboxes has built-in egress controls; others require manual configuration.
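The allowlist idea can be sketched at the application level as a check that runs before any outbound request is made. The host names below are placeholders and `is_egress_allowed` is a hypothetical helper. A check like this complements firewall or proxy rules rather than replacing them, since code running inside the sandbox can bypass an in-process check.

```python
from urllib.parse import urlsplit

# Hypothetical allowlist; replace with the APIs your agents are approved to call.
ALLOWED_HOSTS = frozenset({"api.example.com", "storage.example.com"})


def is_egress_allowed(url: str, allowed_hosts=ALLOWED_HOSTS) -> bool:
    """Allow only HTTPS requests whose exact hostname is on the allowlist."""
    parts = urlsplit(url)
    if parts.scheme != "https":
        return False
    host = (parts.hostname or "").lower()
    # Exact match only: "api.example.com.evil.net" must not pass.
    return host in allowed_hosts
```

Matching on the parsed hostname rather than on a substring of the URL avoids look-alike hosts and credentials-in-URL tricks such as `https://api.example.com@evil.net/`.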

Resource limits: Set per-sandbox caps on CPU, RAM, disk, and execution time. Without limits, a runaway agent can consume your entire compute budget in a long session. Modal and Northflank give you fine-grained controls.
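As a rough illustration of per-execution caps, the sketch below runs a snippet in a child process with CPU, memory, and wall-clock limits using only the Python standard library. It assumes a Linux or other POSIX host, `run_limited` is a hypothetical helper, and it is not a sandbox in itself: it bounds resource use but provides none of the isolation discussed in this guide.

```python
import resource
import subprocess
import sys


def run_limited(code: str, cpu_seconds: int = 2,
                memory_bytes: int = 512 * 1024 * 1024,
                wall_seconds: float = 5.0) -> subprocess.CompletedProcess:
    """Run `code` in a child Python process with CPU, memory, and time caps."""

    def apply_limits():
        # Runs in the child just before exec; the limits apply only to the child.
        resource.setrlimit(resource.RLIMIT_CPU, (cpu_seconds, cpu_seconds))
        resource.setrlimit(resource.RLIMIT_AS, (memory_bytes, memory_bytes))

    return subprocess.run(
        [sys.executable, "-I", "-c", code],  # -I: isolated mode, ignores user site and env vars
        preexec_fn=apply_limits,
        capture_output=True,
        text=True,
        timeout=wall_seconds,  # raises subprocess.TimeoutExpired if exceeded
    )


result = run_limited("print(2 + 2)")
print(result.returncode, result.stdout.strip())
```

A snippet that tries to allocate more than `memory_bytes` fails with a `MemoryError` inside the child and a non-zero return code, while the parent process is unaffected.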

Audit logging: For compliance-sensitive industries, detailed logs of what code ran, when, and what it accessed are often mandatory. Northflank and Fastio both provide audit trails.

Secret management: API keys should never appear in agent-generated code. Use environment variables or a secret manager. Don't let agents read or write credentials directly.
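One way to keep host credentials away from agent-generated code is to start the child process with an explicitly allowlisted environment instead of inheriting the parent's. The sketch below assumes a POSIX host; the helper names and the variable allowlist are illustrative choices, not part of any platform's API.

```python
import os
import subprocess
import sys

# Variables that are safe to pass through; everything else is dropped.
# LD_LIBRARY_PATH is included because some Python builds need it to start.
SAFE_VARS = ("PATH", "LANG", "LC_ALL", "LD_LIBRARY_PATH")


def sandbox_env(extra=None) -> dict:
    """Build a minimal environment for the child, plus explicit extras."""
    env = {k: os.environ[k] for k in SAFE_VARS if k in os.environ}
    env.update(extra or {})
    return env


def run_without_host_secrets(code: str, extra=None) -> str:
    """Run code in a child process that cannot see the parent's other variables."""
    result = subprocess.run(
        [sys.executable, "-c", code],
        env=sandbox_env(extra),
        capture_output=True,
        text=True,
        timeout=10,
    )
    return result.stdout
```

Any credential the task genuinely needs is passed through `extra` on purpose, ideally as a short-lived, narrowly scoped token rather than a long-lived key.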

Human approval gates: For any agent action that touches production databases or calls external payment APIs, consider requiring human review before execution. It's slower, but one bad agent run can be expensive to undo.
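An approval gate can be as simple as a policy check in front of the executor. In this sketch the target labels, the `Action` record, and the `approve` callback are all hypothetical; a real system would route the approval request to a reviewer through a ticket, chat message, or dashboard.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical labels for targets that always need a human decision.
SENSITIVE_TARGETS = frozenset({"production_db", "payments_api"})


@dataclass(frozen=True)
class Action:
    name: str
    target: str


def requires_approval(action: Action) -> bool:
    """Flag actions that touch a sensitive target."""
    return action.target in SENSITIVE_TARGETS


def execute(action: Action,
            run: Callable[[Action], None],
            approve: Callable[[Action], bool]) -> str:
    """Run the action, asking for approval first when the policy requires it."""
    if requires_approval(action) and not approve(action):
        return "rejected"
    run(action)
    return "executed"
```

Actions against non-sensitive targets pass straight through, so the slowdown is limited to the cases where a mistake is expensive.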

Frequently Asked Questions

Why do AI agents need code sandboxes?

AI agents generate and execute code for tasks like data analysis, API integration, and file manipulation. Without sandboxing, this creates serious security risks, since agents might generate malicious or buggy code that could compromise your system. Sandboxed execution provides kernel-level or container-level isolation that prevents untrusted code from accessing sensitive data, modifying system files, or consuming unlimited resources. For production deployments that run agent-generated code, treat sandboxing as a baseline requirement.

Is E2B free for developers?

E2B has a free Hobby plan with a one-time $100 usage credit, up to 1-hour sessions, and 20 concurrent sandboxes — no credit card required. For longer sessions (up to 24 hours) and higher concurrency, the Pro plan is $150/month. Production workloads use usage-based billing on top of the plan fee. Check e2b.dev/pricing for current limits.

What's the difference between Firecracker and Docker for sandboxing?

Firecracker provides microVM isolation where each sandbox runs its own kernel, giving kernel-level security similar to running separate virtual machines. Docker uses container isolation where sandboxes share the host kernel with namespace separation. Firecracker offers stronger security isolation but adds some startup latency compared to containers. Docker starts faster but provides weaker security boundaries. For AI agent code execution, Firecracker is preferred when running untrusted code, while Docker works for controlled environments where code comes from trusted sources.

Can AI agents run GPU workloads in these sandboxes?

Only some platforms support GPU access in sandboxes. Modal and Beam Cloud offer A100 and H100 GPUs optimized for ML inference and training workloads. Northflank and Daytona also provide GPU support. However, E2B, Vercel Sandbox, Cloudflare Sandboxes, Blaxel, and Depot do not currently offer GPU-accelerated sandboxes. If your agents need to run ML models or perform GPU-intensive tasks, choose Modal, Beam Cloud, Northflank, or Daytona.

How long can AI agent code run in a sandbox?

Session duration varies by platform. Cloudflare Sandboxes limits executions to 30 minutes. Vercel Sandbox caps sessions at 45 minutes. Depot caps sessions at 8 hours. E2B allows up to 24 hours per session on the Pro plan and up to 1 hour on the free Hobby tier. Northflank, Modal, Daytona, and Beam Cloud support unlimited session duration where sandboxes persist until you terminate them. For long-running agent tasks like overnight data processing or continuous monitoring, choose a platform without time limits. For short-lived tasks like API calls or quick data transformations, shorter session limits are fine.

What happens to files created in a sandbox after execution completes?

File persistence depends on the platform. Some sandboxes provide ephemeral filesystems that delete all files when the session ends. Others offer persistent storage that survives across multiple executions. For important outputs, most teams copy files to external storage like S3, R2, or Fastio workspaces before the sandbox terminates. Fastio provides persistent workspaces where agents can organize outputs by project, enable RAG search, and transfer ownership to humans for review.

Can multiple AI agents share the same sandbox?

Most platforms allow multiple agents to access the same sandbox, but you need to handle concurrency carefully. Without coordination, agents might overwrite each other's files or conflict on shared resources. Use file locks to prevent concurrent writes to the same file. Fastio provides file locks via its MCP server for safe multi-agent coordination. Alternatively, give each agent its own isolated sandbox and use external storage for shared state.

How much does it cost to run AI agents in production sandboxes?

Costs vary by platform and usage patterns. E2B, Modal, Beam Cloud, and Northflank use usage-based pricing where you pay for the compute time, memory, and storage you consume; E2B's Pro plan adds a $150/month fee on top. Depot charges $0.01/minute, billed per second. Cloudflare Sandboxes uses Workers pricing (from $5/month) plus usage. Daytona and Blaxel require contacting sales. For typical agent workloads, costs depend on execution frequency, session duration, and resource requirements. Free tiers from E2B and Modal help you estimate costs during development before committing to paid plans.

Are there compliance considerations when running agent code in sandboxes?

Yes, especially in regulated industries. Healthcare, finance, and legal applications often have requirements around data residency, audit logging, and access controls that sandboxes may or may not satisfy. Check whether your chosen platform offers HIPAA BAAs, SOC 2 reports, or equivalent documentation before deploying agents that handle sensitive data. Consult legal counsel before deploying agents in healthcare or other regulated contexts.

What's the best sandbox for agents using the Model Context Protocol (MCP)?

MCP-compatible agents benefit from sandboxes that integrate well with external tool servers. Fastio provides 19 consolidated tools for file operations via Streamable HTTP and SSE transport, making it ideal for agents that need persistent storage and workspace organization. For code execution, pair Fastio with a compute sandbox like E2B, Modal, or Cloudflare Sandboxes. The agent executes code in the sandbox, stores results in Fastio workspaces, and uses MCP tools to organize files, trigger workflows, or transfer ownership to humans.
