7 Best AI Agent Monitoring Tools for Production

AI agents introduce new failure modes that traditional APM tools can't catch, from infinite reasoning loops to excessive tool usage. This guide compares the top monitoring platforms for tracking agent behavior, cost, and output quality in production.

Why Agent Monitoring is Different from LLM Observability

Agent monitoring goes beyond standard APM to track tool usage, token costs, and reasoning loops. While LLM observability focuses on single inputs and outputs (prompts and completions), agents operate in loops. They plan, execute tools, observe results, and iterate. This autonomy creates unique risks. An agent might get stuck in a "thought loop," burning through your API credits without producing a result. Or it might execute a tool incorrectly, deleting files or hallucinating data that it then treats as fact.
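One common guard against the "thought loop" risk described above is a hard budget on steps and tokens. The sketch below is a minimal illustration, not any vendor's API: `run_agent`, `BudgetExceeded`, and the `step_fn` callback (assumed to return a `(done, tokens_used)` pair for each iteration) are hypothetical names, and the default limits are arbitrary.

```python
class BudgetExceeded(RuntimeError):
    """Raised when the agent runs out of steps or tokens."""


def run_agent(step_fn, max_steps=10, max_tokens=20_000):
    """Run a plan/act/observe loop under a step and token budget.

    step_fn(step_number) is a hypothetical callback that performs one
    iteration and returns (done, tokens_used_in_this_step).
    """
    tokens_used = 0
    for step in range(1, max_steps + 1):
        done, tokens = step_fn(step)
        tokens_used += tokens
        if tokens_used > max_tokens:
            raise BudgetExceeded(f"token budget exceeded at step {step}")
        if done:
            return step, tokens_used
    raise BudgetExceeded(f"no result after {max_steps} steps")
```

A monitoring tool would typically record the exception as an alert rather than letting the run fail silently.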

Key metrics for agents include:

- Step Count: How many iterations does it take to solve a task?

- Tool Success Rate: Are API calls or file operations succeeding?

- Loop Detection: Is the agent repeating the same failed action?

- File I/O: What documents did the agent read, generate, or modify?

Debugging these interaction patterns can take up a large share of engineering time. The tools below help you reclaim it.
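As a rough illustration of the first three metrics listed above, the following sketch computes them from a recorded trace. The `Step` record and its fields are assumptions made for this example; real tracing tools use their own schemas.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Step:
    """One iteration of a hypothetical agent loop."""
    tool: Optional[str]  # tool name, or None for a pure reasoning step
    args: str = ""       # serialized tool arguments
    ok: bool = True      # whether the tool call succeeded


def step_count(trace: list[Step]) -> int:
    """Number of iterations the agent needed."""
    return len(trace)


def tool_success_rate(trace: list[Step]) -> float:
    """Fraction of tool calls that succeeded (1.0 if no tools were called)."""
    calls = [s for s in trace if s.tool is not None]
    if not calls:
        return 1.0
    return sum(s.ok for s in calls) / len(calls)


def has_failed_loop(trace: list[Step], threshold: int = 3) -> bool:
    """True if the same failed tool call repeats `threshold` times in a row."""
    run, previous = 0, None
    for s in trace:
        failed_call = (s.tool, s.args) if s.tool is not None and not s.ok else None
        if failed_call is None:
            run = 0
        elif failed_call == previous:
            run += 1
        else:
            run = 1
        previous = failed_call
        if run >= threshold:
            return True
    return False
```

The threshold of three consecutive failures is arbitrary; a production check might also compare normalized arguments so that trivially reworded retries count as repeats.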


1. LangSmith

LangSmith by LangChain is the industry standard for granular tracing of agent reasoning chains. It visualizes the entire "thought process" of your agent, breaking down every step from the initial user prompt to the final output.

Key Strengths:

- Deep Tracing: See what the agent "thought" at each step and which tools it called.

- Playground Integration: Re-run specific steps with modified prompts to debug failures.

- Dataset Collection: Turn production traces into test datasets for evaluation.

Limitations:

- Can become expensive at high volumes if you log every token.

- UI can be overwhelming for non-technical stakeholders.

Best For: Engineering teams building complex, multi-step agents who need deep code-level debugging.
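To make the idea of step-level tracing concrete, here is a minimal, generic sketch of nested spans. It does not use the LangSmith SDK; the `Tracer` class and its field names are hypothetical and only show the kind of parent/child record a tracer collects.

```python
import time
from contextlib import contextmanager


class Tracer:
    """Collects nested spans; a toy stand-in for a real tracing backend."""

    def __init__(self):
        self.spans = []   # finished spans, in completion order
        self._stack = []  # names of the spans currently open

    @contextmanager
    def span(self, name, **attrs):
        record = {
            "name": name,
            "parent": self._stack[-1] if self._stack else None,
            "attrs": attrs,
        }
        start = time.perf_counter()
        self._stack.append(name)
        try:
            yield record
        finally:
            self._stack.pop()
            record["duration_s"] = time.perf_counter() - start
            self.spans.append(record)


tracer = Tracer()
with tracer.span("agent_run", task="summarize report"):
    with tracer.span("tool:search", query="quarterly revenue"):
        pass  # the real tool call would go here
```

Because spans are appended when they finish, child spans appear before their parents in `tracer.spans`.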

Give Your AI Agents Persistent Storage

Fastio provides secure, monitored storage for your AI agents with built-in audit logs and human-handoff workflows.

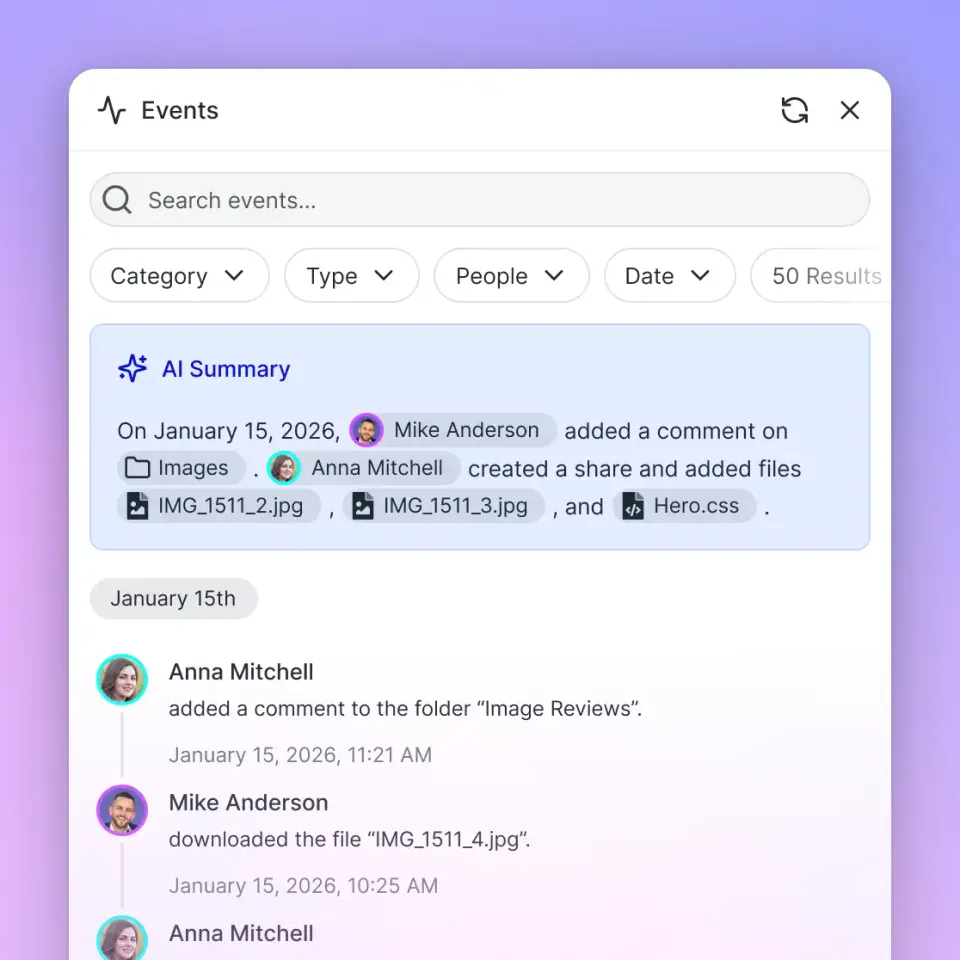

2. Fastio

While other tools monitor the reasoning, Fastio monitors the artifacts. It tracks the files and data your agents interact with. When an agent generates a report, modifies a codebase, or processes a video, Fastio logs that activity and secures the output.

Key Strengths:

- File System Observability: See which files an agent read, wrote, or deleted.

- Audit Trails: Maintains a permanent log of all file operations, critical for compliance and security.

- Human-in-the-Loop: Agents can "hand off" files to humans via branded portals or shared workspaces.

- 19 consolidated tools [^2]: Includes a large library of pre-built tools for file manipulation that work out of the box.

Limitations:

- Focuses on storage and I/O, not prompt engineering or model fine-tuning.

- Best used alongside a reasoning tracer like LangSmith for full coverage.

Best For: Agents that generate files, process documents, or need persistent long-term memory.

Pricing: Free tier includes 50GB storage and 5,000 monthly credits [^3].
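For readers who want a feel for what an audit trail of file operations involves, the sketch below keeps an in-memory, hash-chained log. It is not Fastio's API; `AuditLog` and its fields are hypothetical, and the hash chain is one possible way to make tampering detectable.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(entry: dict) -> str:
    """Stable SHA-256 over an entry's fields."""
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()


class AuditLog:
    """Append-only log where each entry includes the previous entry's hash."""

    def __init__(self):
        self.entries = []

    def record(self, agent: str, action: str, path: str) -> dict:
        entry = {
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent": agent,
            "action": action,  # e.g. "read", "write", "delete"
            "path": path,
            "prev": self.entries[-1]["hash"] if self.entries else "",
        }
        entry["hash"] = _digest(entry)  # computed before the hash field is added
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain and report whether any entry was altered."""
        prev = ""
        for e in self.entries:
            body = {k: v for k, v in e.items() if k != "hash"}
            if e["prev"] != prev or _digest(body) != e["hash"]:
                return False
            prev = e["hash"]
        return True
```

A real deployment would persist entries to durable storage; the in-memory list here only keeps the example self-contained.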

3. Arize AI

Arize AI focuses on evaluation and drift detection. It excels at answering the question: "Is my agent getting worse over time?" It tracks performance metrics across thousands of runs to identify regression in quality or accuracy.

Key Strengths:

- Drift Detection: Automatically alerts you if your agent's outputs start deviating from established baselines.

- Embedding Visualization: Visualizes high-dimensional data to find clusters of problematic inputs.

- Enterprise Scale: Built to handle millions of inferences per day without slowing down.

Limitations:

- Setup is more complex than lightweight tracing tools.

- Targeted more at data science teams than application developers.

Best For: Large-scale enterprise deployments where drift and regression are significant risks.
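Drift detection can take many forms. As a simple illustration, and not a description of Arize's method, the sketch below flags drift when the mean of a recent window of quality scores moves too far from a baseline, using a z-score on the mean. The function name, the scores, and the threshold of 3.0 are assumptions for the example.

```python
from statistics import mean, stdev


def drifted(baseline: list[float], recent: list[float], z_threshold: float = 3.0) -> bool:
    """Flag drift when the recent mean is far from the baseline mean.

    Uses a z-score of the recent mean against the baseline distribution.
    Requires at least two baseline values and one recent value.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        return mean(recent) != mu
    standard_error = sigma / len(recent) ** 0.5
    return abs(mean(recent) - mu) / standard_error > z_threshold


# Hypothetical per-run quality scores (for example, evaluator pass rates).
baseline_scores = [0.80, 0.82, 0.78, 0.81, 0.79, 0.80, 0.83, 0.77]
stable_window = [0.80, 0.79, 0.81, 0.80]
degraded_window = [0.60, 0.62, 0.58, 0.61]
```

With these numbers, `drifted(baseline_scores, stable_window)` is false and `drifted(baseline_scores, degraded_window)` is true.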

4. Helicone

Helicone is a proxy that sits between your agent and the LLM provider. Its main strength is caching and cost control. By caching identical requests, it can reduce latency and bills for agents that frequently run similar tasks.

Key Strengths:

- Smart Caching: Returns cached responses for repeat queries, saving time and money.

- Cost Analytics: Detailed breakdown of spend by user, agent, or feature.

- Lightweight: Easy to drop in by just changing your base URL.

Limitations:

- Focuses on the LLM API layer, offering less visibility into internal agent logic or tool execution.

Best For: Startups and teams optimizing token spend and latency.
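The caching idea behind a proxy like this can be sketched in a few lines. The example below is a generic illustration, not Helicone's implementation: `CachingClient` is a hypothetical wrapper, and `call_model` stands in for whatever function actually calls your LLM provider.

```python
import hashlib
import json


class CachingClient:
    """Returns a stored response when an identical request is repeated."""

    def __init__(self, call_model):
        self._call_model = call_model  # hypothetical function that hits the provider
        self._cache = {}
        self.hits = 0
        self.misses = 0

    def complete(self, model: str, prompt: str, **params):
        # The key covers everything that can change the response.
        payload = {"model": model, "prompt": prompt, "params": params}
        key = hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()
        if key in self._cache:
            self.hits += 1
            return self._cache[key]
        self.misses += 1
        response = self._call_model(model, prompt, **params)
        self._cache[key] = response
        return response
```

Exact-match caching only helps when requests repeat verbatim, and it is usually unsuitable for calls that rely on sampling randomness, so a real setup would also need an expiry policy and a way to opt out per request.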

5. Datadog

For teams already using Datadog for infrastructure, their LLM observability offering is a logical extension. It unifies agent metrics with your broader system health, letting you correlate slow agent responses with database load or server issues.

Key Strengths:

- Unified View: See agent performance alongside server CPU, memory, and database metrics in a single pane of glass.

- Security Integration: Detects sensitive data leaks (PII) in prompts and responses.

- Alerting: Uses Datadog's alerting engine for advanced notification rules.

Limitations:

- Can be expensive if you don't already have a Datadog contract.

- Less tailored for specific agentic workflows compared to niche tools.

Best For: DevOps-heavy organizations that want to keep all monitoring in one platform.
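To show what scanning prompts and responses for sensitive data can look like at its simplest, here is a regex-based sketch. It is not Datadog's scanner; the two patterns (email addresses and US Social Security number formats) and the function names are assumptions chosen for illustration, and real scanners cover many more data types.

```python
import re

# Illustrative patterns only; production scanners use far richer rule sets.
PII_PATTERNS = {
    "email": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "us_ssn": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def find_pii(text: str) -> dict:
    """Return the matches for each pattern that appears in the text."""
    return {
        label: pattern.findall(text)
        for label, pattern in PII_PATTERNS.items()
        if pattern.search(text)
    }


def redact(text: str) -> str:
    """Replace each match with a placeholder such as [EMAIL]."""
    for label, pattern in PII_PATTERNS.items():
        text = pattern.sub(f"[{label.upper()}]", text)
    return text
```

Running the check before a prompt is logged keeps the raw values out of your traces as well as out of the provider request.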

6. Langfuse

Langfuse is a powerful open-source alternative for observability. It offers many of the same tracing and evaluation features as proprietary tools but gives you the option to self-host, which is important for teams with strict data privacy requirements.

Key Strengths:

- Open Source: Full control over your data and infrastructure; self-host via Docker.

- Prompt Management: Includes a CMS for managing and versioning agent prompts.

- Model Agnostic: Works well with any LLM or framework, not just LangChain.

Limitations:

- Self-hosting requires maintenance and infrastructure management.

Best For: Privacy-conscious teams or those who prefer open-source stacks.

Consider how each tool fits into your broader workflow and what matters most for your team. The right choice depends on your specific requirements: file types, team size, security needs, and how you collaborate with external partners. Testing with a free account is the fastest way to find out whether a tool works for you.

7. Maxim AI

Maxim AI focuses on the pre-production phase. It provides a testing environment for simulating agent behaviors and running "red team" attacks to find vulnerabilities before you ship.

Key Strengths:

- Simulation Engine: Run thousands of test scenarios to see how the agent handles edge cases.

- Red Teaming: Tools for testing safety and jailbreak resistance.

- Regression Testing: Compare new agent versions against golden datasets automatically.

Limitations:

- More of a testing platform than a live production monitor (though it does both).

Best For: QA and safety teams ensuring agents are safe and reliable before deployment.
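Regression testing against a golden dataset boils down to comparing pass rates between agent versions. The sketch below is a generic illustration, not Maxim AI's API: the agents are modeled as plain functions from input to output, exact-match scoring is assumed, and all names are hypothetical.

```python
def pass_rate(agent, golden: list[dict]) -> float:
    """Fraction of golden cases where the agent's output matches exactly."""
    passed = sum(1 for case in golden if agent(case["input"]) == case["expected"])
    return passed / len(golden)


def is_regression(baseline_agent, candidate_agent, golden: list[dict],
                  tolerance: float = 0.0) -> bool:
    """True if the candidate scores worse than the baseline beyond the tolerance."""
    return pass_rate(candidate_agent, golden) < pass_rate(baseline_agent, golden) - tolerance


# Hypothetical golden dataset and two toy "agent versions".
golden_cases = [
    {"input": "2+2", "expected": "4"},
    {"input": "3+3", "expected": "6"},
]
answers_v1 = {"2+2": "4", "3+3": "6"}
answers_v2 = {"2+2": "4", "3+3": "7"}


def agent_v1(prompt: str) -> str:
    return answers_v1[prompt]


def agent_v2(prompt: str) -> str:
    return answers_v2[prompt]
```

Exact matching is rarely enough for free-form agent output, so in practice the comparison step is often replaced by a scoring function or an evaluator model.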

Comparison Summary

Which One Should You Choose?

For most teams, a combination of tools works best. Use LangSmith or Langfuse to debug the reasoning logic, and pair it with Fastio to manage and monitor the actual files your agent creates. If cost is your primary constraint, start with Helicone to get immediate savings through caching.

Frequently Asked Questions

How do you monitor an AI agent?

Monitoring an AI agent requires tracking three layers: the LLM (tokens, latency), the logic (reasoning steps, loops), and the side effects (tool usage, file modifications). You need observability tools like LangSmith or Fastio because standard application monitoring misses the internal 'thought process' of the agent.

What is LLM observability?

LLM observability is the practice of tracking the inputs, outputs, and performance of Large Language Models. It focuses on metrics like token usage, response latency, hallucination rates, and cost per query. For agents, this extends to monitoring multi-step chains and external tool interactions.

Why is file monitoring important for AI agents?

Agents often act autonomously to generate reports, edit code, or organize data. Without file monitoring, you have no record of what the agent changed, created, or deleted. Tools like Fastio provide an audit trail for these actions, so you can roll back changes or verify the agent's work.

