How to Build Automated Metadata Extraction Webhook Workflows

Polling for new files wastes compute and delays processing. Webhook-driven metadata extraction pipelines react to file events in real time, pulling structured data from documents, images, and media the moment they arrive. This guide walks through building these pipelines with Fast.io webhooks, n8n, and custom Node.js handlers.

Why Polling Falls Short for Metadata Extraction

Most metadata extraction setups start the same way: a cron job checks a folder every few minutes, looks for new files, and kicks off processing. It works until it doesn't.

The problem is waste. A system polling every 30 seconds across 10,000 monitored resources generates roughly 28.8 million requests per day. The vast majority return nothing because no files changed. That's bandwidth, CPU cycles, and API quota burned on empty responses.
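The arithmetic behind that figure is easy to check. A minimal sketch, with an illustrative function name that is not part of any library:

```javascript
// Requests generated per day when every monitored resource is polled
// once per interval.
function pollingRequestsPerDay(intervalSeconds, resourceCount) {
  const SECONDS_PER_DAY = 24 * 60 * 60; // 86,400
  const pollsPerResource = SECONDS_PER_DAY / intervalSeconds;
  return pollsPerResource * resourceCount;
}

// 2,880 polls per resource per day, times 10,000 resources
console.log(pollingRequestsPerDay(30, 10000)); // 28800000
```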

Latency is the other cost. If your polling interval is five minutes, a file uploaded one second after the last check sits idle for nearly five minutes before processing begins. For workflows where metadata feeds downstream systems (search indexes, compliance checks, client portals), that delay compounds.

Webhook-based architectures flip the model. Instead of asking "anything new?" thousands of times per day, the storage system pushes a notification the moment a file event occurs. Your extraction pipeline only runs when there's actual work to do.

The difference shows up in two places:

- Latency drops from minutes to seconds. The webhook fires within milliseconds of the file event. Your handler receives the payload, fetches the file, and starts extraction immediately.

- Infrastructure load drops dramatically. No empty polling cycles means your extraction workers only spin up when files arrive. For bursty upload patterns (batch imports, end-of-day submissions), this maps compute directly to demand.

How Webhook-Based Metadata Pipelines Work

A webhook metadata pipeline has four stages: event source, receiver, extractor, and destination.

Event source is the storage platform that detects file changes. When a user or agent uploads a file, the platform fires an HTTP POST to a URL you configure. Fast.io, for example, sends webhook payloads for events like workspace_storage_file_added with context including the workspace ID, file ID, and timestamp.

Receiver is your endpoint that accepts the webhook payload. This can be a serverless function (AWS Lambda, Cloudflare Workers), a framework route (Express, FastAPI), or a workflow automation platform (n8n, Make). The receiver validates the request signature, parses the event data, and decides what to do next.

Extractor is the service that pulls metadata from the file. Depending on the file type, this might mean:

- Reading EXIF data from images (camera model, GPS coordinates, dimensions)

- Parsing document properties from PDFs and Office files (author, creation date, page count)

- Running OCR on scanned documents to extract text fields

- Using AI models to classify content and extract structured data (invoice numbers, contract dates, entity names)

Destination is where the extracted metadata lands. Common targets include a search index (Elasticsearch, Typesense), a database (PostgreSQL, MongoDB), a spreadsheet, or the file's own metadata record back in the storage platform.

The key advantage of this architecture is that each stage is independent. You can swap extractors without touching the receiver. You can add new destinations without modifying the extraction logic. The webhook event is the stable contract that connects everything.
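One way to picture that independence is to treat each stage as a plain function that is handed to the pipeline. The sketch below is an assumption about how you might wire things, not a Fast.io API: `createPipeline`, `fetchFile`, `extractors`, and `destinations` are all hypothetical names.

```javascript
// Hypothetical wiring of the stages described above. Each stage is an
// async function passed in from outside, so any one of them can be
// replaced without editing the others.
function createPipeline({ fetchFile, extractors, destinations }) {
  return async function handleEvent(event) {
    const { object_id: fileId, content_type: contentType } = event.data;

    // Pick an extractor by content type; unknown types yield empty metadata.
    const extract = extractors[contentType] ?? (async () => ({}));

    const buffer = await fetchFile(fileId);
    const metadata = await extract(buffer);

    // Deliver the same result to every configured destination.
    await Promise.all(destinations.map((deliver) => deliver(fileId, metadata)));
    return metadata;
  };
}
```

Swapping the PDF extractor then means changing one entry in `extractors`; the receiver and the destinations never see the difference.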

Setting Up Fast.io Webhooks for File Events

Fast.io's webhook system sends HTTP POST requests to your endpoint when events happen in workspaces or shares. For metadata extraction, the most useful events are file additions and modifications.

Here's what a typical webhook payload looks like when a file is uploaded:

```json
{
  "event_id": "evt_abc123",
  "event": "workspace_storage_file_added",
  "created": "2026-04-21T14:30:00Z",
  "data": {
    "workspace_id": "ws_789",
    "object_id": "file_456",
    "name": "contract-draft.pdf",
    "size": 2048576,
    "content_type": "application/pdf"
  }
}
```

Your handler receives this payload, validates the signature using the X-Fastio-Signature header and your webhook secret (HMAC verification), then uses the file ID to fetch the file and run extraction.

A minimal Node.js Express handler looks like this:

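```javascript
const crypto = require("crypto");
const express = require("express");

const app = express();

// express.raw keeps the body as a Buffer, so the HMAC is computed over
// the exact bytes that were sent.
app.post("/webhook", express.raw({ type: "application/json" }), (req, res) => {
  const signature = req.headers["x-fastio-signature"] || "";
  const expected = crypto
    .createHmac("sha256", process.env.WEBHOOK_SECRET)
    .update(req.body)
    .digest("hex");

  // Compare in constant time. timingSafeEqual throws on unequal lengths,
  // so check the length first.
  const received = Buffer.from(signature);
  const computed = Buffer.from(expected);
  if (received.length !== computed.length || !crypto.timingSafeEqual(received, computed)) {
    return res.status(401).send("Invalid signature");
  }

  const event = JSON.parse(req.body.toString("utf8"));

  // Acknowledge first so a slow extraction cannot trigger a retry.
  res.status(200).send("OK");

  if (event.event === "workspace_storage_file_added") {
    // extractMetadata is your own function; failures are logged, not thrown.
    Promise.resolve(extractMetadata(event.data.object_id, event.data.content_type))
      .catch((err) => console.error("Extraction failed:", err));
  }
});
```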

Respond with a 200 status immediately, then process the extraction asynchronously. If your handler takes too long to respond, the webhook system may retry the delivery, leading to duplicate processing.

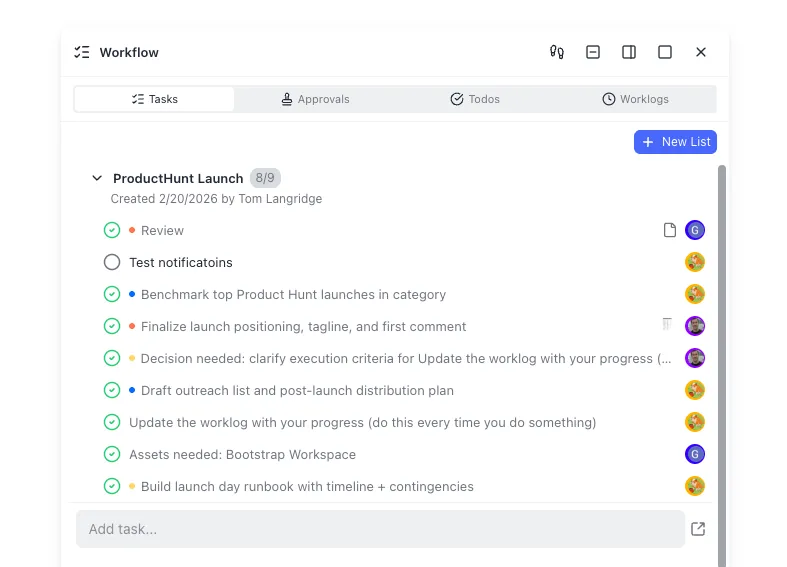

Fast.io also supports Intelligence Mode, which auto-indexes uploaded files for semantic search and AI chat. If your extraction needs are primarily search and summarization, enabling Intelligence on the workspace handles indexing automatically without a custom pipeline. For structured extraction, Fast.io's Metadata Views go further: describe what fields you want (contract dates, invoice totals, counterparty names) in natural language, and the AI builds a typed schema and extracts those values into a sortable, filterable grid across every matching file in the workspace. For fully custom pipelines that feed external databases or trigger downstream workflows, the webhook approach gives you full control.

Start extracting metadata from file uploads automatically

Fast.io workspaces include webhooks, built-in AI indexing, and Metadata Views that extract structured fields into a queryable grid. Describe the data you need in plain English and get a live spreadsheet from your files. 50GB free storage and 5,000 monthly credits, no credit card.

Building Extraction Pipelines with n8n

n8n is an open-source workflow automation platform that handles webhook reception and file processing without custom code. It's a good fit when you want to prototype a metadata pipeline quickly or when non-developers need to modify the extraction logic.

Here's how to wire up a metadata extraction workflow in n8n:

Step 1: Create a Webhook trigger node. Set the HTTP method to POST and note the generated URL. Configure your Fast.io workspace to send file events to this URL.

Step 2: Add a Switch node to route based on event type. Filter for workspace_storage_file_added events and ignore others (member changes, deletions).

Step 3: Add an HTTP Request node to fetch the file using the file ID from the webhook payload. Pass your Fast.io API key in the authorization header.

Step 4: Add an Extract From File node. n8n's built-in extraction handles CSV, JSON, XML, and spreadsheet formats natively, and it can pull plain text out of text-based PDFs. For scanned PDFs, images, or structured fields such as contract dates, connect to an external extraction service or use n8n's AI nodes with a model that supports document understanding.

Step 5: Route the extracted metadata to your destination. n8n has native nodes for PostgreSQL, Google Sheets, Airtable, Notion, and dozens of other targets.
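If you are comparing this workflow with a coded handler, the Switch node in Step 2 does roughly what the following sketch does. The handler table and the returned action objects are hypothetical; only the `workspace_storage_file_added` event name comes from this guide.

```javascript
// Event name -> handler. A Map avoids accidental matches on inherited
// object properties such as "constructor".
const handlers = new Map([
  [
    "workspace_storage_file_added",
    (data) => ({ action: "extract", fileId: data.object_id }),
  ],
]);

function routeEvent(event, table = handlers) {
  const handler = table.get(event.event);
  if (!handler) {
    // Same effect as the Switch node's fallthrough: other events are ignored.
    return { action: "ignore", eventType: event.event };
  }
  return handler(event.data);
}
```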

A practical example: a legal team receives contracts through a Fast.io Receive share. Each upload triggers a webhook. n8n fetches the PDF, extracts the contract date, parties, and dollar amounts using an AI extraction node, then writes the structured data to a Google Sheet that the team uses for tracking.

For teams already using Zapier or Make (formerly Integromat), the pattern is similar. Create a webhook trigger, add a file fetch step, then connect to your extraction and storage services. n8n's advantage is that it's self-hostable, so sensitive documents never leave your infrastructure during extraction.

Custom Node.js Webhook Handlers for Advanced Extraction

When you need fine-grained control over extraction logic, file type routing, or error handling, a custom handler gives you full flexibility. Here's a pattern that holds up under production load once you add the error handling covered later in this guide.

Start with a queue-based architecture. The webhook handler accepts events and pushes them to a job queue (Bull, BullMQ, or a managed service like AWS SQS). A separate worker process pulls jobs and runs extraction. This separation means webhook responses stay fast and extraction failures don't block new events.

```javascript
const { Queue } = require("bullmq");

const extractionQueue = new Queue("metadata-extraction", {
  connection: { host: "localhost", port: 6379 },
});

async function handleWebhook(event) {
  const { object_id, content_type, name } = event.data;

  await extractionQueue.add("extract", {
    fileId: object_id,
    contentType: content_type,
    fileName: name,
    receivedAt: new Date().toISOString(),
  });
}
```

On the worker side, route extraction based on content type:

```javascript
const { Worker } = require("bullmq");
const sharp = require("sharp");
const pdfParse = require("pdf-parse");

// fetchFileFromFastio and saveMetadata are placeholders for your own
// download and persistence helpers; they are not library functions.
const worker = new Worker(
  "metadata-extraction",
  async (job) => {
    const { fileId, contentType } = job.data;
    const fileBuffer = await fetchFileFromFastio(fileId);
    let metadata = {};

    if (contentType.startsWith("image/")) {
      const info = await sharp(fileBuffer).metadata();
      metadata = {
        width: info.width,
        height: info.height,
        format: info.format,
        colorSpace: info.space,
        hasAlpha: info.hasAlpha,
      };
    } else if (contentType === "application/pdf") {
      const parsed = await pdfParse(fileBuffer);
      metadata = {
        pageCount: parsed.numpages,
        author: parsed.info?.Author,
        title: parsed.info?.Title,
        creationDate: parsed.info?.CreationDate,
        // filter(Boolean) drops the empty strings split() produces
        // around leading or trailing whitespace.
        wordCount: parsed.text.split(/\s+/).filter(Boolean).length,
      };
    }

    await saveMetadata(fileId, metadata);
  },
  // The worker must use the same Redis connection as the queue.
  { connection: { host: "localhost", port: 6379 } }
);
```

For AI-powered extraction (entity recognition, classification, structured field extraction from unstructured documents), add a step that sends the file content to a language model. Fast.io's Metadata Views handle this natively: describe the fields you need in plain English, and the platform extracts structured data from PDFs, spreadsheets, images, and Office files into a queryable grid. No templates or OCR rules required. You can combine Metadata Views with your custom webhook pipeline: let Fast.io extract common fields (dates, amounts, names, status) natively, then run specialized extractors for domain-specific fields that need custom logic.

Handle idempotency by tracking processed event IDs. Webhook systems retry on failure, so your worker should check whether an event has already been processed before running extraction again. A simple Redis set or database lookup works for this.
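A minimal sketch of that check follows. It uses an in-memory `Set` as a stand-in for the Redis set or database table, so it only deduplicates within one process; `createDeduplicator` and `processOnce` are hypothetical names.

```javascript
// In-memory stand-in for a shared store. With several workers you would
// back this with Redis or a database so every worker sees the same IDs.
function createDeduplicator(seen = new Set()) {
  return async function processOnce(eventId, work) {
    if (seen.has(eventId)) {
      return { skipped: true };
    }
    // Claim the ID before starting, so a duplicate that arrives while
    // the work is still running is skipped too.
    seen.add(eventId);
    try {
      const result = await work();
      return { skipped: false, result };
    } catch (err) {
      // Release the claim so a later retry is allowed to run.
      seen.delete(eventId);
      throw err;
    }
  };
}
```

Releasing the claim on failure is a design choice: it favors eventually processing every event over never processing one twice.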

Error Handling and Reliability Patterns

Webhook pipelines fail in predictable ways. Planning for these failures keeps your metadata extraction running smoothly.

Signature validation failures mean either your webhook secret is misconfigured or someone is sending forged payloads. Log these with the source IP and alert on spikes. Never process a payload that fails signature verification.

File fetch failures happen when the webhook arrives before the file is fully available, or when network issues interrupt the download. Implement exponential backoff with a retry limit. Three retries with delays of 2, 8, and 32 seconds handle most transient issues.
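The 2, 8, and 32 second schedule corresponds to a base delay of 2 seconds multiplied by 4 on each retry. A sketch under that assumption, where `retryWithBackoff` is a hypothetical helper and `sleep` is injectable so the schedule can be tested without waiting:

```javascript
const defaultSleep = (ms) => new Promise((resolve) => setTimeout(resolve, ms));

// Runs task once, then retries up to `retries` times on failure.
// Delay before retry n (0-based) is baseDelayMs * factor^n.
async function retryWithBackoff(task, options = {}) {
  const { retries = 3, baseDelayMs = 2000, factor = 4, sleep = defaultSleep } = options;
  let lastError;
  for (let attempt = 0; attempt <= retries; attempt += 1) {
    try {
      return await task(attempt);
    } catch (err) {
      lastError = err;
      if (attempt === retries) break; // retry limit reached
      await sleep(baseDelayMs * factor ** attempt); // 2s, 8s, 32s by default
    }
  }
  throw lastError;
}
```

In the worker, the file download would be the `task`, for example `retryWithBackoff(() => fetchFileFromFastio(fileId))`, with `fetchFileFromFastio` being your own helper.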

Extraction failures vary by file type. A corrupted PDF might crash your parser. An image without EXIF data returns empty metadata. Handle these gracefully: log the failure, mark the file as "extraction failed" in your database, and move on. Don't let one bad file block the entire queue.
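One way to keep a bad file from stalling the queue is to wrap the extractor and return a status record instead of throwing. This is a sketch, not a prescribed pattern; `extractSafely` and the status strings are hypothetical.

```javascript
// Runs an extractor and converts any thrown error into a result record,
// so the caller can store the failure and move on to the next job.
async function extractSafely(fileId, extract) {
  try {
    const metadata = await extract();
    return { fileId, status: "extracted", metadata };
  } catch (err) {
    console.error(`Extraction failed for ${fileId}: ${err.message}`);
    return { fileId, status: "extraction_failed", error: err.message };
  }
}
```

Note the trade-off: because the error is swallowed, a queue such as BullMQ would record the job as completed. If you rely on the queue's own retry mechanism for some failures, rethrow those instead.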

Duplicate deliveries are normal in webhook systems. If your handler crashes after processing but before acknowledging, the sender retries. Use the event ID as a deduplication key. Before processing, check if you've already seen this event ID. If you have, return 200 and skip.

Ordering is not guaranteed. If a file is uploaded and then immediately modified, you might receive the modification event before the creation event. Design your extractor to handle updates idempotently. Re-extracting metadata for an already-processed file should produce the same result, not create duplicate records.
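A sketch of an upsert that tolerates out-of-order and repeated events is shown below. It assumes the payload's `created` timestamp reflects when the event happened, which you should confirm for your platform; the `Map` stands in for a table with a unique key on the file ID.

```javascript
// Keeps one record per file and ignores events that are not newer than
// the one already stored.
function createMetadataStore(records = new Map()) {
  return {
    upsert(fileId, eventCreated, metadata) {
      const existing = records.get(fileId);
      if (existing && Date.parse(existing.eventCreated) >= Date.parse(eventCreated)) {
        return false; // stale or duplicate event, nothing written
      }
      records.set(fileId, { eventCreated, metadata });
      return true;
    },
    get: (fileId) => records.get(fileId),
    size: () => records.size,
  };
}
```

If a modification event arrives before the creation event, the late creation event is discarded and the file still ends up with a single record.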

For monitoring, track three metrics: webhook receipt rate (are events arriving?), extraction success rate (are files processing?), and end-to-end latency (how long from upload to metadata availability?). Set alerts on drops in receipt rate and spikes in failure rate. Fast.io's audit trails log webhook delivery attempts, giving you visibility into the source side.
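As a rough illustration, the three metrics can be tracked with a few counters before you move to a real metrics system. Everything in this sketch (`createPipelineMetrics` and its method names) is hypothetical.

```javascript
// Minimal in-process counters for receipt count, success rate, and
// upload-to-metadata latency.
function createPipelineMetrics() {
  const counts = { received: 0, succeeded: 0, failed: 0 };
  const latenciesMs = [];

  return {
    recordReceived() {
      counts.received += 1;
    },
    // uploadedAt: the event's ISO timestamp; finishedAt: when metadata was saved.
    recordResult(ok, uploadedAt, finishedAt = new Date()) {
      if (ok) counts.succeeded += 1;
      else counts.failed += 1;
      latenciesMs.push(finishedAt.getTime() - new Date(uploadedAt).getTime());
    },
    snapshot() {
      const finished = counts.succeeded + counts.failed;
      const totalLatency = latenciesMs.reduce((sum, ms) => sum + ms, 0);
      return {
        received: counts.received,
        successRate: finished === 0 ? null : counts.succeeded / finished,
        avgLatencyMs: latenciesMs.length === 0 ? null : totalLatency / latenciesMs.length,
      };
    },
  };
}
```

Taking a snapshot on a fixed interval and resetting the counters turns the receipt count into a receipt rate that you can alert on.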

Connecting Your Pipeline to Downstream Systems

Extracted metadata is only useful if it flows to the systems that need it. Here are common patterns for connecting your extraction pipeline to downstream consumers.

Search indexes. Push extracted text, keywords, and structured fields to Elasticsearch, Typesense, or Meilisearch. This powers full-text search across your file library. If you're using Fast.io with Intelligence Mode enabled, workspace files are already indexed for semantic search and RAG chat. Your custom pipeline complements this by indexing domain-specific fields that general-purpose indexing might miss.

Databases. Write extracted metadata to PostgreSQL or MongoDB for querying and reporting. A contract metadata table might include columns for contract date, expiration date, parties, and dollar amount, all populated automatically from webhook-triggered extraction.
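For PostgreSQL, an upsert keyed on the file ID keeps re-extraction from creating duplicate rows. The sketch below only builds the query; the table name, column names, and the `parties` array column are assumptions for illustration. The returned object has the `text` and `values` shape that the `pg` client's `query` method accepts.

```javascript
// Builds a parameterized upsert for a hypothetical contract_metadata
// table with a unique constraint on file_id.
function buildContractUpsert(fileId, fields) {
  return {
    text: `INSERT INTO contract_metadata
  (file_id, contract_date, expiration_date, parties, dollar_amount)
VALUES ($1, $2, $3, $4, $5)
ON CONFLICT (file_id) DO UPDATE SET
  contract_date = EXCLUDED.contract_date,
  expiration_date = EXCLUDED.expiration_date,
  parties = EXCLUDED.parties,
  dollar_amount = EXCLUDED.dollar_amount`,
    // Missing fields become NULL rather than undefined.
    values: [
      fileId,
      fields.contractDate ?? null,
      fields.expirationDate ?? null,
      fields.parties ?? null,
      fields.dollarAmount ?? null,
    ],
  };
}
```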

Notification systems. Route specific extraction results to Slack, email, or a task queue. If an uploaded document is missing required fields (no signature date on a contract, no author on a report), create a task automatically. Fast.io's workflow primitives (tasks, todos, approvals) can receive these notifications directly, keeping the follow-up action in the same workspace as the file.

Data warehouses. For analytics pipelines, batch extracted metadata into Snowflake, BigQuery, or Redshift. Use a staging table that your extraction worker writes to, then run periodic ETL jobs to merge into your analytics schema.

Back to the storage platform. Write extracted metadata back to the file record itself. Fast.io's API supports custom metadata on files, so you can attach extracted fields directly to the file object. This means anyone browsing the workspace sees the extracted data without querying a separate system.

The webhook event that started the pipeline can also fan out to multiple destinations simultaneously. Your handler pushes one job per destination to the queue, and each worker processes independently. A single file upload can update your search index, populate a database row, and send a Slack notification, all triggered by one webhook event.
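The fan-out step might look like the sketch below. It builds one job per destination and hands them to any queue object that exposes `addBulk`, which BullMQ's `Queue` does. The destination names and job naming scheme are hypothetical, and the deterministic `jobId` relies on BullMQ skipping a job whose ID already exists.

```javascript
// One job per destination, all derived from the same webhook event.
function buildFanOutJobs(event, destinations) {
  return destinations.map((destination) => ({
    name: `deliver-${destination}`,
    data: {
      destination,
      eventId: event.event_id,
      fileId: event.data.object_id,
    },
    // Deterministic ID so a redelivered webhook does not enqueue the
    // same delivery twice.
    opts: { jobId: `${event.event_id}-${destination}` },
  }));
}

async function fanOut(queue, event, destinations) {
  return queue.addBulk(buildFanOutJobs(event, destinations));
}
```

A call such as `fanOut(extractionQueue, event, ["search-index", "database", "slack"])` would enqueue three independent jobs, and a failure in one destination would not block the others.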

Frequently Asked Questions

How do I trigger metadata extraction automatically on file upload?

Configure your storage platform to send webhook notifications on file upload events. When a file arrives, the webhook sends an HTTP POST to your endpoint with details like file ID, name, and content type. Your handler fetches the file and runs extraction logic based on the file type. Fast.io sends a workspace_storage_file_added event for this purpose.

What webhook events are useful for metadata pipelines?

File creation and file modification events are the primary triggers. File creation fires extraction on new uploads. File modification re-extracts metadata when files are updated or replaced. Some platforms also offer folder-level events and share events that let you scope extraction to specific locations.

How do I build a real-time metadata extraction workflow?

Use a webhook trigger connected to a job queue. The webhook handler validates the event and pushes an extraction job. A worker process fetches the file, runs type-specific extraction (EXIF for images, parsing for PDFs, AI models for unstructured documents), and writes results to your destination. The async queue pattern keeps response times fast while handling extraction reliably.

Can I use Fast.io's built-in AI instead of a custom extraction pipeline?

Yes. Fast.io's Intelligence Mode auto-indexes uploaded files for semantic search, summarization, and citation-backed chat. For structured extraction, Metadata Views let you describe the fields you need in natural language, and the AI extracts them into a sortable, filterable spreadsheet across all matching files. Agents can also create Views, trigger extraction, and query results programmatically through Fast.io's MCP server. You can combine Metadata Views with a custom webhook pipeline for domain-specific fields that need specialized handling.

How do I handle webhook failures in a metadata pipeline?

Implement three safeguards: signature verification to reject forged payloads, exponential backoff retries for transient failures (network issues, temporary file unavailability), and idempotency checks using event IDs to prevent duplicate processing. Track event IDs in Redis or a database, and skip events you have already processed.

What's the difference between polling and webhooks for metadata extraction?

Polling checks for new files on a schedule, generating many empty requests when no files have changed. Webhooks push a notification the instant a file event occurs, so extraction starts within seconds of upload. Webhooks eliminate the wasted requests from polling and reduce processing latency from your polling interval down to near-instant.
