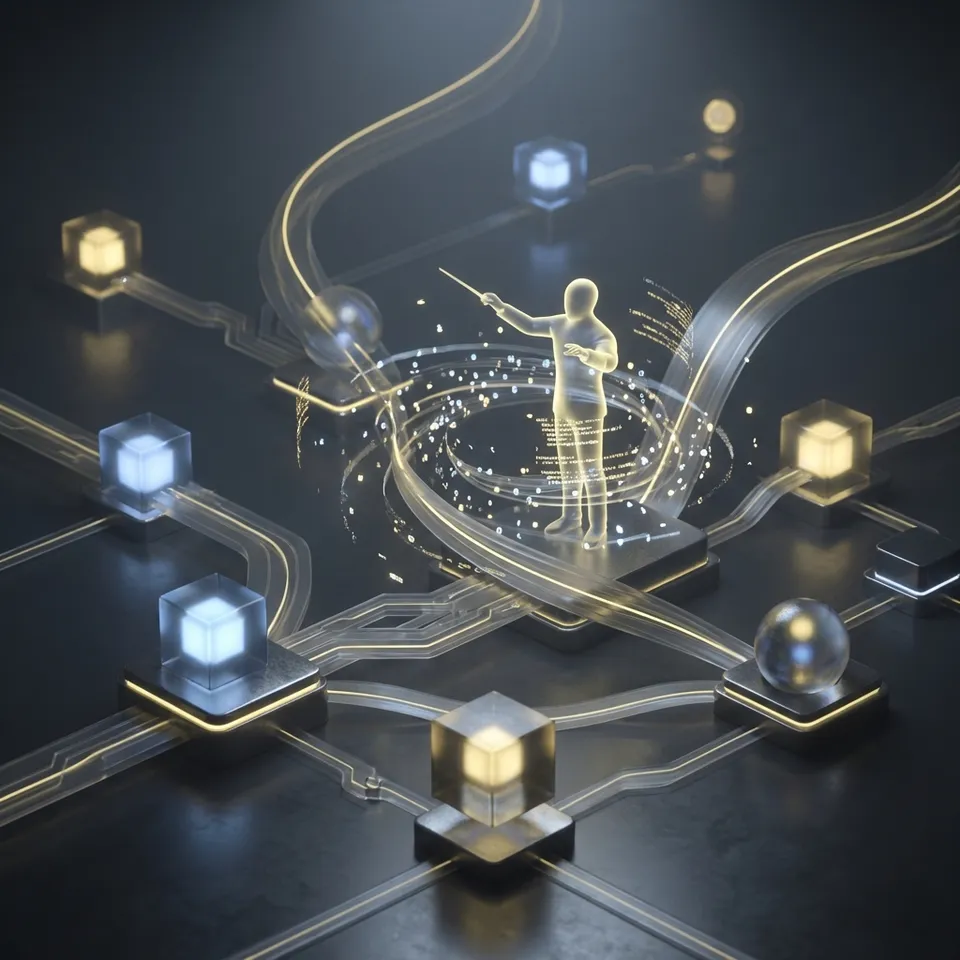

How to Orchestrate AI Agents with Argo Workflows

Argo Workflows provides a Kubernetes-native way to orchestrate multiple AI agents as directed acyclic graphs (DAGs) or sequences of steps. Each agent runs as a containerized step, handling tasks like data processing, LLM inference, or tool calls. This approach scales to thousands of concurrent agents and works alongside persistent storage solutions. You get full visibility through the Argo UI and REST API.

What Are Argo Workflows for AI Agents?

Argo Workflows automates complex agent pipelines on Kubernetes. It runs each agent as a container in a workflow defined by YAML. Agents can chain together, pass artifacts between steps, or branch based on conditions.

Kubernetes schedules these containers across your cluster. You define dependencies with DAGs. For AI agents, one step might ingest data, the next call an LLM, and a third generate reports. The system handles retries, timeouts, and parallelism.
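To make the dependency idea concrete, here is a minimal Python sketch of how a DAG determines which agents can run in parallel. It is an illustration only, not Argo's scheduler: the task names are hypothetical, and Argo actually starts each task as soon as its own dependencies finish rather than waiting for a whole group.

```python
from graphlib import TopologicalSorter

# Hypothetical agent tasks, each mapped to the tasks it depends on.
dependencies = {
    "ingest": set(),
    "call-llm": {"ingest"},
    "fetch-context": {"ingest"},
    "report": {"call-llm", "fetch-context"},
}


def execution_waves(deps):
    """Group tasks into waves; every task in a wave has all dependencies met."""
    sorter = TopologicalSorter(deps)
    sorter.prepare()
    waves = []
    while sorter.is_active():
        ready = sorted(sorter.get_ready())  # tasks that could run in parallel
        waves.append(ready)
        sorter.done(*ready)
    return waves


waves = execution_waves(dependencies)
for number, wave in enumerate(waves, start=1):
    print(f"wave {number}: {', '.join(wave)}")
```

Tasks that land in the same wave have no dependency on each other, which is why a workflow engine can schedule them on separate pods at the same time.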

This setup fits agentic systems where tasks split across specialized agents. A research agent pulls data, an analysis agent processes it, and a visualization agent outputs charts. Argo tracks progress and logs everything centrally.


Why Use Argo Workflows to Orchestrate AI Agents?

Argo Workflows is one of the most widely used Kubernetes-native workflow engines. It handles container-native jobs without legacy overhead. Developers define pipelines in YAML, using the same conventions as Kubernetes manifests.

Teams choose Argo for its scalability. It supports thousands of concurrent workflows on standard clusters. Built-in features like loops, conditionals, and cron scheduling cover most agent orchestration needs.

According to Argo documentation, over 200 organizations use it for production workloads. The UI provides real-time visualization of agent progress, logs, and artifacts. The REST API allows programmatic control from other tools.
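As a rough sketch of programmatic control, the Python snippet below builds (but does not send) an HTTP request that would ask the Argo Server to create a workflow. The server address, namespace, token, and image name are assumptions for illustration; check the endpoint path and authentication mode against the API reference for your Argo version.

```python
import json
import urllib.request


def build_submit_request(server, namespace, manifest, token):
    """Build a POST request that asks the Argo Server to create a workflow."""
    url = f"{server.rstrip('/')}/api/v1/workflows/{namespace}"
    body = json.dumps({"workflow": manifest}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",
            "Content-Type": "application/json",
        },
    )


# Minimal single-step workflow; the image name is a placeholder.
manifest = {
    "apiVersion": "argoproj.io/v1alpha1",
    "kind": "Workflow",
    "metadata": {"generateName": "ai-agent-"},
    "spec": {
        "entrypoint": "main",
        "templates": [
            {"name": "main", "container": {"image": "your-agent-image:v1"}}
        ],
    },
}

# Assumed values: a port-forwarded server on localhost and a placeholder token.
request = build_submit_request("https://localhost:2746", "argo", manifest, "<token>")
print(request.get_method(), request.full_url)
# To actually submit, pass the request to urllib.request.urlopen(request).
```

Keeping request construction separate from sending makes the call easy to inspect and test before pointing it at a real cluster.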

Key Benefits for Agent Pipelines

Parallel execution speeds up independent agents. Artifact passing shares large files or results between steps. Retry logic keeps pipelines resilient to LLM timeouts or API failures.
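A retry policy for a flaky LLM step could be sketched as follows. The helper with_retries is hypothetical; it attaches Argo's retryStrategy and activeDeadlineSeconds fields to a template expressed as a Python dict. The limits and delays are illustrative values, not recommendations.

```python
import copy
import json


def with_retries(template, limit=3, initial_delay="10s", factor=2, timeout_seconds=300):
    """Return a copy of a template dict with retry and timeout settings added."""
    patched = copy.deepcopy(template)  # leave the caller's template untouched
    patched["retryStrategy"] = {
        "limit": limit,                # maximum number of retries
        "retryPolicy": "OnFailure",    # retry when the container exits non-zero
        "backoff": {"duration": initial_delay, "factor": factor},
    }
    patched["activeDeadlineSeconds"] = timeout_seconds  # per-attempt time limit
    return patched


llm_step = {
    "name": "agent-analyze",
    "container": {
        "image": "your-agent-image:v1",
        "command": ["python", "analyze.py"],
    },
}

resilient_step = with_retries(llm_step, limit=5)
print(json.dumps(resilient_step, indent=2))
```

The printed JSON can be pasted into a workflow manifest, since Argo accepts JSON as well as YAML.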

Setting Up Argo Workflows on Kubernetes

Install Argo with Helm or manifests. With Helm, add the repo and install the chart:

    helm repo add argo https://argoproj.github.io/argo-helm
    helm install argo argo/argo-workflows -n argo --create-namespace

Create a service account and role bindings for workflow execution. Port-forward the UI with kubectl port-forward svc/argo-server -n argo <port>:<port>, then access it at localhost:<port>. The Argo server listens on port 2746 by default.

Submit your first workflow with argo submit --watch examples/hello-world.yaml. This runs a simple container step.

Scale Your AI Agents with Persistent Storage?

Fastio gives agents 50GB free storage, 5,000 credits/month, and 19 consolidated tools. Build reactive workflows with webhooks and RAG. Built for agent pipelines running on Argo Workflows.

Example: AI Agent Workflow YAML

Here is a basic workflow for orchestrating AI agents with Argo. It chains three agents: data loader, analyzer, and summarizer.

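    apiVersion: argoproj.io/v1alpha1
    kind: Workflow
    metadata:
      generateName: ai-agent-pipeline-
    spec:
      entrypoint: main
      templates:
        - name: main
          dag:
            tasks:
              - name: load-data
                template: agent-load
              - name: analyze
                template: agent-analyze
                depends: load-data
                # Wire the loader's output artifact into the analyzer's input.
                arguments:
                  artifacts:
                    - name: raw-data
                      from: "{{tasks.load-data.outputs.artifacts.raw-data}}"
              - name: summarize
                template: agent-summarize
                depends: analyze
                # Wire the analyzer's output artifact into the summarizer's input.
                arguments:
                  artifacts:
                    - name: analysis
                      from: "{{tasks.analyze.outputs.artifacts.analysis}}"
        - name: agent-load
          container:
            image: your-agent-image:v1
            command: [python, load_data.py]
          outputs:
            artifacts:
              - name: raw-data
                path: /output/data.json
        - name: agent-analyze
          inputs:
            artifacts:
              - name: raw-data
                path: /input/data.json
          container:
            image: your-agent-image:v1
            command: [python, analyze.py]
          outputs:
            artifacts:
              - name: analysis
                path: /output/analysis.json
        - name: agent-summarize
          inputs:
            artifacts:
              - name: analysis
                path: /input/analysis.json
          container:
            image: your-agent-image:v1
            command: [python, summarize.py]

Each agent uses the same image with different scripts. Each task's arguments map the previous agent's output artifact to its own input, so results flow through the DAG as artifacts. This requires an artifact repository (for example, an S3-compatible bucket) configured for the cluster. Submit with argo submit pipeline.yaml.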
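The article does not show the agent scripts themselves, so the following is only a guess at what a loader like load_data.py might contain. The function names and sample record are hypothetical. The one thing that matters to Argo is that the script writes its result to the path declared as the output artifact (/output/data.json in the workflow above).

```python
import json
import tempfile
from pathlib import Path


def load_records():
    """Placeholder for the real data source (API call, database query, ...)."""
    return [{"id": 1, "text": "example document"}]


def write_artifact(records, output_path):
    """Write records as JSON to the path Argo collects as an output artifact."""
    path = Path(output_path)
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(records, indent=2), encoding="utf-8")
    return path


# Inside the container this would be write_artifact(load_records(), "/output/data.json").
# A temporary directory is used here so the sketch runs anywhere.
with tempfile.TemporaryDirectory() as tmp:
    written = write_artifact(load_records(), Path(tmp) / "output" / "data.json")
    round_trip = json.loads(written.read_text(encoding="utf-8"))

print(round_trip)
```

The analyzer and summarizer would follow the same pattern in reverse: read JSON from their /input path, do their work, and write to their own /output path.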

Integrating Agent Storage in Argo Workflows

Agent workflows need persistent storage for inputs, outputs, and state. Argo supports S3-compatible artifacts, but for AI agents, use services with MCP tools.

Fastio offers free storage for agents, with MCP tools available over HTTP/SSE. Containers in Argo steps call the Fastio API to upload and download files. Enable Intelligence Mode for RAG on stored data.

For example, pass the Fastio API key to the container as an environment variable, then call methods such as mcp.list_files() and mcp.upload_file() from the agent script. Webhooks notify agents of changes, which supports reactive workflows. File locks prevent conflicts when multiple agents touch the same files.

This fills the gap where other orchestrators lack agent-native storage. Ownership transfer lets agents build workspaces and hand off to humans.

Scaling Argo Workflows for Thousands of AI Agents

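Argo handles massive scale with parallelism limits and node selectors. Set parallelism: <n> in the workflow spec to cap how many pods run at once; the right value depends on your cluster capacity. Use an HA controller setup for high availability.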

Best practices include pod tolerations for spot instances and resource quotas per workflow. Metrics export to Prometheus tracks agent throughput.
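One way to apply these settings consistently is to patch them onto a workflow manifest before submitting it. The helper below is a hypothetical sketch: parallelism, nodeSelector, and tolerations are fields of the workflow spec, but the node label, the taint key, and the limit of 50 are made-up example values.

```python
import copy
import json


def apply_scaling_settings(workflow, max_parallel_pods, node_selector=None, tolerations=None):
    """Return a copy of a workflow manifest with scaling-related spec fields set."""
    patched = copy.deepcopy(workflow)
    spec = patched.setdefault("spec", {})
    spec["parallelism"] = max_parallel_pods  # cap on pods running at once
    if node_selector:
        spec["nodeSelector"] = dict(node_selector)
    if tolerations:
        spec["tolerations"] = list(tolerations)
    return patched


base = {
    "apiVersion": "argoproj.io/v1alpha1",
    "kind": "Workflow",
    "metadata": {"generateName": "ai-agent-pipeline-"},
    "spec": {"entrypoint": "main"},
}

scaled = apply_scaling_settings(
    base,
    max_parallel_pods=50,
    node_selector={"node-pool": "spot"},  # hypothetical node label
    tolerations=[{"key": "spot", "operator": "Exists", "effect": "NoSchedule"}],
)
print(json.dumps(scaled["spec"], indent=2))
```

Centralizing these settings in one function keeps every submitted pipeline within the same limits instead of relying on each manifest author to remember them.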

Production users run thousands of concurrent jobs for ML pipelines. Combine with Kubernetes autoscaling for dynamic capacity.

Capture these lessons in a shared runbook so new contributors can follow the same process. Consistency reduces regression risk and makes troubleshooting faster.

Argo Workflows vs Other AI Agent Orchestrators

Argo excels in complex agent DAGs. Knative suits stateless functions. Argo CD handles deployments post-workflow.

Define clear tool contracts and fallback behavior so agents fail safely when dependencies are unavailable. This improves reliability in production workflows.

Frequently Asked Questions

What are Argo workflows for AI agents?

Argo Workflows runs AI agents as container steps on Kubernetes. Define pipelines in YAML with dependencies, retries, and artifact passing.

How does Argo Workflows compare with other AI agent tools?

Argo offers Kubernetes-native scaling and UI not found in LangChain or CrewAI. It works alongside agent storage like Fastio for production.

How do you integrate storage in Argo AI agent workflows?

Use artifact repos like S3 or MCP-compatible storage. Fastio provides multiple tools for agents to manage files directly from workflow steps.

Can Argo handle thousands of concurrent AI agents?

Yes, with parallelism controls and Kubernetes autoscaling. The Argo documentation covers best practices for running at large scale.

What is an example Argo workflow for agents?

See the YAML above chaining load-analyze-summarize agents. Adapt scripts to your LLM/tools.

Does Argo support cron for agent schedules?

Yes, CronWorkflows run pipelines on schedule. Combine with webhooks for event-driven triggers.
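As a rough sketch, a CronWorkflow wraps an ordinary workflow spec with a schedule. The helper, resource name, and hourly schedule below are illustrative assumptions, and field names can differ between Argo versions (newer releases also accept a list of schedules), so check the CronWorkflow reference for the version you run.

```python
import json


def make_cron_workflow(name, schedule, workflow_spec, timezone="UTC"):
    """Build a CronWorkflow manifest that runs workflow_spec on a cron schedule."""
    return {
        "apiVersion": "argoproj.io/v1alpha1",
        "kind": "CronWorkflow",
        "metadata": {"name": name},
        "spec": {
            "schedule": schedule,           # standard five-field cron expression
            "timezone": timezone,
            "concurrencyPolicy": "Forbid",  # skip a run if the previous one is still going
            "workflowSpec": workflow_spec,
        },
    }


agent_spec = {
    "entrypoint": "main",
    "templates": [
        {"name": "main", "container": {"image": "your-agent-image:v1"}}
    ],
}

hourly = make_cron_workflow("ai-agent-hourly", "0 * * * *", agent_spec)
print(json.dumps(hourly, indent=2))
```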
