How to Automate Spreadsheets with AI Agents

Manual data entry takes up nearly 30% of the work week. AI agents take over these repetitive tasks by reading, updating, and creating spreadsheets autonomously. Unlike Excel plugins that require your active attention, these agents process thousands of rows in the background. This guide shows you how to build a fully automated pipeline where agents pull files from storage, transform the data, and deliver clean reports.

What Is AI Agent Spreadsheet Automation?

AI agent spreadsheet automation uses Large Language Models (LLMs) to read, analyze, transform, and generate spreadsheet data without human intervention. Traditional macros require strict formatting, but AI agents interpret context and meaning. An agent that sees a "Quarterly Total" label can infer that it represents revenue, even if the cell is in a different column than expected. This lets agents handle "messy" data, such as invoices with different layouts, logs with missing headers, or free-form user forms, that would break standard Python scripts or Excel formulas.

When you evaluate agent tooling, the features that matter most depend on your specific use case. Rather than chasing the longest feature list, focus on the capabilities that directly affect your daily workflow. A well-executed core feature set beats a bloated platform where nothing works particularly well.

Helpful references: Fastio Workspaces, Fastio Collaboration, and Fastio AI.

Why Move Beyond Excel Plugins?

Tools like Microsoft Copilot and ChatGPT plugins are useful, but they are "assistants," not "agents." They require you to open the application, upload the file, and type a prompt. This works for one-off tasks but fails at scale. Autonomous agents work differently:

- They Work While You Sleep: An agent can wake up in the middle of the night, detect a new sales report, process it, and email you the summary before you wake up.

- They Don't Care About the App: Excel, Google Sheets, or raw CSV, it doesn't matter. Agents look at the raw data, not the software interface.

- Scale: A human with Copilot processes one sheet at a time. An agent can run parallel processes to handle hundreds of files at once. Recent studies show that moving to fully autonomous workflows can cut data processing time by 90% for standard reporting tasks.
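The parallelism described in the last point can be sketched with Python's standard library. This is a minimal illustration, not a description of how any particular agent framework schedules work: `count_rows` is a hypothetical stand-in for the real per-file processing, and the file contents are passed in as strings to keep the example self-contained.

```python
from concurrent.futures import ThreadPoolExecutor
import csv
import io


def count_rows(csv_text: str) -> int:
    """Stand-in for real per-file work: count data rows, excluding the header."""
    reader = csv.reader(io.StringIO(csv_text))
    next(reader, None)  # skip the header row if there is one
    return sum(1 for _ in reader)


def process_all(files: dict[str, str], max_workers: int = 8) -> dict[str, int]:
    """Process every file concurrently and return {filename: row_count}."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order, so results line up with the keys.
        counts = pool.map(count_rows, files.values())
        return dict(zip(files.keys(), counts))


files = {"a.csv": "h1,h2\n1,2\n3,4\n", "b.csv": "h1\n"}
print(process_all(files))
```

Threads suit this kind of work when each task mostly waits on network calls (file downloads, model requests); CPU-heavy transformations would more likely call for processes.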

Comparison: Scripts vs. Plugins vs. Agents

Choosing the right tool is important. Here is how autonomous agents compare to traditional methods.

Prerequisites for Automation

Before building your automation pipeline, make sure you have these components.

- An LLM Provider: Access to a capable model like Claude Sonnet, GPT, or a local Llama model. These models have the logic needed to transform data.

- A Fastio Account: To provide persistent, secure storage that agents can access via API. The free tier offers 50GB of storage and full MCP support.

- MCP Client: An interface to run the agent. This could be the Claude Desktop app, a custom Python script using langchain, or an agent framework like CrewAI.

Once these are ready, you can build a workflow that works like a human data analyst but runs at the speed of software.

Centralize Your Data for Agents

Agents need reliable file access. Uploading files to a chat window works for ad-hoc tasks, but real automation requires a shared file system. Fastio works as this shared drive. You create a workspace (e.g., "Finance-Automation") where your team or other automated systems drop raw files.

- For Teams: Team members drag-and-drop Excel files into the folder.

- For Apps: Use the Fastio API to programmatically upload logs or exports.

- For Cloud Storage: Use Fastio's URL Import to pull files from Google Drive, OneDrive, or Dropbox. This creates a bridge where agents can access cloud files without needing complex OAuth setups for every single service.

By centralizing data, you give the agent a single "Inbox" to monitor.

Give Your AI Agents Persistent Storage

Use autonomous agents to clean, analyze, and report on your data 24/7. Get 50GB of free storage and full MCP access.

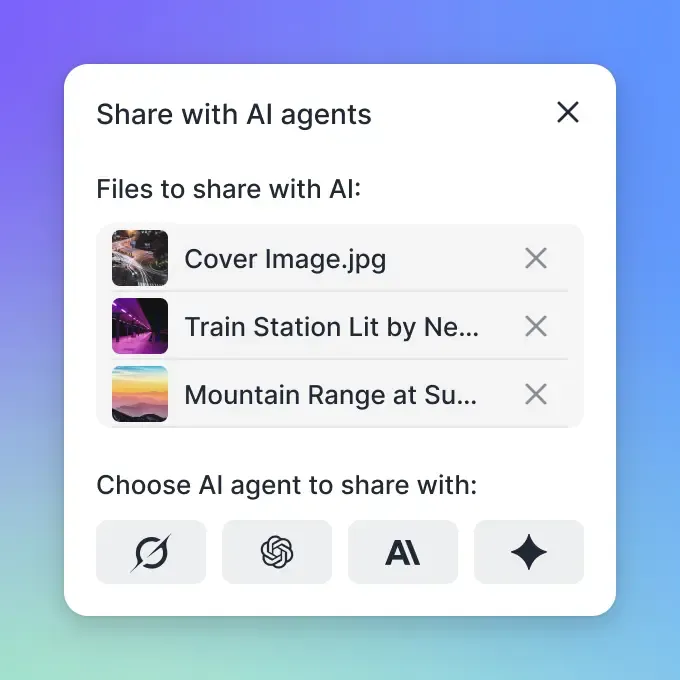

Connect Agents with MCP Tools

The Model Context Protocol (MCP) is the standard for connecting AI models to external systems. For spreadsheet automation, your agent needs tools to "read" the file content and "write" the results. Fastio provides a pre-built MCP server with 19 consolidated tools. Connecting an agent is simple.

For Claude Desktop Users:

You add the Fastio configuration to your claude_desktop_config.json. This gives Claude direct permissions to list files, read their contents, and save new versions.
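As a rough sketch of what that edit involves: Claude Desktop reads MCP servers from an `mcpServers` object in its config file, where each entry names a command and its arguments. The helper below merges one more entry into an existing config without touching the others. The server name, command, and package placeholder are illustrative assumptions only; the real values come from Fastio's own setup instructions.

```python
import json


def add_mcp_server(config: dict, name: str, command: str, args: list[str]) -> dict:
    """Return a copy of a Claude Desktop config with one more MCP server entry."""
    updated = dict(config)
    servers = dict(updated.get("mcpServers", {}))
    servers[name] = {"command": command, "args": list(args)}
    updated["mcpServers"] = servers
    return updated


existing = {"mcpServers": {"other": {"command": "node", "args": ["server.js"]}}}
# "fastio", "npx", and the package placeholder are assumptions, not real values.
merged = add_mcp_server(existing, "fastio", "npx", ["<fastio-mcp-package>"])
print(json.dumps(merged, indent=2))
```

Working on a copy keeps the original config intact, which makes it easy to diff the result before writing it back to disk.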

Key MCP Tools for Spreadsheets:

- filesystem_list_files: Allows the agent to scan the "Inbox" folder for new work.

- filesystem_read_file: Enables the agent to ingest CSV or JSON data.

- filesystem_write_file: Allows the agent to save the cleaned data or generated report.

- search_files: Helps the agent find specific historical data to compare against.

This tool usage turns a chatbot into a worker. The agent doesn't just talk about the data; it interacts with it directly.
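To make the list, read, write cycle concrete, here is a small sketch that runs against an in-memory stand-in instead of a real MCP server. The tool names are the ones listed above, but the `call(tool, **params)` interface and the `path` and `content` parameter names are assumptions made for illustration; a real MCP client and the actual tool schemas may look different.

```python
class FakeToolClient:
    """In-memory stand-in for an MCP tool client (hypothetical interface)."""

    def __init__(self, files: dict[str, str]):
        self.files = dict(files)

    def call(self, tool: str, **params):
        if tool == "filesystem_list_files":
            prefix = params["path"]
            return sorted(p for p in self.files if p.startswith(prefix))
        if tool == "filesystem_read_file":
            return self.files[params["path"]]
        if tool == "filesystem_write_file":
            self.files[params["path"]] = params["content"]
            return True
        raise ValueError(f"unknown tool: {tool}")


def process_inbox(client, inbox: str, outbox: str, transform) -> list[str]:
    """List the inbox, transform each file, and write results to the outbox."""
    written = []
    for path in client.call("filesystem_list_files", path=inbox):
        content = client.call("filesystem_read_file", path=path)
        target = outbox + path[len(inbox):]
        client.call("filesystem_write_file", path=target, content=transform(content))
        written.append(target)
    return written


client = FakeToolClient({"inbox/a.csv": "x", "inbox/b.csv": "y", "other/c.csv": "z"})
print(process_inbox(client, "inbox/", "outbox/", str.upper))
```

In a real agent the `transform` step is where the model does its work; here it is just a function so the loop can be run and tested locally.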

Define the Transformation Workflow

With access and tools ready, you define the logic. Unlike with Python scripts, you don't need to write code for every condition. Instead, you provide a "System Prompt" that tells the agent what to do.

Example Workflow:

- Trigger: Agent checks /finance/invoices/raw/ every hour.

- Read: Reads the file and identifies columns for "Date," "Vendor," and "Amount."

- Normalize: Normalizes dates to ISO format (YYYY-MM-DD).

- Categorize: Categorizes vendors (e.g., mapping "Uber" and "Lyft" to "Travel").

- Calculate: Calculates tax if missing.

- Output: Saves a clean version to /finance/reports/processed/.

Because the agent uses an LLM, it handles edge cases, like a typo in "Restraunt" or a missing header row, that would typically cause a script to crash.
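The deterministic parts of this workflow (normalize, categorize, calculate) can also be written as plain code, which is handy for spot-checking what the agent produces. The sketch below is one possible version under stated assumptions: the accepted date formats, the 8% tax rate, the "Tax" column name, and the "Review" fallback for unknown vendors are all illustrative choices, not part of any Fastio or agent API.

```python
from datetime import datetime

DATE_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d.%m.%Y")  # assumed input formats
VENDOR_CATEGORIES = {"uber": "Travel", "lyft": "Travel"}  # mapping from the example
TAX_RATE = 0.08  # hypothetical rate, for illustration only


def normalize_date(raw: str) -> str:
    """Convert a date in any accepted format to ISO 8601 (YYYY-MM-DD)."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unrecognized date: {raw!r}")


def clean_row(row: dict) -> dict:
    """Normalize the date, categorize the vendor, and fill in missing tax."""
    vendor = row["Vendor"].strip()
    amount = float(row["Amount"])
    tax = row.get("Tax")
    return {
        "Date": normalize_date(row["Date"]),
        "Vendor": vendor,
        "Category": VENDOR_CATEGORIES.get(vendor.lower(), "Review"),
        "Amount": amount,
        "Tax": float(tax) if tax not in (None, "") else round(amount * TAX_RATE, 2),
    }


print(clean_row({"Date": "03/15/2024", "Vendor": " Uber ", "Amount": "20.00"}))
```

A rule-based pass like this fails loudly on anything it does not recognize, which is exactly the gap the LLM is meant to cover; running both and comparing the results is one way to catch agent mistakes.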

Sample Prompt Instruction:

"You are a Data Processing Agent. Your job is to read any CSV found in the Input folder. For each row, classify the expense category based on the vendor name. If the category is unclear, mark it as 'Review'. Save the result as a new file in the Output folder with the suffix '_processed'."
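Two details in this prompt are worth enforcing in code rather than trusting the model to follow them: the '_processed' suffix and the 'Review' fallback. The sketch below shows one way to do that. The allowed category list is a hypothetical example, and both function names are made up for illustration.

```python
from pathlib import PurePosixPath

ALLOWED = {"Travel", "Meals", "Software", "Review"}  # hypothetical category list


def processed_name(path: str) -> str:
    """Build the output filename, placing '_processed' before the extension."""
    p = PurePosixPath(path)
    return f"{p.stem}_processed{p.suffix}"


def safe_category(model_answer: str) -> str:
    """Accept the model's label only if it is on the allowed list."""
    label = model_answer.strip().title()
    return label if label in ALLOWED else "Review"


print(processed_name("Input/jan.csv"))
print(safe_category(" travel "))
print(safe_category("Entertainment?"))
```

Guarding the model's answer this way means an unexpected label degrades to 'Review' instead of introducing a new, unplanned category into the report.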

Handling Large Datasets

A common challenge with LLMs is the context window limit. You cannot paste a massive spreadsheet into a prompt. To handle large datasets, you must implement a "chunking" strategy.

- Stream Reading: The agent (or the script controlling it) reads the file in blocks, for example, a few hundred rows at a time.

- Batch Processing: The agent processes this block, maintaining a running summary or appending rows to a temporary file.

- Aggregation: Once all blocks are processed, the agent combines the results.

Fastio's infrastructure supports this by allowing efficient random access to files. An agent can read the first block, process it, and then request the next block, ensuring it never exceeds its token limit.
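The chunking strategy above can be sketched with the standard `csv` module. This version reads from a local file handle rather than through Fastio, and the "Amount" column and the chunk size of 200 are assumptions chosen for illustration. The point is the shape of the loop: read a block, process it, keep only a running result.

```python
import csv
import io
from itertools import islice
from typing import Iterator


def read_chunks(handle, chunk_size: int = 200) -> Iterator[list[dict]]:
    """Yield lists of at most chunk_size rows from an open CSV file."""
    reader = csv.DictReader(handle)
    while True:
        chunk = list(islice(reader, chunk_size))
        if not chunk:
            return
        yield chunk


def total_amount(handle, chunk_size: int = 200) -> float:
    """Aggregate across chunks while holding only one chunk in memory."""
    total = 0.0
    for chunk in read_chunks(handle, chunk_size):
        # In a real pipeline, each chunk would be sent to the model here.
        total += sum(float(row["Amount"]) for row in chunk)
    return total


data = "Amount\n" + "\n".join(str(i) for i in range(1, 6)) + "\n"
print([len(c) for c in read_chunks(io.StringIO(data), chunk_size=2)])
print(total_amount(io.StringIO(data), chunk_size=2))
```

Because each chunk is a list of dictionaries keyed by the header row, every block carries its column names with it, so the model never sees rows without context.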

Real-World Use Case: Automated Lead Qualification

Imagine a marketing team that gets a daily export of hundreds of new leads. Manually checking every company website to qualify them is slow and expensive. With an AI agent, this process happens instantly:

- The agent detects the new leads_daily.csv file.

- It iterates through the rows.

- For each domain, it uses a browser tool (like the agent-browser skill or Fastio's OpenClaw integration) to visit the company homepage.

- It extracts key data: Industry, Employee Count, and Value Proposition.

- It scores the lead on a scale based on the Ideal Customer Profile (ICP).

- It appends these new columns to the spreadsheet and saves it to the "Sales Ready" folder.

This happens entirely in the background. The sales team starts their day with a prioritized list of high-value targets, rather than a raw dump of names.
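The scoring step is the easiest part of this pipeline to pin down in code. The sketch below assumes the browser step has already extracted industry and employee count, and it scores each lead against a made-up ICP. The industries, size range, and 60/40 weighting are hypothetical; a real profile would come from the sales team.

```python
from dataclasses import dataclass


@dataclass
class Lead:
    domain: str
    industry: str
    employee_count: int


# Hypothetical Ideal Customer Profile, for illustration only.
ICP_INDUSTRIES = {"software", "fintech"}
ICP_MIN_EMPLOYEES = 50
ICP_MAX_EMPLOYEES = 1000


def score_lead(lead: Lead) -> int:
    """Score 0-100: 60 points for industry fit, 40 for company size fit."""
    score = 0
    if lead.industry.lower() in ICP_INDUSTRIES:
        score += 60
    if ICP_MIN_EMPLOYEES <= lead.employee_count <= ICP_MAX_EMPLOYEES:
        score += 40
    return score


def prioritize(leads: list[Lead]) -> list[Lead]:
    """Return leads sorted from highest to lowest score."""
    return sorted(leads, key=score_lead, reverse=True)


leads = [
    Lead("c.example", "Retail", 5),
    Lead("a.example", "Software", 200),
    Lead("b.example", "Retail", 200),
]
print([(lead.domain, score_lead(lead)) for lead in prioritize(leads)])
```

Keeping the scoring rule explicit, rather than asking the model for a number, makes the ranking reproducible and easy for the sales team to audit.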

Best Practices for Reliable Automation

To keep your agent running smoothly, follow these tips.

Validation is Key

Even smart agents hallucinate. Always ask the agent to generate a "Validation Report" alongside the data. This report should flag any rows where the agent was unsure (e.g., "Row N: Vendor name ambiguous").
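A validation report can also be produced by a simple check that runs after the agent finishes. The following sketch assumes each processed row is a dictionary with "Vendor", "Category", and "Amount" keys and that uncertain rows were labeled "Review"; those column names and the message wording are illustrative assumptions.

```python
def validation_report(rows: list[dict]) -> list[str]:
    """Return one message per problem found, with 1-based row numbers."""
    issues = []
    for number, row in enumerate(rows, start=1):
        vendor = (row.get("Vendor") or "").strip()
        if not vendor:
            issues.append(f"Row {number}: Vendor name missing")
        elif row.get("Category") == "Review":
            issues.append(f"Row {number}: Vendor name ambiguous")
        try:
            float(row.get("Amount"))
        except (TypeError, ValueError):
            issues.append(f"Row {number}: Amount is not a number")
    return issues


rows = [
    {"Vendor": "Uber", "Category": "Travel", "Amount": "12.5"},
    {"Vendor": "Acme", "Category": "Review", "Amount": "abc"},
    {"Vendor": "", "Category": "", "Amount": "3"},
]
for line in validation_report(rows):
    print(line)
```

An empty report is a useful signal on its own: it lets a scheduler decide whether a file can go straight to the output folder or needs a human to look at it first.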

Use Versioning

Never overwrite the original file. Always save the processed version as a new file. Fastio keeps a full version history, but explicit naming conventions (e.g., raw_original.csv, cleaned_final.csv) prevent data loss.
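One way to make "never overwrite" a property of the code rather than a habit is to always write to a fresh, numbered filename. The sketch below does this on a local directory; the `_vN` naming scheme and both function names are illustrative assumptions and are independent of Fastio's built-in version history.

```python
from pathlib import Path
import tempfile


def next_version_path(directory: Path, stem: str, suffix: str = ".csv") -> Path:
    """Return the first '<stem>_vN<suffix>' path that does not exist yet."""
    version = 1
    while (directory / f"{stem}_v{version}{suffix}").exists():
        version += 1
    return directory / f"{stem}_v{version}{suffix}"


def save_new_version(directory: Path, stem: str, content: str) -> Path:
    """Write content to a fresh versioned file; never overwrite an existing one."""
    target = next_version_path(directory, stem)
    # Mode "x" raises FileExistsError if the file appeared after the check.
    with open(target, "x", encoding="utf-8") as handle:
        handle.write(content)
    return target


with tempfile.TemporaryDirectory() as tmp:
    folder = Path(tmp)
    first = save_new_version(folder, "cleaned", "a,b\n1,2\n")
    second = save_new_version(folder, "cleaned", "a,b\n3,4\n")
    print(first.name, second.name)
```

Opening the file in exclusive-create mode is the important detail: even if two agents race for the same name, one of them gets an error instead of silently replacing the other's output.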

Audit Logs

Keep a record of what the agent did. Fastio automatically logs every file access and modification. If a file is corrupted, you can check the audit log to see exactly when the agent touched it and revert the changes if necessary.

Frequently Asked Questions

Can AI agents edit Excel files directly?

Yes, agents with the right tools can handle .xlsx files. However, for complex automation, converting data to CSV or JSON formats is often faster and less prone to parsing errors.

Is my spreadsheet data secure with AI agents?

It depends on the platform. Fastio agents operate in a secure sandbox, and all files are encrypted at rest and in transit. Our Intelligence Mode indexes data for the agent's use but never trains public models on your private financial data.

How do I handle files larger than the context window?

Agents use pagination or 'chunking.' They read the file in small blocks (e.g., a few hundred rows), process each block independently, and append the results to a new file. This allows them to handle datasets of any size.

Do I need to know Python to automate spreadsheets?

No. While Python is useful, modern agent frameworks allow you to use natural language. You can instruct the agent to 'calculate the average of column B' without writing a single line of code.

What happens if the agent makes a mistake?

Fastio keeps a full version history. If an agent deletes data or makes an incorrect edit, you can instantly revert the file to its previous state via the dashboard or API.
