How to Build an AI Agent Deep Research Workflow

A deep research workflow is an agentic pipeline that decomposes complex questions, searches multiple sources, synthesizes findings, and delivers structured research reports. This guide walks through the architecture, the reasoning loop, and the pieces most tutorials skip: storage, versioning, and handing the final artifact to a human who can actually use it.

What a Deep Research Workflow Actually Is

A deep research workflow is not a single prompt, and it is not a RAG lookup. It is a loop of planning, retrieval, reading, and writing that runs long enough to produce something a human would otherwise spend half a day on.

The pattern went mainstream when Google and OpenAI launched consumer "deep research" modes in late 2024 and early 2025, and by early 2026 open-source implementations had caught up. LangChain published its deepagents pattern on the official docs in March 2026, and Databricks shipped a parallel reference architecture the same quarter. Both converge on the same shape: a planner, a set of search and browse tools, a scratchpad or memory store, and a writer that emits a final document with citations.

The useful mental model: a junior analyst with unlimited patience but a terrible memory. You give them a brief. They go look things up. They come back with a draft. You review it. What makes the agent version interesting is that the analyst can run ten of these in parallel, at three in the morning, and charge you in tokens instead of hours.

Deep research queries average 3 to 5 times more tool calls than standard agent tasks, which is why most naive implementations fall over. The reasoning loop is the easy part. The hard part is what happens to the twenty PDFs, forty web pages, and six intermediate summaries the agent produces along the way.

What to Check Before Scaling an AI Agent Deep Research Workflow

Every deep research agent, regardless of framework, compresses to five stages: decompose, search, store, synthesize, deliver. Skip any one and the output degrades in a predictable way.

1. Decompose

The agent takes the user's question and breaks it into sub-questions. "What is the competitive landscape for vector databases in 2026?" becomes a list: who are the vendors, what are their pricing models, what differentiates them, what does adoption look like, what are the recent funding events. This planning step is usually a single LLM call with a structured output schema, and the plan itself should be written to the scratchpad so the agent can refer back to it as context windows fill.

A good decomposition has 5 to 15 sub-questions. Fewer and the agent goes shallow. More and it loses the thread by the time it gets to synthesis.
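As a minimal sketch of the planning step's output handling, the snippet below parses a planner's JSON and enforces the 5 to 15 range. The `ResearchPlan` type, the `parse_plan` helper, and the JSON field names are assumptions made for illustration, not part of any framework named in this guide.

```python
import json
from dataclasses import dataclass, field


@dataclass
class ResearchPlan:
    question: str
    sub_questions: list[str] = field(default_factory=list)


def parse_plan(raw_json: str, min_items: int = 5, max_items: int = 15) -> ResearchPlan:
    """Parse the planner's JSON output and enforce the sub-question range."""
    data = json.loads(raw_json)
    # Strip whitespace and drop empty or duplicate sub-questions, keeping order.
    seen: set[str] = set()
    cleaned: list[str] = []
    for item in data["sub_questions"]:
        text = item.strip()
        if text and text.lower() not in seen:
            seen.add(text.lower())
            cleaned.append(text)
    if not min_items <= len(cleaned) <= max_items:
        raise ValueError(
            f"expected {min_items}-{max_items} sub-questions, got {len(cleaned)}"
        )
    return ResearchPlan(question=data["question"].strip(), sub_questions=cleaned)
```

Rejecting an out-of-range plan early is cheaper than discovering a shallow or sprawling plan at synthesis time; a caller could catch the `ValueError` and ask the planner to try again.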

2. Search

Each sub-question fans out to one or more tool calls. In practice this means a web search API (Tavily, Exa, Brave, SerpAPI), a browser tool for reading pages the search snippet doesn't cover, and optionally domain-specific sources like arXiv, GitHub, or internal document stores. A sub-agent pattern works well here: spawn a worker per sub-question, let each run its own mini-loop of search and read until it has enough to answer, then return a compact summary.

This is where the 3 to 5x tool call multiplier shows up. A ten-sub-question brief with three searches and two page reads per sub-question is 50 tool calls before the agent has written a word.
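The fan-out and its tool-call arithmetic can be sketched with the standard library alone. Here `search` and `read_page` are hypothetical callables standing in for whatever search API and browser tool you use, and the fixed counts of three searches and two reads simply mirror the example above; a real worker would decide when it has enough.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

SearchFn = Callable[[str], list[str]]   # query -> list of URLs (assumed shape)
ReadFn = Callable[[str], str]           # URL -> page text (assumed shape)


def research_sub_question(sub_question: str, search: SearchFn, read_page: ReadFn,
                          searches: int = 3, reads: int = 2) -> dict:
    """One worker's mini-loop: search, read a few pages, return a compact summary."""
    calls = 0
    urls: list[str] = []
    for i in range(searches):
        urls.extend(search(f"{sub_question} (query {i + 1})"))
        calls += 1
    notes: list[str] = []
    for url in urls[:reads]:
        notes.append(read_page(url))
        calls += 1
    return {
        "sub_question": sub_question,
        "sources": urls[:reads],
        "notes": notes,
        "tool_calls": calls,
    }


def fan_out(sub_questions: list[str], search: SearchFn, read_page: ReadFn,
            max_workers: int = 4) -> list[dict]:
    """Run one worker per sub-question; results come back in input order."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        return list(
            pool.map(lambda q: research_sub_question(q, search, read_page), sub_questions)
        )
```

With ten sub-questions, summing `tool_calls` across the results gives 10 × (3 + 2) = 50, which is the figure quoted above.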

3. Store

Every intermediate artifact (raw search results, fetched page contents, sub-agent summaries) gets written somewhere durable. Most tutorials put this in a Python dict or a local SQLite file and move on. That works for a demo and fails the moment you want to rerun, audit, or hand the research to a teammate.
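One way to make intermediate artifacts durable and auditable, sketched here on local disk, is to store each blob under its content hash and append a record to a per-run manifest. The directory layout, the `store_artifact` name, and the manifest fields are all assumptions for illustration; a hosted store would replace the file writes.

```python
import hashlib
import json
import time
from pathlib import Path


def store_artifact(root: Path, run_id: str, kind: str, content: bytes,
                   source: str = "") -> dict:
    """Write content under its SHA-256 digest and log it in the run's manifest."""
    digest = hashlib.sha256(content).hexdigest()
    kind_dir = root / run_id / kind
    kind_dir.mkdir(parents=True, exist_ok=True)
    path = kind_dir / digest
    # Content-addressed: identical bytes are written once and never modified.
    if not path.exists():
        path.write_bytes(content)
    record = {
        "run_id": run_id,
        "kind": kind,            # e.g. "evidence", "summary", "plan"
        "sha256": digest,
        "source": source,        # URL or tool that produced the content
        "bytes": len(content),
        "stored_at": time.time(),
    }
    # Append-only manifest: one JSON object per line, in write order.
    with (root / run_id / "manifest.jsonl").open("a", encoding="utf-8") as manifest:
        manifest.write(json.dumps(record) + "\n")
    return record
```

Because the manifest is append-only and keyed by run, a later rerun or audit can list exactly what was fetched without replaying the agent.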

4. Synthesize

The writer stage reads the sub-agent summaries and the original plan, then drafts a structured report. The output shape matters more than people assume. A report with sections, a bibliography, and inline citations is ten times more useful than a wall of prose, because the human reviewing it can verify claims without rerunning the whole pipeline.
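To make the output shape concrete, here is a small sketch of a renderer that refuses uncited claims and numbers sources in order of first use. The input structure (sections as lists of claim and source-ID pairs) and the `render_report` name are assumptions; in practice the writer model would produce this structure, not hand-written code.

```python
def render_report(title: str, summary: str,
                  sections: list[tuple[str, list[tuple[str, list[str]]]]],
                  sources: dict[str, str]) -> str:
    """Render sections with inline [n] citations and a numbered source list."""
    numbering: dict[str, int] = {}
    lines = [title, "", summary, ""]
    for heading, claims in sections:
        lines.extend([heading, ""])
        for claim, source_ids in claims:
            if not source_ids:
                raise ValueError(f"uncited claim: {claim!r}")
            marks = []
            for source_id in source_ids:
                if source_id not in sources:
                    raise KeyError(f"unknown source: {source_id}")
                # Sources are numbered by first appearance in the report.
                number = numbering.setdefault(source_id, len(numbering) + 1)
                marks.append(f"[{number}]")
            lines.append(f"{claim} {''.join(marks)}")
        lines.append("")
    lines.extend(["Sources", ""])
    lines.extend(f"[{n}] {sources[sid]}" for sid, n in numbering.items())
    return "\n".join(lines)
```

Failing on an uncited claim at render time keeps the "verify without rerunning" property from silently eroding.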

5. Deliver

Someone has to receive the output. If it lands in a chat message, it gets lost. If it lands in a file in an agent's temp directory, it gets lost when the container recycles. Delivery means putting the report somewhere a human can find it, comment on it, and compare it to last week's version.

The Reasoning Loop in Practice

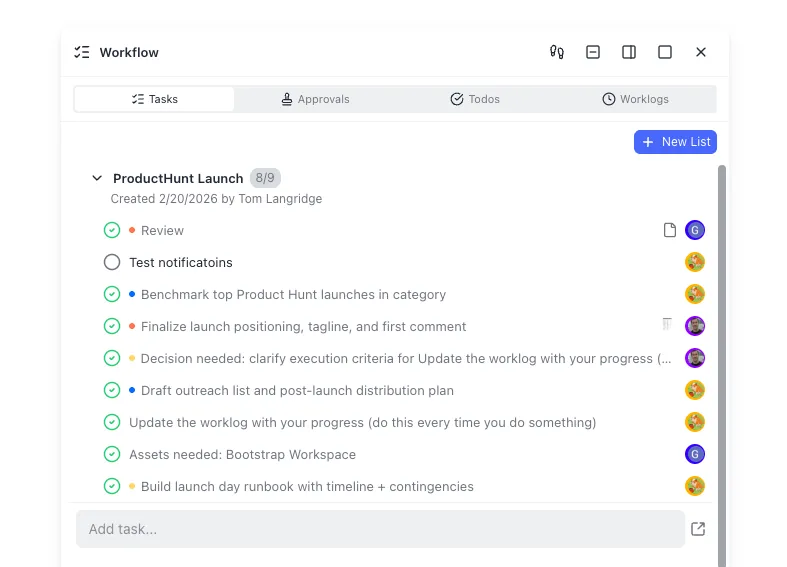

The inner loop inside search and synthesize is what most engineers associate with "the agent." It is a planner-executor pattern: the model decides the next action, a tool runs, the result goes back into context, the model decides again.

LangChain's deep research agent guide lays this out with a create_deep_agent helper that wires planning, sub-agents, and a virtual filesystem together. Databricks's Mosaic AI Agent Framework uses a similar structure with Unity Catalog functions for tools. You can build the same thing by hand with the Anthropic or OpenAI tool use APIs in about 200 lines of Python.

Three things make the loop work at scale:

- A scratchpad the agent can read and write. Without one, the agent either runs out of context or loses track of what it has already done. Frameworks implement this as a virtual filesystem with read, write, and ls tools. The point is that the agent persists thinking across turns rather than trying to hold everything in the active context window.

- Sub-agents with narrow scope. A top-level agent with access to a dozen tools behaves worse than a top-level agent that spawns specialized sub-agents with three tools each. The narrower context keeps each agent on task. Anthropic's public writing on multi-agent research reports similar results.

- Explicit stop conditions. Budgets on tool calls, time, or tokens. Without them, deep research agents will happily burn a thousand searches producing marginal returns. A hard cap of 50 searches and a soft cap that triggers early synthesis at 30 is a reasonable starting point.
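The scratchpad and the stop conditions can be sketched together in a few dozen lines. Everything named here (`Scratchpad`, `Budget`, `run_loop`, the `decide` callback standing in for the model's next-action call) is hypothetical and in-memory; it illustrates the shape of the loop, not any framework's API.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional

Action = tuple[str, str]  # (tool name, argument)


@dataclass
class Scratchpad:
    """In-memory stand-in for a virtual filesystem with read, write, and ls."""
    files: dict[str, str] = field(default_factory=dict)

    def write(self, path: str, text: str) -> None:
        self.files[path] = text

    def read(self, path: str) -> str:
        return self.files[path]

    def ls(self) -> list[str]:
        return sorted(self.files)


@dataclass
class Budget:
    soft_cap: int = 30   # reaching this triggers early synthesis
    hard_cap: int = 50   # never exceeded, whichever agent is spending
    used: int = 0

    def spend(self) -> None:
        if self.used >= self.hard_cap:
            raise RuntimeError("tool-call budget exhausted")
        self.used += 1


def run_loop(decide: Callable[[Scratchpad], Optional[Action]],
             tools: dict[str, Callable[[str], str]],
             pad: Scratchpad, budget: Budget) -> str:
    """Planner-executor loop: decide, run a tool, persist the result, repeat."""
    while budget.used < budget.soft_cap:
        action = decide(pad)
        if action is None:          # the planner judged the evidence sufficient
            return "done"
        tool_name, argument = action
        budget.spend()
        result = tools[tool_name](argument)
        # Persist every result so later turns can re-read it from the pad.
        pad.write(f"notes/{budget.used:03d}-{tool_name}.txt", result)
    return "soft_cap"               # caller should move on to synthesis
```

The soft cap ends the loop gracefully, while the hard cap lives inside `spend()` so that sub-agents sharing the same `Budget` object cannot overshoot it either.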

When to Use a Graph Instead of a Loop

For workflows where the stages are fixed (decompose, search, write) and you want observability per step, a graph framework like LangGraph or the Vercel AI SDK's workflow primitives will beat a free-form loop. For workflows where the agent needs to decide mid-run to go back and search more, a loop wins. Most production deep research systems end up as a small graph with a loop inside the "research" node.
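The "small graph with a loop inside" shape can be illustrated in plain Python without a graph framework. The three stage callbacks below (`decompose`, `research_step`, `write`) are hypothetical stand-ins for model and tool calls; the trace list is there only to show where per-step observability would hook in.

```python
from typing import Callable, Optional


def run_pipeline(question: str,
                 decompose: Callable[[str], list[str]],
                 research_step: Callable[[str, int], Optional[str]],
                 write: Callable[[str, list[str], dict[str, str]], str],
                 max_rounds: int = 3) -> tuple[str, list[str]]:
    """Fixed outer stages (decompose, research, write) with a loop in research."""
    trace: list[str] = []

    plan = decompose(question)
    trace.append("decompose")

    findings: dict[str, str] = {}
    # Research node: keep going back for unanswered sub-questions, up to a cap.
    for round_no in range(1, max_rounds + 1):
        open_items = [q for q in plan if q not in findings]
        if not open_items:
            break
        for sub_question in open_items:
            answer = research_step(sub_question, round_no)
            if answer is not None:
                findings[sub_question] = answer
        trace.append(f"research:{round_no}")

    report = write(question, plan, findings)
    trace.append("write")
    return report, trace
```

The outer sequence never changes, which keeps it easy to log and test, while the inner loop gives the agent room to retry a sub-question that came back empty.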

Give your research agent a real workspace

Fast.io gives research agents 50GB of free storage, versioned artifacts, audit trails, and one-click handoff to a human reviewer. No credit card, no trial period. Connect via the Fast.io MCP server and start shipping research. Built for agent deep research workflows.

Storage and Versioning: The Part Tutorials Skip

Existing guides obsess over the reasoning loop and treat storage as a one-line pickle.dump. That breaks the moment you try to use the output for anything serious. The research is the artifact. Treat it that way.

What Actually Needs to Persist

A production deep research workflow produces at least five kinds of artifact, each with different retention and access needs:

- The brief: the original question plus any parameters the user supplied.

- The plan: the decomposed sub-questions, usually as JSON.

- Raw evidence: fetched pages, PDFs, screenshots, API responses. Large, immutable, useful for audit.

- Intermediate summaries: sub-agent outputs before final synthesis. Useful for debugging when the final report is wrong.

- The final report: the thing you actually hand to a human.

Stuffing all of this in an S3 bucket works until someone asks "what sources did we use for the claim on page three of last month's report?" At that point you need structured storage with search, versioning, and something resembling a permissions model.

Options for the Storage Layer

Local disk is fine for development. For anything beyond a single developer on a single machine, you have options. A self-hosted setup on S3 plus Postgres gives you full control and a weekend of wiring. Google Drive or Dropbox via their APIs work if your team already lives there, with the caveat that neither indexes content semantically out of the box.

Fast.io is a cloud workspace designed for this exact shape of problem. Agents upload artifacts over the API or the Fast.io MCP server, files are versioned automatically, and Intelligence Mode indexes everything for semantic search. When the final report needs to go to a human, the agent creates a branded share and transfers ownership of the workspace. The free agent plan gives 50GB of storage and 5,000 credits per month with no credit card, which covers a surprising amount of research volume before you think about paying for anything.

The specific pieces that matter for a deep research workflow:

- File versioning so rerunning the research on the same brief produces v2 of the report rather than overwriting v1.

- Audit trails on every read and write, so the "what sources did we use" question has an answer that is not "grep the logs."

- Semantic search across prior research so the agent can notice when it has already investigated a related question.

- Webhooks to trigger downstream workflows (notify a Slack channel, kick off a review, update a dashboard) when a new report lands.
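The versioning requirement is simple to state in code. The sketch below shows the behavior on local disk only: a rerun writes the next numbered version instead of overwriting. The `save_versioned` helper and the `name.vN.md` naming scheme are assumptions for illustration and say nothing about how any hosted service implements versioning.

```python
import re
from pathlib import Path


def save_versioned(directory: Path, stem: str, text: str, suffix: str = ".md") -> Path:
    """Write text as the next numbered version of stem, never overwriting."""
    directory.mkdir(parents=True, exist_ok=True)
    pattern = re.compile(rf"^{re.escape(stem)}\.v(\d+){re.escape(suffix)}$")
    # Collect the version numbers already present for this stem.
    versions = [
        int(match.group(1))
        for path in directory.iterdir()
        if (match := pattern.match(path.name))
    ]
    target = directory / f"{stem}.v{max(versions, default=0) + 1}{suffix}"
    target.write_text(text, encoding="utf-8")
    return target
```

Calling it twice with the same stem yields `report.v1.md` and then `report.v2.md`, so last week's report is still there to compare against.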

Handoff to Humans

A research report that nobody reads is worse than no report at all, because it ate compute and created false confidence that the question was answered. Handoff is a design problem, not an afterthought.

The practical pattern: the agent finishes the pipeline, writes the final report plus all supporting artifacts to a workspace, and sends a single notification with a link. The human opens the link, reads the report, clicks through to the evidence for any claim they want to verify, and leaves comments if they want a revision.

Fast.io's ownership transfer is built for this. An agent signs up (no credit card, agent plan), builds the workspace, uploads the report and evidence, creates a branded share, and transfers ownership to the human recipient. The agent keeps admin access for follow-up runs. The human gets a clean workspace without ever seeing the agent's plumbing.

What to Include in the Handoff

- The final report as a markdown or PDF document, with section headings a scanning reader can skim.

- Inline citations for every claim, linked to the exact page or paragraph in the evidence folder.

- A one-paragraph executive summary at the top. Half your readers will read only this.

- The original brief and the plan, so a reviewer can tell whether the agent actually answered the question that was asked.

- A changelog if this is a rerun, so the reviewer can see what changed since last time.
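For the changelog item, a line-level diff between the previous and current report is often enough as a first pass. This sketch uses the standard library's `difflib`; the `changelog` function name and the choice to keep only added and removed lines are assumptions, and a real changelog would likely summarize rather than list raw lines.

```python
import difflib


def changelog(previous: str, current: str) -> list[str]:
    """Return added ('+') and removed ('-') lines between two report versions."""
    diff = list(
        difflib.unified_diff(
            previous.splitlines(),
            current.splitlines(),
            fromfile="previous",
            tofile="current",
            lineterm="",
        )
    )
    # The first two lines are the '---' and '+++' file headers; skip them,
    # then keep only changed lines (hunk markers and context are dropped).
    return [line for line in diff[2:] if line.startswith(("+", "-"))]
```

An empty result means the rerun produced an identical report, which is itself worth telling the reviewer.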

What to Leave Out

Debug logs, chain-of-thought traces, raw tool call histories. Keep them in the workspace for audit, but do not put them in the report. A reviewer with a specific question about "why did the agent search for X" can dig in. A reviewer skimming the summary does not need the model's internal monologue.

Closing the Feedback Loop

The best deep research systems learn from review comments. When a human flags a claim as wrong or a section as weak, capture that as structured feedback and feed it into the next run's plan. Over time the agent gets better at the questions your team actually cares about. This is less a framework feature and more a habit: every rejected report is a training signal if you write it down.
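"Write it down" can be as light as a small record type plus a function that turns review comments into hints for the next planning call. The `ReviewComment` fields, the three verdict values, and the hint wording below are all hypothetical; the point is only that feedback becomes structured input rather than a chat message.

```python
from dataclasses import dataclass


@dataclass
class ReviewComment:
    section: str
    verdict: str   # assumed vocabulary: "wrong", "weak", or "ok"
    note: str


def feedback_to_plan_hints(comments: list[ReviewComment]) -> list[str]:
    """Convert reviewer verdicts into instructions for the next run's planner."""
    hints: list[str] = []
    for comment in comments:
        if comment.verdict == "wrong":
            hints.append(
                f"Re-verify '{comment.section}' against primary sources: {comment.note}"
            )
        elif comment.verdict == "weak":
            hints.append(
                f"Gather more evidence for '{comment.section}': {comment.note}"
            )
        # "ok" comments carry no action and are skipped.
    return hints
```

The resulting hints could be appended to the brief before decomposition, so the next plan spends its sub-questions where reviewers pushed back.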

Putting It Together: A Reference Architecture

A minimal production deep research stack in 2026 looks roughly like this:

- Orchestration: LangGraph, the Vercel AI SDK, or a plain Python loop. Choice matters less than having explicit stages.

- Model: Claude Opus 4.6 or GPT-4.1 for planning and synthesis, a cheaper model (Haiku, GPT-4.1-mini) for sub-agent summarization. The cost delta is real.

- Search tools: Tavily or Exa for general web, plus one or two domain-specific tools (arXiv, GitHub, your internal docs).

- Browser tool: Playwright or a hosted equivalent for pages the search API does not cover.

- Scratchpad: a virtual filesystem the agent can read and write during the run.

- Artifact store: Fast.io or an equivalent workspace for versioned, audited, shareable storage of the final outputs.

- Delivery: workspace share plus a webhook to Slack, email, or your task tracker.

Wire it up, give it a hard budget on tool calls, and point it at a real question. The first run will be mediocre. The second will be better. By the tenth you will have a workflow that produces research artifacts your team treats as real work product rather than demo output.

A Sample API Flow

For the storage and handoff half of the pipeline, the Fast.io MCP server exposes the operations the agent needs. Connect an MCP client to /storage-for-agents/ (Streamable HTTP) or the legacy SSE endpoint at /storage-for-agents/, then authenticate. From there the agent can create a workspace, upload artifacts, enable Intelligence for semantic search, generate a share link, and transfer ownership, all as tool calls. The full tool reference lives at mcp.fast.io/skill.md.

The point of going through an MCP server rather than raw HTTP is that the same tools work across Claude, Cursor, Codex, and any other MCP-compatible client. Your research agent can move between LLM providers without rewriting the storage layer.

Frequently Asked Questions

How do deep research agents work?

A deep research agent decomposes a question into sub-questions, runs search and browse tools against each one, stores the results as it goes, and then synthesizes a final report with citations. The loop typically spans 30 to 100 tool calls and 5 to 15 minutes of wall time per query.

What tools do AI research agents use?

Most production agents use a web search API (Tavily, Exa, Brave, SerpAPI), a browser tool for fetching full page contents, domain-specific sources like arXiv or GitHub, a scratchpad filesystem for intermediate notes, and an artifact store for final outputs. LLM providers like Anthropic and OpenAI expose the tool-use APIs that wire these together.

How do you store research agent outputs?

Store the brief, the plan, raw evidence, intermediate summaries, and the final report as separate artifacts. Local disk works for development. For production, use versioned storage with audit trails and semantic search, such as Fast.io, Google Drive, or a self-hosted S3-plus-Postgres setup. The key is that the final report should be linkable, reviewable, and auditable months after the run.

How many tool calls does a deep research workflow use?

Deep research queries average 3 to 5 times more tool calls than standard agent tasks. A typical run with 10 sub-questions, 3 searches per question, and 2 page reads per question lands at roughly 50 tool calls before synthesis. Set a hard budget to prevent runaway runs.

What is the difference between RAG and a deep research workflow?

RAG retrieves a few chunks from a prebuilt index and feeds them into a single generation call. A deep research workflow plans a multi-step investigation, runs tools iteratively, and produces a long-form document. RAG is a primitive; deep research is a workflow that often uses RAG as one of several tools.

Can one agent hand research artifacts to a human for review?

Yes. The agent writes the report and supporting evidence to a workspace, creates a shareable link, and notifies the human. On Fast.io the agent can transfer ownership of the workspace so the human becomes the admin while the agent retains access for follow-up runs.
