How to Index Video Archives for Retrieval-Augmented Generation (RAG)

Indexing your video archive turns old recordings into searchable facts for AI. When you pull out transcripts and visual cues with timestamps, AI agents can use your video library as a source of truth. Most company knowledge stays hidden in unrecorded meetings or raw footage; indexing makes that data useful for the first time.

Why Company Video Archives Stay Unused

Most company video archives are just a pile of files collecting digital dust. Thousands of hours of meetings and training sessions sit in storage because finding a specific moment is too hard. Standard search tools only look at file names or manual tags. If an expert explains a fix in a long recording, finding that five-minute clip usually means sitting through the entire video.

This gap hurts productivity. Employees often redo work or have new meetings because they cannot find information recorded months ago. AI agents have it even worse. Without a way to "watch" these videos, they are stuck with text documents and miss the visual context and verbal cues found in demonstrations.

Indexing changes this by making video searchable. When AI can search video scenes, accuracy improves by 35% because the system has the full context of what was shown, not just what was written in a summary.

Helpful references: Fastio Workspaces, Fastio Collaboration, and Fastio AI.

Multimodal Extraction: Beyond Simple Transcription

To index video for RAG, you have to look at more than just speech-to-text. A reliable pipeline uses multiple layers of data. The first is the audio transcript, which converts dialogue into text. While this tells you what was said, it often misses what was actually happening on screen.

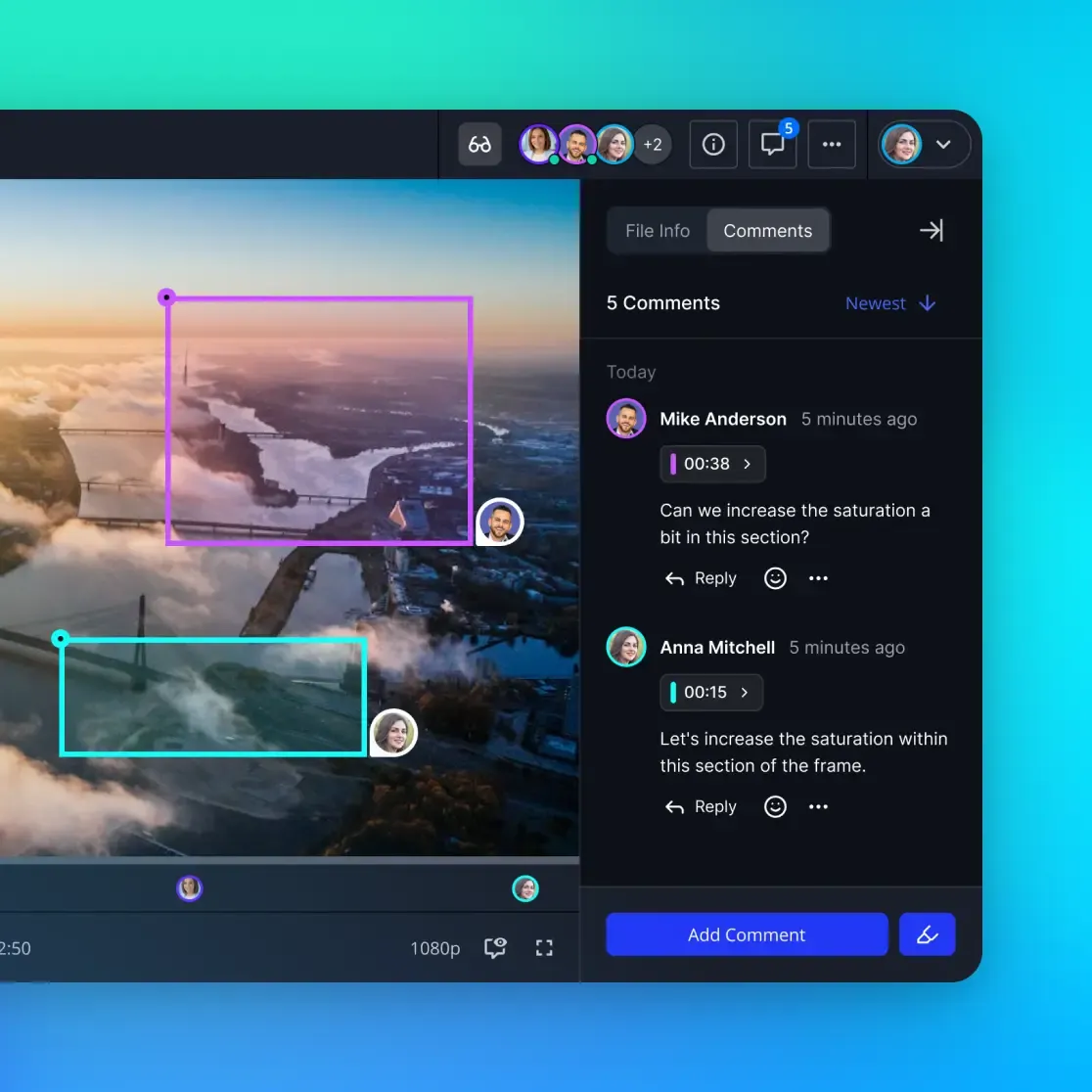

Visual analysis is the second layer. This involves identifying objects, text on screen, and specific actions. For technical tutorials, visual context is often more important than the audio. If a presenter says "click here" while pointing at a button, a text-only system will fail. Visual indexing captures these actions and links them to the timeline.

Finally, temporal metadata maps every word and visual cue to a specific millisecond. This allows the AI to provide timestamped links. It can answer a question and then show you the exact moment in the video where the answer appears. This precision is what makes video RAG useful for professional work.

Unlock Your Video Archive Today

Stop letting your video files sit unused. Start using Fastio’s free tier with 50GB of storage and built-in indexing to get your video RAG project moving. Built for video archive indexing rag workflows.

Strategic Semantic Chunking for Video RAG

Chunking is how you break long videos into pieces for the AI. Splitting a video every multiple seconds is easy to do but usually leads to poor results. It often cuts off speakers mid-sentence or splits a visual scene in half, leaving the AI with fragmented info that is hard to understand.

Semantic chunking is a smarter approach that finds natural breaks in the footage. It looks for scene changes, new speakers, or shifts in the topic. If the screen switches from a slide deck to a live demo, the system knows to start a new chunk right there.

In a recorded project meeting, this ensures "Introductions" stay separate from "Project Goals." When an AI retrieves a chunk, it gets a complete, clear idea. This strategy fits more info into the AI's memory while making sure every segment is relevant to the user's query.

Architecture Deep Dive: From File to Vector Store

A video RAG pipeline moves data from a raw file to a searchable index through a few stages. It starts with Ingestion. Fastio makes this easier with URL Import, letting you pull archives directly from Google Drive or AWS S3. This avoids the headache of local storage and high bandwidth costs.

Next is Processing. This is where the extraction happens. Models create a space where a text description like "installing a motherboard" is mathematically close to a video clip showing that action. This means users can search using normal language and find visual matches instantly.

Finally, the data goes into a Vector Store. Each chunk is stored as a vector, which is just a list of numbers representing its meaning. When a user asks a question, the AI finds the closest matches in the database. Because the vector represents the meaning of the video, the search results are much more accurate than simple keyword matching.

Use Cases: How Teams Use AI-Indexed Video

Once your archive is indexed, you can do more than just search. One common use is automated summaries. An AI agent can watch hours of product demos and generate a single report on new features. This saves time for managers who would otherwise have to watch every recording.

Another use is expert support. Instead of bugging a colleague about a fix, a developer can ask an AI agent connected to the company archive. The agent finds the specific meeting where the bug was discussed and explains the solution, even providing the video clip as proof.

In creative fields, indexing is used for content discovery. Editors can search their whole library for "happy people in a park" and get results in seconds. This removes the need for manual tagging, so creators spend more time editing and less time hunting through old footage.

Addressing Implementation Challenges and Limitations

Video indexing is powerful but has some hurdles. Poor audio quality is the most common. Background noise or quiet speakers can lead to bad transcripts. To fix this, you need high-quality models with noise reduction. If the transcript is wrong, the AI will give the wrong answers.

Lighting and camera angles also matter. If a video is too dark or shaky, object detection might fail. It helps to set clear standards for video quality before starting a big project. In some cases, you can use filters to sharpen the video so the AI can read text on screen more easily.

Then there is the cost. Indexing thousands of hours of video takes a lot of compute power. Using a platform like Fastio can lower these costs by using efficient pipelines. This lets teams scale up without managing their own expensive servers.

Cost and Scalability for Enterprise Video RAG

Scaling a video RAG system requires a clear view of the costs. Expenses usually show up in three areas: storage, compute, and API fees. Video files are huge, and a single hour of footage can take up several gigabytes. Efficient storage management is a priority for any long-term project.

Compute costs come from processing. Running visual analysis and vector models is resource-heavy. If you build a custom solution, you will have to pay for high-performance GPUs. Many teams find that the cost of maintaining this tech is too high, so they look for managed services that include indexing.

API usage is the third factor. If you call external AI models for every video chunk, costs add up fast. An integrated workspace with built-in RAG features helps keep things under control. Fastio handles indexing as part of the workspace, which makes pricing more predictable for growing teams.

Evidence and Benchmarks for Video Indexing

The move toward smart video indexing is backed by data. Research shows that 80% of company knowledge is trapped in unstructured formats like video and audio. This is a huge resource for teams that want to use AI to work faster.

Performance tests show that RAG systems using semantic video indexing are 35% more accurate than those using basic metadata. This is because the AI gets the full context of a scene, which stops it from making things up when it doesn't have enough info.

Gartner reports that the demand for analyzing unstructured data is growing fast. By setting up a RAG-based indexing strategy now, companies can future-proof their systems. This ensures their AI agents have access to the best information for making decisions.

Implementing Video RAG with Fastio and MCP

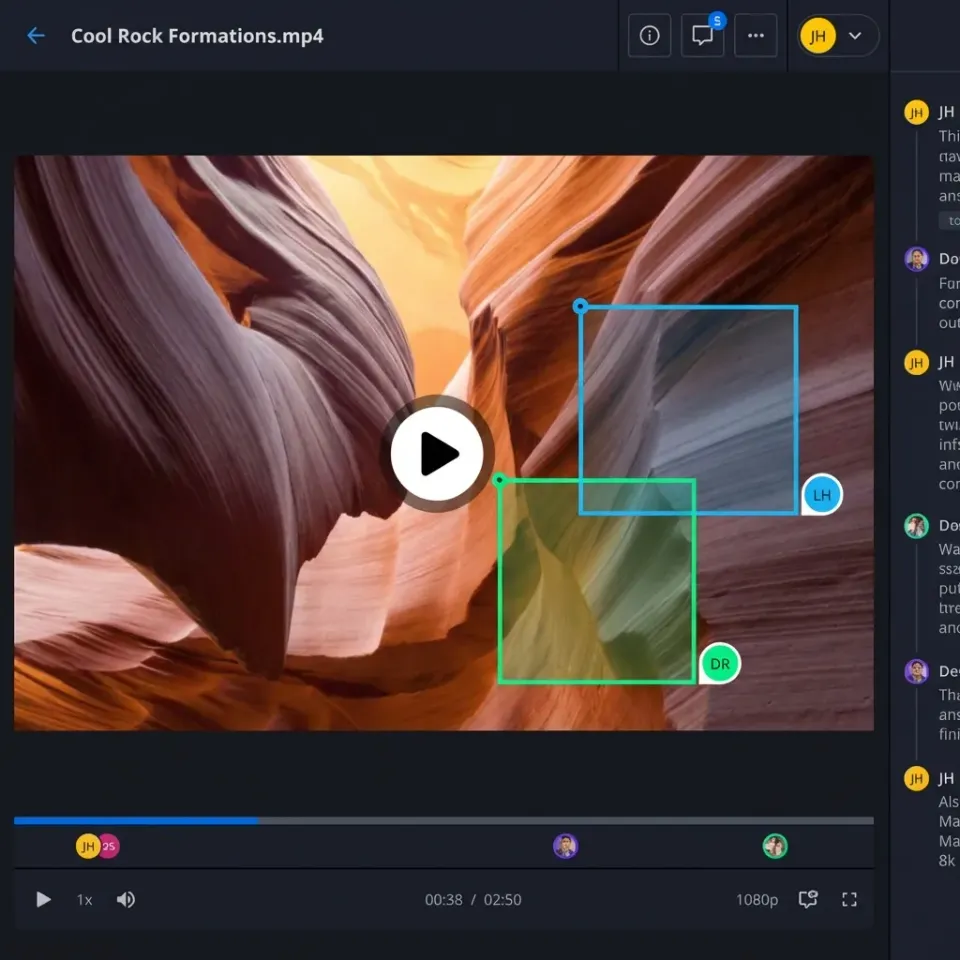

Setting up a video RAG pipeline is usually complex. Fastio simplifies this with its native Intelligence Mode and support for the Model Context Protocol (MCP). When you turn on Intelligence Mode, Fastio automatically indexes your media files for you.

Using the Fastio MCP server, your AI agents can interact with your video archive using specialized tools. These tools let agents search for specific scenes or dialogue using natural language. They can also pull transcripts for specific timestamps and generate answers based on several videos at once.

This setup removes the need to maintain a separate database or build extraction pipelines. Fastio handles the storage, indexing, and retrieval. Whether you are using Claude, GPT-multiple, or a local model via OpenClaw, your agents have a direct connection to your video knowledge.

Frequently Asked Questions

How do you index video for RAG?

You pull out the audio transcripts and visual details, then break them into segments. These pieces are turned into vector data using embedding models and stored in a database. This lets an AI search for what happens in the video instead of just looking at the file title.

Can AI agents search through video archives?

Yes, AI agents can search video archives if the content is indexed using RAG. The agent searches a database to find relevant segments, reviews the metadata, and gives an answer with links to the exact timestamps in the video.

What is the best chunking strategy for video?

Semantic chunking is the best way to index video. It uses scene changes, speaker transitions, and topic shifts to create segments that contain a complete idea. This gives the AI much better context than simple time-based splits.

What are the benefits of semantic video indexing?

Semantic indexing lets you search using natural language and makes AI answers multiple% more accurate. It helps teams find exact moments in massive video libraries without manual tagging, unlocking info that was previously hidden.

Does Fastio support video indexing for RAG?

Fastio supports video indexing through its Intelligence Mode. When enabled, uploaded videos are automatically processed and indexed for AI retrieval. This allows AI agents to use video content as a source of truth via the Fastio MCP integration.

What percentage of data is unstructured video?

Industry research shows that 80% of company knowledge is trapped in unstructured formats, and video is growing the fastest. Indexing this data is key for companies that want their AI agents to have access to all the facts.

Can I index video archives from Google Drive?

Yes, you can index video from Google Drive by importing files into a Fastio workspace. Fastio supports URL Import from major cloud providers, making it easy to bring your archives into an environment where they are automatically indexed.

What models are used for video RAG?

Video RAG usually uses a mix of models. Transcription models handle audio, while multimodal embedding models create the link between text and video. Large Language Models then use this context to generate the final answers.

How does Fastio handle temporal metadata?

Fastio maps all extracted info to specific timestamps. This allows the system to provide direct links to the exact second in a video where information occurs, making it easy for users to check the AI's answers for themselves.

Is there a free tier for indexing video?

Fastio has a free tier for agents that includes multiple of storage. This tier lets you set up small-scale video indexing projects to test your workflows before scaling up. There is no credit card required to get started.

Related Resources

Unlock Your Video Archive Today

Stop letting your video files sit unused. Start using Fastio’s free tier with 50GB of storage and built-in indexing to get your video RAG project moving. Built for video archive indexing rag workflows.