Top 10 OpenClaw Tools for Data Scientists

Data scientists spend most of their time on data cleaning and preparation. OpenClaw tools enable autonomous data querying, storage, browser research, API connectivity, and workflow orchestration through specialized agent skills. This list ranks the top OpenClaw tools based on ClawHub popularity, Python compatibility, and real-world use for data workflows.

Why Data Scientists Turn to OpenClaw Tools

Data preparation takes up the bulk of a data scientist's day. Cleaning messy datasets, handling missing values, and transforming data for modeling eat hours. OpenClaw changes this. Agents equipped with ClawHub skills handle routine tasks like querying databases, researching APIs, or organizing data files.

These tools run locally or in workspaces. They work alongside Python libraries and Jupyter for familiar workflows. Data scientists focus on insights while agents do the grunt work. For teams, MCP orchestration coordinates multiple agents on large pipelines.

OpenClaw fills gaps in traditional tools. Pandas lacks autonomy. Jupyter needs manual runs. ClawHub skills bridge that with agentic execution.


How We Ranked These OpenClaw Tools

We evaluated ClawHub skills for data science fit. Key criteria included downloads and stars on ClawHub, Python and Jupyter compatibility, MCP support for ML orchestration, user reviews from data forums, and task coverage for storage, querying, research, and API connectivity.

Tools needed a strong focus on data workflows. Ease of installation mattered too, so zero-config skills ranked higher. We tested each tool for speed and accuracy against real data science scenarios.

Automate Your Data Science Workflows

50GB free storage, 19 MCP tools, ClawHub integration. No credit card for agents.

OpenClaw Tools Comparison Table

1. Fastio — Persistent Dataset Storage

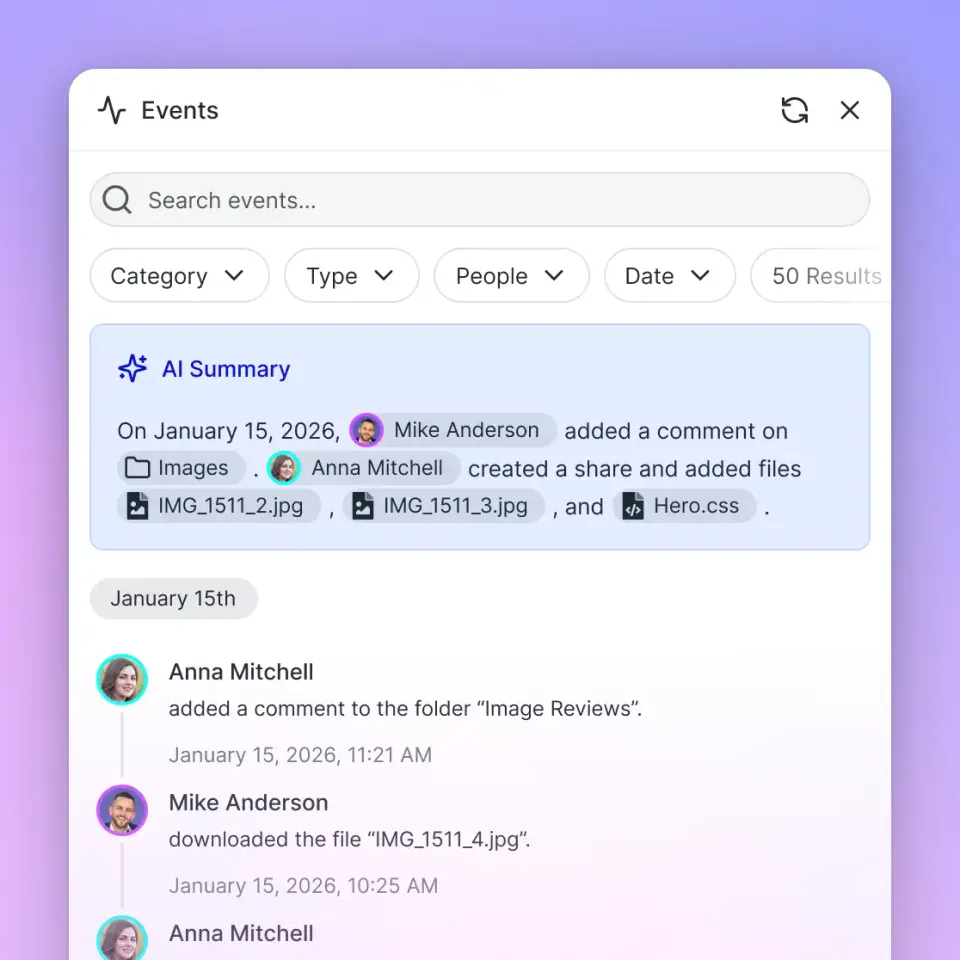

Fastio provides persistent storage for datasets and model artifacts. Install it with `clawhub install dbalve/fast-io` to get 19 consolidated MCP tools for files, RAG, and sharing.

Strengths:

- 50GB free, no credit card

- File versioning and activity tracking

- RAG query across stored datasets and docs

- Ownership transfer for delivering results to stakeholders

- Share types for Send, Receive, or Exchange workflows

Limitations:

- Cloud connection required

- 1GB maximum file size on the free tier

Best for team sharing and long-term dataset persistence. Pricing: Free agent tier.

ClawHub Page: clawhub.ai/dbalve/fast-io

2. SQL Toolkit — Database Queries and Analysis

SQL Toolkit provides command-line patterns for SQLite, PostgreSQL, and MySQL. Agents use it to design schemas, build complex queries, run migrations, and optimize with EXPLAIN analysis.

Strengths:

- SQLite quick-start with zero setup

- Window functions, recursive CTEs, and join patterns

- Migration script templates included

Limitations:

- Instruction-only — requires an existing database connection

Best for exploratory database analysis. Pricing: Free.

ClawHub Page: clawhub.ai/gitgoodordietrying/sql-toolkit
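
To make the window-function and EXPLAIN patterns concrete, here is a minimal sketch using Python's built-in `sqlite3` module. The `sales` table, its columns, and the index name are invented for illustration and are not taken from the skill itself. Window functions also assume SQLite 3.25 or newer.

```python
import sqlite3

# In-memory database: nothing to install or configure.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE sales (region TEXT, month INTEGER, revenue REAL);
INSERT INTO sales VALUES
  ('north', 1, 100.0), ('north', 2, 150.0), ('north', 3, 120.0),
  ('south', 1,  80.0), ('south', 2,  90.0);
CREATE INDEX idx_sales_region ON sales(region);
""")

# Window function: running revenue total per region, ordered by month.
running = conn.execute("""
SELECT region, month,
       SUM(revenue) OVER (PARTITION BY region ORDER BY month) AS running_total
FROM sales
ORDER BY region, month
""").fetchall()

# EXPLAIN QUERY PLAN shows whether the filter can use the index.
plan = conn.execute(
    "EXPLAIN QUERY PLAN SELECT * FROM sales WHERE region = 'north'"
).fetchall()

for row in running:
    print(row)
for row in plan:
    print(row[3])  # the human-readable plan detail column
```

The same queries should carry over to PostgreSQL or MySQL with little change, although the EXPLAIN output format differs between engines.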

3. Playwright — Web Data Extraction

Playwright's MCP skill enables browser automation for OpenClaw agents. Data scientists use it to scrape JavaScript-rendered data sources, pull tables from reporting dashboards, or extract structured data from APIs with web-based authentication flows.

Strengths:

- Full MCP action set: navigate, click, type, screenshot, extract data

- Handles dynamic JS-rendered pages that standard HTTP requests miss

- PDF export for archiving dashboard states

Limitations:

- Requires Node.js and npx

- Heavier resource usage than direct API access

Best for extracting data from web-based sources. Pricing: Free.

ClawHub Page: clawhub.ai/ivangdavila/playwright

Define clear tool contracts and fallback behavior so agents fail safely when dependencies are unavailable. This improves reliability in production workflows.
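
One way to read that advice is sketched below. It is an illustration only, not an OpenClaw or ClawHub API: `ToolResult`, `run_with_fallback`, `fetch_live`, and `fetch_cached` are hypothetical names. The contract is that every tool call returns the same result shape, and a failure in the primary tool falls through to a cheaper fallback instead of crashing the agent.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ToolResult:
    """Uniform contract: callers always get this shape back."""
    ok: bool
    value: Optional[str] = None
    error: Optional[str] = None
    source: str = ""


def run_with_fallback(primary: Callable[..., str],
                      fallback: Callable[..., str],
                      *args) -> ToolResult:
    """Try the primary tool, then the fallback; never raise to the caller."""
    errors = []
    for name, tool in (("primary", primary), ("fallback", fallback)):
        try:
            return ToolResult(ok=True, value=tool(*args), source=name)
        except Exception as exc:  # record the failure and move on
            errors.append(f"{name}: {exc}")
    return ToolResult(ok=False, error="; ".join(errors))


# Hypothetical tools: a live fetch that is down and a cached copy.
def fetch_live(url: str) -> str:
    raise ConnectionError("browser dependency unavailable")


def fetch_cached(url: str) -> str:
    return f"cached copy of {url}"


result = run_with_fallback(fetch_live, fetch_cached, "https://example.com/data")
print(result.source, result.value)
```

Returning a result object rather than raising lets the calling agent decide whether a cached or partial answer is good enough for the current step.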

4. Agent Browser — API Documentation Research

Agent Browser is a fast Rust-based headless browser CLI. Data scientists use it to research API documentation, pull reference examples, and capture page snapshots of data provider documentation.

Strengths:

- Snapshot interactive page elements with structured reference tags

- Screenshot and PDF export for documentation references

- Cookie and session management for authenticated documentation sites

Limitations:

- Requires Node.js runtime

- Not a storage tool — pair with Fastio for file persistence

Best for researching data source APIs and documentation. Pricing: Free.

ClawHub Page: clawhub.ai/TheSethRose/agent-browser


5. Brave Search — Background Research

Brave Search gives OpenClaw agents lightweight web search without a full browser. Data scientists use it to find papers, datasets, methodology references, or check documentation availability before extracting with Playwright.

Strengths:

- Fast headless search with no browser overhead

- Returns titles, links, snippets, and full page content in markdown

- Low resource usage

Limitations:

- Requires a Brave API key

- Up to 10 results per query

Best for rapid background research. Pricing: Free.

ClawHub Page: clawhub.ai/steipete/brave-search
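
For a sense of what a headless search call involves, the sketch below queries the Brave Search web endpoint directly from Python's standard library. It is not the skill's own code. The endpoint, the `X-Subscription-Token` header, and the `web.results` response shape reflect the public Brave Search API as best understood here, and the `BRAVE_API_KEY` variable name is an assumption, so check the current API documentation before relying on any of them.

```python
import json
import os
import urllib.parse
import urllib.request

BRAVE_ENDPOINT = "https://api.search.brave.com/res/v1/web/search"


def build_request(query: str, api_key: str, count: int = 10) -> urllib.request.Request:
    """Build (but do not send) a search request."""
    params = urllib.parse.urlencode({"q": query, "count": count})
    return urllib.request.Request(
        f"{BRAVE_ENDPOINT}?{params}",
        headers={"Accept": "application/json", "X-Subscription-Token": api_key},
    )


def extract_results(payload: dict) -> list:
    """Keep only title, link, and snippet from the assumed response shape."""
    results = payload.get("web", {}).get("results", [])
    return [
        {"title": r.get("title"), "url": r.get("url"), "snippet": r.get("description")}
        for r in results
    ]


def search(query: str) -> list:
    """Send the request; needs network access and a valid API key."""
    api_key = os.environ["BRAVE_API_KEY"]  # assumed variable name
    with urllib.request.urlopen(build_request(query, api_key), timeout=10) as resp:
        return extract_results(json.load(resp))
```

Calling `search("open air quality datasets")` would return a list of small dictionaries that an agent can filter before handing promising URLs to a browser-based skill.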

6. Filesystem Management — Dataset Organization

Filesystem Management gives OpenClaw agents advanced local file operations — smart listing, pattern-based search, full-text content search, and batch file processing. Data scientists use it to organize raw data exports, batch-rename files from ingestion pipelines, and prep directories before uploading to Fastio.

Strengths:

- Filter files by type, pattern, size, and date

- Directory analysis to visualize large data folder structures

- Batch copy with dry-run preview before execution

Limitations:

- Local filesystem only — pair with Fastio for cloud sharing

- Requires Node.js

Best for organizing local data pipelines. Pricing: Free.

ClawHub Page: clawhub.ai/gtrusler/clawdbot-filesystem
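
The dry-run idea is simple to reproduce in plain Python. The sketch below is an illustration with `pathlib`, not the skill's implementation; the `batch_rename` function and the `raw_` prefix are made up for the example. It previews a batch rename first and only touches the disk when `dry_run` is turned off.

```python
import tempfile
from pathlib import Path


def batch_rename(directory, pattern, prefix, dry_run=True):
    """Prefix every file matching `pattern`; return the (old, new) name pairs."""
    plan = []
    # sorted() materializes the listing before any file is renamed.
    for src in sorted(Path(directory).glob(pattern)):
        if not src.is_file():
            continue
        dst = src.with_name(prefix + src.name)
        plan.append((src.name, dst.name))
        if not dry_run:
            src.rename(dst)
    return plan


# Demo on a throwaway directory so no real data is touched.
with tempfile.TemporaryDirectory() as tmp:
    for name in ("a.csv", "b.csv", "notes.txt"):
        (Path(tmp) / name).write_text("x")

    preview = batch_rename(tmp, "*.csv", "raw_")  # dry run: disk unchanged
    after_preview = sorted(p.name for p in Path(tmp).iterdir())

    batch_rename(tmp, "*.csv", "raw_", dry_run=False)  # apply the plan
    after_rename = sorted(p.name for p in Path(tmp).iterdir())

print(preview)
print(after_rename)
```

Defaulting `dry_run` to `True` means a forgotten argument produces a preview rather than an irreversible change.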

7. S3 — Large Dataset Archiving

The S3 skill is a best-practices guide for S3-compatible object storage. Data science teams storing large datasets on AWS S3, Cloudflare R2, or Backblaze B2 benefit from the lifecycle policy and versioning patterns.

Strengths:

- Lifecycle rules to auto-tier infrequent datasets to cheaper storage

- Versioning before deletion — critical for dataset integrity

- Compatible with AWS S3, Cloudflare R2, Backblaze B2, and MinIO

Limitations:

- Instruction-only skill — requires existing S3 credentials

- No automated tooling included

Best for archiving large datasets and model artifacts. Pricing: Free.

ClawHub Page: clawhub.ai/ivangdavila/s3
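
As a rough sketch of the lifecycle and versioning patterns, the code below builds a lifecycle configuration as a plain dictionary and shows how it could be applied with boto3. The rule ID, the `raw/` prefix, and the day counts are illustrative assumptions. `STANDARD_IA` is an AWS storage class, and other S3-compatible providers may support different classes or none at all.

```python
def build_lifecycle_config(prefix: str,
                           archive_after_days: int,
                           expire_old_versions_days: int) -> dict:
    """Tier objects under `prefix` to cheaper storage and expire old versions."""
    return {
        "Rules": [{
            "ID": f"tier-{prefix.strip('/') or 'all'}",
            "Status": "Enabled",
            "Filter": {"Prefix": prefix},
            "Transitions": [
                {"Days": archive_after_days, "StorageClass": "STANDARD_IA"}
            ],
            "NoncurrentVersionExpiration": {
                "NoncurrentDays": expire_old_versions_days
            },
        }]
    }


def apply_to_bucket(bucket: str, config: dict) -> None:
    """Enable versioning first, then attach the lifecycle rules."""
    import boto3  # third-party; needs credentials configured

    s3 = boto3.client("s3")
    s3.put_bucket_versioning(
        Bucket=bucket, VersioningConfiguration={"Status": "Enabled"}
    )
    s3.put_bucket_lifecycle_configuration(
        Bucket=bucket, LifecycleConfiguration=config
    )


# Build and inspect the configuration without touching any bucket.
config = build_lifecycle_config("raw/", archive_after_days=30,
                                expire_old_versions_days=90)
print(config["Rules"][0]["ID"])
```

Keeping the configuration builder separate from the boto3 call makes the rules easy to review or version-control before anything is applied to a real bucket.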

8. Docker Essentials — Reproducible Pipelines

Docker Essentials provides container management guidance for OpenClaw agents. Data science teams running notebooks, training jobs, or data pipelines in containers use this skill to manage images, networks, volumes, and multi-container setups.

Strengths:

- Container lifecycle: run, stop, restart, remove

- Docker Compose for multi-service orchestration

- Multi-stage build patterns for lean pipeline images

Limitations:

- Instruction-only skill — requires Docker CLI installed

- No built-in storage

Best for reproducible model training and pipeline environments. Pricing: Free.

ClawHub Page: clawhub.ai/skills/docker-essentials

9. API Gateway — Data Source Connectivity

API Gateway connects agents to 100+ SaaS APIs — Google Workspace, Airtable, HubSpot, Notion, Salesforce — with managed OAuth. Data scientists use it to pull structured data from SaaS tools without building custom connectors.

Strengths:

- 100+ integrated services with managed OAuth via maton.ai

- Direct native API endpoint access without SDKs

- Multiple connection support with header-based selection

Limitations:

- Requires a MATON_API_KEY environment variable

- Dependent on maton.ai service availability

Best for pulling data from SaaS tools into analysis pipelines. Pricing: Free (skill is MIT-0; maton.ai usage fees may apply).

ClawHub Page: clawhub.ai/byungkyu/api-gateway

10. Clawdbot Docs — Platform Reference

Clawdbot Docs (256 stars, 412 installations) provides documentation navigation and reference for the OpenClaw platform. Data scientists new to the ecosystem use it to get setup guidance, configuration snippets, and troubleshooting help without leaving the agent context.

Strengths:

- Decision-tree navigation for setup, config, troubleshooting, and automation

- Retrieves ready-to-use configuration snippets

- Version tracking and snapshot management

Limitations:

- Focused on Clawdbot platform docs, not general data science references

- Fetches from docs.clawd.bot — requires internet access

Best for onboarding and platform configuration. Pricing: Free.

ClawHub Page: clawhub.ai/NicholasSpisak/clawddocs

Where to Start with These Tools

Begin with Fastio for dataset storage and SQL Toolkit for database querying. Add Playwright or Agent Browser when you need to pull data from web sources. Scale to Docker Essentials and S3 for production-grade reproducible pipelines.

Test in the ClawHub playground first, then stack multiple skills for end-to-end data workflows.

Frequently Asked Questions

Can I use OpenClaw for data science?

Yes. ClawHub skills handle storage, database querying, web data extraction, and API connectivity. Integrate with Jupyter and Python for full workflows.

What are the best AI agent tools for data analysis?

SQL Toolkit and Fastio top the list for database work and persistent storage. Playwright and Agent Browser handle web data extraction.

How does Fastio work with OpenClaw data tools?

Run `clawhub install dbalve/fast-io` to get 19 MCP tools. Store datasets, share results with teammates, and query files with RAG-powered semantic search.

Are these tools free?

Most ClawHub skills are free. Fastio has a free tier with 50GB of storage. The API Gateway skill is free, but maton.ai usage fees may apply.

Are these tools Python compatible?

The instruction-based skills work alongside any language. SQL Toolkit, Docker Essentials, and Playwright pair well with Python-based data pipelines.
