Top File Sharing Tools for AI Workflows in 2026

AI workflows generate 5-10x more intermediate files than traditional automation. Agents pass documents, datasets, and outputs between pipeline stages, often without a human in the loop. Most teams still use file sharing tools built for manual collaboration, and it shows. This guide ranks eight tools by how well they handle agent authentication, API-driven transfers, and pipeline integration.

Why AI Workflows Need Different File Sharing Tools

Traditional file sharing assumes a human is clicking buttons. AI workflows work differently. Agents generate files at 3 AM, pipelines pass outputs between stages without anyone watching, and multi-agent systems need to read each other's results in real time. A 2025 Turing Post survey found that 60% of AI teams call file handoff a top bottleneck. The reasons are predictable:

- No programmatic access. Tools like Dropbox and Google Drive were built around web UIs. Their APIs exist, but they feel like an afterthought.

- Authentication headaches. Most file sharing services expect OAuth flows with browser redirects. Agents running in containers or serverless functions can't open a browser window.

- No persistence between runs. Many workflow tools treat storage as temporary. Files exist during execution, then disappear.

- Manual organization. Human-first tools expect someone to create folders, set permissions, and manage structure. Agents need this handled through code.

The eight tools below solve these problems in different ways. Some are purpose-built for agents. Others are traditional platforms with APIs good enough to get the job done. We scored each on API quality, agent authentication, persistence, collaboration, and fit with automated pipelines.

How We Evaluated These Tools

We tested each tool against five criteria specific to AI workflows:

- API completeness. Can an agent do everything a human can? Upload, download, organize, set permissions, search, and share, all through the API.

- Agent authentication. Does the service support API keys, service accounts, or other headless auth methods? Or does it require browser-based OAuth?

- Persistence. Do files survive between workflow runs? Can agents access outputs from previous sessions?

- Collaboration. Can agents share files with humans and other agents? Are there permission controls?

- Pipeline integration. Does the tool support webhooks, MCP, or other event-driven patterns for triggering downstream steps?

Each tool gets a brief overview, its strengths and limitations, and a recommendation for which AI workflow stage it fits best.
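To make the agent authentication criterion concrete, here is a minimal Python sketch of headless auth: the agent reads a key from its environment and attaches it to a request, with no browser involved. The endpoint URL, the `FILE_API_KEY` variable name, and the Bearer scheme are assumptions for illustration; each service documents its own header and key format.

```python
import os
import urllib.request

# Hypothetical endpoint, used only for illustration.
API_URL = "https://files.example.com/v1/files"


def build_authed_request(url: str, env_var: str = "FILE_API_KEY") -> urllib.request.Request:
    """Build a request authenticated with an API key read from the environment."""
    api_key = os.environ.get(env_var)
    if not api_key:
        # Fail fast: a headless agent cannot fall back to an interactive login.
        raise RuntimeError(f"{env_var} is not set")
    # Assumed scheme: Bearer token in the Authorization header.
    return urllib.request.Request(url, headers={"Authorization": f"Bearer {api_key}"})
```

In a container or serverless function the key would typically come from a secret store mounted as an environment variable, which is why services that only offer browser-based OAuth score lower here.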

The Top File Sharing Tools for AI Workflows

1. Fastio

Fastio is cloud storage built for both humans and AI agents. Agents sign up for their own accounts, create workspaces, upload and download files, and manage permissions through a full REST API. It also has an official MCP server with 19 consolidated tools, so Claude and compatible agents can access files without writing custom integration code.

Strengths:

- Agents are first-class users, not second-class API consumers

- Official MCP server with 19 consolidated tools for native Claude integration

- Persistent workspaces that survive between runs

- Built-in RAG via Intelligence Mode: ask questions about stored files using natural language

- Free agent tier: 50GB storage, 5,000 credits per month, no credit card required

- Human-agent collaboration in the same workspaces

- Ownership transfer: agents can build data rooms and transfer to humans

Limitations:

- Newer platform, so fewer third-party integrations than legacy tools

- Credits-based model requires monitoring usage on large-scale pipelines

Best for: Agents that need persistent, organized file storage with human handoff. Especially strong for Claude-based workflows using MCP.

Pricing: Free agent tier (50GB, 5,000 credits/month). Pro and Business plans use usage-based pricing.

2. Amazon S3

S3 is the go-to for raw file storage in production systems. Every AWS service talks to it, the API is stable, and the documentation is solid. Most AI pipelines already have S3 somewhere in their stack.

Strengths:

- Works with almost every AI/ML tool

- Granular IAM permissions

- Event triggers via S3 notifications and EventBridge

- No practical storage ceiling

- Lifecycle policies for automatic cleanup

Limitations:

- No built-in collaboration features (no comments, previews, or sharing portals)

- Needs substantial setup for human-facing workflows

- Complex permission model for cross-account sharing

- No native file previews or media streaming

Best for: Backend pipeline storage where humans never need to browse or interact with files directly.

Pricing: Pay-per-use. Roughly $0.023/GB/month for standard storage, plus request and transfer fees.
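As a rough illustration of the event triggers mentioned above, a downstream stage can pull the bucket and key out of an S3 event notification and start work when a new object lands. The sketch below uses only the standard library and a trimmed sample payload; the bucket and key names are made up, and a real notification carries more fields than shown.

```python
import json
from urllib.parse import unquote_plus


def new_objects(event: dict) -> list[tuple[str, str]]:
    """Return (bucket, key) pairs for objects created in an S3 event notification."""
    created = []
    for record in event.get("Records", []):
        if not record.get("eventName", "").startswith("ObjectCreated:"):
            continue  # ignore deletes and other event types
        s3 = record["s3"]
        # Object keys arrive URL-encoded in notifications (spaces become "+").
        created.append((s3["bucket"]["name"], unquote_plus(s3["object"]["key"])))
    return created


# Trimmed sample payload; the bucket and key are hypothetical.
sample = json.loads("""
{"Records": [{"eventName": "ObjectCreated:Put",
              "s3": {"bucket": {"name": "pipeline-artifacts"},
                     "object": {"key": "runs/2026-01-15/stage+one/output.json"}}}]}
""")
print(new_objects(sample))
```

Decoding the key matters in practice: passing the encoded form to a later download call is a common source of "object not found" errors.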

3. Google Cloud Storage

GCS works like S3 but plugs directly into Google's AI platform. If you run Vertex AI or use Google's ML tools, it is the obvious storage layer.

Strengths:

- Direct integration with Vertex AI and BigQuery

- Strong consistency (no eventual-consistency surprises)

- Signed URLs for time-limited access

- Good Python SDK for ML workflows

Limitations:

- Same collaboration gaps as S3, no built-in human interface

- IAM can be confusing for teams new to Google Cloud

- Egress fees add up for data-heavy pipelines

Best for: Teams running AI workflows on Google Cloud/Vertex AI.

Pricing: Pay-per-use. Standard storage starts at $0.020/GB/month.

4. Hugging Face Hub

Hugging Face Hub is where the ML community shares models, datasets, and spaces. If your AI workflow produces or consumes ML artifacts, you probably already have an account.

Strengths:

- Git-based versioning for datasets and models

- Built-in dataset viewer and model card previews

- The default place to share ML artifacts

- Free for public repositories

Limitations:

- Not built for general file sharing or human collaboration

- Slow for large binary files compared to cloud storage

- Limited permission controls

- Webhooks cover repository events only, with no storage-level event triggers comparable to S3 notifications

Best for: Sharing ML models, datasets, and training artifacts in AI research and development workflows.

Pricing: Free for public repos. Pro accounts for private repos and larger storage are listed on Hugging Face's published pricing page.

Give Your AI Agents Persistent Storage

Fastio is cloud storage built for AI agents. Persistent workspaces, full API access, and an official MCP server. Free tier included.

More Tools Worth Considering

5. Dropbox (via API)

Dropbox has a decent API and supports OAuth 2.0 with refresh tokens, so headless agent access is possible (even if the initial setup is tedious). The sync model means changes propagate to connected clients automatically.

Strengths:

- Well-documented API

- Webhooks for change notifications

- Familiar to non-technical collaborators

- Built-in file previews and search

Limitations:

- Per-seat pricing gets expensive for agent-heavy workflows

- OAuth setup requires an initial browser-based flow

- Sync conflicts can occur in high-throughput scenarios

- No agent-specific features or MCP support

Best for: Teams where humans are the primary users and agents need occasional file access.

Pricing: Business plans are billed per seat per month (see Dropbox's published pricing). Each agent that consumes a seat adds cost.
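The refresh-token approach described for Dropbox follows the standard OAuth 2.0 refresh grant: after a one-time browser flow, the agent trades a stored refresh token for short-lived access tokens. The sketch below only builds the request and does not send it. The token URL is an assumption to check against Dropbox's OAuth guide, and the credentials are placeholders.

```python
import base64
import urllib.parse
import urllib.request

# Assumed token endpoint; verify against Dropbox's OAuth documentation.
TOKEN_URL = "https://api.dropboxapi.com/oauth2/token"


def build_refresh_request(refresh_token: str, app_key: str, app_secret: str) -> urllib.request.Request:
    """Build (but do not send) a request that trades a refresh token for an access token."""
    body = urllib.parse.urlencode({
        "grant_type": "refresh_token",
        "refresh_token": refresh_token,
    }).encode("ascii")
    # The app key and secret travel in an HTTP Basic Authorization header.
    basic = base64.b64encode(f"{app_key}:{app_secret}".encode("utf-8")).decode("ascii")
    return urllib.request.Request(
        TOKEN_URL,
        data=body,
        headers={"Authorization": f"Basic {basic}"},
        method="POST",
    )
```

An agent would send this with `urllib.request.urlopen` shortly before its current access token expires and read the new token from the JSON response. The refresh token itself belongs in a secret store, not in code.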

6. Box Platform

Box Platform is the developer API side of Box. It supports service accounts with JWT authentication, one of the cleaner auth models for agents. If your enterprise already runs on Box, you can plug agents into what you have.

Strengths:

- JWT-based service accounts (no browser required)

- Solid governance and compliance features

- Metadata templates for structured file organization

- Content AI features for extraction and classification

Limitations:

- Complex pricing structure

- API rate limits can throttle high-volume workflows

- Heavy enterprise focus may be too much for small teams

- Developer documentation could be clearer

Best for: Enterprise teams already on Box who want to connect agents to their existing setup.

Pricing: Enterprise plans, contact sales. Platform API has separate pricing tiers.

7. MinIO

MinIO is an S3-compatible object store you run yourself. If you need total control over storage, or you work in an air-gapped environment, MinIO gives you S3 API compatibility on your own hardware.

Strengths:

- S3-compatible API (works with existing S3 tooling)

- Self-hosted, full control over data

- High performance for local networks

- Open source with commercial support available

Limitations:

- You manage the infrastructure

- No built-in collaboration features

- Requires DevOps expertise to operate at scale

- No native AI or search features

Best for: On-premise AI workflows or teams needing full data sovereignty.

Pricing: Open source (free). Commercial licenses available for enterprise support.

8. Files.com

Files.com does automated file transfers with enterprise-grade security. It supports SFTP, FTPS, and API-based transfers, with rules that trigger actions when files land.

Strengths:

- Automation rules for file-triggered workflows

- Supports SFTP/FTPS for legacy system integration

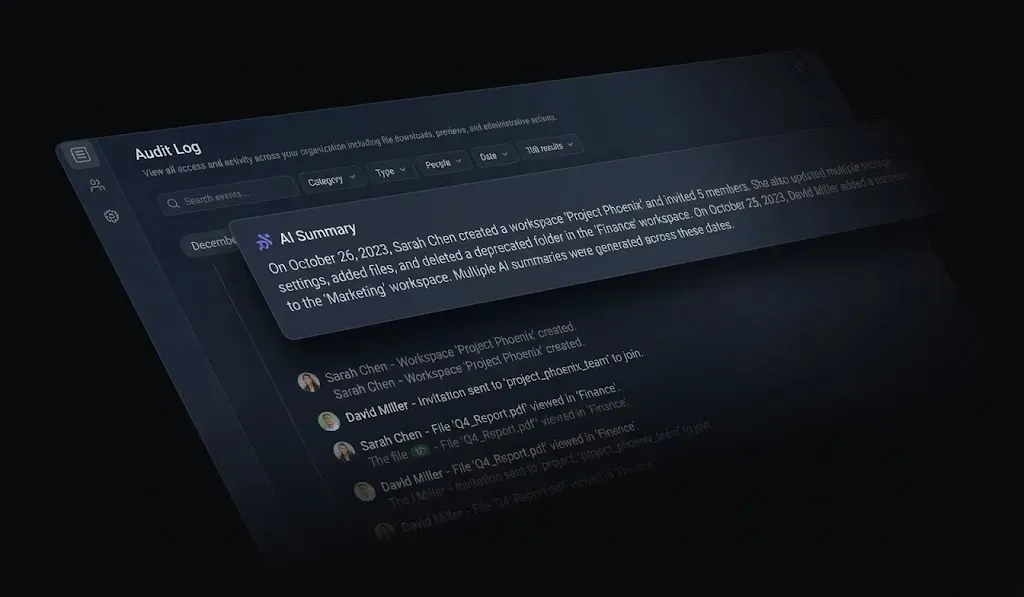

- Detailed audit logs and compliance features

- Webhook notifications on file events

Limitations:

- Expensive (see published pricing for the entry tier)

- No AI-specific features

- UI focused on IT administrators, not end users

- Limited file preview capabilities

Best for: Connecting AI pipelines to legacy systems that use SFTP or require managed file transfer compliance.

Pricing: See Files.com's published pricing; the Power plan is the entry tier.

Comparison Table

The main difference comes down to agent authentication. S3 and GCS use cloud IAM, which works well if your agents already run in those clouds. Box Platform's JWT auth is clean for enterprise setups. Fastio is the only option where agents sign up for their own accounts and operate as first-class users.

Which Tool Should You Choose?

The right tool depends on your workflow architecture:

If agents need to collaborate with humans, use Fastio or Dropbox. Both provide interfaces humans can browse alongside API access for agents. Fastio has the edge with native agent accounts and MCP integration.

If you need raw pipeline storage, use S3 or GCS. Cheap, scalable, and wired into their respective cloud ecosystems. Just don't expect humans to browse the files.

If you share ML artifacts, Hugging Face Hub is the standard. Use it for models and datasets, but reach for something else for general file sharing.

If you run on-premise AI, MinIO gives you S3 compatibility on your own hardware. Pair it with a collaboration tool for human handoff.

If you need managed file transfer compliance, Files.com handles SFTP/FTPS with audit trails and works well for connecting AI pipelines to legacy systems.

If you are building with Claude, Fastio's MCP server lets your agent read and write files without custom API code. Pair that with the free agent tier and you go from prototype to working file storage in minutes.

Most production AI workflows end up using two tools: one for raw pipeline storage (S3 or GCS) and one for human-facing collaboration (Fastio, Dropbox, or Box). Plan for both from the start.

Frequently Asked Questions

What tools do AI agents use to share files?

AI agents share files through API-driven storage services. The most common options include cloud object stores like Amazon S3 and Google Cloud Storage for pipeline data, and collaboration platforms like Fastio for human-agent file exchange. Fastio provides native agent accounts and an MCP server so agents can manage files without custom integration code.

How do you share files in an AI pipeline?

AI pipelines typically share files by writing outputs to a shared storage location between stages. Cloud object stores (S3, GCS) work for backend data transfer. For pipelines that involve human review or approval, collaboration tools like Fastio let agents upload results to organized workspaces where humans can browse, comment, and download.
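A minimal sketch of that handoff pattern, using a local directory as a stand-in for the shared location: the upstream stage writes its output plus a small manifest, and the downstream stage verifies the checksum before using the file. The `publish` and `consume` helpers and the manifest layout are hypothetical, not part of any tool listed here.

```python
import hashlib
import json
import tempfile
from pathlib import Path


def publish(shared: Path, stage: str, name: str, data: bytes) -> Path:
    """Write a stage output, then a manifest recording its size and checksum."""
    out_dir = shared / stage
    out_dir.mkdir(parents=True, exist_ok=True)
    (out_dir / name).write_bytes(data)
    manifest = {"file": name, "bytes": len(data), "sha256": hashlib.sha256(data).hexdigest()}
    manifest_path = out_dir / "manifest.json"
    # The manifest is written last, so its presence signals a finished output.
    manifest_path.write_text(json.dumps(manifest))
    return manifest_path


def consume(manifest_path: Path) -> bytes:
    """Read the upstream output and verify it against the manifest."""
    manifest = json.loads(manifest_path.read_text())
    data = (manifest_path.parent / manifest["file"]).read_bytes()
    if hashlib.sha256(data).hexdigest() != manifest["sha256"]:
        raise ValueError("checksum mismatch: upstream output is incomplete or corrupted")
    return data


with tempfile.TemporaryDirectory() as tmp:
    manifest_path = publish(Path(tmp), "extract", "rows.json", b'[{"id": 1}]')
    print(consume(manifest_path))
```

With an object store or a workspace API in place of the local directory, the same idea applies: upload the file, then the manifest, and have the next stage wait for the manifest.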

What is the best way to transfer data between AI agents?

The best approach depends on data size and format. For structured data under 1 MB, passing payloads directly through the orchestration framework (LangChain, CrewAI) works fine. For larger files or persistent artifacts, agents should write to shared storage. Fastio's agent workspaces let multiple agents access the same files with permission controls, and the free tier covers 5,000 credits per month.
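The size rule above can be expressed as a small routing check. This is a sketch under the assumption that payloads are JSON-serializable; the threshold constant and the `choose_transport` name are illustrative, and the right cutoff depends on your orchestration framework.

```python
import json

INLINE_LIMIT_BYTES = 1_000_000  # roughly the 1 MB guideline; tune for your framework


def choose_transport(payload: object, limit: int = INLINE_LIMIT_BYTES) -> str:
    """Return "inline" for small JSON-serializable payloads, else "shared_storage"."""
    size = len(json.dumps(payload).encode("utf-8"))
    return "inline" if size < limit else "shared_storage"
```

Measuring the serialized size, not the in-memory object, keeps the decision aligned with what actually crosses the wire between agents.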

Do AI workflows need persistent file storage?

Yes, for production use. Most orchestration frameworks treat files as temporary, meaning outputs disappear when a run ends. Persistent storage lets agents reference previous results, build on earlier work, and hand off artifacts to humans. Without it, agents start from scratch every session.

What is MCP and why does it matter for file sharing?

MCP (Model Context Protocol) is an open standard for connecting AI agents to external tools. For file sharing, an MCP server lets agents access storage without custom API code. Fastio offers an official MCP server at mcp.fast.io, which means Claude and compatible agents can upload, download, organize, and share files through a standard interface.
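Under the hood, MCP messages are JSON-RPC 2.0, and an agent invokes a server tool with a `tools/call` request. The sketch below only builds such a message. The tool name `upload_file` and its arguments are hypothetical and not taken from Fastio's or any other server's tool list; a real client would first call `tools/list` to discover what is available.

```python
import itertools
import json

_ids = itertools.count(1)  # JSON-RPC request ids, unique per session


def tool_call(name: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": next(_ids),
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and arguments, for illustration only.
print(tool_call("upload_file", {"path": "reports/summary.md", "content": "# Summary"}))
```

In practice an MCP client library handles this framing and the transport, so agent code rarely builds these messages by hand.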
