How to Handle Files in Pydantic AI Agents

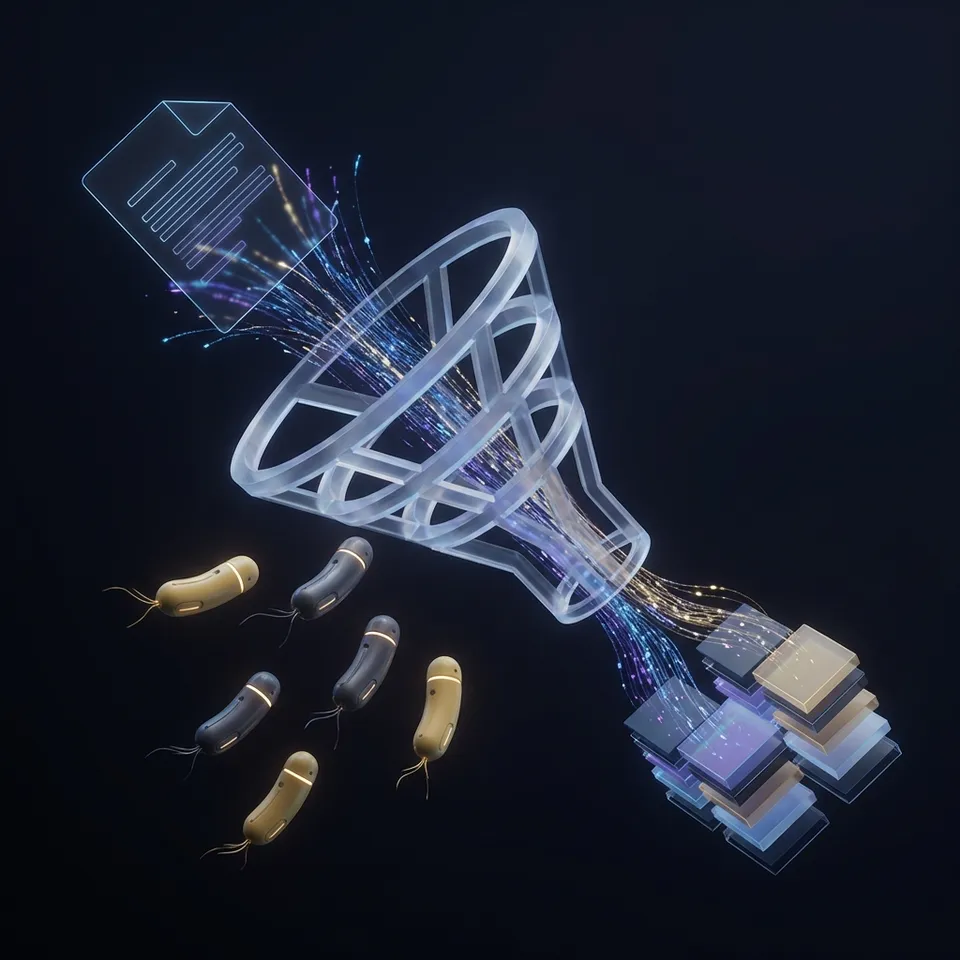

Pydantic AI agents need reliable file handling to go beyond text processing. Learn how to upload documents, store files, and process data with Pydantic validation and the Fastio MCP server.

How to Implement Pydantic AI File Handling Reliably

Developers choose Pydantic AI to add data validation to their agent workflows. But Pydantic AI is a framework, not a platform. It can pass file contents to a model (for example, as `BinaryContent`), but it has no built-in storage or file hosting. When your agent needs to read a PDF, generate a report, or analyze a dataset, you face four problems that standard libraries often miss:

- Persistence: Local storage (`./tmp`) disappears in serverless setups like Modal, Vercel, or AWS Lambda. Files written in one run are gone in the next, which breaks state.

- Validation: You need to check for valid file paths, supported types, and file sizes before the agent wastes tokens trying to process them.

- Sharing: The agent needs a way to send the final file (like a CSV or image) to a user or another agent via a URL.

- Memory and latency: Loading large datasets into memory can slow the agent down or crash it. File processing should move to a storage layer.

To fix this, we combine Pydantic AI tools with a file storage MCP (Model Context Protocol) server, which connects your agent logic to cloud storage.


Defining Validated File Tools in Pydantic AI

In Pydantic AI, tools are Python functions registered with the `@agent.tool` decorator. To handle files safely, describe tool inputs (such as filenames and paths) with Pydantic models, so the arguments are validated before the tool body runs. This stops the agent from passing malformed paths and keeps it within your storage rules. The example below defines a file upload tool using the Fastio MCP SDK. The `Field` descriptions end up in the tool's schema, which tells the LLM exactly what each parameter does:

```python
from dataclasses import dataclass

from pydantic import BaseModel, Field
from pydantic_ai import Agent, RunContext

from fastio_mcp import FastIOClient


@dataclass
class Deps:
    # Run-time dependencies passed to agent.run(..., deps=Deps(...))
    api_key: str


class UploadRequest(BaseModel):
    local_path: str = Field(..., description="Path to the local file to upload")
    destination_folder: str = Field(..., description="Target folder in Fastio (e.g., 'reports/2024')")
    public_share: bool = Field(default=False, description="Whether to generate a public share link")


agent = Agent(
    'openai:gpt-4o',
    deps_type=Deps,  # required so tools can read ctx.deps.api_key
    system_prompt='You are a file management assistant. Always validate file extensions before uploading.',
)


@agent.tool
async def upload_file(ctx: RunContext[Deps], request: UploadRequest) -> str:
    """Uploads a file to secure cloud storage and returns a shareable link."""
    client = FastIOClient(api_key=ctx.deps.api_key)

    # Upload via Streamable HTTP for efficiency
    result = await client.upload(
        request.local_path,
        folder=request.destination_folder,
        share=request.public_share,
    )
    return f"File '{result.name}' uploaded successfully. Access URL: {result.url}"
```

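With this model, the tool only runs when the agent supplies arguments of the right types; if validation fails, Pydantic AI sends the error back to the model so it can retry with corrected arguments. Type checks alone don't confirm that a path exists or has an allowed extension, but Pydantic validators let you add those checks (for example, accepting only .csv or .json) so bad inputs are rejected before any upload starts.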
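As a sketch of such custom checks, the model below keeps the same fields as `UploadRequest` and adds a `field_validator` on `local_path`. The `ALLOWED_EXTENSIONS` set is an assumption for illustration; adjust it to your workflow. Because the existence check looks at the local filesystem, it only makes sense when the agent and the files live on the same machine.

```python
from pathlib import Path

from pydantic import BaseModel, Field, field_validator

# Illustrative allow-list (an assumption); adjust to the file types your agent handles
ALLOWED_EXTENSIONS = {".csv", ".json"}


class UploadRequest(BaseModel):
    local_path: str = Field(..., description="Path to the local file to upload")
    destination_folder: str = Field(..., description="Target folder in Fastio (e.g., 'reports/2024')")
    public_share: bool = Field(default=False, description="Whether to generate a public share link")

    @field_validator("local_path")
    @classmethod
    def check_local_file(cls, value: str) -> str:
        path = Path(value)
        # Reject unsupported file types before any upload is attempted
        if path.suffix.lower() not in ALLOWED_EXTENSIONS:
            raise ValueError(
                f"unsupported extension {path.suffix!r}; allowed: {sorted(ALLOWED_EXTENSIONS)}"
            )
        # Reject paths that do not point to an existing regular file
        if not path.is_file():
            raise ValueError(f"file not found: {value}")
        return value
```

The `ValueError` messages become part of the validation error, so the model receives a concrete reason (wrong extension or missing file) rather than a generic failure.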

Persistent Storage with Fastio MCP

Local file operations are risky for production agents because they tie your app to one machine. Instead of saving to disk, use the Fastio MCP server to handle files over the network. This keeps your agent stateless and portable. Fastio offers a free tier for agents (50GB storage) and an MCP server with 251 tools. It is a file management suite built for agent workflows.

Why use an MCP Server for Pydantic AI?

- Universal Protocol: MCP connects LLMs to data. It works with Claude, Pydantic AI, LangChain, and CrewAI.

- No Local Footprint: Stream files from a URL to storage (using the `save_url` tool) without using the agent's RAM or disk. This matters for large datasets in restricted environments.

- Security & Auth: The MCP connection handles authentication. Your agent does not need your S3 keys; it only needs to know how to call the tool. This keeps secrets out of prompts and logs.

- Version Control: Track file changes automatically. Your agent can roll back to a previous version if a data change fails.

To connect your Pydantic AI agent, run the Fastio MCP server with `mcp-get` or configure the transport layer in your Python code.

Give Your AI Agents Persistent Storage

Get 50GB of free, persistent storage and 251 file handling tools for your AI agents.

Advanced: RAG and Document Processing

Once files are stored, you often need to read them. Pydantic AI agents can use Fastio's Intelligence Mode for RAG (Retrieval-Augmented Generation) on documents, so you don't need to run a separate vector database. Instead of writing your own code to parse PDFs and chunk text, the workflow is:

- The agent uploads a file to a workspace with Intelligence Mode on.

- Fastio indexes the content with embedding models.

- The agent uses the `ask_about_file` tool to query the document.

```python
@agent.tool
async def analyze_document(ctx: RunContext, filename: str, question: str) -> str:
    """Asks a question about a stored document using semantic search. Use this for PDFs and large docs."""
    # Delegate the work to the storage layer to save tokens.
    # Assumes the agent's dependencies object carries a Fastio client as `fastio`.
    answer = await ctx.deps.fastio.query(filename, question)
    return answer
```

This separation keeps your Pydantic AI agent light. Only the relevant passages of a large PDF reach the LLM, which avoids overflowing the context window and cuts token usage and latency when working with large data.
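To make the savings concrete, here is a toy sketch of the retrieval idea. It is a stand-in, not Fastio's implementation: Fastio uses embedding models, while this sketch scores chunks by plain word overlap so it runs with only the standard library. The document is split into fixed-size chunks, each chunk is scored against the question, and only the top `k` chunks would be sent to the LLM. All function names here are hypothetical.

```python
import re


def chunk_text(text: str, chunk_words: int = 50) -> list[str]:
    """Split text into consecutive chunks of at most `chunk_words` words."""
    words = text.split()
    return [" ".join(words[i:i + chunk_words]) for i in range(0, len(words), chunk_words)]


def tokenize(text: str) -> set[str]:
    # Lowercased alphanumeric terms; a crude substitute for embedding vectors
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def top_chunks(text: str, question: str, k: int = 2, chunk_words: int = 50) -> list[str]:
    """Return the k chunks that share the most terms with the question."""
    query_terms = tokenize(question)
    chunks = chunk_text(text, chunk_words)
    # Rank by term overlap; sorted() is stable, so ties keep document order
    ranked = sorted(chunks, key=lambda c: len(query_terms & tokenize(c)), reverse=True)
    return ranked[:k]
```

Replacing the word-overlap score with embedding similarity is what turns this into semantic search; the chunk, rank, and truncate structure stays the same, and only `k` chunks ever reach the model regardless of document size.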

Best Practices for Agent File Security

Security is critical when agents handle files. As agents get more autonomous, the risk of data leaks or deletion grows. Follow these rules to keep data safe:

- Use File Locks: In multi-agent systems, use lock/unlock tools to stop conflicts. If two agents edit the same file, a lock ensures only one writes at a time.

- Validate Extensions & Size: In your Pydantic models, use `Field(pattern=r'.*\.pdf$')` on the filename and `Field(le=10_000_000)` (10 MB) on a size-in-bytes field to limit file types and sizes. This stops the agent from trying to read a 1GB video as text.

- Audit Logging: Fastio logs every read, write, and share. This creates a trail for debugging. If an agent deletes a file, you will know which tool call did it.

- Lifecycle Management: Set expiration dates on share links. If an agent generates a report for a user, an expiring link limits how long the file stays accessible.

- Least Privilege: Create specific workspaces in Fastio for different agents. A research agent does not need access to the finance agent's folder. Use the MCP server's permissions to enforce this.
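The extension and size limits above can be expressed declaratively. A minimal sketch, assuming the tool receives the file's name and its size in bytes as separate fields; the `PdfInput` model, its field names, and the `is_allowed` helper are illustrative, not part of any Fastio API:

```python
from pydantic import BaseModel, Field, ValidationError

MAX_BYTES = 10_000_000  # 10 MB cap


class PdfInput(BaseModel):
    # Accept only names ending in .pdf
    filename: str = Field(pattern=r".*\.pdf$", description="Name of a PDF file")
    # Reject negative sizes and files larger than 10 MB before anything is read
    size_bytes: int = Field(ge=0, le=MAX_BYTES, description="File size in bytes")


def is_allowed(filename: str, size_bytes: int) -> bool:
    """Return True if the file passes both the type and the size checks."""
    try:
        PdfInput(filename=filename, size_bytes=size_bytes)
    except ValidationError:
        return False
    return True
```

In practice `size_bytes` could come from `os.path.getsize()` or from the storage layer's file metadata. Note that `le` is inclusive, so a file of exactly 10,000,000 bytes is accepted.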

Frequently Asked Questions

Can Pydantic AI agents upload files directly from memory?

Yes, you can define tools that accept byte streams, but it is often more efficient to pass file paths or URLs. For large files, we recommend using the Fastio MCP `save_url` tool to transfer data server-to-server without loading it into the agent's memory.

How do I handle large files with Pydantic AI?

Avoid loading files larger than 10MB into the agent's context window. Instead, upload the file to storage (like Fastio) and use retrieval tools to extract only the relevant snippets needed for the task.

Is the Fastio MCP server free for developers?

Yes, Fastio offers a free developer tier that includes 50GB of storage, 5,000 monthly API credits, and full access to the MCP server features without requiring a credit card.
