How to Set Up OpenClaw Multi-Agent Workspaces

When multiple OpenClaw agents need to share files and state, you need shared storage — not local disks on each container. This guide explains the options, then walks through setting up a shared workspace with Fastio as the storage layer.

Why single-agent setups don't scale

Most OpenClaw deployments start simple: one agent, one local container. That works fine for solo tasks. The problem shows up when you add a second agent — suddenly you have two processes with separate memory, no shared context, and a real risk of duplicated or conflicting work.

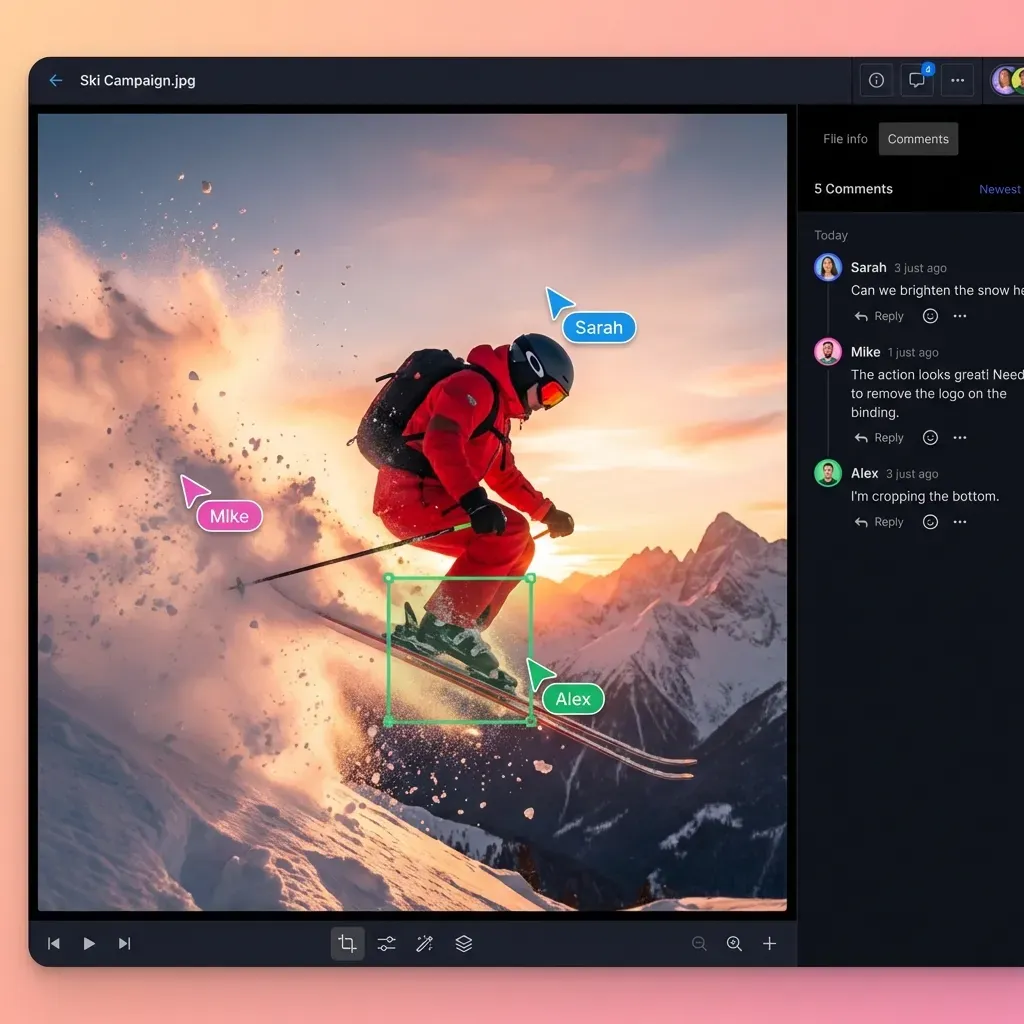

The fix is to move storage out of each agent's local environment and into something shared. When a Researcher agent drops a PDF and a Writer agent can immediately read and query it, you have a real pipeline. When they're both writing to separate local disks, you have two agents doing independent work that happens to look related.

There are several approaches:

- Shared filesystem (NFS, EFS): Simple to set up, no new dependencies. Doesn't give you RAG or semantic search out of the box.

- Object storage (S3, R2, GCS): Good for large files and durability. Requires more plumbing to index and query.

- Database (Postgres, Redis): Great for structured data and fast lookups. Not ideal for documents or binary files.

- Cloud workspace with built-in intelligence (e.g. Fastio): Combines file storage, permissions, RAG indexing, and sharing in one API. More opinionated, but less to wire together.

This guide uses Fastio as the storage layer because it's what the ClawHub skill integrates with directly. If you'd rather use S3 or a shared filesystem, the OpenClaw architecture is the same — swap the storage tools for your preferred backend.
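To make the "swap the backend" point concrete, here is a minimal Python sketch. Everything in it is hypothetical and for illustration only: `WorkspaceStore`, `SharedDirStore`, and the `researcher` / `writer` functions are not part of OpenClaw or the Fastio skill, and a temporary directory stands in for a real shared mount.

```python
import tempfile
from pathlib import Path
from typing import Protocol


class WorkspaceStore(Protocol):
    """Minimal surface an agent needs from any shared backend (hypothetical)."""

    def put(self, key: str, data: bytes) -> None: ...
    def get(self, key: str) -> bytes: ...


class SharedDirStore:
    """Backend for a shared filesystem mount such as NFS or EFS."""

    def __init__(self, root: Path) -> None:
        self.root = root

    def put(self, key: str, data: bytes) -> None:
        path = self.root / key
        path.parent.mkdir(parents=True, exist_ok=True)
        path.write_bytes(data)

    def get(self, key: str) -> bytes:
        return (self.root / key).read_bytes()


def researcher(store: WorkspaceStore) -> None:
    # The Researcher agent drops its output into the shared workspace.
    store.put("research/findings.txt", b"three sources on topic X")


def writer(store: WorkspaceStore) -> str:
    # The Writer agent reads the same key; it never touches a local disk.
    return store.get("research/findings.txt").decode()


# A temporary directory stands in for the shared mount in this demo.
shared_root = Path(tempfile.mkdtemp())
store = SharedDirStore(shared_root)
researcher(store)
draft_input = writer(store)
```

Because both agents depend only on `put` and `get`, an S3-backed or Fastio-backed class with the same two methods could replace `SharedDirStore` without touching the agent logic.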

Prerequisites

You need a running OpenClaw instance with ClawHub installed. Node.js 24 is recommended; Node 22.16+ (LTS) also works.

For this guide specifically:

- A Fastio account (free tier works — 50GB, 5,000 credits/month)

- The Fastio ClawHub skill installed on your OpenClaw instance

- At least two agent configurations you want to connect to the same workspace

If you're using a different storage backend, you can skip the Fastio-specific steps and apply the same coordination patterns (file locks, workspace IDs) to your own tooling.

Step 1: Install the Fastio skill

Run this in your OpenClaw terminal:

clawhub install dbalve/fast-io

This registers 19 MCP tools: file and folder operations (storage), workspace management, sharing, AI queries, file locks, and more. The tools handle concurrent access correctly — if two agents try to write the same file without a lock, the second call returns a "resource busy" signal rather than silently overwriting.

Once installed, your agents can reference the workspace by ID in their configuration.

Step 2: Create and configure the shared workspace

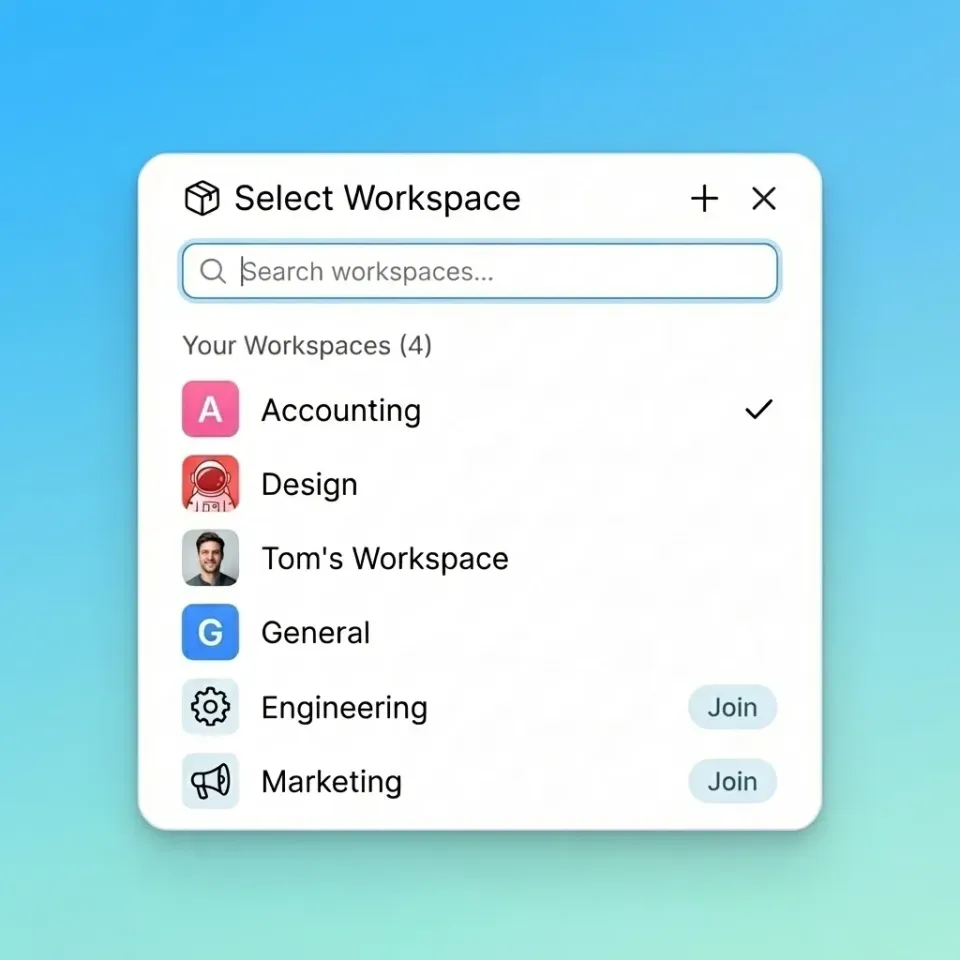

In your Fastio dashboard, create a new workspace for agent operations — something like 'agent-ops' or whatever matches your project. Enable Intelligence Mode. This activates the built-in RAG pipeline: when any agent uploads a file, it gets indexed automatically. Other agents can then query that workspace in natural language and get cited responses without needing a separate vector database.

Copy the workspace ID from the dashboard URL, or retrieve it by calling the workspace tool. Then set it as an environment variable in each agent's config:

export FASTIO_WORKSPACE_ID="your_workspace_id_here"
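If your agent has its own startup script, it can help to fail fast when the variable is missing or still holds the placeholder. A minimal Python sketch, assuming only the `FASTIO_WORKSPACE_ID` variable shown above; `load_workspace_id` is a hypothetical helper, not something the skill provides:

```python
import os


def load_workspace_id(env=os.environ) -> str:
    """Return the shared workspace ID, failing fast if it is missing."""
    workspace_id = env.get("FASTIO_WORKSPACE_ID", "").strip()
    # Reject both an unset variable and the placeholder from the example.
    if not workspace_id or workspace_id == "your_workspace_id_here":
        raise RuntimeError("FASTIO_WORKSPACE_ID is not set to a real workspace ID")
    return workspace_id
```

Calling this once at startup in every agent surfaces a misconfigured container immediately, rather than halfway through a pipeline run.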

Any agent with this ID and valid credentials can read, write, and query the same workspace.

Give your agents shared storage

Fastio's free agent tier includes 50GB of storage with built-in RAG, file locks, and ownership transfer. No credit card needed.

Step 3: Use file locks for coordination

Multiple agents writing to the same files without coordination can overwrite or corrupt each other's work. The Fastio skill includes file locking via the storage tool's lock actions, which work much like a mutex.

The pattern:

- Acquire: Before editing, call `lock_file` on the target resource.

- Edit: Run your `write_file` or append operations.

- Release: Call `unlock_file` to free the resource.

If a second agent tries to access a locked file, it gets a "resource busy" response and knows to wait or retry. This is enough for most pipeline patterns — for example, one agent aggregating news while another compiles a newsletter from the aggregated data. They can work in parallel as long as they lock before writing shared files.
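The acquire, edit, release cycle with a retry on "resource busy" can be sketched in Python as below. This is an illustration under stated assumptions, not the skill's real interface: `FakeStorage` is an in-memory stand-in, the method signatures (including the `agent` argument) are invented for the demo, and `ResourceBusy` is a hypothetical exception that models the "resource busy" response.

```python
import time
from contextlib import contextmanager


class ResourceBusy(Exception):
    """Models the "resource busy" response for a locked file."""


class FakeStorage:
    """In-memory stand-in for the storage tool; not the real Fastio client."""

    def __init__(self) -> None:
        self.files: dict[str, str] = {}
        self.locks: dict[str, str] = {}  # path -> agent holding the lock

    def lock_file(self, path: str, agent: str) -> None:
        if path in self.locks:
            raise ResourceBusy(path)
        self.locks[path] = agent

    def unlock_file(self, path: str, agent: str) -> None:
        # Only the holder can release the lock.
        if self.locks.get(path) == agent:
            del self.locks[path]

    def write_file(self, path: str, agent: str, text: str) -> None:
        if self.locks.get(path) != agent:
            raise ResourceBusy(path)
        self.files[path] = text


@contextmanager
def locked(storage, path, agent, retries=5, delay=0.01):
    """Acquire with exponential backoff; always release on exit."""
    attempt = 0
    while True:
        try:
            storage.lock_file(path, agent)
            break
        except ResourceBusy:
            attempt += 1
            if attempt >= retries:
                raise
            time.sleep(delay * 2 ** attempt)
    try:
        yield
    finally:
        storage.unlock_file(path, agent)


storage = FakeStorage()
with locked(storage, "news/aggregate.md", "aggregator"):
    storage.write_file("news/aggregate.md", "aggregator", "headline A")
```

Wrapping the cycle in a context manager means the lock is released even if the write raises, which avoids the most common failure mode of this pattern: a crashed agent leaving a file locked.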

If you're using S3 or another backend, you'd implement similar coordination with DynamoDB conditional writes or a Redis lock. The concept is the same regardless of the storage layer.
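For a rough idea of what that looks like, the Python sketch below models an expiring lock in memory. It mirrors the semantics you would get from Redis `SET key value NX PX <ms>` or a DynamoDB conditional write using `attribute_not_exists`, but it talks to neither service; `LeaseLocks` and its methods are hypothetical names for illustration.

```python
import time
import uuid
from typing import Optional


class LeaseLocks:
    """In-memory model of an expiring lock table (stand-in for Redis or DynamoDB)."""

    def __init__(self, clock=time.monotonic) -> None:
        self._clock = clock
        self._held: dict[str, tuple[str, float]] = {}  # key -> (token, expires_at)

    def acquire(self, key: str, ttl: float) -> Optional[str]:
        """Set-if-absent with expiry; returns a token on success, None if busy."""
        now = self._clock()
        current = self._held.get(key)
        if current is not None and current[1] > now:
            return None  # someone else holds an unexpired lease
        token = uuid.uuid4().hex
        self._held[key] = (token, now + ttl)
        return token

    def release(self, key: str, token: str) -> bool:
        """Delete only if the caller still owns the lock (compare-and-delete)."""
        current = self._held.get(key)
        if current is not None and current[0] == token:
            del self._held[key]
            return True
        return False
```

The expiry matters because an agent that crashes mid-edit would otherwise hold the lock forever, and the token check stops an agent whose lease already expired from releasing a lock that now belongs to someone else.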

Step 4: Handle ownership and handoffs

One thing Fastio's workspace model makes easy is transferring ownership. You might have a Builder agent set up a client folder structure, upload initial assets, and configure sharing — then hand the whole workspace to a human when the job is done.

The agent calls the transfer_workspace tool with the client's email. The client receives the populated workspace while the agent retains admin access for ongoing maintenance. This turns agent output into a deliverable the client can actually log into and browse.

For other storage backends, you'd need to replicate this by managing access controls yourself (IAM policies, ACLs, etc.).

Frequently Asked Questions

Can OpenClaw agents share memory across different servers?

Yes, if you use shared cloud storage. Because the files are in a Fastio workspace (or S3, or any shared backend), agents running on different machines or cloud regions all read from and write to the same location.

How do I prevent agents from overwriting each other's work?

Use file locks before any write operation. In Fastio, the `storage` tool includes lock and unlock actions. The same pattern applies with Redis locks or DynamoDB conditional writes for other backends.

Does this require a paid OpenClaw license?

No. OpenClaw is open source. The Fastio free tier — 50GB storage, 5,000 credits/month — is enough for most development setups.

Can I use local LLMs with this setup?

Yes. OpenClaw connects to any LLM provider, local or cloud. The storage layer is separate from the inference layer, so swapping models doesn't affect workspace configuration.
