How to Add File Storage to OpenAI Agents SDK Projects

The OpenAI Agents SDK gives you tools, handoffs, and guardrails for building multi-agent systems, but it has no built-in file persistence. This guide walks through adding persistent file storage to your agents using custom function tools, so your agents can save, retrieve, and share files across sessions without losing work.

What the OpenAI Agents SDK Does (and Doesn't) Include

OpenAI released the Agents SDK as the production successor to Swarm. It keeps Swarm's lightweight design (agents, tools, handoffs) and adds guardrails, tracing, and multi-model support. If you've worked with Swarm before, the upgrade is smooth.

The SDK handles orchestration well. You define agents with instructions and tools, wire up handoffs between them, and the framework manages the execution loop. But it deliberately leaves infrastructure concerns to you, and file storage is one of them.

Here's what the SDK provides out of the box:

- Function tools: Wrap any Python function as an agent tool with automatic schema generation

- Hosted tools: FileSearchTool (vector stores), CodeInterpreterTool (sandboxed execution), WebSearchTool

- Handoffs: Transfer control between specialized agents

- Guardrails: Input and output validation

- Tracing: Built-in observability for debugging agent runs

- Sessions: SQLite-backed conversation persistence (added in later releases)

Notice what's missing: persistent file storage. The SDK's FileSearchTool queries OpenAI's vector stores, which are designed for RAG retrieval, not general-purpose file management. There's no built-in way for an agent to save a report, store processed data, or maintain a library of working documents between sessions. This gap shows up the moment you build anything beyond a chatbot. Research agents need to save findings. Data processing agents produce output files. Multi-agent systems need a shared workspace. You need external storage, and the SDK's function tool system makes it easy to add.


Why Agents Need Persistent File Storage

Without persistent storage, your agents are stateless workers that forget everything between runs. This limits them to single-session tasks.

Consider a research agent built with the OpenAI Agents SDK. It searches the web, analyzes documents, and produces a summary. Without file storage, that summary exists only in the conversation context. Close the session and it's gone. Run the agent again tomorrow and it starts from scratch.

Persistent file storage changes what agents can do:

- Multi-session workflows: An agent generates a draft today, receives feedback tomorrow, and revises next week. Each version is stored and accessible.

- Multi-agent collaboration: Agent A produces a dataset. Agent B picks it up for analysis. Agent C formats the results. They share files through a common workspace.

- Human-agent handoffs: An agent builds a client deliverable and stores it in a shared folder. The human reviews, adds comments, and the agent incorporates feedback.

- Audit trails: Every file version is preserved. You can trace what the agent produced, when, and what changed.

The OpenAI Agents SDK's session system (SQLiteSession, SQLAlchemySession) handles conversation state, but conversation state is not the same as file state. Sessions remember what was said. File storage preserves what was produced.

Give Your AI Agents Persistent Storage

Fastio gives teams shared workspaces, MCP tools, and searchable file context for running OpenAI Agents SDK file storage workflows with reliable agent and human handoffs.

Building a File Storage Tool for the OpenAI Agents SDK

The Agents SDK's function tool system turns any Python function into a callable tool. You write a function, add a docstring, and the SDK auto-generates the JSON schema for the LLM. Here's how to build a file storage tool that connects your agents to cloud storage.

Step 1: Define the Storage Functions

Start with the core operations your agents need. At minimum: upload, download, and list files.

import httpx
from agents import Agent, function_tool

FASTIO_TOKEN = "your-agent-bearer-token"
WORKSPACE_ID = "your-workspace-id"
BASE_URL = "https://api.fast.io/v1"

@function_tool
def upload_file(filename: str, content: str) -> str:
    """Upload a text file to the agent's workspace.

    Args:
        filename: Name for the file (e.g. 'report.md')
        content: The text content to store
    """
    response = httpx.post(
        f"{BASE_URL}/upload/text-file",
        headers={"Authorization": f"Bearer {FASTIO_TOKEN}"},
        json={
            "profile_id": WORKSPACE_ID,
            "profile_type": "workspace",
            "filename": filename,
            "parent_node_id": "root",
            "content": content,
        },
    )
    # Raise on 4xx/5xx so a rejected upload is not reported as a success
    response.raise_for_status()
    return f"Uploaded {filename} successfully"

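@function_tool
def list_files() -> str:
    """List all files in the agent's workspace."""
    response = httpx.get(
        f"{BASE_URL}/storage/list",
        headers={"Authorization": f"Bearer {FASTIO_TOKEN}"},
        params={
            "context_type": "workspace",
            "context_id": WORKSPACE_ID,
            "node_id": "root",
        },
    )
    response.raise_for_status()
    files = response.json()
    # One file per line. The node ID is included because download_file needs it;
    # the "id" field name is an assumption, so check it against the actual API response.
    return "\n".join(
        f"- {f['name']} ({f['type']}, node_id: {f.get('id', 'unknown')})"
        for f in files.get("items", [])
    )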

@function_tool
def download_file(node_id: str) -> str:
    """Download and read a file from the workspace.

    Args:
        node_id: The file's storage node ID
    """
    # Step 1: ask the API for a download URL for this node
    url_response = httpx.get(
        f"{BASE_URL}/download/file-url",
        headers={"Authorization": f"Bearer {FASTIO_TOKEN}"},
        params={
            "context_type": "workspace",
            "context_id": WORKSPACE_ID,
            "node_id": node_id,
        },
    )
    url_response.raise_for_status()
    download_url = url_response.json()["url"]
    # Step 2: fetch the file contents from that URL
    file_content = httpx.get(download_url)
    file_content.raise_for_status()
    return file_content.text

Step 2: Attach Tools to Your Agent

Wire the storage functions into your agent definition:

research_agent = Agent(
    name="Research Agent",
    instructions="""You are a research agent with file storage. Save your findings using upload_file. Check for previous work using list_files before starting. Resume from stored files using download_file.""",
    tools=[upload_file, list_files, download_file],
)

Step 3: Run with Persistence

Now your agent can store and retrieve files across sessions:

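import asyncio

from agents import Runner


async def main():
    # Session 1: Agent does research and saves results
    result = await Runner.run(
        research_agent,
        "Research cloud storage pricing and save a report",
    )
    print(result.final_output)

    # Session 2: Agent picks up where it left off
    result = await Runner.run(
        research_agent,
        "Review your previous research and add competitor data",
    )
    print(result.final_output)


# Runner.run is a coroutine, so it needs an event loop when called from a script
asyncio.run(main())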

The agent checks its workspace at the start of session 2, finds the report from session 1, and continues building on it.
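To try the save-then-resume flow without network access or a storage account, one option is a local stand-in. The sketch below is an illustration under stated assumptions, not part of Fastio or the SDK: `LocalWorkspace` is a hypothetical class that mimics the upload, list, and download operations on a local directory and identifies files by name rather than node ID.

```python
import tempfile
from pathlib import Path


class LocalWorkspace:
    """Hypothetical local stand-in for a cloud workspace (illustration only)."""

    def __init__(self, root: str) -> None:
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)

    def upload_file(self, filename: str, content: str) -> str:
        # Keep only the final path component so a call cannot write outside root.
        target = self.root / Path(filename).name
        target.write_text(content, encoding="utf-8")
        return f"Uploaded {target.name} successfully"

    def list_files(self) -> str:
        names = sorted(p.name for p in self.root.iterdir() if p.is_file())
        return "\n".join(f"- {name}" for name in names) or "(workspace is empty)"

    def download_file(self, filename: str) -> str:
        return (self.root / Path(filename).name).read_text(encoding="utf-8")


# "Session 1": one object writes a report.
workspace_dir = tempfile.mkdtemp()
session_one = LocalWorkspace(workspace_dir)
session_one.upload_file("report.md", "# Cloud storage pricing\n")

# "Session 2": a fresh object pointed at the same directory finds the earlier work.
session_two = LocalWorkspace(workspace_dir)
listing = session_two.list_files()
restored = session_two.download_file("report.md")
```

Because the second object shares no in-memory state with the first, anything it reads back must have come from storage, which is the property the two-session example relies on.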

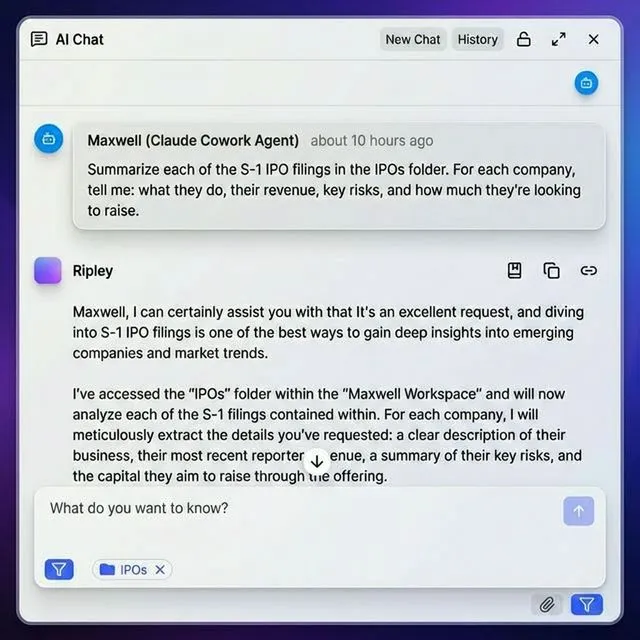

Multi-Agent File Sharing with Handoffs

The OpenAI Agents SDK's handoff system lets agents transfer control to specialized peers. When you combine handoffs with shared file storage, agents can pass work products along a pipeline. Here's a practical pattern: a research agent gathers data, hands off to an analysis agent, which hands off to a report writer.

from agents import Agent

# All agents share the same workspace
shared_tools = [upload_file, list_files, download_file]

# Agents are defined in reverse pipeline order so that each handoff target
# already exists when the agent that hands off to it is created.
writer = Agent(
    name="Writer",
    instructions="""Read 'analysis.md' from the workspace. Write a polished report and save as 'final-report.md'.""",
    tools=shared_tools,
)

analyst = Agent(
    name="Analyst",
    instructions="""Read 'research-notes.md' from the workspace. Analyze the data and save 'analysis.md'. Hand off to the Writer when analysis is complete.""",
    tools=shared_tools,
    handoffs=[writer],  # handoffs take Agent objects, not name strings
)

researcher = Agent(
    name="Researcher",
    instructions="""Gather information on the assigned topic. Save raw findings as 'research-notes.md' in the workspace. When done, hand off to the Analyst.""",
    tools=shared_tools,
    handoffs=[analyst],
)

Each agent reads the previous agent's output from the shared workspace. The files persist regardless of which agent is active. This pattern works because:

- Files outlive sessions: Even if the pipeline crashes mid-run, completed work is preserved

- Agents can retry: If the analyst produces a poor analysis, you can re-run just that step without losing the researcher's work

- Humans can intervene: A human can review files between handoffs and provide guidance before the next agent runs

- Everything is auditable: The workspace contains a complete record of the pipeline's output at each stage
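The retry point above can be sketched as a small resume check. This is a minimal illustration, assuming that the presence of a stage's output file means the stage finished; `PIPELINE` and `next_stage` are hypothetical names, and the file names come from the agent instructions.

```python
from typing import Optional

# Stage name paired with the file that stage is expected to save.
PIPELINE = [
    ("Researcher", "research-notes.md"),
    ("Analyst", "analysis.md"),
    ("Writer", "final-report.md"),
]


def next_stage(existing_files: set[str]) -> Optional[str]:
    """Return the first stage whose output file is missing, or None if all are done."""
    for stage, output_file in PIPELINE:
        if output_file not in existing_files:
            return stage
    return None
```

After a crash, you could list the workspace, pass the file names to `next_stage`, and start the run from the agent it returns. A partially written file would fool this check, so a stricter version might also validate file contents.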

Using the MCP Server Instead of Custom Tools

If your setup supports MCP (Model Context Protocol), you can skip writing custom HTTP tools. Fastio's MCP server exposes 251 file operations as native tools that MCP-compatible agents can call directly. For agents built with the OpenAI Agents SDK, you can integrate MCP tools alongside your custom function tools. The SDK supports MCP servers as a tool source:

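from agents import Agent
from agents.mcp import MCPServerStreamableHttp

# Connect to Fastio's MCP server
fastio_mcp = MCPServerStreamableHttp(
    name="fastio",
    # Placeholder: replace with the full URL of the MCP endpoint
    params={"url": "/storage-for-agents/"},
)

agent = Agent(
    name="File Manager",
    instructions="Manage files using the Fastio storage tools.",
    mcp_servers=[fastio_mcp],
)

# The server must be connected (for example with `async with fastio_mcp:`)
# before the agent is run.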

With MCP, the agent gets access to all file operations without you writing wrapper functions: uploads, downloads, folder management, sharing, permissions, search, and AI-powered document queries.

When to Use MCP vs Custom Tools

Use MCP when:

- Your agent framework supports MCP natively

- You want the full 251-tool set without writing boilerplate

- You need operations beyond basic CRUD (search, sharing, RAG queries)

Use custom function tools when:

- You need fine-grained control over which operations are exposed

- You want to add business logic around storage calls (validation, logging)

- Your agent only needs 3-4 specific file operations

- You're working with a framework that doesn't support MCP
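As an example of the business logic a custom tool can carry, the sketch below validates a filename before any storage call is made. The policy itself (allowed extensions, name length, character set) is an assumption chosen for illustration, and `validate_filename` is a hypothetical helper, not part of the SDK or of Fastio.

```python
import re

# Assumed policy for illustration: short names, safe characters, text-like extensions only.
ALLOWED_EXTENSIONS = {".md", ".txt", ".json", ".csv"}
SAFE_NAME = re.compile(r"[A-Za-z0-9][A-Za-z0-9._-]{0,99}")


def validate_filename(filename: str) -> str:
    """Return the filename unchanged if it passes the policy, else raise ValueError."""
    if not SAFE_NAME.fullmatch(filename):
        raise ValueError(f"Unsafe or overly long filename: {filename!r}")
    dot = filename.rfind(".")
    extension = filename[dot:].lower() if dot != -1 else ""
    if extension not in ALLOWED_EXTENSIONS:
        raise ValueError(f"Extension not allowed: {extension or '(none)'}")
    return filename
```

Calling `validate_filename(filename)` as the first line of a custom upload tool would reject names such as `../secrets.md` before they reach the storage API. An MCP server exposes its tools as-is, so this kind of check is harder to place there.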

Setting Up Your Agent's Storage Account

Before your agent can store files, it needs a Fastio account. Agents sign up the same way humans do, but programmatically.

Create an Agent Account

import httpx

# Register the agent
signup = httpx.post("https://api.fast.io/v1/auth/signup", json={
    "first_name": "Research",
    "last_name": "Agent",
    "email": "research-agent@yourcompany.com",
    "password": "secure-password-here",
    "agent": True,
})

# The response includes an auth token
token = signup.json()["token"]

The "agent": True flag assigns the free agent tier automatically: 50GB storage, 5,000 monthly credits, no credit card required. Credits cover storage (100 credits per GB), bandwidth (212 credits per GB), and AI token usage (1 credit per 100 tokens).
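To get a feel for how far the monthly allowance goes, here is a rough estimate using the rates quoted above. It assumes the rates apply linearly and are charged once per month, which the text does not spell out; `estimate_credits` is a hypothetical helper.

```python
# Rates and allowance as quoted above; linear, per-month billing is an assumption.
MONTHLY_CREDITS = 5_000
STORAGE_CREDITS_PER_GB = 100
BANDWIDTH_CREDITS_PER_GB = 212
TOKENS_PER_CREDIT = 100


def estimate_credits(storage_gb: float, bandwidth_gb: float, ai_tokens: int) -> float:
    """Estimate credits consumed in one month under the assumed linear rates."""
    return (
        storage_gb * STORAGE_CREDITS_PER_GB
        + bandwidth_gb * BANDWIDTH_CREDITS_PER_GB
        + ai_tokens / TOKENS_PER_CREDIT
    )


# 10 GB stored, 5 GB transferred, 200,000 AI tokens
usage = estimate_credits(storage_gb=10, bandwidth_gb=5, ai_tokens=200_000)
remaining = MONTHLY_CREDITS - usage
```

Under these assumptions the example workload uses 1,000 + 1,060 + 2,000 = 4,060 credits, leaving 940 of the 5,000 monthly credits.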

Create a Workspace

# Create an org first
org = httpx.post(
    "https://api.fast.io/v1/org",
    headers={"Authorization": f"Bearer {token}"},
    json={"name": "Research Agent Org", "domain": "research-agent"},
)
org_id = org.json()["id"]

# Then create a workspace
workspace = httpx.post(
    f"https://api.fast.io/v1/org/{org_id}/workspace",
    headers={"Authorization": f"Bearer {token}"},
    json={
        "name": "Research Output",
        "folder_name": "research-output",
    },
)
workspace_id = workspace.json()["id"]

Your agent now has persistent cloud storage. Files uploaded to this workspace survive between sessions, agent restarts, and server reboots.

Enable Intelligence Mode (Optional)

If you want your agent to query stored files using natural language, turn on Intelligence Mode:

httpx.patch(
    f"https://api.fast.io/v1/workspace/{workspace_id}",
    headers={"Authorization": f"Bearer {token}"},
    json={"intelligence": "true"},
)

With Intelligence Mode enabled, Fastio auto-indexes uploaded files. Your agent can then ask questions across its stored documents and get answers with source citations, no separate vector database needed.

Sharing Agent Output with Humans

Storage is only half the problem. Agents produce work for people, and those people need access to the results. Fastio's sharing system lets agents create download pages for delivering files to humans. Your agent can build a share, upload deliverables, and send the link to a client or teammate.

@function_tool
def create_deliverable(share_name: str, description: str) -> str:
    """Create a branded share link for delivering files to a client.

    Args:
        share_name: Display name for the share
        description: Brief description of the deliverables
    """
    share = httpx.post(
        f"{BASE_URL}/share",
        headers={"Authorization": f"Bearer {FASTIO_TOKEN}"},
        json={
            "workspace_id": WORKSPACE_ID,
            "name": share_name,
            "description": description,
            "type": "send",
            "access_options": "Anyone with the link",
        },
    )
    share_url = share.json().get("url", "")
    return f"Share created: {share_url}"

This pattern is especially useful for:

- Client deliverables: Agent researches, writes, and packages a report, then creates a shareable link

- Team reviews: Agent stores drafts in a workspace and invites specific team members for review

- Ownership transfer: Agent builds an entire workspace with organized folders and files, then transfers ownership to a human. The agent keeps admin access for future updates.

The agent doesn't just produce files. It packages and delivers them in a format humans can actually use.

Frequently Asked Questions

How do I store files with OpenAI Agents SDK?

Create a custom function tool using the @function_tool decorator that wraps file storage API calls. Define upload, download, and list functions, then attach them to your agent's tools list. The agent can then call these tools during execution to save and retrieve files. For MCP-compatible setups, you can connect directly to an MCP file storage server without writing custom code.

Does OpenAI Agents SDK have built-in file storage?

No. The OpenAI Agents SDK provides FileSearchTool for querying vector stores and CodeInterpreterTool for sandboxed code execution, but neither is designed for persistent file storage. The SDK's session system (SQLiteSession) persists conversation state, not files. You need to add external file storage through custom function tools or MCP server connections.

What replaced OpenAI Swarm?

The OpenAI Agents SDK replaced Swarm. It keeps Swarm's lightweight primitives (agents, tools, handoffs) and adds production features like guardrails for input/output validation, built-in tracing for debugging, multi-model support, and session persistence. Swarm was experimental and not recommended for production use.

How do OpenAI agents access files across sessions?

Agents access files across sessions by storing them in external cloud storage and reading them back at the start of each new session. During session 1, the agent saves output files to a named workspace. During session 2, the agent lists workspace contents and downloads relevant files to restore context. The files persist in cloud storage regardless of whether the agent is running.

Can I use Fastio's MCP server with the OpenAI Agents SDK?

Yes. The OpenAI Agents SDK supports MCP servers as tool sources. Add Fastio's MCP server (mcp.fast.io) to your agent configuration and it gets access to 251 file operation tools, including uploads, downloads, folder management, sharing, and AI-powered document search. This approach requires less custom code than building individual function tools.
