How to Extract Metadata from Scanned Documents Using OCR

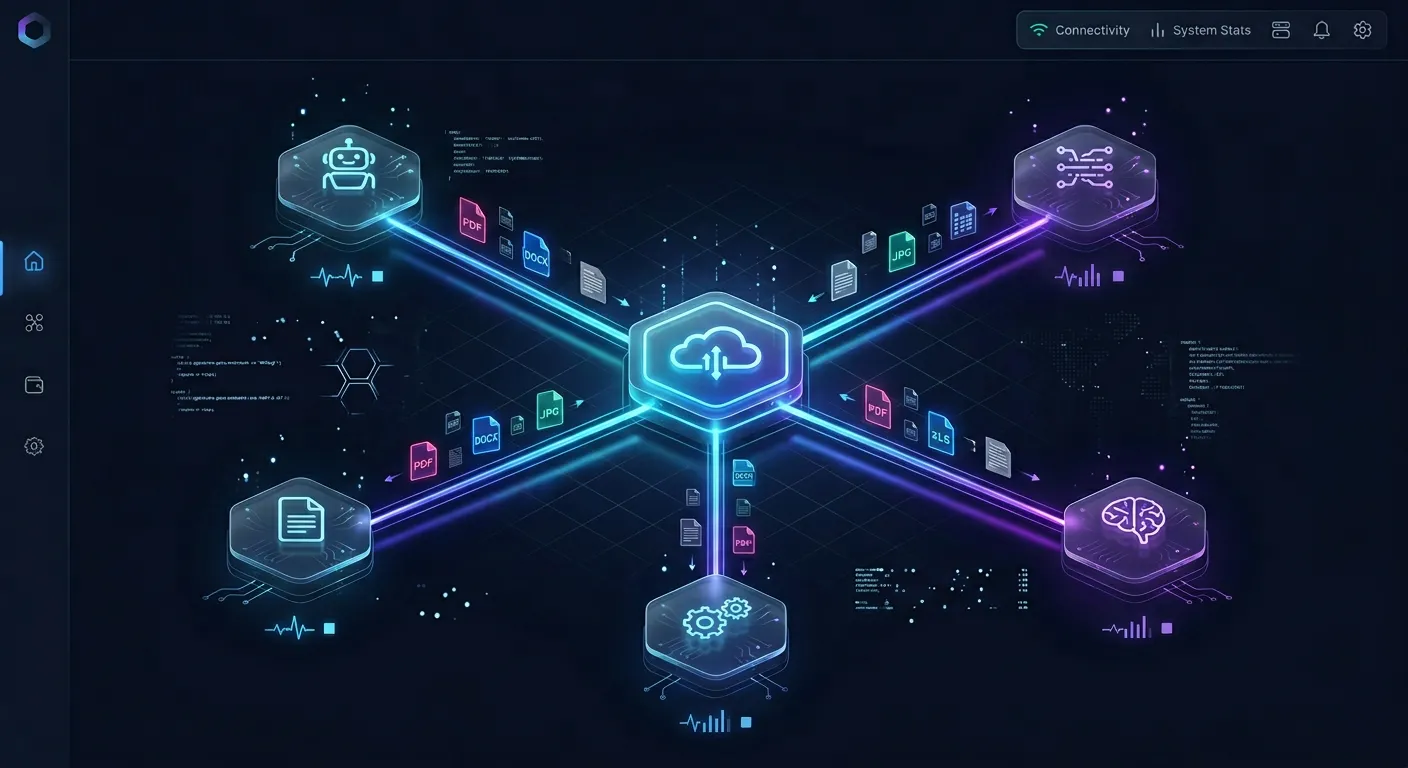

OCR metadata extraction converts scanned document images into structured, searchable data. This guide walks through the complete pipeline, from digitization to indexed output, with tool recommendations for each stage and tips for improving accuracy on real-world documents.

What Is OCR Metadata Extraction?

OCR metadata extraction is the process of using optical character recognition to convert scanned document images into machine-readable text, then extracting and tagging structured metadata from that text for search, compliance, or automation.

Most enterprise content still starts life on paper. Commonly cited industry estimates put roughly 80% of business information in paper or scanned form. That means invoices, contracts, insurance policies, and medical records are sitting in filing cabinets or stored as flat image PDFs that search engines and databases cannot read.

Standard OCR handles the first half of the problem: turning an image of text into actual text characters. But raw OCR output is just a wall of unstructured words. Metadata extraction is the second step, where you identify and tag specific fields within that text. An invoice becomes a set of structured fields: vendor name, invoice number, line items, total amount, due date. A contract becomes counterparties, effective dates, and renewal terms.

The challenge is that most guides treat OCR and metadata extraction as separate problems. You'll find tutorials on running Tesseract and separate tutorials on parsing PDFs for metadata. Few combine both into a single pipeline walkthrough. This guide covers the full workflow from scan to structured output, with tool recommendations for each stage.

The Five-Step OCR Metadata Extraction Pipeline

The full workflow breaks into five stages. Each one feeds the next, and skipping any of them leaves gaps in your output.

1. Scan and Digitize

If you're starting with physical documents, scan at 300 DPI or higher. Lower resolutions hurt OCR accuracy, especially on small text or dense layouts. Use a flatbed scanner for important documents and a sheet-fed scanner for bulk processing. Save scans as TIFF or PNG rather than JPEG, since JPEG compression introduces artifacts that confuse OCR engines.

For documents already stored as digital images or image-only PDFs, this step is just organizing your input files into a consistent format and resolution.

2. Run OCR

Feed your scanned images through an OCR engine. Tesseract is the most widely used open-source option and supports over 100 languages. On clean, well-scanned printed text, modern OCR engines achieve 98-99% character accuracy. Handwriting and degraded scans drop that number considerably.

Pre-process images before OCR for better results: deskew rotated pages, apply binarization to convert to black and white, and remove noise or speckles. Tools like ImageMagick or OpenCV handle these preprocessing steps in automated pipelines.
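As a minimal sketch of the OCR step, the snippet assembles a Tesseract command line that sets the language and a DPI hint and requests both plain-text and per-word TSV output. The file paths and the helper name `build_tesseract_command` are hypothetical, and the sketch assumes Tesseract 4 or later is installed and on the PATH if you actually run the command.

```python
import shlex


def build_tesseract_command(image_path, output_base, languages=("eng",), dpi=300):
    """Return the argv list for a Tesseract run (hypothetical helper, not a library API)."""
    return [
        "tesseract",
        str(image_path),
        str(output_base),            # Tesseract appends the .txt / .tsv extensions itself
        "-l", "+".join(languages),   # e.g. "eng+deu" for pages that mix languages
        "--dpi", str(dpi),           # resolution hint for images without DPI metadata
        "txt", "tsv",                # output configs: plain text plus per-word TSV
    ]


# Hypothetical input and output paths, for illustration only.
cmd = build_tesseract_command("scans/invoice-001.png", "out/invoice-001")
print(shlex.join(cmd))

# To run it for real (requires Tesseract on PATH):
#   import subprocess
#   subprocess.run(cmd, check=True)
```

Building the argument list separately from running it keeps the command easy to log and to unit-test before any images are processed.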

3. Extract Embedded Document Metadata

Scanned PDFs and images carry their own metadata beyond the visible text. A PDF has creation dates, author fields, and producer software. A scanned TIFF might include scanner model, resolution, and color space information. This embedded metadata is separate from the OCR text but often valuable for organizing and auditing your document collection.

Tools like ExifTool, PDFPlumber, and Apache Tika can pull this metadata without any OCR at all. Capture it alongside the OCR output for a complete picture of each document.
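One way to capture embedded metadata is to run ExifTool with its `-json` option and keep only the tags you care about. The sketch parses a hard-coded sample shaped like that JSON output; the sample values, the tag selection, and the helper name `summarize_embedded_metadata` are assumptions for illustration, and real files will expose different tags.

```python
import json

# Illustrative sample shaped like `exiftool -json scan.tif` output; values are made up.
SAMPLE_EXIFTOOL_JSON = """
[{
  "SourceFile": "scan.tif",
  "FileType": "TIFF",
  "Make": "ExampleScannerCo",
  "Model": "FlatBed 9000",
  "XResolution": 300,
  "YResolution": 300,
  "Software": "ExampleScan 2.1"
}]
"""

# Tags to keep for auditing; adjust to whatever your scanner software writes.
WANTED_TAGS = ("FileType", "Make", "Model", "XResolution", "YResolution",
               "Software", "CreateDate")


def summarize_embedded_metadata(raw_json):
    """Map each source file to the wanted tags it actually contains."""
    summary = {}
    for entry in json.loads(raw_json):
        summary[entry["SourceFile"]] = {
            tag: entry[tag] for tag in WANTED_TAGS if tag in entry
        }
    return summary


print(summarize_embedded_metadata(SAMPLE_EXIFTOOL_JSON))
```

Tags that are absent are skipped rather than filled with placeholders, so a missing creation date stays visibly missing in the stored record.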

4. Combine OCR Text with Document Metadata

This is where extraction becomes useful. Take the raw OCR text and the embedded metadata, then apply rules or AI models to identify specific fields. For an invoice, you might use regex patterns to find dollar amounts, dates, and PO numbers. For a contract, you might use named entity recognition to find party names and addresses.

The approach depends on your document types. Template-based extraction works when documents follow a consistent layout, like invoices from one vendor. Machine learning models handle variation better when documents come from many sources with different formats.
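For the rule-based case, a small sketch of regex field extraction might look like the following. The sample OCR text, the field names, and the label wording the patterns expect ("Invoice No", "Total Due", and so on) are all assumptions; a real template needs patterns tuned to the vendor's actual layout.

```python
import re

# Made-up OCR output for a single invoice page.
SAMPLE_OCR_TEXT = """ACME Supplies Ltd
Invoice No: INV-20417
Invoice Date: 2024-03-15
PO Number: PO-88213
Total Due: $1,284.50
"""

# One pattern per field; each captures the value that follows a known label.
FIELD_PATTERNS = {
    "invoice_number": re.compile(r"Invoice\s+No[.:]?\s*([A-Z0-9-]+)", re.IGNORECASE),
    "invoice_date": re.compile(r"Invoice\s+Date[.:]?\s*(\d{4}-\d{2}-\d{2})", re.IGNORECASE),
    "po_number": re.compile(r"PO\s+Number[.:]?\s*([A-Z0-9-]+)", re.IGNORECASE),
    "total": re.compile(r"Total\s+Due[.:]?\s*\$?\s*([\d,]+\.\d{2})", re.IGNORECASE),
}


def extract_fields(text):
    """Return a dict of field name to captured string, or None when no match is found."""
    fields = {}
    for name, pattern in FIELD_PATTERNS.items():
        match = pattern.search(text)
        fields[name] = match.group(1) if match else None
    return fields


print(extract_fields(SAMPLE_OCR_TEXT))
```

Returning None for unmatched fields, instead of raising, lets a later validation step decide which gaps are acceptable.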

5. Index and Store

Structure the extracted data and send it somewhere useful. Common destinations include databases, search indexes, document management systems, or spreadsheets. At this stage, a scanned piece of paper has become a searchable, queryable record with tagged fields that downstream systems can consume.

Store the original scan, the OCR text layer, and the extracted metadata together. You'll want the originals for verification and the structured data for automation.
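As one possible way to keep the scan reference, OCR text, and extracted fields together, the sketch stores them in a single SQLite table using Python's standard library. The table layout, column names, and helper functions are hypothetical; a production system would more likely target a search index or document management system.

```python
import json
import sqlite3


def open_store(path=":memory:"):
    """Open (or create) the document store; defaults to an in-memory database."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS documents (
               id INTEGER PRIMARY KEY,
               scan_path TEXT NOT NULL,    -- location of the original scan
               ocr_text TEXT NOT NULL,     -- full OCR text layer
               fields_json TEXT NOT NULL   -- extracted fields, serialized as JSON
           )"""
    )
    return conn


def save_document(conn, scan_path, ocr_text, fields):
    """Insert one document record and return its row id."""
    cur = conn.execute(
        "INSERT INTO documents (scan_path, ocr_text, fields_json) VALUES (?, ?, ?)",
        (scan_path, ocr_text, json.dumps(fields, sort_keys=True)),
    )
    conn.commit()
    return cur.lastrowid


def find_by_field(conn, name, value):
    """Return scan paths whose extracted field `name` equals `value`."""
    rows = conn.execute("SELECT scan_path, fields_json FROM documents").fetchall()
    return [path for path, raw in rows if json.loads(raw).get(name) == value]


conn = open_store()
save_document(conn, "scans/invoice-001.png", "Invoice No: INV-20417",
              {"invoice_number": "INV-20417"})
print(find_by_field(conn, "invoice_number", "INV-20417"))
```

Keeping the scan path next to the structured fields means any extracted value can be traced back to the original image for verification.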

Tools for Each Pipeline Stage

Different tools handle different parts of the pipeline. Here's what works at each stage.

OCR Engines

Tesseract remains the standard open-source OCR engine. It handles printed text in over 100 languages and works well on clean scans. OCRmyPDF wraps Tesseract and adds PDF-specific features: creating searchable PDF layers, deskewing pages, and cleaning up compression artifacts. PaddleOCR, an open-source alternative from Baidu, performs better on complex layouts and tables where Tesseract tends to struggle.

For commercial needs, ABBYY FineReader and Google Document AI offer higher accuracy on difficult documents and built-in layout analysis. Microsoft Azure AI Document Intelligence handles form recognition with pre-built models for invoices, receipts, and ID documents.

Metadata Extraction Tools

ExifTool reads embedded metadata from virtually any file format. PDFPlumber extracts text, tables, and metadata from PDFs with fine-grained control over text positioning. Apache Tika detects file types and extracts both text and metadata from over a thousand formats, making it a strong choice for mixed document collections.

Docling, an open-source document conversion library from IBM, handles format detection, OCR, layout analysis, and structured output in a single pipeline. It's designed specifically for feeding documents into AI systems and downstream processing.

End-to-End Platforms

Unstract connects OCR, extraction, and output formatting into a single no-code pipeline for scanned PDFs. Nutrient (formerly PSPDFKit) offers SDK-level OCR and extraction for developers building document processing into their own applications.

For teams that want to skip building a pipeline entirely, Fast.io's Metadata Views take a different approach. Instead of configuring OCR engines and writing extraction rules, you describe what fields you want in plain English. The AI handles text recognition, layout analysis, and field extraction automatically across PDFs, images, scanned pages, and handwritten notes.

Improving OCR Accuracy on Scanned Documents

OCR accuracy depends heavily on input quality. A few adjustments at the scanning and preprocessing stage can save hours of manual correction downstream.

Resolution matters more than you'd expect. 300 DPI is the minimum for reliable OCR. 600 DPI gives better results on small text, fine print, and documents with mixed fonts. Going higher than 600 DPI rarely helps and just slows processing.

Binarization cleans up noisy scans. Converting a grayscale or color scan to pure black and white removes background noise, bleed-through from the reverse side of a page, and uneven lighting. Adaptive thresholding through OpenCV or ImageMagick works better than a fixed threshold on documents with uneven backgrounds.
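To illustrate why a local threshold copes with uneven backgrounds, here is a dependency-free sketch of mean-based adaptive thresholding on a tiny grayscale grid, compared with a fixed cutoff. It is a teaching version under simple assumptions (windows are clipped at the image border, pixel values are 0 to 255); in a real pipeline you would more likely call OpenCV's `cv2.adaptiveThreshold`, which treats borders differently and is far faster.

```python
def fixed_threshold(gray, cutoff=128):
    """Binarize with one global cutoff: brighter than cutoff becomes white (255)."""
    return [[255 if value > cutoff else 0 for value in row] for row in gray]


def adaptive_threshold(gray, block_size=3, c=15):
    """Binarize each pixel against the mean of its neighborhood minus a constant c."""
    height, width = len(gray), len(gray[0])
    radius = block_size // 2
    out = []
    for y in range(height):
        row = []
        for x in range(width):
            # Gather the neighborhood, clipped at the image borders.
            window = [
                gray[ny][nx]
                for ny in range(max(0, y - radius), min(height, y + radius + 1))
                for nx in range(max(0, x - radius), min(width, x + radius + 1))
            ]
            local_threshold = sum(window) / len(window) - c
            row.append(255 if gray[y][x] > local_threshold else 0)
        out.append(row)
    return out


# Made-up page strip: background fades from 220 to 80, with two dark ink pixels (60 and 20).
ROW = [220, 200, 60, 160, 140, 20, 100, 80]
SAMPLE_PAGE = [ROW[:] for _ in range(3)]

print("fixed:   ", fixed_threshold(SAMPLE_PAGE)[0])
print("adaptive:", adaptive_threshold(SAMPLE_PAGE)[0])
```

On this sample the fixed cutoff turns the darker end of the background black along with the ink, while the local threshold keeps the background white and only the two ink pixels black.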

Deskewing fixes rotated pages. Even a small rotation of two or three degrees degrades OCR accuracy. OCR wrappers such as OCRmyPDF can straighten pages as part of the OCR run; OCRmyPDF does this when you pass its --deskew option. For batch processing, run deskew as a separate preprocessing step before feeding images to the OCR engine.

Language-specific models improve results. Tesseract offers trained data for over 100 languages, but you need to install the language pack for each language you use and specify it at runtime with the -l option. For documents that mix languages on the same page, some engines support automatic language detection. PaddleOCR handles multilingual documents particularly well.

Confidence scoring catches errors early. Most OCR engines assign a confidence score to each recognized character or word. Set a threshold and flag low-confidence sections for human review rather than trusting uncertain output. This hybrid approach gives the best balance of speed and accuracy for production workflows.
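A sketch of confidence-based flagging, assuming the per-word TSV that Tesseract writes when run with its `tsv` output config: the code reads the `conf` and `text` columns and returns words under a threshold. The sample rows, the threshold of 60, and the helper name `low_confidence_words` are illustrative assumptions; pick a threshold by checking it against your own documents.

```python
import csv
import io

# Made-up rows in Tesseract's TSV column layout; conf is -1 on non-word rows.
SAMPLE_TSV = (
    "level\tpage_num\tblock_num\tpar_num\tline_num\tword_num\t"
    "left\ttop\twidth\theight\tconf\ttext\n"
    "4\t1\t1\t1\t1\t0\t36\t92\t500\t30\t-1\t\n"
    "5\t1\t1\t1\t1\t1\t36\t92\t80\t30\t96.1\tInvoice\n"
    "5\t1\t1\t1\t1\t2\t130\t92\t60\t30\t91.4\tTotal:\n"
    "5\t1\t1\t1\t1\t3\t200\t92\t110\t30\t42.7\t$1,2B4.50\n"
)


def low_confidence_words(tsv_text, threshold=60.0):
    """Return (word, confidence) pairs whose confidence is below the threshold."""
    reader = csv.DictReader(io.StringIO(tsv_text), delimiter="\t",
                            quoting=csv.QUOTE_NONE)  # keep quote characters in OCR text
    flagged = []
    for row in reader:
        conf = float(row["conf"])
        text = (row["text"] or "").strip()
        if conf < 0 or not text:
            continue  # layout rows (block, paragraph, line) carry no word
        if conf < threshold:
            flagged.append((text, conf))
    return flagged


for word, conf in low_confidence_words(SAMPLE_TSV):
    print(f"review needed: {word!r} (confidence {conf})")
```

Flagged words can be routed to a review queue together with their bounding boxes (the `left`, `top`, `width`, and `height` columns) so a reviewer sees exactly where to look on the page.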

Validate extracted fields before storing them. After pulling structured data from OCR text, check that dates parse correctly, currency amounts have the right format, and required fields are present. Simple validation rules catch extraction errors that would otherwise flow into your database or trigger downstream failures.
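The validation checks described above can be sketched with the standard library alone. The field names, the required-field list, and the ISO date format are assumptions for an invoice-style record; adapt them to your own schema.

```python
from datetime import datetime
from decimal import Decimal, InvalidOperation

# Hypothetical set of fields that every invoice record must contain.
REQUIRED_FIELDS = ("invoice_number", "invoice_date", "total")


def validate_invoice(fields):
    """Return a list of human-readable problems; an empty list means the record passed."""
    errors = []

    for name in REQUIRED_FIELDS:
        if not fields.get(name):
            errors.append(f"missing required field: {name}")

    date_text = fields.get("invoice_date")
    if date_text:
        try:
            datetime.strptime(date_text, "%Y-%m-%d")  # assumes ISO-style dates
        except ValueError:
            errors.append(f"unparseable date: {date_text!r}")

    total_text = fields.get("total")
    if total_text:
        try:
            amount = Decimal(total_text.replace(",", ""))  # drop thousands separators
            if amount < 0:
                errors.append(f"negative amount: {total_text!r}")
        except InvalidOperation:
            errors.append(f"unparseable amount: {total_text!r}")

    return errors


good = {"invoice_number": "INV-20417", "invoice_date": "2024-03-15", "total": "1,284.50"}
bad = {"invoice_number": "", "invoice_date": "2024-13-40", "total": "1,2B4.50"}
print(validate_invoice(good))
print(validate_invoice(bad))
```

Collecting every problem, rather than stopping at the first, gives a reviewer the full picture for a record in one pass.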

Turn Scanned Documents into Structured Data

Fast.io Metadata Views extract fields from scanned pages, PDFs, and handwritten notes. Describe what you need in plain English. 50 GB free, no credit card required.

When to Skip the Pipeline

Building a custom OCR pipeline makes sense when you need full control over every stage, your documents are highly specialized, or you're processing millions of pages and need a predictable per-page cost.

But for many teams, the pipeline itself is the bottleneck. Configuring OCR engines, writing extraction rules, maintaining templates for each document type, and handling edge cases can take more engineering time than the extraction work itself.

Fast.io's Metadata Views offer a fundamentally different approach. Upload your scanned documents to a workspace, then describe the fields you want extracted in natural language. Instead of writing a rule like "find the text at coordinates (x, y) and parse it as a date," you write "extract the invoice date." The AI designs a typed schema with field types like Text, Integer, Decimal, Boolean, and Date, then populates a sortable, filterable spreadsheet from your documents.

This works across file types without separate OCR configuration for each format. PDFs, images, Word documents, spreadsheets, scanned pages, and handwritten notes all go through the same extraction process. Need an additional field later? Add a new column without reprocessing existing files.

For teams building agent-driven workflows, the Fast.io MCP server exposes Metadata Views programmatically. An agent can create schemas, trigger extraction, and query results through the same API used for file storage and collaboration. A document processing pipeline can run end to end without human intervention, with ownership transfer available when it's time to hand results to a reviewer.

The free plan includes 50 GB of storage and 5,000 credits per month with no credit card required. That's enough to test extraction workflows on real documents before committing to a platform.

Choosing the Right Approach

The right extraction strategy depends on your document volume, variety, and how much engineering time you can invest.

Build a custom pipeline when you process millions of documents per month, need precise control over preprocessing and OCR engine selection, or work with highly specialized document types that general AI models haven't encountered. Open-source tools like Tesseract, OCRmyPDF, and PDFPlumber give you that control at no licensing cost, though you'll spend time on integration and maintenance.

Use an AI-powered platform when your documents vary in format and layout, when you'd rather describe what you need than write extraction rules, or when you want structured output without maintaining OCR infrastructure. Fast.io Metadata Views, Google Document AI, and similar services trade per-document processing costs for saved engineering time.

A hybrid approach works too. Run a custom Tesseract pipeline for your high-volume, standardized documents like monthly invoices from known vendors. Use an AI extraction platform for the long tail of document types that don't justify a custom template. Most teams start with a platform to validate their extraction requirements, then build custom pipelines only for the document types and volumes that justify the engineering investment.
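The hybrid routing idea can be expressed as a small decision function. Everything here is hypothetical: the vendor identifiers, the volume cutoff, and the route names are placeholders standing in for whatever criteria your team settles on.

```python
# Hypothetical vendors for which a custom extraction template already exists.
KNOWN_TEMPLATES = {"acme-supplies", "northwind-traders"}


def choose_route(vendor_id, monthly_volume, min_volume_for_custom=1000):
    """Send high-volume documents with a known template to the custom pipeline."""
    if vendor_id in KNOWN_TEMPLATES and monthly_volume >= min_volume_for_custom:
        return "custom_pipeline"
    # Everything else (the long tail) goes to the AI extraction platform.
    return "ai_platform"


print(choose_route("acme-supplies", 12000))
print(choose_route("one-off-contractor", 3))
```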

Frequently Asked Questions

How do you extract metadata from a scanned document?

Scan or digitize the document at 300 DPI or higher, run it through an OCR engine like Tesseract to convert the image to text, extract any embedded file metadata using a tool like ExifTool, then apply rules or AI models to identify and tag specific fields such as dates, names, and amounts from the OCR text. Store the original scan alongside the structured output for verification.

What is OCR data extraction?

OCR data extraction combines optical character recognition with structured field identification. OCR converts document images into machine-readable text, and the extraction layer then identifies specific data points within that text, such as invoice totals, contract dates, or policy numbers. The result is structured data that can feed into databases, spreadsheets, or automated workflows.

Can you get EXIF data from a scanned photo?

A scanned photo can carry metadata about the scanning process itself, such as scanner model, resolution, date of scan, and color space, depending on what the scanner software writes to the file. However, the original camera EXIF data from when the photo was taken is not preserved in a scan. If you need the original shooting metadata like camera settings and GPS coordinates, you'll need the original digital file rather than a scanned copy.

What tools extract text and metadata from scanned PDFs?

OCRmyPDF and Tesseract handle text extraction through OCR. PDFPlumber and Apache Tika extract both text and embedded PDF metadata. ExifTool reads file-level metadata. For end-to-end extraction without building a pipeline, platforms like Fast.io Metadata Views, Google Document AI, and ABBYY FineReader combine OCR and structured field extraction in a single step.

What accuracy can you expect from OCR on scanned documents?

On clean, well-scanned printed text at 300 DPI or higher, modern OCR engines achieve 98-99% character accuracy. Accuracy drops on handwritten text, degraded or low-resolution scans, complex page layouts, and documents with mixed fonts or languages. Preprocessing steps like deskewing, binarization, and noise removal can recover several percentage points of accuracy on difficult scans.
