Building Multi-Model AI Video Routing and Storage Architecture

Multi-model AI video production needs a new way to move and store data. Traditional cloud buckets often create bottlenecks that can account for a significant share of total production time. This guide explores the architecture needed to route video assets between specialized AI models while maintaining high-fidelity results and low latency. By implementing AI-native storage and metadata-driven routing, creative teams can substantially reduce rendering costs and keep visual consistency across the project.

What is Multi-Model AI Video Routing?

Multi-model video routing is the automated process of moving video assets through a sequence of specialized AI models to produce a final result. Unlike monolithic systems that attempt to generate a video from a single prompt, a multi-model architecture treats video production as a series of discrete, high-quality steps. This approach allows teams to use the best model for each task, such as one model for character consistency, another for fluid motion, and a third for cinematic lighting.

The Shift from Monolithic to Modular Architecture

In the early days of generative video, the goal was a single model that could do everything. You would provide a prompt, and the model would attempt to handle the script, the character design, the background, and the physics all at once. While this was impressive, it often led to results that lacked control. Characters would change appearance between shots, and background objects would randomly morph into other items. The industry has since shifted to a modular approach where specific agents handle distinct parts of the creative process.

In a modular system, the routing layer is the connective tissue. It ensures that the output of a character design model is available as a reference for the animation model. Without this routing, each model works in a vacuum, leading to a loss of creative coherence. By routing files through a sequence of specialized tools, you can lock in the elements that work and iterate only on the ones that do not. For example, if you love the character's face but hate the way they walk, you only need to re-run the motion model rather than generating the entire scene from scratch.

Understanding the Director Layer

At the heart of any routing architecture is the Director Layer. This is not a human, but a high-reasoning AI agent that oversees the entire pipeline. The Director Layer analyzes the creative brief and determines which models are needed for each specific shot. It manages the dependencies between tasks, ensuring that the background is rendered before the foreground characters are placed into the scene. This level of orchestration is what separates a professional production environment from a casual hobbyist tool.

The Director Layer also handles quality control. After each model finishes its task, the director analyzes the output. If it detects a visual artifact or a breach of character consistency, it can automatically route that segment back for a second pass or send it to a different model for correction. This automated feedback loop is important for maintaining high standards without requiring a human to manually inspect every single frame of a long-form video project.
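
A minimal sketch of that feedback loop follows. It assumes each model is a plain Python callable and that the director's quality check returns a score between 0 and 1. The names `run_with_quality_gate` and `QUALITY_THRESHOLD` are hypothetical, as are the toy models. A real director would call model APIs and a vision-based evaluator instead.

```python
from typing import Callable

# Hypothetical threshold: outputs scoring below this are routed for another pass.
QUALITY_THRESHOLD = 0.8

Model = Callable[[str], str]
Scorer = Callable[[str], float]


def run_with_quality_gate(
    segment: str,
    primary: Model,
    fallback: Model,
    score: Scorer,
    max_passes: int = 2,
) -> tuple[str, str]:
    """Retry a segment on the primary model, then route it to a fallback model.

    Returns (output, route_that_passed). Raises if no route meets the threshold.
    """
    for attempt in range(max_passes):
        output = primary(segment)
        if score(output) >= QUALITY_THRESHOLD:
            return output, f"primary (pass {attempt + 1})"
    # The primary model could not meet the bar: send the segment to a different model.
    output = fallback(segment)
    if score(output) >= QUALITY_THRESHOLD:
        return output, "fallback"
    raise RuntimeError(f"segment {segment!r} failed quality control on every route")


# Toy stand-ins: the motion model always leaves an artifact, the correction model does not.
def flaky_motion_model(seg: str) -> str:
    return seg + ":artifact"


def correction_model(seg: str) -> str:
    return seg + ":clean"


def score(out: str) -> float:
    return 0.3 if out.endswith(":artifact") else 0.95


print(run_with_quality_gate("shot_07", flaky_motion_model, correction_model, score))
# ('shot_07:clean', 'fallback')
```

Capping the number of passes keeps a persistently failing segment from looping forever. The final `RuntimeError` is the point where a human reviewer would be pulled in.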


The 5 Pillars of a Professional AI Video Stack

Professional AI video production has moved past the era of all-in-one generators. To achieve results that look like film rather than AI-generated static, creators now use a stack of specialized models. Each model in the stack serves a specific purpose, and the routing architecture must handle the different data requirements of each stage.

Pillar 1: The Reasoning and Scripting Layer

The process begins with a reasoning model that acts as the screenwriter and storyboard artist. Models like Claude 3.5 Sonnet or GPT-4o are typically used here because they can follow complex narrative structures. The router sends the initial text brief to this model, which outputs a structured JSON file containing shot lists, camera angles, and lighting descriptions. This metadata becomes the roadmap that every subsequent model follows.
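
The text does not specify the JSON layout, so the field names below are assumptions: `shot_id`, `camera`, `lighting`, and `duration_s`. The sketch shows how downstream agents might parse and validate the reasoning model's shot list before routing begins. It uses only the standard library.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class Shot:
    shot_id: str
    description: str
    camera: str       # e.g. "wide", "close-up"
    lighting: str     # e.g. "overcast morning"
    duration_s: float


def parse_shot_list(raw: str) -> list[Shot]:
    """Parse the scripting model's JSON output into typed Shot records."""
    data = json.loads(raw)
    shots = [Shot(**item) for item in data["shots"]]
    ids = [s.shot_id for s in shots]
    if len(ids) != len(set(ids)):
        raise ValueError("shot_id values must be unique so later stages can reference them")
    for s in shots:
        if s.duration_s <= 0:
            raise ValueError(f"{s.shot_id}: duration must be positive")
    return shots


example = """
{"shots": [
  {"shot_id": "s01", "description": "Hero enters the market", "camera": "wide",
   "lighting": "overcast morning", "duration_s": 5},
  {"shot_id": "s02", "description": "Close on hero's face", "camera": "close-up",
   "lighting": "overcast morning", "duration_s": 4}
]}
"""
shots = parse_shot_list(example)
print([s.shot_id for s in shots])  # ['s01', 's02']
```

Validating at this first hand-off is deliberate. A malformed or duplicated shot ID is cheaper to reject here than after several models have already consumed it.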

Pillar 2: The Visual Foundation Layer

Once the script is set, the pipeline moves to establishing the visual identity. This often involves image generation models like Midjourney or DALL-E. Instead of generating video immediately, the system generates high-resolution keyframes or character sheets. These assets serve as a visual anchor. By routing these reference images into the motion models, you ensure that the character's clothing, hair, and facial features remain identical throughout the entire production, regardless of how much they move on screen.

Pillar 3: The Motion Generation Layer

This is the stage where the clips are created. Specialized video generators like Runway Gen-3 Alpha, Luma Dream Machine, or Kling take the keyframes and turn them into short clips, typically a few seconds long. The routing strategy here is often parallel: a professional team might send the same prompt and reference image to two different models simultaneously. One model might be better at fluid human movement, while another excels at atmospheric effects like smoke or rain. The system then routes both outputs to the Director Layer for a side-by-side quality comparison.
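
Below is a sketch of the "same prompt, two generators" pattern using the standard library's `concurrent.futures`. The generator lambdas are hypothetical stand-ins for real video-generation API calls. The score lookup likewise stands in for the Director Layer's comparison.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable

Generator = Callable[[str, str], str]  # (prompt, reference_image) -> clip path


def generate_best_clip(
    prompt: str,
    reference_image: str,
    generators: dict[str, Generator],
    score: Callable[[str], float],
) -> tuple[str, str]:
    """Send the same inputs to every generator at once and keep the highest-scoring clip."""
    with ThreadPoolExecutor(max_workers=len(generators)) as pool:
        futures = {
            name: pool.submit(gen, prompt, reference_image)
            for name, gen in generators.items()
        }
        clips = {name: f.result() for name, f in futures.items()}
    # The side-by-side comparison, reduced here to a single numeric score per clip.
    best = max(clips, key=lambda name: score(clips[name]))
    return best, clips[best]


# Toy generators: one strong on human motion, one on atmospherics.
generators = {
    "motion_model": lambda p, ref: f"motion/{ref}.mp4",
    "atmosphere_model": lambda p, ref: f"atmos/{ref}.mp4",
}
scores = {"motion/hero.png.mp4": 0.91, "atmos/hero.png.mp4": 0.84}
print(generate_best_clip("hero walks through rain", "hero.png", generators, scores.get))
# ('motion_model', 'motion/hero.png.mp4')
```

Threads suit this step because the work is waiting on remote APIs, not local computation. Total wall time is roughly that of the slowest generator rather than the sum of both.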

Pillar 4: The Audio and Synthesis Layer

A common mistake in AI video is treating audio as an afterthought. A professional pipeline includes specialized audio models for voice cloning and environmental sound effects. Tools like ElevenLabs generate the dialogue, while other models create foley sounds like footsteps or the rustle of leaves. The challenge for the routing architecture is synchronization. The system must ensure that the audio files are precisely mapped to the visual timestamps, requiring a shared metadata index that both the audio and video agents can access.
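
One way to make the shared index concrete is to store every audio cue in seconds and convert it to frame numbers at the project's frame rate. That way the audio and video agents agree on the same positions. The cue format is an assumption, as is the 24 fps default.

```python
from dataclasses import dataclass
from fractions import Fraction


@dataclass(frozen=True)
class AudioCue:
    clip: str        # e.g. a dialogue file or a foley effect
    start_s: float
    end_s: float


def to_frame(seconds: float, fps: Fraction) -> int:
    """Map a timestamp in seconds to the nearest frame index (ties round to even)."""
    return round(Fraction(seconds) * fps)


def build_sync_index(cues: list[AudioCue], fps: Fraction = Fraction(24)) -> dict[str, tuple[int, int]]:
    """Return {clip: (first_frame, end_frame_exclusive)} for the video agents."""
    return {c.clip: (to_frame(c.start_s, fps), to_frame(c.end_s, fps)) for c in cues}


cues = [AudioCue("line_01.wav", 0.0, 2.5), AudioCue("footsteps.wav", 1.25, 3.0)]
print(build_sync_index(cues))
# {'line_01.wav': (0, 60), 'footsteps.wav': (30, 72)}
```

Using `Fraction` for the frame rate keeps rates like 30000/1001 (29.97 fps) exact. Rounding errors would otherwise accumulate over a long timeline.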

Pillar 5: The Enhancement and Post-Production Layer

Raw AI video is often limited to lower resolutions and may contain minor temporal flickering. The final stage of the routing process sends the footage to enhancement models like Topaz Video AI or specialized upscalers. These models require massive amounts of data as they analyze the relationships between pixels across multiple frames to add detail and stability. This is the most compute-intensive part of the workflow and requires a high-bandwidth storage path to prevent the models from being throttled by slow file access.

Why Traditional Storage Bottlenecks AI Pipelines

Standard cloud storage and traditional network-attached storage (NAS) were designed for sequential playback. They are good at serving a single stream of video to a human viewer, but they struggle under the access patterns of an AI pipeline. In a multi-model workflow, multiple agents often access the same high-resolution video file simultaneously for different tasks. One agent might be transcribing audio while another tracks objects and a third analyzes lighting.

The Problem of I/O Starvation

When multiple agents access the same file at once, traditional storage controllers struggle to keep up. They were built for read-ahead patterns where the next block of data is predictable. AI models, however, perform non-linear reads, jumping back and forth between distant frames and regions of a file to perform complex analysis. This creates a seek storm that collapses the throughput of standard hard drives or hybrid arrays. When the storage cannot deliver data fast enough, the GPU sits idle. This is known as I/O starvation, and it is a primary reason why many AI projects fail to scale.

Metadata Overhead and the 20% Tax

Another hidden bottleneck is the burden of metadata. For every minute of raw video, an AI pipeline generates a large amount of sidecar data, including frame-level tags, feature vectors for search, and JSON transcripts for dialogue. Research suggests that this AI-generated metadata can add a 20% storage overhead relative to the raw video. If this metadata is stored in a separate, slower database, agents cannot quickly find the frames they need to process. The result is a system that spends more time looking for data than actually processing it.
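
A back-of-the-envelope estimate of that overhead is shown below. Only the 20% ratio comes from the text above. The 400 Mbit/s bitrate is an assumed example value.

```python
def storage_estimate_gb(minutes: float, bitrate_mbps: float, metadata_ratio: float = 0.20) -> dict[str, float]:
    """Estimate raw video size plus AI-generated sidecar metadata, in gigabytes (10^9 bytes)."""
    raw_gb = minutes * 60 * bitrate_mbps / 8 / 1000  # Mbit/s * seconds -> Mbit -> MB -> GB
    metadata_gb = raw_gb * metadata_ratio
    return {"raw_gb": raw_gb, "metadata_gb": metadata_gb, "total_gb": raw_gb + metadata_gb}


# Assumed example: 60 minutes of footage at 400 Mbit/s.
estimate = storage_estimate_gb(60, 400)
print(estimate)  # raw about 180 GB, metadata about 36 GB, total about 216 GB
```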

AI-Native Storage Solutions

To solve these issues, the architecture must shift to AI-native storage. This involves all-flash NVMe arrays that offer random-read IOPS in the millions rather than the thousands. More importantly, it requires protocols like RDMA (Remote Direct Memory Access). Combined with GPU-direct technologies such as NVIDIA GPUDirect Storage, RDMA lets the storage system write data directly into the GPU's memory without staging it through the CPU and system memory. This zero-copy approach avoids the latency of the traditional operating system kernel path and makes data movement dramatically faster. In high-stakes production, this can substantially reduce end-to-end latency compared to traditional methods.

Build Scalable AI Video Pipelines on Fastio

Get 50GB of free persistent storage for your AI agents. Connect 19 consolidated tools and build high-performance multi-model video routing, with no credit card required.

Design Patterns for Multi-Model Routing

There are three primary patterns for routing data between AI models, and the right choice depends on the complexity of your project. Implementing these patterns correctly can be the difference between a project that finishes in hours and one that takes days.

Sequential Pipelines for Simple Workflows

The most straightforward pattern is the sequential pipeline. In this model, the work flows in a single direction. Model A finishes its task, saves the file to a specific workspace, and a webhook triggers Model B. This is ideal for simple projects where each step depends entirely on the previous one. For example, you must have a script before you can generate a voiceover. While simple to manage, the downside is that any delay in an early stage ripples through the entire chain, potentially idling expensive resources down the line.
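
Here is a minimal sketch of the sequential pattern. Each stage is modeled as a function that reads the previous stage's output path and returns its own. In production the hand-off would be a file written to the workspace plus a webhook firing. Here a simple loop stands in for that trigger, and the stage names are hypothetical.

```python
from typing import Callable

Stage = Callable[[str], str]  # input asset path -> output asset path


def run_sequential(brief: str, stages: list[tuple[str, Stage]]) -> list[str]:
    """Run stages in order; each stage starts only after the previous one finishes."""
    history = []
    current = brief
    for name, stage in stages:
        current = stage(current)  # a delay here delays every later stage
        history.append(f"{name}: {current}")
    return history


pipeline = [
    ("script", lambda path: "workspace/script.json"),
    ("voiceover", lambda path: path.replace("script.json", "voiceover.wav")),
    ("lip_sync", lambda path: path.replace("voiceover.wav", "clip.mp4")),
]
for line in run_sequential("brief.txt", pipeline):
    print(line)
# script: workspace/script.json
# voiceover: workspace/voiceover.wav
# lip_sync: workspace/clip.mp4
```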

Concurrent Processing for Speed

For larger projects, concurrent processing is the preferred pattern. The routing architecture sends the same raw asset to multiple specialized models at once. While one model is generating a 3D depth map of the scene, another is identifying every object for later replacement, and a third is generating the background score. By running these tasks in parallel, you can cut total production time substantially. This pattern requires a merge layer at the end to reconcile the different outputs into a final cohesive video file.
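
A sketch of concurrent processing with a merge layer follows, using `asyncio.gather` to fan one raw asset out to several workers. `asyncio.sleep` stands in for the real model calls. The worker names and the merged record format are assumptions.

```python
import asyncio


async def depth_map(asset: str) -> dict:
    await asyncio.sleep(0.01)  # placeholder for a depth-estimation model call
    return {"depth": f"{asset}.depth.exr"}


async def object_tags(asset: str) -> dict:
    await asyncio.sleep(0.01)  # placeholder for an object-detection model call
    return {"objects": ["lamp", "chair"]}


async def score_music(asset: str) -> dict:
    await asyncio.sleep(0.01)  # placeholder for a music-generation model call
    return {"score": f"{asset}.score.wav"}


async def process_concurrently(asset: str) -> dict:
    # All three workers run at the same time on the same raw asset.
    results = await asyncio.gather(depth_map(asset), object_tags(asset), score_music(asset))
    # Merge layer: reconcile the separate outputs into one record for the final render.
    merged = {"asset": asset}
    for part in results:
        overlap = merged.keys() & part.keys()
        if overlap:
            raise ValueError(f"workers produced conflicting keys: {overlap}")
        merged.update(part)
    return merged


print(asyncio.run(process_concurrently("scene_04.mov")))
```

The merge step fails loudly on conflicting keys rather than silently letting the last worker overwrite an earlier result.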

Metadata-Driven Branching and Fallbacks

The most advanced pattern is metadata-driven branching. This uses a director agent to analyze the quality of each intermediate output and decide the next path. If a motion model produces a clip where the character's movement is choppy, the router can detect this metadata flag and automatically route the clip to a smoothing model or a different generator altogether. This level of intelligence allows for automated fallbacks. If a high-tier model like Sora is hitting a rate limit, the router can automatically switch to a faster, lower-cost model like Kling to keep the pipeline moving without human intervention.
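
The sketch below combines metadata-driven branching with a rate-limit fallback. The `RateLimited` exception is hypothetical, as are the `choppy_motion` flag and the model callables. Real APIs signal rate limits in their own way, often with an HTTP 429 response, so adapt the exception handling to the client library you actually use.

```python
from typing import Callable


class RateLimited(Exception):
    """Hypothetical error a model client raises when the provider throttles a request."""


Model = Callable[[str], dict]  # prompt -> {"clip": path, "flags": [...]}


def generate_with_fallback(prompt: str, tiers: list[tuple[str, Model]]) -> tuple[str, dict]:
    """Try models in priority order, skipping any that are rate-limited."""
    for name, model in tiers:
        try:
            return name, model(prompt)
        except RateLimited:
            continue  # keep the pipeline moving on the next model
    raise RuntimeError("every model in the tier list is rate-limited")


def route(result: dict) -> str:
    """Choose the next stage from metadata flags attached to the clip."""
    if "choppy_motion" in result.get("flags", []):
        return "smoothing_model"
    return "enhancement"


def high_tier(prompt: str) -> dict:
    raise RateLimited("quota exceeded")


def lower_cost(prompt: str) -> dict:
    return {"clip": "clip_12.mp4", "flags": ["choppy_motion"]}


name, result = generate_with_fallback("chase scene", [("high_tier", high_tier), ("lower_cost", lower_cost)])
print(name, route(result))  # lower_cost smoothing_model
```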

Optimizing Video Flow with Fastio

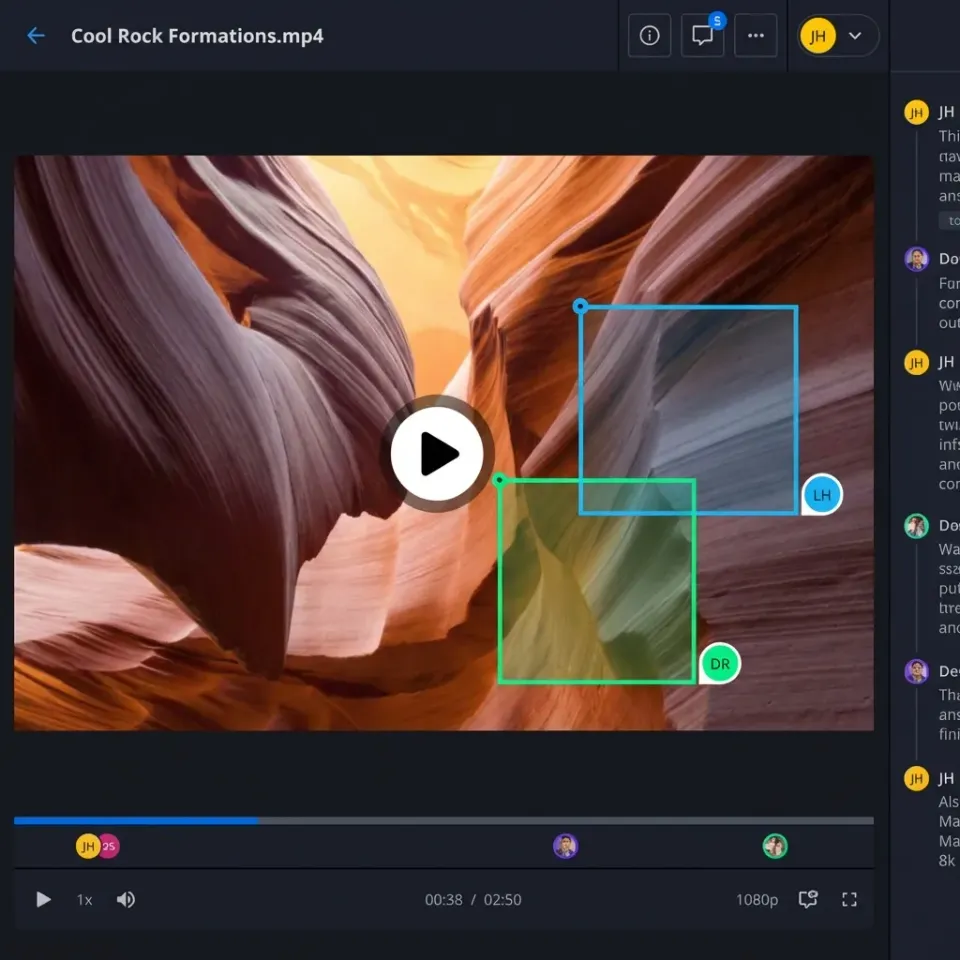

Fastio provides the infrastructure needed to turn these architectural patterns into a working system. Unlike generic storage providers, Fastio workspaces are designed for both humans and agents to work side-by-side. This collaboration is built into the core of the platform.

Targeted Processing with Intelligence Mode

Once a video is in a workspace, Intelligence Mode automatically indexes the content. This creates a searchable RAG index that agents can query using natural language. This is a major advantage for video routing. Instead of downloading and scanning a large file to find a specific five-second clip, an agent can query the index. The agent receives the exact timestamps and then uses a Fastio MCP tool to extract only those segments. This targeted approach can substantially reduce the amount of data moved across the network, saving both time and API costs.

Coordinating Agents with MCP and File Locks

For multi-agent workflows, Fastio's implementation of the Model Context Protocol (MCP) provides hundreds of specialized tools. These tools allow agents to manage files with precision. For example, an agent can use the acquire_lock tool to prevent other agents from writing to a file while it is being processed. This prevents the "last-writer-wins" bugs that often plague distributed AI systems. Once the work is done, the agent releases the lock and uses the notify_webhook tool to signal the next model in the routing chain.
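
The lock-then-write discipline can be illustrated with a small in-memory stand-in. This is a hypothetical sketch of the pattern, not Fastio's `acquire_lock` API, whose parameters are not described here. Locks are modeled as leases that expire, so a crashed agent cannot block a file forever.

```python
import threading
import time


class LeaseLockManager:
    """In-memory stand-in for a workspace lock service (hypothetical, not Fastio's API)."""

    def __init__(self, ttl_s: float = 30.0):
        self._ttl_s = ttl_s
        self._locks: dict[str, tuple[str, float]] = {}  # path -> (owner, expires_at)
        self._mutex = threading.Lock()

    def acquire(self, path: str, owner: str) -> bool:
        """Grant or renew a lease unless another owner holds a live one."""
        now = time.monotonic()
        with self._mutex:
            holder = self._locks.get(path)
            if holder and holder[0] != owner and holder[1] > now:
                return False  # another agent holds an unexpired lease
            self._locks[path] = (owner, now + self._ttl_s)
            return True

    def release(self, path: str, owner: str) -> None:
        """Release only if the caller is the current owner."""
        with self._mutex:
            if self._locks.get(path, (None, 0.0))[0] == owner:
                del self._locks[path]


locks = LeaseLockManager(ttl_s=30)
print(locks.acquire("clips/shot_07.mp4", "color_agent"))    # True
print(locks.acquire("clips/shot_07.mp4", "upscale_agent"))  # False: no last-writer-wins
locks.release("clips/shot_07.mp4", "color_agent")
print(locks.acquire("clips/shot_07.mp4", "upscale_agent"))  # True
```

After a successful write and release, the agent would fire its webhook to hand off to the next model in the chain.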

Durable Objects for Production State

Video production is a stateful process. You need to know which version of a clip is current and which models have already processed it. Fastio uses Durable Objects to maintain this session state. This means that even if an agent loses its connection or a model hits a temporary error, the state of the project is preserved. When the agent restarts, it knows exactly where it left off, what locks it holds, and which files are ready for the next stage of the routing process. This reliability is what allows for autonomous video production at scale.
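
The resume-where-you-left-off idea can be sketched with a local checkpoint file. This only illustrates persisted session state. It does not show how Durable Objects work internally, and the state fields are assumptions.

```python
import json
import os
import tempfile


def save_state(path: str, state: dict) -> None:
    """Write state to a temp file, then rename it over the previous checkpoint."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w") as f:
        json.dump(state, f)
    os.replace(tmp, path)  # atomic rename on POSIX when both paths share a filesystem


def load_state(path: str) -> dict:
    """Resume from the last checkpoint, or start fresh if none exists."""
    if not os.path.exists(path):
        return {"completed": [], "locks_held": [], "current_versions": {}}
    with open(path) as f:
        return json.load(f)


def run_stages(path: str, stages: list[str]) -> list[str]:
    """Run only the stages not yet recorded as complete, checkpointing after each."""
    state = load_state(path)
    ran = []
    for stage in stages:
        if stage in state["completed"]:
            continue  # finished before the interruption; skip it
        ran.append(stage)  # placeholder for the real model call
        state["completed"].append(stage)
        save_state(path, state)
    return ran


with tempfile.TemporaryDirectory() as d:
    ckpt = os.path.join(d, "session.json")
    save_state(ckpt, {"completed": ["script"], "locks_held": [], "current_versions": {}})
    print(run_stages(ckpt, ["script", "keyframes", "motion"]))  # ['keyframes', 'motion']
```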

Evidence and Benchmarks: What the Metrics Show

Measuring the success of a multi-model architecture requires looking at three key metrics: throughput, latency, and cost per minute of video. In high-performance environments, the goal is to keep GPU utilization as close to full capacity as possible. If storage is the bottleneck, you will see utilization dips where the processors are waiting for the next batch of frames to arrive.
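
The following is a minimal way to turn per-batch timings into the utilization metric described above. The timings are made-up sample numbers for illustration, not measurements.

```python
def gpu_utilization(compute_s: list[float], io_wait_s: list[float]) -> float:
    """Fraction of wall time the GPU spends computing rather than waiting on storage."""
    busy = sum(compute_s)
    idle = sum(io_wait_s)
    return busy / (busy + idle)


def starved_batches(compute_s: list[float], io_wait_s: list[float], threshold: float = 0.5) -> list[int]:
    """Indices of batches where waiting on data took more than `threshold` of batch time."""
    return [
        i for i, (c, w) in enumerate(zip(compute_s, io_wait_s))
        if w / (c + w) > threshold
    ]


compute = [0.8, 0.8, 0.8, 0.8]
io_wait = [0.2, 0.2, 1.2, 0.2]  # batch 2 stalls on slow file access
print(f"{gpu_utilization(compute, io_wait):.0%}")  # 64%
print(starved_batches(compute, io_wait))           # [2]
```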

Throughput and Latency Gains

Case studies in the field have shown that moving from a sequential, CPU-based pipeline to an AI-native architecture with direct storage-to-GPU paths can substantially reduce end-to-end latency. According to a study by Ant Group on multi-model inference, optimizing the routing layer not only lowered latency but also improved throughput by 2.4 times. This means the same hardware can produce more than double the content in the same amount of time, which matters for studios working on tight deadlines.

Cost Optimization through Tiered Routing

Cost optimization shows the most direct results. With a tiered routing strategy, teams use lower-cost models for the draft and iteration phases and route only the final, approved shots to high-end delivery models. This "Draft-tier" versus "Delivery-tier" approach can reduce API spend considerably. It is only possible, however, if your storage layer can handle the metadata tracking required to know exactly which clips are ready for the final, expensive render.
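
Here is a sketch of tiered routing driven by an approval flag in the metadata. The per-second prices are hypothetical placeholders, not any provider's real rates. They are there only to show how the comparison is computed.

```python
from dataclasses import dataclass

# Hypothetical per-second prices; substitute your providers' actual rates.
PRICE_PER_SECOND = {"draft": 0.05, "delivery": 0.50}


@dataclass
class Clip:
    clip_id: str
    seconds: float
    approved: bool  # set by the director or a human reviewer in the metadata index


def choose_tier(clip: Clip) -> str:
    """Only approved shots are routed to the expensive delivery-tier model."""
    return "delivery" if clip.approved else "draft"


def render_cost(clips: list[Clip]) -> float:
    return sum(PRICE_PER_SECOND[choose_tier(c)] * c.seconds for c in clips)


# Nine rejected iterations of a 5-second shot, then one approved take.
takes = [Clip(f"take_{i}", 5, approved=False) for i in range(9)] + [Clip("take_final", 5, approved=True)]
tiered = render_cost(takes)
all_delivery = sum(PRICE_PER_SECOND["delivery"] * c.seconds for c in takes)
print(f"tiered ${tiered:.2f} vs delivery-only ${all_delivery:.2f}")  # tiered $4.75 vs delivery-only $25.00
```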

The Full-Frame Tax

Many unoptimized pipelines suffer from what architects call the full-frame tax. This happens when a system is forced to transport and decode an entire full-resolution frame even if the AI model only needs to analyze a small 128x128 pixel region of that frame. AI-native storage, paired with modern protocols, allows for selective data movement. By sending only the pixels the model needs, you can cut effective bandwidth requirements dramatically. This targeted movement is what allows complex multi-model pipelines to run smoothly on standard networking hardware.
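
The arithmetic behind the full-frame tax is easy to check. The sketch assumes an uncompressed 3840x2160 frame with 8-bit RGB channels. Real pipelines usually move compressed streams, so actual savings will differ. `crop_region` is a hypothetical helper that copies only the requested rows of a row-major buffer.

```python
def bytes_per_frame(width: int, height: int, channels: int = 3, bytes_per_channel: int = 1) -> int:
    """Uncompressed size of a frame or region."""
    return width * height * channels * bytes_per_channel


def crop_region(frame: bytes, width: int, x: int, y: int, w: int, h: int, channels: int = 3) -> bytes:
    """Copy only a w x h region out of a row-major, interleaved frame buffer."""
    row_bytes = width * channels
    return b"".join(
        frame[(y + r) * row_bytes + x * channels:(y + r) * row_bytes + (x + w) * channels]
        for r in range(h)
    )


full = bytes_per_frame(3840, 2160)  # assumed UHD frame
region = bytes_per_frame(128, 128)  # the region the model actually needs
print(full, region, f"{region / full:.3%}")  # 24883200 49152 0.198%
```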

Implementation Guide: Building Your First Multi-Model Pipeline

Transitioning to a multi-model architecture is a phased process. It requires setting up the right coordination layer before you start scaling your compute resources. Here is a step-by-step guide to building a production-ready pipeline using Fastio and the Model Context Protocol.

Step 1: Environment Orchestration

The first step is setting up your workspace environment. In Fastio, you should create a dedicated organization for each major project. This allows you to manage permissions for different agents and human collaborators at a granular level. Once your organization is ready, initialize your primary production workspace. This will act as the starting point where your raw video assets first arrive. Use the URL Import tool to pull in your footage from external sources like Google Drive or OneDrive without consuming local bandwidth.

Step 2: Defining the Metadata Schema

Before you run your first model, you must define how your agents will communicate. This is done through a metadata schema. You should decide on a consistent format for frame-level timestamps, quality scores, and model identifiers. In Fastio, this metadata is stored directly with the files in your workspace. Use the Intelligence Mode toggle to ensure that every asset is automatically indexed upon arrival. This allows your agents to query the state of the project using semantic search rather than just file names.
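
A client-side schema helps every agent write metadata in the same shape. The field names below are assumptions, and this sketch does not show how Fastio stores metadata. It only validates records before they are attached to files.

```python
import json
from dataclasses import dataclass, asdict


@dataclass(frozen=True)
class FrameMetadata:
    asset: str
    frame_start: int
    frame_end: int        # exclusive
    quality_score: float  # 0.0 to 1.0
    model_id: str         # model that produced or last processed this range

    def __post_init__(self):
        if not 0 <= self.frame_start < self.frame_end:
            raise ValueError("frame range must be non-empty and non-negative")
        if not 0.0 <= self.quality_score <= 1.0:
            raise ValueError("quality_score must be between 0 and 1")


record = FrameMetadata("shot_07.mp4", 0, 120, 0.87, "motion-model-a")
print(json.dumps(asdict(record)))
```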

Step 3: Configuring the Director Agent

Your Director Agent is the hub of the routing system. You should configure this agent using a high-reasoning model like Claude 3.5 Sonnet. Using the Fastio MCP tools, give the agent the ability to list files, read metadata, and trigger webhooks. The director's first task is to analyze the raw footage and generate a 'Processing Plan' JSON file. This file contains the routing instructions for every other model in the stack, including fallback logic if a primary model fails or reaches a rate limit.
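
One possible layout for the 'Processing Plan' is sketched below. The task catalogue and the placeholder model names are assumptions, and so is the plan structure. The sketch shows one routing entry per shot and task, dependency information, and the fallback rule applied when a primary model is unavailable.

```python
import json
from typing import Optional

# Hypothetical task catalogue; model names are placeholders, not real endpoints.
TASKS = [
    # (task, primary model, fallback model or None, tasks it depends on)
    ("motion", "video-model-a", "video-model-b", []),
    ("audio", "voice-model-a", "voice-model-b", []),
    ("upscale", "upscaler-a", None, ["motion"]),
]


def build_processing_plan(shot_ids: list[str]) -> dict:
    """Assemble a plan with one routing entry per shot and task."""
    steps = [
        {
            "shot": shot,
            "task": task,
            "primary": primary,
            "fallback": fallback,
            "depends_on": list(deps),
        }
        for shot in shot_ids
        for task, primary, fallback, deps in TASKS
    ]
    return {"version": 1, "steps": steps}


def pick_model(step: dict, unavailable: set[str]) -> Optional[str]:
    """Apply the plan's fallback rule when a primary model fails or is rate-limited."""
    for model in (step["primary"], step["fallback"]):
        if model is not None and model not in unavailable:
            return model
    return None  # no route available: escalate to the director


plan = build_processing_plan(["s01", "s02"])
print(json.dumps(plan["steps"][0]))
print(pick_model(plan["steps"][0], unavailable={"video-model-a"}))  # video-model-b
```

Writing the plan to a file in the shared workspace lets every worker read the same routing rules, rather than each agent carrying its own copy.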

Step 4: Implementing Concurrent Workers

With the plan in place, you can now spin up your specialized worker agents. Each worker should be assigned a specific task, such as audio transcription, object detection, or color grading. These workers operate in parallel, reading from the primary workspace and writing their intermediate outputs back to the same shared environment. To prevent data corruption, ensure that each worker uses the acquire_lock MCP tool before performing a write operation. This coordination ensures that even with dozens of agents working simultaneously, the project state remains consistent.

Step 5: The Final Fusion and Delivery

The final stage of the pipeline is the fusion pass. The Director Agent monitors the progress of the concurrent workers. Once all required intermediate assets are ready, it routes the project to a final rendering model. This model takes the animated frames, the synchronized audio, and the post-production enhancements to create the final delivery file. After a final automated quality check, the agent can use the Ownership Transfer feature to hand the completed organization over to the client, while preserving the administrative logs for future audits or iterations.

Frequently Asked Questions

How do I connect different AI video models in one workflow?

You can connect different models using an orchestration layer that communicates via APIs and webhooks. By using a shared workspace in Fastio, each model can read and write to the same persistent storage. A central 'director agent' uses metadata to trigger the next model in the sequence once a specific task is complete.

What is the best storage for AI video pipelines?

The best storage for AI video is an AI-native system that supports high random-read IOPS and sub-millisecond latency. Unlike traditional cloud buckets, AI-native storage allows multiple agents to access different parts of the same file simultaneously without performance degradation, which is critical for frame-level analysis.

How does video routing reduce production costs?

Video routing reduces costs by sending assets to the most efficient model for each task. Instead of using an expensive high-tier model for everything, a router can use a 'Draft-tier' model for initial edits and only trigger 'Delivery-tier' models for final rendering, significantly reducing API fees.

What is metadata-driven routing in AI workflows?

Metadata-driven routing uses tags, timestamps, and quality scores to decide the path a file takes through a pipeline. For example, if a model detects a low quality score on a specific clip, the routing logic can automatically send that clip to a fix-it model before moving it to the next production stage.

Why do AI video pipelines need high IOPS?

AI pipelines need high IOPS because models treat video as a database of individual frames rather than a sequential stream. When multiple models or agents are analyzing different parts of a video at once, they create a 'seek storm' that traditional storage cannot handle, leading to GPU idle time.
