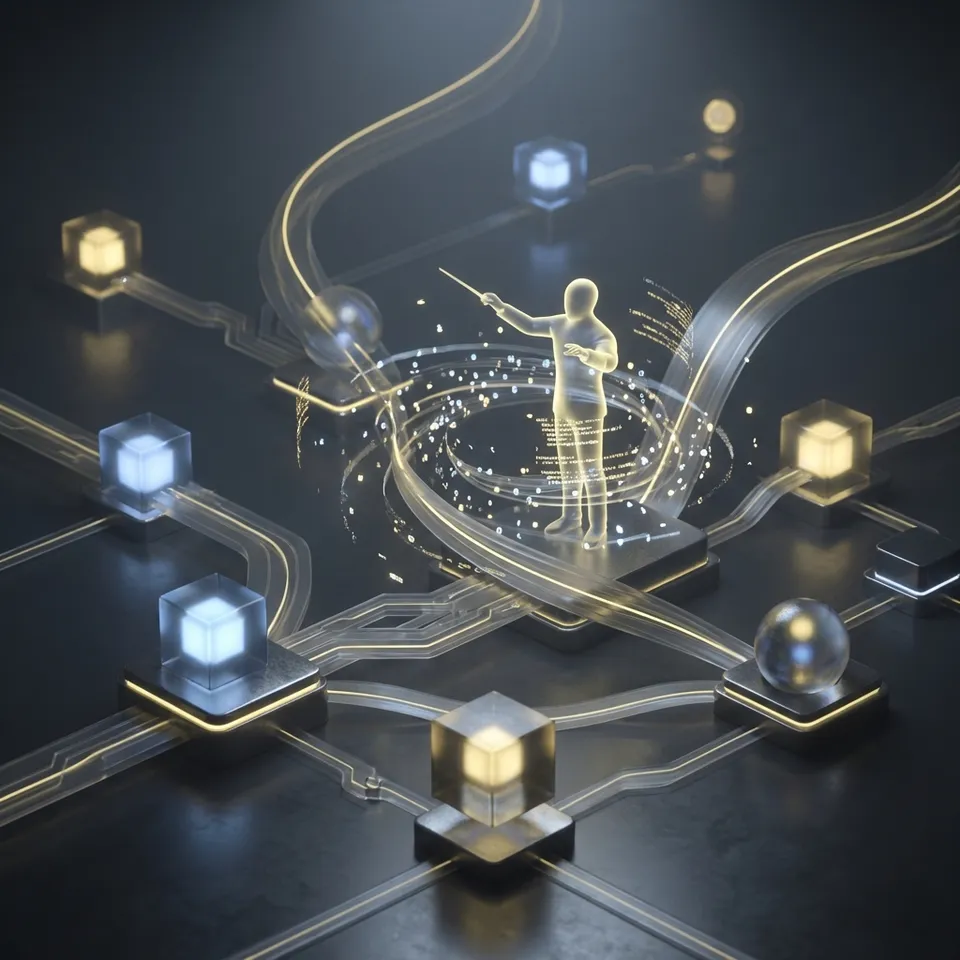

How to Orchestrate MCP Agents in a Workspace

MCP workspace agent orchestration is the architectural pattern of coordinating multiple AI agents within a shared Fastio workspace using the Model Context Protocol (MCP). By connecting agents to a centralized environment with persistent storage and intelligence, teams can build complex, stateful workflows that far exceed the capabilities of isolated scripts. In this model, agents act as autonomous workers that share the same files, folders, and search indexes as their human counterparts.

What Is MCP Workspace Agent Orchestration?

MCP workspace agent orchestration is a methodology for managing multi-agent systems where the "workspace" serves as the central nervous system. Instead of agents passing data directly to each other via fragile API payloads, they operate on a shared state maintained in a persistent cloud file system.

At its core, this approach relies on the Model Context Protocol (MCP), an open standard that enables Large Language Models (LLMs) to connect safely to external data and tools. Fastio implements this protocol as a hosted MCP server (mcp.fast.io), exposing a catalog of distinct tools that mirror the platform's UI capabilities.

The Role of the Shared Workspace

In traditional multi-agent architectures, state is often ephemeral or siloed. Agent A performs a task and must explicitly transmit the result to Agent B. If Agent A crashes or the transmission fails, context is lost.

In an orchestrated workspace, the file system is the state. Agent A writes a file to project/data/processed_v1.json. Agent B, watching that directory via a webhook, sees the new file and begins its work. If Agent B fails, Agent C can pick up exactly where it left off because the data persists in the workspace.
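A minimal local sketch of this handoff, using a temporary directory as a stand-in for the workspace. The helper names (`publish`, `pending`, `mark_done`) and the `.done` marker convention are illustrative assumptions, not Fastio APIs; a real agent would call MCP tools rather than the local file system.

```python
import json
import os
import tempfile
from pathlib import Path


def publish(workspace: Path, rel_path: str, payload: dict) -> Path:
    """Agent A: write a result file so readers never see a partial write."""
    target = workspace / rel_path
    target.parent.mkdir(parents=True, exist_ok=True)
    tmp = target.with_suffix(target.suffix + ".tmp")
    tmp.write_text(json.dumps(payload))
    os.replace(tmp, target)  # atomic rename on the same file system
    return target


def pending(workspace: Path, folder: str) -> list[Path]:
    """Agent B or C: list result files that have no '.done' marker yet."""
    files = sorted((workspace / folder).glob("*.json"))
    return [f for f in files if not f.with_suffix(".done").exists()]


def mark_done(path: Path) -> None:
    """Record completion in the workspace itself, not in agent memory."""
    path.with_suffix(".done").touch()


workspace = Path(tempfile.mkdtemp())
publish(workspace, "project/data/processed_v1.json", {"rows": 42})
todo = pending(workspace, "project/data")
first_seen = [p.name for p in todo]
mark_done(todo[0])
remaining = pending(workspace, "project/data")
```

Because the "what is left to do" question is answered by listing files, any agent can take over the work without receiving a message from the agent that failed.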

Bridging Agents and Humans

A unique advantage of this orchestration model is the unification of agent and human environments. The workspace isn't a hidden database; it's a user-friendly interface.

- Agents interact via MCP tools (Streamable HTTP/SSE).

- Humans interact via the Fastio web dashboard or mobile app.

When an agent generates a report, a human manager can open it immediately in the browser. When a human uploads a raw video file, an agent can instantly detect it and start transcoding. This "multiplayer" dynamic bridges the gap between automated backend processes and frontend human collaboration.


Why Orchestrate Agents in a Shared Workspace?

Building reliable multi-agent systems requires solving problems of consistency, memory, and coordination. Shared workspaces address these challenges natively.

Solving the Data Silo Problem

Isolated agents often create fragmented data pools. One agent might store logs in S3, another keeps temporary files in local memory, and a third relies on a vector database. Reconciling these sources for a holistic view is difficult. By orchestrating agents in a Fastio workspace, you consolidate all assets (documents, media, logs, and indexes) into a single, organized hierarchy. Every agent sees the same "truth," reducing hallucination risks caused by stale or partial context.

Persistent State and "Infinite" Memory

LLM context windows are limited and expensive. Workspaces provide effectively unbounded long-term memory. Agents don't need to keep their entire history in the context window; they read the relevant files or use semantic search to retrieve specific memories when needed. Because Fastio files are persistent, an agent workflow can span days or weeks. An agent can "sleep" (terminate its process) and wake up later, checking the workspace state to resume its tasks without losing progress.
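The sleep-and-resume behavior can be sketched with a checkpoint file. This is a local illustration under stated assumptions: `state.json`, `load_checkpoint`, and `run` are hypothetical names, and the uppercase transform stands in for real agent work.

```python
import json
import tempfile
from pathlib import Path


def load_checkpoint(path: Path) -> dict:
    """Return saved progress, or a fresh state if none exists yet."""
    if path.exists():
        return json.loads(path.read_text())
    return {"next_index": 0, "results": []}


def run(items: list[str], checkpoint: Path, max_steps: int) -> dict:
    """Process up to max_steps items, saving progress after each one."""
    state = load_checkpoint(checkpoint)
    steps = 0
    while state["next_index"] < len(items) and steps < max_steps:
        item = items[state["next_index"]]
        state["results"].append(item.upper())  # placeholder for real work
        state["next_index"] += 1
        checkpoint.write_text(json.dumps(state))
        steps += 1
    return state


checkpoint = Path(tempfile.mkdtemp()) / "state.json"
items = ["a", "b", "c", "d"]
first_run = run(items, checkpoint, max_steps=2)    # process stops after 2 items
second_run = run(items, checkpoint, max_steps=10)  # a later process resumes
```

The second call starts from the saved index rather than from the beginning, so nothing is held in the agent's context between runs.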

Built-in Intelligence (RAG)

Orchestrating agents requires more than just file storage; it requires intelligence. Fastio's Intelligence Mode automatically indexes every file uploaded to the workspace. This provides built-in Retrieval-Augmented Generation (RAG). Instead of managing a separate Pinecone or Milvus instance, agents use the semantic_search tool to query the workspace's knowledge base. This lowers the engineering barrier for deploying intelligent agent teams.

Human-in-the-Loop Governance

Autonomy is powerful, but oversight is critical. Shared workspaces provide a natural governance layer.

- Visibility: Humans can see every file an agent creates.

- Control: Permissions can be revoked instantly if an agent misbehaves.

- Handoffs: Agents can transfer ownership of entire project workspaces to human users for final delivery.

Give Your AI Agents Persistent Storage

Start with 50GB free storage, 5 workspaces, and 251 MCP tools. No credit card required for agents. Built for MCP workspace agent orchestration workflows.

Performance Benefits of Workspace Orchestration

Beyond convenience, workspace orchestration offers tangible performance advantages for production systems.

Latency and Throughput

In distributed agent systems, moving data between nodes incurs network latency and egress costs. In a shared workspace, data "movement" is virtual. Agent A writes to the storage layer, and Agent B reads from it. While exact benchmarks depend on workload, this architecture eliminates the need to serialize and transmit large context payloads between agents. For media workflows, this is especially critical. Fastio's HLS streaming technology delivers media 50-60% faster than standard progressive downloads, allowing video-processing agents to access frames with minimal buffering.

Token Efficiency

Redundant processing is a major cost driver. If three agents need to analyze the same large PDF, a naive setup might have each agent read and embed the document separately. In a workspace with Intelligence Mode, the document is embedded once upon upload. All agents query the same shared index. This "embed once, query everywhere" model cuts embedding cost roughly in proportion to the number of agents and ensures all agents are aligned on the same semantic understanding of the data.
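The "embed once, query everywhere" idea can be illustrated with a toy cache keyed by content hash. This is a sketch, not how Intelligence Mode is implemented: `SharedIndex` is a hypothetical class, and the hash-derived vector is a placeholder for a real embedding model call.

```python
import hashlib


class SharedIndex:
    """Toy index that embeds each document once, keyed by content hash."""

    def __init__(self) -> None:
        self._vectors: dict[str, list[float]] = {}
        self.embed_calls = 0

    def _embed(self, text: str) -> list[float]:
        # Placeholder for the expensive embedding-model call.
        self.embed_calls += 1
        digest = hashlib.sha256(text.encode()).digest()
        return [b / 255 for b in digest[:8]]

    def vector_for(self, text: str) -> list[float]:
        """Return the cached vector, embedding only on first sight."""
        key = hashlib.sha256(text.encode()).hexdigest()
        if key not in self._vectors:
            self._vectors[key] = self._embed(text)
        return self._vectors[key]


index = SharedIndex()
document = "Quarterly report text"
vectors = [index.vector_for(document) for _agent in ("A", "B", "C")]
```

Three agents request the same document, but the embedding step runs once; the naive setup would have run it three times.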

Atomic Operations

Concurrency bugs are the bane of distributed systems. Fastio workspaces support file locking mechanisms (`acquire_lock`, `release_lock`). This allows agents to perform atomic operations, like updating a shared log file or claiming a task from a queue, without race conditions. This reliability is essential for scaling from a handful of agents to many more.

Setting Up a Workspace for MCP Agent Orchestration

Getting started with agent orchestration is straightforward. Fastio offers a specialized free tier for agents that includes 50GB of storage and 5,000 monthly credits.

Step 1: Create an Agent Account

Visit the agent signup page. You don't need a credit card or a complex approval process. You'll receive an API key that grants access to the platform and the MCP server.

Step 2: Configure the Workspace

Create a new workspace for your agent team.

- Name: Give it a descriptive name (e.g., "Research-Agents-Alpha").

- Intelligence Mode: Toggle this ON. This ensures all files are auto-indexed for RAG.

- Permissions: If you plan to invite other agents or humans, set the default permissions (e.g., "Editor" for agents, "Viewer" for clients).

Step 3: Initialize the MCP Client

Your agents connect to mcp.fast.io using your API key. Here is a conceptual example of how to initialize a session in a Python-based agent environment:

```python
# Conceptual Python setup for an MCP client.
# `mcp_client` and its `Client` class are illustrative, not a published package.
import os

from mcp_client import Client

api_key = os.environ.get("FASTIO_API_KEY")
workspace_root = "/Research-Agents-Alpha/"

# Initialize connection to the Fastio MCP server.
# The host is the hosted server named earlier; confirm the exact endpoint path.
client = Client(
    server_url="https://mcp.fast.io",
    auth_token=api_key,
    transport="sse",  # Server-Sent Events for real-time updates
)

# Verify connection
tools = client.list_tools()
print(f"Connected! Available tools: {len(tools)}")
```

Step 4: Verify Tool Access

Once connected, your agent should have access to the workspace's tools. Key tools to verify immediately include:

- `list_files`: To explore the directory structure.

- `upload_file`: To write data.

- `semantic_search`: To test Intelligence Mode.

- `acquire_lock`: To test coordination capabilities.

With the workspace ready, you can now deploy your agent logic.

Connecting Multiple Agents

To add more agents, share the workspace with their unique email addresses (or handle IDs). The free tier supports up to 5 members per workspace. Each agent will use its own API key to authenticate but will interact with the same shared directory structure. This separation of identity is crucial for audit logging: you'll see exactly which agent performed which action.

Key MCP Tools for Agent Coordination

While Fastio provides hundreds of tools, a specific subset is important for orchestration. Mastering these tools enables strong multi-agent patterns.

acquire_lock and release_lock

These tools implement a mutex (mutual exclusion) mechanism on files or paths.

- Usage: Before an agent starts a critical section (like writing to a shared state file), it calls `acquire_lock(path="/status.json")`.

- Behavior: If the lock is free, it returns a lock ID. If held by another agent, the call waits or returns an error (depending on implementation).

- Best Practice: Always use a `try/finally` block in your agent code to ensure `release_lock` is called, so a lock is not left held when the agent's code raises an error.
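The best practice above can be sketched with a context manager. `FakeWorkspace` is an in-memory stand-in written for this example; the real `acquire_lock` and `release_lock` tools may take different arguments and return different values, so treat the signatures here as assumptions.

```python
import uuid
from contextlib import contextmanager


class LockError(Exception):
    """Raised when a path is already locked by another holder."""


class FakeWorkspace:
    """In-memory stand-in that mimics the lock behavior described above."""

    def __init__(self) -> None:
        self._locks: dict[str, str] = {}

    def acquire_lock(self, path: str) -> str:
        if path in self._locks:
            raise LockError(f"{path} is locked")
        lock_id = uuid.uuid4().hex
        self._locks[path] = lock_id
        return lock_id

    def release_lock(self, path: str, lock_id: str) -> None:
        # Only the holder of the matching lock ID may release.
        if self._locks.get(path) == lock_id:
            del self._locks[path]

    def is_locked(self, path: str) -> bool:
        return path in self._locks


@contextmanager
def locked(ws: FakeWorkspace, path: str):
    """Hold the lock for the duration of the block, releasing on any exit."""
    lock_id = ws.acquire_lock(path)
    try:
        yield lock_id
    finally:
        ws.release_lock(path, lock_id)


ws = FakeWorkspace()

with locked(ws, "/status.json"):
    try:
        ws.acquire_lock("/status.json")  # a second agent tries to enter
        second_agent_blocked = False
    except LockError:
        second_agent_blocked = True

try:
    with locked(ws, "/status.json"):
        raise RuntimeError("agent failed mid-update")
except RuntimeError:
    pass
released_after_error = not ws.is_locked("/status.json")
```

Note that `finally` only covers errors raised inside the process. A hard crash (killed process, lost machine) still leaves the lock held, which is why a server-side or janitor-enforced expiry is a useful complement.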

set_webhook

Webhooks allow agents to be reactive rather than proactive (polling).

- Usage: `set_webhook(path="/inbox", events=["file_uploaded"], url="https://agent-b-handler.api/...")`

- Behavior: When a file is uploaded to the `/inbox` folder, Fastio sends a POST request to Agent B's URL.

- Benefit: This eliminates the need for agents to constantly list files to check for new work, saving credits and computing resources.
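On the receiving side, Agent B needs to validate the POST body before acting on it. The sketch below assumes a JSON payload with `event` and `path` fields; that shape is a guess for illustration, since the actual webhook payload format is not described here.

```python
import json
from typing import Optional


def handle_webhook(body: bytes, watched_prefix: str = "/inbox/") -> Optional[str]:
    """Return the uploaded file's path, or None if the event should be ignored."""
    try:
        event = json.loads(body)
    except ValueError:  # covers malformed JSON and undecodable bytes
        return None
    if not isinstance(event, dict):
        return None
    if event.get("event") != "file_uploaded":
        return None
    path = event.get("path")
    if not isinstance(path, str) or not path.startswith(watched_prefix):
        return None
    return path


accepted = handle_webhook(b'{"event": "file_uploaded", "path": "/inbox/report.pdf"}')
wrong_event = handle_webhook(b'{"event": "file_deleted", "path": "/inbox/report.pdf"}')
wrong_folder = handle_webhook(b'{"event": "file_uploaded", "path": "/archive/a.pdf"}')
malformed = handle_webhook(b"not json")
```

Returning `None` for anything unexpected keeps the handler safe to expose publicly; the agent only starts work when a well-formed upload event targets the folder it watches.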

semantic_search

This is the primary interface for the workspace's "brain."

- Usage: `semantic_search(query="What were the Q3 sales figures?", path="/finance")`

- Behavior: The system returns relevant text chunks from documents in the `/finance` folder, complete with citations.

- Strategy: Use this tool to route tasks. An "Orchestrator Agent" can search the workspace to decide which specialist agent is best suited for a new document based on its content.

import_url

This tool enables data ingestion without local bandwidth.

- Usage: `import_url(url="https://external-source.com/data.zip", destination="/raw/data.zip")`

- Behavior: The server pulls the file directly to the workspace.

- Application: Perfect for "Fetcher" agents that aggregate content from the web or other cloud storage providers (Google Drive, Dropbox, etc.) for processing.

Example Multi-Agent Workflow Pipelines

To illustrate the power of this architecture, let's examine two concrete workflow patterns.

1. The Market Research Pipeline

This workflow automates the gathering, analysis, and synthesis of market data.

Agent A (The Hunter):

- Monitors news feeds and RSS sources.

- Uses `import_url` to save relevant PDFs and articles to the `/raw-data` folder.

- Renames files with standard conventions (e.g., `YYYY-MM-DD-Topic.pdf`).

Agent B (The Analyst):

- Watches `/raw-data` via webhook.

- On new file: Uses `semantic_search` to extract key entities and sentiment.

- Writes a structured JSON summary to `/processed-data`.

- Uses `acquire_lock` on the `daily_report.md` file to append its findings safely.

Agent C (The Editor):

- Runs on a schedule (cron job).

- Reads `daily_report.md`.

- Synthesizes the entries into a polished executive summary.

- Uses `create_share_link` to generate a view-only link.

- Emails the link to human stakeholders.
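The file-naming convention used by Agent A in this pipeline can be enforced with a small helper. `standard_name` is a hypothetical function for illustration; the slug rule (runs of non-alphanumeric characters become a single hyphen) is one reasonable choice, not a Fastio requirement.

```python
import re
from datetime import date


def standard_name(topic: str, published: date, ext: str = "pdf") -> str:
    """Build a YYYY-MM-DD-Topic.ext file name from a free-form topic."""
    slug = re.sub(r"[^A-Za-z0-9]+", "-", topic).strip("-")
    if not slug:
        raise ValueError("topic must contain at least one letter or digit")
    return f"{published.isoformat()}-{slug}.{ext}"


name = standard_name("EV battery prices: Q3", date(2024, 9, 30))
```

Leading with an ISO date means a plain alphabetical listing of `/raw-data` is also a chronological one, which keeps downstream agents simple.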

2. The Video Production Pipeline

This workflow uses Fastio's media capabilities for automated content processing.

Agent A (Ingest):

- Uploads raw footage from field cameras using `upload_chunked`, which handles large files.

- Moves files to `/ingest`.

Agent B (Transcode & Tag):

- Detects new video files.

- Fastio automatically generates HLS streams and previews.

- Agent B analyzes the transcript (generated by Intelligence Mode) to tag the video with keywords (e.g., "interview", "outdoor", "product-demo").

- Moves the file to `/library/tagged`.

Human Editor:

- Receives a notification.

- Opens the file in the Fastio video player.

- Uses the timeline to add frame-specific comments.

- Because of HLS streaming, playback is 50-60% faster than downloading the raw file, enabling immediate review.

Handling Errors and Retries

In both pipelines, error handling is managed via file status. If Agent B fails to process a file, it moves it to /error instead of /processed. A specific "Janitor Agent" can monitor the /error folder to retry operations or alert a human admin. This "dead letter queue" pattern is easily implemented using standard folder structures.
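A local sketch of this dead letter queue pattern, using ordinary folders in a temporary directory. The folder names follow the text above; `process_inbox` and `parse_number` are hypothetical helpers, and a real agent would move files with workspace tools rather than `shutil`.

```python
import shutil
import tempfile
from pathlib import Path


def process_inbox(root: Path, handler) -> dict[str, list[str]]:
    """Route each inbox file to processed/ or error/ based on the handler outcome."""
    outcome: dict[str, list[str]] = {"processed": [], "error": []}
    for folder in outcome:
        (root / folder).mkdir(exist_ok=True)
    for path in sorted((root / "inbox").iterdir()):
        try:
            handler(path)
            dest = "processed"
        except Exception:
            dest = "error"  # left for a janitor agent or a human to inspect
        shutil.move(str(path), str(root / dest / path.name))
        outcome[dest].append(path.name)
    return outcome


def parse_number(path: Path) -> int:
    """Example handler: fails on files that do not contain an integer."""
    return int(path.read_text())


root = Path(tempfile.mkdtemp())
(root / "inbox").mkdir()
(root / "inbox" / "good.txt").write_text("7")
(root / "inbox" / "bad.txt").write_text("not a number")
result = process_inbox(root, parse_number)
```

One failing file does not stop the batch, and the failure is visible to anyone browsing the workspace because it is just a file in `/error`.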

Security and Governance for Agent Teams

As you scale from one agent to many, security becomes paramount. Shared workspaces provide strong tools to maintain control.

Managing Agent Identities

Never share API keys between agents. Generate a unique key for each agent identity (e.g., "ResearchBot", "VideoBot"). This ensures that the Audit Logs correctly attribute every action. If "ResearchBot" accidentally deletes a critical folder, the logs will identify the culprit immediately, allowing you to revoke that specific key without taking down the entire system.

Permission Scopes

Adhere to the principle of least privilege.

- Read-Only: Agents that only need to ingest data (like a backup verification bot) should have `Viewer` permission.

- Write-Access: Agents that generate content need `Editor` permission.

- Admin: Only the primary orchestrator or human admin should have `Admin` privileges to manage workspace settings and invites.

Monitoring and Limits

Keep an eye on the Usage Dashboard. While the free tier is generous (5,000 credits/month), an infinite loop in a script could drain this quickly.

- Monitor credit usage daily during development.

- Set up alerts for unusual spikes in activity.

- Use the `get_usage` tool programmatically to let agents check their own remaining budget and throttle themselves if necessary.
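Self-throttling can be as simple as mapping the remaining budget to a delay. The sketch below assumes the agent has already obtained two integers, credits remaining and the monthly total; how `get_usage` reports them is not specified here, and the 50% threshold is an arbitrary illustrative choice.

```python
def throttle_delay(
    credits_remaining: int,
    credits_total: int,
    base_delay: float = 1.0,
) -> float:
    """Return seconds to wait before the next call; grows as the budget shrinks."""
    if credits_total <= 0:
        raise ValueError("credits_total must be positive")
    fraction_left = max(credits_remaining, 0) / credits_total
    if fraction_left == 0:
        return float("inf")  # out of budget: stop until the next cycle
    if fraction_left > 0.5:
        return 0.0  # plenty left: run at full speed
    return base_delay / fraction_left


full_speed = throttle_delay(4000, 5000)
slowed = throttle_delay(1250, 5000)
halted = throttle_delay(0, 5000)
```

With a quarter of the budget left the agent waits four times the base delay between calls, so a runaway loop slows itself down well before the credits reach zero.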

By treating agents as distinct team members with defined roles and boundaries, you create a secure, observable environment that is ready for enterprise deployment.

Troubleshooting Common Orchestration Issues

Even with a strong architecture, issues can arise. Here are solutions to common problems.

"File Locked" Errors

Symptom: An agent crashes and leaves a file locked forever.

Solution: Implement time-to-live (TTL) logic in your locking mechanism, or have a "Janitor" script that forcibly releases locks older than a set age limit. Always wrap critical sections in `try/finally` blocks in your code.
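The janitor's core check is a timestamp comparison. In this sketch the `Lock` record and its fields are hypothetical; it assumes the janitor can list current locks with their acquisition times, which the text does not confirm.

```python
from dataclasses import dataclass


@dataclass
class Lock:
    path: str
    owner: str
    acquired_at: float  # seconds since the epoch


def stale_locks(locks: list[Lock], now: float, ttl_seconds: float) -> list[Lock]:
    """Return locks held longer than the TTL; these are candidates for release."""
    return [lock for lock in locks if now - lock.acquired_at > ttl_seconds]


locks = [
    Lock("/status.json", "agent-a", acquired_at=1000.0),
    Lock("/queue.json", "agent-b", acquired_at=4500.0),
]
stale = stale_locks(locks, now=5000.0, ttl_seconds=3600.0)
stale_paths = [lock.path for lock in stale]
```

Choose a TTL comfortably longer than the slowest legitimate critical section; otherwise the janitor will release locks that are still in active use.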

Rate Limiting

Symptom: Agents receive 429 Too Many Requests errors.

Solution: Implement exponential backoff in your API client. If multiple agents wake up simultaneously (e.g., triggered by the same webhook), add a short random jitter delay before they start processing to spread the load.
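A minimal sketch of exponential backoff with jitter. `RateLimited` is a hypothetical exception that the caller's request function would raise on a 429 response; the `sleep` and `rng` parameters exist only so the example can run without actually waiting.

```python
import random
import time


class RateLimited(Exception):
    """Raised by the request function when the server answers 429."""


def call_with_backoff(
    request,
    max_attempts: int = 5,
    base: float = 0.5,
    cap: float = 30.0,
    sleep=time.sleep,
    rng=random.random,
):
    """Retry request() on RateLimited, doubling the delay ceiling each time."""
    for attempt in range(max_attempts):
        try:
            return request()
        except RateLimited:
            if attempt == max_attempts - 1:
                raise  # give up after the final attempt
            ceiling = min(cap, base * 2 ** attempt)
            sleep(ceiling * rng())  # random point in [0, ceiling)


attempts = {"n": 0}


def flaky():
    """Fails with 429 twice, then succeeds."""
    attempts["n"] += 1
    if attempts["n"] < 3:
        raise RateLimited()
    return "ok"


delays: list[float] = []
result = call_with_backoff(flaky, sleep=delays.append, rng=lambda: 1.0)
```

The demo pins `rng` to 1.0 so the recorded delays show the ceilings (0.5 s, then 1.0 s). In production, leave the defaults so each agent picks a different random delay and simultaneous wake-ups spread out.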

Context Window Overflow

Symptom: Agent fails to process a large file.

Solution: Don't read the whole file. Use `semantic_search` to find only the relevant chunks, or use the `summarize_document` tool to get a compressed version of the content. This is the primary advantage of RAG: you don't need to load everything into context.

Sync Delays

Symptom: Agent B tries to read a file immediately after Agent A writes it but gets a not-found error.

Solution: While Fastio is strongly consistent, network propagation can take milliseconds. Ensure Agent A waits for a successful 200 OK response from the upload before notifying Agent B. Using webhooks is generally more reliable than polling for immediate availability.

Frequently Asked Questions

What is MCP agent orchestration?

MCP workspace agent orchestration is a design pattern where multiple AI agents coordinate their work within a shared Fastio workspace using the Model Context Protocol. They use shared files for state, file locks for synchronization, and webhooks for event-driven workflows.

What is the free tier for agents?

Fastio offers a dedicated agent free tier with 50GB of storage, 5,000 monthly credits, 5 workspaces, and a 1GB maximum file size limit. No credit card is required to sign up.

How do file locks work for agents?

Agents use the `acquire_lock` and `release_lock` tools to manage concurrent access to files. This prevents race conditions where two agents might try to edit the same file simultaneously, ensuring data integrity in multi-agent pipelines.

Can agents and humans work in the same workspace?

Yes. Workspaces are unified environments. Humans access files via the Fastio web or mobile UI, while agents access the exact same files via MCP tools. This allows for smooth handoffs and collaboration.

How does Intelligence Mode help orchestration?

Intelligence Mode automatically indexes all files uploaded to a workspace. This gives agents built-in RAG capabilities, allowing them to perform semantic searches and ask questions about the data without needing an external vector database.

How many members can a workspace have?

On the free tier, a workspace can support up to 5 members (agents or humans). This is sufficient for small teams or prototyping multi-agent clusters. Paid plans support unlimited members.
