How to Extract Document Metadata with Large Language Models

Large language models can read unstructured documents and return structured metadata fields like author, date, topic, and entity tags without hand-coded rules. This guide covers how to prompt LLMs for reliable extraction, catch hallucinated fields, compare costs against traditional parsers, and build a production pipeline.

What LLM-Powered Metadata Extraction Actually Does

Traditional metadata extraction depends on rules. Regex patterns match dates. XPath queries pull fields from XML. Template overlays map pixel coordinates on scanned forms to field names. Each document type needs its own parser, and each parser breaks when the layout shifts.

LLM-powered extraction takes a different approach. You feed a document's text to a large language model along with a description of the fields you want. The model reads the content, understands its structure, and returns those fields as structured data. No templates. No layout-specific rules. The same model handles invoices, contracts, research papers, and insurance forms without changing a single line of extraction logic.

This matters most for documents that don't follow predictable patterns. Contracts from different law firms use different layouts. Invoices from different vendors put line items in different places. Research papers shift citation styles between journals. A rule-based parser needs separate handling for every variation. An LLM reads the document the way a person would and pulls the relevant fields regardless of where they appear on the page.

The practical output looks like this: you upload a contract PDF and ask the model to extract the effective date, counterparties, governing law, and termination clause. It returns a JSON object with those fields populated. Upload an invoice instead and change the prompt to request vendor name, invoice number, line items, and total. Same model, same pipeline, different instructions.

Recent benchmarks from the MOLE metadata extraction study show GPT-4o achieving F1 scores between 0.91 and 0.97 on zero-shot metadata extraction from scientific papers. That's comparable to human annotator agreement in the same evaluation. Accuracy varies by domain, though. Extracting a document's title and date is straightforward; pulling specific clause types from a 200-page legal agreement requires more careful prompting and validation. The 85-95% accuracy range that researchers commonly report reflects this spectrum: simple fields approach the high end, and domain-specific fields sit closer to the low end.

Rule-Based Extraction vs. LLM Extraction

Rule-based extractors work by matching patterns. A regex finds dates in DD/MM/YYYY format. An XPath query pulls the author element from structured XML. A template maps specific regions on a scanned invoice to named fields.

These tools are fast and cheap to run. Once a rule works, it works identically every time. You don't pay per-document API fees. The extraction logic doesn't change behavior after a provider updates their model.

The problem is brittleness. When documents deviate from expected layouts, rule-based systems break. These extractors commonly fail on 30-40% of fields in non-standard document formats. When a vendor changes its invoice template, extraction stops working. When a new contract uses different terminology for the same concept, the parser skips it entirely. Maintenance becomes the real expense: every edge case demands a new rule, and rules interact in ways that create regressions elsewhere.

LLM extraction trades brittleness for flexibility. Because the model processes language in context, it handles layout variations, synonyms, and formatting inconsistencies without new rules. You describe what you want in plain English. The model figures out where to find it.

Here's where each approach fits:

Rule-based extraction works well when:

- Documents follow strict, known templates (government forms, standardized reports)

- Volume is extremely high and per-document cost is the primary constraint

- Target fields are syntactically predictable (dates, phone numbers, email addresses)

- Output formatting must be identical every time for compliance reasons

LLM extraction works well when:

- Documents come from many sources with different layouts

- Extracting a field requires understanding context, not just pattern matching

- The metadata schema changes often as business requirements shift

- Documents contain free-form text where relevant information has no fixed position

Many production systems combine both approaches. A rule-based layer handles structured, predictable fields (dates in known formats, standard identifiers). The LLM handles everything the rules miss. This hybrid approach keeps per-document costs low while covering the long tail of document variation.
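The hybrid pattern can be sketched in a few lines. This is a minimal illustration, not a reference implementation: the function names are hypothetical, the rule only recognizes ISO-formatted dates, and `fake_llm` stands in for a real model call so the example runs offline.

```python
import re
from typing import Callable, Optional

# A deliberately narrow rule: ISO-style dates such as 2026-03-15.
ISO_DATE = re.compile(r"\b\d{4}-\d{2}-\d{2}\b")


def extract_date_by_rule(text: str) -> Optional[str]:
    """Return the first ISO-formatted date in the text, or None."""
    match = ISO_DATE.search(text)
    return match.group(0) if match else None


def extract_effective_date(
    text: str, llm_fallback: Callable[[str], Optional[str]]
) -> dict:
    """Try the cheap rule first; call the LLM only when the rule finds nothing."""
    value = extract_date_by_rule(text)
    if value is not None:
        return {"value": value, "method": "rule"}
    return {"value": llm_fallback(text), "method": "llm"}


def fake_llm(text: str) -> Optional[str]:
    # Hypothetical stand-in for a real model call; returns a fixed answer.
    return "2026-03-15"


rule_hit = extract_effective_date("Effective 2026-03-15.", fake_llm)
llm_hit = extract_effective_date("Effective as of March 15, 2026.", fake_llm)
print(rule_hit["method"], llm_hit["method"])
```

Recording which method produced each value makes it easy to measure how often the paid fallback is actually triggered.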

How to Prompt an LLM for Structured Metadata Output

Most extraction failures trace back to vague prompts. Asking an LLM to "extract metadata from this document" produces inconsistent, incomplete results. Four techniques make the biggest difference between unreliable output and production-grade extraction.

Define an explicit output schema

Give the model a JSON schema describing every field, its type, and a brief definition. Don't leave field interpretation to the model's judgment.

    {
      "effective_date": {
        "type": "string",
        "format": "date",
        "description": "Contract start date in ISO 8601 format"
      },
      "counterparties": {
        "type": "array",
        "items": {"type": "string"},
        "description": "Full legal names of all signing parties"
      },
      "governing_law": {
        "type": "string",
        "description": "State or country whose law governs the contract"
      },
      "total_value": {
        "type": "number",
        "description": "Total contract value in USD, null if not specified"
      }
    }

Most LLM APIs now support structured output natively. OpenAI's Structured Outputs and function calling, Anthropic's tool use, and Google's structured output mode all accept a JSON schema and shape the response to match it. Use these features instead of hoping the model generates valid JSON on its own, and still validate the result in your own code.

Include few-shot examples

Show the model what correct extraction looks like. Two or three examples of input text paired with expected output teach the model your specific field definitions better than a paragraph of explanation ever could. Research on metadata extraction quality shows measurable improvement when moving from zero-shot to few-shot prompting, even with a single example.
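One way to wire few-shot examples into a request is to replay them as earlier turns of the conversation. The sketch below assumes a chat-style message format with `system`, `user`, and `assistant` roles; the helper name and the two example invoices are made up for illustration.

```python
import json
from typing import Dict, List

# Invented examples for illustration only; use real, reviewed samples in practice.
FEW_SHOT_EXAMPLES = [
    {
        "text": "Invoice A-1001 from Acme Supply Co., dated 2026-01-12. Total due: $450.00",
        "fields": {
            "vendor_name": "Acme Supply Co.",
            "invoice_number": "A-1001",
            "invoice_date": "2026-01-12",
            "total": 450.0,
        },
    },
    {
        "text": "Globex LLC billing statement no. 77. Amount payable 1,200.50 USD.",
        "fields": {
            "vendor_name": "Globex LLC",
            "invoice_number": "77",
            "invoice_date": None,  # shows the model that missing fields become null
            "total": 1200.5,
        },
    },
]


def build_messages(
    instructions: str, examples: List[dict], document_text: str
) -> List[Dict[str, str]]:
    """Place each example as a user/assistant pair before the real document."""
    messages = [{"role": "system", "content": instructions}]
    for example in examples:
        messages.append({"role": "user", "content": example["text"]})
        messages.append({"role": "assistant", "content": json.dumps(example["fields"])})
    messages.append({"role": "user", "content": document_text})
    return messages


messages = build_messages(
    "Extract invoice metadata as JSON. Set missing fields to null.",
    FEW_SHOT_EXAMPLES,
    "Invoice 2044 from Initech, dated 2026-02-01. Total: $89.99",
)
print(len(messages))
```

Including one example with a null field is a cheap way to demonstrate the "not present" behavior rather than only describing it.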

Use chain-of-thought for ambiguous fields

For fields that require reasoning rather than simple lookup, ask the model to explain its logic before producing the final value. This works particularly well for classification fields (contract type, document category) and for cases where several candidate values exist in the text.

    For each field:
    1. Quote the exact text passage where you found the information
    2. Explain your reasoning if the field is ambiguous
    3. Provide the extracted value
    4. If the information is not present, set the value to null

Chunk long documents strategically

A 200-page contract might contain the effective date on page 1, parties on page 2, and payment terms in Appendix C. Sending the full document wastes tokens and often exceeds context windows.

Use a two-pass approach instead. The first pass scans section headers and the table of contents to identify which pages contain relevant information. The second pass sends only those pages for extraction. On long documents, this cuts token costs by 60-80% while maintaining extraction accuracy.
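The two-pass idea can be sketched as follows. As a simplifying assumption, the first pass here is a plain keyword match against the first few lines of each page rather than an LLM call, and the function names, keyword lists, and sample pages are all hypothetical.

```python
from typing import Dict, List


def find_relevant_pages(
    pages: List[str], field_keywords: Dict[str, List[str]], header_lines: int = 3
) -> Dict[str, List[int]]:
    """First pass: map each field to the pages whose top lines mention a keyword."""
    relevant: Dict[str, List[int]] = {field: [] for field in field_keywords}
    for index, page in enumerate(pages):
        header = " ".join(page.splitlines()[:header_lines]).lower()
        for field, keywords in field_keywords.items():
            if any(keyword.lower() in header for keyword in keywords):
                relevant[field].append(index)
    return relevant


def build_second_pass_input(pages: List[str], relevant: Dict[str, List[int]]) -> str:
    """Second pass input: only the selected pages, labeled with 1-based page numbers."""
    wanted = sorted({i for indexes in relevant.values() for i in indexes})
    return "\n\n".join(f"[page {i + 1}]\n{pages[i]}" for i in wanted)


pages = [
    "MASTER SERVICES AGREEMENT\nEffective Date and Parties\nThis Agreement is effective...",
    "2. Definitions\nIn this Agreement, the following terms apply...",
    "3. Confidentiality\nEach party shall protect...",
    "Appendix C\nPayment Terms\nFees are due within 30 days...",
]
keywords = {"effective_date": ["effective date"], "payment_terms": ["payment terms"]}

relevant = find_relevant_pages(pages, keywords)
prompt_text = build_second_pass_input(pages, relevant)
print(relevant)
```

Only `prompt_text` is sent to the extraction model, so token usage tracks the number of relevant pages rather than the length of the whole document.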

Turn Documents into Structured Data Without Building a Pipeline

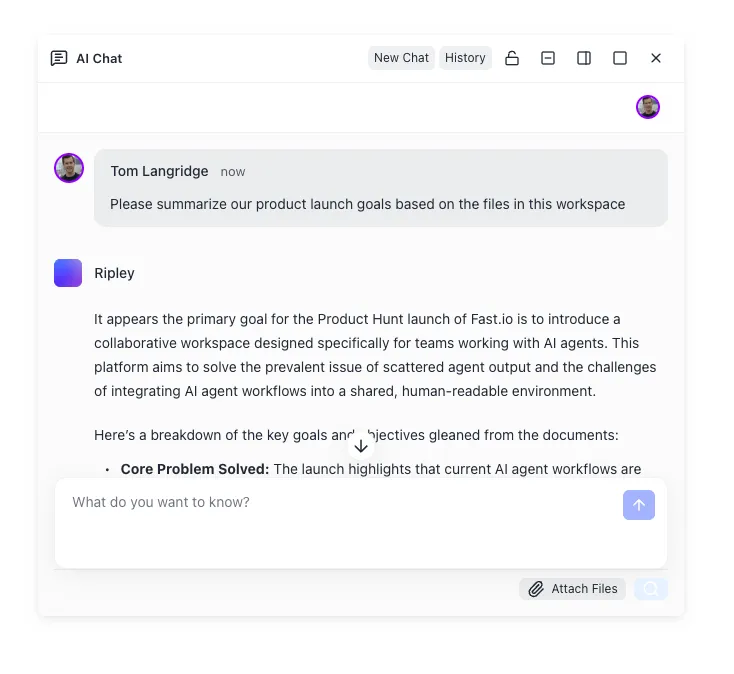

Fast.io Metadata Views extract structured fields from any document type using AI. Describe the columns you want in plain English, and get a sortable, filterable spreadsheet. No templates, no OCR rules, no code.

Catching and Preventing Hallucinated Metadata

The biggest risk with LLM extraction is confident wrong answers. A rule-based parser either finds the pattern or returns nothing. An LLM might generate a plausible contract date that doesn't exist in the document, or produce a vendor name from its training data rather than from the actual text on the page.

Hallucination in metadata extraction follows three patterns.

Fabricated values. The model invents data that looks correct but doesn't appear in the document. A date formatted like a contract effective date. A company name similar to the actual party but slightly different.

Inferred values. The model makes a reasonable guess from context without the information being explicitly stated. If a contract mentions California throughout, the model might return "California" as the governing law even when the governing law clause specifies New York.

Format errors. The model extracts the right information but applies the wrong formatting. Month and day swapped in dates. Currency symbols missing or incorrect. Names reordered unexpectedly.

Here's how to catch each type in practice.

Require source citations per field. Ask the model to return the exact text snippet it used for each extracted value. Then verify programmatically that the snippet actually exists in the source document. If the model cannot point to verbatim source text, the field is likely fabricated.

    {
      "effective_date": {
        "value": "2026-03-15",
        "source_text": "This Agreement is effective as of March 15, 2026",
        "page": 1
      }
    }
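Checking a citation programmatically can be as simple as a normalized substring test. The sketch below assumes the per-field shape shown above (`value`, `source_text`, `page`); the helper names are hypothetical, and whitespace and case are normalized because extracted PDF text often breaks lines mid-sentence.

```python
import re
from typing import Dict, List


def normalize(text: str) -> str:
    """Collapse whitespace and lowercase so line breaks don't cause false misses."""
    return re.sub(r"\s+", " ", text).strip().lower()


def citation_is_grounded(source_text: str, document_text: str) -> bool:
    """True when the cited snippet appears verbatim (after normalization) in the document."""
    snippet = normalize(source_text)
    return bool(snippet) and snippet in normalize(document_text)


def ungrounded_fields(extraction: Dict[str, dict], document_text: str) -> List[str]:
    """List fields that have a value but no verifiable source snippet."""
    return [
        name
        for name, field in extraction.items()
        if field.get("value") is not None
        and not citation_is_grounded(field.get("source_text") or "", document_text)
    ]


document_text = "This Agreement is effective as of\nMarch 15, 2026 between the parties."
extraction = {
    "effective_date": {
        "value": "2026-03-15",
        "source_text": "This Agreement is effective as of March 15, 2026",
        "page": 1,
    },
    "governing_law": {
        "value": "California",
        "source_text": "governed by the laws of California",
        "page": 3,
    },
}

suspect = ungrounded_fields(extraction, document_text)
print(suspect)
```

A grounded citation shows the snippet exists, not that the value was read from it correctly, so this check complements the validation steps rather than replacing them.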

Add a validation layer after extraction. Run every output through type-specific checks. Date fields must parse as valid dates. Numeric values must fall within reasonable ranges. Entity names should fuzzy-match against text found in the document. Enum fields like contract type or jurisdiction should match a known set of allowed values.
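A validation layer along these lines can be built with the standard library alone. In this sketch the allowed contract types, the numeric range, and the 0.85 similarity threshold are illustrative assumptions, and the field names follow the contract schema used earlier.

```python
from datetime import date
from difflib import SequenceMatcher
from typing import List

ALLOWED_CONTRACT_TYPES = {"nda", "msa", "sow", "lease"}  # hypothetical enum values


def is_valid_iso_date(value) -> bool:
    """True if the value parses as a real calendar date in ISO format."""
    try:
        date.fromisoformat(value)
        return True
    except (TypeError, ValueError):
        return False


def name_appears_in(name: str, document_text: str, threshold: float = 0.85) -> bool:
    """Fuzzy-match a name against every window of the same word count in the document."""
    words = document_text.split()
    size = len(name.split())
    if size == 0:
        return False
    target = name.lower()
    for start in range(len(words) - size + 1):
        window = " ".join(words[start:start + size]).lower()
        if SequenceMatcher(None, target, window).ratio() >= threshold:
            return True
    return False


def validate(fields: dict, document_text: str) -> List[str]:
    """Return a list of human-readable problems; an empty list means all checks passed."""
    errors = []
    if not is_valid_iso_date(fields.get("effective_date")):
        errors.append("effective_date: not a valid ISO date")
    total = fields.get("total_value")
    if total is not None and not (0 <= total <= 1_000_000_000):
        errors.append("total_value: outside the expected range")
    for party in fields.get("counterparties", []):
        if not name_appears_in(party, document_text):
            errors.append(f"counterparties: '{party}' not found in document")
    if fields.get("contract_type") not in ALLOWED_CONTRACT_TYPES:
        errors.append("contract_type: not an allowed value")
    return errors


doc = "This Agreement is between Acme Supply Co, and Globex LLC."
fields = {
    "effective_date": "2026-02-30",  # February 30 does not exist
    "total_value": 50000,
    "counterparties": ["Acme Supply Co.", "Initech Holdings Inc."],
    "contract_type": "msa",
}
problems = validate(fields, doc)
print(problems)
```

Returning a list of problems rather than raising on the first one lets a reviewer see every failed check for a document at once.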

Use confidence thresholds. Ask the model to rate its confidence for each field (high, medium, low). Route low-confidence fields to human review instead of accepting them automatically. In practice, this catches many hallucinated fields before they reach your database, though self-reported confidence is an imperfect signal and works best alongside the other checks described here.
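Routing on confidence is mostly bookkeeping. The sketch below assumes each field comes back as an object with `value` and `confidence` keys, which is a hypothetical output shape, and treats a missing confidence label as a reason for review.

```python
from typing import Dict, Tuple


def route_by_confidence(
    extraction: Dict[str, dict], auto_accept: Tuple[str, ...] = ("high",)
) -> Tuple[dict, dict]:
    """Split fields into auto-accepted values and values that need human review."""
    accepted, needs_review = {}, {}
    for name, field in extraction.items():
        # Anything without an accepted confidence label goes to review.
        target = accepted if field.get("confidence") in auto_accept else needs_review
        target[name] = field.get("value")
    return accepted, needs_review


extraction = {
    "effective_date": {"value": "2026-03-15", "confidence": "high"},
    "governing_law": {"value": "New York", "confidence": "low"},
    "total_value": {"value": 50000},  # no confidence reported
}
accepted, needs_review = route_by_confidence(extraction)
print(accepted, needs_review)
```

Widening `auto_accept` to include `"medium"` trades review workload against the risk of accepting a wrong value.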

Cross-validate with a second pass. Run extraction twice with different prompting strategies or different models, then compare results. Fields where both passes agree are almost always correct. Disagreements flag likely hallucinations for review. Self-consistency validation across multiple generations catches errors that single-pass extraction misses entirely.
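Comparing two passes reduces to a field-by-field diff. This is a minimal sketch with hypothetical helper names; it normalizes case and whitespace in strings so trivial formatting differences are not reported as disagreements.

```python
from typing import Any, Dict, Tuple


def canonical(value: Any) -> Any:
    """Normalize strings so case and spacing differences don't count as disagreement."""
    if isinstance(value, str):
        return " ".join(value.split()).lower()
    return value


def compare_passes(first: Dict[str, Any], second: Dict[str, Any]) -> Tuple[dict, dict]:
    """Return (agreed, disputed); disputed maps each field to both candidate values."""
    agreed, disputed = {}, {}
    for name in sorted(set(first) | set(second)):
        a, b = first.get(name), second.get(name)
        if canonical(a) == canonical(b):
            agreed[name] = a
        else:
            disputed[name] = (a, b)
    return agreed, disputed


pass_a = {"governing_law": "New York", "effective_date": "2026-03-15"}
pass_b = {"governing_law": "California", "effective_date": "2026-03-15"}
agreed, disputed = compare_passes(pass_a, pass_b)
print(agreed, disputed)
```

Fields in `disputed` are the ones to send for review; a field missing from one pass shows up there with `None` as one of the two values.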

Cost Comparison at Scale

The cost question around LLM extraction depends on document length, field complexity, and volume. It's rarely as simple as "LLMs are expensive."

Per-document costs with current models

A single-page invoice contains roughly 500 to 1,000 tokens when converted to text. With GPT-4o pricing around $2.50 per million input tokens (early 2026), the document text alone costs a fraction of a cent; once the schema, instructions, and output tokens are counted, extracting metadata from one invoice costs about $0.003 to $0.01. That's far less than the $0.20 to $1.00 figure older comparisons cite, which reflected previous-generation model pricing or unnecessarily verbose prompts.

Longer documents scale with page count. A 50-page contract runs 25,000 to 50,000 tokens, pushing per-document cost to $0.10 to $0.50 depending on the model. The two-pass chunking strategy described earlier cuts this significantly by sending only relevant pages.

Smaller models reduce costs further. Gemini Flash can process roughly 6,000 pages per dollar. Claude Haiku and GPT-4o Mini offer similar economics for straightforward extraction tasks where a flagship model's reasoning power isn't necessary.
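The arithmetic behind these estimates is easy to reproduce. In the sketch below, the $2.50 input price comes from the figures above; the $10.00 output price, the 500 tokens of prompt overhead, and the output token counts are assumptions chosen for illustration, so substitute your provider's current rates and your own measurements.

```python
def extraction_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> float:
    """Cost in USD of one extraction call, given token counts and per-million prices."""
    return (
        input_tokens * input_price_per_million
        + output_tokens * output_price_per_million
    ) / 1_000_000


INPUT_PRICE = 2.50    # USD per million input tokens (figure quoted in the text)
OUTPUT_PRICE = 10.00  # USD per million output tokens (assumption)

# One-page invoice: 1,000 document tokens plus an assumed 500 tokens of
# schema and instructions, and an assumed 300 tokens of JSON output.
invoice_cost = extraction_cost(1_000 + 500, 300, INPUT_PRICE, OUTPUT_PRICE)

# 50-page contract sent in full: 50,000 input tokens, assumed 500 output tokens.
contract_cost = extraction_cost(50_000, 500, INPUT_PRICE, OUTPUT_PRICE)

print(f"invoice: ${invoice_cost:.5f}  contract: ${contract_cost:.2f}")
```

Under these assumptions output tokens account for nearly half of the invoice cost, which is why terse output schemas matter for short documents.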

How traditional extraction compares

OCR-based extraction through AWS Textract or Google Document AI costs $0.001 to $0.01 per page. On a strict per-document basis, that's cheaper.

But total cost of ownership changes the math. Rule-based systems require:

- Initial template development: 40 to 200 hours of engineering per document type

- Ongoing maintenance: 5 to 20 hours monthly as document formats change

- Manual exception handling: staff time to process the documents that fail automated extraction

For organizations processing fewer than 100,000 varied documents per month, LLM extraction often costs less when engineering time is included. Above that volume, a hybrid approach (OCR for structured fields, LLM for context-dependent fields) usually offers the best economics.

Where managed platforms fit

If you want structured extraction without building or maintaining a pipeline, Fast.io's Metadata Views collapse the entire workflow into a single workspace feature. You describe the fields you want in natural language. The AI designs a typed schema with seven field types (Text, Integer, Decimal, Boolean, URL, JSON, Date & Time), scans your workspace files, and populates a sortable, filterable data grid. Adding a new column doesn't require reprocessing existing files. The workspace handles PDFs, images, Word docs, spreadsheets, presentations, and scanned pages.

For agent-driven workflows, Metadata Views are accessible through the Fast.io MCP server. Agents can create schemas, trigger extraction, and query results programmatically. The free agent plan includes 50 GB of storage and 5,000 monthly credits with no credit card required.

Dedicated extraction platforms like Parsio and Unstract offer similar managed experiences with more customization around pipeline orchestration. The right choice depends on how much of the pipeline you want to own.

Building a Production Extraction Pipeline

A production metadata extraction system needs four layers: ingestion, text extraction, LLM processing, and output handling.

Ingestion is where documents enter the system, through uploads, API calls, email attachments, or cloud sync. The ingestion layer normalizes file types and routes documents to a processing queue. Event-driven architectures work best here. Webhooks trigger extraction the moment a file arrives, eliminating the latency and waste of scheduled polling.

Text extraction converts documents into machine-readable text. PDFs with embedded text go straight to the LLM. Scanned documents, images, and handwritten notes need OCR first. Open-source options like Tesseract handle basic OCR. Cloud services like AWS Textract and Google Document AI provide higher accuracy on complex layouts with table detection and form parsing.

LLM processing is the core extraction step. It combines your schema definition, few-shot examples, and document text into a single prompt. For production reliability, add retry logic with exponential backoff for API rate limits, model fallback if your primary provider goes down, and output validation against the schema before accepting results.

Here's a minimal Python implementation using OpenAI's structured output:

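    import json

    from openai import OpenAI

    client = OpenAI()  # reads OPENAI_API_KEY from the environment

    # Placeholder: in a real pipeline this is the output of the text-extraction layer.
    document_text = "..."

    # Strict structured output expects every property to be listed in "required"
    # and "additionalProperties" set to False, so fields that may be missing are
    # declared nullable rather than optional.
    schema = {
        "type": "object",
        "properties": {
            "vendor_name": {"type": ["string", "null"]},
            "invoice_number": {"type": ["string", "null"]},
            "invoice_date": {
                "type": ["string", "null"],
                "description": "Invoice date in ISO 8601 format (YYYY-MM-DD)",
            },
            "line_items": {
                "type": "array",
                "items": {
                    "type": "object",
                    "properties": {
                        "description": {"type": "string"},
                        "quantity": {"type": "number"},
                        "unit_price": {"type": "number"},
                    },
                    "required": ["description", "quantity", "unit_price"],
                    "additionalProperties": False,
                },
            },
            "total": {"type": ["number", "null"]},
        },
        "required": ["vendor_name", "invoice_number", "invoice_date", "line_items", "total"],
        "additionalProperties": False,
    }

    response = client.responses.create(
        model="gpt-4o",
        input=[
            {
                "role": "system",
                "content": (
                    "Extract invoice metadata from the document. "
                    "Return only values present in the text. "
                    "Set missing fields to null."
                ),
            },
            {"role": "user", "content": document_text},
        ],
        text={
            "format": {
                "type": "json_schema",
                "name": "invoice_metadata",  # the API requires a name for the schema
                "schema": schema,
                "strict": True,
            }
        },
    )

    # output_text holds the JSON string produced under the schema.
    metadata = json.loads(response.output_text)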
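The retry and fallback logic mentioned above can be sketched independently of any provider SDK. This is a minimal illustration under stated assumptions: `call` is any function that takes a model name and either returns a result or raises, the model names are placeholders, and a real implementation would catch only rate-limit and timeout errors instead of every exception.

```python
import random
import time
from typing import Any, Callable, Sequence


def call_with_fallback(
    call: Callable[[str], Any],
    models: Sequence[str],
    max_attempts: int = 3,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> Any:
    """Try each model in order, retrying failures with exponential backoff and jitter."""
    last_error = None
    for model in models:
        for attempt in range(max_attempts):
            try:
                return call(model)
            except Exception as error:  # narrow to transient errors in real code
                last_error = error
                if attempt < max_attempts - 1:
                    # Delays grow as 1s, 2s, 4s, ... plus a little random jitter.
                    sleep(base_delay * (2 ** attempt) + random.uniform(0, 0.1))
    raise RuntimeError("all models failed") from last_error


calls, delays = [], []


def flaky_extract(model: str) -> dict:
    # Simulated provider: the primary model is down, the fallback works.
    calls.append(model)
    if model == "primary-model":
        raise TimeoutError("simulated outage")
    return {"model": model, "vendor_name": "Acme Supply Co."}


result = call_with_fallback(
    flaky_extract, ["primary-model", "fallback-model"], sleep=delays.append
)
print(result["model"], len(calls))
```

Passing `sleep` as a parameter keeps the function testable without real waiting; in production the default `time.sleep` applies.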

Output handling routes extracted metadata to its destination. PostgreSQL with JSONB columns handles variable schemas well. Elasticsearch or Typesense work if the metadata feeds a search interface. Some teams write results back to the file's metadata record in their storage platform.

If you're already storing files in Fast.io, Metadata Views skip the pipeline entirely. Upload documents to a workspace, define the fields you want, and extraction runs automatically. For custom pipelines, the same workspace can serve as both the document store and the extraction output destination, with Intelligence Mode indexing everything for search and chat alongside the structured metadata.

Frequently Asked Questions

How do you use AI to extract metadata from documents?

Upload the document to an LLM-based extraction system, define the metadata fields you want (date, author, entities, amounts), and the model reads the document text and returns those fields as structured data. You can use API-based approaches with models like GPT-4o or Claude, or managed platforms like Fast.io Metadata Views that handle the entire pipeline from document upload to queryable spreadsheet output without code.

Is LLM metadata extraction accurate?

Current benchmarks show GPT-4o achieving F1 scores between 0.91 and 0.97 on zero-shot metadata extraction tasks. Accuracy depends on field complexity: simple fields like dates and titles score at the high end, while domain-specific fields like contract clause types require more careful prompting. Adding few-shot examples, structured output schemas, and validation layers improves reliability further.

What is the difference between rule-based and LLM metadata extraction?

Rule-based extraction uses predefined patterns (regex, templates, XPath) to find specific fields in known document layouts. It's fast and cheap but breaks when documents deviate from expected formats. LLM extraction reads documents contextually, handling layout variations and synonym differences without custom rules. LLMs cost more per document at high volume but require far less engineering time to support new document types.

How do you prevent hallucinated metadata from LLMs?

Four techniques reduce hallucination in extracted metadata. First, require the model to cite the exact source text for each field and verify the citation exists in the document. Second, validate output types and ranges programmatically (dates must parse, numbers must be reasonable). Third, use confidence scores and route low-confidence fields to human review. Fourth, run extraction twice with different prompts or models and flag any disagreements for manual inspection.

How much does LLM metadata extraction cost per document?

With current model pricing, a single-page document costs roughly $0.003 to $0.01 to process through GPT-4o. Longer documents scale with token count, and a 50-page contract might cost $0.10 to $0.50. Smaller models like Gemini Flash or Claude Haiku can process about 6,000 pages per dollar. Total cost of ownership often favors LLMs over rule-based systems for varied document types because LLMs eliminate template development and maintenance costs.
