Building Shared Workspaces for LangGraph Multi-Agent Teams

A shared workspace for LangGraph teams gives agents a central spot to access shared files, state, and tools. We'll look at how to move beyond local checkpoints to build reliable collaborative environments for complex agent systems with human oversight.

What to Check Before Scaling Shared Workspaces for LangGraph Multi-Agent Teams

LangGraph is a popular framework for building stateful, multi-agent systems. While most developers start with simple graphs and local state, real-world applications often need a more reliable setup. The real challenge isn't just the logic flow; it's managing the environment where those agents actually work.

In production, agents rarely work alone. A research agent might download a PDF that a summarization agent needs to read, and then a writer agent has to turn those insights into a draft. Without a shared space, knowledge stays trapped in individual graph nodes, which leaves you with fragmented memory.

A shared workspace solves this by providing a unified file system and state repository. Agents can then pass file references and structured data objects to each other instead of raw text strings. This turns a collection of scripts into a cohesive team that can handle multi-step projects.


5 Key Components of a LangGraph Team Workspace

To build a workspace for multi-agent collaboration, you need five specific layers. These parts keep agents aligned, data persistent, and humans in control.

1. Unified State Repository: A central place where the status of every task is stored and accessible to every agent in the graph.
2. Shared File System: A common storage area where agents can read from and write to the same set of documents without local I/O bottlenecks.
3. Human-in-the-Loop Interface: A UI that lets human team members see agent work, provide corrections, and approve final outputs.
4. Persistent Checkpointing: A mechanism to save the state of the workspace at every step, allowing for error recovery and debugging.
5. Tool Orchestration Layer: A standardized tool interface, such as the Model Context Protocol (MCP), that lets agents interact with the workspace and external services.

Using these layers keeps agents in sync. When there’s one source of truth, you avoid the "broken telephone" effect where context disappears as it moves through the graph.

Scale Your LangGraph Teams with Shared Workspaces

Give your agents a persistent home with 50GB free storage, 251 MCP tools, and built-in human-agent collaboration. No credit card required. Built for shared workspace langgraph multi agent teams workflows.

Implementing Cross-Agent File Sharing

Most LangGraph tutorials skip cross-agent file management. LangGraph manages message state, but it has no built-in file system that persists across nodes. If one agent generates a report, the next agent may have no reliable way to find it.

You can fix this by adding a storage layer that understands the workspace. Using Fastio workspaces as the underlying storage gives your agents a persistent file system via MCP tools. When Agent A creates a file, it is indexed and available for Agent B immediately. This handoff is much more reliable than just passing text strings between nodes.
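A minimal sketch of that handoff, assuming a local directory stands in for the shared workspace (in the setup described above, the storage would sit behind Fastio's MCP tools instead). The helpers `save_artifact` and `load_artifact` and the state keys `workspace_root` and `artifacts` are hypothetical. Agent A writes a file and puts only its workspace-relative path into graph state; Agent B resolves that path.

```python
import json
import tempfile
from pathlib import Path


def save_artifact(workspace_root: Path, name: str, payload: dict) -> str:
    """Write a JSON artifact and return its workspace-relative path (the file pointer)."""
    path = workspace_root / name
    path.parent.mkdir(parents=True, exist_ok=True)
    path.write_text(json.dumps(payload), encoding="utf-8")
    return name


def load_artifact(workspace_root: Path, ref: str) -> dict:
    """Resolve a file pointer produced by another agent."""
    return json.loads((workspace_root / ref).read_text(encoding="utf-8"))


def research_agent(state: dict) -> dict:
    ref = save_artifact(Path(state["workspace_root"]), "reports/findings.json",
                        {"topic": "LangGraph", "sources": 3})
    # Only the reference travels through graph state, not the file contents.
    return {"artifacts": state["artifacts"] + [ref]}


def writer_agent(state: dict) -> dict:
    report = load_artifact(Path(state["workspace_root"]), state["artifacts"][-1])
    return {"draft": f"Draft on {report['topic']} from {report['sources']} sources"}


# A temporary directory plays the role of the shared workspace for this demo.
with tempfile.TemporaryDirectory() as root:
    state = {"workspace_root": root, "artifacts": []}
    state.update(research_agent(state))
    state.update(writer_agent(state))
```

Keeping state small (paths, not payloads) also keeps checkpoints small, since every snapshot of the state stays a handful of strings.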

For example, a Research Agent might save source documents to a workspace. The Writing Agent then uses the workspace ID to find those files. Since the workspace handles the indexing, the Writing Agent can search through them using semantic search or RAG. The agent doesn't need the full text in its prompt, which saves on token costs and reduces hallucinations.
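The retrieval idea can be sketched with a deliberately simple ranking. Here, keyword overlap stands in for semantic search (a real workspace index would compare embeddings), and `top_k_chunks` and the sample documents are hypothetical. Only the top-ranked snippets reach the writer's prompt.

```python
import re
from collections import Counter


def tokenize(text: str) -> list[str]:
    return re.findall(r"[a-z0-9]+", text.lower())


def top_k_chunks(documents: dict[str, str], query: str, k: int = 2) -> list[tuple[str, int]]:
    """Rank workspace documents by how often they contain the query terms.

    Keyword overlap is a stand-in for semantic search, which would compare embeddings.
    """
    query_terms = set(tokenize(query))
    scores = []
    for name, text in documents.items():
        counts = Counter(tokenize(text))
        score = sum(counts[term] for term in query_terms)
        if score > 0:
            scores.append((name, score))
    # Highest score first; ties broken by file name for deterministic output.
    scores.sort(key=lambda item: (-item[1], item[0]))
    return scores[:k]


workspace_docs = {
    "paper_a.txt": "Checkpointing lets LangGraph resume a thread after a failure.",
    "paper_b.txt": "Shared storage gives agents one source of truth for files.",
    "notes.txt": "Lunch order for Friday.",
}

hits = top_k_chunks(workspace_docs, "How does checkpointing help a failure?")
# Only the best-matching snippets go into the writer agent's prompt.
context = "\n".join(workspace_docs[name] for name, _ in hits)
```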

This approach also allows for multi-modal collaboration. A data agent could write a CSV file, a Python-interpreter agent could generate a visualization from it, and a final presentation agent could then assemble those assets into a slide deck. Each agent performs its specialized task, but they all contribute to a single, persistent output that grows in value as the work moves forward.
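The three-agent pipeline can be sketched with the standard library alone, assuming a temporary directory stands in for the workspace. The agent functions, file names, and sales figures are hypothetical, and a text bar chart replaces a real plotting library so the example has no dependencies. Each agent reads the previous agent's file by reference and writes a new asset.

```python
import csv
import tempfile
from pathlib import Path


def data_agent(root: Path) -> str:
    rows = [("region", "sales"), ("north", 120), ("south", 80), ("west", 40)]
    with (root / "sales.csv").open("w", newline="", encoding="utf-8") as f:
        csv.writer(f).writerows(rows)
    return "sales.csv"


def chart_agent(root: Path, csv_ref: str) -> str:
    with (root / csv_ref).open(newline="", encoding="utf-8") as f:
        data = [(row["region"], int(row["sales"])) for row in csv.DictReader(f)]
    peak = max(value for _, value in data)
    # Text bar chart: 20 characters represent the largest value.
    lines = [f"{region:<6}{'#' * round(20 * value / peak)} {value}" for region, value in data]
    (root / "sales_chart.txt").write_text("\n".join(lines), encoding="utf-8")
    return "sales_chart.txt"


def presentation_agent(root: Path, refs: list[str]) -> str:
    # One "slide" per upstream asset, in the order the refs were produced.
    slides = [f"--- Slide {i}: {ref} ---\n{(root / ref).read_text(encoding='utf-8')}"
              for i, ref in enumerate(refs, start=1)]
    (root / "deck.txt").write_text("\n\n".join(slides), encoding="utf-8")
    return "deck.txt"


with tempfile.TemporaryDirectory() as tmp:
    root = Path(tmp)
    csv_ref = data_agent(root)
    chart_ref = chart_agent(root, csv_ref)
    deck_ref = presentation_agent(root, [csv_ref, chart_ref])
    deck = (root / deck_ref).read_text(encoding="utf-8")
```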

Managing Multi-Human Oversight

AI agents are powerful but not perfect. Success rates usually drop when things get complex, so human oversight is a must. A shared workspace connects the background work of the graph with the needs of a human manager.

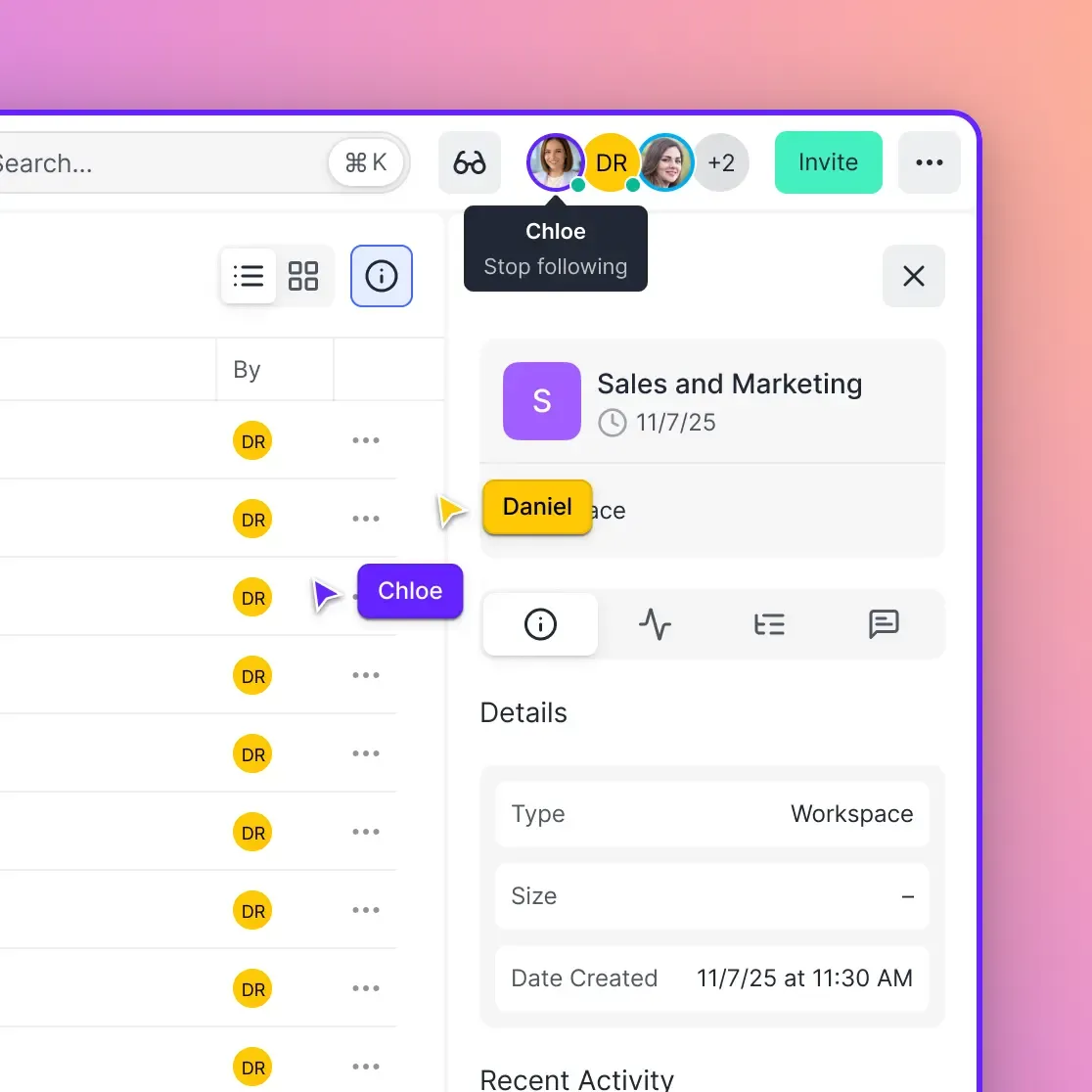

In a shared workspace, humans and agents work in the same environment. You can open the UI to see files an agent is generating as it happens. If an agent goes off track, you can edit a file or update a state variable, which the agent will see in its next turn. You need this kind of transparency for high-stakes work like legal reviews or medical data analysis.
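For a human edit to show up "in its next turn", the agent has to re-read the shared file each turn rather than caching it. A minimal sketch, assuming a local file stands in for a workspace document; `DraftingAgent` is hypothetical, and a SHA-256 fingerprint is one simple way to tell the agent's own writes apart from outside edits.

```python
import hashlib
import tempfile
from pathlib import Path
from typing import Optional


class DraftingAgent:
    """Hypothetical agent that re-reads the shared file every turn instead of caching it."""

    def __init__(self, path: Path) -> None:
        self.path = path
        self.last_seen: Optional[str] = None  # fingerprint of the last version we saw

    def write(self, text: str) -> None:
        self.path.write_text(text, encoding="utf-8")
        # Record our own write so it is not mistaken for an outside edit.
        self.last_seen = hashlib.sha256(self.path.read_bytes()).hexdigest()

    def take_turn(self) -> str:
        data = self.path.read_bytes()  # read once so hash and text match
        current = hashlib.sha256(data).hexdigest()
        edited_elsewhere = self.last_seen is not None and current != self.last_seen
        self.last_seen = current
        text = data.decode("utf-8")
        if edited_elsewhere:
            return f"Noticed an outside edit; continuing from: {text}"
        return f"Working on: {text}"


with tempfile.TemporaryDirectory() as tmp:
    agent = DraftingAgent(Path(tmp) / "brief.md")
    agent.write("Clause 4: liability capped at $1M")
    first = agent.take_turn()
    # A human reviewer edits the same file through the workspace UI.
    agent.path.write_text("Clause 4: liability capped at $500k", encoding="utf-8")
    second = agent.take_turn()
```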

This "shared scratchpad" model works better than simple chat interfaces. It allows for asynchronous collaboration where humans set the strategy and agents handle the details. Ownership transfer features let an agent build a workspace and then hand over full control to a client or manager once the task is done. The user gets a finished product, not just a stream of text.

Evidence and Benchmarks: The Cost of Misalignment

Data on multi-agent systems shows why shared workspaces matter. Coordination is about context, not just logic. When context is fragmented, systems fail more often. Without a shared source of truth, agents can lose the thread of the conversation or contradict each other.

According to research on multi-agent frameworks, systems like MetaGPT and ChatDev can face major hurdles on real-world tasks. Studies indicate that multi-agent LLM systems can fail 40% to 80% of the time depending on complexity. Most failures were traced to agents getting out of sync with one another or to specification errors. These issues happen when agents don't have a shared understanding of the project and the files they are working on.

Salesforce's CRMArena-Pro research found that success rates on complex tasks drop from 58% in single-turn settings to 35% when the same tasks require multi-turn interaction. Using a shared workspace provides a coordination layer that makes these interactions more stable. A shared file system and unified state repository make sure every agent works from the same context. This setup reduces errors and helps ensure the final output meets quality standards.
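In relative terms, the drop from 58% to 35% cited above means roughly 40% fewer tasks succeed:

```latex
\text{relative decline} = \frac{58\% - 35\%}{58\%} = \frac{23}{58} \approx 0.40
```

That is a 23-percentage-point loss, or about two in five previously successful tasks now failing.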

Step-by-Step: Setting Up Your LangGraph Workspace

Follow these steps to build a reliable shared workspace for your LangGraph team. These steps assume you are using LangGraph in a Python environment and want to integrate persistent workspace storage.

1. Define Your Global State

Start by creating a TypedDict that includes the message history, a workspace_id, and a shared_context field. This way, every node knows exactly where the work is happening. You might also include a current_task_index to help agents track their progress through a long project.
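A minimal sketch of such a state, using only the standard library. The field names follow the paragraph above; the sample `workspace_id` value is hypothetical. `Annotated[list, operator.add]` marks `messages` with a reducer so LangGraph appends node outputs instead of overwriting them; LangGraph's own `add_messages` reducer (from `langgraph.graph.message`) is the usual alternative when messages are chat-message objects.

```python
import operator
from typing import Annotated, Any, TypedDict


class TeamState(TypedDict):
    # Reducer-annotated: updates to this key are concatenated, not replaced.
    messages: Annotated[list, operator.add]
    workspace_id: str               # which shared workspace every node should use
    shared_context: dict[str, Any]  # small structured facts (file refs, decisions)
    current_task_index: int         # progress through a long project


initial: TeamState = {
    "messages": [],
    "workspace_id": "ws-demo-123",  # hypothetical ID
    "shared_context": {},
    "current_task_index": 0,
}


def research_node(state: TeamState) -> dict[str, Any]:
    # Nodes return partial updates; keys they don't touch can be omitted.
    return {
        "messages": [("assistant", f"Saved sources to workspace {state['workspace_id']}")],
        "current_task_index": state["current_task_index"] + 1,
    }


update = research_node(initial)
# Roughly how LangGraph applies the update: the reducer concatenates
# messages, and plain keys are overwritten.
merged = {**initial, **update,
          "messages": operator.add(initial["messages"], update["messages"])}
```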

2. Connect Your Persistence Layer

Pick a checkpointer that supports external storage. SQLite is fine for testing, but a production setup needs a shared database server such as PostgreSQL. This lets multiple instances of your graph share the same history, making it easier to scale. Fastio's Durable Objects-based state management can also be used here for reliable session tracking.
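To show what a checkpointer does under the hood, here is a toy version built on Python's `sqlite3`: one JSON snapshot per thread per step, plus a lookup for the latest snapshot to resume from. `ToyCheckpointer` is hypothetical and is not LangGraph's interface; in a real graph you would pass LangGraph's `SqliteSaver` (package `langgraph-checkpoint-sqlite`) or `PostgresSaver` (package `langgraph-checkpoint-postgres`) to `compile(checkpointer=...)`.

```python
import json
import sqlite3
from typing import Any, Optional


class ToyCheckpointer:
    """Illustrative only: stores one JSON snapshot per (thread_id, step)."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS checkpoints ("
            " thread_id TEXT, step INTEGER, state TEXT,"
            " PRIMARY KEY (thread_id, step))"
        )

    def put(self, thread_id: str, step: int, state: dict[str, Any]) -> None:
        with self.conn:  # commits the transaction on success
            self.conn.execute(
                "INSERT OR REPLACE INTO checkpoints VALUES (?, ?, ?)",
                (thread_id, step, json.dumps(state)),
            )

    def latest(self, thread_id: str) -> Optional[tuple[int, dict[str, Any]]]:
        row = self.conn.execute(
            "SELECT step, state FROM checkpoints WHERE thread_id = ?"
            " ORDER BY step DESC LIMIT 1",
            (thread_id,),
        ).fetchone()
        return None if row is None else (row[0], json.loads(row[1]))


# ":memory:" keeps the demo self-contained; with a file or server-backed
# database, any process that can open it could resume the same thread.
saver = ToyCheckpointer(sqlite3.connect(":memory:"))
saver.put("project-42", 0, {"current_task_index": 0})
saver.put("project-42", 1, {"current_task_index": 1, "draft_ref": "drafts/v1.md"})
resumed = saver.latest("project-42")
```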

3. Register Workspace Tools

Equip your agents with tools for file management. Using the Fastio MCP server is the most efficient path. This gives your agents multiple tools for reading, writing, searching, and managing files within the shared workspace. Pass the workspace_id from your state to these tools. This keeps the agent working in the right context every time.
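A minimal sketch of binding tools to the workspace ID from state, assuming a local directory per workspace. `make_workspace_tools` and its three tools are hypothetical and do not mirror the Fastio MCP tool set. Because the ID is captured in a closure, the model cannot pick a different workspace, and paths that try to escape the workspace root are rejected.

```python
import tempfile
from pathlib import Path
from typing import Callable


def make_workspace_tools(workspace_id: str, base_dir: Path) -> dict[str, Callable]:
    """Build file tools bound to one workspace (hypothetical, for illustration)."""
    root = (base_dir / workspace_id).resolve()
    root.mkdir(parents=True, exist_ok=True)

    def _resolve(rel_path: str) -> Path:
        target = (root / rel_path).resolve()
        # Block "../" tricks that would reach another workspace.
        if not target.is_relative_to(root):
            raise ValueError(f"{rel_path!r} escapes workspace {workspace_id!r}")
        return target

    def write_file(rel_path: str, content: str) -> str:
        target = _resolve(rel_path)
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(content, encoding="utf-8")
        return rel_path

    def read_file(rel_path: str) -> str:
        return _resolve(rel_path).read_text(encoding="utf-8")

    def list_files() -> list[str]:
        return sorted(p.relative_to(root).as_posix() for p in root.rglob("*") if p.is_file())

    return {"write_file": write_file, "read_file": read_file, "list_files": list_files}


with tempfile.TemporaryDirectory() as tmp:
    state = {"workspace_id": "ws-demo-123"}  # hypothetical ID taken from graph state
    tools = make_workspace_tools(state["workspace_id"], Path(tmp))
    tools["write_file"]("notes/summary.md", "Key findings")
    listing = tools["list_files"]()
    text = tools["read_file"]("notes/summary.md")
    try:
        tools["read_file"]("../other-ws/secret.md")
        escaped = True
    except ValueError:
        escaped = False
```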

4. Implement Human Checkpoints

Pass interrupt_before or interrupt_after to LangGraph's compile() step, together with a checkpointer, to pause at key nodes. For example, you might interrupt the graph before a final file is emailed to a client. The graph saves its state and waits for human input. You can then use the workspace UI to review the agent's work, make direct edits if needed, and resume the graph.

5. Orchestrate the Flow with File Logic

Configure your graph edges to handle transitions between agents based on what is in the shared workspace. Instead of just looking at the last message, have your router function check for specific files. For instance, if a quality_score.json file in the workspace contains a low value, the graph should route back to the "refinement" agent automatically.
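A minimal router sketch, assuming the workspace is a local directory stored in state under a hypothetical `workspace_root` key and that `quality_score.json` holds a hypothetical `{"score": ...}` object. The node names `review`, `refinement`, and `publish` and the 0.7 threshold are also illustrative. In a LangGraph graph, a function like this would be passed to `builder.add_conditional_edges("review", route_on_quality)`.

```python
import json
import tempfile
from pathlib import Path

QUALITY_THRESHOLD = 0.7  # hypothetical cut-off


def route_on_quality(state: dict) -> str:
    """Pick the next node from a file in the workspace, not from the last message."""
    score_file = Path(state["workspace_root"]) / "quality_score.json"
    if not score_file.exists():
        return "review"  # no score yet: send the work to the (hypothetical) scoring node
    score = json.loads(score_file.read_text(encoding="utf-8"))["score"]
    return "refinement" if score < QUALITY_THRESHOLD else "publish"


with tempfile.TemporaryDirectory() as tmp:
    state = {"workspace_root": tmp}
    before = route_on_quality(state)
    (Path(tmp) / "quality_score.json").write_text(json.dumps({"score": 0.4}), encoding="utf-8")
    low = route_on_quality(state)
    (Path(tmp) / "quality_score.json").write_text(json.dumps({"score": 0.9}), encoding="utf-8")
    high = route_on_quality(state)
```

Routing on a file means a human who edits the score in the workspace UI can also redirect the graph, without touching the message history.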

Frequently Asked Questions

How do multiple LangGraph agents share files?

Multiple agents share files by connecting to a centralized workspace via MCP (Model Context Protocol) or a shared API. Instead of passing file contents as text in messages, agents pass file references or paths. One agent writes the file to the shared storage, and subsequent agents use tools to list, read, or search that storage area.

Can LangGraph handle multi-user collaboration?

Yes, LangGraph can handle multi-user collaboration by using persistent checkpointers and shared workspaces. By storing the graph state in a distributed database and providing a shared UI for humans, multiple team members can observe and interact with the same agentic workflow simultaneously.

What is the best way to persist LangGraph state?

The best way to persist LangGraph state for teams is a database-backed checkpointer, such as one built on PostgreSQL, or a specialized state management service. This ensures that the state is not tied to a single local machine and can be recovered or audited by multiple agents and humans across different sessions.

What are the common causes of multi-agent failure?

Most failures happen because agents get out of sync, miss specifications, or fail to verify tasks. Research on multi-agent systems attributes a large share of failures to agents losing context or miscommunicating during handoffs, and another large share to specification issues. A shared workspace helps fix this by providing one source of truth for all agents.

How does a shared workspace improve RAG for agents?

A shared workspace improves RAG by providing a unified index for all project-related documents. When an agent uploads a new file, it is automatically indexed. This allows any other agent in the team to query the entire knowledge base of the workspace using semantic search, ensuring they always have the most relevant context.
