How to Master Haystack AI File Storage for RAG Pipelines

Haystack AI file storage uses two systems: one for raw source files and another for processed vector data. While Haystack works well with vector stores like Weaviate and Elasticsearch, managing the original PDF, TXT, and media files is often a problem for production AI agents.

What is Haystack AI File Storage?

Haystack AI file storage connects two different storage types: Document Stores for processed vectors and Source Storage for raw files. Deepset's Haystack framework manages the retrieval of information, but it relies on external systems to store and protect the underlying data.

In a production RAG (Retrieval-Augmented Generation) pipeline, "storage" happens in two stages:

1. Raw File Ingestion: Where your original PDFs, Markdown files, and images live (e.g., Fastio, S3, local disk).

2. Document Store Indexing: Where Haystack stores the processed text chunks and vector embeddings (e.g., Weaviate, Pinecone, Elasticsearch).

Most developers only focus on the second stage and ignore the "Source of Truth" storage. But without a good system for the raw files, updating your index when documents change is difficult.

Also, keeping your raw data separate from your vector index is important for data control. If your vector database breaks or needs to move to a new provider (e.g., moving from Pinecone to Weaviate), having a clean, organized set of source files lets you re-index your whole knowledge base in minutes rather than days. This setup ensures long-term stability and flexibility for your AI apps.
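One way to keep the raw files usable as a source of truth is to record a content hash per file and compare snapshots, so that only new or modified documents are re-indexed. The following is a minimal sketch using only the Python standard library; the function names (`build_manifest`, `diff_manifests`) are illustrative and are not part of Haystack or Fastio.

```python
import hashlib
from pathlib import Path


def file_digest(path: Path, chunk_size: int = 1 << 20) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks to bound memory."""
    digest = hashlib.sha256()
    with path.open("rb") as handle:
        for block in iter(lambda: handle.read(chunk_size), b""):
            digest.update(block)
    return digest.hexdigest()


def build_manifest(root: Path) -> dict[str, str]:
    """Map each file path (relative to root, POSIX style) to its content hash."""
    return {
        p.relative_to(root).as_posix(): file_digest(p)
        for p in sorted(root.rglob("*"))
        if p.is_file()
    }


def diff_manifests(old: dict[str, str], new: dict[str, str]) -> dict[str, list[str]]:
    """Classify paths as added, changed, or removed between two snapshots."""
    return {
        "added": sorted(k for k in new if k not in old),
        "changed": sorted(k for k in new if k in old and old[k] != new[k]),
        "removed": sorted(k for k in old if k not in new),
    }
```

In practice, the "added" and "changed" paths would be passed to your indexing pipeline, and the "removed" paths would be deleted from the Document Store. A full rebuild after a provider migration is the special case where the old manifest is empty.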


Top 4 Document Stores for Haystack

For the vector storage layer, Haystack connects with over 10 different backends. Choosing the right one depends on your scale and search needs.

1. Elasticsearch / OpenSearch: Best for hybrid search. If you need to combine traditional keyword search (BM25) with dense vector retrieval, Elasticsearch is a well-established choice. It is reliable, widely supported, and handles metadata filtering well.

2. Weaviate: Best for pure vector performance. Weaviate is a cloud-native vector database that integrates easily with Haystack. It offers modular vectorization and is built for speed at scale, making it a good fit for real-time agent responses.

3. Pinecone: Best for managed simplicity. As a fully managed service, Pinecone removes the work of running a vector DB. It is a popular choice for teams that want to start building agents immediately without managing infrastructure.

4. Milvus: Best for massive scale. Milvus is an open-source vector database built for scalable similarity search. It is highly distributed and designed to handle billions of vectors, which makes it a strong choice for large enterprises with massive datasets. Its cloud-native design supports high availability.
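To make "hybrid search" concrete: a hybrid retriever has to merge a keyword (BM25) ranking and a dense-vector ranking into one list. One common fusion technique is reciprocal rank fusion (RRF), which scores each document as the sum of 1 / (k + rank) across the input rankings. The sketch below is a standalone illustration of that idea in plain Python. It is not how any particular backend listed above implements hybrid search internally, and the function name and the default `k = 60` are assumptions chosen for the example.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists of document IDs into a single fused ranking."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        # Ranks start at 1, so the best hit in each list contributes 1 / (k + 1).
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties fall back to document ID for determinism.
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


keyword_hits = ["a", "b", "c"]  # e.g. a BM25 ranking
dense_hits = ["c", "a", "d"]    # e.g. an embedding-similarity ranking
fused = reciprocal_rank_fusion([keyword_hits, dense_hits])
```

Documents that appear near the top of both lists (here "a" and "c") rise above documents that only one retriever found.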

Give Your AI Agents Persistent Storage

Give your AI agents instant access to terabytes of data with Fastio's global edge storage. Free for developers.

The Missing Link: Managing Raw Source Files

While Document Stores hold your vectors, they aren't designed to be file systems. You shouldn't store large PDFs directly in a vector database. This introduces a gap: Where do the agents read the files from?

Efficient RAG pipelines need a high-performance "staging area" for raw data. This storage layer must:

- Support High Throughput: Agents need to read thousands of files quickly during the indexing phase.

- Provide Direct Access: Files should be accessible via standard protocols or APIs without complex presigned URL steps.

- Handle Large Volumes: Production datasets often exceed terabytes of unstructured data.

Using a standard object store often adds latency to each read. A specialized file platform like Fastio addresses this by offering a global edge network that serves files to your indexing agents from a nearby node.
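The high-throughput requirement mostly comes down to not reading files one at a time. As a rough sketch, assuming the source files are reachable as ordinary paths (for example on a mounted drive), a thread pool can overlap the I/O waits. The names `read_file` and `read_all` and the worker count are illustrative choices, not part of any library.

```python
from concurrent.futures import ThreadPoolExecutor
from pathlib import Path


def read_file(path: Path) -> tuple[str, bytes]:
    """Read one file and return (path as string, raw bytes)."""
    return str(path), path.read_bytes()


def read_all(paths: list[Path], max_workers: int = 16) -> dict[str, bytes]:
    """Read many files concurrently; threads suit I/O-bound work like this."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # pool.map preserves input order and re-raises the first read error.
        return dict(pool.map(read_file, paths))
```

For datasets that do not fit in memory, the same pattern works with batches of paths, handing each batch to the converter before reading the next.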

How to Integrate Fastio with Haystack

Integrating Fastio as your source storage for Haystack is simple. By mounting Fastio as a drive or using its direct edge links, you can feed your indexing pipelines with minimal added latency.

Step 1: Organize Your Knowledge Base

Create a Fastio workspace and organize your raw documents (PDFs, DOCX, MD) into a structured folder hierarchy. This structure becomes your "Source of Truth."

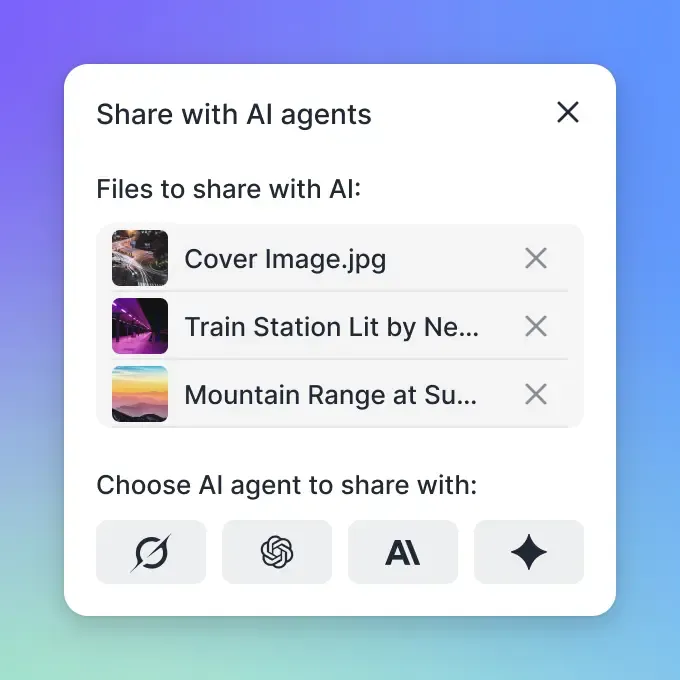

Step 2: Connect via MCP or API

Use the Fastio MCP server to give your AI agents direct access to list and read these files. Fastio also provides real-time file events, which your pipeline can use to detect changes.

Step 3: Trigger Indexing on Change

Configure your pipeline to listen for webhooks. When a file is updated in Fastio, automatically trigger your Haystack indexing pipeline to re-process just that file and update the Document Store.
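The event-driven trigger described above can be sketched as a small dispatch function that sits behind whatever HTTP endpoint receives the webhook. The payload fields (`event`, `path`) and the event names below are hypothetical placeholders, since the actual webhook schema is not given here; check your storage provider's documentation for the real shape. The `reindex` and `delete` callbacks stand in for your own code that runs the indexing pipeline or removes documents from the Document Store.

```python
import json
from typing import Callable

# Hypothetical event names; replace with the ones your storage provider sends.
REINDEX_EVENTS = {"file.created", "file.updated"}
DELETE_EVENTS = {"file.deleted"}


def handle_file_event(
    raw_body: bytes,
    reindex: Callable[[str], None],
    delete: Callable[[str], None],
) -> str:
    """Route one webhook payload to the matching indexing action."""
    event = json.loads(raw_body)
    kind = event.get("event")  # hypothetical field name
    path = event.get("path")   # hypothetical field name
    if not path:
        return "ignored"
    if kind in REINDEX_EVENTS:
        reindex(path)  # re-process only the file that changed
        return "reindexed"
    if kind in DELETE_EVENTS:
        delete(path)   # drop chunks derived from the deleted file
        return "deleted"
    return "ignored"
```

Keeping the handler free of framework code makes it easy to test and to reuse behind any web server or queue consumer. A production version would also verify the webhook signature before acting on the payload.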

Using File Metadata for Better Retrieval

One of the most overlooked parts of a RAG pipeline is metadata. When you store files in a smart storage solution, you are saving context as well as text. Attributes such as creation date, author, file type, and folder location are key for filtering search results.

Haystack allows you to attach this metadata to your documents during the indexing process. By using a storage layer that exposes rich file metadata, you can configure your retriever to apply "Pre-filtering". For example, you can instruct your agent to "only search through PDF contracts created in 2025." This cuts down the search space, improves retrieval speed, and, most importantly, reduces the risk of the model finding outdated or irrelevant information.

Smart file storage acts as the metadata engine, automatically extracting and serving these attributes to your pipeline, ensuring your agents have the full context needed to generate accurate answers.
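As an illustration of pre-filtering, the sketch below derives a few metadata fields from a file path and expresses the "PDF contracts from 2025" rule as a nested filter dictionary. The filter shape is modeled on the style used by Haystack 2.x document stores (a logical operator with a list of field conditions); verify the exact syntax against the documentation for your version. The `matches` helper is only a local stand-in that shows the filter's semantics, since in a real pipeline the Document Store evaluates the filter. The field names (`file_type`, `folder`, `modified_year`) are assumptions, and modification time is used because creation time is not portable across file systems.

```python
import operator
from datetime import datetime, timezone
from pathlib import Path

COMPARATORS = {
    "==": operator.eq, "!=": operator.ne,
    ">": operator.gt, ">=": operator.ge,
    "<": operator.lt, "<=": operator.le,
}


def file_meta(path: Path) -> dict:
    """Derive filterable metadata from a file's location and timestamps."""
    modified = datetime.fromtimestamp(path.stat().st_mtime, tz=timezone.utc)
    return {
        "file_path": str(path),
        "file_type": path.suffix.lower().lstrip("."),
        "folder": path.parent.name,
        "modified_year": modified.year,
    }


# "Only search PDF contracts from 2025", written as a nested filter.
PDF_CONTRACTS_2025 = {
    "operator": "AND",
    "conditions": [
        {"field": "meta.file_type", "operator": "==", "value": "pdf"},
        {"field": "meta.folder", "operator": "==", "value": "contracts"},
        {"field": "meta.modified_year", "operator": "==", "value": 2025},
    ],
}


def matches(meta: dict, flt: dict) -> bool:
    """Evaluate a filter against one metadata dict (illustration only)."""
    if "conditions" in flt:
        results = [matches(meta, cond) for cond in flt["conditions"]]
        if flt["operator"] == "AND":
            return all(results)
        if flt["operator"] == "OR":
            return any(results)
        raise ValueError(f"unsupported logical operator: {flt['operator']}")
    key = flt["field"].removeprefix("meta.")
    if key not in meta:
        return False  # a missing field never satisfies a condition here
    return COMPARATORS[flt["operator"]](meta[key], flt["value"])
```

The dictionary returned by `file_meta` is the kind of data you would attach to each document at indexing time, so that the same filter can later be passed to the retriever.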

Improving RAG Performance with Edge Storage

Moving your raw file storage to the edge improves pipeline performance. According to internal benchmarks, indexing pipelines fed by edge-cached storage complete much faster than those reading from centralized object stores.

This speed advantage is key for "Near Real-Time" RAG. In dynamic environments like news analysis or financial reporting, the time between a file arriving and it being searchable in the vector store must be kept short. Fastio's global network lets your indexing agents, wherever they are hosted, pull data from a nearby node, which reduces network bottlenecks.

Frequently Asked Questions

What is the difference between a Document Store and file storage?

File storage (like Fastio) holds the original raw files (PDFs, images), while a Document Store (like Weaviate) holds the processed text chunks and vector embeddings used for search.

Does Haystack support PDF files natively?

Yes, Haystack includes PDF converters, such as `PDFToTextConverter` in Haystack 1.x and `PyPDFToDocument` in Haystack 2.x, that extract text from PDF files, making them ready for indexing into a Document Store.

Can I use local files with Haystack?

Yes, Haystack can ingest files from a local directory, but for production AI agents, cloud-based storage is recommended to ensure accessibility and scalability.

What is the best vector database for Haystack?

There is no single 'best' option, but Weaviate is excellent for pure vector search, while Elasticsearch is preferred for hybrid search applications requiring keyword matching.

How do I update my Haystack index when files change?

You should implement an event-driven workflow where file updates in your storage (like Fastio) trigger a webhook that runs a specific indexing pipeline for the modified document.
