How to Choose AI Storage: Fastio API vs Supabase Storage

Your storage backend dictates what your AI agents can actually do. Supabase Storage works great for standard web apps, but Fastio API gives agents native MCP support and multi-agent file locks. Here is how they compare for agent workloads.

What is the Difference Between Fastio API and Supabase Storage?

Supabase Storage is a general-purpose object storage service that keeps object metadata in PostgreSQL and the files themselves in S3-compatible object storage. It works well for standard web or mobile apps, giving you buckets and basic image transformations for user uploads.

Fastio API is a workspace layer built specifically for autonomous agents and LLMs. It isn't just a static file repository. The core difference is the abstraction level. Supabase provisions raw buckets and objects. Fastio provisions indexed workspaces, allows conversational file retrieval, and supports direct semantic querying through the Model Context Protocol (MCP).

Evaluating Supabase Storage for AI Applications

Developers building generative apps often pick Supabase for its PostgreSQL integration and pgvector extension. You store raw files in buckets and keep metadata in structured database tables. This works well for simple chatbots: you get a document, process it on your backend, generate text embeddings via OpenAI, and store them in a vector table.

That breaks down when you move from simple chat interfaces to autonomous agents. Agents don't just read a file once. They iterate over content, search for specific terms, modify configurations, and share findings. Supabase makes you build this entire interaction layer from scratch. If an agent needs to read a PDF manual, you have to write the code to download the file through the Supabase client, process it locally, chunk the text, and extract the relevant sections. You pay the cost in network latency and local I/O overhead.
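To make that glue code concrete, here is a minimal sketch of the chunk-and-filter step you would own after the download. The download itself is omitted, and `chunk_text` and `find_relevant` are hypothetical helper names, not part of any Supabase client. A real pipeline would likely use embeddings instead of the keyword match shown here.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    if not text:
        return []
    step = chunk_size - overlap
    # Stop once the remaining tail is already covered by the previous window.
    last_start = max(len(text) - overlap, 1)
    return [text[i:i + chunk_size] for i in range(0, last_start, step)]


def find_relevant(chunks: list[str], term: str) -> list[str]:
    """Naive relevance filter: keep chunks that mention the term."""
    needle = term.lower()
    return [chunk for chunk in chunks if needle in chunk.lower()]
```

Every line of this runs inside your agent's container, which is where the latency and local I/O costs described above come from.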

File size limits are another constraint. According to Supabase Docs, standard file uploads are limited to 5GB. That limit makes handling large datasets or high-resolution video assets difficult. You end up spending your time managing infrastructure hurdles instead of improving how your agent actually reasons.

Evaluating Fastio API for Agentic Workflows

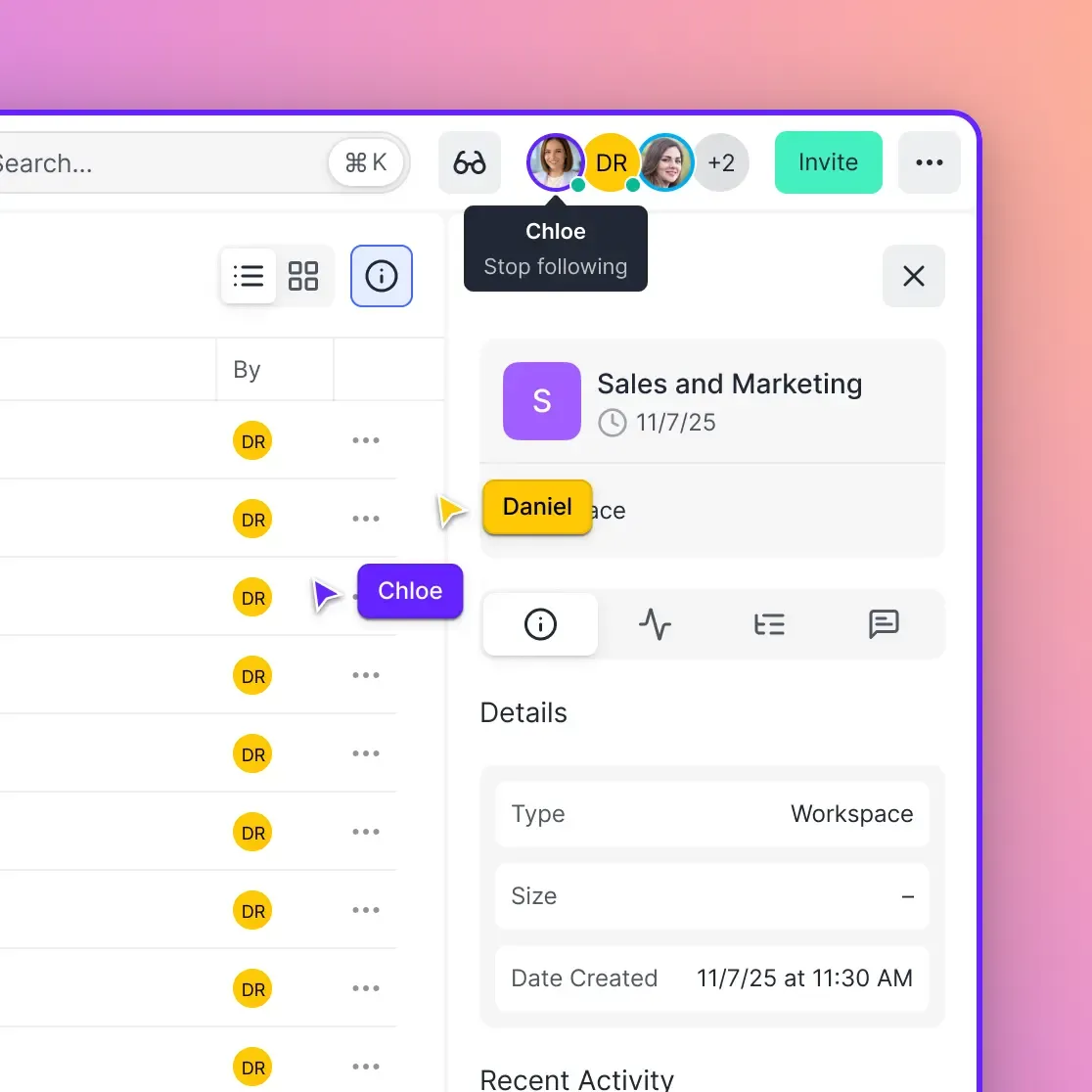

Fastio treats storage as a workspace instead of a passive file bucket. Since files provide the actual context that agents need to reason, Fastio includes native MCP integration. Your agents interface directly with the file system. When you create a workspace via the Fastio API, you aren't just allocating cloud disk space. You are creating an environment agents can navigate using natural language and standard tool calls.

Agents get immediate access to tools via Streamable HTTP and server-sent events (SSE). They can list files, read specific sections, run semantic searches, and write updates, all without intermediate downloads or local processing.

The Intelligence Mode feature handles the indexing. When you turn it on, Fastio automatically indexes uploaded files in the background. You don't have to configure a separate vector database, write chunking algorithms, or manage embedding pipelines. The retrieval system just works. Agents can ask questions and get answers backed by verifiable citations directly from the workspace. It removes the busywork of preparing documents for LLMs.
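For readers new to MCP, the sketch below shows the general shape of a tool call on the wire. MCP messages are JSON-RPC 2.0, and tools are invoked with the `tools/call` method. The tool name `search_files` and its arguments are assumptions for illustration only, since the actual Fastio tool names are not listed here.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# Hypothetical tool name and arguments, for illustration only.
example = build_tool_call(1, "search_files", {"query": "warranty terms"})
```

In practice an MCP client library builds these messages for you. The point is that the agent sends a structured tool request instead of downloading and parsing files itself.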

Evidence and Benchmarks: Pricing and Limitations

Cost predictability matters when you scale autonomous systems. According to Supabase Pricing, their Pro plan includes 100GB of file storage, with overage charges for every extra gigabyte. Free tier projects on Supabase are restricted to a global maximum file size of 50MB. That limit makes it hard to test workflows with realistic datasets or large media files before paying.

Fastio gives you more room to experiment. You get persistent storage, a higher maximum file size limit, and monthly API credits out of the gate. You can check the pricing details to see the exact tiers, but the goal is to let you build and test agent workflows without hitting early paywalls.

You also have to factor in the hidden costs of data processing. With a standard bucket architecture, you pay for raw storage, plus the compute resources required to process, embed, and index those files on your own servers. Fastio includes built-in text retrieval and auto-indexing. You don't pay extra compute to extract value from your data. That makes Fastio much more cost-effective for token-intensive operations where agents constantly read and write state.

Core Comparison: Native MCP Support vs Standard APIs

The biggest architectural difference is how agents interact with the storage. Supabase Storage is exposed through standard REST endpoints and client libraries. An AI agent cannot call those endpoints as tools out of the box. You have to write a custom translation layer. That means writing the serverless function, exposing it to the agent, defining the JSON schema, and managing the authentication flow yourself.
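A rough sketch of what that translation layer involves is shown below. The tool definition, the `dispatch` function, and the `fake_read_file` stand-in are all hypothetical names. A real version would call the storage client and handle authentication and retries where the stub sits.

```python
import json
from typing import Callable

# Hypothetical tool definition you would hand to the model.
TOOLS = {
    "read_file": {
        "description": "Read a text object from a storage bucket.",
        "parameters": {
            "type": "object",
            "properties": {
                "bucket": {"type": "string"},
                "path": {"type": "string"},
            },
            "required": ["bucket", "path"],
        },
    }
}


def dispatch(tool_call: dict, handlers: dict[str, Callable[..., str]]) -> str:
    """Validate a model-issued tool call and route it to backend code."""
    name = tool_call.get("name")
    if name not in TOOLS or name not in handlers:
        return json.dumps({"error": f"unknown tool: {name}"})
    args = tool_call.get("arguments", {})
    required = TOOLS[name]["parameters"]["required"]
    missing = [key for key in required if key not in args]
    if missing:
        return json.dumps({"error": f"missing arguments: {missing}"})
    return json.dumps({"result": handlers[name](**args)})


def fake_read_file(bucket: str, path: str) -> str:
    # Stand-in for a real storage download plus auth and retry handling.
    return f"contents of {bucket}/{path}"
```

Each additional capability (list, search, write) needs its own schema entry and handler, which is the plumbing a native MCP server is meant to replace.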

Fastio removes that translation layer through native integration with the Model Context Protocol (MCP). When you point your application to the Fastio server, it immediately gets a suite of file management tools. Your Claude instance, GPT model, or local LLaMA setup can navigate directories and read document contents without any custom glue code.

For teams using the OpenClaw framework, it's even simpler. A basic install command provisions tools optimized for natural language file management. The agent just talks to the API natively. You spend your time on prompt engineering and business logic, not API plumbing or network retry loops.

Give Your AI Agents Persistent Storage

Start using the only storage API built with native MCP support, built-in RAG, and explicit file locks.

How to Ingest External Data: URL Import vs Local Downloading

Getting external data into your system is a massive bottleneck for AI agents. If a user provides a Google Drive link to a large dataset, a standard bucket like Supabase forces a clumsy workflow. The agent has to download the file to its local container, eating up bandwidth and disk space, and then re-upload it to the destination bucket. That double-handling is slow and often leads to network timeouts.

Fastio API skips the local download entirely with URL Import. An agent just issues a single API call with the source URL (Google Drive, OneDrive, Box, or Dropbox). Fastio handles the server-to-server transfer behind the scenes. Your agent container uses zero local I/O.
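The sketch below shows what a single import call could look like from the agent's side. The endpoint path, the `source_url` field, and the bearer-token header are assumptions made for illustration, not the documented Fastio API. The function only builds the request and does not send it.

```python
import json
import urllib.request


def build_url_import_request(
    base_url: str, token: str, workspace_id: str, source_url: str
) -> urllib.request.Request:
    """Build (but do not send) a hypothetical URL Import request."""
    body = json.dumps({"source_url": source_url}).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url}/workspaces/{workspace_id}/imports",  # assumed path
        data=body,
        method="POST",
        headers={
            "Authorization": f"Bearer {token}",  # assumed auth scheme
            "Content-Type": "application/json",
        },
    )
```

The agent only sends a small JSON body. The file bytes never pass through its container, which is why the approach suits lightweight serverless runtimes.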

Once the transfer finishes, the file shows up in the workspace. The upload automatically triggers the background indexing process, so the file is immediately ready for semantic querying. Your agents stay fast and run perfectly on lightweight serverless infrastructure.

Handling Concurrent Multi-Agent Access with File Locks

Single-agent designs are giving way to multi-agent architectures. You might have one agent scraping web data while another processes it and a third writes the final report. If all three agents hit the same dataset at the same time, you risk race conditions and corrupted data.

Supabase Storage does not offer native file locking. If two agents try to update the exact same JSON state file concurrently, the last write wins. The previous agent's work gets silently overwritten, breaking your application state. To stop that, you have to build a distributed locking system yourself using PostgreSQL transactions or Redis. It's an entirely separate layer of state management you have to maintain.
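The lost update is easy to reproduce without any network at all. The following sketch uses a plain dictionary as a stand-in for a bucket (an assumption for illustration) and replays the interleaving in which both agents read before either writes.

```python
import json


def read_state(store: dict, key: str) -> dict:
    return json.loads(store[key])


def write_state(store: dict, key: str, state: dict) -> None:
    store[key] = json.dumps(state)


def simulate_lost_update() -> dict:
    """Two agents read the same state, then each writes back a full copy."""
    store = {"state.json": json.dumps({"tasks_done": []})}
    # Both agents read before either one writes.
    snapshot_a = read_state(store, "state.json")
    snapshot_b = read_state(store, "state.json")
    snapshot_a["tasks_done"].append("scrape")
    snapshot_b["tasks_done"].append("summarize")
    write_state(store, "state.json", snapshot_a)
    write_state(store, "state.json", snapshot_b)  # overwrites agent A's write
    return read_state(store, "state.json")
```

Agent A's "scrape" entry is gone from the final state, and neither write reported an error.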

Fastio API handles concurrency with explicit file locks. An agent requests an exclusive lock on a file, safely performs its read and write operations, and releases the lock. If another agent tries to modify the file while it's locked, the API tells it the resource is busy. You get reliable data integrity across your multi-agent system without building custom synchronization logic.
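The acquire, work, release cycle described above can be modeled in a few lines. This is an in-memory illustration of the semantics only, not the Fastio API. `FileLockTable` and `ResourceBusy` are hypothetical names.

```python
import threading


class ResourceBusy(Exception):
    """Raised when another agent already holds the lock."""


class FileLockTable:
    """In-memory model of exclusive, per-file locks keyed by agent ID."""

    def __init__(self) -> None:
        self._owners: dict[str, str] = {}
        self._guard = threading.Lock()  # protects the table itself

    def acquire(self, path: str, agent_id: str) -> None:
        with self._guard:
            owner = self._owners.get(path)
            if owner is not None and owner != agent_id:
                raise ResourceBusy(f"{path} is locked by {owner}")
            self._owners[path] = agent_id

    def release(self, path: str, agent_id: str) -> None:
        with self._guard:
            if self._owners.get(path) != agent_id:
                raise PermissionError(f"{agent_id} does not hold the lock on {path}")
            del self._owners[path]
```

A second agent that hits `ResourceBusy` can back off and retry, which is the behavior you would otherwise have to build on top of PostgreSQL or Redis.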

Security and Webhook Integration for Reactive Workflows

Agents need to know when their environment changes, but polling a storage bucket repeatedly for new files wastes compute and API credits.

Fastio uses webhooks to enable reactive workflows natively. If a user uploads a new reference document to a shared workspace, Fastio immediately fires a webhook to your agent's notification endpoint. The agent wakes up, processes the new file, and goes back to sleep. It keeps the AI in sync with the human users effortlessly.
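On the receiving side, a handler might look like the sketch below. The event name `file.uploaded`, the `path` field, and the HMAC-SHA256 signature check are assumptions. Signing is a common webhook convention, but whether and how Fastio signs payloads is not stated here.

```python
import hashlib
import hmac
import json
from typing import Optional


def sign(secret: bytes, body: bytes) -> str:
    """Hex HMAC-SHA256 of the raw request body (assumed signing scheme)."""
    return hmac.new(secret, body, hashlib.sha256).hexdigest()


def handle_webhook(secret: bytes, body: bytes, signature_hex: str) -> Optional[str]:
    """Return the uploaded file's path, or None if the event is ignored."""
    expected = sign(secret, body).encode("utf-8")
    # Constant-time comparison avoids leaking how much of the signature matched.
    if not hmac.compare_digest(expected, signature_hex.encode("utf-8")):
        return None
    event = json.loads(body)
    if event.get("event") != "file.uploaded":  # hypothetical event name
        return None
    return event.get("path")  # hypothetical payload field
```

The handler returns a path only for authentic upload events, so the agent wakes up for real work and ignores everything else.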

Security is also different when dealing with AI. Fastio uses granular permissions to safely expose storage to autonomous systems. You can restrict an agent to a specific workspace or force read-only access, ensuring a hallucinating model can't accidentally delete production data. Supabase offers powerful Row Level Security (RLS) policies tied to database authentication, but configuring RLS for the API keys used by stateless autonomous agents is notoriously difficult. Fastio isolates access at the workspace boundary, making it much easier to deploy agents safely.

How to Implement Ownership Transfer in Fastio

The "build and handoff" pattern is becoming standard in agent development. An AI agent does the heavy lifting, like compiling a massive financial report, and then hands the finished product over to a human. Fastio supports this natively through explicit ownership transfer.

Step 1: Agent Creates the Workspace. The agent uses its API credentials to provision a new workspace. It creates folders, imports external assets via URL Import, and generates the necessary files.

Step 2: Agent Invites the Human. When the job is done, the agent hits the sharing API to invite the human user. The user gets an email with a secure link to view the files in the Fastio web interface.

Step 3: Ownership Handoff. The agent transfers actual ownership of the workspace to the human, retaining admin access only if it needs to make future updates. It cleanly bridges the gap between machine generation and human ownership.
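The three steps above can be sketched as a small state model. This is an in-memory illustration under assumed names (`Workspace`, `invite`, `transfer_ownership`, and the role strings), not the Fastio client or its real endpoints.

```python
from dataclasses import dataclass, field


@dataclass
class Workspace:
    """Toy model of a workspace with one owner and role-based members."""

    name: str
    owner: str
    members: dict[str, str] = field(default_factory=dict)

    def invite(self, user: str, role: str = "viewer") -> None:
        # Step 2: the agent invites the human into the workspace.
        self.members[user] = role

    def transfer_ownership(self, new_owner: str, keep_admin: bool = True) -> None:
        # Step 3: only an invited member can become the owner.
        if new_owner not in self.members:
            raise ValueError(f"{new_owner} must be invited first")
        previous_owner = self.owner
        self.owner = new_owner
        del self.members[new_owner]
        if keep_admin:
            self.members[previous_owner] = "admin"


# Step 1: the agent provisions the workspace under its own identity.
workspace = Workspace(name="q3-report", owner="agent-1")
workspace.invite("alice@example.com")
workspace.transfer_ownership("alice@example.com")
```

After the transfer, the human owns the workspace and the agent keeps admin access only because `keep_admin` was left at its default.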

Which Storage Provider Should You Choose?

The choice comes down to the primary actor using your system. If you are building a standard web app where human users click buttons to upload profile avatars or PDF receipts, Supabase Storage is a fantastic choice that pairs perfectly with a PostgreSQL backend.

But if the primary actor navigating your system is an autonomous AI agent, Fastio API is the right tool for the job. Most storage comparisons only talk about CDN speeds and pricing per gigabyte, ignoring how AI agents actually access files. Fastio is built specifically for agent file access patterns. You get MCP support, built-in indexing for text retrieval, and concurrent file locks out of the box.

By picking a storage layer that actually understands agent workflows, you save weeks of infrastructure development. You don't have to build custom translation layers, and your agents can get straight to work.

Frequently Asked Questions

Is Supabase good for AI agents?

Supabase works well for human-driven web apps and storing raw vector embeddings, but it lacks features designed specifically for AI agents, like Model Context Protocol support or concurrent file locks. You have to build custom translation layers for agents to interact with its buckets.

What is the best storage for AI agents?

The best storage for AI agents provides native intelligent capabilities. Fastio API includes built-in semantic retrieval, automatic file indexing, and native Model Context Protocol support, allowing agents to interact with files directly instead of requiring custom API integrations.

How do file locks work in multi-agent systems?

File locks prevent race conditions when multiple agents try to modify the exact same document at once. In Fastio, an agent can explicitly lock a file while working on it. If another agent attempts to edit the locked file, the API rejects the request, keeping data safe across the system.

Can I import large files without downloading them locally?

Yes. Fastio provides a URL Import feature that lets you specify a source link from Google Drive, OneDrive, Box, or Dropbox. The platform handles the transfer directly between servers, so your serverless agent never has to download or upload the file locally.

Do I need a separate vector database with Fastio?

No. Fastio includes an Intelligence Mode that automatically indexes files in the background when they are uploaded. You don't need to configure a separate vector database or manage chunking pipelines. Agents can query the workspace directly and receive answers with verifiable citations.
