How to Optimize Edge AI Video Storage and Cloud Sync

Edge AI video storage lets AI models analyze high-bandwidth video locally before syncing metadata to a shared workspace. This hybrid approach can cut cloud egress costs by up to 90% and reduce latency from seconds to milliseconds. By processing data at the source, teams keep high-resolution archives local while collaborating on key findings in the cloud. This guide explains how to build a resilient video network that balances local speed with cloud collaboration.

The Growing Challenge of High-Bandwidth Video

Modern video systems generate a massive amount of data every second. A single high-definition camera produces gigabytes of footage every hour, and as camera resolutions keep climbing, the strain on traditional network infrastructure becomes unsustainable. Many organizations attempt to solve this by buying more cloud storage, but they quickly encounter the "egress wall." Sending raw video to the cloud for analysis is slow, expensive, and creates a single point of failure if the internet connection drops.

The problem is not just about storage space. It is about the time it takes to move that data across the wire. When a security team or a production crew needs an answer, they cannot afford to wait for a large file to finish uploading before the AI can even begin to look at it. This delay creates a gap between an event happening and a human being able to respond to it. Edge AI video storage solves this by bringing the intelligence to the data, rather than the other way around.


What is Edge AI Video Storage?

Edge AI video storage is a localized storage architecture that allows AI models to analyze high-bandwidth video locally before syncing relevant clips and metadata to a central workspace. In a traditional cloud-only model, every byte of raw video is streamed to a remote server for processing. This often leads to massive bandwidth bottlenecks and high costs. By keeping the processing local, you turn your storage hardware into an active participant in your workflow.

In practice, this means your storage hardware is equipped with enough computational power to run inference on the fly. Instead of sending hours of video where nothing happens, the system only transmits the important moments. If an AI model detects a specific person or a manufacturing defect, it creates a small metadata package and a short video clip. This package is then synced to your cloud workspace, where it becomes immediately accessible to your entire team.
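As a rough illustration of the "small metadata package" described above, the sketch below builds a JSON payload for one detected event. The `EdgeEvent` fields, the `build_package` helper, and the 0.6 confidence threshold are all assumptions made for this example, not a Fastio schema or API.

```python
import json
from dataclasses import dataclass, asdict, field
from typing import List, Optional


@dataclass
class EdgeEvent:
    """Hypothetical description of one event detected on the edge device."""
    camera_id: str
    label: str          # e.g. "person" or "defect"
    confidence: float   # model score in [0, 1]
    start_s: float      # clip start, seconds from the start of the recording
    end_s: float        # clip end, seconds from the start of the recording
    clip_path: str      # local path of the short clip to sync
    tags: List[str] = field(default_factory=list)


def build_package(event: EdgeEvent, min_confidence: float = 0.6) -> Optional[str]:
    """Return a JSON payload for events worth syncing, or None to skip them."""
    if event.confidence < min_confidence:
        return None  # low-confidence detections stay on local storage only
    payload = asdict(event)
    payload["duration_s"] = round(event.end_s - event.start_s, 3)
    return json.dumps(payload, sort_keys=True)


event = EdgeEvent("cam-01", "person", 0.91, 12.0, 18.5, "clips/cam-01_0001.mp4")
print(build_package(event))
```

The payload is a few hundred bytes, so it can be synced long before the clip itself finishes uploading.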

Why Hybrid Cloud Sync is the New Standard

The shift toward edge computing video storage is driven by the physical limits of network bandwidth. A single high-resolution camera stream can use up significant upload capacity. Scaling that to dozens of cameras can paralyze a local network. Hybrid cloud sync solves this by treating the cloud as an intelligence layer rather than just a storage bucket. This approach allows you to keep the heavy lifting local while the collaboration happens in the cloud.
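The bandwidth limit can be checked with simple arithmetic. The sketch below is a back-of-the-envelope calculation. The function names, the bitrates in the usage lines, and the 20% reserve are illustrative assumptions, not measurements.

```python
def uplink_utilization(num_cameras: int, mbps_per_camera: float, uplink_mbps: float) -> float:
    """Fraction of upload capacity consumed if every camera streams raw video."""
    if uplink_mbps <= 0:
        raise ValueError("uplink_mbps must be positive")
    return (num_cameras * mbps_per_camera) / uplink_mbps


def max_raw_streams(mbps_per_camera: float, uplink_mbps: float, reserve: float = 0.2) -> int:
    """Number of raw streams that fit while keeping `reserve` of the uplink free."""
    usable = uplink_mbps * (1.0 - reserve)
    return int(usable // mbps_per_camera)


# Assumed figures: 24 cameras at 15 Mbps each on a 100 Mbps uplink.
print(uplink_utilization(24, 15, 100))  # values above 1.0 mean the uplink is oversubscribed
print(max_raw_streams(15, 100))
```

A utilization above 1.0 means raw streaming cannot work at all on that link, which is the case the hybrid model is designed to avoid.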

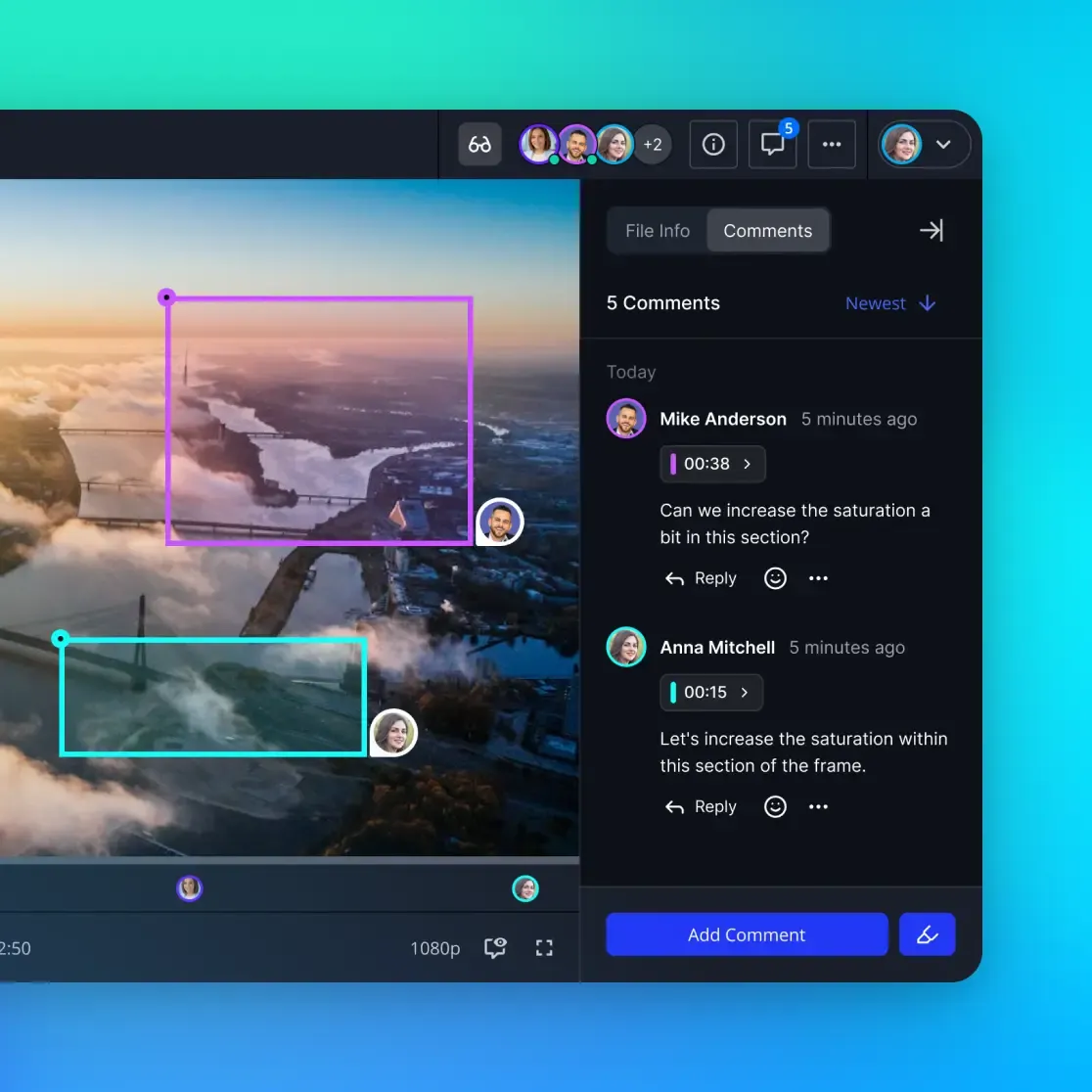

When you sync edge AI results to the cloud, you are moving insights, not just pixels. This setup enables real-time alerts and fast collaboration between humans and agents. For example, an AI agent can monitor edge storage for specific visual triggers and automatically set up a workspace for an editor or security professional. This workflow keeps high-fidelity video local for detailed review while giving the global team quick summaries they can act on.

Stream and Share Video on Fastio

Sync your edge AI insights to an intelligent workspace with 50GB of free storage and 251 MCP tools for your AI agents. Built for edge video storage workflows.

Hardware Considerations for Edge AI Storage

Choosing the right hardware is the most important part of building an edge AI network. Because AI models read and write data in unique patterns, standard consumer-grade hard drives often fail under the pressure. You need hardware that can handle high-throughput video streams while simultaneously allowing an AI model to read frames for analysis.

NVMe drives are the preferred choice for the primary ingestion layer. Their high random-read speeds allow AI models to scan multiple parts of a video file without slowing down the recording process. For longer-term local retention, high-capacity SSDs provide the best balance of speed and reliability. If you are working in an industrial environment, embedded eMMC storage is often used for its resistance to vibration and heat, though it generally offers lower performance than NVMe options.

You should also consider the "inference tax" on your hardware. Running a complex AI model requires significant CPU and GPU resources. If your storage controller is shared with your AI processor, you must ensure there is enough overhead to prevent dropped frames. Many modern edge devices use dedicated Vision Processing Units (VPUs) to offload this work, leaving the main processor free to handle file system operations and network sync.

Best Practices for Local Storage for AI Inference

Optimizing local storage for AI video inference requires a balance between write speed and endurance. AI models frequently access video frames in non-linear patterns, which can strain traditional hard drives. Using a tiered storage approach is often the most effective strategy for managing these workloads. This ensures that your system remains responsive even when processing heavy loads.

- High-Speed Buffer: Use RAM or small NVMe drives for the initial ingestion and immediate AI inference. This prevents bottlenecks during the most critical part of the process.

- Persistent Local Archive: Employ high-capacity SSDs or NAS systems for short-term retention of raw footage, typically for a fixed number of days set by your retention policy. This gives you a window for forensic review.

- Metadata Database: Maintain a local index of AI-generated tags to allow for instant local search before syncing to the cloud. This makes it easy to find specific events without hitting the network.
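The metadata tier in the list above can be sketched with SQLite from the Python standard library. The table layout and the `open_index`, `add_event`, and `search` helpers are assumptions chosen for illustration. A production index would likely carry more fields.

```python
import sqlite3


def open_index(path: str = ":memory:") -> sqlite3.Connection:
    """Open (or create) the local event index. ':memory:' is for demos only."""
    conn = sqlite3.connect(path)
    conn.execute(
        """CREATE TABLE IF NOT EXISTS events (
               id        INTEGER PRIMARY KEY,
               camera_id TEXT    NOT NULL,
               label     TEXT    NOT NULL,
               ts        REAL    NOT NULL,
               clip_path TEXT    NOT NULL,
               synced    INTEGER NOT NULL DEFAULT 0
           )"""
    )
    # Index on (label, ts) so tag searches do not scan the whole table.
    conn.execute("CREATE INDEX IF NOT EXISTS idx_label_ts ON events(label, ts)")
    return conn


def add_event(conn, camera_id, label, ts, clip_path):
    """Record one AI-generated tag with its timestamp and local clip path."""
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO events (camera_id, label, ts, clip_path) VALUES (?, ?, ?, ?)",
            (camera_id, label, ts, clip_path),
        )


def search(conn, label, since_ts=0.0):
    """Return (camera_id, ts, clip_path) rows for a label, oldest first."""
    rows = conn.execute(
        "SELECT camera_id, ts, clip_path FROM events "
        "WHERE label = ? AND ts >= ? ORDER BY ts",
        (label, since_ts),
    )
    return rows.fetchall()


conn = open_index()
add_event(conn, "cam-01", "person", 100.0, "a.mp4")
add_event(conn, "cam-01", "person", 200.0, "c.mp4")
print(search(conn, "person", since_ts=150.0))
```

Because the query runs against a local file, search keeps working during a network outage. The `synced` column is a placeholder for whatever sync bookkeeping the deployment needs.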

Implementing automated lifecycle management ensures that local storage does not overflow. You should set clear policies to purge raw footage once it has been successfully analyzed and the relevant clips have been synced to your permanent cloud workspace. This keeps your local hardware lean and efficient.
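A purge policy like the one described above can be expressed as a small pure function. This is a minimal sketch: the `Footage` record, the `select_for_purge` helper, and the 7-day window in the usage lines are hypothetical, and the code only selects files rather than deleting them.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass
class Footage:
    """Hypothetical bookkeeping record for one raw recording on local storage."""
    path: str
    age_days: float
    analyzed: bool       # the AI model has finished processing this file
    clips_synced: bool   # relevant clips and metadata reached the cloud workspace


def select_for_purge(items: Iterable[Footage], retention_days: float) -> List[str]:
    """Return paths that are analyzed, synced, and past the retention window."""
    return [
        f.path
        for f in items
        if f.analyzed and f.clips_synced and f.age_days >= retention_days
    ]


inventory = [
    Footage("a.mp4", 10, True, True),
    Footage("b.mp4", 10, True, False),  # sync not confirmed, so it is kept
    Footage("c.mp4", 1, True, True),    # still inside the retention window
]
print(select_for_purge(inventory, retention_days=7))
```

Keeping selection separate from deletion makes the policy easy to test and to run in a dry-run mode before any footage is removed.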

Syncing Edge Insights to Intelligent Workspaces

The true value of edge AI video storage is realized when it works alongside an intelligent workspace. In the Fastio ecosystem, this means your edge devices do not just dump files into a folder. They contribute to a living knowledge base. Once an AI camera identifies a relevant event, it can sync a low-bitrate proxy and a rich metadata payload directly to a shared workspace.

This integration allows AI agents and humans to work on the same data simultaneously. An agent can read the incoming metadata, cross-reference it with historical data in the workspace, and generate a summary or alert. Because Fastio uses Intelligence Mode to auto-index incoming files, the moment a clip arrives from the edge, it is already searchable by meaning and ready for AI-assisted queries. This removes the need for manual tagging and speeds up your entire production or security cycle.

Managing Connectivity and Network Downtime

One of the biggest advantages of edge AI is its ability to operate without a constant internet connection. In a cloud-only system, a network outage means your AI stops working. With edge storage, the AI continues to monitor and record locally. The system queues the metadata and clips for synchronization once the connection is restored.

To make this work, you need a reliable buffering strategy. Your local storage must have enough "headroom" to store several hours or even days of events if the network stays down. When the connection returns, the sync process should be throttled to prevent it from consuming all available bandwidth. This "trickle sync" approach ensures that your real-time data takes priority while the backlog is slowly cleared in the background.
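One common way to throttle a backlog is a token bucket, sketched below as an assumption about how a "trickle sync" could be built. The `TrickleSync` class, its parameters, and the `send` callback are hypothetical. The callback stands in for whatever upload call the deployment actually uses.

```python
import time
from collections import deque


class TrickleSync:
    """Drain a backlog oldest-first under a bytes-per-second budget (token bucket)."""

    def __init__(self, rate_bytes_per_s, burst_bytes, clock=time.monotonic):
        self.rate = rate_bytes_per_s
        self.capacity = burst_bytes
        self.tokens = burst_bytes      # start with a full budget
        self.clock = clock             # injectable so the logic can be tested
        self.last = clock()
        self.backlog = deque()         # queued (name, size_bytes) pairs

    def enqueue(self, name, size_bytes):
        """Queue an item while offline or while the budget is exhausted."""
        self.backlog.append((name, size_bytes))

    def _refill(self):
        now = self.clock()
        earned = (now - self.last) * self.rate
        self.tokens = min(self.capacity, self.tokens + earned)
        self.last = now

    def drain(self, send):
        """Send as many queued items as the current budget allows.

        Items larger than burst_bytes never fit; a real uploader would
        split them into chunks before queueing.
        """
        self._refill()
        sent = []
        while self.backlog and self.backlog[0][1] <= self.tokens:
            name, size = self.backlog.popleft()
            send(name)
            self.tokens -= size
            sent.append(name)
        return sent


sync = TrickleSync(rate_bytes_per_s=100, burst_bytes=200)
sync.enqueue("event-001.json", 150)
sync.enqueue("event-002.json", 150)
print(sync.drain(print))  # only the first item fits in the initial budget
```

Calling `drain` on a timer clears the backlog gradually. Real-time traffic can bypass the queue entirely so it is never delayed by the backlog.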

Security and Privacy Benefits of Edge Processing

Privacy is a major concern for any organization handling video data. Edge AI provides a natural layer of protection by keeping sensitive raw footage on-site. Instead of sending faces or license plates to the cloud, the edge device can perform redaction or anonymization locally before the sync occurs. This means your cloud workspace only contains the information you actually need for your business processes.
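Pixel-level redaction depends on the vision stack in use, but the metadata side of local anonymization can be sketched with the standard library alone. The example below replaces sensitive identifiers with keyed hashes before sync. The field names in `SENSITIVE_FIELDS`, the `anonymize` helper, and the key handling are assumptions for illustration.

```python
import hashlib
import hmac

# Hypothetical field names; adjust to whatever the local model actually emits.
SENSITIVE_FIELDS = ("license_plate", "face_id")


def anonymize(event: dict, site_key: bytes) -> dict:
    """Return a copy of the event with sensitive values replaced by keyed tokens.

    The same input and key always yield the same token, so events can still be
    correlated in the cloud without the raw identifier leaving the site.
    """
    out = {}
    for name, value in event.items():
        if name in SENSITIVE_FIELDS:
            digest = hmac.new(site_key, str(value).encode("utf-8"), hashlib.sha256)
            out[name + "_token"] = digest.hexdigest()[:16]
        else:
            out[name] = value
    return out


# The key should stay on the edge device; this literal is for the demo only.
print(anonymize({"label": "vehicle", "license_plate": "ABC123"}, b"local-secret"))
```

A keyed hash is used rather than a plain hash because short identifiers such as plate numbers are easy to guess by brute force when no secret is involved.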

This architecture also reduces the "attack surface" for your data. Because the raw video never leaves the local network, it cannot be intercepted in transit to the cloud. Using encrypted local storage also ensures that even if a device is stolen, the data stays protected. This "privacy by design" approach is becoming a requirement for teams working in sensitive environments like healthcare, legal, or high-tech manufacturing.

Evidence and Benchmarks: The Impact of Edge Architecture

Data from industry implementations shows that moving the intelligence layer to the edge provides immediate technical and financial benefits. Organizations moving from cloud-only streaming to hybrid models often see much better operational efficiency and lower costs. These results show that an edge-first approach is more than just a trend.

According to industry benchmarks, edge processing saves up to 90% in cloud egress costs by eliminating the need to transmit raw high-resolution streams. Decision latency also drops from seconds, common in cloud-only models, to milliseconds when processing happens locally. This speed matters most in safety-critical applications where every fraction of a second counts. By tuning your storage for the edge, you build a faster, more cost-effective network.
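The egress saving is the share of raw data that no longer leaves the site. The sketch below shows that arithmetic. The `egress_savings` function is hypothetical, and the daily volumes in the usage line are made-up inputs chosen to show how a 90% figure could arise, not benchmark results.

```python
def egress_savings(raw_gb_per_day: float, synced_gb_per_day: float) -> float:
    """Fraction of egress avoided when only clips and metadata are uploaded."""
    if raw_gb_per_day <= 0:
        raise ValueError("raw_gb_per_day must be positive")
    return 1.0 - synced_gb_per_day / raw_gb_per_day


# Assumed volumes: 500 GB of raw video per day, 50 GB of clips and metadata synced.
print(f"{egress_savings(500, 50):.0%}")
```

Plugging in your own daily volumes shows how the saving depends on how selective the edge model is about what it syncs.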

Frequently Asked Questions

What is edge video storage?

Edge video storage refers to keeping video data on local hardware near the camera or sensor rather than streaming it immediately to the cloud. This allows AI models to process the video locally, saving bandwidth and reducing latency.

How do I sync edge AI results to the cloud?

You should use an intelligent synchronization strategy that transmits metadata, event triggers, and low-resolution proxies to the cloud while keeping the high-resolution raw video on local storage for archival purposes.

What are the hardware requirements for edge AI storage?

Edge AI storage typically requires high-speed NVMe or SSD drives for rapid data ingestion, along with specialized AI accelerators like GPUs or VPUs to handle the computational load of video inference.

Does edge AI storage improve privacy?

Yes. By processing video locally and only syncing anonymized metadata or specific events to the cloud, you minimize the exposure of sensitive raw video data to external networks.

Can AI agents access edge video storage?

Yes. In an integrated workspace like Fastio, AI agents use MCP tools to monitor incoming edge data, analyze metadata, and collaborate with humans on the results in real time.
