How to Build a Collaborative Human-AI Medical Image Labeling Workflow

Collaborative human-AI medical image labeling is a specialized workflow in which physicians and AI agents work together to annotate diagnostic images in a secure environment. By combining the speed of AI with the clinical judgment of radiologists, teams can improve labeling accuracy by 25%. This approach helps manage the massive volume of imaging data, which now makes up 90% of all healthcare data by volume.

What is Collaborative Human-AI Medical Image Labeling?

Collaborative medical image labeling is a specialized workflow where physicians and AI agents co-annotate diagnostic images in a secure, audited environment. This process moves beyond traditional manual annotation by treating the AI not just as a tool, but as a digital teammate that assists with the heavy lifting of data preparation. In a typical setup, an AI model performs an initial pass on raw medical images, such as MRI or CT scans, to generate preliminary segmentations or bounding boxes. These are often called silver standard labels. Human experts, usually certified radiologists, then review and refine these labels to ensure they meet the gold standard required for clinical use.

This collaboration is necessary because medical image data accounts for 90% of all healthcare data by volume. Without AI assistance, the sheer scale of modern imaging would overwhelm clinical teams. By using AI to handle repetitive tasks like organ segmentation or noise reduction, radiologists can focus their expertise on complex cases where clinical detail is the priority. The goal is to create a direct feedback loop where human corrections improve future AI performance, and AI efficiency allows humans to process more patients with higher precision.


The Evolution of Medical Annotation: From Lightboxes to AI Agents

Medical image review has moved from physical film and lightboxes to digital viewers and AI agents. In the early days, a radiologist would look at a single film and dictate their findings. Digital imaging introduced the PACS (Picture Archiving and Communication System), which allowed for better storage and basic measurement tools. However, the annotation process remained largely manual for decades. Doctors had to click through every slice of a 3D volume to outline a tumor or measure a lesion.

The introduction of AI changed this dynamic by automating the geometry of labeling. Modern AI agents can recognize anatomical structures in three dimensions. They can trace the complex contours of the liver or the branching paths of blood vessels in seconds. This shift from "manual drawing" to "AI-assisted refinement" represents a major change in how medical knowledge is digitized. Instead of spending hours on the mechanical task of outlining, physicians now spend their time on the cognitive task of interpretation. This evolution is driven by the need to process the hundreds of exabytes of medical imaging generated globally every year.

Why AI-Human Partnership Outperforms Manual Labeling

The primary reason for adopting collaborative workflows is the increase in output quality. Research shows that AI-human teams improve labeling accuracy by 25% compared to doctors working alone. This improvement comes from a system where the strengths of one partner help make up for the weaknesses of the other. AI models are excellent at consistent results and faster processing. They do not get tired, which helps avoid the errors that can happen during an eight-hour shift of reviewing thousands of near-identical slices in a volumetric scan.

On the other hand, humans are better at context. A radiologist can tell the difference between a subtle pathological anomaly and a benign anatomical variation that might confuse a model trained on a narrower dataset. In practice, this partnership reduces the rate of false negatives. When an AI flags a potential region of interest, it prompts the human reviewer to give that area a second look. This double-check makes it more likely that subtle indicators of disease, which might be missed by a tired human or an imperfect algorithm, are captured and documented correctly. For example, in mammography screening, using AI as a second reader has been shown to reduce the screen-reading workload for radiologists by 44.3% while maintaining or even improving cancer detection rates.

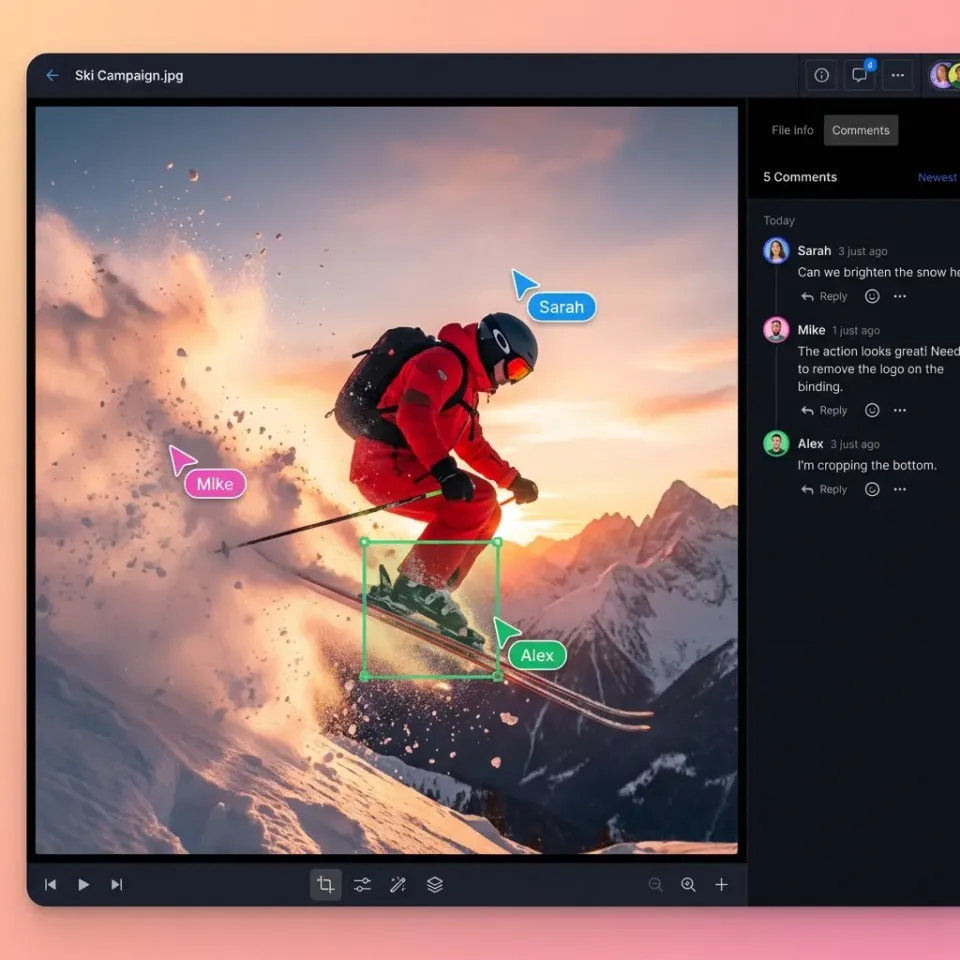

Collaborate on Files with Your Team

Get 50GB of free storage and 251 MCP tools to build secure, collaborative medical labeling workspaces. No credit card required. Built for collaborative human medical image labeling workflows.

Critical Components of a Secure Radiology Workflow

Medical data is subject to some of the strictest privacy considerations. Building a collaborative workflow requires more than just an annotation tool. It needs a secure infrastructure that handles large DICOM files without compromising patient confidentiality. Medical images are rarely basic JPEGs. They are stored in the DICOM format, which contains thousands of metadata fields. Maintaining the integrity of this data during the transfer between AI agents and human reviewers is essential. If the pixel spacing or window leveling data is lost, the resulting annotations may be useless for clinical use.

Before any image is shared in a collaborative workspace, it must undergo de-identification. This process involves stripping Protected Health Information (PHI) from the DICOM headers. Advanced workflows use scripts to clean data at the ingestion point. This ensures that neither the AI nor the human annotator sees sensitive patient identifiers like names or social security numbers. Beyond that, not everyone in a research team needs the same level of access. Collaborative platforms must support granular permissions. For instance, an AI agent might have write access to generate silver standard labels, while a junior resident has edit access, and a senior radiologist holds approval authority. This hierarchy ensures that the final gold standard label is only finalized by the most qualified expert.
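The de-identification step described above can be sketched in a few lines. This is a minimal illustration, not a compliant implementation: it models a DICOM header as a plain dictionary, and the PHI_FIELDS and REQUIRED_FIELDS sets are hypothetical, non-exhaustive choices made for this example. A production pipeline would use a DICOM library and a full de-identification profile.

```python
from typing import Dict

# Hypothetical, non-exhaustive set of header fields treated as PHI in this sketch.
PHI_FIELDS = {"PatientName", "PatientID", "PatientBirthDate", "PatientAddress"}

# Geometry and display fields that annotations depend on and that must survive.
REQUIRED_FIELDS = {"PixelSpacing", "WindowCenter", "WindowWidth"}


def deidentify(header: Dict[str, object]) -> Dict[str, object]:
    """Return a copy of the header with PHI removed and geometry fields intact."""
    cleaned = {key: value for key, value in header.items() if key not in PHI_FIELDS}
    missing = REQUIRED_FIELDS - set(cleaned)
    if missing:
        # Refuse to pass along a header that would make annotations unusable.
        raise ValueError(f"header is missing required fields: {sorted(missing)}")
    return cleaned


header = {
    "PatientName": "DOE^JANE",
    "PatientID": "12345",
    "Modality": "CT",
    "PixelSpacing": [0.7, 0.7],
    "WindowCenter": 40,
    "WindowWidth": 400,
}
clean = deidentify(header)
print(sorted(clean))
```

Running the check for required fields at the ingestion point means a header that lost its pixel spacing is rejected before anyone spends time annotating it.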

Solving the 'Black Box' Problem with Collaborative Oversight

One of the biggest hurdles in medical AI is the "black box" problem, where the reasoning behind a model's decision isn't clear. Collaborative labeling solves this by making the AI's output visible and editable. When an AI agent segments a tumor, the radiologist can see the exact boundaries the model proposed. If the model includes healthy tissue in the tumor volume, the doctor can trim the edges. This interaction provides an immediate audit of the AI's logic.

This oversight is critical for building trust in clinical settings. If a radiologist understands where an AI is likely to fail, they can compensate for those specific weaknesses. For example, some models might struggle with low-contrast boundaries or images with metallic artifacts from implants. In a collaborative workflow, the human expert acts as the final sanity check. This relationship transforms the AI from a mysterious oracle into a transparent assistant. By reviewing the AI's work, the human also learns to spot patterns the AI is particularly good at identifying, creating a symbiotic learning environment.

Active Learning and Triage: Making the Most of Specialist Time

Radiologist time is expensive and scarce. Active learning is a technique used in collaborative labeling to ensure that this time is spent on the most valuable tasks. Instead of asking a doctor to review every image, the AI triage system identifies the cases where it is least confident. If the model classifies a clear-cut normal scan with very high confidence, the scan might be moved to a low-priority queue. If the model encounters a complex case with ambiguous features and its confidence drops below a set threshold, it flags that image for immediate expert review.
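A confidence-based triage rule of this kind might look like the sketch below. The Prediction class, the triage function, and the 0.80 cutoff are all assumptions made for illustration; a real threshold would be tuned on validation data for the specific model and task.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Prediction:
    study_id: str
    label: str
    confidence: float  # model probability for its predicted label, in [0, 1]


REVIEW_THRESHOLD = 0.80  # hypothetical cutoff, chosen only for this example


def triage(
    predictions: List[Prediction], threshold: float = REVIEW_THRESHOLD
) -> Tuple[List[Prediction], List[Prediction]]:
    """Split predictions into (expert_review, low_priority) queues."""
    expert_review = [p for p in predictions if p.confidence < threshold]
    low_priority = [p for p in predictions if p.confidence >= threshold]
    # Least confident cases go to the front of the expert queue.
    expert_review.sort(key=lambda p: p.confidence)
    return expert_review, low_priority


predictions = [
    Prediction("study-1", "normal", 0.99),
    Prediction("study-2", "nodule", 0.55),
    Prediction("study-3", "normal", 0.70),
]
expert_queue, routine_queue = triage(predictions)
print([p.study_id for p in expert_queue])
```

Sorting the expert queue by ascending confidence puts the most ambiguous studies in front of the radiologist first, which is also where a corrected label is likely to be most informative for retraining.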

This triage approach can reduce the time needed for radiology interpretation by up to 40.1%. By focusing on the "hard" cases, the human expert provides the most impactful labels for training the next version of the AI. This creates a cycle where the AI gets better at the easy cases, leaving more time for humans to work on the edge cases. This efficiency is important as the number of FDA-cleared AI-enabled medical devices has now surpassed 1,000, each requiring high-quality labeled data to reach the market.

Why Audit Trails and Permissioned Sharing Matter

Many generic labeling tools fail in a medical context because they lack a detailed audit trail. In healthcare, it is not enough to have a labeled image. You must be able to prove who made every change, what AI model version was used for the initial pass, and which expert approved the final result. An audit trail serves as the record for digital medicine. If a diagnostic model is later found to have a bias, the audit trail allows researchers to trace back through the training data to identify which labeling sessions contributed to the error.
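One way to make such a record tamper-evident is to chain entries together with hashes, so that altering an old entry invalidates everything after it. The sketch below is an assumption about how this could be done, not a description of any particular product; the field names and the append_entry and verify helpers are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List, Optional

GENESIS_HASH = "0" * 64  # placeholder hash that precedes the first entry


def _digest(body: Dict[str, Optional[str]]) -> str:
    """Hash the entry body in a stable key order."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_entry(
    log: List[Dict[str, Optional[str]]],
    actor: str,
    action: str,
    model_version: Optional[str] = None,
) -> None:
    """Append an entry that records who did what and links to the previous entry."""
    body = {
        "actor": actor,
        "action": action,
        "model_version": model_version,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    log.append({**body, "hash": _digest(body)})


def verify(log: List[Dict[str, Optional[str]]]) -> bool:
    """Return True only if every entry is unmodified and correctly linked."""
    prev_hash = GENESIS_HASH
    for entry in log:
        body = {key: value for key, value in entry.items() if key != "hash"}
        if entry["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
            return False
        prev_hash = entry["hash"]
    return True


audit_log: List[Dict[str, Optional[str]]] = []
append_entry(audit_log, "segmentation-agent", "created silver label", model_version="v1.2")
append_entry(audit_log, "dr-smith", "approved gold label")
print(verify(audit_log))
```

Recording the model version on the AI's entry is what later allows a biased training batch to be traced back to the specific pre-labeling pass that produced it.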

This level of transparency is necessary for getting regulatory approval from the FDA. Permissioned sharing is just as important. In a collaborative medical environment, data often needs to be shared across institutions for multi-center trials. A secure, permissioned workspace allows teams to invite external experts to review specific datasets without giving them access to the entire library. This need-to-know access model is a core principle of data security. Without these features, a labeling project risks becoming a liability rather than an asset.
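The need-to-know model can be expressed as a check on two things: what a role may do, and which datasets a member was invited to. The role names, actions, and the is_allowed helper below are hypothetical and exist only to illustrate the idea.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Set

# Hypothetical mapping of roles to the actions they may perform.
ROLE_ACTIONS: Dict[str, Set[str]] = {
    "ai_agent": {"read", "write_silver"},
    "resident": {"read", "edit"},
    "senior_radiologist": {"read", "edit", "approve"},
    "external_reviewer": {"read", "edit"},
}


@dataclass(frozen=True)
class Member:
    name: str
    role: str
    datasets: FrozenSet[str]  # datasets this member was explicitly invited to


def is_allowed(member: Member, action: str, dataset: str) -> bool:
    """Allow an action only on invited datasets and only if the role permits it."""
    if dataset not in member.datasets:
        return False
    return action in ROLE_ACTIONS.get(member.role, set())


external = Member("visiting-expert", "external_reviewer", frozenset({"trial-a"}))
senior = Member("dr-lee", "senior_radiologist", frozenset({"trial-a", "trial-b"}))

print(is_allowed(external, "edit", "trial-a"))
print(is_allowed(external, "read", "trial-b"))
print(is_allowed(senior, "approve", "trial-b"))
```

Checking dataset membership before role permissions means an external expert with edit rights still cannot see anything outside the datasets they were invited to.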

Practical Implementation: Setting Up the Workflow

Implementing a collaborative human-AI medical labeling workflow involves several stages. Each stage must be set up to minimize friction and maximize data throughput.

The first stage is data ingestion and URL Import. Moving gigabytes of medical data can be a bottleneck. Professional workflows use URL Import features to pull data directly from cloud providers like Google Drive, OneDrive, or Box into a centralized workspace. This avoids the latency and security risks of downloading files to a local machine before re-uploading them to the labeling platform.

The second stage is AI-assisted pre-labeling, or the silver standard. Once the data is ingested, an AI agent is triggered via a webhook to perform the first pass. For a chest X-ray study, the AI might identify the boundaries of the lungs and heart. This automation saves the radiologist from having to perform hundreds of clicks to outline simple structures.

The third stage is human review and gold standard creation. The radiologist opens the pre-labeled images in a web-based DICOM viewer. They can adjust the AI-generated outlines using familiar tools like window leveling and zooming. The Human-in-the-Loop model ensures that the expert is always the final authority. If the AI misses a small nodule, the radiologist adds it manually, and this correction is fed back into the training loop.

Finally, quality assurance and active learning ensure that the dataset remains accurate and that the AI continues to improve over time.
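The stages above can be modeled as a small state machine so that a study cannot skip a step, and so that only a human expert can finalize a label. This is a sketch under assumptions: the Stage names, the ALLOWED_TRANSITIONS table, and the "radiologist" actor string are illustrative, not part of any specific platform.

```python
from enum import Enum
from typing import Dict, Set


class Stage(Enum):
    INGESTED = "ingested"    # data pulled into the workspace
    SILVER = "silver"        # AI pre-label generated
    IN_REVIEW = "in_review"  # opened by a human reviewer
    GOLD = "gold"            # approved by the expert


# Each stage may only move to the next one in the pipeline.
ALLOWED_TRANSITIONS: Dict[Stage, Set[Stage]] = {
    Stage.INGESTED: {Stage.SILVER},
    Stage.SILVER: {Stage.IN_REVIEW},
    Stage.IN_REVIEW: {Stage.GOLD},
    Stage.GOLD: set(),
}


def advance(current: Stage, target: Stage, actor: str) -> Stage:
    """Move a study to the next stage, enforcing order and human sign-off."""
    if target not in ALLOWED_TRANSITIONS[current]:
        raise ValueError(f"cannot move from {current.value} to {target.value}")
    if target is Stage.GOLD and actor != "radiologist":
        # Human-in-the-loop: only the expert can finalize a gold label.
        raise PermissionError("only a radiologist can approve a gold label")
    return target


stage = Stage.INGESTED
stage = advance(stage, Stage.SILVER, actor="ai_agent")
stage = advance(stage, Stage.IN_REVIEW, actor="radiologist")
stage = advance(stage, Stage.GOLD, actor="radiologist")
print(stage.value)
```

Keeping the transition table as data makes it straightforward to add a quality assurance stage later without touching the enforcement logic.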

Standardizing the 'Gold Standard': Handling Inter-Observer Variability

A major challenge in medical labeling is that different doctors might label the same image differently. This is known as inter-observer variability. In some studies, the agreement rate between experts for certain types of nodules can be surprisingly low. Collaborative workflows address this by using consensus labeling. In this setup, multiple experts review the same images, and the AI helps identify the points of disagreement.

The AI can act as a neutral third party, highlighting areas where one doctor's label deviates notably from others. This prompts a discussion or a tie-breaking vote from a more senior specialist. By standardizing the labeling process through a shared workspace, teams can reduce variability and create a more reliable ground truth. This consistency is important for training AI models that are expected to perform accurately across different hospitals and patient populations. Without a collaborative platform to manage these disagreements, the resulting dataset may contain conflicting information that confuses the AI model.
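A common way to quantify how much two segmentations agree is the Dice coefficient, which is twice the overlap divided by the combined size of the two masks. The sketch below uses it to flag reader pairs for discussion. It is an illustration only: masks are modeled as sets of pixel coordinates, and the 0.7 threshold and the flag_disagreements helper are assumptions made for this example.

```python
from itertools import combinations
from typing import Dict, List, Set, Tuple

Pixel = Tuple[int, int]


def dice(a: Set[Pixel], b: Set[Pixel]) -> float:
    """Dice coefficient between two masks given as sets of pixel coordinates."""
    if not a and not b:
        return 1.0  # two empty masks agree completely
    return 2 * len(a & b) / (len(a) + len(b))


def flag_disagreements(
    masks: Dict[str, Set[Pixel]], min_dice: float = 0.7
) -> List[Tuple[str, str, float]]:
    """Return reader pairs whose overlap falls below the agreement threshold."""
    flagged = []
    for first, second in combinations(sorted(masks), 2):
        score = dice(masks[first], masks[second])
        if score < min_dice:
            flagged.append((first, second, score))
    return flagged


masks = {
    "reader_a": {(0, 0), (0, 1), (1, 0), (1, 1)},
    "reader_b": {(0, 0), (0, 1), (1, 0), (1, 1)},
    "reader_c": {(1, 1), (1, 2), (2, 1), (2, 2)},
}
for first, second, score in flag_disagreements(masks):
    print(first, second, score)
```

Here reader_c overlaps the other two readers on a single pixel, giving a Dice score of 0.25 against each, so both pairs would be routed to a senior specialist for a tie-breaking review.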

Using Fastio for Medical Image Workspaces

Fastio provides the infrastructure layer that makes collaborative medical labeling possible at scale. By combining persistent storage with intelligent agent tools, it serves as the coordination layer where human and AI work happens. Unlike static folders, Fastio workspaces are intelligent. When a DICOM file is uploaded, it is automatically indexed and made searchable. Humans use the intuitive UI to manage projects, while AI agents use the 251 available MCP (Model Context Protocol) tools to interact with the data programmatically.

In complex systems where multiple AI agents are processing data simultaneously, file locks are necessary. Fastio allows agents to acquire and release locks on specific files, preventing two agents from trying to write annotations to the same image at the same time. This prevents data corruption and ensures a stable workflow. In addition, research teams often build labeling pipelines for pharmaceutical clients. Fastio supports ownership transfer, allowing a development team to build the entire infrastructure and then hand it over to the client. The client receives a fully functional, secure workspace while the development team can maintain admin access for ongoing support. Fastio offers a free tier for developers and researchers, including 50GB of free storage, a maximum file size limit, and monthly credits.
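The acquire-and-release pattern can be illustrated with a small in-memory registry. This is a generic sketch of the locking idea and does not represent the Fastio API; the FileLockRegistry class, its method names, and the file path are hypothetical.

```python
import threading
from typing import Dict


class FileLockRegistry:
    """In-memory stand-in for a per-file lock service (illustration only)."""

    def __init__(self) -> None:
        self._owners: Dict[str, str] = {}
        self._guard = threading.Lock()  # protects the registry itself

    def acquire(self, path: str, agent_id: str) -> bool:
        """Grant the lock if the file is free or already held by this agent."""
        with self._guard:
            owner = self._owners.get(path)
            if owner is not None and owner != agent_id:
                return False
            self._owners[path] = agent_id
            return True

    def release(self, path: str, agent_id: str) -> bool:
        """Release the lock only if this agent currently holds it."""
        with self._guard:
            if self._owners.get(path) != agent_id:
                return False
            del self._owners[path]
            return True


locks = FileLockRegistry()
path = "study-001/slice-042.dcm"  # hypothetical file path

first_attempt = locks.acquire(path, "agent-a")   # agent-a takes the lock
second_attempt = locks.acquire(path, "agent-b")  # agent-b is refused
locks.release(path, "agent-a")                   # agent-a finishes writing
third_attempt = locks.acquire(path, "agent-b")   # agent-b can now proceed
print(first_attempt, second_attempt, third_attempt)
```

An agent that is refused the lock would typically wait and retry, or move on to another image, rather than writing annotations that could collide with another agent's output.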

Data Points and Benchmarks

The transition to collaborative labeling is supported by several industry benchmarks. These numbers show why the investment in human-AI collaboration is essential for modern healthcare. For example, imaging data accounts for 90% of all healthcare data, with a single MRI study often exceeding 500MB.

According to The Lancet Oncology, AI-supported screening resulted in a 44.3% reduction in the screen-reading workload for radiologists while increasing cancer detection by 20%. In addition, research published in Academic Radiology found that AI tools can reduce the time needed to assess imaging findings by 40.1%. These efficiency gains are being met with rapid regulatory adoption, as the FDA has now cleared over 1,000 AI-enabled medical devices, with radiology accounting for nearly 80% of these approvals. These metrics show that the future of medical imaging is becoming more collaborative.

Frequently Asked Questions

How is AI used in medical image labeling?

AI is used for pre-labeling or silver standard generation. It automates repetitive tasks like segmenting organs or identifying anatomical landmarks. This allows radiologists to focus on reviewing and refining complex pathology. This collaborative approach has been shown to improve labeling accuracy by 25% compared to manual methods alone.

What is the best platform for collaborative radiology review?

The best platform supports native DICOM files, provides granular permission controls, and maintains a detailed audit trail. For teams building their own tools, Fastio offers an intelligent infrastructure layer with 251 MCP tools that allow humans and AI agents to work together in shared, secure workspaces.

What are the pros and cons of AI-first vs. Human-first medical labeling?

An AI-first workflow offers speed and consistency but risks missing subtle clinical context. A human-first workflow ensures high clinical accuracy but is slow and prone to fatigue. A collaborative approach combines both, using AI for speed and humans for oversight, resulting in a significant accuracy boost and a workload reduction of up to 44.3%.

Does Fastio support strict security requirements?

Fastio provides security features including encryption, audit logs, and granular access controls. However, Fastio is not currently certified under specific regulatory frameworks or enterprise security standards. Organizations should use the platform's security tools as part of a broader, compliant data management strategy.

Can I pull medical images directly from Google Drive into Fastio?

Yes. Fastio supports URL Import, allowing you to pull data directly from Google Drive, OneDrive, Box, or Dropbox via OAuth. This avoids the need for local I/O and ensures that large DICOM files are moved quickly between cloud environments.
