How to Build a RAG Pipeline with Claude Cowork

AI agents need access to your files to answer questions accurately. A RAG pipeline in Claude Cowork connects Claude to your workspace, letting it read your documents before responding. This guide shows how to set up a retrieval-augmented generation (RAG) system without managing an external vector database. You will learn how to connect Claude to an intelligent workspace and deploy your AI agents.

The Hallucination Problem and Why a Claude Cowork RAG Pipeline is Necessary

LLMs like Claude are strong reasoners, but they lack access to your private company data. When an AI agent tries to answer questions about your documentation or policies without external context, it might invent facts. This hallucination problem makes it hard to trust AI agents with real work.

Retrieval Augmented Generation (RAG) fixes this. A RAG pipeline pulls relevant information from a knowledge base and gives it to the LLM along with your prompt. Because the model bases its answers on retrieved data, it hallucinates less. According to Vectara, RAG systems can reduce LLM hallucination rates to as low as 2.8%.

In the past, building a RAG pipeline meant wiring together many separate tools. You needed a document loader, a text chunker, an embedding model, a vector database like Pinecone, and an orchestration framework like LangChain. This setup is slow, expensive, and hard to maintain.

Claude Cowork in Fastio takes a different approach by combining storage and intelligence. Instead of building a custom RAG pipeline, you can use a workspace that automatically embeds and indexes files when you upload them. The index is ready immediately for semantic search via the Model Context Protocol (MCP).


How Native Workspace Intelligence Solves the RAG Bottleneck

A typical Claude RAG setup uses an extract, transform, and load (ETL) process that breaks easily. Each time you update or move a file, a script has to download it, parse the text, split it into chunks, generate embeddings, and update a separate database. Keeping data synchronized across systems is a major bottleneck for multi-agent workflows. It often causes agents to read outdated information.
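To make that synchronization burden concrete, here is a minimal sketch of the change-detection step a hand-built pipeline usually needs before it can re-embed anything. It is illustrative only: `find_stale_files` and the in-memory `index_state` dictionary are hypothetical names, not part of Fastio or any library.

```python
import hashlib


def content_hash(data: bytes) -> str:
    """Fingerprint file contents so that edits can be detected."""
    return hashlib.sha256(data).hexdigest()


def find_stale_files(current_files: dict[str, bytes],
                     indexed_hashes: dict[str, str]) -> list[str]:
    """Return paths that are new or changed since the last indexing run.

    current_files: path -> file bytes as they exist in storage now.
    indexed_hashes: path -> hash recorded when the file was last embedded.
    """
    stale = []
    for path, data in current_files.items():
        if indexed_hashes.get(path) != content_hash(data):
            stale.append(path)
    return sorted(stale)


# Hypothetical state: policy.md was edited after the last indexing run.
storage = {"policy.md": b"Remote work: 3 days", "faq.md": b"Q: hours?"}
index_state = {
    "policy.md": content_hash(b"Remote work: 2 days"),
    "faq.md": content_hash(b"Q: hours?"),
}
stale_paths = find_stale_files(storage, index_state)
print(stale_paths)
```

Until a job like this runs and the stale files are re-chunked and re-embedded, agents querying the external index still see the old text.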

Fastio removes this synchronization step. When you create a shared workspace and turn on Intelligence Mode, the storage layer doubles as your vector database. You no longer need to sync files with outside services.

When you upload a file to Fastio, whether through the web UI, an API call, or a URL import from Google Drive, an internal engine processes it. The system maintains the semantic index alongside the file metadata. Your Claude agents always see the current version of a document, with no polling delays or secondary pipelines.

Keeping everything in one place also fixes access control. In standard RAG setups, you have to copy your storage permissions into your vector database, which is error-prone. In Fastio, the semantic search endpoint uses the same access controls, roles, and file locks as the main storage system. AI agents can only read files they are allowed to see.
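The principle can be sketched in a few lines. This is not Fastio's implementation, which this article does not describe; the `Chunk` type, the `acl` mapping, and `visible_chunks` are hypothetical and only show what it means for search results to pass through the same permission check as storage.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Chunk:
    file_path: str
    text: str


def visible_chunks(chunks: list[Chunk], readable_paths: set[str]) -> list[Chunk]:
    """Keep only chunks that come from files the caller is allowed to read."""
    return [c for c in chunks if c.file_path in readable_paths]


# Hypothetical access list: one source of truth for both storage and search.
acl = {
    "support-agent": {"public/faq.md"},
    "hr-agent": {"public/faq.md", "hr/salaries.md"},
}
search_hits = [
    Chunk("public/faq.md", "Office hours are 9 to 5."),
    Chunk("hr/salaries.md", "Band 3 starts at ..."),
]
support_view = visible_chunks(search_hits, acl["support-agent"])
hr_view = visible_chunks(search_hits, acl["hr-agent"])
print([c.file_path for c in support_view])
```

Because the filter reads from the same access list as the storage layer, there is no second copy of the permissions to drift out of date.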

Building a RAG Pipeline with Claude Cowork: Step-by-Step

Setting up a Claude Cowork RAG system takes only a few steps. Fastio handles the embedding generation and vector storage, so you can focus on writing prompts and orchestrating your agents.

Here is how to add files to Claude Cowork for agent retrieval:

Step 1: Create a Workspace

Start by making a new Fastio workspace for your AI workflow. This shared space works for both human team members and AI agents. You can set up an agent organization and transfer ownership to a client later if needed.

Step 2: Turn on Intelligence Mode

Go to the workspace settings and turn on "Intelligence Mode". This tells Fastio to process uploaded documents, PDFs, and text files into vector embeddings in the background.

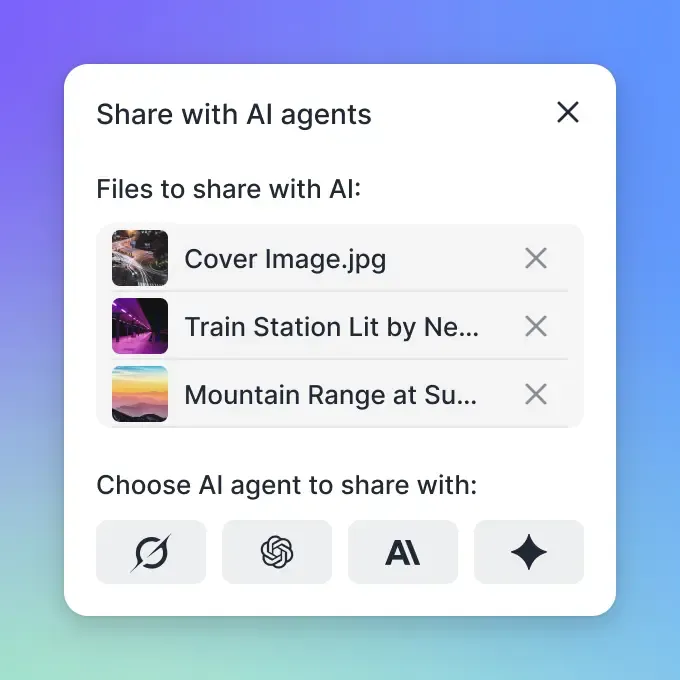

Step 3: Connect via MCP

Install the Fastio MCP (Model Context Protocol) server. You can add it to Claude Desktop or use it in your own code. The MCP server gives Claude access to tools for interacting with the workspace over Streamable HTTP or Server-Sent Events (SSE).

Step 4: Upload Files

Add your knowledge base to the workspace. You can drag and drop files, use the REST API, or use the URL import tool to pull files from Google Drive or Box. Fastio chunks and embeds the text as soon as the files arrive.

Step 5: Run Semantic Queries

Tell your Claude agent to use the search_workspace_content MCP tool. When a user asks a question, the agent searches the workspace, retrieves relevant text chunks, and writes an answer that includes file citations.
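For readers wiring this into their own code, the sketch below shows roughly what a tool invocation looks like on the wire. MCP tool calls are JSON-RPC 2.0 messages with the method `tools/call`; the tool name comes from this guide, but the argument names `query` and `limit` are assumptions made for illustration, so check the tool's published input schema before relying on them.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


payload = build_tool_call(
    1,
    "search_workspace_content",
    # Assumed argument names; the real schema may differ.
    {"query": "What is the parental leave policy?", "limit": 5},
)
print(payload)
```

In Claude Desktop or an MCP client library you normally never build this message by hand; the client discovers the tool and sends the call when the model decides to use it.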

Give Your AI Agents Persistent Storage

Create an intelligent workspace with built-in vector search. Get 50GB free storage and access to 19 consolidated tools today.

Deep Dive: The Architecture of an AI Agent RAG Pipeline

Understanding how Fastio processes data helps you structure documents for better retrieval. The system hides most of the complexity, but knowing the mechanics will improve your RAG pipeline.

1. Parsing Files

When you upload a document, Fastio checks the file type. For PDFs and presentations, the system uses OCR and layout analysis to extract text. It keeps the original structure, including headers, paragraphs, and tables, which helps preserve the meaning of the content.

2. Chunking Text

Passing an entire multi-page manual to an LLM uses up tokens and increases costs. Fastio splits large documents into smaller chunks automatically. Instead of cutting text at a fixed character limit, it chunks at natural boundaries like paragraph breaks and section headers. This keeps related thoughts together.

3. Vector Embedding

The system sends each chunk through an embedding model, converting the text into a mathematical vector. Similar concepts sit closer together in the vector space. Fastio runs this process internally, so you do not have to pay for a third-party API or deal with network delays.

4. Searching via MCP

When a user asks a question, the Claude agent generates a search query. The Fastio MCP server embeds the query and runs a similarity search against the workspace index. It finds the matching chunks, formats them with file metadata, and returns the data to Claude. The agent then reads the chunks to write its answer.
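The chunk, embed, and search stages above can be sketched end to end in plain Python. This is a toy under stated assumptions, not Fastio's engine: the "embedding" here is just a word-count vector standing in for a learned model, and every function name is hypothetical.

```python
import math
import re
from collections import Counter


def chunk_by_paragraph(text: str, max_chars: int = 400) -> list[str]:
    """Split on blank lines, then merge neighbours while they fit in max_chars.

    A single paragraph longer than max_chars is kept whole in this sketch.
    """
    paragraphs = [p.strip() for p in re.split(r"\n\s*\n", text) if p.strip()]
    chunks, current = [], ""
    for para in paragraphs:
        if current and len(current) + len(para) + 2 > max_chars:
            chunks.append(current)
            current = para
        else:
            current = f"{current}\n\n{para}" if current else para
    if current:
        chunks.append(current)
    return chunks


def embed(text: str) -> Counter:
    """Toy embedding: lowercase word counts. Real systems use a trained model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[w] * b[w] for w in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query: str, index: list[dict], top_k: int = 2) -> list[dict]:
    """Embed the query, score every chunk, and return the best matches."""
    q = embed(query)
    scored = [{**entry, "score": cosine(q, entry["vector"])} for entry in index]
    scored.sort(key=lambda e: e["score"], reverse=True)
    return scored[:top_k]


doc = (
    "Vacation policy\n\nEmployees accrue 20 vacation days per year.\n\n"
    "Expense policy\n\nSubmit expense reports within 30 days."
)
index = [
    {"file": "handbook.md", "text": c, "vector": embed(c)}
    for c in chunk_by_paragraph(doc, max_chars=70)
]
results = search("how many vacation days do employees get", index, top_k=1)
print(results[0]["file"], "->", results[0]["text"])
```

Note how each heading stays attached to the paragraph it introduces, and how the file name travels with the chunk so the answer can cite its source.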

Evidence and Benchmarks: RAG vs. Standard LLMs

A native RAG pipeline improves the quality of AI responses. When you compare standard Claude chats to a Fastio workspace setup, the RAG approach is more reliable.

Fewer Hallucinations

The main benefit of a RAG pipeline is that it reduces how often the model invents facts. When you require the LLM to answer using retrieved text instead of its general training data, the responses are grounded in your documents. Fastio includes file metadata with every chunk, letting Claude cite its sources. Team members can check these citations to verify the AI's work.

Token Optimization

Some developers try to give AI agents context by pasting entire document libraries into every prompt. Even with Claude's large context window, sending that many tokens is slow and expensive. A Claude RAG setup fetches only the paragraphs needed to answer the question. This lowers your API costs and helps the model respond faster.
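A back-of-the-envelope calculation shows the scale of the difference. The document sizes below are made up for illustration, and the four-characters-per-token ratio is only a common rule of thumb for English text, not a measured figure for Claude's tokenizer.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Very rough token estimate; real tokenizers vary by language and content."""
    return round(len(text) / chars_per_token)


# Made-up sizes: fifty documents of 40,000 characters each,
# versus five retrieved chunks of 1,200 characters each.
library = ["x" * 40_000] * 50
retrieved = ["x" * 1_200] * 5

full_prompt_tokens = sum(estimate_tokens(d) for d in library)
rag_prompt_tokens = sum(estimate_tokens(c) for c in retrieved)

print(f"whole library: ~{full_prompt_tokens:,} tokens per question")
print(f"retrieved chunks: ~{rag_prompt_tokens:,} tokens per question")
```

Under these assumptions the retrieved context is a few hundred times smaller than the full library, and that saving applies to every question asked.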

Scaling Multi-Agent Systems

As you add more specialized AI agents to your team, combining storage and search becomes more important. Fastio offers a free agent plan that includes storage and monthly credits. Because the search index lives inside the workspace, multiple agents can read from the same knowledge base and lock files for updates without overwriting each other's work.

Advanced Configuration: Optimizing Your RAG Setup for Large Document Sets

Using a Claude RAG setup with large document libraries requires planning. Fastio automates the setup, but the way you organize your workspaces changes how well the search performs.

Workspace Segmentation

Avoid putting unrelated documents into a single workspace. Just as a person would struggle to find an IT policy in a folder full of marketing assets, an embedding model works better when the search space is focused. Set up separate workspaces for different teams, like an HR Knowledge Base or an Engineering Wiki. You can then tell your Claude agents to query the specific workspace they need.

Folder Structure and Hybrid Search

Semantic search works best when paired with standard file filtering. Tell your team to use clear file names and organize folders logically. When an agent searches via MCP, it can use hybrid search to filter for documents modified recently or located in a specific directory. This narrows the search space before running the vector similarity check.
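The filter-then-rank order can be sketched as follows. This is a minimal illustration rather than the Fastio search API: `IndexedChunk`, `hybrid_search`, the paths, and the dates are all hypothetical, and the two-dimensional unit vectors are hand-made so that the dot product acts as cosine similarity.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class IndexedChunk:
    path: str
    modified: date
    text: str
    vector: tuple[float, ...]  # assumed to be unit length


def dot(a: tuple[float, ...], b: tuple[float, ...]) -> float:
    return sum(x * y for x, y in zip(a, b))


def hybrid_search(query_vector, chunks, folder, modified_after, top_k=3):
    """Filter on metadata first, then rank the survivors by vector similarity."""
    candidates = [
        c for c in chunks
        if c.path.startswith(folder) and c.modified >= modified_after
    ]
    candidates.sort(key=lambda c: dot(query_vector, c.vector), reverse=True)
    return candidates[:top_k]


chunks = [
    IndexedChunk("hr/leave.md", date(2025, 3, 1), "Current leave policy", (0.6, 0.8)),
    IndexedChunk("hr/old_leave.md", date(2021, 1, 15), "Superseded leave policy", (1.0, 0.0)),
    IndexedChunk("marketing/brand.md", date(2025, 4, 1), "Brand colours", (0.0, 1.0)),
    IndexedChunk("hr/payroll.md", date(2025, 2, 10), "Payroll calendar", (0.0, 1.0)),
]
hits = hybrid_search((1.0, 0.0), chunks, folder="hr/", modified_after=date(2024, 1, 1))
print([h.path for h in hits])
```

The superseded file has the closest vector to the query, but the date filter removes it before ranking, which is the point of combining the two kinds of search.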

Document Formatting Rules

Your RAG pipeline's output depends on its input data. To help Fastio chunk your text correctly, format your documents well:

- Use clear H1, H2, and H3 headings to mark sections.

- Format tables as markdown or text, rather than pasting them as images.

- Define acronyms in the text so the model has context.

- Keep paragraphs focused on one main topic.

Following these formatting rules makes it easier for Claude to read your files and give accurate answers.
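If your knowledge base is written in Markdown, a small pre-upload check can catch the most common problems. The sketch below is one possible approach, not a Fastio feature; `lint_document` and its 1,200-character paragraph threshold are arbitrary choices made for illustration.

```python
import re


def lint_document(markdown_text: str, max_paragraph_chars: int = 1200) -> list[str]:
    """Return warnings for formatting that tends to hurt chunking and retrieval."""
    warnings = []
    # Rule: use H1-H3 headings to mark sections.
    if not re.search(r"^#{1,3} \S", markdown_text, flags=re.MULTILINE):
        warnings.append("no H1-H3 headings found")
    # Rule: tables should be text, so flag images for a manual check.
    if re.search(r"!\[[^\]]*\]\([^)]*\)", markdown_text):
        warnings.append("contains images; check that no table is embedded as an image")
    # Rule: keep paragraphs focused, approximated here by a length limit.
    for para in re.split(r"\n\s*\n", markdown_text):
        if len(para) > max_paragraph_chars:
            warnings.append(f"paragraph longer than {max_paragraph_chars} characters")
    return warnings


good = "# Leave Policy\n\nEmployees accrue 20 days per year."
bad = "Intro text with no heading\n\n![pricing table](pricing.png)"
print(lint_document(good))
print(lint_document(bad))
```

A check like this cannot tell whether acronyms are defined or whether a paragraph really covers one topic, so treat it as a first pass before a human review.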

Frequently Asked Questions

How do I build a RAG pipeline for Claude?

Create a workspace in Fastio and turn on Intelligence Mode. Upload your documents, then connect your Claude agent using the Model Context Protocol (MCP) server. Fastio handles the vector embedding and indexing, which lets Claude run semantic searches without a separate vector database.

Can Claude Cowork index large document sets?

Yes. Fastio workspaces process documents in the background, chunking and embedding files as you upload them. Agents can search through gigabytes of data to find specific text blocks, rather than trying to load every file into the LLM's context window at once.

What is the difference between RAG and fine-tuning for Claude?

RAG pulls information from a knowledge base to add context to Claude's prompt, which works well for changing facts and documentation. Fine-tuning changes the model's weights to adapt its tone or writing style. For keeping answers current with your own documents, RAG is usually cheaper and faster to set up, and it is better suited to reducing hallucinations.

How much does the Claude RAG pipeline cost in Fastio?

Fastio has a free agent plan that includes storage and monthly credits. You can build and test your Claude RAG setup without adding a credit card. Paid plans are available if you need more storage or compute usage later.
