How to Choose the Best MCP Servers for Data Analysis

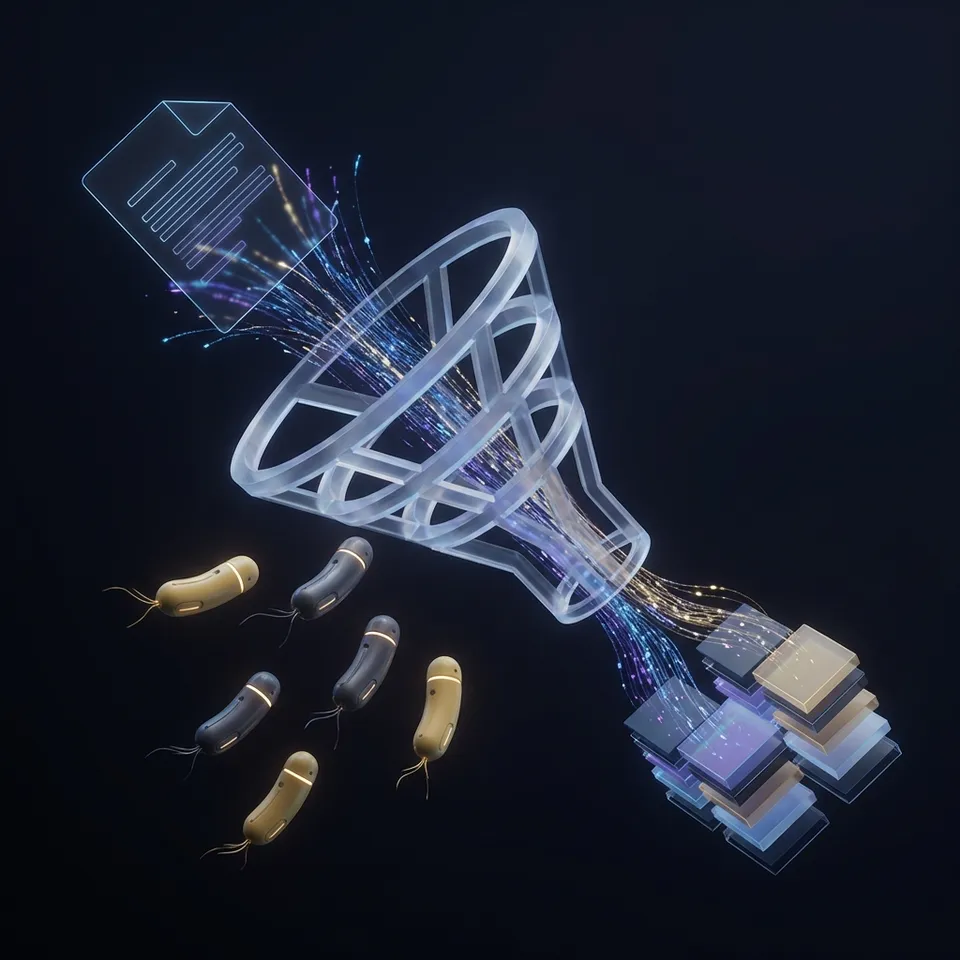

Data analysis MCP servers allow LLMs to directly query databases and execute analysis scripts securely. By connecting tools like SQLite, DuckDB, and Python to your AI agent, you transform simple chatbots into capable data analysts. This guide compares the best MCP servers for handling structured data, running SQL queries, and managing analysis workflows.

Top Data Analysis MCP Servers Compared

Choosing the right Model Context Protocol (MCP) server depends on your data volume, complexity, and environment. The sections below compare the leading options available today.

While database servers handle the querying, a storage layer is essential for managing datasets, reports, and logs. Most effective data analysis agents combine these tools: for example, Fastio for storage and DuckDB for querying Parquet files.


Why Use MCP for Data Analysis?

MCP servers solve the context window problem for data science. Instead of pasting CSV rows into a chat prompt (expensive and limited), an MCP server lets the AI execute SQL queries or Python scripts against the data where it lives.

Direct Query Execution

The agent generates SQL, sends it to the MCP server, and gets back only the results. This keeps token usage low, and the full dataset stays in your infrastructure: only the query results are sent to the model.

Iterative Exploration

Agents can explore schemas, sample data, and refine queries in a loop. If a query fails, the agent reads the error message and corrects the SQL on its next attempt.
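The query-repair loop described above can be sketched in a few lines. This is a minimal illustration, not how any particular MCP client implements it: `propose_fix` is a hypothetical callback standing in for the LLM, receiving the failing SQL plus the database's error text and returning a revised query. SQLite from Python's standard library plays the role of the database behind the MCP server.

```python
import sqlite3
from typing import Callable


def query_with_repair(
    conn: sqlite3.Connection,
    sql: str,
    propose_fix: Callable[[str, str], str],  # hypothetical stand-in for the LLM
    max_attempts: int = 3,
) -> list[tuple]:
    """Run `sql`; on failure, pass the error text back and retry the revised query."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return conn.execute(sql).fetchall()
        except sqlite3.Error as exc:
            if attempt == max_attempts:
                raise  # out of attempts: surface the last database error
            # The error message is what the agent "reads" before rewriting the query
            sql = propose_fix(sql, str(exc))
    return []  # not reached: the loop either returns or raises


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER, amount REAL)")
conn.executemany("INSERT INTO orders VALUES (?, ?)", [(1, 10.0), (2, 32.5)])


def fake_llm_fix(bad_sql: str, error: str) -> str:
    # A real agent would send `error` to the model; here we just patch the typo
    return bad_sql.replace("amout", "amount")


print(query_with_repair(conn, "SELECT SUM(amout) FROM orders", fake_llm_fix))
# [(42.5,)]
```

Capping the number of attempts matters in practice: without it, a model that keeps producing the same broken query would loop indefinitely.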

Secure Access Control

With an MCP server, you grant the LLM specific tools (like read_query or list_tables) instead of raw database credentials or unrestricted network access.

1. Fastio MCP Server: The Foundation

Before you can query data, you need a place to store it. Fastio provides the persistent storage layer that stateless AI agents lack. It serves as the file system for your data analysis workflows, allowing agents to upload datasets, save charts, and store logs.

Why it works for data analysis:

- 19 consolidated tools: More file operations than any other MCP server.

- Built-in RAG: Intelligence Mode indexes documents automatically. An agent can ask, "What are the key trends in this Q3 report?" and get cited answers without writing SQL.

- Persistent Storage: Agents get 50GB of free storage to keep datasets and results between sessions.

- Universal Import: Agents can pull files from URLs or other cloud storage directly, skipping local downloads.

For data teams, Fastio acts as the "staging area" where raw data lands before being processed by compute-heavy engines like DuckDB or Python.

Give Your AI Agents Persistent Storage

Fastio gives you usage-based cloud storage built for data analysis with MCP servers. Get streaming previews, AI-powered search, and unlimited guest sharing. Start with a free account, no credit card required.

2. DuckDB MCP Server: High-Performance Analytics

DuckDB is the standard for local analytical workloads. It's an in-process SQL OLAP database built for speed.

Key Strengths:

- Vectorized Execution: Processes data in batches (vectors) instead of row-by-row, making it much faster for aggregation and reporting.

- Format Support: Reads Parquet, CSV, and JSON files natively. It can query a Parquet file in place without importing it first.

- Serverless: Like SQLite, it runs embedded in the application process, so there is no database server to manage.

Use the DuckDB MCP server when your agent needs heavy aggregation (SUM, AVG, GROUP BY) on datasets ranging from a few megabytes to several gigabytes.

3. SQLite MCP Server: Lightweight Transactions

SQLite is the most deployed database engine in the world. Its MCP server works well for lightweight, transactional tasks where setup speed matters more than raw analytical throughput.

Best Use Cases:

- Configuration Management: Storing agent preferences or state.

- Simple Lookups: Querying reference tables or small lists.

- Prototyping: Quickly spinning up a relational structure to test an idea.

While SQLite handles row-based operations well, it struggles with large analytical queries compared to column-oriented stores like DuckDB. It is best used for your agent's control-plane data (state, settings, reference lookups) rather than the data plane.
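For the Configuration Management use case, a single key-value table is often enough. This is a minimal sketch using Python's built-in `sqlite3`; the `agent_state` table and the helper functions are hypothetical names, and the upsert syntax (`ON CONFLICT ... DO UPDATE`) requires SQLite 3.24 or later.

```python
import json
import sqlite3
from typing import Any


def open_state(path: str = ":memory:") -> sqlite3.Connection:
    conn = sqlite3.connect(path)
    conn.execute(
        "CREATE TABLE IF NOT EXISTS agent_state ("
        "key TEXT PRIMARY KEY, value TEXT NOT NULL)"
    )
    return conn


def set_state(conn: sqlite3.Connection, key: str, value: Any) -> None:
    # Upsert: insert the key, or overwrite its value if it already exists
    with conn:  # commits on success, rolls back on error
        conn.execute(
            "INSERT INTO agent_state (key, value) VALUES (?, ?) "
            "ON CONFLICT(key) DO UPDATE SET value = excluded.value",
            (key, json.dumps(value)),  # store values as JSON text
        )


def get_state(conn: sqlite3.Connection, key: str, default: Any = None) -> Any:
    row = conn.execute(
        "SELECT value FROM agent_state WHERE key = ?", (key,)
    ).fetchone()
    return json.loads(row[0]) if row else default


state = open_state()
set_state(state, "preferred_chart", "bar")
set_state(state, "preferred_chart", "line")  # overwrites the earlier value
set_state(state, "row_limit", 500)
print(get_state(state, "preferred_chart"), get_state(state, "row_limit"))  # line 500
```

Pointing `open_state` at a file path instead of `":memory:"` lets the state survive between agent sessions.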

4. PostgreSQL MCP Server: Production Workloads

When your data lives in a production environment with multiple users, PostgreSQL is the industry standard. The PostgreSQL MCP server connects your agent to a live database instance.

Advantages:

- Concurrency: Handles multiple read/write operations simultaneously without locking the entire database.

- Rich Ecosystem: Supports advanced data types (JSONB, GIS via PostGIS) and complex stored procedures.

- Durability: Includes Write-Ahead Logging (WAL) and point-in-time recovery for business data.

This server is ideal for "Analyst Agents" that need to answer business questions from a live data warehouse or CRM backend.

5. Python Local MCP Server: Custom Scripting

SQL can't do everything. Sometimes you need machine learning models, complex statistical analysis, or custom data visualization. The Python Local MCP server lets an agent write and execute Python code in a sandboxed environment.

Capabilities:

- DataFrames: Use pandas or polars for data manipulation that SQL struggles with.

- Visualization: Generate charts with matplotlib or seaborn and save them to Fastio storage.

- Machine Learning: Run inference using scikit-learn or other libraries in the environment.

This server handles the calculation work, often taking data from SQL MCP servers and processing it further.
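Here is a sketch of the kind of script an agent might run through the Python Local server: reshape query results with pandas, compute a per-region month-over-month change, and save a chart as a PNG. It assumes pandas and matplotlib are installed in the sandbox; the data is invented, and the upload to Fastio is left out because it depends on the storage server's own tools.

```python
import tempfile
from pathlib import Path

import matplotlib

matplotlib.use("Agg")  # headless backend: render to files, no display needed
import matplotlib.pyplot as plt
import pandas as pd

# Long-format rows, as a SQL MCP server might return them
rows = [
    ("2024-01", "east", 100.0), ("2024-01", "west", 200.0),
    ("2024-02", "east", 150.0), ("2024-02", "west", 180.0),
]
df = pd.DataFrame(rows, columns=["month", "region", "revenue"])

# Pivot to one column per region: awkward in SQL when the region list is dynamic
wide = df.pivot_table(index="month", columns="region", values="revenue", aggfunc="sum")

# Month-over-month change per region (first month has no prior value, so NaN)
growth = wide / wide.shift(1) - 1

ax = wide.plot(kind="bar", title="Revenue by region")
ax.set_ylabel("revenue")
chart_path = Path(tempfile.mkdtemp()) / "revenue_by_region.png"
ax.figure.savefig(chart_path)
plt.close(ax.figure)  # free the figure; long-running sandboxes accumulate them

print(growth.loc["2024-02"].round(2).to_dict())  # {'east': 0.5, 'west': -0.1}
```

In a real workflow the PNG at `chart_path` would be handed to the storage server, and only a short summary (like the growth figures) would go back to the model.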

How to Choose the Right Stack

Most data analysis agents don't rely on a single server. They use a stack of compatible tools.

The "Local Analyst" Stack:

- Storage: Fastio (for datasets and results)

- Compute: DuckDB (for fast SQL over Parquet/CSV)

- Logic: Python Local (for charts and formatting)

The "Enterprise Reporter" Stack:

- Storage: Fastio (for PDF reports and logs)

- Database: PostgreSQL (connecting to the company warehouse)

- Orchestrator: A specialized agent with read-only permissions

Start by installing the Fastio MCP server to handle file inputs and outputs, then add DuckDB or PostgreSQL depending on where your structured data lives. Getting started should be straightforward. A good platform lets you create an account, invite your team, and start uploading files within minutes, not days. Avoid tools that require complex server configuration or IT department involvement just to get running.

Frequently Asked Questions

Can I use multiple MCP servers at the same time?

Yes, this is the primary strength of the Model Context Protocol. You can connect your LLM client (like Claude Desktop or Cursor) to Fastio, DuckDB, and Python simultaneously. The AI selects the right tool for the specific step of the task, such as reading a file from Fastio and then querying it with DuckDB.

Is it safe to give an AI agent database access?

Using an MCP server is safer than direct connection strings because you define the tool boundaries. You can configure the MCP server to run in read-only mode or limit it to specific schemas. Always follow the principle of least privilege. Never give an agent admin or root access to a production database.
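For a PostgreSQL server, least privilege usually means a dedicated role that can only read the schemas the agent needs. The sketch below generates those statements in Python; the role, database, and schema names are placeholders, and you would run the output as an administrator (for example with psql) before giving the MCP server a connection string for the new role. Note that `default_transaction_read_only` is only a default a client can override, so the SELECT-only grants are what actually enforce the boundary.

```python
def quote_ident(name: str) -> str:
    # PostgreSQL identifier quoting: wrap in double quotes, double embedded quotes
    return '"' + name.replace('"', '""') + '"'


def readonly_role_sql(role: str, database: str, schemas: list[str]) -> list[str]:
    """Build SQL for a login role that can only SELECT from the given schemas."""
    r, db = quote_ident(role), quote_ident(database)
    stmts = [
        f"CREATE ROLE {r} LOGIN",  # configure a password or other auth separately
        f"GRANT CONNECT ON DATABASE {db} TO {r}",
    ]
    for schema in schemas:
        s = quote_ident(schema)
        stmts += [
            f"GRANT USAGE ON SCHEMA {s} TO {r}",
            f"GRANT SELECT ON ALL TABLES IN SCHEMA {s} TO {r}",
            # Also cover tables created later by the role that runs this statement
            f"ALTER DEFAULT PRIVILEGES IN SCHEMA {s} GRANT SELECT ON TABLES TO {r}",
        ]
    stmts.append(f"ALTER ROLE {r} SET default_transaction_read_only = on")
    return [stmt + ";" for stmt in stmts]


for statement in readonly_role_sql("analyst_agent", "warehouse", ["reporting"]):
    print(statement)
```

Listing schemas explicitly, rather than granting on the whole database, is what implements the "limit it to specific schemas" advice above.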

Do I need a GPU to run these MCP servers?

No, most data analysis MCP servers run efficiently on standard CPU hardware. DuckDB and SQLite are highly optimized for CPU performance. However, if your Python Local MCP server is running large deep learning models (like PyTorch or TensorFlow), a GPU would improve performance.
