How to Choose the Best Flowise Integrations (2025 Guide)

Flowise integrations link your AI models to the real world, connecting the no-code builder to external tools, APIs, and databases. With these connections, developers can build agents that do more than just chat. They can take action, store complex data, and interact with live production systems. We look at the top integrations that turn basic chatbots into autonomous agents.

Why You Need Flowise Integrations

Flowise is a strong no-code platform for building Large Language Model (LLM) apps, but its real value comes from connectivity. While the LLM provides the reasoning (the "brain"), integrations provide the "hands" and "eyes" needed for real work. Users typically connect multiple external tools per workflow to turn basic conversational interfaces into autonomous agents.

Integrations allow your AI applications to access private business data, run complex multi-step tasks, and communicate across channels like Slack or Email. Without these connections, an AI model works in a silo, limited to its training data. With them, it becomes a central engine that can manage your software stack, doing work that humans used to do manually.


Best Vector Database Integrations

Vector databases act as long-term memory for AI agents, letting them recall information from large datasets. They store text embeddings (numerical versions of data) so Flowise apps can run semantic searches and Retrieval-Augmented Generation (RAG) pipelines.
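As a rough illustration of what "semantic search over embeddings" means, the sketch below ranks stored vectors by cosine similarity to a query vector. The document ids and the 3-dimensional vectors are made up for the example; real embedding models emit hundreds or thousands of dimensions, and a vector database replaces this brute-force scan with an approximate index.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query_vec, index, k=2):
    """Return the k (doc_id, score) pairs most similar to the query, best first."""
    scored = [(doc_id, cosine_similarity(query_vec, vec)) for doc_id, vec in index.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]


# Hypothetical embeddings, invented for illustration only.
index = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "api-auth": [0.0, 0.1, 0.9],
}
query = [0.8, 0.2, 0.0]  # stand-in for the embedding of "How do I get my money back?"

for doc_id, score in top_k(query, index, k=2):
    print(doc_id, round(score, 3))
```

The query never mentions the word "refund", yet the refund document ranks first because its vector points in nearly the same direction. That is the property a RAG pipeline relies on.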

- Pinecone: Pinecone is a top choice for managed vector databases, known for speed. It handles the infrastructure, allowing developers to focus on building their AI applications.

- Best For: Enterprise production applications that need high speed and reliability.

- Key Feature: Serverless indexes that scale automatically with demand for consistent performance.

- Pricing: Free tier available for starters; usage-based pricing scales with index size and operations.

- Qdrant: Qdrant is a fast vector search engine available as both a managed cloud service and an open-source Docker container. It is written in Rust, which helps keep memory use and query latency low.

- Best For: Developers who need the flexibility to switch between self-hosted environments and managed cloud services.

- Key Feature: Strong filtering support, allowing for complex queries that combine vector similarity with standard attribute filtering.

- Pricing: Open source version is free; managed cloud pricing is published on Qdrant's website.

- Weaviate: Weaviate is an open-source vector database that lets you store both objects and vectors together. It supports modular vectorization, letting you plug in different ML models easily.

- Best For: Applications that need hybrid search, combining keyword search with vector semantic search.

- Key Feature: A flexible design with "vectorizer" modules for various media types (text, images, etc.).

- Pricing: Open source is free to run; Serverless and Enterprise cloud options are available for scaling.
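The filtering feature mentioned for Qdrant above (vector similarity combined with attribute conditions) can be sketched in a few lines. This is not any vendor's client API: `Point`, `filtered_search`, and the `must` argument are hypothetical names, and the brute-force scan stands in for the filter-aware index a real engine uses.

```python
import math
from dataclasses import dataclass, field


@dataclass
class Point:
    id: str
    vector: list
    payload: dict = field(default_factory=dict)  # arbitrary attributes stored next to the vector


def cosine(a, b):
    """Cosine similarity; returns 0.0 when either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def filtered_search(points, query_vec, must, limit=3):
    """Keep points whose payload matches every key in `must`, then rank them by similarity."""
    candidates = [p for p in points if all(p.payload.get(k) == v for k, v in must.items())]
    ranked = sorted(candidates, key=lambda p: cosine(query_vec, p.vector), reverse=True)
    return [p.id for p in ranked[:limit]]


# Invented sample data.
points = [
    Point("a", [1.0, 0.0], {"team": "support"}),
    Point("b", [0.9, 0.1], {"team": "sales"}),
    Point("c", [0.0, 1.0], {"team": "support"}),
]

print(filtered_search(points, [1.0, 0.0], must={"team": "support"}))
print(filtered_search(points, [1.0, 0.0], must={}))
```

With the `team` condition, point `b` is excluded even though it is the second-closest vector. This is how an agent can be restricted to one department's documents without maintaining a separate index per department.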

Top Storage and File Integrations

While vector databases handle semantic memory, AI agents also need persistent file storage to handle documents and images, save state, and manage large datasets that don't fit into a vector index.

- Fastio: Fastio provides secure file storage built for AI agents. Unlike standard cloud storage designed for humans, Fastio offers a programmatic interface for autonomous workflows.

- Best For: Agents needing persistent, stateful file storage and fast global access to large assets.

- Key Feature: A generous 50GB free tier and a complete MCP server with 251 tools for granular file operations.

- Pricing: Free 50GB tier for developers; paid plans available for larger teams and increased capacity.

- Amazon S3: Amazon S3 is the industry standard for object storage. Flowise connects to S3 to read documents for RAG pipelines, store generated outputs, or archive logs.

- Best For: Storing massive archives of raw data where retrieval speed is less important than durability.

- Key Feature: Designed for eleven nines (99.999999999%) of durability, with deep integration into the wider AWS ecosystem.

- Pricing: Pay-as-you-go model based on storage used and retrieval requests.

Give Your AI Agents Persistent Storage

Stop building amnesiac bots. Fastio gives your AI agents 50GB of persistent, fast global storage for free. Build smarter, stateful workflows today.

Essential Automation Integrations

Automation tools connect your agent to thousands of business applications to perform real-world actions without writing custom API code for each one.

- Zapier: Zapier connects Flowise to over 6,000 apps. You can trigger a Zap from a Flowise chat to update a CRM, or use Flowise as a step in a larger Zapier workflow.

- Best For: Quickly connecting to mainstream apps without needing native API integration support.

- Key Feature: A large library of pre-built integrations that covers almost every popular SaaS tool.

- Pricing: Free limited plan; paid plans for multi-step Zaps are listed on Zapier's pricing page.

- n8n: n8n is a workflow automation tool that offers a "fair-code" alternative to Zapier. It provides a node-based visual editor that allows for complex logic, branching, and data transformation.

- Best For: Building complex, custom workflows that require detailed data transformation and logic.

- Key Feature: A visual workflow editor that includes code execution nodes for maximum flexibility.

- Pricing: Free to self-host; managed cloud plans are listed on n8n's pricing page.
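Both tools can start a workflow from an inbound HTTP POST (a webhook trigger), which is one common way to hand off from a chat to an automation. The sketch below only builds such a request without sending it. The URL and the `event`/`data` field names are placeholders invented for the example, since the real payload shape is whatever your Zap or n8n workflow is configured to expect.

```python
import json
import urllib.request


def build_webhook_request(webhook_url, event, data):
    """Build (but do not send) a JSON POST for a webhook-triggered workflow."""
    body = json.dumps({"event": event, "data": data}).encode("utf-8")
    return urllib.request.Request(
        webhook_url,
        data=body,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder URL and fields; substitute the webhook address your automation tool gives you.
req = build_webhook_request(
    "https://example.com/hooks/crm-update",
    "lead.captured",
    {"email": "ada@example.com", "source": "flowise-chat"},
)

print(req.get_method(), req.full_url)
print(req.data.decode("utf-8"))

# To actually fire the workflow you would call:
#   urllib.request.urlopen(req, timeout=10)
```

Keeping request construction separate from sending makes the payload easy to inspect and test before it touches a production CRM.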

Communication and Tool Integrations

These integrations control the interface of your agent (how users talk to it) and the tools it can use to gather information or process data.

- Slack: Running your Flowise bot on Slack allows teams to interact with AI directly within their daily channels. It makes the agent a team member that can answer queries or trigger workflows from a chat.

- Best For: Internal team productivity bots, IT support agents, and knowledge base retrieval.

- Key Feature: Real-time event subscription API that lets the bot respond instantly to mentions or keywords.

- Pricing: Free to integrate; requires a paid Slack workspace for advanced app features.

- Discord: Discord is similar to Slack but built for communities. A Flowise Discord bot can moderate chats, onboard new users, or answer community questions around the clock.

- Best For: Community management, public-facing support bots, and gaming communities.

- Key Feature: Support for rich media embeds and slash commands for structured interactions.

- Pricing: Free to build and deploy bots; server limits apply based on Discord's tiers.

- SerpApi: SerpApi gives your agent real-time access to Google Search results. This is important for agents that need to answer questions about current events or fetch up-to-date data not in their training set.

- Best For: Research agents, market analysis bots, and fact-checking applications.

- Key Feature: Scrapes and normalizes search results from Google, Bing, and other engines into clean JSON.

- Pricing: Free limited tier; paid plans for higher throughput are listed on SerpApi's pricing page.

- Apify: Apify is a platform for web scraping and automation. Integrating Apify lets your agent scrape websites, extract structured data, and feed that content directly into your Flowise chains for processing.

- Best For: Monitoring competitor websites, aggregating news content, and dataset creation.

- Key Feature: A large store of pre-built "Actors" (scrapers) for popular websites like Instagram, Amazon, and Google Maps.

- Pricing: Free tier with $5 monthly credit; paid plans are listed on Apify's pricing page.

- OpenAI: While OpenAI is primarily a model provider, its integration in Flowise is key. It supports the full range of GPT models and the Assistants API, which includes built-in tools like Code Interpreter and File Search.

- Best For: General-purpose reasoning, creative generation, and complex logic handling.

- Key Feature: Access to the latest GPT models and function calling capabilities.

- Pricing: Pay-as-you-go based on token usage.
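To make the Slack item above more concrete, here is a minimal sketch of the glue between a Slack event and a Flowise chatflow. It assumes Slack's Events API payload shape (`url_verification` and `event_callback` with an `app_mention` event) and Flowise's prediction endpoint (`/api/v1/prediction/<chatflow-id>` with a `question` field). The function names, host, and chatflow id are hypothetical, and the request is built but not sent.

```python
import json
import re
import urllib.request

MENTION = re.compile(r"<@[A-Z0-9]+>")  # Slack encodes user mentions as <@USERID>


def challenge_response(payload):
    """Slack verifies a new endpoint by sending a challenge that must be echoed back."""
    if payload.get("type") == "url_verification":
        return payload.get("challenge")
    return None


def extract_question(payload):
    """Return the text of an app_mention event with mentions stripped, else None."""
    if payload.get("type") != "event_callback":
        return None
    event = payload.get("event", {})
    if event.get("type") != "app_mention":
        return None
    return MENTION.sub("", event.get("text", "")).strip() or None


def build_prediction_request(base_url, chatflow_id, question):
    """Build (but do not send) a POST to a Flowise chatflow's prediction endpoint."""
    url = f"{base_url.rstrip('/')}/api/v1/prediction/{chatflow_id}"
    body = json.dumps({"question": question}).encode("utf-8")
    return urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )


# Invented sample event; the host and chatflow id below are placeholders.
slack_payload = {
    "type": "event_callback",
    "event": {"type": "app_mention", "text": "<@U0LAN0Z89> what is our refund policy?", "channel": "C123"},
}
question = extract_question(slack_payload)
request = build_prediction_request("http://localhost:3000", "my-chatflow-id", question)
print(question)
print(request.full_url)
```

A production bot would also verify Slack's request signature and post the chatflow's answer back to the channel. Both steps are omitted here to keep the sketch short.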

Common Flowise Integration Patterns

Understanding individual tools is important, but the real value comes from combining them into proven patterns. Here are three common ways developers combine these integrations:

The RAG Pipeline (Retrieval-Augmented Generation): This is the most common pattern for business data. You connect a PDF Loader to ingest documents, OpenAI Embeddings to vectorize them, and a Pinecone vector database to store them. When a user asks a question, Flowise retrieves relevant chunks from Pinecone and feeds them to the LLM. This allows the AI to answer accurately based on your private data.

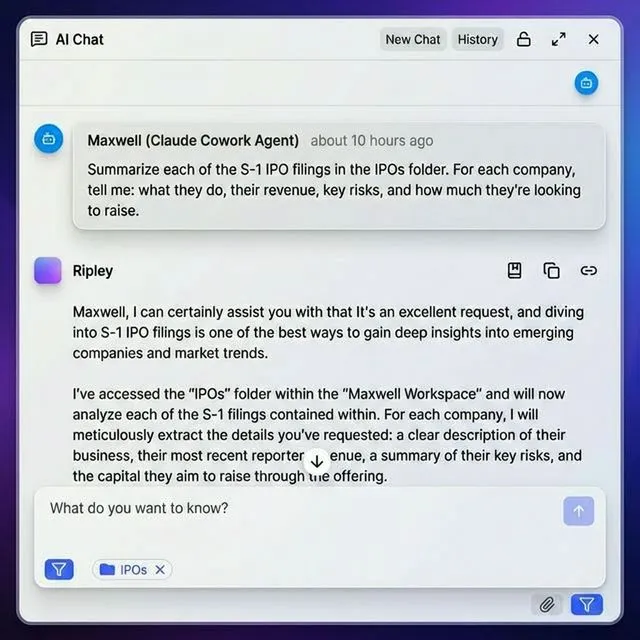
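The RAG flow described above boils down to three steps: split documents into chunks, pick the chunks most relevant to the question, and place them in the prompt. The sketch below walks through those steps in plain Python. It uses simple word overlap as a stand-in for embedding similarity, so it shows the pipeline shape rather than what Flowise, OpenAI Embeddings, or Pinecone do internally; all function names and sample texts are made up.

```python
import re


def chunk_text(text, size=200, overlap=40):
    """Split text into character windows of `size` that overlap by `overlap` characters."""
    if not 0 <= overlap < size:
        raise ValueError("overlap must be non-negative and smaller than size")
    if not text:
        return []
    step = size - overlap
    # Stop before a window would start inside the previous window's final overlap.
    return [text[i:i + size] for i in range(0, max(len(text) - overlap, 1), step)]


def words(text):
    """Lowercased alphanumeric tokens, punctuation dropped."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def relevance(question, chunk):
    """Toy relevance score: shared words (a real pipeline compares embeddings)."""
    return len(words(question) & words(chunk))


def build_prompt(question, chunks, k=2):
    """Select the k most relevant chunks and assemble the prompt sent to the LLM."""
    best = sorted(chunks, key=lambda c: relevance(question, c), reverse=True)[:k]
    context = "\n---\n".join(best)
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {question}"


# Invented sample knowledge base, already split into chunks.
kb = [
    "Refunds are issued within 14 days.",
    "Shipping takes 3 to 5 days.",
    "Support is open on weekdays.",
]
print(chunk_text("abcdefghij", size=4, overlap=1))
print(build_prompt("How many days do refunds take?", kb, k=2))
```

The overlap between neighboring chunks reduces the chance that a sentence answering the question is cut in half at a chunk boundary.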

The Autonomous Task Agent: In this pattern, the agent has a goal rather than a question. You might connect a GPT model as the brain, SerpApi to research a topic, and Fastio to save the research report as a file. The agent loops through "Thought -> Action -> Observation" cycles until it finishes the task, using the tools as needed.
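The "Thought -> Action -> Observation" cycle can be sketched as a small loop. In this example the LLM is replaced by a scripted policy and the tools are local stubs, so every name here (`run_agent`, `scripted_policy`, `search`, `save_file`) is hypothetical and nothing calls SerpApi, Fastio, or a model. It only illustrates the control flow an agent framework runs for you.

```python
def run_agent(goal, policy, tools, max_steps=5):
    """Loop Thought -> Action -> Observation until the policy chooses 'finish'."""
    history = []
    for _ in range(max_steps):
        thought, action, arg = policy(goal, history)
        if action == "finish":
            return arg, history
        observation = tools[action](arg)  # run the chosen tool
        history.append((thought, action, observation))
    raise RuntimeError("agent did not finish within max_steps")


saved_files = {}  # in-memory stand-in for a file storage integration


def search(query):
    """Stub for a web search tool; returns canned notes."""
    return f"notes about {query}"


def save_file(arg):
    """Stub for a storage tool; arg is a (name, content) pair."""
    name, content = arg
    saved_files[name] = content
    return f"saved {name}"


def scripted_policy(goal, history):
    """Stand-in for the LLM: research first, then save, then stop."""
    if not history:
        return ("I need sources first.", "search", goal)
    if len(history) == 1:
        return ("I have notes; save the report.", "save_file", ("report.md", history[0][2]))
    return ("The report is saved.", "finish", "report.md")


tools = {"search": search, "save_file": save_file}
result, history = run_agent("Flowise integrations", scripted_policy, tools)
print(result)
for thought, action, observation in history:
    print(f"{thought} -> {action} -> {observation}")
```

The `max_steps` cap is worth keeping in any real agent: without it, a model that never decides to finish will keep calling paid tools indefinitely.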

The Human-in-the-Loop Workflow: Sometimes AI needs approval. You can build a chain where the AI drafts an email or report, but before sending, it pushes the draft to a Slack channel. A human reviews it and clicks a button (via Zapier) to approve the final action. This combines AI speed with human oversight.
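The key property of the human-in-the-loop pattern is that the final action cannot run until a person has approved it. A minimal sketch of that gate follows, with hypothetical names (`Draft`, `review`, `send`) and a plain callback standing in for the Slack button and the email integration.

```python
from dataclasses import dataclass
from enum import Enum


class Status(Enum):
    PENDING = "pending"
    APPROVED = "approved"
    REJECTED = "rejected"
    SENT = "sent"


@dataclass
class Draft:
    body: str
    status: Status = Status.PENDING


def review(draft, approved):
    """Record the human decision; only pending drafts can be reviewed."""
    if draft.status is not Status.PENDING:
        raise ValueError("draft has already been reviewed")
    draft.status = Status.APPROVED if approved else Status.REJECTED
    return draft


def send(draft, deliver):
    """Deliver the draft only if a human approved it."""
    if draft.status is not Status.APPROVED:
        raise PermissionError("draft has not been approved")
    deliver(draft.body)
    draft.status = Status.SENT
    return draft


outbox = []  # stand-in for the real email or messaging integration
draft = Draft("Hi team, the Q3 report is attached.")
review(draft, approved=True)   # in practice this is triggered by the button click
send(draft, outbox.append)
print(draft.status.value, outbox)
```

Placing the check inside `send` rather than in the calling code means no workflow branch can skip the approval step by accident.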

How to Choose the Right Integration

Selecting the right integration stack depends on the problem you are solving and your team's constraints.

For Accuracy and Context: If your agent lacks specific knowledge, prioritize a Vector Database like Pinecone or Weaviate. This gives your agent a reliable "long-term memory" of your business facts.

For Action and Automation: If your agent gives good advice but can't do anything, look to Zapier or n8n. These are the "hands" that let the agent press buttons in other software.

For File Handling and State: If your agent struggles with large documents or needs to share files, Fastio provides the persistent storage layer often missing from ephemeral LLM chains.

Start with the user problem. Apps with integrations handle far more use cases than standalone bots because they participate in the actual work rather than just commenting on it.

Frequently Asked Questions

What is the best vector database for Flowise?

Pinecone is generally considered the best managed option for production due to its ease of use, speed, and serverless scalability. However, Qdrant and Weaviate are excellent choices if you need open-source flexibility or self-hosting capabilities.

Can Flowise connect to local files?

Flowise runs in a container or Node environment, so accessing local files can be tricky and unreliable in production. It is much better to use cloud storage integrations like Fastio or S3 to ensure your agent can reliably access and share files from anywhere.

Is Flowise free to use?

Yes, Flowise is open-source (Apache 2.0 license) and free to self-host on your own infrastructure. You only pay for the hosting costs and the API usage fees for the models (like OpenAI) and third-party tools you integrate.

How do I add custom tools to Flowise?

Flowise allows you to create custom tools using JavaScript or TypeScript. You can write a custom function that calls any external API and then expose that function as a named tool that your LLM agent can use within the visual builder.

Does Flowise support multi-agent workflows?

Yes, Flowise supports multi-agent architectures in which a Supervisor coordinates a set of Worker agents. You can build individual agents with specific tools and integrations, and then orchestrate them to handle different parts of a complex task.

What is the difference between Zapier and n8n for Flowise?

Zapier is easier to use with more pre-built integrations, making it ideal for simple connections. n8n is more powerful, supporting complex logic and data transformation, and can be self-hosted, making it better for advanced or privacy-focused workflows.

How secure are Flowise integrations?

Security depends on how you manage your API keys and credentials. Flowise encrypts credentials stored in its database. For high-security environments, self-hosting Flowise allows you to keep all data and connections within your own private network.
