9 Best ClawHub Skills for Data Engineering

Data engineering teams turn to ClawHub for OpenClaw skills that help with ETL monitoring, schema changes, and data validation. With these skills, agents can take over routine pipeline checks, which cuts manual monitoring and debugging time. This list ranks nine ClawHub skills for data engineering by relevance, installs, and ease of integration. Each entry covers use cases such as pipeline monitoring and ETL automation with OpenClaw, along with setup details and limitations.

Why Data Engineers Use ClawHub Skills

Data engineering involves repetitive tasks, and agents equipped with ClawHub skills can automate many of them. Pipeline failures happen at 2 a.m., schema drift breaks jobs, and data quality slips lead to bad reports.

ClawHub skills provide autonomous capabilities for SQL querying, filesystem management, cloud storage, and database operations. Data engineers install skills once. Agents then handle monitoring or fixes via natural language.

Most data teams start with SQL and file management tools. ClawHub fills gaps where traditional schedulers like Airflow fall short on reasoning or adaptation.


How We Selected These ClawHub Skills

We reviewed ClawHub skills against the following criteria:

- Relevance to data tasks: ETL, schema, quality, lineage

- Ease of integration with OpenClaw

- Community support and updates

- Real-world use cases from data teams

Only skills with proven data engineering applications made the list. We prioritized those handling large datasets and production pipelines.

Automate Your Data Pipelines Today

Get 50GB free storage and OpenClaw MCP tools. No credit card needed for agents. Built for data engineering workflows that use ClawHub skills.

ClawHub Skills Comparison Table

1. SQL Toolkit by gitgoodordietrying

Query, design, migrate, and optimize SQL databases across SQLite, PostgreSQL, and MySQL. This is the foundational skill for any data engineer working with relational systems.

Strengths:

- Schema creation and modification across three major SQL systems

- Query writing with joins, aggregations, window functions, and CTEs

- Migration script building and version tracking

- EXPLAIN-based query performance analysis and indexing strategies

- Backup and restoration procedures

- Zero-setup SQLite prototyping with CSV import/export

Limitations:

- Requires sqlite3, psql, or mysql binaries installed

- Instruction-only; does not execute queries autonomously

Best for all relational database work in pipelines. Free.

Install Command: Download from ClawHub (instruction-only skill)

ClawHub Page: clawhub.ai/gitgoodordietrying/sql-toolkit
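
The query patterns listed above (CTEs, window functions, EXPLAIN-based analysis) can be tried without any server by using SQLite. The following is a minimal sketch, not taken from the skill itself: it uses Python's built-in sqlite3 module, and the table, column, and index names are hypothetical. It assumes the bundled SQLite is version 3.25 or later, which is when window functions were added.

```python
import sqlite3

# Hypothetical table and data, used only to illustrate the query patterns.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE pipeline_runs (
        job      TEXT    NOT NULL,
        run_date TEXT    NOT NULL,
        rows_out INTEGER NOT NULL
    );
    CREATE INDEX idx_runs_job_date ON pipeline_runs (job, run_date);
""")
conn.executemany(
    "INSERT INTO pipeline_runs VALUES (?, ?, ?)",
    [
        ("orders", "2024-01-01", 1000),
        ("orders", "2024-01-02", 1100),
        ("orders", "2024-01-03", 400),
        ("users", "2024-01-01", 50),
        ("users", "2024-01-02", 55),
    ],
)

# CTE + LAG window function: compare each run with the previous run
# of the same job and keep runs whose row count dropped by more than half.
DROP_QUERY = """
WITH with_prev AS (
    SELECT job, run_date, rows_out,
           LAG(rows_out) OVER (PARTITION BY job ORDER BY run_date) AS prev_rows
    FROM pipeline_runs
)
SELECT job, run_date, rows_out, prev_rows
FROM with_prev
WHERE prev_rows IS NOT NULL AND rows_out < 0.5 * prev_rows
ORDER BY job, run_date
"""
suspicious = conn.execute(DROP_QUERY).fetchall()
print(suspicious)  # [('orders', '2024-01-03', 400, 1100)]

# EXPLAIN QUERY PLAN shows whether a filter on job can use the index.
plan = conn.execute(
    "EXPLAIN QUERY PLAN SELECT * FROM pipeline_runs WHERE job = ?",
    ("orders",),
).fetchall()
for row in plan:
    print(row[-1])  # last column holds the human-readable plan detail
```

The same SQL should carry over to PostgreSQL and MySQL 8 with little change, although the plan output format differs between engines.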


2. Fastio DataHub (dbalve/fast-io)

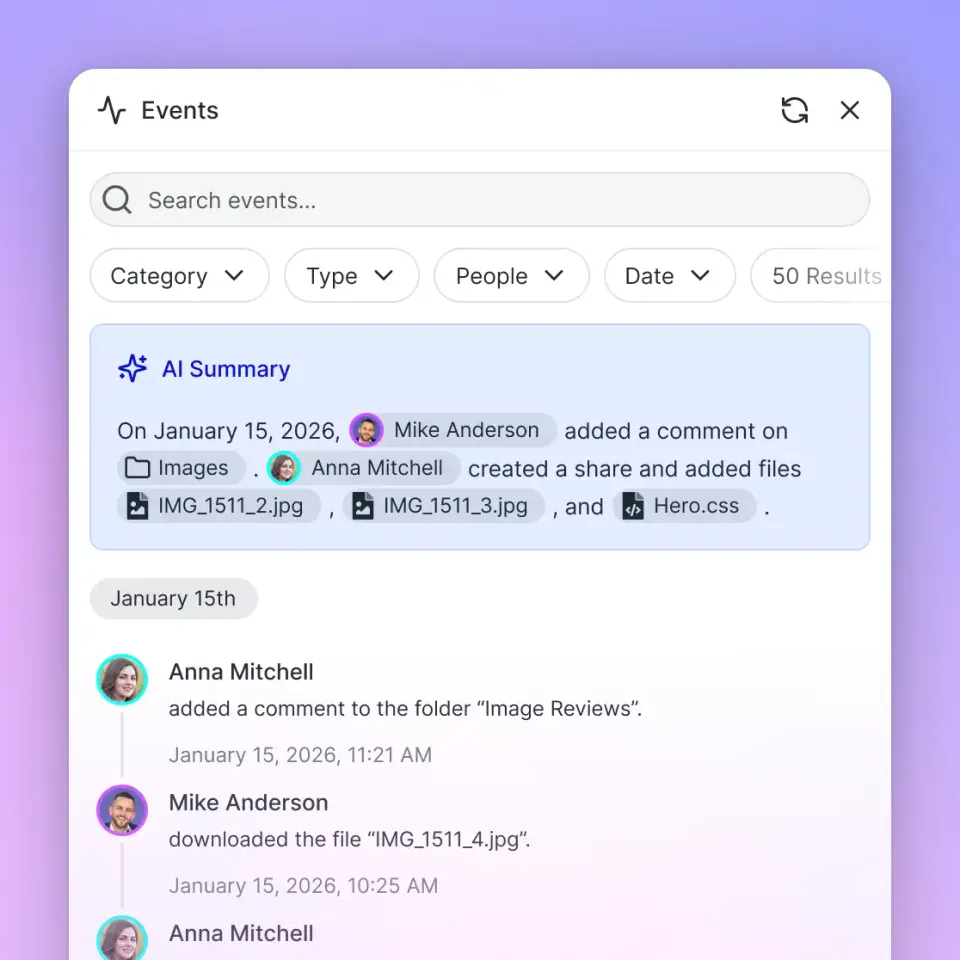

Intelligent workspaces for agentic teams with 19 MCP tools for file storage, RAG, and collaboration. Data engineers use it to store datasets, pipeline logs, and schemas — agents upload via natural language and query semantically.

Strengths:

- 50GB free storage with 5,000 monthly credits

- RAG-powered AI chat for querying stored schemas and logs

- Webhooks to trigger downstream pipeline steps

- Ownership transfer to human stakeholders

- File locks prevent conflicts in multi-agent pipelines

Limitations:

- Storage-focused, no compute

- Requires a free Fastio account and API key

Best for persistent data storage in agent pipelines. Free agent tier.

Install Command: clawhub install dbalve/fast-io

ClawHub Page: clawhub.ai/dbalve/fast-io

3. S3 by ivangdavila

Work with S3-compatible object storage covering security best practices, lifecycle policies, and access patterns. Supports AWS S3, Cloudflare R2, Backblaze B2, and MinIO.

Strengths:

- Presigned URL generation for secure data sharing

- Lifecycle rules for cost-efficient dataset archival

- Multipart upload handling for large files

- CORS configuration for browser-based data access

- Provider-specific guidance for AWS, R2, B2, and MinIO

Limitations:

- Instruction-only; requires an S3-compatible backend

- No built-in querying or indexing

Best for cheap, scalable archival of large datasets. Free.

Run a small pilot with a test bucket first, then expand while verifying lifecycle rules and access controls. This keeps migration risk low.

Install Command: Download from ClawHub (instruction-only skill)

ClawHub Page: clawhub.ai/ivangdavila/s3
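
Two of the patterns above, multipart uploads and lifecycle rules, come down to a little arithmetic and a small configuration document. The sketch below is illustrative and not part of the skill. It assumes AWS S3's documented multipart limits (5 MiB minimum part size except for the last part, at most 10,000 parts) and the rule layout accepted by AWS lifecycle configuration. Other S3-compatible providers may use different limits or storage class names, and the function names, rule ID, and prefix are hypothetical.

```python
MIB = 1024 ** 2
MIN_PART = 5 * MIB      # AWS S3 minimum part size (the last part may be smaller)
MAX_PARTS = 10_000      # AWS S3 maximum number of parts per multipart upload


def ceil_div(a: int, b: int) -> int:
    """Integer ceiling division."""
    return (a + b - 1) // b


def plan_part_size(total_bytes: int, preferred: int = 64 * MIB) -> tuple[int, int]:
    """Return (part_size, part_count) that respects the multipart limits."""
    if total_bytes <= 0:
        raise ValueError("total_bytes must be positive")
    # Grow the part size if the preferred size would need more than MAX_PARTS parts.
    part_size = max(preferred, MIN_PART, ceil_div(total_bytes, MAX_PARTS))
    return part_size, ceil_div(total_bytes, part_size)


def archive_rule(rule_id: str, prefix: str, transition_days: int, expire_days: int) -> dict:
    """Build a lifecycle configuration that archives, then expires, objects under prefix.

    "GLACIER" is an AWS storage class name; other providers may not support it.
    """
    if expire_days <= transition_days:
        raise ValueError("expire_days must be greater than transition_days")
    return {
        "Rules": [
            {
                "ID": rule_id,
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [{"Days": transition_days, "StorageClass": "GLACIER"}],
                "Expiration": {"Days": expire_days},
            }
        ]
    }


print(plan_part_size(1024 ** 3))  # (67108864, 16): a 1 GiB file in 64 MiB parts
print(archive_rule("archive-raw", "raw/", 30, 365))
```

With boto3 installed, a dictionary like this would typically be passed as the `LifecycleConfiguration` argument of `put_bucket_lifecycle_configuration`. Trying it against a test bucket first fits the pilot approach described above.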

4. Filesystem Management by gtrusler

Advanced filesystem operations — smart listing with filtering, full-text content search, batch processing with dry-run preview, and directory tree analysis. Useful for processing local pipeline output files and log directories.

Strengths:

- Pattern matching and full-text search across log files

- Batch copy operations with safe dry-run mode

- Directory statistics for pipeline output analysis

- Recursive traversal for nested project structures

Limitations:

- Local filesystem only; no cloud storage

- Requires Node.js

Best for organizing and searching local pipeline files and logs. Free.

Install Command: clawdhub install filesystem

ClawHub Page: clawhub.ai/gtrusler/clawdbot-filesystem
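
To make the dry-run idea concrete, here is a small sketch of a batch copy with a preview mode and a plain full-text search over log files. It is written in Python for illustration only. The skill itself runs on Node.js and its actual commands and options are not shown here, so the function names and defaults below are hypothetical.

```python
import shutil
from pathlib import Path


def batch_copy(src_dir, dest_dir, pattern="*.log", dry_run=True):
    """Copy files matching pattern (recursively) from src_dir to dest_dir.

    Relative paths are preserved. With dry_run=True nothing is written;
    the planned (source, target) pairs are returned either way.
    """
    src_dir, dest_dir = Path(src_dir), Path(dest_dir)
    planned = []
    # sorted() materializes the listing before any file is copied.
    for source in sorted(src_dir.rglob(pattern)):
        if not source.is_file():
            continue
        target = dest_dir / source.relative_to(src_dir)
        planned.append((source, target))
        if not dry_run:
            target.parent.mkdir(parents=True, exist_ok=True)
            shutil.copy2(source, target)
    return planned


def grep_files(root, needle, pattern="*.log"):
    """Return (path, line_number, line) for every line containing needle."""
    hits = []
    for path in sorted(Path(root).rglob(pattern)):
        if not path.is_file():
            continue
        with path.open(encoding="utf-8", errors="replace") as handle:
            for lineno, line in enumerate(handle, start=1):
                if needle in line:
                    hits.append((path, lineno, line.rstrip("\n")))
    return hits
```

A typical flow is to call `batch_copy(src, dest)` first, review the returned pairs, and only then repeat the call with `dry_run=False`.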

5. Agent Browser by TheSethRose

A fast Rust-based headless browser CLI for scraping public data sources. Data engineers use it to pull data from dashboards, portals, and web applications that do not offer APIs.

Strengths:

- Handles JavaScript-rendered pages

- Network request interception for capturing API calls

- Session state persistence across scraping runs

- Screenshot and PDF capture for archiving

Limitations:

- Heavier resource footprint than simple HTTP

- Requires careful permission scoping

Best for extracting data from web-based sources without APIs. Free.

Install Command: npm install -g agent-browser && agent-browser install

ClawHub Page: clawhub.ai/TheSethRose/agent-browser

6. Brave Search by steipete

Web search and content extraction without a browser. Data engineers use it to discover public datasets, check documentation, and find API references.

Strengths:

- Lightweight headless search

- Returns readable markdown from pages

- No browser overhead

Limitations:

- Cannot handle JavaScript-rendered content

- Not suitable for structured data extraction

Best for data source discovery and documentation lookup. Free.

Install Command: cd ~/Projects/agent-scripts/skills/brave-search && npm ci

ClawHub Page: clawhub.ai/steipete/brave-search

7. GitHub by steipete

Interact with GitHub using the gh CLI. Data engineers use it to manage pipeline code repositories, create issues for failed jobs, review pull requests for schema changes, and monitor CI run status.

Strengths:

- Full PR and issue management

- CI run inspection with failed step details

- Advanced GitHub API queries with jq filtering

Limitations:

- Requires gh CLI pre-installed and authenticated

- Instruction-only skill

Best for version-controlling pipeline code and automating CI feedback. Free.

Install Command: Download from ClawHub (requires gh CLI)

ClawHub Page: clawhub.ai/steipete/github
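
As an illustration of CI run inspection, the sketch below wraps two gh commands, `gh run list --json ...` and `gh run view <id> --log-failed`, in Python. It is not taken from the skill. It assumes gh is installed and authenticated and that it runs inside a repository checkout, and the helper names are hypothetical. The filtering is done in Python here; the same filter could be expressed with gh's `--jq` flag.

```python
import json
import subprocess

# JSON fields requested from `gh run list`.
RUN_FIELDS = "databaseId,workflowName,headBranch,conclusion"


def fetch_recent_runs(limit=20):
    """Return recent workflow runs as a list of dicts (requires an authenticated gh CLI)."""
    result = subprocess.run(
        ["gh", "run", "list", "--limit", str(limit), "--json", RUN_FIELDS],
        capture_output=True,
        text=True,
        check=True,
    )
    return json.loads(result.stdout)


def failed_runs(runs):
    """Keep only runs whose conclusion is 'failure'."""
    return [run for run in runs if run.get("conclusion") == "failure"]


def failed_log_command(run):
    """Build the gh command that prints logs for the failed steps of a run."""
    return ["gh", "run", "view", str(run["databaseId"]), "--log-failed"]
```

An agent or script could call `failed_runs(fetch_recent_runs())` after a deployment and pass each result to `failed_log_command` to collect the failing step output for an issue report.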

8. Docker Essentials by ClawHub

Essential Docker commands and workflows for container management, image operations, and debugging. Data engineers running containerized pipelines use this skill to manage container lifecycle, inspect logs, and orchestrate multi-container workflows via Docker Compose.

Strengths:

- Container lifecycle management (run, stop, remove)

- Docker Compose multi-container orchestration

- Network and volume management

- System cleanup utilities

Limitations:

- Instruction-only; requires Docker CLI installed

- No Kubernetes support

Best for containerized pipeline management. Free.

Install Command: Download from ClawHub (instruction-only skill)

ClawHub Page: clawhub.ai/skills/docker-essentials
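
For log inspection in containerized pipelines, a common first step is finding which containers exited with an error. The sketch below is an illustration, not part of the skill: it parses the output of `docker ps -a --format '{{json .}}'`, which prints one JSON object per line. It assumes the `Status` field reads like `Exited (1) 2 hours ago`, as current Docker CLI versions print it. The function name and sample container names are hypothetical.

```python
import json
import re

# Matches the exit code in a Status string such as "Exited (1) 2 hours ago".
EXIT_RE = re.compile(r"^Exited \((\d+)\)")


def failed_containers(ps_output: str):
    """Return (name, exit_code) for containers that exited with a non-zero code.

    ps_output is the text produced by: docker ps -a --format '{{json .}}'
    """
    failures = []
    for line in ps_output.splitlines():
        line = line.strip()
        if not line:
            continue
        info = json.loads(line)
        match = EXIT_RE.match(info.get("Status", ""))
        if match and int(match.group(1)) != 0:
            failures.append((info["Names"], int(match.group(1))))
    return failures


sample_output = "\n".join([
    '{"Names": "etl-load", "Status": "Exited (1) 2 hours ago"}',
    '{"Names": "etl-extract", "Status": "Exited (0) 3 hours ago"}',
    '{"Names": "warehouse-db", "Status": "Up 4 days"}',
])
print(failed_containers(sample_output))  # [('etl-load', 1)]
```

Each name returned can then be passed to `docker logs --tail 100 <name>` to pull the last lines before the failure.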

9. API Gateway by byungkyu

Connect to 100+ APIs — Salesforce, HubSpot, Airtable, Notion, Google Workspace, Microsoft 365, and more — with managed OAuth via Maton. Data engineers use it to pull data from SaaS platforms into pipelines without building custom OAuth flows.

Strengths:

- Single skill for 100+ data source connections

- Managed OAuth — no custom auth code

- All standard HTTP methods supported

- Multi-connection management

Limitations:

- Requires a Maton API key

- Instruction-only; agents must understand target API structure

Best for connecting pipelines to SaaS data sources. Free (Maton key required).

Install Command: Download from ClawHub, set MATON_API_KEY

ClawHub Page: clawhub.ai/byungkyu/api-gateway

Which ClawHub Skill Fits Your Team?

Start with sql-toolkit for query work and fast-io for storage. Add s3 for large-scale archival and filesystem for local log processing.

ETL-heavy teams: sql-toolkit + fast-io + api-gateway for pulling from SaaS sources. Container pipelines: add docker-essentials. Web data needs: agent-browser + brave-search.

Most teams use three to four skills together. Install via the ClawHub interface or the command listed for each skill.

Frequently Asked Questions

What are the best ClawHub skills for ETL?

SQL Toolkit covers all relational database work including migrations and query optimization. Fastio handles dataset storage and log archival with RAG. API Gateway pulls data from 100+ SaaS sources. Pair these three for a solid ETL foundation.

How do you automate data pipelines with OpenClaw?

Install ClawHub skills like SQL Toolkit for query generation and Fastio for storage. Agents watch pipelines, alert on issues, and use webhooks for event-driven triggers. Combine with GitHub for pipeline code management.

Is the Fastio skill free for data engineering?

Yes. The free agent tier includes 50GB of storage and 5,000 credits per month, with no credit card required. Install it with `clawhub install dbalve/fast-io`.

How do ClawHub skills handle large datasets?

Fastio supports files up to 1GB on the free tier with chunked uploads. For larger cold storage, the S3 skill covers lifecycle policies and multipart upload patterns for AWS S3, Cloudflare R2, Backblaze B2, and MinIO.
