How to Build AI Data Extraction Agents That Store and Organize Results

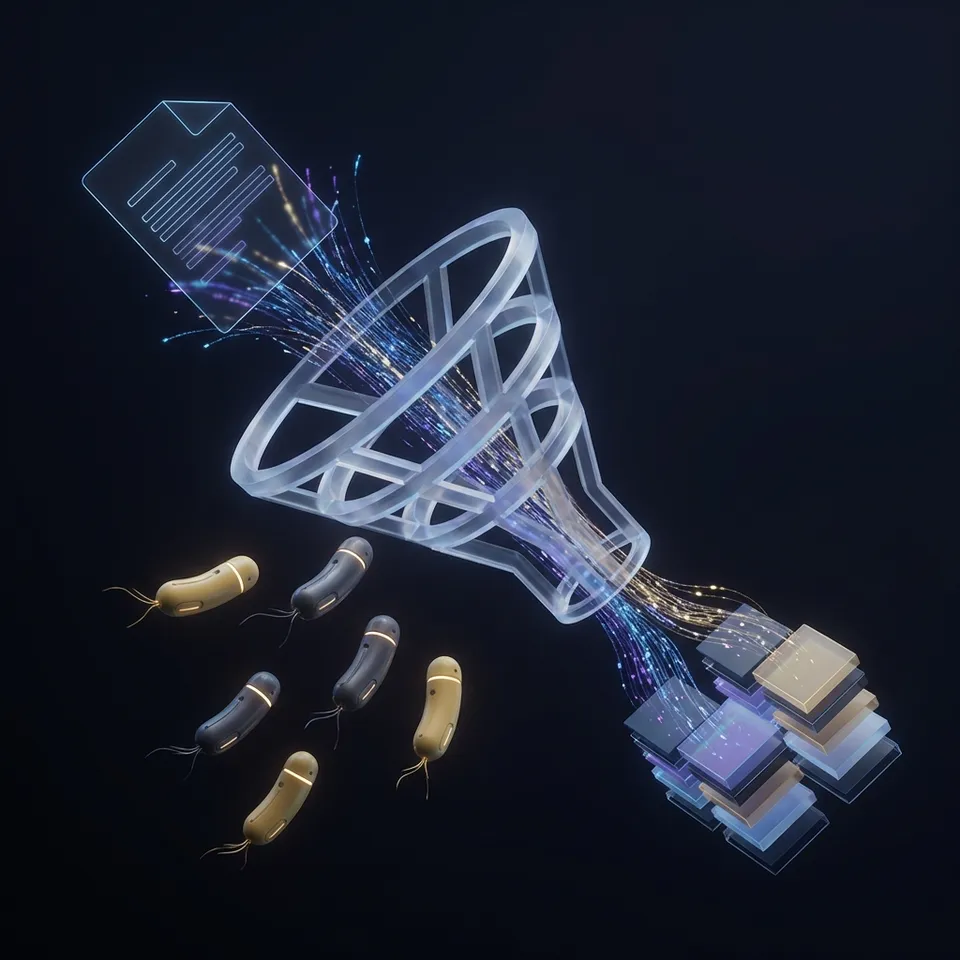

AI data extraction agents are autonomous systems that identify, extract, and structure data from websites, documents, and databases without predefined templates. This guide covers how they work, the main technologies behind them, and the part most guides skip: how to store, organize, and query your extracted data so it actually becomes useful.

What Are AI Data Extraction Agents?

AI data extraction agents are autonomous programs that locate, read, and structure information from unstructured sources like PDFs, web pages, emails, spreadsheets, and databases. Unlike traditional scrapers that rely on fixed rules and CSS selectors, these agents use machine learning and natural language processing to understand context, adapt to new layouts, and handle messy real-world data. A typical extraction agent combines several capabilities:

- OCR (Optical Character Recognition) to convert scanned documents and images into machine-readable text

- NLP (Natural Language Processing) to identify entities, relationships, and key-value pairs within text

- Layout analysis to understand how information is spatially arranged on a page

- Reasoning to resolve ambiguities, validate outputs, and decide what to do next

The biggest difference between an extraction agent and a simple API call is autonomy. An agent can encounter a document it has never seen before, figure out what data matters, extract it, validate the result, and store it, all without a human writing new rules.

How AI Agents Extract Data: The Four-Stage Pipeline

Every extraction agent follows a variation of the same workflow. Knowing these stages helps you design agents that produce clean, usable outputs.

1. Source Identification

The agent first determines where to find data. This might mean crawling a set of URLs, polling an email inbox for new attachments, watching a folder for uploaded documents, or querying a database. Good agents handle multiple source types and can be triggered by events (a new file upload, a webhook notification) rather than running on a fixed schedule.
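As a rough illustration of the folder-watching case, the sketch below polls a directory and returns only files it has not handed out before. The function name `find_new_documents`, the suffix list, and the in-memory `seen` set are assumptions made for this example; an event-driven agent would react to an upload notification instead of polling.

```python
from pathlib import Path

# Assumed set of file types the agent knows how to process.
SUPPORTED_SUFFIXES = {".pdf", ".png", ".jpg", ".jpeg", ".tiff"}


def find_new_documents(inbox: Path, seen: set[str]) -> list[Path]:
    """Return supported files in `inbox` that have not been returned before."""
    new_files = []
    for path in sorted(inbox.iterdir()):
        is_supported = path.suffix.lower() in SUPPORTED_SUFFIXES
        if path.is_file() and is_supported and path.name not in seen:
            new_files.append(path)
            seen.add(path.name)  # remember it so the next poll skips it
    return new_files
```

In a real deployment the `seen` set would need to live in persistent storage, otherwise a restart causes every document to be processed again.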

2. Extraction

This is where the AI does its core work. The agent parses the source, applies OCR if needed, and uses NLP models to identify relevant fields. For invoices, it might extract vendor name, invoice number, line items, totals, and due dates. For job listings, it might pull title, company, salary range, and requirements. Modern AI extraction achieves higher accuracy than rule-based systems on unstructured documents. The accuracy gap widens further on documents with inconsistent formatting.
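One way to keep extraction output consistent is to coerce whatever the model returns into a typed record. The sketch below does this for the invoice fields mentioned above; the class names, field names, and the shape of the raw dictionary are assumptions for illustration, not a schema defined by any particular tool.

```python
from dataclasses import dataclass, field
from decimal import Decimal


@dataclass
class LineItem:
    description: str
    quantity: int
    unit_price: Decimal


@dataclass
class InvoiceRecord:
    vendor_name: str
    invoice_number: str
    due_date: str  # kept as an ISO 8601 string in this sketch
    total: Decimal
    line_items: list[LineItem] = field(default_factory=list)


def invoice_from_dict(raw: dict) -> InvoiceRecord:
    """Build a typed record from the raw dict returned by an extraction model."""
    items = [
        LineItem(
            description=str(item["description"]),
            quantity=int(item["quantity"]),
            # Go through str() so float inputs do not carry binary rounding noise.
            unit_price=Decimal(str(item["unit_price"])),
        )
        for item in raw.get("line_items", [])
    ]
    return InvoiceRecord(
        vendor_name=str(raw["vendor_name"]).strip(),
        invoice_number=str(raw["invoice_number"]).strip(),
        due_date=str(raw["due_date"]),
        total=Decimal(str(raw["total"])),
        line_items=items,
    )
```

Using `Decimal` instead of `float` for money avoids rounding surprises when totals are compared later.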

3. Validation

Raw extraction output needs checking. Agents validate results by cross-referencing fields (does the line item total match the invoice total?), checking data types (is the date actually a date?), and flagging low-confidence extractions for human review. This step separates real production agents from demos.
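The three checks described here can be sketched in a few lines. The field names, the `validate_invoice` function, and the 0.85 confidence cutoff are assumptions chosen for the example; a real pipeline would tune the threshold per document type.

```python
from datetime import date
from decimal import Decimal, InvalidOperation

CONFIDENCE_THRESHOLD = 0.85  # assumed cutoff, not a recommended value


def validate_invoice(fields: dict, confidence: float) -> list[str]:
    """Return a list of problems; an empty list means the record passed."""
    problems = []

    # Type check: the due date must parse as an ISO 8601 calendar date.
    try:
        date.fromisoformat(str(fields.get("due_date", "")))
    except ValueError:
        problems.append("due_date is not a valid ISO date")

    # Cross-field check: line items must add up to the stated total.
    try:
        total = Decimal(str(fields["total"]))
        line_sum = sum(
            (
                Decimal(str(item["quantity"])) * Decimal(str(item["unit_price"]))
                for item in fields.get("line_items", [])
            ),
            Decimal("0"),
        )
        if line_sum != total:
            problems.append(f"line items sum to {line_sum}, total is {total}")
    except (KeyError, InvalidOperation):
        problems.append("total or line item amounts are missing or non-numeric")

    # Confidence check: uncertain extractions go to a human reviewer.
    if confidence < CONFIDENCE_THRESHOLD:
        problems.append("confidence below threshold")

    return problems
```

Any record with a non-empty problem list would be routed to review rather than stored as trusted output.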

4. Storage and Organization

The extracted data needs to go somewhere useful. Most tutorials gloss over this stage, but it determines whether your extraction pipeline delivers real value. We cover it in detail below.

Why Storage Is the Missing Piece

Most guides on AI data extraction focus on the extraction logic: which models to use, how to fine-tune them, how to handle edge cases. But extraction without organized storage is just data generation. You end up with JSON files scattered across local directories, results dumped into flat CSVs, or data sitting in ephemeral memory that disappears when the agent stops. This creates real problems:

- No audit trail. You cannot verify what was extracted, when, or from which source document.

- No collaboration. Other team members (or other agents) cannot access or build on the extracted data.

- No queryability. Finding specific results means writing custom search scripts every time.

- No durability. If the agent crashes or its container restarts, extracted data may be lost.

Production extraction agents need persistent, organized storage with access controls, version history, and search. They also need a way to hand off results to humans for review, particularly when dealing with high-stakes data like financial records or legal documents.
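To make the audit-trail point concrete, here is a minimal sketch that wraps extracted fields with provenance before they are stored. The record layout and the `build_audit_record` name are assumptions for illustration; the idea is only that each result carries a hash of its source, a timestamp, and the version of the model that produced it.

```python
import hashlib
import json
from datetime import datetime, timezone


def build_audit_record(source_name: str, source_bytes: bytes,
                       extracted: dict, model_version: str) -> str:
    """Wrap extracted fields with provenance so each result is traceable."""
    record = {
        "source_name": source_name,
        # The hash ties the result to the exact bytes that were processed.
        "source_sha256": hashlib.sha256(source_bytes).hexdigest(),
        "extracted_at": datetime.now(timezone.utc).isoformat(),
        "model_version": model_version,
        "fields": extracted,
    }
    return json.dumps(record, indent=2, sort_keys=True)
```

Storing this JSON next to the source document answers the three audit questions above: what was extracted, when, and from which file.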

Give Your AI Agents Persistent Storage

Fastio gives teams shared workspaces, MCP tools, and searchable file context for running AI data extraction agent workflows with reliable handoffs between agents and humans.

Building an Extraction Agent with Persistent Storage

Here is a working architecture for an extraction agent that stores results in organized, queryable cloud storage using Fastio's MCP server.

Step 1: Set up the storage layer

Your agent creates a workspace for each extraction project. With Fastio's 251 MCP tools, the agent can create workspaces, folders, and files programmatically:

    # Agent creates a workspace for invoice extraction
    workspace = create_workspace(
        name="Q1 Invoice Extraction",
        intelligence=True,  # Enable RAG indexing
    )

    # Organize by source
    create_folder(workspace, "raw-documents")
    create_folder(workspace, "extracted-data")
    create_folder(workspace, "flagged-for-review")

Setting intelligence=True activates Intelligence Mode, which auto-indexes every file uploaded to the workspace. You can then query your extracted data using natural language without building a separate search system.

Step 2: Process and store results

After extraction, the agent uploads both the source document and the structured output:

    import json

    # Upload source PDF
    upload_file(workspace, "raw-documents", invoice_pdf)

    # Upload extracted JSON (assumes the upload call returns a handle to the stored file)
    result = upload_text_file(
        workspace,
        "extracted-data",
        f"{invoice_id}.json",
        json.dumps(extracted_fields),
    )

    # Flag low-confidence results
    if confidence_score < threshold:
        move_file(result, "flagged-for-review")

Step 3: Enable human review

Create a branded share so team members can review flagged extractions without needing developer access:

    share = create_share(
        workspace,
        type="exchange",  # Bidirectional
        name="Invoice Review Queue",
    )

The exchange share lets reviewers download flagged documents, check the extracted data, and upload corrections. The agent picks up corrections automatically via webhooks and incorporates them into future runs.

Step 4: Query extracted data

With Intelligence Mode enabled, you can ask questions across all your extracted data using built-in RAG:

"What was the total amount invoiced by Acme Corp in January?"

"Which invoices have extended payment terms?"

"Show me all extraction errors from last week."

No need to build custom database queries or search infrastructure. The RAG system returns answers with citations pointing to the specific source documents.

Key Technologies Behind Extraction Agents

Your extraction agent's accuracy depends on the models and frameworks you pick. Here are the main technology categories.

Large Language Models (LLMs)

LLMs such as the GPT models, Claude, and Gemini are good at understanding document context and extracting structured data from unstructured text. They handle ambiguous formatting, abbreviations, and implied information better than traditional NLP pipelines. The tradeoff is cost and latency: LLM calls are slower and more expensive per document than specialized models.

Best for: Complex documents with varied layouts, multi-language extraction, cases requiring reasoning.
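LLM replies often wrap the requested JSON in explanatory text, so an agent usually needs a defensive parsing step before validation. The sketch below shows one simple approach; `parse_model_output` and the required field list are assumptions for this example and are not tied to any provider's SDK.

```python
import json

# Assumed minimum schema for an invoice extraction.
REQUIRED_FIELDS = ("vendor_name", "invoice_number", "total")


def parse_model_output(text: str) -> dict:
    """Pull the JSON object out of a model reply and check required keys."""
    # Take everything from the first "{" to the last "}" in the reply.
    start = text.find("{")
    end = text.rfind("}")
    if start == -1 or end <= start:
        raise ValueError("no JSON object found in model output")
    data = json.loads(text[start:end + 1])  # raises ValueError on bad JSON
    missing = [name for name in REQUIRED_FIELDS if name not in data]
    if missing:
        raise ValueError(f"missing fields: {', '.join(missing)}")
    return data
```

Where the provider offers a structured-output or JSON mode, that is generally a more robust option than slicing the reply text.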

Specialized Document AI

Services like Google Cloud Document AI, Amazon Textract, and Azure AI Document Intelligence are trained specifically for document parsing. They combine OCR with layout understanding and offer pre-built extractors for common document types (invoices, receipts, tax forms).

Best for: High-volume processing of standard document types where cost per document matters.

Agentic Frameworks

Frameworks like LangChain, LlamaIndex, and CrewAI give you the scaffolding for building multi-step extraction agents. They handle tool calling, memory management, and workflow orchestration so you can focus on the extraction logic instead of the plumbing.

Best for: Building custom agents that need to chain multiple tools (extract, validate, store, notify).

OCR Engines

Tesseract (open source) and commercial alternatives handle the foundational task of converting images to text. Modern OCR engines support dozens of languages and can handle handwriting, though accuracy drops on low-quality scans.

Best for: The first step in any pipeline that processes scanned documents or images.

Common Extraction Use Cases

AI data extraction agents show up across industries wherever teams deal with large volumes of unstructured or semi-structured data.

Invoice and Receipt Processing

Agents extract vendor details, line items, tax amounts, and payment terms from thousands of invoices per day. Automated invoice processing is much faster than manual data entry and substantially reduces error rates.

Contract Analysis

Legal teams use extraction agents to pull key clauses, dates, party names, and obligations from contracts. The agent stores extracted data alongside the original document so attorneys can verify findings against the source.

Web Data Collection

Agents scrape product prices, job listings, real estate data, or competitive intelligence from websites. Unlike static scrapers, AI agents adapt when site layouts change, reducing maintenance overhead.

Healthcare Records

Extraction agents process medical forms, lab results, and clinical notes to build structured patient records. Validation matters more here than anywhere else, because an extraction error can directly affect patient care.

Financial Document Processing

Banks and financial services use extraction agents on loan applications, tax returns, and compliance documents. High volume plus regulatory requirements make automated extraction with human review the standard approach.

For any of these use cases, the extracted data needs to be stored somewhere accessible, auditable, and searchable. An agent that extracts invoice data but dumps it into a local CSV has done half the job.

Best Practices for Production Extraction Agents

Building a demo extraction agent takes an afternoon. Building one that runs reliably in production takes more care. These practices separate hobby projects from production systems.

Version your outputs

Store each extraction run as a new file version instead of overwriting previous results. Fastio's file versioning tracks every change automatically, so you can compare extractions over time and roll back if a model update introduces regressions.
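If your storage layer does not version files for you, the same idea can be approximated by never reusing a filename. The sketch below is a local-filesystem stand-in under that assumption; `write_new_version` and the `.vNNNN.json` naming scheme are invented for this example, and the function does not guard against two writers racing for the same version number.

```python
import json
from pathlib import Path


def write_new_version(output_dir: Path, document_id: str, fields: dict) -> Path:
    """Write fields to the next unused version file for this document."""
    output_dir.mkdir(parents=True, exist_ok=True)
    # Count existing versions of this document to pick the next number.
    existing = list(output_dir.glob(f"{document_id}.v*.json"))
    next_version = len(existing) + 1
    path = output_dir / f"{document_id}.v{next_version:04d}.json"
    path.write_text(json.dumps(fields, indent=2), encoding="utf-8")
    return path
```

Because earlier files are never touched, comparing two runs is a matter of diffing `v0001` against `v0002`.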

Use file locks for multi-agent systems

If multiple agents process documents from the same source, use file locks to prevent conflicts. One agent should not overwrite another's in-progress work.
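The locking idea can be illustrated with an ordinary lock file on a shared filesystem. This is a generic sketch, not the locking API of any storage platform; `exclusive_lock` is a hypothetical helper, and it assumes all agents can see the same directory.

```python
import os
from contextlib import contextmanager
from pathlib import Path


@contextmanager
def exclusive_lock(target: Path):
    """Hold a lock file next to `target`; raise if another worker holds it."""
    lock_path = target.with_name(target.name + ".lock")
    # O_CREAT | O_EXCL makes creation atomic: it fails if the file exists.
    fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    try:
        os.write(fd, str(os.getpid()).encode())  # record the owner for debugging
        yield lock_path
    finally:
        os.close(fd)
        os.unlink(lock_path)
```

An agent would wrap its processing in `with exclusive_lock(path):` and treat `FileExistsError` as a signal to skip the document or retry later. A crashed agent leaves a stale lock behind, so production systems usually add a timeout or lease on top of this.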

Separate raw data from processed data

Keep source documents in one folder and extracted results in another. This makes it easy to re-run extraction if you upgrade your model, and it preserves the audit trail from source to output.

Build feedback loops

Route low-confidence extractions to human reviewers. Track which corrections come up most often and use that data to improve your extraction prompts or fine-tune your models.
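Tracking which corrections come up most often can start as a simple tally. The sketch below assumes each review produces a pair of dictionaries, the agent's output and the reviewer's corrected version; the `count_corrected_fields` name and that data shape are assumptions for illustration.

```python
from collections import Counter


def count_corrected_fields(pairs: list[tuple[dict, dict]]) -> Counter:
    """Count how often each field differs between agent output and reviewer fix."""
    counts = Counter()
    for extracted, corrected in pairs:
        for name, fixed_value in corrected.items():
            if extracted.get(name) != fixed_value:
                counts[name] += 1
    return counts
```

Calling `.most_common()` on the result lists the fields reviewers fix most, which is a reasonable place to start when revising prompts or collecting fine-tuning examples.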

Monitor accuracy over time

Extraction accuracy drifts as source document formats change. Set up periodic spot checks where you compare agent output against human-verified ground truth. Even a small accuracy drop on high-volume document processing means hundreds of errors for downstream teams.
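A spot check can be reduced to per-field exact-match accuracy over a small human-verified sample. The sketch below assumes predictions and ground truth are parallel lists of dictionaries; `field_accuracy` is a hypothetical helper, and exact match is a deliberately strict metric that may need loosening for free-text fields.

```python
def field_accuracy(predictions: list[dict], ground_truth: list[dict]) -> dict[str, float]:
    """Per-field exact-match accuracy over a spot-check sample."""
    if len(predictions) != len(ground_truth):
        raise ValueError("predictions and ground truth must align one-to-one")
    correct: dict[str, int] = {}
    total: dict[str, int] = {}
    for predicted, truth in zip(predictions, ground_truth):
        for name, expected in truth.items():
            total[name] = total.get(name, 0) + 1
            if predicted.get(name) == expected:
                correct[name] = correct.get(name, 0) + 1
    return {name: correct.get(name, 0) / total[name] for name in total}
```

For a sense of scale, using illustrative numbers: a one-point drop in accuracy across 50,000 extracted fields per day works out to 500 additional errors per day.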

Hand off to stakeholders

Use ownership transfer to give business teams access to extracted data. The agent builds the workspace, organizes results, and then transfers ownership to the team that needs it, while keeping admin access for ongoing maintenance.

Frequently Asked Questions

How do AI agents extract data from documents?

AI agents extract data by combining OCR (to convert images to text), NLP (to understand context and identify entities), and layout analysis (to interpret document structure). The agent reads the document, identifies fields like names, dates, and amounts, validates the results against expected patterns, and stores the structured output. Agents using LLMs can handle documents they have never seen before without custom rules.

What is automated data extraction?

Automated data extraction uses software to pull structured information from unstructured sources like PDFs, emails, web pages, and databases without manual data entry. AI-powered extraction goes beyond pattern matching by using machine learning to understand context, adapt to new formats, and improve over time, making it much faster than manual entry with much lower error rates.

How accurate is AI data extraction?

AI extraction agents achieve higher accuracy than traditional rule-based systems on unstructured documents. Accuracy varies by document type: standardized forms like invoices tend to score higher, while handwritten notes or complex multi-page contracts may score lower. To maintain accuracy, build validation steps into your pipeline and route low-confidence results to human reviewers.

Where should extraction agents store their results?

Production extraction agents need persistent cloud storage with version history, access controls, and search. Storing results in local files or ephemeral memory creates problems with auditability, collaboration, and durability. Cloud platforms with built-in RAG indexing, like Fastio, let you query extracted data using natural language without building a separate database or search system.

Can AI extraction agents work with any document format?

Most AI extraction agents handle PDFs, images (JPEG, PNG, TIFF), Word documents, Excel spreadsheets, emails, and HTML pages. Some also process specialized formats like medical records (HL7/FHIR) or financial data (XBRL). What the agent can handle depends on its OCR engine and NLP models. LLM-based agents tend to be the most flexible since they can reason about document structure instead of relying on format-specific parsers.
