AI Automation Storage API: Persistent Storage for Autonomous Agents

An AI automation storage API provides programmatic access to persistent file storage that autonomous agents can use to read, write, and share data across sessions. Agents with persistent storage complete 3x more complex tasks compared to those relying on ephemeral memory.

What Is an AI Automation Storage API?

An AI automation storage API is a programmatic interface that gives autonomous agents the ability to store, retrieve, and manage files in cloud storage. Unlike traditional cloud storage APIs designed for human users, automation storage APIs are built specifically for AI agents that need to persist data between sessions, organize outputs, and collaborate with humans.

Core capabilities include:

- File CRUD operations (create, read, update, delete)

- Workspace and folder management

- Permission controls and sharing

- Metadata extraction and search

- Webhooks for reactive workflows

The key difference between agent storage APIs and human-focused storage: agents need structured, programmatic access patterns, not drag-and-drop UIs. They need to automate file workflows, not manually organize folders.

Why AI Agents Need Persistent Storage

Without persistent storage, AI agents lose context between sessions. Every time an agent restarts, it starts from scratch, unable to reference previous outputs, remember decisions, or build on past work.

The impact is measurable. Agents with persistent storage complete 3x more complex tasks because they can:

- Resume interrupted workflows without losing progress

- Reference past decisions and outputs

- Build up knowledge bases over weeks and months

- Share results with humans and other agents

Think of it like the difference between working in a notebook (persistent) versus writing on a whiteboard that gets erased every night (ephemeral). For simple tasks, ephemeral memory works. For complex, multi-step automation, you need persistence.
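To make the notebook analogy concrete, here is a minimal sketch of an agent that checkpoints its progress to a file and resumes from it in a later session. The file name, state layout, and `run_session` helper are illustrative assumptions, and a local temp directory stands in for remote storage.

```python
import json
import tempfile
from pathlib import Path


def load_checkpoint(path: Path) -> dict:
    """Return saved state, or a fresh state if no checkpoint exists yet."""
    if path.exists():
        return json.loads(path.read_text())
    return {"next_step": 0, "results": []}


def save_checkpoint(path: Path, state: dict) -> None:
    """Write state to disk so a later session can pick it up."""
    path.write_text(json.dumps(state))


def run_session(path: Path, steps: list[str], budget: int) -> dict:
    """Run at most `budget` steps, checkpointing after each one."""
    state = load_checkpoint(path)
    for _ in range(budget):
        if state["next_step"] >= len(steps):
            break
        step = steps[state["next_step"]]
        state["results"].append(f"done:{step}")
        state["next_step"] += 1
        save_checkpoint(path, state)
    return state


# Two "sessions" share one checkpoint file; the second resumes at the third step.
steps = ["extract", "transform", "report"]
checkpoint = Path(tempfile.mkdtemp()) / "agent_state.json"
first = run_session(checkpoint, steps, budget=2)
second = run_session(checkpoint, steps, budget=2)
```

The second call does not repeat the first two steps because it reads the checkpoint before doing any work. With ephemeral memory, it would start again from `extract`.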

Common agent workflows that require storage:

Data Pipeline Agents: Extract data from APIs, transform it, generate reports as CSV or PDF files. Storage holds intermediate results and final outputs.

Document Processing Agents: OCR scanned documents, extract structured data, output JSON or spreadsheets. Storage keeps originals and processed files organized.

Content Generation Agents: Create blog posts, marketing copy, or code. Storage versions drafts, stores assets, and maintains a content library.

Research Agents: Scrape websites, summarize findings, compile reports. Storage archives sources and maintains research databases.

In each case, ephemeral memory fails. Agents need file systems that survive restarts, allow retrieval by metadata, and enable collaboration.

How Storage APIs Reduce Agent Development Time

Building file storage into an AI agent from scratch takes weeks. You need to handle file uploads, organize folders, manage permissions, implement search, and maintain security. API-based storage reduces development time by 50% by providing these capabilities out of the box.

Without a storage API, developers must:

- Set up S3 buckets or similar infrastructure

- Write custom file upload/download logic

- Implement chunked uploads for large files

- Build folder hierarchies and permission systems

- Add metadata tagging and search

- Secure API endpoints and manage authentication

With a purpose-built storage API:

- Call /upload with file data

- Organize files into workspaces via API

- Set permissions with a single parameter

- Search files by name, metadata, or content

- Security and auth are handled server-side

The difference is stark. Instead of weeks building infrastructure, developers integrate storage in hours and focus on agent logic. Unified.to's storage API documentation highlights that one unified API enables developers to push and pull file storage data with zero maintenance. This approach eliminates the need to maintain multiple custom integrations across different storage platforms.

Start with an AI automation storage API on Fastio

Fastio gives teams shared workspaces, MCP tools, and searchable file context to run AI automation storage API workflows with reliable agent and human handoffs.

Key Features of Agent-Friendly Storage APIs

Not all storage APIs are built for agents. Generic cloud storage APIs (S3, Google Cloud Storage) require significant integration work and lack agent-specific patterns. Purpose-built agent storage APIs include:

File Operations

- Chunked uploads for files up to 1GB or larger

- Streaming downloads to avoid memory limits

- Bulk operations to upload/download multiple files in parallel

- File versioning to track changes over time

- Locks to prevent concurrent edit conflicts
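As a rough illustration of chunked uploads, the sketch below reads a stream in fixed-size pieces and hands each piece to a callback, so the whole file never sits in memory at once. The chunk size, the `send_chunk` callback, and the checksum step are assumptions for illustration. A real storage API defines its own chunk limits and upload endpoints.

```python
import hashlib
import io
from typing import BinaryIO, Callable, Iterator

CHUNK_SIZE = 4 * 1024 * 1024  # 4 MiB; an assumed value, real APIs set their own


def iter_chunks(stream: BinaryIO, chunk_size: int = CHUNK_SIZE) -> Iterator[tuple[int, bytes]]:
    """Yield (index, chunk) pairs without reading the whole stream into memory."""
    index = 0
    while True:
        chunk = stream.read(chunk_size)
        if not chunk:
            break
        yield index, chunk
        index += 1


def upload_in_chunks(
    stream: BinaryIO,
    send_chunk: Callable[[int, bytes], None],
    chunk_size: int = CHUNK_SIZE,
) -> str:
    """Send each chunk via `send_chunk` and return a SHA-256 of the full payload."""
    digest = hashlib.sha256()
    for index, chunk in iter_chunks(stream, chunk_size):
        send_chunk(index, chunk)
        digest.update(chunk)
    return digest.hexdigest()


# Stand-in for the network: collect chunks in a dict keyed by chunk index.
received: dict[int, bytes] = {}
payload = b"x" * 10_000
checksum = upload_in_chunks(io.BytesIO(payload), received.__setitem__, chunk_size=4096)
reassembled = b"".join(received[i] for i in sorted(received))
```

Indexing the chunks lets the receiving side reassemble them in order even if they arrive out of sequence, and the checksum gives both sides a way to confirm the result.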

Organization

- Workspaces for logical project grouping

- Folders and subfolders via API

- Tagging and metadata for search and categorization

- Permissions at workspace, folder, and file levels

Intelligence

- Built-in RAG to query documents in natural language

- Semantic search to find files by meaning, not just keywords

- Auto-summarization for long documents

- Metadata extraction (file type, size, dimensions, duration)

Collaboration

- Human-agent collaboration by inviting humans to agent workspaces

- Ownership transfer to hand off projects from agent to human

- Guest access for external stakeholders

- Activity tracking to audit who did what

Reactive Workflows

- Webhooks to trigger actions when files change

- Real-time notifications via SSE or WebSockets

- File event streams for monitoring uploads, edits, deletes

Developer Experience

- MCP integration with 200+ tools for LLM-native access

- SDKs for Python, Node.js, Go, and other languages

- API documentation designed for agents (llms.txt format)

- Free tier with generous limits for prototyping

MCP Integration: File Storage for LLM Assistants

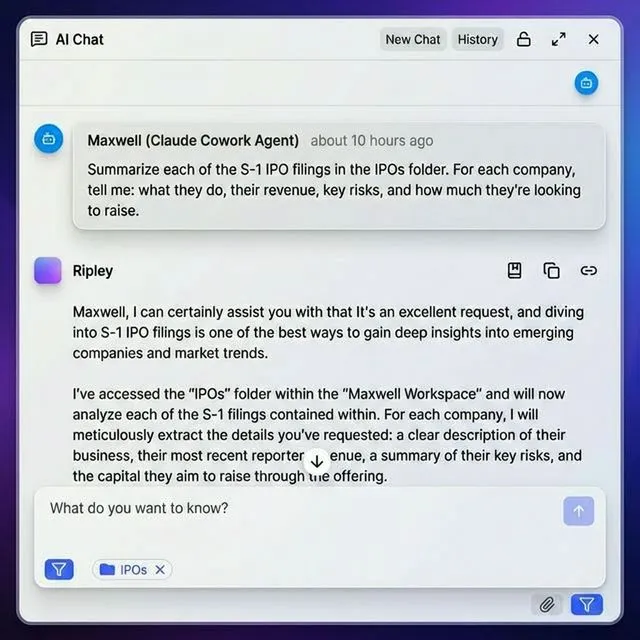

The Model Context Protocol (MCP) is an emerging standard for connecting LLMs to external data sources and tools. Agent storage APIs with MCP support provide zero-friction file access for AI assistants like Claude, GPT-4, and Gemini.

How MCP changes agent storage:

Traditional approach: Build custom API client, handle auth, parse responses, manage state.

MCP approach: Install MCP server, configure credentials once, call tools via natural language.

For example, Fastio's MCP server offers 251 tools via Streamable HTTP and SSE transport. An agent can upload a file, create a workspace, set permissions, and share a link by calling simple tool functions. No custom integration code needed.

MCP tools for file storage typically include:

- upload_file: Upload file data

- download_file: Retrieve file contents

- list_files: Browse workspace files

- create_workspace: Organize projects

- share_file: Generate share links

- search_files: Find files by name or metadata

- query_documents: RAG-based Q&A across files
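Under the hood, an MCP client invokes a tool by sending a JSON-RPC 2.0 request with the method `tools/call`. The sketch below builds such a request for the `upload_file` tool named above. The argument names (`workspace`, `filename`, `content_base64`) are hypothetical, since each server publishes its own input schema for every tool.

```python
import base64
import json
from itertools import count

_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize a JSON-RPC 2.0 `tools/call` request as used by MCP."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# Argument names below are hypothetical; a real server defines its own schema.
message = build_tool_call(
    "upload_file",
    {
        "workspace": "Marketing",
        "filename": "report.txt",
        "content_base64": base64.b64encode(b"Q3 summary").decode("ascii"),
    },
)
decoded = json.loads(message)
```

In practice the LLM host builds and sends these messages itself, so an agent developer rarely writes this by hand. Seeing the shape helps when debugging tool calls.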

According to the Fastio MCP documentation, session state is stored in Durable Objects, allowing agents to maintain context across multiple API calls without re-authenticating.

MCP-compatible storage APIs work across LLM providers. An agent built with Claude can switch to GPT-4 or open-source models without rewriting storage code.

Setting Up MCP for Agent Storage

Installing an MCP server for file storage takes minutes:

- Install the MCP client for your AI assistant (Claude Desktop, Cursor, VS Code)

- Add the MCP server to your config file with credentials

- Restart the assistant to load tools

- Call tools via natural language like "Upload this report to the Marketing workspace"

No SDK installation, no API client code, no auth flow. The MCP server handles it all.

OpenClaw Integration for Natural Language File Management

OpenClaw is a skill framework that lets any LLM manage files through natural language commands. Install via clawhub install dbalve/fast-io for zero-config access to 14 file management tools. Unlike MCP (which requires a server), OpenClaw works with any LLM that supports function calling: Claude, GPT-4, Gemini, LLaMA, local models. No server setup, no configuration files, no environment variables. Agents can say "Create a workspace called ClientPortal and upload contract.pdf" and the OpenClaw skill handles the API calls.

Storage API Architectures: S3 vs Purpose-Built

When choosing a storage API for agents, developers face two paths: use generic object storage (S3, Google Cloud Storage) or adopt a purpose-built agent storage API.

Generic Object Storage (S3, GCS, Azure Blob)

Pros:

- Mature, battle-tested infrastructure

- Low per-GB costs

- Works alongside existing data pipelines

Cons:

- Requires custom integration code for every feature

- No built-in RAG, search, or collaboration

- No MCP support (agents need custom API clients)

- Complex permission models

- No ownership transfer or human-agent collaboration

According to MinIO's AI storage documentation, most AI/ML frameworks are designed to work with the S3 API. MinIO provides lifecycle management to automate data tasks, but it still requires significant integration work to build agent-friendly workflows.

Purpose-Built Agent Storage APIs

Pros:

- Built-in RAG with citations

- MCP-native with 200+ tools

- Ownership transfer (agent builds, human receives)

- Workspaces for project organization

- Webhooks for reactive workflows

- Free tier with no credit card

Cons:

- Smaller ecosystem than S3

- May have file size or storage limits on free tiers

When to use S3: Large-scale ML training datasets (terabytes), integration with existing AWS infrastructure, need for lowest per-GB cost.

When to use agent storage APIs: Agentic workflows, document processing, human-agent collaboration, need for RAG/search, MCP integration.

For most autonomous agent projects, purpose-built APIs cut development time and provide features (like RAG and ownership transfer) that would take months to build on S3.

Implementing Storage in Multi-Agent Systems

Multi-agent systems introduce complexity: agents must coordinate access to shared files, avoid conflicts, and maintain consistency. A good storage API provides file locks, atomic operations, and event streams to orchestrate multi-agent workflows.

File Locks for Concurrent Access

When multiple agents edit the same file, conflicts arise. Agent A reads report.json, makes changes, and uploads the modified version. Meanwhile, Agent B does the same. The last upload wins, and one agent's work is lost.

File locks prevent this:

- Agent A calls /lock on report.json

- Agent B tries to lock the same file and receives "locked by Agent A"

- Agent B waits or works on a different file

- Agent A finishes, calls /unlock, and Agent B proceeds

This pattern ensures only one agent modifies a file at a time.
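The locking sequence can be sketched with an in-memory registry that plays the role of the storage service. The `LockRegistry` class and its return values are assumptions for illustration, not any particular API's behavior.

```python
import threading


class LockRegistry:
    """In-memory stand-in for a storage service's lock and unlock endpoints."""

    def __init__(self) -> None:
        self._owners: dict[str, str] = {}
        self._guard = threading.Lock()  # protects the registry itself

    def lock(self, path: str, agent: str) -> tuple[bool, str]:
        """Try to take the lock; report the current holder on failure."""
        with self._guard:
            holder = self._owners.get(path)
            if holder is not None and holder != agent:
                return False, f"locked by {holder}"
            self._owners[path] = agent
            return True, "ok"

    def unlock(self, path: str, agent: str) -> bool:
        """Release the lock, but only if `agent` is the one holding it."""
        with self._guard:
            if self._owners.get(path) != agent:
                return False
            del self._owners[path]
            return True


registry = LockRegistry()
a_first = registry.lock("report.json", "Agent A")
b_blocked = registry.lock("report.json", "Agent B")
b_cannot_unlock = registry.unlock("report.json", "Agent B")
a_released = registry.unlock("report.json", "Agent A")
b_second = registry.lock("report.json", "Agent B")
```

One design point this sketch leaves out: a production lock usually carries an expiry time, so that an agent that crashes while holding a lock does not block the others indefinitely.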

Webhooks for Agent Coordination

Instead of polling for changes, agents subscribe to webhooks. When a file is uploaded, modified, or deleted, the storage API sends an HTTP POST to a configured endpoint. Agents react immediately without constant polling.

Example workflow:

- Agent A uploads raw_data.csv

- Webhook fires to Agent B's endpoint

- Agent B downloads the file, processes it, uploads processed_data.json

- Webhook fires to Agent C's endpoint

- Agent C generates a PDF report

This creates a reactive pipeline where each agent is triggered by the previous step's completion.
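The following sketch simulates that pipeline in a single process. Uploads to an in-memory `FakeStorage` notify subscribed handlers, which stand in for webhook POSTs to each agent's endpoint. The class, the suffix-based subscription rule, and the plain-text report (in place of a PDF) are all simplifying assumptions.

```python
import json
from collections import defaultdict
from typing import Callable

Handler = Callable[[str, bytes], None]


class FakeStorage:
    """In-memory stand-in: an upload stores the file, then notifies subscribers."""

    def __init__(self) -> None:
        self.files: dict[str, bytes] = {}
        self._subscribers: dict[str, list[Handler]] = defaultdict(list)

    def subscribe(self, suffix: str, handler: Handler) -> None:
        """Register a handler for uploads whose name ends with `suffix`."""
        self._subscribers[suffix].append(handler)

    def upload(self, name: str, data: bytes) -> None:
        self.files[name] = data
        # Copy the items so handlers may trigger further uploads safely.
        for suffix, handlers in list(self._subscribers.items()):
            if name.endswith(suffix):
                for handler in handlers:
                    handler(name, data)


storage = FakeStorage()


def agent_b(name: str, data: bytes) -> None:
    """Turn CSV rows into a JSON array and upload the result."""
    rows = [line.split(",") for line in data.decode().splitlines()]
    storage.upload("processed_data.json", json.dumps(rows).encode())


def agent_c(name: str, data: bytes) -> None:
    """Write a plain-text report (standing in for the PDF step)."""
    rows = json.loads(data)
    storage.upload("report.txt", f"{len(rows)} rows processed".encode())


storage.subscribe(".csv", agent_b)
storage.subscribe(".json", agent_c)
storage.upload("raw_data.csv", b"a,1\nb,2\n")  # Agent A's upload starts the chain
```

A single upload by Agent A is enough to produce all three files, and no agent ever polls. With real webhooks, each handler would be an HTTP endpoint and delivery would be asynchronous.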

Workspace-Based Isolation

Assign each agent (or agent team) to its own workspace. Files are isolated by default, preventing accidental cross-agent interference. Agents only see files in workspaces they're invited to.

Benefits:

- Clear boundaries between agent projects

- Permissions at workspace level

- Easier debugging (fewer files to search)

According to the Google Agent Development Kit memory documentation, platforms like Redis and ADK provide infrastructure for persistent storage, vector search, and caching in one system. However, these still require developers to build workspace logic and file organization themselves.

Security and Compliance for Agent Storage

AI agents often process sensitive data: customer records, financial documents, proprietary code. Storage APIs must provide encryption, audit logs, and granular permissions to meet security requirements.

Encryption

At rest: Files are encrypted on disk using AES-256 or similar standards. Even if storage servers are compromised, files remain unreadable without keys.

In transit: All API calls use HTTPS (TLS 1.2+) to prevent interception, so file uploads and downloads are encrypted between the client and the storage service.

Audit Logs

Every action is logged: who uploaded which file, when it was accessed, permission changes, share link creations. Audit logs enable compliance reporting and security investigations.

Typical audit events:

- File uploaded by Agent X

- File downloaded by User Y

- Permission changed from "private" to "shared"

- Share link created with expiration date
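A minimal sketch of such a log, assuming an append-only list of JSON lines with actor, action, and target fields. The field names and the dotted action names are illustrative, not a specific product's schema.

```python
import json
from datetime import datetime, timezone


class AuditLog:
    """Append-only event log; each entry is stored as one JSON line."""

    def __init__(self) -> None:
        self._lines: list[str] = []

    def record(self, actor: str, action: str, target: str, **details) -> None:
        """Append one event with a UTC timestamp."""
        event = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "actor": actor,
            "action": action,
            "target": target,
            "details": details,
        }
        self._lines.append(json.dumps(event, sort_keys=True))

    def query(self, **filters) -> list[dict]:
        """Return events whose top-level fields match every filter."""
        events = (json.loads(line) for line in self._lines)
        return [e for e in events if all(e.get(k) == v for k, v in filters.items())]


log = AuditLog()
log.record("Agent X", "file.uploaded", "contract.pdf")
log.record("User Y", "file.downloaded", "contract.pdf")
log.record("Agent X", "permission.changed", "contract.pdf", old="private", new="shared")
by_agent_x = log.query(actor="Agent X")
```

Because entries are only ever appended, the log preserves the order of events, which is what a compliance report or security investigation typically needs.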

Granular Permissions

Control access at multiple levels: organization, workspace, folder, file. Assign roles (admin, editor, viewer) to agents and humans.

Example permission model:

- Organization: Agent A is admin (full control)

- Workspace: Agent B is editor (can upload/edit)

- Folder: Agent C is viewer (read-only)

- File: User D has no access (file is private to Agent A)
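One way to express a model like this is to attach role grants to paths and resolve access by walking from the file up to the organization. The sketch below assumes a "nearest grant wins" rule and hypothetical path names (`acme/reports/q3`). Real services may resolve inheritance differently.

```python
from typing import Optional

ROLE_ACTIONS = {
    "admin": {"read", "write", "manage"},
    "editor": {"read", "write"},
    "viewer": {"read"},
}

# Grants keyed by (resource path, principal). Paths run org/workspace/folder/file.
GRANTS = {
    ("acme", "Agent A"): "admin",
    ("acme/reports", "Agent B"): "editor",
    ("acme/reports/q3", "Agent C"): "viewer",
}


def effective_role(path: str, principal: str) -> Optional[str]:
    """Walk from the resource up to the organization; the nearest grant wins."""
    parts = path.split("/")
    while parts:
        role = GRANTS.get(("/".join(parts), principal))
        if role is not None:
            return role
        parts.pop()
    return None


def is_allowed(path: str, principal: str, action: str) -> bool:
    """Check whether the principal's effective role permits the action."""
    role = effective_role(path, principal)
    return role is not None and action in ROLE_ACTIONS[role]


target = "acme/reports/q3/summary.pdf"
```

With these grants, `is_allowed(target, "Agent C", "read")` is true but `is_allowed(target, "Agent C", "write")` is false, and a principal with no grant anywhere on the path, such as User D, is denied by default.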

SSO and MFA

For human users collaborating with agents, storage APIs should support single sign-on (Okta, Azure AD, Google) and multi-factor authentication to prevent unauthorized access.

Cost Models for Agent Storage APIs

Pricing varies widely across storage APIs. Traditional cloud storage charges per GB stored and per GB transferred. Agent storage APIs may use usage-based credits or hybrid models.

Per-GB Pricing (S3, GCS, Azure)

- Storage: $0.02-$0.03 per GB per month

- Bandwidth: $0.08-$0.12 per GB transferred

- Operations: Per-request charges for uploads/downloads

Pros: Predictable costs for static datasets.

Cons: Unpredictable costs for active agents uploading/downloading frequently. No included features (RAG, MCP, webhooks).

Credit-Based Pricing

Some agent storage APIs use credits that cover storage, bandwidth, and AI features.

Example (Fastio):

- 100 credits per GB stored per month

- 212 credits per GB transferred

- 1 credit per 100 AI tokens (for RAG queries)

- 10 credits per document page ingested

Free tier: 5,000 credits per month, 50GB storage, no credit card.

Pros: Single currency for all operations. Free tier sufficient for prototyping.

Cons: Requires credit math to estimate costs.
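The credit math can be worked through in a few lines. The sketch below uses the example rates listed above. The usage figures are made up for illustration, and the assumption that each rate scales linearly is mine.

```python
from dataclasses import dataclass

# Rates taken from the Fastio example above.
CREDITS_PER_GB_STORED = 100
CREDITS_PER_GB_TRANSFERRED = 212
CREDITS_PER_100_AI_TOKENS = 1
CREDITS_PER_PAGE_INGESTED = 10
FREE_TIER_CREDITS = 5_000


@dataclass
class MonthlyUsage:
    gb_stored: float
    gb_transferred: float
    ai_tokens: int
    pages_ingested: int


def monthly_credits(usage: MonthlyUsage) -> float:
    """Sum the credits for storage, transfer, AI tokens, and ingestion."""
    return (
        usage.gb_stored * CREDITS_PER_GB_STORED
        + usage.gb_transferred * CREDITS_PER_GB_TRANSFERRED
        + usage.ai_tokens / 100 * CREDITS_PER_100_AI_TOKENS
        + usage.pages_ingested * CREDITS_PER_PAGE_INGESTED
    )


# Hypothetical month: 10 GB stored, 5 GB transferred, 50,000 tokens, 20 pages.
usage = MonthlyUsage(gb_stored=10, gb_transferred=5, ai_tokens=50_000, pages_ingested=20)
total = monthly_credits(usage)  # 1000 + 1060 + 500 + 200
fits_free_tier = total <= FREE_TIER_CREDITS
```

This hypothetical month comes to 2,760 credits, within the 5,000-credit free tier. If the same storage rate applies on the free tier, filling all 50 GB would by itself account for 5,000 credits, so it is worth running the numbers for your own workload.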

Which Model Is Cheaper?

For agents with moderate storage (under 50GB) and frequent file access, credit-based APIs with free tiers are cheaper. For large-scale data storage (terabytes) with infrequent access, per-GB pricing wins.

Worth noting: Agent storage APIs bundle features (RAG, MCP, webhooks) that would cost thousands of dollars to build on S3. The per-GB cost is higher, but total cost of ownership is lower.

Getting Started with an Agent Storage API

Ready to add persistent storage to your AI agent? Follow these steps to integrate a storage API in under an hour.

Step 1: Choose an API

Evaluate based on:

- Free tier limits (storage, bandwidth, credits)

- MCP support (if using LLM assistants)

- RAG and search capabilities

- SDK availability for your language (Python, Node.js, etc.)

- Ownership transfer (if building for clients)

Step 2: Sign Up and Get API Credentials

Most agent storage APIs offer agent-specific signup flows, so the agent itself can register for an account programmatically with no human intervention. For Fastio, agents sign up like human users and receive their own 50GB free tier. No credit card, no trial period, no expiration.

Step 3: Install SDK or MCP Server

Option A: SDK

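pip install fastio-sdk

from fastio import FastIO

client = FastIO(api_key="your_key")  # authenticate with your API key

workspace = client.create_workspace("AutomationProject")

# Read the report as bytes before uploading
with open("report.pdf", "rb") as f:
    file_data = f.read()

client.upload_file(workspace.id, "report.pdf", file_data)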

Option B: MCP

Add the server to your MCP config, restart your assistant, and call tools:

"Upload report.pdf to the AutomationProject workspace"

Step 4: Build Agent Workflows

Start simple: upload a file, list files, download a file. Then add:

- Folder organization

- Search and RAG queries

- Webhooks for reactive workflows

- Ownership transfer to humans
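Before wiring up a real API, the upload, list, and download loop can be prototyped against a local directory. The `LocalWorkspace` class below is a hypothetical stand-in, not any vendor's SDK. Swapping it for a real client later should leave the agent logic largely unchanged.

```python
import tempfile
from pathlib import Path


class LocalWorkspace:
    """Directory-backed stand-in for a remote workspace."""

    def __init__(self, root: Path, name: str) -> None:
        self.root = root / name
        self.root.mkdir(parents=True, exist_ok=True)

    def upload(self, relative_path: str, data: bytes) -> None:
        """Store bytes under a path, creating folders on demand."""
        target = self.root / relative_path
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_bytes(data)

    def list_files(self) -> list[str]:
        """Return all file paths relative to the workspace, sorted."""
        return sorted(
            p.relative_to(self.root).as_posix()
            for p in self.root.rglob("*")
            if p.is_file()
        )

    def download(self, relative_path: str) -> bytes:
        """Read a stored file back as bytes."""
        return (self.root / relative_path).read_bytes()


workspace = LocalWorkspace(Path(tempfile.mkdtemp()), "AutomationProject")
workspace.upload("reports/q3.txt", b"draft")
workspace.upload("notes.txt", b"todo")
listing = workspace.list_files()
content = workspace.download("reports/q3.txt")
```

Keeping the interface to three small methods makes it easy to add folder organization first and then layer search or webhooks on top.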

Step 5: Monitor and Scale

Use audit logs and activity tracking to monitor agent behavior. If you hit free tier limits, upgrade to a paid plan or optimize agent workflows to reduce bandwidth usage. Most developers integrate storage in the first session and have working automation within a day.

Real-World Agent Storage Use Cases

Here's how teams use storage APIs to power autonomous agent workflows:

Document Processing Pipeline

Scenario: Legal team receives hundreds of contracts per week. An agent extracts key terms, generates summaries, and flags risks.

Storage role:

- Contracts uploaded to "ContractsInbox" workspace

- Agent processes each file, extracts structured data as JSON

- Summaries stored in "ProcessedContracts" workspace

- Ownership transferred to legal team for review

Outcome: 90% reduction in manual contract review time.

Content Generation System

Scenario: Marketing team needs 50 blog posts per month. An agent researches topics, drafts posts, generates images.

Storage role:

- Agent stores research notes in "Research" workspace

- Drafts saved to "Drafts" workspace with versioning

- Final posts transferred to "Published" workspace

- Humans review and approve via branded portal

Outcome: 10x increase in content output with same team size.

Data Analysis Agent

Scenario: Sales team uploads weekly reports. An agent analyzes trends, generates charts, compiles insights.

Storage role:

- Raw CSVs uploaded by humans to "SalesData" workspace

- Agent downloads files, processes data, generates charts

- PDF reports stored in "Reports" workspace

- Webhook notifies team when new report is ready

Outcome: Real-time insights without manual data work.

Multi-Agent Research System

Scenario: Three agents collaborate on market research. Agent A scrapes data, Agent B analyzes, Agent C writes reports.

Storage role:

- Shared "MarketResearch" workspace

- Agent A uploads raw data files

- Webhook triggers Agent B to download and analyze

- Agent B uploads analysis, triggers Agent C

- Agent C generates final report, transfers to human

Outcome: Complex research completed 5x faster than manual process.

Frequently Asked Questions

How do AI agents store data?

AI agents store data using cloud storage APIs that provide programmatic access to file systems. Instead of relying on ephemeral memory that resets between sessions, agents upload files to workspaces, organize them into folders, and retrieve them later via API calls. Purpose-built agent storage APIs offer features like chunked uploads, metadata tagging, semantic search, and built-in RAG to make file management easier for autonomous systems.

What storage do AI agents need?

AI agents need persistent, organized storage that survives restarts and supports collaboration. Key requirements include file CRUD operations (create, read, update, delete), workspace management for project organization, permission controls, metadata extraction, search capabilities, and webhooks for reactive workflows. Agents processing documents also benefit from built-in RAG to query files in natural language and ownership transfer to hand off projects to humans.

Can AI agents access cloud storage?

Yes, AI agents can access cloud storage through APIs. Generic object storage like S3 requires custom integration code, while purpose-built agent storage APIs provide MCP servers and SDKs for zero-friction access. Agents can upload files, create workspaces, set permissions, and share links programmatically. Some platforms like Fastio allow agents to sign up for their own accounts with 50GB free storage, treating agents as first-class citizens rather than API-only users.

What is the difference between agent storage APIs and human cloud storage?

Agent storage APIs are designed for programmatic access patterns, not drag-and-drop UIs. They provide features like chunked uploads for large files, file locks to prevent concurrent conflicts, webhooks for reactive workflows, and MCP integration for LLM-native access. Human cloud storage focuses on visual organization, collaboration through comments and sharing, and manual file management. Agent APIs bundle developer-friendly features like SDKs, llms.txt documentation, and free tiers for prototyping.

Do I need a separate storage API for each agent?

No, multiple agents can share the same storage API account by using workspaces for isolation. Each agent (or agent team) gets its own workspace with separate permissions. Files are isolated by default, preventing accidental cross-agent interference. Agents only see files in workspaces they're invited to. This approach is more cost-effective than separate accounts and simplifies human oversight, as all agent activity appears in a single organization dashboard.

How much does agent storage cost?

Cost varies by provider and pricing model. Generic object storage like S3 charges $0.02-$0.03 per GB stored and $0.08-$0.12 per GB transferred. Purpose-built agent storage APIs often use credit-based pricing that covers storage, bandwidth, and AI features. For example, Fastio offers a free tier with 50GB storage and 5,000 monthly credits (enough for moderate agent activity) with no credit card required. For large-scale storage (terabytes), per-GB pricing is cheaper. For active agents with frequent file access, credit-based free tiers win.

Can agents use Google Drive or Dropbox APIs for storage?

Yes, but generic file sharing APIs like Google Drive and Dropbox are designed for human users, not autonomous agents. They lack agent-specific features like file locks, webhooks, MCP integration, and ownership transfer. Integration requires custom OAuth flows, manual permission management, and workarounds for API rate limits. Purpose-built agent storage APIs reduce development time by 50% by providing these capabilities out of the box with agent-friendly authentication and free tiers for prototyping.

What is MCP integration for storage APIs?

MCP (Model Context Protocol) integration means the storage API provides an MCP server with tools that LLMs can call directly via natural language. Instead of writing custom API client code, developers install the MCP server, configure credentials once, and agents call tools like 'upload_file', 'create_workspace', or 'search_files' through simple function calls. Fastio's MCP server offers 251 tools via Streamable HTTP and SSE transport, enabling zero-friction file access for Claude, GPT-4, Gemini, and other LLMs.

How do agents handle large files with storage APIs?

Storage APIs designed for agents support chunked uploads and streaming downloads to handle large files without memory limits. Instead of loading a 1GB file into RAM, agents upload files in chunks. Downloads stream directly to disk or processing pipelines. Some APIs like Fastio support files up to 1GB on the free tier with chunked upload endpoints. For larger files, paid tiers or custom S3 integrations are necessary.

Can AI agents share files with humans through storage APIs?

Yes, agent storage APIs support human-agent collaboration through branded portals, guest access, and ownership transfer. An agent can create a workspace, upload files, and generate a share link for human review. Some platforms allow agents to transfer ownership of entire organizations and workspaces to humans while retaining admin access. This enables workflows where agents build complete data rooms or client portals and hand them off to teams for final approval and delivery.
