How to Scale AI Agent Pipelines with MLOps

AI Agent MLOps automates machine learning workflows for autonomous agentic teams. Unlike traditional models, agents produce complex artifacts like memory logs, tool outputs, and multi-step plans that require specialized handling. This guide covers the essential MLOps stack for agents, best practices for scaling, and how Fastio's workspaces enable smooth human-agent collaboration.

What Is AI Agent MLOps?

AI Agent MLOps is the discipline of automating the lifecycle of autonomous AI agents. While traditional MLOps focuses on training and deploying static models, Agent MLOps manages dynamic, goal-seeking systems that interact with their environment. These agents don't just predict; they plan, execute tools, and iterate based on feedback.

In a standard machine learning workflow, the pipeline is often linear: data in, training, model out. Agentic workflows are loops. An agent might receive a task, query a database, write code, test it, and then revise its approach. This non-linear behavior requires a new set of operations practices. You need to manage not just model weights, but also prompt versions, tool definitions, and the agent's "memory" or state.

The core goal of Agent MLOps is reliability. Agents are probabilistic software. They can fail in creative ways, get stuck in loops, or hallucinate. A strong MLOps pipeline provides the guardrails (observability, testing, and version control) that keep agents performing consistently in production. It turns experimental bots into reliable digital employees.


Why You Need Specialized MLOps for Agents

Deploying agents without a dedicated MLOps strategy is a recipe for chaos. The complexity of agentic systems introduces challenges that traditional DevOps tools aren't built to handle.

Artifact Explosion

Agents generate a massive volume of artifacts compared to standard software. Every run produces conversation logs, intermediate thought traces (chain-of-thought), tool inputs and outputs, and final deliverables. A single agent task might generate dozens of files. Managing this data requires scalable, persistent storage that is easily accessible to both the agents and their human supervisors.

State Management and Persistence

Unlike a stateless API call, an agent often needs to remember context over long periods. It needs "memory" to recall past decisions or user preferences. Traditional databases can be overkill for simple file-based memory, while ephemeral containers lose data on restart. Agents need a persistent workspace where they can read and write memories as files, ensuring continuity across sessions.
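To make the idea of file-based memory concrete, here is a minimal sketch that keeps each memory as a JSON file. It assumes the workspace is reachable as an ordinary directory; the `FileMemory` class and its method names are hypothetical, not part of any Fastio API.

```python
import json
from pathlib import Path
from typing import Optional


class FileMemory:
    """Stores each memory as a small JSON file under a root directory (hypothetical helper)."""

    def __init__(self, root: Path) -> None:
        self.root = Path(root)
        self.root.mkdir(parents=True, exist_ok=True)

    def remember(self, key: str, value: dict) -> None:
        # Write to a temp file first, then rename, so a crash never leaves a half-written memory.
        target = self.root / f"{key}.json"
        tmp = self.root / f"{key}.json.tmp"
        tmp.write_text(json.dumps(value), encoding="utf-8")
        tmp.replace(target)

    def recall(self, key: str, default: Optional[dict] = None) -> Optional[dict]:
        # A missing file simply means the agent has no memory under this key yet.
        target = self.root / f"{key}.json"
        if not target.exists():
            return default
        return json.loads(target.read_text(encoding="utf-8"))
```

Because the state lives in files rather than in process memory, a new `FileMemory` pointed at the same directory after a restart sees everything the previous session wrote.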

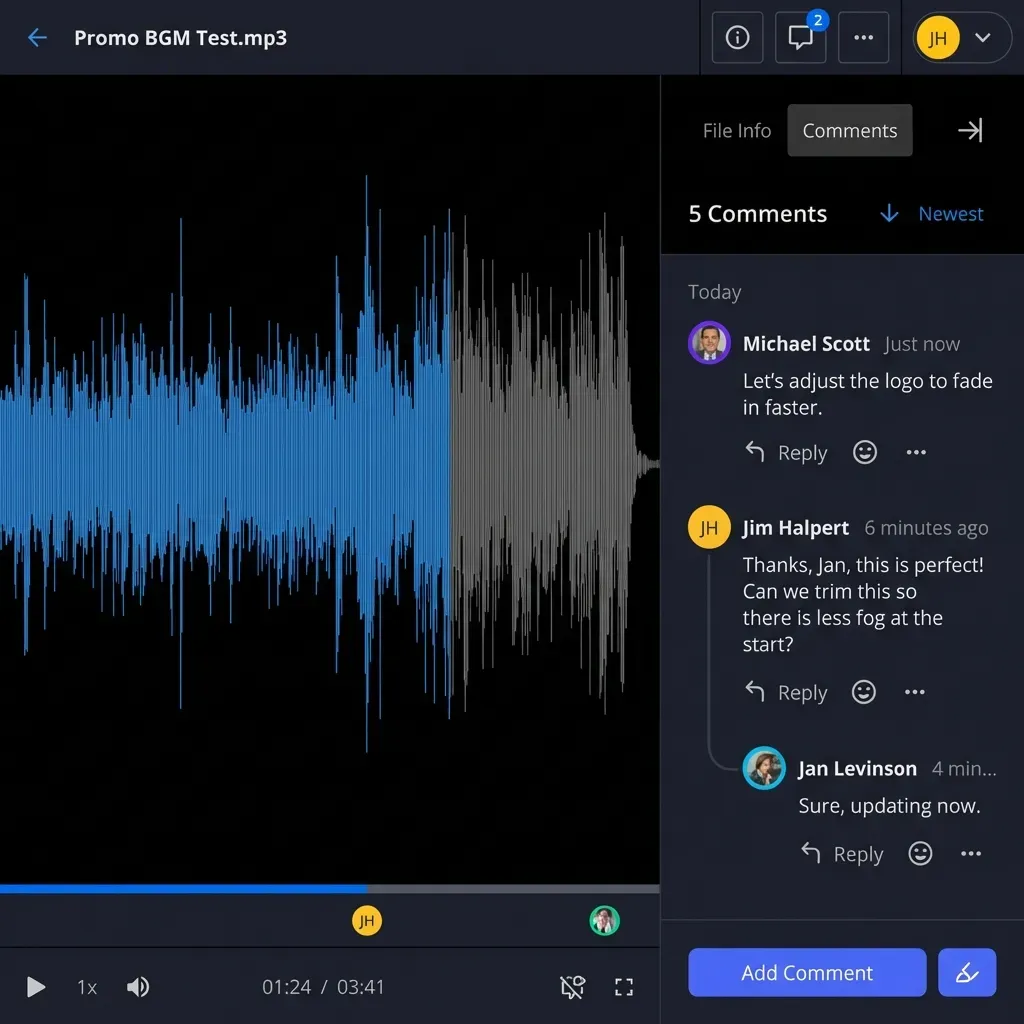

Human-in-the-Loop Collaboration

Agents rarely work in total isolation. They often need human approval before taking sensitive actions, or they need to hand off a completed task to a person. This requires a shared environment where humans and agents have equal access. If an agent writes a report, a human should be able to open, edit, and approve it immediately, without complex data pipeline transfers.

The AI Agent MLOps Stack

To build a scalable agent pipeline, you need a stack that covers orchestration, storage, tooling, and observability. Here are the essential components.

1. Orchestration: The Brain

Orchestration frameworks like LangGraph, CrewAI, or AutoGen manage the control flow of your agents. They define how agents communicate, how tasks are routed, and how conflicts are resolved.

- LangGraph is particularly strong for defining complex, stateful workflows where agents need to loop or branch based on conditions.

- CrewAI excels at role-playing scenarios where you define specific personas (e.g., 'Researcher', 'Writer') that collaborate to achieve a goal.

2. Storage & Memory: The Workspace

Fastio serves as the persistent layer for agent MLOps. It provides a shared file system where agents can store logs, memories, and work products.

- Persistence: Files are stored safely and accessible via standard APIs or the MCP server.

- Intelligence Mode: Fastio's built-in RAG automatically indexes files, allowing agents to query documents semantically without needing a separate vector database.

- File Locks: Critical for multi-agent systems, file locks prevent race conditions when multiple agents try to update the same memory file simultaneously.
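The race condition that file locks prevent can be illustrated with a local stand-in. This sketch does not use Fastio's lock API (its calls are not shown in this guide). It assumes a plain directory and uses an atomically created `.lock` file instead; `file_lock`, `append_memory`, and `LockHeldError` are hypothetical names.

```python
import os
from contextlib import contextmanager
from pathlib import Path


class LockHeldError(RuntimeError):
    """Raised when another agent already holds the lock."""


@contextmanager
def file_lock(target: Path):
    lock_path = Path(str(target) + ".lock")
    try:
        # O_CREAT | O_EXCL fails if the lock file already exists, so acquisition is atomic.
        fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        raise LockHeldError(f"{target} is locked by another agent") from None
    try:
        os.close(fd)
        yield
    finally:
        # Always release the lock, even if the protected write raised.
        lock_path.unlink()


def append_memory(target: Path, line: str) -> None:
    # Only one agent at a time can be inside this block for a given file.
    with file_lock(target):
        with open(target, "a", encoding="utf-8") as fh:
            fh.write(line + "\n")
```

An agent that receives `LockHeldError` can wait and retry rather than overwrite a peer's update. A production version would also need to handle stale locks left behind by crashed agents.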

3. Tooling: The Hands

Agents need tools to interact with the world. The Model Context Protocol (MCP) has emerged as the standard for connecting AI models to external systems. Fastio provides a comprehensive MCP server that gives agents access to the file system, search, and other utilities.

- 19 consolidated tools: Fastio's MCP server offers 19 pre-built tools for agents, ranging from file manipulation to semantic search.

- Streamable HTTP: Tools are delivered efficiently, ensuring low latency for agent actions.

4. Observability: The Eyes

You need to see what your agents are thinking. Tools like Arize Phoenix or LangSmith allow you to trace the execution steps of an agent. Fastio complements this with detailed audit logs of every file change, showing exactly which agent modified which file and when. This is crucial for debugging and compliance.

Beyond tracing, consider integrating an evaluation framework like DeepEval or Ragas. These tools can automatically grade your agent's outputs against a golden dataset, ensuring quality doesn't degrade as you tweak the prompts.

The Role of Ownership Transfer

A unique feature of the Fastio stack is ownership transfer. In a production pipeline, an agent might create a client portal or a project workspace. Once the setup is complete, the agent can programmatically transfer ownership of that workspace to a human user. This enables "setup bots" that provision infrastructure and then hand the keys to a human, a powerful pattern for agency workflows.

Implementing Multi-Agent Pipelines

Setting up a production-ready agent pipeline involves connecting these components into a cohesive workflow. Here is a step-by-step guide to getting started.

Step 1: Create a Shared Workspace

Start by creating a Fastio workspace. This will act as the "office" for your agents. Configure access permissions so that your agents have read/write access to necessary folders, while sensitive archives remain read-only.

Step 2: Install the MCP Server

Connect your agents to Fastio using ClawHub or a direct MCP installation.

clawhub install dbalve/fast-io

This single command gives your agents access to the full suite of file management and search tools.

Step 3: Define Agent Roles and Handoffs

Partition the work. For example, have a "Researcher" agent that searches the web and saves summaries to a /research folder. A "Writer" agent then monitors that folder (using webhooks to detect changes) and drafts content in a /drafts folder. Use Fastio's file locks to ensure the Writer doesn't read a file while the Researcher is still updating it.

Step 4: Enable Intelligence Mode

Turn on Intelligence Mode for your workspace. This activates the background indexing. Now, your agents can use the search tool to find information by meaning, not just by keyword. This effectively gives your agents a "second brain" containing all your project knowledge.

Step 5: Deploy and Monitor

Deploy your agents. Use webhooks to trigger runs based on external events (like a file upload). Monitor the audit logs in Fastio to watch the agents at work. If an agent gets stuck or produces an error, the logs will provide the forensic trail needed to fix the prompt or logic.

Build Scalable Agent Pipelines on Fastio

Get 50 GB of free storage, 5,000 credits per month, and 19 consolidated tools. The complete MLOps storage layer for your autonomous agents, built for Agent MLOps workflows.

Best Practices for Production

Moving from a prototype to a production pipeline requires discipline. Follow these best practices to ensure stability and scalability.

Version Your Agents

Treat agent prompts and configurations like code. Use Git to version control your agent definitions. When you deploy a new version, update the agent's working directory path (e.g., /agents/v1/ vs /agents/v2/) so you can roll back if the new version introduces a regression.
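The versioned-directory pattern can be sketched as a small deployment record that tracks which working directory is active. `AgentDeployment` is a hypothetical helper for illustration. It only computes paths and assumes the version folders themselves are created elsewhere.

```python
from typing import List


class AgentDeployment:
    """Tracks deployed versions and the active working directory (hypothetical helper)."""

    def __init__(self, base: str = "/agents") -> None:
        self.base = base.rstrip("/")
        self.history: List[str] = []

    @property
    def workdir(self) -> str:
        if not self.history:
            raise RuntimeError("nothing has been deployed yet")
        return f"{self.base}/{self.history[-1]}/"

    def deploy(self, version: str) -> str:
        # Each version gets its own directory, so older versions stay untouched.
        self.history.append(version)
        return self.workdir

    def rollback(self) -> str:
        if len(self.history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self.history.pop()
        return self.workdir
```

Because a new version never writes into the previous version's directory, rolling back is just a matter of pointing the agent at the old path again.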

Test with Evals

Unit tests are hard for probabilistic agents. Instead, use "evals": sets of test cases with graded outputs. Run these evals automatically before every deployment. Check whether the agent can correctly retrieve a specific document from the Fastio workspace, or whether it follows the file naming convention you established.
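A minimal eval harness might look like the following sketch. It assumes the agent can be called as a plain function from prompt to output. The `EvalCase` type, the pass threshold, and the `YYYY-MM-DD_slug.md` naming convention are all hypothetical choices made for illustration.

```python
import re
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class EvalCase:
    prompt: str
    check: Callable[[str], bool]  # returns True when the output is acceptable


def follows_naming_convention(filename: str) -> bool:
    # Hypothetical convention: YYYY-MM-DD_slug.md
    return re.fullmatch(r"\d{4}-\d{2}-\d{2}_[a-z0-9-]+\.md", filename) is not None


def run_evals(agent: Callable[[str], str], cases: List[EvalCase]) -> float:
    """Return the fraction of eval cases the agent passes."""
    if not cases:
        raise ValueError("at least one eval case is required")
    passed = sum(1 for case in cases if case.check(agent(case.prompt)))
    return passed / len(cases)


def gate_deployment(agent: Callable[[str], str], cases: List[EvalCase],
                    threshold: float = 0.9) -> bool:
    # Block the deployment when the pass rate falls below the threshold.
    return run_evals(agent, cases) >= threshold
```

Running `gate_deployment` in CI before each release gives a pass/fail signal even though individual agent outputs vary from run to run.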

Isolate Environments

Just like traditional software, agents should have separate environments for development, staging, and production. Use Fastio workspaces to enforce this separation. Develop your agent in a dev workspace with dummy data, move it to staging for integration tests, and finally deploy to prod where it interacts with real user files. Never test experimental prompts on production data.

Implement Least Privilege

Don't give every agent root access. Use Fastio's granular permissions to restrict agents to specific subfolders. A "Logger" agent should only have write access to /logs, while a "Reader" agent should have read-only access to /data. This minimizes the blast radius if an agent is hijacked or malfunctions.
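The folder-scoped permission model can be sketched as a simple policy check. This is an illustration of the least-privilege idea only, not Fastio's permission system; the `POLICY` table and `is_allowed` function are hypothetical.

```python
from pathlib import PurePosixPath

# Hypothetical policy: each agent maps folder roots to the actions allowed there.
POLICY = {
    "logger": {"/logs": {"write"}},
    "reader": {"/data": {"read"}},
}


def is_allowed(agent: str, action: str, path: str) -> bool:
    target = PurePosixPath(path)
    # Reject ".." segments so an agent cannot climb out of its folder.
    if ".." in target.parts:
        return False
    for root, actions in POLICY.get(agent, {}).items():
        root_path = PurePosixPath(root)
        inside = target == root_path or root_path in target.parents
        if inside and action in actions:
            return True
    # Default deny: unknown agents, folders, or actions get no access.
    return False
```

The default-deny return at the end is the important design choice: an agent gains access only through an explicit grant, which keeps the blast radius small.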

Monitor Costs and Usage

Agents can burn through tokens quickly. Fastio's free tier for agents is generous, offering 50 GB of storage and 5,000 credits per month, which is enough for substantial workloads. However, you should still monitor usage patterns. Set up alerts if an agent suddenly starts writing thousands of small files, which could indicate a loop.
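One way to detect a sudden burst of file writes is a sliding-window counter. This is a sketch under the assumption that your pipeline can call a hook on every write, for example from a webhook handler; `WriteBurstDetector` and its thresholds are hypothetical.

```python
from collections import deque


class WriteBurstDetector:
    """Flags an agent that writes more than max_writes files within window_seconds."""

    def __init__(self, max_writes: int, window_seconds: float) -> None:
        self.max_writes = max_writes
        self.window_seconds = window_seconds
        self._times = deque()

    def record(self, timestamp: float) -> bool:
        """Record one file write; return True when the limit is exceeded."""
        self._times.append(timestamp)
        # Drop writes that have fallen out of the time window.
        while self._times and timestamp - self._times[0] > self.window_seconds:
            self._times.popleft()
        return len(self._times) > self.max_writes
```

When `record` returns True, the pipeline can raise an alert or pause the agent before it consumes more credits.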

Handling Failures Gracefully

Agents will fail. Design your pipeline to handle it. If an agent fails to generate a valid output, it should log the error and retry with a backoff strategy. Ensure your downstream agents can handle missing or partial data without crashing. The robustness of your pipeline depends on how well it handles the unpredictable nature of AI.
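A retry-with-backoff wrapper can be sketched as follows. The function name, the default attempt count, and the doubling delay schedule are assumptions chosen for illustration; tune them for your workload.

```python
import logging
import time
from typing import Callable, TypeVar

T = TypeVar("T")
logger = logging.getLogger("agent.retry")


def retry_with_backoff(
    task: Callable[[], T],
    max_attempts: int = 4,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run task, retrying on failure with exponentially growing delays."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(max_attempts):
        try:
            return task()
        except Exception as exc:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            delay = base_delay * (2 ** attempt)  # 1s, 2s, 4s, ...
            logger.warning("attempt %d failed: %s; retrying in %.1fs",
                           attempt + 1, exc, delay)
            sleep(delay)
    raise AssertionError("unreachable")
```

The `sleep` parameter is injectable so the retry logic can be tested without real waiting. In production, adding random jitter to the delay helps avoid many agents retrying at the same instant.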

Troubleshooting Common Issues

Even well-designed pipelines encounter issues. Here are solutions to common problems in Agent MLOps.

Infinite Loops

Agents sometimes get stuck repeating the same action. Use Fastio audit logs to spot repetitive file writes. Implement a "time-to-live" or a maximum step count in your orchestration layer to kill runaway agents.
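A maximum step count combined with repeated-action detection could look like this sketch. It is framework-agnostic and assumes the agent exposes a `next_action(step)` callable that returns `None` when finished; orchestration frameworks typically offer their own step or recursion limits, which are preferable when available.

```python
from typing import Callable, List, Optional


class RunawayAgentError(RuntimeError):
    """Raised when an agent exceeds its step budget or repeats itself."""


def run_agent(
    next_action: Callable[[int], Optional[str]],
    max_steps: int = 20,
    max_repeats: int = 3,
) -> List[str]:
    """Call next_action(step) until it returns None, enforcing loop guards."""
    history: List[str] = []
    repeats = 0
    for step in range(max_steps):
        action = next_action(step)
        if action is None:
            return history  # the agent reported that it is done
        # Count how many times in a row the same action has been chosen.
        repeats = repeats + 1 if history and history[-1] == action else 1
        if repeats > max_repeats:
            raise RunawayAgentError(f"action repeated {repeats} times: {action}")
        history.append(action)
    raise RunawayAgentError(f"exceeded {max_steps} steps without finishing")
```

The two guards catch different failures: the repeat counter stops an agent stuck on a single action, while the step budget stops one that wanders through many distinct actions without converging.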

Hallucinations

If an agent invents facts, check its data source. Is the RAG index up to date? In Fastio, indexing happens automatically, but ensure the source files are accurate. Force the agent to cite its sources by providing specific file paths in its output.

Tool Usage Errors

Agents might try to call tools with incorrect arguments. The Fastio MCP server provides descriptive error messages. Feed these errors back to the agent so it can self-correct, for example: "I see you tried to read a file that doesn't exist. Please check the file list first."
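The feedback loop can be sketched as below. It assumes the agent is represented by a `choose_args` callable that receives the previous error message (or `None` on the first attempt) and returns new tool arguments. Both the callable and the feedback wording are hypothetical.

```python
from typing import Any, Callable, Dict, Optional


def call_tool_with_feedback(
    choose_args: Callable[[Optional[str]], Dict[str, Any]],
    tool: Callable[..., Any],
    max_attempts: int = 3,
) -> Any:
    """Call tool, passing any error message back to choose_args on failure."""
    feedback: Optional[str] = None
    for _ in range(max_attempts):
        args = choose_args(feedback)
        try:
            return tool(**args)
        except Exception as exc:
            # The error text becomes part of the agent's next decision.
            feedback = f"Tool call failed with {type(exc).__name__}: {exc}"
    raise RuntimeError(f"tool still failing after {max_attempts} attempts: {feedback}")
```

Capping the number of attempts matters here: without it, an agent that cannot interpret the error would retry indefinitely.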

Data Drift

Over time, the data in your workspace changes. An agent trained on last year's data might fail today. Use the "last modified" metadata in Fastio to ensure agents are prioritizing the most recent documents.
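Prioritizing recent documents by modification time can be sketched against a local directory. This assumes the workspace files are visible on the local file system; how Fastio exposes "last modified" metadata through its API is not shown here, and `newest_first` is a hypothetical helper.

```python
from pathlib import Path
from typing import List


def newest_first(folder: Path, limit: int = 5) -> List[Path]:
    """Return up to `limit` files from `folder`, most recently modified first."""
    files = [p for p in Path(folder).iterdir() if p.is_file()]
    # st_mtime is the file's last-modified timestamp in seconds since the epoch.
    files.sort(key=lambda p: p.stat().st_mtime, reverse=True)
    return files[:limit]
```

Passing only the top few results to the agent keeps stale documents out of its context unless nothing newer exists.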

Frequently Asked Questions

What is the difference between MLOps and Agent MLOps?

Traditional MLOps focuses on training and deploying static predictive models. Agent MLOps focuses on managing the lifecycle of autonomous, goal-seeking agents, handling their complex state, tool usage, and multi-step execution loops.

How do I handle agent state persistence?

Use a persistent storage layer like Fastio. Agents can write their state (memory, context, logs) to files in a shared workspace. This allows them to pause and resume tasks or share context with other agents.

What are the best tools for agent orchestration?

Popular frameworks include LangGraph, CrewAI, and AutoGen. These tools help define the logic and control flow for your agents. They integrate well with Fastio for the underlying storage and resource management.

Can I use Fastio for agent memory?

Yes. Fastio is ideal for agent memory because it offers persistent file storage and built-in Intelligence Mode. Agents can save memories as text files and then semantically search them later to recall information.

How do I debug agent loops?

Use observability tools to trace the agent's thought process. Check the Fastio audit logs to see if the agent is repeatedly reading or writing the same files. Set step limits in your orchestration code to prevent infinite loops.

Is Fastio free for agents?

Yes, the free agent tier includes 50 GB of storage and 5,000 credits per month. This allows developers to build and test strong agent pipelines without upfront costs or credit card requirements.

What is the Model Context Protocol (MCP)?

MCP is an open standard that enables AI models to connect to external tools and data sources safely. Fastio provides an MCP server with multiple tools, allowing agents to interact with your file system and data directly.
