Implementing Collaborative Prompt Versioning for Shared Agent Environments

Teams working on AI agents need a way to track changes to their instructions. When multiple people edit the same prompt, things break quickly without version control. Collaborative prompt versioning helps you manage these changes, prevent performance regressions, and keep behavior consistent across your whole team. This guide shows how to set up a prompt registry and manage the full agent lifecycle without losing track of what w

What is collaborative prompt versioning?

Collaborative prompt versioning tracks every change you make to an agent's instructions. This lets you see what changed, who changed it, and roll back if a new version performs worse than the old one. In shared workspaces, prompts are the source code for how an agent behaves. If you don't track versions, one small tweak by a developer can break a complex multi-agent workflow without anyone knowing why.

Instead of keeping prompts hidden in your application code, you move them to a central registry. This makes it easy to compare two versions of the same prompt side-by-side to see how the logic evolved. A common LLMOps practice is to make updates atomic: a change goes live only if it passes your validation checks, and otherwise the current version stays in place. Because the prompts live outside the codebase, you can iterate on agent logic without redeploying your entire app every time you want to fix a phrasing issue.
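
As a rough illustration of the atomic-update idea, the sketch below keeps prompts in an in-memory registry and activates a new version only when every validation check passes. The class `PromptRegistry`, its `publish` method, and the two example checks are hypothetical names chosen for this example; they are not a Fastio API or part of any particular library.

```python
from typing import Callable, Dict, List

# A validation check takes the prompt text and returns True if it passes.
Check = Callable[[str], bool]


class PromptRegistry:
    """Hypothetical in-memory registry; a real one would persist to shared storage."""

    def __init__(self, checks: List[Check]) -> None:
        self._checks = checks
        self._versions: Dict[str, Dict[str, str]] = {}  # name -> {version: text}
        self._live: Dict[str, str] = {}                 # name -> live version

    def publish(self, name: str, version: str, text: str) -> bool:
        """Store and activate the version only if every check passes."""
        if not all(check(text) for check in self._checks):
            return False  # nothing is stored and the live version is untouched
        self._versions.setdefault(name, {})[version] = text
        self._live[name] = version
        return True

    def live_text(self, name: str) -> str:
        return self._versions[name][self._live[name]]


# Example checks (assumptions): the prompt is non-empty and keeps a required placeholder.
registry = PromptRegistry(checks=[
    lambda text: bool(text.strip()),
    lambda text: "{customer_name}" in text,
])

registry.publish("customer-support", "1.2.0", "Greet {customer_name} politely.")
rejected = registry.publish("customer-support", "1.2.1", "Greet the user.")
```

Here the second publish drops the placeholder, so it is rejected and the registry keeps serving the earlier text.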

By decoupling prompts from the code, teams can treat them as managed assets. You can test a new "personality" for an agent in a staging environment while the stable version continues to serve users. This visibility is what separates an experimental prototype from a reliable, production-ready system.


Why you need version control for agents

Shared environments are tricky because agents often rely on the same global instructions. If you edit a prompt to make one agent faster or more concise, you might accidentally delete the context another agent needs for a specialized task.

The risks of unversioned changes are real. Industry research shows that a single untracked change to a system prompt can lead to a 40% error rate in production. These errors usually look like hallucinations or ignored constraints, which are hard to debug without a history of what changed. Version control also cuts deployment errors by 40% because it forces a review step before any prompt goes live.

Shared libraries also help keep your agents consistent. When every department uses the same versioned templates for customer support or data tasks, your brand voice stays the same. Without a central registry, you end up with "prompt debt," where dozens of slightly different versions of the same instructions live in different places, making agent behavior unpredictable.

Collaborate on Files with Your Team

Get 50GB of free storage and 19 consolidated tools to build, version, and share your AI agents with a team. No credit card required. Built for collaborative prompt versioning shared agent environments workflows.

How to set up a shared prompt registry

Moving from simple prompting to prompt engineering starts with separating prompts from your code. Don't store instructions in environment variables or hardcoded strings. Use versioned files like YAML or JSON in a shared workspace like Fastio.

1. Use semantic versioning: Label your prompts with versions like customer-support.v1.2.0. Major versions are for total rewrites, minor versions for new context, and patches for small phrasing tweaks.

2. Include metadata: Track the author, the model used (like GPT-4o or a Claude Sonnet model), and specific settings like "Intelligence Mode."

3. Use a staging environment: Test new prompts in a shared workspace against a set of "golden responses" before they go live to ensure no regressions occur.

4. Set up rollbacks: Make sure you can instantly switch back to the last working version if your metrics drop after an update.
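
One possible shape for a versioned prompt file is sketched below, using JSON so that only the Python standard library is needed. The field names, the `PromptRecord` class, the placeholder author, and the `intelligence_mode` settings key are all assumptions made for this example rather than a fixed schema.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import Dict


@dataclass
class PromptRecord:
    name: str
    version: str  # semantic version, e.g. "1.2.0"
    author: str
    model: str
    text: str
    settings: Dict[str, str] = field(default_factory=dict)

    def label(self) -> str:
        """Build a label in the customer-support.v1.2.0 style."""
        return f"{self.name}.v{self.version}"

    def to_json(self) -> str:
        return json.dumps(asdict(self), indent=2, sort_keys=True)

    @classmethod
    def from_json(cls, raw: str) -> "PromptRecord":
        return cls(**json.loads(raw))


record = PromptRecord(
    name="customer-support",
    version="1.2.0",
    author="example-author",  # placeholder value
    model="GPT-4o",
    text="You are a support agent. Answer briefly and politely.",
    settings={"intelligence_mode": "on"},  # hypothetical key for "Intelligence Mode"
)

# Round-trip through JSON, as would happen when the file is saved and reloaded.
restored = PromptRecord.from_json(record.to_json())
```

Keeping the metadata in the same file as the prompt text means a reviewer sees the model and settings change in the same diff as the wording.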

This setup makes collaboration safer. A senior dev can review a prompt update just like a code pull request. This keeps instructions clear and helps you save on token costs by avoiding redundant logic.

Coordinating multiple agents

In shared setups, agents often work in a chain. The output of the first agent becomes the input for the second. Versioning has to account for these dependencies. If you update the first agent, you have to make sure its output format still works for the second.

Using templates with versioned variables is a common fix. You update the template version but keep the variable names the same. This keeps the interface between agents stable even as the logic inside each agent changes.
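
A minimal way to check that a new template version keeps the same variable names is sketched here. It assumes templates use Python `str.format`-style placeholders such as `{audience}`; the helper names and the sample templates are invented for illustration.

```python
from string import Formatter
from typing import Set


def template_variables(template: str) -> Set[str]:
    """Return the named placeholders used in a str.format-style template."""
    return {
        field_name
        for _, field_name, _, _ in Formatter().parse(template)
        if field_name
    }


def interface_unchanged(old_template: str, new_template: str) -> bool:
    """True when both versions expose exactly the same variable names."""
    return template_variables(old_template) == template_variables(new_template)


v1 = "Summarize the ticket below for {audience}.\n\n{ticket_text}"
v2 = "Write a three-sentence summary for {audience}. Ticket:\n{ticket_text}"
v3 = "Write a summary for {reader}. Ticket:\n{ticket_text}"

compatible = interface_unchanged(v1, v2)    # wording changed, variables kept
breaking = not interface_unchanged(v1, v3)  # {audience} was renamed to {reader}
```

A check like this could run in review so that a renamed variable is flagged before it reaches a downstream agent.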

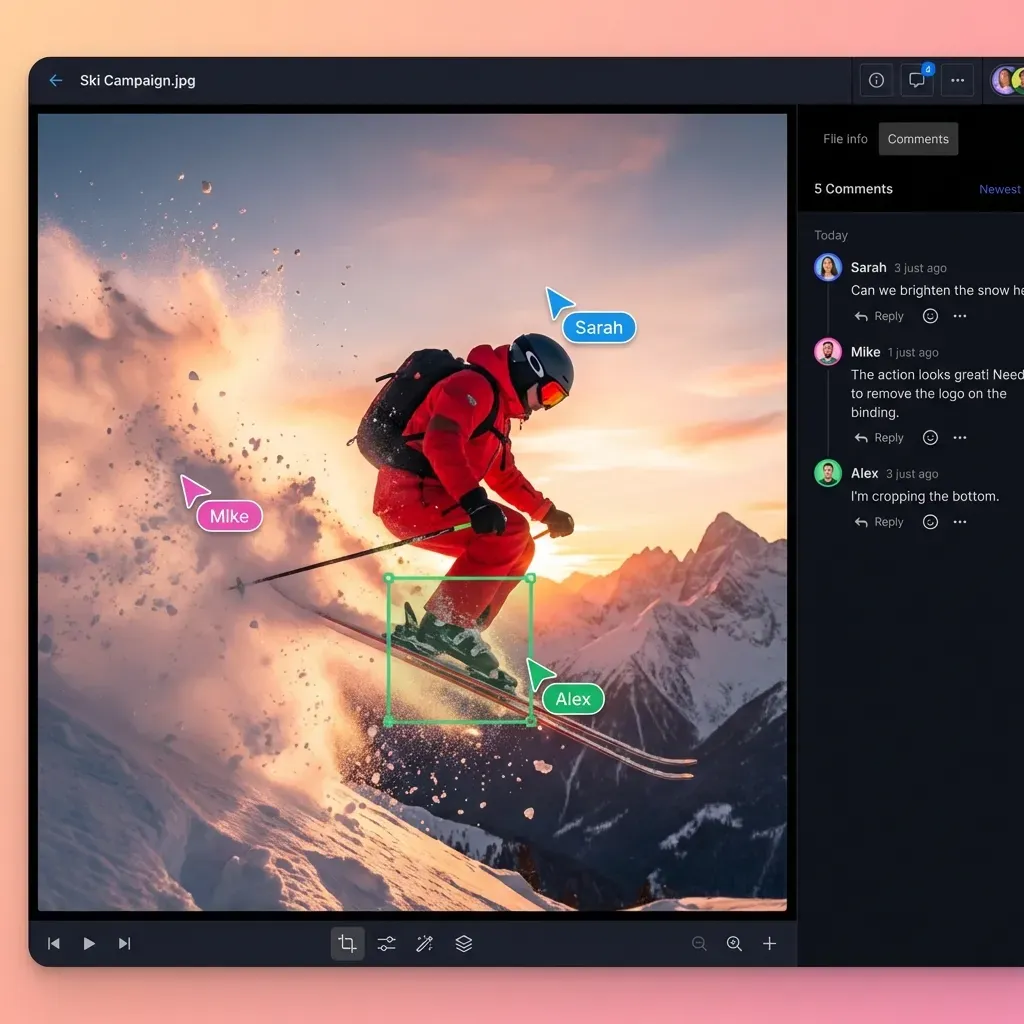

You should also use file locks for shared access. In a workspace, if an agent and a human try to edit the same instruction at the same time, the system needs to handle the conflict. Fastio provides these locks to prevent race conditions, keeping the agent lifecycle smooth as your team grows. This ensures that everyone is working on the most recent version of the truth.
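
To make the locking idea concrete, here is a small sketch based on an exclusive lock file. It is not how Fastio implements its locks, which the text above does not describe. It only shows the general pattern, and `prompt_lock` and `LockHeldError` are hypothetical names.

```python
import os
import tempfile
from contextlib import contextmanager
from typing import Iterator


class LockHeldError(RuntimeError):
    """Raised when another editor already holds the lock."""


@contextmanager
def prompt_lock(prompt_path: str) -> Iterator[None]:
    lock_path = prompt_path + ".lock"
    try:
        # O_EXCL makes creation fail if the lock file already exists.
        fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        raise LockHeldError(f"{prompt_path} is being edited by someone else") from None
    try:
        yield
    finally:
        os.close(fd)
        os.remove(lock_path)


workdir = tempfile.mkdtemp()
prompt_path = os.path.join(workdir, "customer-support.json")

conflict_detected = False
with prompt_lock(prompt_path):
    with open(prompt_path, "w", encoding="utf-8") as handle:
        handle.write('{"version": "1.2.1"}')
    try:
        with prompt_lock(prompt_path):  # a second editor arrives mid-edit
            pass
    except LockHeldError:
        conflict_detected = True
```

The second editor, human or agent, gets an explicit error instead of silently overwriting the first editor's work, and the lock file is removed once the first edit finishes.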

Measuring performance and benchmarks

You can't improve what you don't measure. Use A/B tests to compare versions before you swap them out. Run the new and old versions against the same set of queries to see if the new instructions are actually better or just different.
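
The comparison described above might look like the following sketch. The `fake_agent` function is a deterministic stand-in for a real model call, and the queries, expected phrases, and prompts are made-up sample data, so the resulting scores only demonstrate the mechanics.

```python
from typing import Callable, Dict

# Hypothetical stand-in for a model call: (prompt, query) -> response.
Agent = Callable[[str, str], str]


def fake_agent(prompt: str, query: str) -> str:
    """Deterministic stub so the sketch runs without any model API."""
    if "refund policy" in prompt and "refund" in query:
        return "Refunds are available within 30 days."
    return "Please contact support."


def pass_rate(agent: Agent, prompt: str, golden: Dict[str, str]) -> float:
    """Fraction of queries whose response contains the expected phrase."""
    hits = sum(
        1
        for query, expected in golden.items()
        if expected.lower() in agent(prompt, query).lower()
    )
    return hits / len(golden)


# Made-up golden set: query -> phrase the response should contain.
golden_set = {
    "Can I get a refund?": "within 30 days",
    "How do I reach you?": "contact support",
}

old_prompt = "You are a support agent."
new_prompt = "You are a support agent. Apply the refund policy when asked."

old_score = pass_rate(fake_agent, old_prompt, golden_set)
new_score = pass_rate(fake_agent, new_prompt, golden_set)
promote = new_score >= old_score  # only swap versions if nothing got worse
```

Because both versions face the same queries, a difference in score reflects the prompt change rather than a change in the test set.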

SparkCo AI data shows that teams using shared prompt libraries see a 40% improvement in response consistency. You can track consistency yourself by running automated checks for keywords, sentiment, and formatting rules against each version's responses.
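
A simple version of such automated checks is sketched below for keywords and formatting. Sentiment scoring would need a separate model or library and is left out. The rule set (a JSON object with `answer` and `ticket_id` keys that mentions "refund") and the sample responses are assumptions invented for the example.

```python
import json
from typing import Iterable, List


def has_keywords(response: str, required: Iterable[str]) -> bool:
    """True when every required keyword appears, ignoring case."""
    lowered = response.lower()
    return all(word.lower() in lowered for word in required)


def is_valid_json_with(response: str, keys: Iterable[str]) -> bool:
    """True when the response parses as a JSON object containing all keys."""
    try:
        payload = json.loads(response)
    except json.JSONDecodeError:
        return False
    return isinstance(payload, dict) and all(key in payload for key in keys)


def consistency(responses: List[str]) -> float:
    """Share of responses that pass every rule."""
    passed = [
        r for r in responses
        if is_valid_json_with(r, ["answer", "ticket_id"]) and has_keywords(r, ["refund"])
    ]
    return len(passed) / len(responses)


samples = [
    '{"answer": "Your refund is on its way.", "ticket_id": 101}',
    '{"answer": "Refund approved.", "ticket_id": 102}',
    "Sure! Your refund is approved.",                 # not JSON
    '{"answer": "Please wait.", "ticket_id": 104}',   # missing keyword
]
score = consistency(samples)
```

Running the same rules against each prompt version gives a number you can compare across releases.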

Manual Tracking vs. Collaborative Versioning

Structuring your prompts this way removes the "it worked for me" excuse. Every change is based on data, and every update is a verified step forward for the project.

Future-proofing your prompts

The next step for agent collaboration is self-optimizing prompts. These are systems where an agent looks at its own performance data and drafts its own versioned updates for you to approve. Your versioning system needs an API to support this kind of workflow.

With a workspace that supports multiple MCP tools, agents can manage their own instructions directly. An agent can read its history, see where it failed in the logs, and write a new patch-level draft (for example, the next release in the v2.1 line) for the team to review. This creates a loop of constant improvement with less manual work.
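
The draft-then-approve loop can be sketched as a small review queue. Everything here is hypothetical: the `ReviewQueue` and `Draft` names, the rule that a draft needs a reviewer other than its author, and the sample version numbers are assumptions, not features of a specific platform.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass
class Draft:
    version: str
    text: str
    proposed_by: str
    approved_by: Optional[str] = None


class ReviewQueue:
    """Hypothetical approval gate between agent-written drafts and the live prompt."""

    def __init__(self, live_version: str, live_text: str) -> None:
        self.live_version = live_version
        self.live_text = live_text
        self._drafts: Dict[str, Draft] = {}

    def propose(self, draft: Draft) -> None:
        self._drafts[draft.version] = draft

    def approve(self, version: str, reviewer: str) -> None:
        draft = self._drafts[version]
        if reviewer == draft.proposed_by:
            raise PermissionError("a draft cannot be approved by its own author")
        draft.approved_by = reviewer
        # Only an approved draft replaces the live prompt.
        self.live_version, self.live_text = draft.version, draft.text


queue = ReviewQueue("1.2.0", "Answer support questions politely.")
queue.propose(Draft(
    version="1.2.1",
    text="Answer support questions politely and cite the order number.",
    proposed_by="support-agent",
))

self_approval_blocked = False
try:
    queue.approve("1.2.1", reviewer="support-agent")  # the agent tries to approve itself
except PermissionError:
    self_approval_blocked = True

version_before_review = queue.live_version
queue.approve("1.2.1", reviewer="human-reviewer")
```

The agent can propose as many drafts as it likes, but the live version changes only after someone else signs off.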

When building your environment, pick a platform with built-in RAG. When your prompts are indexed, agents can learn from what worked in previous versions in the registry. This turns your version history into a training set for your future AI workforce.

Frequently Asked Questions

How do you version control prompts for agents?

The best way to version control prompts is by storing them as independent YAML or JSON files in a Git repository or a specialized registry. This lets you apply semantic version labels such as v1.2.0 and track every change through pull requests, making updates easy to test and reverse.

What is the best way to share prompts with a team?

The most effective way is through a central prompt library or shared workspace. This gives everyone a single source of truth. Using a registry with access controls prevents accidental changes while letting the whole team collaborate on improvements and benchmarks.

Can I use Git for prompt versioning?

Yes, Git works well because it has branching and history tracking built in. However, for agents that need to fetch updates in real-time, it is often better to sync your Git repo to an API-accessible registry so agents can access the latest versions without extra local I/O.

Why is semantic versioning important for prompts?

It helps you understand the impact of a change quickly. A patch release (a bump to the last number, as in the v1.0 line) is usually a minor tweak that won't break anything, while a new major version (a bump to the first number, such as a move to v2) means the logic or output format changed enough that you need to re-test your whole agent chain.
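
A short sketch of how a tool could classify a version bump follows. It assumes plain three-part labels such as v1.2.0 with no pre-release suffixes, and the function names are illustrative.

```python
from typing import Tuple


def parse_version(label: str) -> Tuple[int, int, int]:
    """Parse 'v1.2.0' or '1.2.0' into (major, minor, patch)."""
    major, minor, patch = label.lstrip("v").split(".")
    return int(major), int(minor), int(patch)


def change_impact(old: str, new: str) -> str:
    """Classify an upgrade as 'major', 'minor', or 'patch'."""
    old_v, new_v = parse_version(old), parse_version(new)
    if new_v <= old_v:
        raise ValueError(f"{new} is not newer than {old}")
    if new_v[0] != old_v[0]:
        return "major"  # re-test the whole agent chain
    if new_v[1] != old_v[1]:
        return "minor"  # new context was added
    return "patch"      # small phrasing tweak


impacts = [
    change_impact("v1.2.0", "v1.2.1"),
    change_impact("v1.2.1", "v1.3.0"),
    change_impact("v1.3.0", "v2.0.0"),
]
```

A deployment script could use the result to decide how much of the test suite to run before a version goes live.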

How do I roll back a prompt change?

In a versioned system, you just point your agent's config back to the previous version ID in the registry. This instantly restores the old behavior across all your agents, giving you a safety net if new instructions don't work as expected in production.
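
In code, a rollback of this kind is just a pointer change. The sketch below assumes a hypothetical `AgentConfig` object that remembers which version IDs it has pointed at. A real registry would store this history server-side.

```python
from typing import List


class AgentConfig:
    """Hypothetical config that points an agent at a registry version ID."""

    def __init__(self, prompt_name: str, version: str) -> None:
        self.prompt_name = prompt_name
        self._history: List[str] = [version]

    @property
    def active_version(self) -> str:
        return self._history[-1]

    def deploy(self, version: str) -> None:
        self._history.append(version)

    def rollback(self) -> str:
        """Point back at the previously deployed version and return its ID."""
        if len(self._history) < 2:
            raise RuntimeError("no earlier version to roll back to")
        self._history.pop()
        return self.active_version


config = AgentConfig("customer-support", "1.2.0")
config.deploy("1.3.0")           # the new instructions go live
restored = config.rollback()     # metrics dropped, so switch back
```

No prompt text is rewritten during the rollback, so the older version comes back exactly as it was stored.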
