Fast.io API vs Amazon S3: Which is Better for Agent Storage?

While Amazon S3 offers raw object storage, Fast.io provides an intelligent workspace with built-in MCP tools and semantic search out-of-the-box. This comprehensive comparison explores the key differences between the Fast.io API and Amazon S3 for agent storage, evaluating their features, setup time, and suitability for modern AI applications. Discover which backend is right for your next agentic workflow.

The New Era of AI Agent Infrastructure

The rapid adoption of AI agents has fundamentally changed how applications interact with data. Traditional applications relied on databases and object stores designed for human or programmatic access, where the primary operations were simple CRUD (Create, Read, Update, Delete) actions. AI agents, however, require something fundamentally different: storage that understands context, supports semantic search, and integrates seamlessly with language models.

When evaluating agent storage backends, developers frequently find themselves comparing the industry standard, Amazon S3, with specialized solutions like the Fast.io API. S3 stores and serves objects and leaves everything else to the developer, whereas Fast.io adds MCP tools and semantic search on top of the storage layer.

This comparison explores the strengths and weaknesses of both platforms, focusing on the specific needs of AI agents. It covers how each platform handles context retrieval, Model Context Protocol (MCP) integration, data lifecycle management, and the overall developer experience. These differences matter when architecting scalable AI systems that collaborate with human teams.

What is Agent Storage?

Agent storage is specialized infrastructure designed to maintain state, context, and data for autonomous AI systems. Unlike traditional object storage, which simply holds files, agent storage must support rapid retrieval, semantic understanding, and integration with Large Language Models (LLMs).

A robust agent storage solution typically includes several critical capabilities:

- Vector Search Capabilities: Traditional search relies on keyword matching, which is often insufficient for AI agents trying to understand intent and context. Agent storage must support vector embeddings, allowing agents to find relevant information based on meaning rather than just exact keyword matches.

- Built-in Parsing and Chunking: AI agents cannot process massive documents in a single pass. The storage layer needs to automatically extract text from various file formats (such as PDFs, Word documents, and spreadsheets), chunk the text into manageable pieces, and prepare it for embedding.

- Access Control and Security: As agents operate autonomously, they often handle sensitive data. Robust mechanisms to ensure agents only access the data they are authorized to see are paramount. This includes granular permissions and secure API access.

- Standardized Interfaces (MCP): The emergence of the Model Context Protocol (MCP) has created a standardized way for AI models to interact with external tools and data sources. Agent storage must support these protocols to allow agents to interact with the storage natively, without requiring bespoke integration code for every new model.

- State Management: Agents need a place to store their working memory, task progress, and intermediate outputs. The storage layer should provide a reliable mechanism for maintaining this state across sessions.

For developers, choosing the right agent storage backend is critical. It dictates not only the performance and capabilities of the AI agent but also the engineering overhead required to build and maintain the system.
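To make the chunking and vector search capabilities listed above concrete, here is a minimal sketch in Python. It is an illustration under stated assumptions, not how Fast.io or any particular product implements indexing: the `embed` function is a toy bag-of-words stand-in (still keyword-based), where a real system would call an embedding model to capture meaning, and all function names are hypothetical.

```python
import math
from collections import Counter


def chunk_text(text: str, max_words: int = 50, overlap: int = 10) -> list[str]:
    """Split text into overlapping windows of at most max_words words."""
    if overlap >= max_words:
        raise ValueError("overlap must be smaller than max_words")
    words = text.split()
    step = max_words - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # the last window already reached the end of the text
    return chunks


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a word-count vector (assumption)."""
    return Counter(word.strip(".,;:!?").lower() for word in text.split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def search(query: str, chunks: list[str], top_k: int = 1) -> list[str]:
    """Return the top_k chunks ranked by similarity to the query."""
    q = embed(query)
    ranked = sorted(chunks, key=lambda c: cosine(q, embed(c)), reverse=True)
    return ranked[:top_k]
```

Swapping `embed` for a call to a real embedding model is the only change needed to move from keyword overlap to semantic similarity; the chunking and ranking logic stay the same.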

The Traditional Approach: Amazon S3 for Agent Storage

Amazon Simple Storage Service (S3) is the undisputed heavyweight champion of cloud storage. It is highly scalable, incredibly durable, and deeply integrated into the AWS ecosystem. But how does it perform when repurposed as an agent storage solution?

The Strengths of Amazon S3

Amazon S3's dominance is well-earned. It provides a rock-solid foundation for storing virtually any type of data.

- Massive Scalability and Reliability: S3 provides virtually unlimited storage capacity and is designed for 99.999999999% (eleven nines) durability, alongside high availability. This makes it suitable for storing massive datasets, log files, and the raw data used to train or feed AI models. Large objects can be uploaded in parts and streamed on read, so an application does not have to hold an entire file in memory.

- Cost-Effectiveness at Scale: For raw storage, S3 offers highly competitive pricing. With its tiered storage classes (like S3 Standard, S3 Intelligent-Tiering, and S3 Glacier), organizations can optimize costs based on data access patterns.

- Ecosystem Integration: If your infrastructure is already heavily invested in AWS, S3 integrates seamlessly with other services. You can easily connect it to AWS Lambda for event-driven processing, Amazon SageMaker for machine learning workflows, and Amazon Bedrock for generative AI capabilities.
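The tiered storage classes mentioned above are usually applied through a bucket lifecycle configuration. The sketch below builds one as a plain Python dictionary in the shape accepted by boto3's `put_bucket_lifecycle_configuration`; the prefix, rule ID, and day thresholds are illustrative assumptions, and the right values depend on your access patterns.

```python
def lifecycle_config(
    prefix: str,
    rule_id: str = "tier-agent-data",
    ia_after_days: int = 30,
    glacier_after_days: int = 90,
) -> dict:
    """Build an S3 lifecycle configuration that tiers objects under a prefix."""
    if not 0 < ia_after_days < glacier_after_days:
        raise ValueError("expected 0 < ia_after_days < glacier_after_days")
    return {
        "Rules": [
            {
                "ID": rule_id,
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                "Transitions": [
                    # Move to infrequent access first, then to archival storage.
                    {"Days": ia_after_days, "StorageClass": "STANDARD_IA"},
                    {"Days": glacier_after_days, "StorageClass": "GLACIER"},
                ],
            }
        ]
    }


config = lifecycle_config("agent-logs/")
# With boto3 installed and credentials configured, this would be applied as:
#   boto3.client("s3").put_bucket_lifecycle_configuration(
#       Bucket="my-bucket", LifecycleConfiguration=config)
# ("my-bucket" is a placeholder name.)
```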

The Limitations for AI Agents

While S3 is exceptional for object storage, it falls short when evaluated specifically against the needs of modern AI agents.

- Lack of Native Intelligence: S3 is fundamentally a "dumb" storage system. It stores bytes and retrieves bytes. It does not understand the content of the files it holds. If an agent needs to find a specific clause in a lengthy PDF stored in S3, the storage layer provides no assistance.

- Complex RAG Setup: To build a Retrieval-Augmented Generation (RAG) system using S3, developers must architect complex data pipelines. When a file is uploaded to S3, a Lambda function must trigger, download the file, parse the text, split it into chunks, call an embedding API, and then store those embeddings in a separate vector database. This adds significant latency, architectural complexity, and maintenance overhead.

- No Native MCP Support: S3 does not natively support the Model Context Protocol. Developers must write and maintain custom integration layers to translate agent requests into S3 API calls, managing authentication, pagination, and error handling manually.

- Poor Human-Agent Collaboration: S3 provides no user interface for human users to interact with the data alongside the agent. If an agent generates a report and saves it to S3, a human user cannot easily view, comment on, or edit that report without a custom-built frontend application.

The Modern Approach: Fast.io API for Agent Storage

Fast.io takes a fundamentally different approach to storage. Instead of providing raw storage blocks, the Fast.io API is designed to create intelligent workspaces. It is purpose-built to serve as the memory, context, and collaboration layer for AI agents.

The Strengths of Fast.io API

Fast.io eliminates the boilerplate and infrastructure management typically associated with building AI applications, allowing developers to focus on agent behavior.

- Built-in RAG and Semantic Search: Fast.io automatically indexes uploaded files (including text, PDFs, and code) for RAG. When a file is added to a Fast.io workspace, the platform automatically parses the content, generates embeddings, and makes it searchable. Agents can query documents directly using AI, eliminating the need to build and maintain complex indexing pipelines or manage separate vector databases.

- Native MCP Integration: A standout feature is the official Fast.io MCP server, which provides over 251 built-in tools via Streamable HTTP and SSE. This allows MCP-compatible agents (like Claude or custom agents built with frameworks like LangChain) to natively read, write, and search files without requiring extensive custom API integrations.

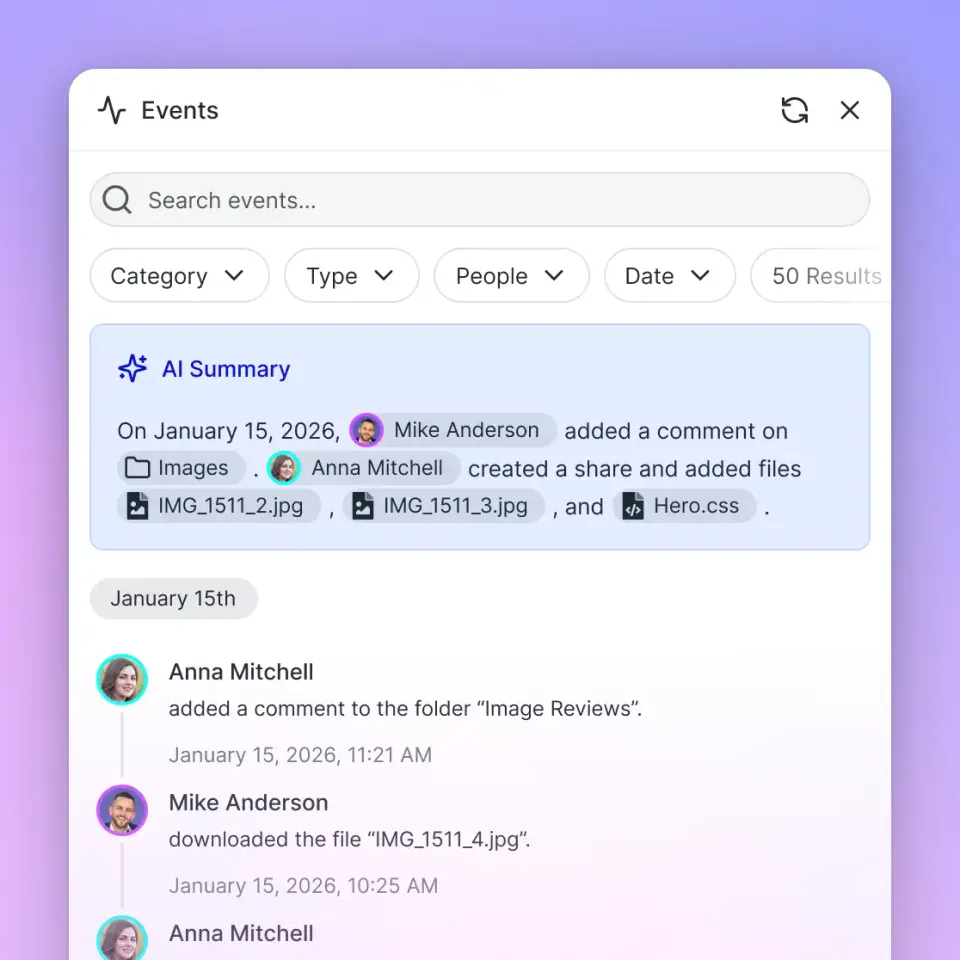

- Human-Agent Collaboration: Fast.io provides dedicated agent accounts and intuitive workspaces. Agents can invite humans into workspaces, transfer ownership of projects, and collaborate in real-time. This bridges the critical gap between AI operations and human oversight, allowing teams to review agent outputs, provide feedback, and co-create within a shared environment.

- URL Import and Webhooks: Fast.io simplifies data ingestion. It can pull files directly from cloud providers like Google Drive, OneDrive, Box, and Dropbox via OAuth, so an agent does not have to download files locally and re-upload them. Webhooks let developers build reactive workflows that trigger agent actions automatically when files change.

- File Locks for Concurrency: In multi-agent systems, data consistency is critical. Fast.io provides file locking mechanisms, allowing agents to acquire and release locks to prevent conflicts when multiple agents are working on the same data simultaneously.
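The acquire-and-release pattern behind file locking can be sketched as follows. This is a generic illustration, not the Fast.io API: `InMemoryLockService` is a hypothetical stand-in for whatever lock endpoints the storage service exposes, and `file_lock` is an assumed helper name.

```python
import time
from contextlib import contextmanager


class InMemoryLockService:
    """Hypothetical stand-in for a storage service's lock endpoints."""

    def __init__(self) -> None:
        self._owners: dict[str, str] = {}

    def acquire(self, path: str, agent_id: str) -> bool:
        """Grant the lock if it is free or already held by this agent."""
        if self._owners.get(path, agent_id) != agent_id:
            return False
        self._owners[path] = agent_id
        return True

    def release(self, path: str, agent_id: str) -> None:
        """Release the lock only if this agent holds it."""
        if self._owners.get(path) == agent_id:
            del self._owners[path]


@contextmanager
def file_lock(service, path: str, agent_id: str, retries: int = 3, delay: float = 0.1):
    """Hold a lock on path for the duration of the with-block."""
    for _ in range(retries):
        if service.acquire(path, agent_id):
            break
        time.sleep(delay)
    else:
        raise TimeoutError(f"{agent_id} could not lock {path}")
    try:
        yield
    finally:
        # Always release, even if the agent's work raised an exception.
        service.release(path, agent_id)
```

Wrapping the release in `finally` matters in multi-agent systems: an agent that crashes mid-task would otherwise leave the file locked for everyone else.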

Considerations when using Fast.io

- Ecosystem Independence: While Fast.io integrates with many tools, it is a distinct platform. If your entire architecture is strictly bound to AWS primitives, introducing a new platform requires evaluating data gravity and egress patterns.

- Specialized Use Case: Fast.io shines when used for active, working data that agents need to interact with. For cold storage or archiving massive amounts of data that will rarely be accessed, a service like S3 Glacier remains the more cost-effective choice.

Fast.io API vs Amazon S3 for agent storage

When evaluating these platforms for AI workloads, the differences in architecture and intended use cases become starkly apparent. Most S3 comparisons ignore the specific needs of AI agents, like context retrieval and MCP, focusing solely on bandwidth and storage costs. Here is a detailed breakdown of how they compare on key agent-centric features.

The Bottom Line: Fast.io provides a complete, intelligent workspace layer ready for agents to consume immediately. Amazon S3 provides reliable raw storage that requires significant engineering effort, third-party services, and maintenance to make it agent-ready.

Evidence and Benchmarks: The Cost of Complexity

The choice between building a custom storage pipeline on S3 and using a purpose-built solution like Fast.io has measurable impacts on development velocity, system complexity, and ultimately, the time-to-market for AI applications.

According to Fast.io's documentation, adopting the Fast.io API reduces agent boilerplate code by up to 80% compared to building custom RAG indexing pipelines on top of traditional object storage like Amazon S3.

Let's examine the architectural differences that drive this metric:

The S3 RAG Architecture: When using S3 for an agent that needs to answer questions based on documents, developers must construct and maintain a complex pipeline:

- Storage: Amazon S3 bucket to hold the raw documents.

- Event Trigger: S3 Event Notifications triggering an AWS Lambda function.

- Processing: The Lambda function must download the file, extract text (potentially requiring OCR services like Textract for images), and chunk the text.

- Embedding: The chunked text must be sent to an embedding API to generate vector representations.

- Vector Database: The embeddings and metadata must be stored in a separate vector database.

- Agent Integration: The agent must be programmed to query the vector database, retrieve relevant chunks, and synthesize an answer.

This architecture requires managing multiple services, handling API rate limits, ensuring data consistency between S3 and the vector database, and writing extensive glue code.
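The glue code described above might look roughly like the following sketch. The event parsing reflects the standard S3 event notification payload, where object keys arrive URL-encoded. Everything else is an assumption for illustration: the stage functions (`download`, `extract_text`, `chunk`, `embed`, `upsert`) are hypothetical hooks that you would back with boto3, a text extractor, an embedding API, and a vector database of your choice.

```python
from typing import Callable
from urllib.parse import unquote_plus


def objects_from_event(event: dict) -> list[tuple[str, str]]:
    """Return (bucket, key) pairs from an S3 event notification payload."""
    pairs = []
    for record in event.get("Records", []):
        s3 = record["s3"]
        # Keys are URL-encoded in event notifications (spaces arrive as '+').
        pairs.append((s3["bucket"]["name"], unquote_plus(s3["object"]["key"])))
    return pairs


def index_document(
    bucket: str,
    key: str,
    download: Callable[[str, str], bytes],
    extract_text: Callable[[bytes], str],
    chunk: Callable[[str], list[str]],
    embed: Callable[[str], list[float]],
    upsert: Callable[[str, list[str], list[list[float]]], None],
) -> int:
    """Run one object through the pipeline; returns the number of chunks indexed."""
    raw = download(bucket, key)            # storage step
    chunks = chunk(extract_text(raw))      # processing step
    vectors = [embed(c) for c in chunks]   # embedding step
    upsert(f"s3://{bucket}/{key}", chunks, vectors)  # vector database step
    return len(chunks)
```

Each injected stage is a separate service to provision, monitor, and keep in sync, which is where most of the maintenance overhead comes from.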

The Fast.io Architecture: Fast.io collapses this entire architecture into a single, cohesive API. By toggling "Intelligence Mode" on a workspace, the platform handles the complexity internally.

- Storage & Processing: A document is uploaded to Fast.io.

- Automatic Indexing: Fast.io automatically extracts the text, generates embeddings, and stores them in its built-in vector search engine.

- Agent Integration: The agent uses the Fast.io MCP server or API to search the workspace directly, receiving context-rich snippets immediately.

This streamlined approach drastically reduces the time from concept to deployment, allowing engineering teams to focus on prompt engineering and agent logic rather than infrastructure plumbing.

Ready to build smarter AI agents?

Get 50GB of free storage and 5,000 monthly credits. Build agentic workflows with built-in RAG and MCP support in minutes, not weeks.

Security and Compliance in Agent Storage

When agents interact with data, security cannot be an afterthought. Both Fast.io and Amazon S3 offer robust security features, but they approach the problem from different angles.

Amazon S3 Security Posture: Amazon S3 is a fortress when configured correctly. It offers server-side encryption and client-side encryption. Access is governed by AWS Identity and Access Management (IAM), allowing for incredibly granular control over who (or what) can read, write, or delete specific objects. However, this granularity comes with complexity. Misconfigured S3 buckets are a notorious source of data breaches. Developers must meticulously craft IAM policies to ensure an agent has the exact permissions it needs—no more, no less.
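As an illustration of what a least-privilege IAM policy for an agent can look like, the sketch below builds a read-only policy limited to a single prefix. The bucket and prefix names are placeholders, and a real deployment may need additional statements (for example, for KMS-encrypted objects or write access).

```python
import json


def read_only_policy(bucket: str, prefix: str) -> dict:
    """Build an IAM policy allowing list and read access under one prefix only."""
    prefix = prefix.strip("/")
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ListAgentPrefix",
                "Effect": "Allow",
                "Action": "s3:ListBucket",
                # ListBucket applies to the bucket ARN; the condition narrows it.
                "Resource": f"arn:aws:s3:::{bucket}",
                "Condition": {"StringLike": {"s3:prefix": [f"{prefix}/*"]}},
            },
            {
                "Sid": "ReadAgentObjects",
                "Effect": "Allow",
                "Action": "s3:GetObject",
                # GetObject applies to object ARNs under the prefix.
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}/*",
            },
        ],
    }


policy_json = json.dumps(read_only_policy("example-bucket", "agents/research"), indent=2)
```

Note that listing and reading are granted on different resources (the bucket versus its objects). Mixing these up is a common source of both over-permissive and non-working policies.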

Fast.io Security Posture: Fast.io simplifies security for agentic workflows. It employs modern TLS for data in transit and AES encryption for data at rest. For agents, Fast.io uses dedicated agent accounts with API keys scoped to specific workspaces. This means an agent can only access the files within its assigned workspace, which limits the blast radius of a compromised agent. Fast.io's visual interface also lets human administrators audit agent activity and revoke access with a single click, which is more approachable than parsing AWS CloudTrail logs.

The Role of the Model Context Protocol (MCP)

To understand the advantage of Fast.io for agent storage, it helps to understand the Model Context Protocol (MCP). MCP is an open standard that allows AI models to securely connect to local and remote data sources and tools.

Why MCP Matters: Before MCP, developers had to write custom API integrations for every tool an agent needed to use. If an agent needed to read a file from S3, the developer had to write code to authenticate with AWS, retrieve the object, parse the content, and feed it into the LLM's prompt. This was brittle, time-consuming, and difficult to scale.

Fast.io and MCP: Fast.io has fully embraced the MCP ecosystem. It provides an official MCP server with over 251 built-in tools accessible via Streamable HTTP and SSE. This means that an MCP-compatible agent, such as Anthropic's Claude or an agent built with LangChain, can natively interact with a Fast.io workspace. The agent can list files, read content, search for specific information, and write new files—all without the developer writing a single line of integration code.

S3 and MCP: Amazon S3 does not currently offer a native MCP server. To achieve similar functionality, a developer must build a custom middleware service that exposes an MCP interface to the agent on one side and translates those requests into AWS SDK calls on the other. This adds a significant layer of maintenance and complexity to the application architecture.
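Under the hood, an MCP client invokes a tool by sending a JSON-RPC 2.0 request with the method `tools/call`. The sketch below builds such a request and extracts text from a response. The tool name `search_files` and its arguments are hypothetical placeholders, not a documented Fast.io tool; an agent would discover the real tool names from the server's tool listing.

```python
from itertools import count

_request_ids = count(1)


def tools_call_request(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request that invokes an MCP tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


def text_from_response(response: dict) -> str:
    """Join the text items of a tools/call response, raising on errors."""
    if "error" in response:
        raise RuntimeError(response["error"].get("message", "MCP protocol error"))
    result = response["result"]
    if result.get("isError"):
        raise RuntimeError("the tool reported an error")
    items = result.get("content", [])
    return "\n".join(i["text"] for i in items if i.get("type") == "text")


# Hypothetical tool name and arguments, for illustration only.
request = tools_call_request("search_files", {"query": "termination clause"})
```

In practice an MCP client library handles this framing and the transport, which is why an MCP-compatible agent needs no storage-specific integration code.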

Real-World Use Cases: Where Fast.io Outshines Traditional Object Storage

To truly understand the difference between Fast.io and Amazon S3 in the context of AI agents, it is helpful to look at specific, real-world application patterns.

Automated Research and Synthesis Agents

Imagine an AI agent designed to monitor industry reports, financial filings, and news articles, synthesizing this information into weekly briefings for a strategy team.

- The S3 Approach: The developer must build a scraper to download the documents, upload them to S3, trigger a Lambda function to extract the text using a service like Textract, chunk the text, generate embeddings, store them in a vector database, and finally, write the agent logic to query the database and generate the report. The report must then be saved back to S3, and a separate system must notify the human team.

- The Fast.io Approach: The agent uses Fast.io's URL import feature to pull the reports directly into a workspace. Fast.io automatically indexes the documents. The agent uses the MCP search tool to query the workspace, synthesizes the report, and saves it as a markdown file in the same workspace. The human team, already invited to the workspace, can immediately view and edit the report. The process is streamlined, reducing infrastructure overhead and focusing development on the agent's analytical capabilities.

Customer Support and Triage Bots

Consider a customer support agent that needs to reference historical ticket data, product manuals, and troubleshooting guides to assist users.

- The S3 Approach: The knowledge base must be synced to S3 and continuously processed into a vector database to keep the agent's knowledge current. Maintaining synchronization between S3, the database, and the application layer can be a significant operational burden, especially as documents are updated or deleted.

- The Fast.io Approach: The knowledge base is simply stored in a Fast.io workspace. When a manual is updated, Fast.io automatically re-indexes it. The support agent always has access to the most current information without any manual synchronization pipelines. Also, if the agent encounters an issue it cannot resolve, it can create a log file in the workspace and notify a human agent to take over, demonstrating seamless human-agent handoff.
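The synchronization burden in the S3 approach comes down to a reconciliation step like the one sketched below: compare what is in the bucket with what the vector database has indexed, then re-index changed files and purge deleted ones. The function name and the use of ETags as change markers are assumptions for illustration.

```python
def plan_sync(stored: dict[str, str], indexed: dict[str, str]) -> tuple[list[str], list[str]]:
    """Compare object store contents with the vector index.

    stored:  object key -> current ETag, from a bucket listing
    indexed: object key -> ETag recorded when the object was last indexed
    Returns (keys to index or re-index, keys to delete from the index).
    """
    # New objects and objects whose ETag changed need (re-)indexing.
    to_index = sorted(k for k, etag in stored.items() if indexed.get(k) != etag)
    # Index entries whose source object no longer exists are stale.
    to_delete = sorted(k for k in indexed if k not in stored)
    return to_index, to_delete
```

A job like this has to run on a schedule or on every change event, and any failure leaves the agent answering from stale or deleted documents. That is the operational cost that automatic re-indexing removes.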

How to Choose the Right Storage Backend for AI Agents

Choosing between the Fast.io API and Amazon S3 depends entirely on your project's specific goals, the available engineering resources, and the role the AI agent plays in your application.

Choose Amazon S3 if:

- You are building a massive data lake: If your primary concern is storing petabytes of raw training data or application logs where cost per gigabyte is the overriding factor, S3 is the optimal choice.

- You require deep AWS integration: If your application relies heavily on other AWS services and data gravity dictates keeping everything within the AWS ecosystem, S3 provides the necessary native integrations.

- You need complete control over the RAG pipeline: If your use case requires highly specialized chunking strategies, custom embedding models, or proprietary vector search algorithms, building a custom pipeline on top of S3 gives you absolute control over every step.

Choose the Fast.io API if:

- You want to deploy AI agents quickly: If your goal is to get an agent functioning and interacting with documents in minutes rather than weeks, Fast.io's turnkey intelligence is invaluable.

- Your agents need immediate document understanding: For applications where agents need to search, summarize, and reason over PDFs, text files, and code repositories, Fast.io's built-in RAG capabilities eliminate the need for complex ETL pipelines.

- You want to leverage the Model Context Protocol (MCP): If you are building with Claude or other MCP-compatible frameworks, Fast.io's native MCP server provides immediate, standardized access to file operations and search.

- Your workflow involves human-agent collaboration: If your agents are generating content, reports, or code that human team members need to review, edit, and approve, Fast.io's visual workspaces provide the necessary collaborative environment.

The Future of Agentic Workspaces

As AI agents become more sophisticated, their storage needs will continue to evolve from simple data repositories to intelligent, active knowledge bases. Agents will require environments that not only store information but actively organize, retrieve, and contextualize it. While traditional object storage like Amazon S3 will remain foundational for underlying cloud infrastructure, the application layer is shifting towards platforms that natively understand context and semantics.

Fast.io represents this shift in paradigm, offering a developer experience that treats AI agents as first-class citizens. By handling the heavy lifting of indexing, retrieval, and protocol support, it allows developers to focus on what matters most: building intelligent, capable, and collaborative AI agents that deliver real value. As the ecosystem matures, the distinction between "storage" and "memory" will blur, and platforms that bridge that gap will become the standard for agent development.

Frequently Asked Questions

Can I use S3 for AI agents?

Yes, you can use Amazon S3 for AI agents, but it requires significant custom engineering. Because S3 is a raw object store, it lacks built-in semantic search or indexing. To use it for agent context, you must build a custom Retrieval-Augmented Generation (RAG) pipeline, integrating S3 with text extraction services, embedding models, and a separate vector database.

Is Fast.io better than S3 for RAG?

For most development teams, Fast.io is significantly faster to implement for RAG than S3. Fast.io features a built-in "Intelligence Mode" that automatically indexes uploaded files (PDFs, text, code) for semantic search. This eliminates the need to build and maintain the ETL pipelines and vector databases required when using S3 for RAG.

Does Fast.io support the Model Context Protocol (MCP)?

Yes, Fast.io offers an official MCP server with over 251 built-in tools. This allows MCP-compatible agents, such as Claude, to natively read, write, search, and manage files within Fast.io workspaces without requiring developers to write custom API integration code.

How does pricing compare between Fast.io and S3?

Amazon S3 charges based on raw storage volume, data transfer, and API requests, which can be highly cost-effective for massive scale. Fast.io offers a generous free tier specifically designed for agents, including 50GB of storage and 5,000 monthly credits. While S3 might be cheaper for petabytes of cold data, Fast.io is often more cost-effective when factoring in the engineering time saved by not building custom RAG pipelines.

Can human users access the files stored for agents in Fast.io?

Yes, human-agent collaboration is a core feature of Fast.io. Unlike S3, which requires a custom frontend for user access, Fast.io provides intuitive shared workspaces. Agents can upload files, generate reports, and then invite human team members to review, edit, and collaborate on those same files within a visual interface.
