How to Find the Fastest Business File Sharing: Best Methods for 2026

Finding the fastest way to share business files isn't just about bandwidth. It's about how the technology fits your team's workflow. High-performance teams lose money in labor when they're stuck waiting for slow transfers. This guide compares block-level synchronization, UDP acceleration, cloud streaming file systems, and agent-ready workspaces that avoid local bottlenecks.

How to implement fast business file sharing reliably

For most businesses, speed is a financial metric. When a creative director or a senior engineer sits idle while a multi-gigabyte project uploads, the company is losing money. Creative and technical talent represents a major operational cost, and idle time during file transfers directly reduces billable output. When teams experience delays from sync issues or failed transfers, the operational cost can exceed the price of the software itself.
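The idle-time argument above can be turned into a rough estimate. The sketch below is illustrative only: the hourly rate, wait time, transfer count, and working weeks are hypothetical inputs, not figures from this guide.

```python
def idle_transfer_cost(hourly_rate: float, wait_minutes: float,
                       transfers_per_week: int, weeks_per_year: int = 48) -> float:
    """Estimate the yearly labor cost of one person waiting on file transfers."""
    # Total minutes spent waiting in a year, converted to hours.
    hours_waiting = wait_minutes * transfers_per_week * weeks_per_year / 60
    return hourly_rate * hours_waiting


# Hypothetical example: a $90/hour editor waits 20 minutes, five times a week.
cost = idle_transfer_cost(hourly_rate=90.0, wait_minutes=20, transfers_per_week=5)
print(f"Estimated idle cost: ${cost:,.0f} per person per year")
```

Plugging in your own team's numbers gives a baseline to compare against the yearly price of a faster tool.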

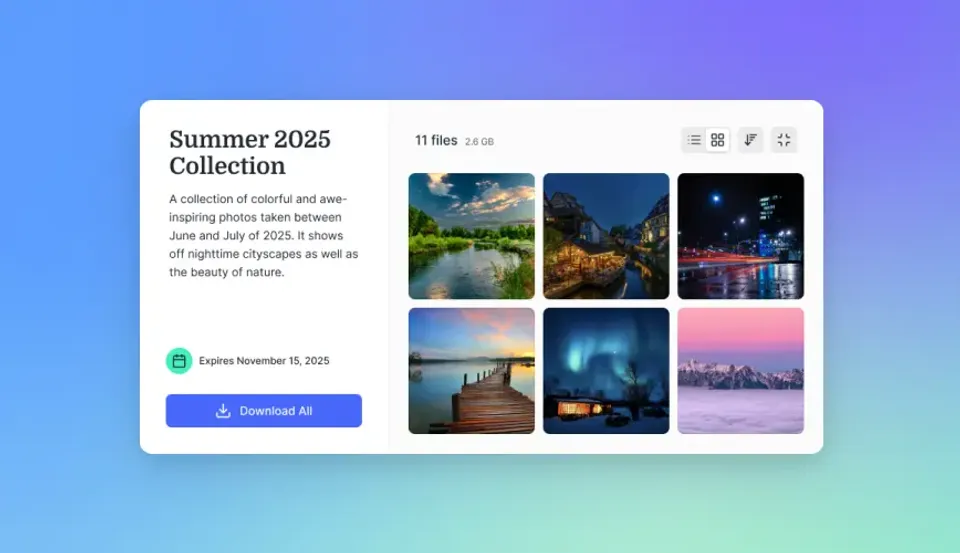

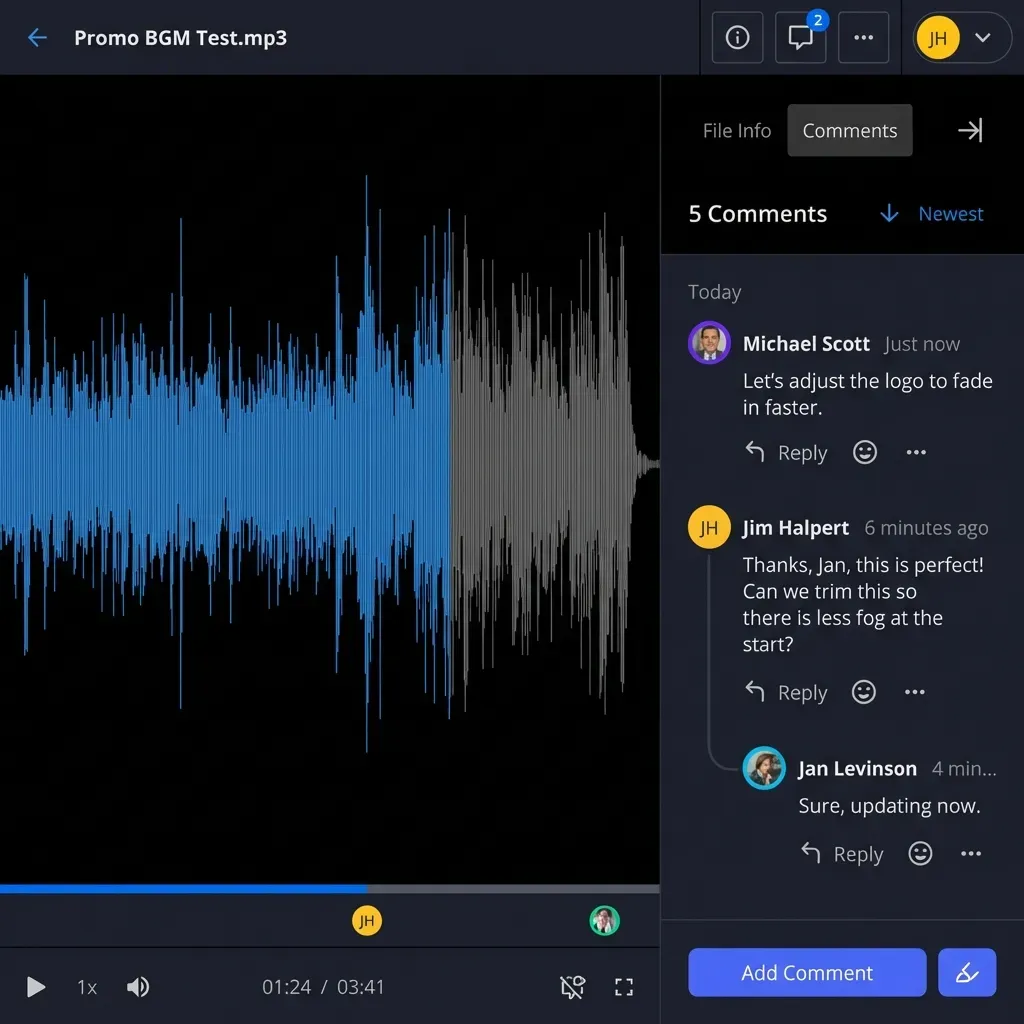

Specialized collaboration platforms now manage creative reviews much faster than email by centralizing feedback and automating the delivery of high-resolution assets. In practice, the fastest tools focus on upload and download speeds to meet tight deadlines, so that a stalled progress bar doesn't stop production.

The cost also includes the "focus tax." It can take over 23 minutes for a worker to regain deep focus after being interrupted by a slow system. When a transfer takes a long time, the employee doesn't just wait; they switch to a different task. This breaks up the workday and reduces overall quality, making high-speed infrastructure a requirement for high-output teams.


What to check before scaling fast business file sharing

If your primary goal is keeping a team in sync while they edit shared files, the best technology for speed is block-level sync. Standard cloud storage often re-uploads an entire file even if only a single paragraph or one frame of video was changed. This wastes bandwidth and slows down everyone on the team.

Block-level sync breaks files down into small pieces. When you save a change, the software identifies exactly which blocks were modified and only uploads those segments. This leads to nearly instant updates for large files like CAD drawings, Photoshop projects, or database exports. For teams working across different cities, this technology is the backbone of a high-speed collaborative environment.
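The idea can be sketched in a few lines of Python. This is a minimal illustration, not any vendor's implementation: it assumes fixed-size blocks and SHA-256 hashes, and the names `block_hashes` and `changed_blocks` are hypothetical. Production sync engines typically use more elaborate chunking so that inserting bytes near the start of a file does not shift every later block.

```python
import hashlib

BLOCK_SIZE = 4 * 1024 * 1024  # assumed 4 MiB blocks; real products vary


def block_hashes(data: bytes, block_size: int = BLOCK_SIZE) -> list[str]:
    """Hash each fixed-size block of the file contents."""
    return [
        hashlib.sha256(data[i:i + block_size]).hexdigest()
        for i in range(0, len(data), block_size)
    ]


def changed_blocks(old: bytes, new: bytes, block_size: int = BLOCK_SIZE) -> list[int]:
    """Return indexes of blocks in `new` that differ from `old` or are new."""
    old_h = block_hashes(old, block_size)
    new_h = block_hashes(new, block_size)
    return [
        i for i, digest in enumerate(new_h)
        if i >= len(old_h) or old_h[i] != digest
    ]


# Tiny demo with 4-byte blocks: only the block containing the edit is flagged.
before = b"a" * 16
after = b"a" * 8 + b"b" + b"a" * 7
print(changed_blocks(before, after, block_size=4))  # [2]
```

Only the flagged blocks would need to be uploaded, which is why a small edit to a multi-gigabyte file can sync in seconds.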

LAN Sync and Local Acceleration

A key feature of modern block-level sync is LAN Sync. This technology detects if another computer on your local network already has the file you need. Instead of downloading the data from a remote data center over the internet, the software transfers the file directly over your local office network at gigabit speeds. This bypasses your internet service provider, which is helpful for large teams working in the same building on massive media projects.

Moving the Unmovable: UDP-Based Acceleration

When you need to deliver a massive asset, such as high-resolution video files or large datasets, standard sync often falls short. Most web traffic uses Transmission Control Protocol (TCP), which is built for reliability rather than raw speed. After the initial connection handshake, TCP requires the receiver to acknowledge the data it gets, and the sender can only keep a limited window of unacknowledged data in flight. If a packet is lost, or if latency is high over long distances, TCP reduces its sending rate and retransmits to make sure the data arrives intact.
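One way to see why distance hurts TCP: a sender can have at most one window of unacknowledged data in flight, so a single connection's throughput is capped at roughly the window size divided by the round-trip time. The sketch below works through that arithmetic. The 64 KiB window and the round-trip times are illustrative assumptions; modern systems usually scale the window much larger.

```python
def tcp_max_throughput_mbps(window_bytes: int, rtt_ms: float) -> float:
    """Upper bound on single-connection TCP throughput: window / round-trip time."""
    bits_in_flight = window_bytes * 8
    rtt_seconds = rtt_ms / 1000
    return bits_in_flight / rtt_seconds / 1_000_000


WINDOW = 64 * 1024  # assumed 64 KiB window, for illustration

# Same window, two hypothetical routes: a nearby server and an intercontinental one.
for label, rtt in [("nearby (10 ms RTT)", 10), ("intercontinental (150 ms RTT)", 150)]:
    print(f"{label}: {tcp_max_throughput_mbps(WINDOW, rtt):.1f} Mbps ceiling")
```

With these assumed values the ceiling drops from about 52 Mbps to about 3.5 Mbps as the round trip grows, regardless of how much bandwidth the link actually has.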

The fastest way to move massive datasets for large-scale delivery is with User Datagram Protocol (UDP) acceleration. Tools like MASV or IBM Aspera use specialized protocols on top of UDP to get the most out of your bandwidth. Unlike TCP, these tools don't throttle the whole stream while waiting for acknowledgments. They send the data across the network at the highest possible speed and handle error correction in a separate, more efficient process. UDP-based tools can move files far faster than standard browser-based uploads over long-distance international routes.
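The general pattern of "send everything, then repair only what was lost" can be illustrated with a small in-memory simulation. This is a conceptual sketch only: it does not use real sockets, it is not the protocol used by MASV or IBM Aspera, and the function name, loss rate, and seed are assumptions made for the example.

```python
import random


def send_with_selective_retransmit(chunks: list[bytes], loss_rate: float = 0.1,
                                   seed: int = 0) -> tuple[bytes, int]:
    """Simulate blasting numbered chunks over a lossy link, resending only gaps."""
    if not 0 <= loss_rate < 1:
        raise ValueError("loss_rate must be in [0, 1)")
    rng = random.Random(seed)
    received: dict[int, bytes] = {}
    pending = set(range(len(chunks)))
    rounds = 0
    while pending:
        rounds += 1
        # Send every outstanding chunk without waiting for per-chunk replies.
        for seq in sorted(pending):
            if rng.random() >= loss_rate:  # chunk survived the simulated link
                received[seq] = chunks[seq]
        # The receiver reports which sequence numbers arrived; only the
        # remaining gaps are sent again in the next round.
        pending.difference_update(received)
    data = b"".join(received[i] for i in range(len(chunks)))
    return data, rounds


chunks = [bytes([i]) * 4 for i in range(20)]
data, rounds = send_with_selective_retransmit(chunks, loss_rate=0.3, seed=1)
print(data == b"".join(chunks), rounds)
```

The point of the sketch is that a lost chunk costs one extra send of that chunk rather than slowing the entire stream.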

Global Edge Networks

UDP tools often use global edge networks to reduce the distance data must travel. By routing traffic through a dedicated network of high-speed servers rather than the public internet, these services can maintain consistent speeds even when sending files between continents. This is important for global supply chains in manufacturing and film production, where a delay in receiving a technical spec or a daily cut can stall an entire production line.

Share Files Without Limits on Fast.io

Join the intelligent workspace where files are auto-indexed and ready for agents. Move your data at cloud-to-cloud speeds and skip the local bottlenecks. Built for business file sharing workflows.

The Zero-Download Workflow: Cloud Streaming File Systems

The quickest way to work on a file is to avoid downloading it at all. A newer category of "streaming" file systems, such as LucidLink, has changed how media and entertainment teams handle remote work. Instead of syncing a local copy to every machine, these systems mount the cloud storage as a local drive.

When an editor opens a file, the system streams only the specific bits required for the current task in real-time. This allows a video editor in London to open a complex video project stored on a server in Los Angeles and begin editing immediately, without waiting for a multi-hour download. This "just-in-time" data delivery removes transfer time from the daily workflow. It is a major speed improvement for industries where file sizes have outpaced the growth of residential and office internet speeds.

Virtual File Systems and Metadata

These streaming systems work by separating file metadata from the actual data. The metadata, which includes the file names, folder structures, and permissions, is downloaded instantly, giving the user the appearance of a full drive. The actual "heavy" data is only fetched when a specific application requests it. This architecture allows teams to scale to massive storage volumes without worrying about local disk space or initial sync times.
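A minimal sketch of this split between metadata and data is shown below. It is not LucidLink's design: the `StreamedFile` class, the `fetch_block` callback, and the 1 KiB block size are hypothetical stand-ins for whatever a real streaming file system uses.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class StreamedFile:
    # Metadata is known up front, so the file "exists" before any data arrives.
    name: str
    size: int
    fetch_block: Callable[[int], bytes]  # hypothetical remote fetch by block index
    block_size: int = 1024
    fetches: int = 0
    _cache: dict = field(default_factory=dict, repr=False)

    def read(self, offset: int, length: int) -> bytes:
        """Return the requested bytes, fetching only the blocks that cover them."""
        end = min(offset + length, self.size)
        if offset >= end:
            return b""
        first = offset // self.block_size
        last = (end - 1) // self.block_size
        out = bytearray()
        for idx in range(first, last + 1):
            if idx not in self._cache:
                self._cache[idx] = self.fetch_block(idx)
                self.fetches += 1
            out += self._cache[idx]
        start = offset - first * self.block_size
        return bytes(out[start:start + (end - offset)])


# Demo: a 4 KiB "remote" object; reading 100 bytes touches two blocks, not four.
remote = bytes(range(256)) * 16
f = StreamedFile("project.bin", len(remote),
                 lambda i: remote[i * 1024:(i + 1) * 1024])
print(f.read(1000, 100) == remote[1000:1100], f.fetches)
```

Repeated reads of the same region are served from the local cache, which is why the first open feels immediate and later scrubbing stays fast.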

Fast.io: The Intelligent, Agent-Ready Speed Layer

Fast.io takes a different approach to speed by focusing on intelligence and integration. While other tools focus on moving bytes from point A to point B, Fast.io creates an intelligent workspace where your data is already indexed and ready for action. Fast.io's URL Import feature is a major speed advantage. This lets users pull massive files directly from Google Drive, OneDrive, or Dropbox into a Fast.io workspace without ever downloading them to a local computer. By moving data "cloud-to-cloud," you bypass your own local internet bottlenecks.

Fast.io is also built for agentic work. With multiple Model Context Protocol (MCP) tools, AI agents can manage, search, and share files within your workspaces at speeds that no human could match. When you upload a file, Fast.io's Intelligence Mode automatically indexes it for semantic search. Instead of spending minutes clicking through folders to find a specific contract or design asset, you can ask the workspace a question and get a cited answer in seconds. This is speed at the layer of "time to insight," which is often more valuable than raw transfer bits.

Ownership Transfer and Rapid Handoffs

Fast.io also speeds up the client handoff process through ownership transfer. An agent or a freelancer can build a complete workspace, organize all assets, and then transfer the entire organization to a client in one click. This removes the need for manual file migrations or re-sharing permissions, ensuring that the transition from production to delivery is as fast as the transfers themselves.

Infrastructure Optimization: Getting the Most Out of Your Connection

Even the fastest software can be limited by local infrastructure. To reach enterprise-level speeds, businesses must improve their internal networks. For example, switching from Wi-Fi to a wired Cat6 or Cat6a Ethernet connection can reduce latency and cut the packet loss that often triggers TCP slowdowns.

Managing your Maximum Transmission Unit (MTU) settings is another way to boost performance. By making sure your router and computer use the largest possible packet size without fragmentation, you can reduce the overhead on every transfer. Using a dedicated high-performance DNS provider can also speed up the "lookup" phase of every connection, ensuring your software spends more time moving data and less time finding servers.
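The overhead argument can be checked with simple arithmetic. The sketch below assumes 40 bytes of headers per packet (a 20-byte IPv4 header plus a 20-byte TCP header with no options) and compares a standard 1500-byte MTU with a 9000-byte jumbo-frame MTU; the larger value is only usable when every device on the path supports it.

```python
def payload_efficiency(mtu: int, header_bytes: int = 40) -> float:
    """Fraction of each packet that carries file data rather than headers."""
    return (mtu - header_bytes) / mtu


def packets_needed(file_bytes: int, mtu: int, header_bytes: int = 40) -> int:
    """Number of packets required to carry the file (ceiling division)."""
    payload = mtu - header_bytes
    return -(-file_bytes // payload)


for mtu in (1500, 9000):
    print(f"MTU {mtu}: {payload_efficiency(mtu):.1%} payload, "
          f"{packets_needed(1_000_000, mtu)} packets per MB")
```

Under these assumptions the efficiency gain is a few percentage points, but the packet count falls by roughly a factor of six, which reduces per-packet processing on both ends.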

The Role of Managed File Transfer

For enterprises with strict compliance needs, Managed File Transfer (MFT) systems provide speed through automation. By scheduling large batch transfers during off-peak hours and using multi-threaded delivery, MFT systems keep available bandwidth in efficient use throughout the day. When combined with specialized protocols like PeSIT, these systems can outperform standard web transfers for high-volume backend operations.
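Multi-threaded delivery can be sketched as splitting a payload into byte ranges and sending the parts concurrently. This is a generic illustration, not the behavior of any particular MFT product: `send_part` is a hypothetical callback standing in for a real network upload, and the part size and worker count are arbitrary.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def split_ranges(total_size: int, part_size: int) -> list[tuple[int, int]]:
    """Split [0, total_size) into consecutive (start, end) byte ranges."""
    return [
        (start, min(start + part_size, total_size))
        for start in range(0, total_size, part_size)
    ]


def parallel_transfer(data: bytes, part_size: int,
                      send_part: Callable[[int, bytes], None],
                      workers: int = 4) -> int:
    """Send each part on a worker thread; return the number of parts sent."""
    ranges = split_ranges(len(data), part_size)
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # list() forces completion and re-raises any exception from a worker.
        list(pool.map(lambda r: send_part(r[0], data[r[0]:r[1]]), ranges))
    return len(ranges)


# Demo: the "destination" is an in-memory buffer written at each part's offset.
payload = bytes(range(256)) * 40
destination = bytearray(len(payload))


def write_part(offset: int, chunk: bytes) -> None:
    destination[offset:offset + len(chunk)] = chunk


parts = parallel_transfer(payload, part_size=1000, send_part=write_part)
print(parts, bytes(destination) == payload)
```

Because each part carries its own offset, parts can complete in any order and a failed part can be retried without restarting the whole transfer.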

Performance Benchmarks Comparison

To choose the right tool, you have to understand how different technologies perform under various conditions. The following comparison highlights how each method handles specific business scenarios.

Choosing the right method requires analyzing your "latency to productivity." For some, that is the time it takes for a colleague to see an edit. For others, it is the time it takes to deliver a final master to a client. By matching the protocol to the project, businesses can reclaim hundreds of hours of lost productivity every year.

Frequently Asked Questions

What is the fastest way to share a large file for business?

For a one-time delivery of a large file, UDP-accelerated tools like MASV are the fastest option. They bypass traditional TCP bottlenecks to use nearly all of your available bandwidth, which is faster than standard browser-based uploads or email-linked storage.

Why is cloud sync sometimes slow even with fast internet?

Cloud sync speed often depends on the protocol rather than your bandwidth. Many services use file-level sync, which re-uploads the entire file for every change. Services using block-level sync are much faster because they only transfer the specific pieces of data that were modified.

How does Fast.io speed up file management for teams?

Fast.io improves speed through its URL Import feature, which allows cloud-to-cloud transfers that bypass local internet limits. Its Intelligence Mode also uses retrieval-augmented generation (RAG) to index files on upload, allowing users and AI agents to find information via natural language search instead of manual navigation.

Is there a free way to get high-speed business file sharing?

Fast.io offers a free agent tier that includes 50GB of storage and 251 MCP tools for automation. This allows teams to build high-speed, intelligent workflows without a credit card or upfront cost, providing a professional entry point into accelerated file management.

What is the difference between TCP and UDP for file transfers?

TCP is a 'reliable' protocol: it requires acknowledgments for the data it sends and slows down when packets are lost, which limits throughput over long distances or high-latency links. UDP is a 'fast' protocol that sends data continuously without waiting for acknowledgments, making it well suited to massive file transfers when paired with professional error-correction software.
