How to Build a Collaborative MCP Tool Development and Testing Environment

Building a collaborative MCP tool development and testing environment lets engineers and AI agents work on tools in one shared workspace. This guide covers the architecture, shared state management, and testing frameworks needed to move past solo development. Learn how to sync tool versions and cut down on debugging time for multi-agent systems.

The Shift from Solo to Shared MCP Development

Define clear tool contracts and fallback behavior so agents fail safely when dependencies are unavailable. This improves reliability in production workflows.
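Here is a minimal sketch of a tool contract with a fallback. It assumes a hypothetical `ToolResult` envelope loosely modeled on the `content` and `isError` fields of an MCP tool result. The helper names `call_with_fallback`, `search_index`, and `cached_search` are illustrative and not part of any SDK. The main design choice is that a failed dependency returns a structured error the agent can reason about, not an unhandled exception.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Any, Callable


@dataclass
class ToolResult:
    """Hypothetical result envelope, loosely modeled on MCP's content/isError fields."""
    content: list[dict[str, Any]] = field(default_factory=list)
    is_error: bool = False


def _text(value: str) -> list[dict[str, Any]]:
    return [{"type": "text", "text": value}]


def call_with_fallback(
    primary: Callable[..., Any],
    fallback: Callable[..., Any] | None = None,
    **kwargs: Any,
) -> ToolResult:
    """Try the primary dependency, then the fallback; never raise to the agent."""
    try:
        return ToolResult(content=_text(str(primary(**kwargs))))
    except Exception as exc:  # dependency down, timeout, bad credentials, ...
        if fallback is not None:
            try:
                return ToolResult(content=_text(f"[fallback] {fallback(**kwargs)}"))
            except Exception:
                pass  # fall through to the structured error below
        return ToolResult(content=_text(f"dependency unavailable: {exc}"), is_error=True)


# Usage with hypothetical dependencies:
def search_index(query: str) -> str:
    raise ConnectionError("index offline")


def cached_search(query: str) -> str:
    return f"cached results for {query!r}"


result = call_with_fallback(search_index, cached_search, query="mcp")
print(result.is_error, result.content[0]["text"])
# False [fallback] cached results for 'mcp'
```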

Shared Workspace Fundamentals

A collaborative MCP development environment lets multiple engineers and AI agents work on tool definitions and testing scripts in one shared workspace. The Model Context Protocol (MCP) started as a way for individuals to connect LLMs to local files, but enterprise needs call for something more reliable. Moving from a single-user local setup to shared team infrastructure is necessary for scaling workflows that involve multiple models and data sources.

In a traditional solo setup, environment variables, tool schemas, and testing states are often stuck on a single developer's machine. This leads to cases where AI agents fail in production because they lack the specific context or connection strings present in the local environment. A shared workspace fixes this by putting the MCP host configuration in one place and providing a consistent playground for both human developers and autonomous agents.

When these resources are synchronized, teams can ensure that every agent has access to the same version of a tool, no matter which engineer deployed it. This is especially important for complex integrations where tool outputs are chained together, requiring a stable and predictable environment.
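One way to keep every agent on the same tool version is to have all hosts resolve servers from a single shared configuration instead of per-machine settings. The sketch below assumes a hypothetical JSON layout with placeholder URLs and version numbers. Real MCP hosts each have their own configuration format.

```python
from __future__ import annotations

import json

# Hypothetical shared host configuration, e.g. versioned in the team repository
# or stored in the shared workspace. Names, URLs, and versions are placeholders.
SHARED_CONFIG_JSON = """
{
  "servers": {
    "docs-tools":   {"url": "https://mcp-staging.example.com/docs/mcp",   "version": "1.4.2"},
    "search-tools": {"url": "https://mcp-staging.example.com/search/mcp", "version": "2.0.0"}
  }
}
"""


def load_config(raw: str = SHARED_CONFIG_JSON) -> dict:
    return json.loads(raw)


def resolve_server(name: str, config: dict) -> tuple[str, str]:
    """Return (url, pinned_version); every agent reading the same config gets the same answer."""
    try:
        entry = config["servers"][name]
    except KeyError:
        raise LookupError(f"server {name!r} is not in the shared config") from None
    return entry["url"], entry["version"]


config = load_config()
url, version = resolve_server("docs-tools", config)
print(url, version)
```

Because the pinned version lives in one place, an engineer who redeploys a server changes it for everyone at once, not just on their own machine.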

Why Shared State is Necessary for Agentic Tool Testing

Testing AI tools is different from testing standard REST APIs. Because LLMs are probabilistic, the way they call a tool changes based on the surrounding context. In a collaborative environment, shared state lets teams capture these different context windows and replay them across different models. If one developer finds a prompt injection vulnerability or a tool-calling error, the entire team can verify the fix immediately.
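The capture-and-replay idea can be sketched as a small harness. Each recorded context window is stored with the tool the team expects to be called, and the harness replays it against several models. The `CapturedContext` shape, the tool names, and the stub "models" are hypothetical. In a real harness each model callable would wrap an LLM client.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class CapturedContext:
    """A recorded context window that led to a tool call (hypothetical shape)."""
    messages: tuple[str, ...]
    expected_tool: str


# A "model" is any callable mapping messages to the tool name it would call.
Model = Callable[[tuple[str, ...]], str]


def replay(captures: list[CapturedContext], models: dict[str, Model]) -> dict[str, list[bool]]:
    """Replay every capture against every model; True means it chose the expected tool."""
    return {
        name: [model(c.messages) == c.expected_tool for c in captures]
        for name, model in models.items()
    }


captures = [
    CapturedContext(("Find the Q3 report",), "search_files"),
    CapturedContext(("Lock README.md before editing",), "lock_file"),
]
# Stub models standing in for real LLM clients.
models = {
    "model-a": lambda msgs: "search_files" if "Find" in msgs[0] else "lock_file",
    "model-b": lambda msgs: "search_files",
}
print(replay(captures, models))
# {'model-a': [True, True], 'model-b': [True, False]}
```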

Shared state also enables multi-agent coordination. When multiple agents work in the same environment, they need a way to signal intent and avoid conflicting actions. For example, if two agents update the same documentation file, a shared workspace with file locking prevents them from overwriting each other's work.
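Here is a minimal in-memory sketch of advisory file locking with an owner and an expiry. `FileLockManager` is hypothetical and is not Fast.io's API. A real shared workspace would hold this state server-side so that agents on different machines see the same locks. The time-to-live keeps a crashed agent from holding a file forever.

```python
import threading
import time


class FileLockManager:
    """Hypothetical advisory lock table: path -> (owner, expiry)."""

    def __init__(self, ttl: float = 30.0) -> None:
        self._ttl = ttl
        self._locks: dict[str, tuple[str, float]] = {}
        self._mutex = threading.Lock()  # protects the table itself

    def acquire(self, path: str, owner: str) -> bool:
        now = time.monotonic()
        with self._mutex:
            holder = self._locks.get(path)
            if holder and holder[0] != owner and holder[1] > now:
                return False  # another agent holds a live lock
            # Free, expired, or already ours: take (or refresh) the lock.
            self._locks[path] = (owner, now + self._ttl)
            return True

    def release(self, path: str, owner: str) -> None:
        with self._mutex:
            # Only the current owner may release.
            if self._locks.get(path, (None,))[0] == owner:
                del self._locks[path]


locks = FileLockManager(ttl=30)
print(locks.acquire("docs/README.md", "agent-a"))  # True
print(locks.acquire("docs/README.md", "agent-b"))  # False: agent-a holds it
locks.release("docs/README.md", "agent-a")
print(locks.acquire("docs/README.md", "agent-b"))  # True
```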

According to industry reports, the Model Context Protocol grew to exceed 10,000 active server implementations by late 2025, driven largely by the need for these standardized interfaces. As adoption increases, the ability to manage shared state becomes the key difference between experimental scripts and production-ready systems.

Scale Your Agentic Team with Shared Workspaces

Start building your collaborative MCP environment today with 50GB of free storage and 251 built-in agent tools.

How to Set Up a Shared MCP Staging Environment

Setting up a shared staging environment requires moving your MCP servers from local processes to cloud-accessible endpoints. This lets multiple host applications connect to the same set of tools simultaneously.

Follow these steps to set up a shared environment:

1. Sync Tool Definitions: Store your tool schemas and MCP server code in a shared repository with strict versioning. This ensures the staging environment always runs the latest tool versions.

2. Deploy via Streamable HTTP or SSE: Instead of running MCP servers as local stdio processes, deploy them behind an HTTP transport. Streamable HTTP is the current transport in the MCP specification. The older HTTP+SSE (Server-Sent Events) transport is deprecated but still widely supported. Either way, the tools become reachable at a URL, so every team member can point their agents at the same testing endpoint.

3. Use Shared Storage: Use a workspace that both agents and humans can access. This serves as the "ground truth" for testing file operations and data extraction tools.

4. Enable Centralized Logging: Route all tool-calling logs to a shared observer so developers can see in real time how different agents interact with the tools.

5. Standardize Authentication: Use a unified authentication layer, such as OAuth 2.1 (the basis of the MCP authorization specification), for all connections to the shared MCP server to maintain security across the team.
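To make the deployment and authentication steps above concrete, the sketch below builds a JSON-RPC `tools/call` request without sending it. This is the request a client would POST to a shared Streamable HTTP endpoint, and it carries a bearer token from the team's auth layer. It uses only the Python standard library for illustration. In practice the official MCP SDKs handle the `initialize` handshake, session IDs, and streamed responses. The endpoint URL, token, session ID, and tool name are placeholders.

```python
from __future__ import annotations

import json
import urllib.request


def build_tool_call(
    endpoint: str,
    token: str,
    tool: str,
    arguments: dict,
    request_id: int = 1,
    session_id: str | None = None,
) -> urllib.request.Request:
    """Build (but do not send) a JSON-RPC 2.0 tools/call request for a shared MCP endpoint."""
    body = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool, "arguments": arguments},
    }
    headers = {
        "Content-Type": "application/json",
        # A Streamable HTTP server may reply with plain JSON or an SSE stream.
        "Accept": "application/json, text/event-stream",
        # Token issued by the team's shared authentication layer.
        "Authorization": f"Bearer {token}",
    }
    if session_id is not None:
        # Session ID the server assigned during initialization, if any.
        headers["Mcp-Session-Id"] = session_id
    return urllib.request.Request(
        endpoint, data=json.dumps(body).encode("utf-8"), headers=headers, method="POST"
    )


req = build_tool_call(
    "https://mcp-staging.example.com/mcp",  # placeholder shared staging URL
    token="TEAM_TOKEN",                      # placeholder credential
    tool="search_files",                     # hypothetical tool name
    arguments={"query": "Q3 report"},
    session_id="abc123",                     # placeholder session ID
)
print(req.get_method(), req.full_url)
# urllib.request.urlopen(req) would send it once a session has been initialized.
```

Every developer's agent builds the same request against the same URL. The only per-person difference is the credential, so any difference in tool behavior comes from the agent, not from the setup.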

Managing Tool Versioning in Teams

In a collaborative setup, breaking changes in a tool's JSON schema can disable agents across the entire organization. Use a semantic versioning strategy for your MCP tools. When a schema changes, deploy the new version to a separate staging URL first. This gives the team a chance to verify that the LLM still understands how to call the tool before updating production.
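This simplified sketch shows how a team might pick the version bump for a change to a tool's input JSON Schema, and so decide whether the change needs a separate staging URL. The rules are assumptions, not a standard. Removing or retyping a property, or making a field newly required, counts as breaking. Adding an optional property counts as a minor change. Real schemas with nested objects, enums, or `oneOf` need more thorough checks.

```python
def classify_change(old: dict, new: dict) -> str:
    """Return 'major', 'minor', or 'patch' for a change between two input schemas."""
    old_props, new_props = old.get("properties", {}), new.get("properties", {})
    old_req, new_req = set(old.get("required", [])), set(new.get("required", []))

    removed = old_props.keys() - new_props.keys()
    retyped = {
        k for k in old_props.keys() & new_props.keys()
        if old_props[k].get("type") != new_props[k].get("type")
    }
    newly_required = new_req - old_req
    if removed or retyped or newly_required:
        return "major"  # existing agents' calls may now fail validation
    if new_props.keys() - old_props.keys():
        return "minor"  # new optional input, old calls still valid
    return "patch"


def bump(version: str, change: str) -> str:
    """Apply a SemVer bump to a 'MAJOR.MINOR.PATCH' string."""
    major, minor, patch = (int(p) for p in version.split("."))
    if change == "major":
        return f"{major + 1}.0.0"
    if change == "minor":
        return f"{major}.{minor + 1}.0"
    return f"{major}.{minor}.{patch + 1}"


v1 = {"type": "object", "properties": {"query": {"type": "string"}}, "required": ["query"]}
v2 = {"type": "object",
      "properties": {"query": {"type": "string"}, "limit": {"type": "integer"}},
      "required": ["query"]}
v3 = {**v2, "required": ["query", "limit"]}
print(bump("1.4.2", classify_change(v1, v2)))  # 1.5.0
print(bump("1.4.2", classify_change(v2, v3)))  # 2.0.0
```

A "major" result is the signal to deploy to a separate staging URL before anything in production points at the new schema.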

Evidence and Benchmarks: The Metrics of Shared Environments

Data from teams using collaborative MCP infrastructure shows a clear speed advantage. Centralizing access reduces the friction of onboarding new agents and engineers.

According to Knit, adopting a standardized protocol like MCP can substantially reduce initial development time while also cutting ongoing maintenance costs. This efficiency comes from reusing tool definitions across different projects rather than rebuilding them from scratch.

The number of MCP servers grew from just 100 in late 2024 to over 16,000 within the protocol's first year, which shows how quickly the industry is moving toward this standard. Adoption among Fortune 500 companies also rose sharply within a single year. Collaborative tool management is now a core requirement for enterprise AI architecture.

Debugging Multi-Agent Tool Calls

Debugging gets harder when multiple agents call tools at the same time. A collaborative environment must provide multi-tenant observability, which means filtering logs by agent ID, session ID, or tool name.

A good strategy is to use a shared audit log that records the tool output and the full LLM prompt that triggered it. This lets developers see the "reasoning" behind a failed call. If an agent sends an invalid parameter, you can see if the bug was in the tool's validation logic or the agent's interpretation.
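Here is a sketch of a shared audit record and a multi-tenant filter. It assumes each tool call is logged with the agent ID, session ID, tool name, triggering prompt, arguments, and output. The field names and sample records are illustrative, not a fixed schema.

```python
import time
from dataclasses import dataclass, field


@dataclass
class AuditRecord:
    """One tool call as seen by the shared observer (illustrative fields)."""
    agent_id: str
    session_id: str
    tool: str
    prompt: str          # full prompt that led the model to call the tool
    arguments: dict
    output: str
    is_error: bool = False
    ts: float = field(default_factory=time.time)


def filter_log(records, agent_id=None, session_id=None, tool=None, errors_only=False):
    """Return the records that match every filter that was supplied."""
    return [
        r for r in records
        if (agent_id is None or r.agent_id == agent_id)
        and (session_id is None or r.session_id == session_id)
        and (tool is None or r.tool == tool)
        and (not errors_only or r.is_error)
    ]


log = [
    AuditRecord("agent-a", "s1", "search_files", "Find the Q3 report", {"query": "Q3"}, "3 hits"),
    AuditRecord("agent-b", "s2", "lock_file", "Edit README", {"path": 42},
                "path must be a string", is_error=True),
]
for r in filter_log(log, errors_only=True):
    # Prompt, arguments, and output side by side show whether the tool's
    # validation or the agent's interpretation went wrong.
    print(r.agent_id, r.tool, r.arguments, "<-", r.prompt)
```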

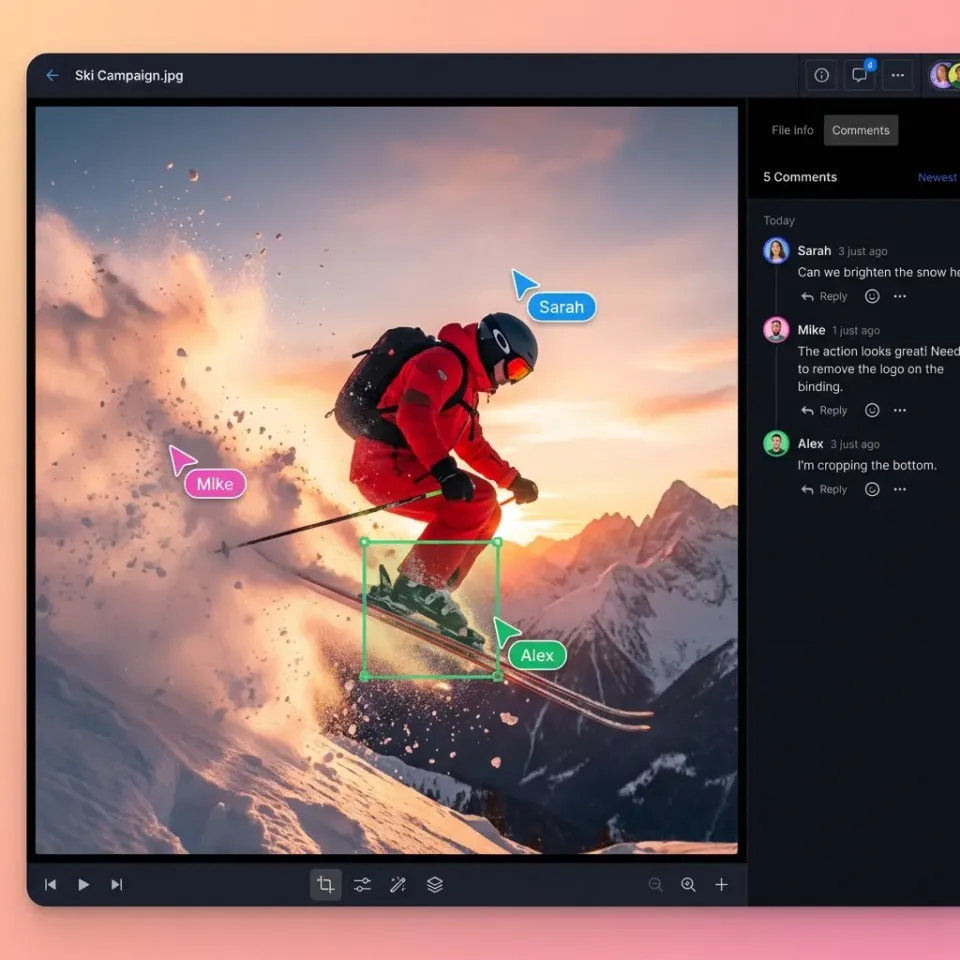

Another key feature is the ability to "shadow" agent calls. In a shared environment, a human can watch an agent's workspace in real-time and step in if the agent gets stuck in a loop. This human-in-the-loop capability is only possible when both the human and the agent work in the same environment.
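Shadowing is easier when the environment can flag a likely loop for the human who is watching. One simple heuristic is to count identical calls in a sliding window. The sketch assumes each call is recorded as a `(tool, serialized_arguments)` pair. The `looks_stuck` name, the window size, and the threshold are arbitrary starting points, not recommendations.

```python
from collections import Counter


def looks_stuck(calls: list[tuple[str, str]], window: int = 6, threshold: int = 3) -> bool:
    """Flag an agent for human review if the same (tool, arguments) pair
    appears `threshold` or more times within its last `window` calls."""
    recent = calls[-window:]
    return any(n >= threshold for n in Counter(recent).values())


history = [("read_file", '{"path": "a.md"}')] * 3 + [("write_file", '{"path": "b.md"}')]
print(looks_stuck(history))  # True
```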

Fast.io: The Infrastructure for Collaborative Agents

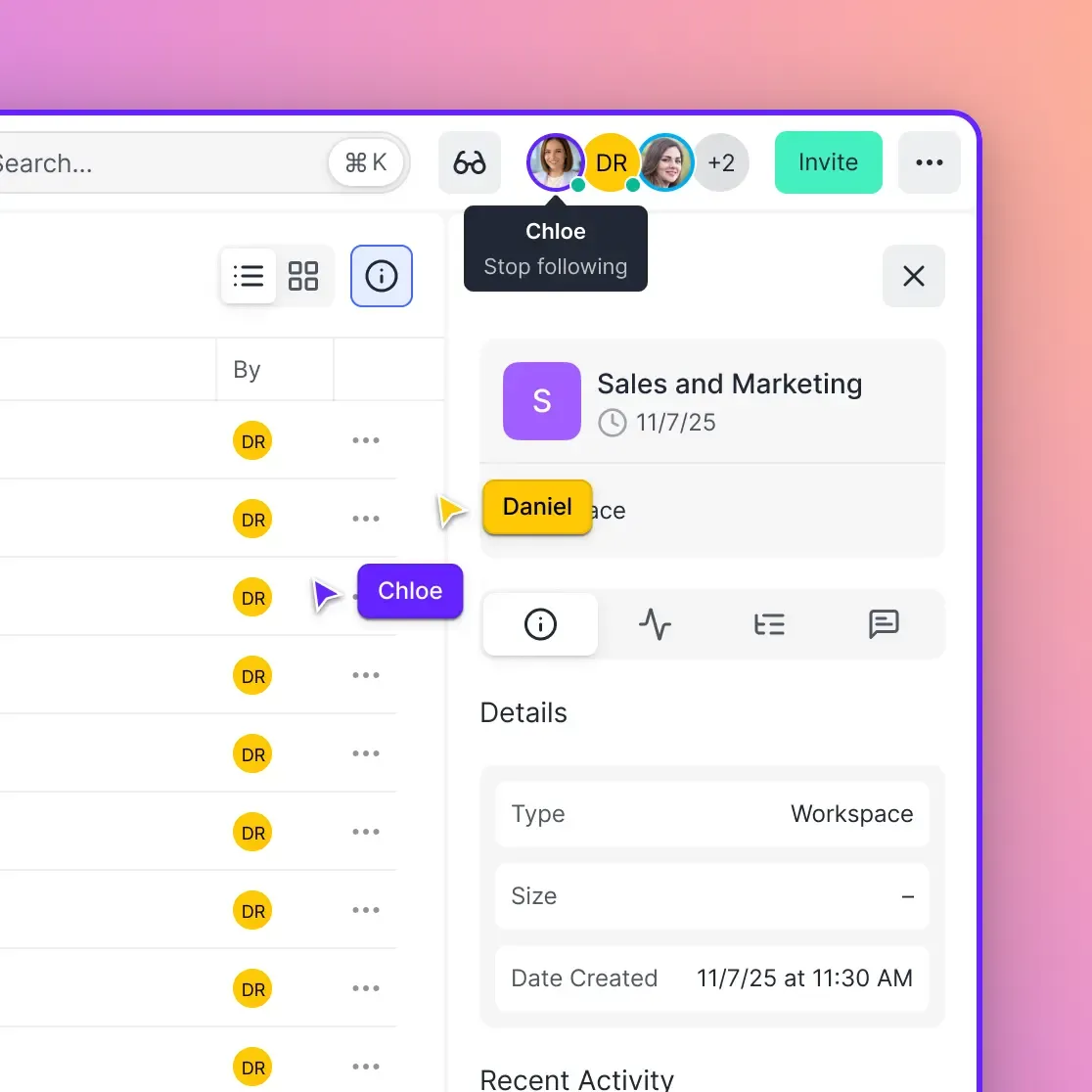

Fast.io is built for collaborative MCP development. Unlike standard cloud storage, its workspaces are designed for the fast access patterns that AI agents require.

With its built-in MCP tools, Fast.io lets your agents manage files and search datasets right away. Because these tools are served over SSE and Streamable HTTP, they work for teams out of the box. Multiple agents can connect to the same workspace, use the same tools, and share a common intelligence layer.

Key features for teams:

- Persistent Agent Storage: Give your agents a permanent home for their data that lasts across sessions.

- Intelligence Mode: Built-in RAG means agents can search and query files with citations without a separate vector database.

- Ownership Transfer: Agents can build workspaces and hand them off to humans, ensuring a smooth transition.

- File Locks: Prevent multi-agent conflicts with built-in file locking.

By using Fast.io as your MCP layer, your team can focus on building better tools instead of managing complex infrastructure.

Frequently Asked Questions

How do multiple developers test the same MCP server?

Multiple developers can test the same MCP server by deploying it as a remote service over Streamable HTTP or SSE (Server-Sent Events). Instead of running it locally, developers connect their host applications to the shared staging URL. This ensures everyone tests against the same version of the tool.

What are the best practices for shared MCP tool versioning?

Use semantic versioning (SemVer) for tool schemas and keep a registry of approved tools. When making breaking changes, deploy the new version to a separate endpoint first. This keeps existing agents running while the team tests the update.

How does a shared workspace help with multi-agent debugging?

A shared workspace provides a central audit log for all agent actions and tool calls. This lets developers trace events across multiple agents to find where conflicts happened. Seeing the shared state in real-time makes it easier to understand why an agent made a specific choice.

Why is persistent storage important for collaborative MCP development?

Persistent storage ensures that data generated by one agent is available to others, even after a session ends. This lets teams build long-running test scenarios where agents interact with files created by different team members over several days.

Can I use Fast.io to coordinate agents from different LLM providers?

Yes. Fast.io works with any model. You can have a Claude agent and a GPT-based agent working in the same workspace with the same set of tools. This makes it a useful platform for comparing how different models use the same toolset.
