How to Choose the Best Vector Database for AI Agents (2026)

Vector databases serve as the long-term semantic memory for AI agents, allowing them to recall context across sessions and vast datasets. With the vector database market projected to reach $5 billion by 2028, choosing the right backend for your agent matters. This guide compares the top solutions in 2026, from specialized databases like Pinecone and Weaviate to integrated storage solutions like Fast.io.

What Are Vector Databases for AI Agents?

A vector database is a specialized storage system designed to handle high-dimensional vector embeddings, which are numerical representations of data like text, images, or audio. Unlike traditional relational databases that match exact keywords, vector databases perform similarity searches to find data that is semantically related to a query. For AI agents, a vector database acts as long-term memory. It enables the agent to:

- Recall past interactions across different sessions

- Retrieve relevant context from millions of documents (retrieval-augmented generation, or RAG)

- Connect concepts that use different wording but share the same meaning
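To make "similarity search" concrete, here is a minimal sketch that scores stored embeddings against a query with cosine similarity and returns the closest matches. It assumes tiny hand-written 3-dimensional vectors in place of real model embeddings, and it scans every entry. Production vector databases typically use approximate nearest-neighbor indexes such as HNSW to avoid that full scan. The function names are illustrative, not from any library.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def top_k(query: list[float], memory: dict[str, list[float]], k: int = 2) -> list[tuple[str, float]]:
    """Exhaustively score every stored embedding and return the k most similar."""
    scored = [(key, cosine_similarity(query, vec)) for key, vec in memory.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]


# Toy 3-dimensional "embeddings"; real models produce hundreds or thousands of dimensions.
memory = {
    "meeting moved to Friday": [0.9, 0.1, 0.0],
    "deadline pushed back a week": [0.8, 0.3, 0.1],
    "favorite pizza topping": [0.0, 0.2, 0.9],
}
print(top_k([0.85, 0.2, 0.05], memory))
```

The two scheduling-related memories come back first even though the query never shares their exact words, which is the behavior keyword matching cannot provide.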

According to MarketsandMarkets, the vector database market size is expected to grow from $1.5 billion in 2023 to over $5 billion by 2028, driven largely by the adoption of Generative AI and autonomous agents.


How We Evaluated the Best Vector Databases for AI Agents

We evaluated the leading options based on scalability, developer experience, and suitability for agentic workflows. Here is how the top five compare:

1. Fast.io (Best for File-Based Agents)

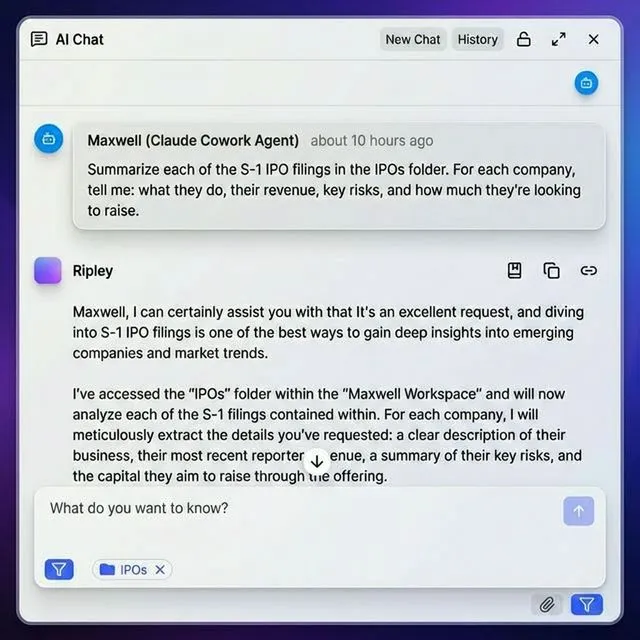

Fast.io takes a different approach than traditional vector databases. Instead of forcing you to set up a separate database and manage ETL pipelines to chunk and index your files, Fast.io builds the vector index directly into the file storage.
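For contrast, this is the kind of chunking step a separate vector-database pipeline has to run itself before embedding and indexing a file. A minimal sketch, assuming simple fixed-size character windows with overlap (one common strategy among several); `chunk_text` is a hypothetical helper, and this is not a description of how Fast.io chunks files internally.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into fixed-size character windows that overlap by `overlap` characters."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        if start + chunk_size >= len(text):
            break  # this window already reaches the end of the text
    return chunks


# Usage: split a toy document into overlapping windows ready for embedding.
document = "Quarterly report. " * 40
pieces = chunk_text(document, chunk_size=200, overlap=50)
print(len(pieces), "chunks")
```

Each chunk would then be embedded and written to the index with metadata such as the source file and offset, and re-processed whenever the file changes. Keeping that pipeline in sync with the files is the overhead integrated storage aims to remove.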

Why it works well for agents: With Intelligence Mode, any workspace in Fast.io automatically becomes a RAG-ready knowledge base. When your agent uploads a PDF, video, or text file, Fast.io automatically indexes it. Your agent can then query the storage using natural language via the Model Context Protocol (MCP).
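MCP messages are JSON-RPC 2.0, and the MCP specification defines a `tools/call` method for invoking a server's tools. The sketch below builds such a request. The tool name `search_workspace` and its argument keys are hypothetical placeholders, not Fast.io's actual tool schema, which a client would discover through the `tools/list` method. In practice an MCP client such as Claude or Cursor constructs and sends these messages for you.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP `tools/call` request as a JSON-RPC 2.0 message."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# HYPOTHETICAL tool name and argument keys, for illustration only.
payload = build_tool_call(
    1,
    "search_workspace",
    {"workspace": "project-docs", "query": "What did the client say about the deadline?"},
)
print(payload)
```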

Key Features:

- Built-in RAG: No need to manage Pinecone or Weaviate separately for file content.

- 251 MCP Tools: The most comprehensive set of file operations for Claude, Cursor, and other agents.

- Zero-Config: Indexing happens automatically on upload.

- Agent Tier: Free 50GB storage, 1GB max file size, and 5,000 monthly credits.

Best For: Developers building agents that primarily work with documents, media, and file-based assets who want to avoid managing a separate database infrastructure.

Give Your AI Agents Persistent Storage

Get 50GB of storage with built-in vector indexing and RAG. No credit card required.

2. Pinecone (Best for Pure RAG)

Pinecone remains the industry standard for managed vector search. Its serverless architecture continues to dominate for teams that want a "set it and forget it" solution.

Why it works well for agents: Pinecone separates storage from compute, so each can scale independently as your agent's memory grows. Pinecone Assistant simplifies the workflow further by handling chunking and embedding generation on the server side, which removes that complexity from your agent's code.

Pros:

- Fully managed serverless architecture (no infrastructure to provision).

- High availability and reliability for enterprise workloads.

- Native integrations with almost every AI framework (LangChain, LlamaIndex).

Cons:

- Can become expensive at high scale compared to self-hosted options.

- Closed source means you are locked into their platform.

3. Weaviate (Best for Hybrid Search)

Weaviate is an AI-native vector database that excels at hybrid search, combining vector similarity with traditional keyword filtering. This is important for agents that need to find "the email from John" (keyword) that is "about the project deadline" (semantic).
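A simplified sketch of how hybrid ranking can blend the two signals: keyword scores (for example from BM25) and vector scores are each rescaled to the 0 to 1 range, then mixed with a weight `alpha`, where 1.0 means pure vector search and 0.0 means pure keyword search. Weaviate exposes a similar `alpha` setting for hybrid queries, but its own fusion algorithms differ in detail. The function names are illustrative and the scores are made up to mirror the email example.

```python
def min_max_normalize(scores: dict[str, float]) -> dict[str, float]:
    """Rescale scores to [0, 1] so keyword and vector scores are comparable."""
    if not scores:
        return {}
    lo, hi = min(scores.values()), max(scores.values())
    if hi == lo:
        return {doc: 1.0 for doc in scores}  # all equal: treat every doc as a top match
    return {doc: (s - lo) / (hi - lo) for doc, s in scores.items()}


def hybrid_rank(keyword_scores: dict[str, float], vector_scores: dict[str, float],
                alpha: float = 0.5) -> list[tuple[str, float]]:
    """Weighted fusion: alpha=1.0 is pure vector ranking, alpha=0.0 is pure keyword ranking."""
    kw = min_max_normalize(keyword_scores)
    vec = min_max_normalize(vector_scores)
    docs = set(kw) | set(vec)
    fused = {d: alpha * vec.get(d, 0.0) + (1 - alpha) * kw.get(d, 0.0) for d in docs}
    return sorted(fused.items(), key=lambda item: item[1], reverse=True)


# Hypothetical scores: BM25 favors emails that mention "John",
# vector similarity favors emails about the project deadline.
keyword_scores = {"email_1": 7.2, "email_2": 6.9, "email_3": 0.4}
vector_scores = {"email_1": 0.31, "email_2": 0.88, "email_3": 0.90}
print(hybrid_rank(keyword_scores, vector_scores, alpha=0.5))
```

With an even blend, `email_2` (from John and about the deadline) ranks first, while either signal alone would pick a different email.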

Why it works well for agents: Weaviate offers modular "vectorizer" plugins that run the embedding models for you. Its emphasis on "Agentic AI" includes features specifically designed to store agent memory and state in a structured graph format.

Pros:

- Strong hybrid search (BM25 + Vector).

- Modular architecture supports various embedding models out of the box.

- Open-source core allows for self-hosting and customization.

Cons:

- Steeper learning curve than Pinecone.

- Running it yourself requires operational expertise (Kubernetes, etc.).

4. Chroma (Best for Prototyping)

Chroma is a developer favorite for getting started quickly. It is an open-source embedding database designed to be easy to run locally.

Why it works well for agents: If you are building a local agent using LangChain or AutoGen, Chroma is often the default choice. It spins up in memory with a single line of code, making it ideal for testing and development loops before moving to production.

Pros:

- Simple Python API (pip install chromadb).

- Runs locally, ideal for privacy-focused or offline agents.

- Free and open-source.

Cons:

- Not designed for massive scale (millions of vectors) without migration.

- Fewer managed cloud features compared to Pinecone or Weaviate.

5. Qdrant (Best for Performance)

Written in Rust, Qdrant is built for speed and efficiency. It is a high-performance engine that punches above its weight, often delivering faster query times with lower resource usage than competitors.

Why it works well for agents: For agents that need to make split-second decisions based on retrieval, Qdrant's low latency is a major advantage. It also supports a Recommendation API that is helpful for agents that suggest content or actions.
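As a rough illustration of recommendation-style retrieval, the sketch below ranks candidate actions by similarity to a query vector built from examples the agent liked and disliked. It is a simplified heuristic (start at the average of the liked items and push further away from the average of the disliked ones), not Qdrant's actual Recommendation API, which offers its own strategies. The action names, 2-dimensional vectors, and function names are hypothetical.

```python
import math


def mean(vectors: list[list[float]]) -> list[float]:
    """Component-wise average of equal-length vectors."""
    return [sum(column) / len(vectors) for column in zip(*vectors)]


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity, returning 0.0 if either vector is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norms = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norms if norms else 0.0


def recommend(positive: list[str], negative: list[str],
              vectors: dict[str, list[float]], k: int = 2) -> list[str]:
    """Rank unseen items against a query pulled toward liked and away from disliked items."""
    avg_pos = mean([vectors[i] for i in positive])
    if negative:
        avg_neg = mean([vectors[i] for i in negative])
        query = [p + (p - n) for p, n in zip(avg_pos, avg_neg)]
    else:
        query = avg_pos
    seen = set(positive) | set(negative)
    candidates = [i for i in vectors if i not in seen]
    return sorted(candidates, key=lambda i: cosine(query, vectors[i]), reverse=True)[:k]


# Hypothetical action embeddings for an assistant agent.
actions = {
    "summarize_report": [0.9, 0.1],
    "draft_reply": [0.8, 0.3],
    "schedule_meeting": [0.2, 0.9],
    "book_travel": [0.1, 1.0],
    "translate_doc": [0.95, 0.05],
}
print(recommend(["summarize_report"], ["schedule_meeting"], actions))
```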

Pros:

- High performance and low memory footprint (Rust-based).

- Advanced filtering capabilities.

- Flexible deployment (Docker, Kubernetes, or Cloud).

Cons:

- Smaller community ecosystem than Pinecone or Chroma.

The right choice depends on your specific requirements: data types, scale, latency targets, and whether you want a managed or self-hosted deployment. Testing with a free tier is the fastest way to know if a tool works for you.

Do You Actually Need a Vector Database?

Before you commit to a dedicated vector database, consider your agent's architecture.

You NEED a vector database if:

- Your agent queries millions of small text chunks unrelated to files.

- You need sub-millisecond latency for high-frequency trading or real-time recommendations.

- You are building a specialized search engine application.

You MIGHT NOT need one if:

- Your agent primarily interacts with documents, PDFs, codebases, or media files.

- You are using Fast.io, where Intelligence Mode handles the embeddings for you.

- Your dataset is small enough to fit in the context window of modern long-context LLMs (like Gemini 1.5 Pro or Claude 3.5 Sonnet).

For many file-centric agent workflows, managing a separate vector database introduces unnecessary complexity (ETL pipelines, synchronization issues) that integrated solutions like Fast.io eliminate.
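The context-window criterion can be checked roughly before committing either way. A minimal sketch, assuming the common rule of thumb of about four characters per token for English text (actual tokenizers vary by model) and a hypothetical reserve of 8,000 tokens for instructions and the model's answer; `fits_in_context` and its parameters are illustrative names, not part of any library.

```python
def fits_in_context(documents: list[str], context_tokens: int,
                    reserved_tokens: int = 8_000, chars_per_token: float = 4.0) -> bool:
    """Rough check: estimated corpus tokens plus reserved headroom fit in the window."""
    total_chars = sum(len(doc) for doc in documents)
    estimated_tokens = total_chars / chars_per_token  # heuristic, not a real tokenizer
    return estimated_tokens + reserved_tokens <= context_tokens


docs = ["x" * 400_000, "y" * 200_000]  # 600,000 characters, roughly 150,000 tokens
print(fits_in_context(docs, context_tokens=200_000))  # True: 150k + 8k <= 200k
print(fits_in_context(docs, context_tokens=128_000))  # False: 158k > 128k
```

If the estimate lands anywhere near the limit, count tokens with the target model's own tokenizer before relying on the result.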

Frequently Asked Questions

Which vector database is best for LangChain?

Pinecone and Chroma are the most popular choices for LangChain. Chroma is ideal for local development and testing due to its simplicity, while Pinecone is the go-to for production deployment because of its managed serverless architecture.

Is a vector database the same as a graph database?

No. A vector database stores data as numerical embeddings to find similar items, while a graph database stores data as nodes and edges to find relationships. However, tools like Weaviate combine vector search with graph-like links, offering a hybrid approach.

How much does a vector database cost?

Costs vary widely. Open-source options like Chroma and Qdrant are free to self-host but require infrastructure. Managed services like Pinecone usually charge based on the amount of storage and read/write operations, often starting with a generous free tier.
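Because managed services commonly bill partly on storage, a quick estimate of raw vector size helps when comparing plans. A minimal sketch, assuming uncompressed 32-bit floats (4 bytes per dimension) and a 1,536-dimension embedding model as an example; index structures and metadata add overhead on top of this figure, and quantization lowers it. `raw_vector_bytes` is an illustrative helper.

```python
def raw_vector_bytes(num_vectors: int, dimensions: int, bytes_per_value: int = 4) -> int:
    """Raw size of dense vectors, excluding index structures and metadata."""
    return num_vectors * dimensions * bytes_per_value


size = raw_vector_bytes(1_000_000, 1536)  # one million float32 embeddings
print(f"{size:,} bytes ≈ {size / 1e9:.2f} GB")  # prints: 6,144,000,000 bytes ≈ 6.14 GB
```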

Can I use Fast.io as a vector database?

Yes, for file-based content. Fast.io's Intelligence Mode automatically indexes files you upload, effectively acting as a vector database for your documents and media without requiring you to manage embeddings manually.
