How to Set Up Agentic AI Storage for Persistent Agent Memory

Agentic AI storage provides persistent file and data access that autonomous agents need to complete multi-step tasks across sessions. This guide covers storage architecture patterns, compares vector databases to file storage, and walks through setting up persistent memory for production agent systems.

What Is Agentic AI Storage?

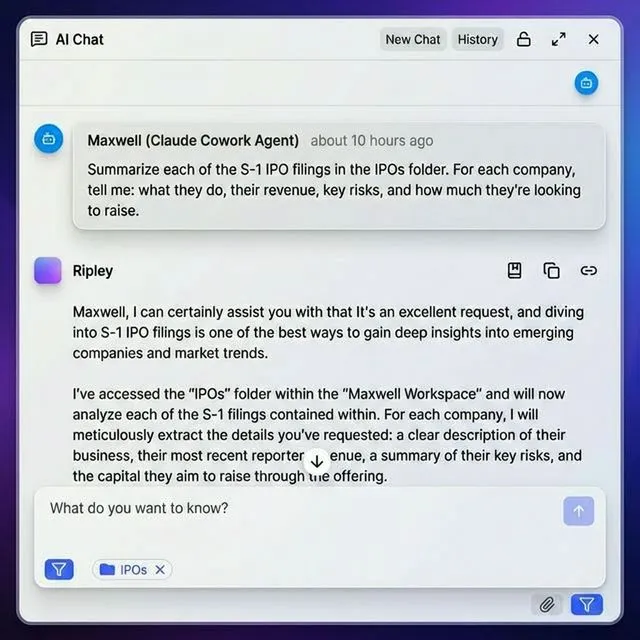

Agentic AI storage is persistent file and data access built for autonomous AI agents. It gives agents the ability to read, write, organize, and share files across sessions without human intervention.

Traditional cloud storage (Dropbox, Google Drive, OneDrive) was designed for people clicking through a UI. Agentic storage flips the model: the primary user is software, not a person. That means API-first access, programmatic file operations, and workspace organization that agents can navigate on their own.

The core problem it solves: LLMs are stateless by default. Every API call starts fresh. Without an external storage layer, agents lose all context when a session ends. Agentic storage provides the persistence layer where agents save outputs, retrieve previous work, and build on past results.

Key properties that separate agentic storage from regular cloud storage:

- Session independence - files persist after the agent process exits

- Programmatic access - full API or MCP tool control, no UI required

- Workspace structure - organized projects instead of flat file dumps

- Human-agent collaboration - both humans and agents access the same files

- Event-driven triggers - webhooks notify agents when files change

Why Agents Fail Without Persistent Storage

In production deployments, context loss and state management problems are a common cause of agent failures in multi-step workflows. When an agent cannot persist intermediate results, every later step that depends on them breaks down.

The Stateless Problem in Practice

Consider a research agent tasked with analyzing dozens of documents and writing a report. Without storage:

- The agent processes documents during the current session

- The session hits a context window limit or timeout

- All intermediate findings are lost

- The next session starts from scratch, repeating work already done

This pattern wastes compute, burns tokens, and produces worse results. Persistent storage fixes it by letting agents checkpoint progress and resume later.
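A minimal sketch of checkpoint-and-resume for the research agent described above. It assumes a local JSON file stands in for the agent's workspace; the `analyze` function, the file name, and the `budget` parameter that simulates a timeout are hypothetical and not part of any Fast.io API.

```python
import json
import tempfile
from pathlib import Path


def analyze(doc: str) -> str:
    """Hypothetical stand-in for an expensive LLM call."""
    return f"summary of {doc}"


def run_session(docs, checkpoint: Path, budget: int) -> dict:
    """Process up to `budget` new documents, then stop (simulating a timeout)."""
    # Load findings saved by earlier sessions, if any.
    findings = json.loads(checkpoint.read_text()) if checkpoint.exists() else {}
    for doc in docs:
        if doc in findings:
            continue  # already processed in an earlier session
        if budget == 0:
            break  # the session ends here; saved progress survives
        findings[doc] = analyze(doc)
        checkpoint.write_text(json.dumps(findings))  # save after every document
        budget -= 1
    return findings


workdir = Path(tempfile.mkdtemp())  # local stand-in for a workspace
checkpoint = workdir / "findings.json"
docs = ["a.pdf", "b.pdf", "c.pdf"]

first = run_session(docs, checkpoint, budget=2)   # stops after two documents
second = run_session(docs, checkpoint, budget=2)  # resumes with the third
```

The second session skips the two documents it already has findings for and only pays for the third.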

What Changes With Storage

Agents with persistent access to their own workspace can:

- Save intermediate results across research sessions spanning days

- Build iteratively on code projects without losing context

- Run multi-stage pipelines where each stage reads the previous stage's output

- Collaborate with humans who review and edit agent-produced files

- Reference historical work to avoid repeating analysis

The difference between a single-turn chatbot and a production agent often comes down to whether it has persistent storage.

Comparing Storage Approaches for Agents

Most discussions about agent memory focus on vector databases. That's only part of the picture. Agents need multiple storage types, and understanding the tradeoffs matters for building reliable systems.

Vector Databases: Good for Retrieval, Not for Files

Vector DBs like Pinecone, Weaviate, and Chroma excel at storing embeddings and finding semantically similar content. They power RAG pipelines and give agents contextual memory.

But they don't handle file storage. You can't save a PDF, an image, or a generated report to a vector database. You can't share a vector embedding with a client. And you can't browse vectors in a file manager.

File Storage: Good for Outputs, Weak on Intelligence

Generic file storage (S3, local filesystem, basic cloud drives) handles documents and media well. But it lacks the features agents need: no programmatic organization tools, no built-in search by meaning, and no collaboration features designed for agent-human workflows.

Agent-Native Workspaces: The Missing Layer

Agent-native storage combines file handling with intelligence. Files are stored, indexed, and searchable by meaning. Agents organize work into workspaces, and humans access the same files through a standard UI.

Fast.io takes this approach. When you enable Intelligence Mode on a workspace, every uploaded file is automatically indexed for RAG search. Agents can query documents, get cited answers, and share results with humans through MCP tools or a REST API.

Give Your AI Agents Persistent Storage

Fast.io gives teams shared workspaces, MCP tools, and searchable file context to run agentic AI storage workflows with reliable agent and human handoffs.

Architecture Patterns for Agent Storage

Three architecture patterns have emerged for connecting agents to persistent storage. Each fits different use cases.

Pattern 1: Direct API Integration

The agent calls storage APIs directly using HTTP requests or SDK methods.

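    import requests

    api_key = "YOUR_API_KEY"            # placeholder: bearer token
    workspace_id = "YOUR_WORKSPACE_ID"  # placeholder: target workspace

    # Upload agent output via REST API
    with open("report.pdf", "rb") as f:
        response = requests.post(
            f"https://api.fast.io/v1/workspaces/{workspace_id}/files",
            headers={"Authorization": f"Bearer {api_key}"},
            files={"file": f},
        )
    response.raise_for_status()  # fail loudly if the upload was rejected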

Best for: Custom agent frameworks, full control over file operations, complex workflows.

Tradeoff: Requires writing integration code for each storage provider.

Pattern 2: MCP-Native Storage

The Model Context Protocol provides a standard interface for agent-tool interaction. MCP-native storage exposes file operations as tools that any compatible agent can call.

    {
      "mcpServers": {
        "fast-io": {
          "url": "/storage-for-agents/"
        }
      }
    }

Best for: Teams using Claude, GPT-4o, Gemini, or any MCP-compatible client. Works across LLM providers without code changes.

Tradeoff: Requires an MCP-compatible client (most major AI tools support this now).

Pattern 3: Hybrid Memory Stack

Production agents often combine multiple storage types. According to Oracle's developer blog, effective agent memory management typically requires matching storage type to data type:

- Vector DB for semantic search across document embeddings

- File storage for documents, images, reports, and shared outputs

- Key-value store for fast session state and configuration

This hybrid approach is common in frameworks like LangChain and CrewAI, where the reasoning layer connects to multiple backends depending on the operation.
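A toy sketch of the routing idea, assuming in-process stand-ins for all three backends: a directory for file storage, a dict for the key-value store, and a dict of made-up embeddings for the vector DB. The `HybridMemory` class and its method names are hypothetical; a real stack would call the respective services instead.

```python
import math
import tempfile
from pathlib import Path


class HybridMemory:
    """Toy router; each backend is an in-process stand-in for a real service."""

    def __init__(self, root: Path):
        self.root = root    # stand-in for file storage
        self.kv = {}        # stand-in for a key-value store
        self.vectors = {}   # stand-in for a vector DB: name -> embedding

    def save_file(self, name: str, data: bytes) -> Path:
        """Documents and other outputs go to file storage."""
        path = self.root / name
        path.write_bytes(data)
        return path

    def set_state(self, key: str, value) -> None:
        """Small, fast-changing session state goes to the key-value store."""
        self.kv[key] = value

    def index(self, name: str, embedding: list[float]) -> None:
        """Embeddings go to the vector store."""
        self.vectors[name] = embedding

    def search(self, query: list[float]) -> str:
        """Return the indexed name whose embedding is most similar to `query`."""
        def cosine(a, b):
            dot = sum(x * y for x, y in zip(a, b))
            return dot / (math.hypot(*a) * math.hypot(*b))
        return max(self.vectors, key=lambda n: cosine(self.vectors[n], query))


memory = HybridMemory(Path(tempfile.mkdtemp()))
memory.save_file("report.txt", b"Q1 revenue grew.")
memory.set_state("last_step", "drafted report")
memory.index("report.txt", [0.9, 0.1])  # made-up embeddings
memory.index("notes.txt", [0.1, 0.9])
best = memory.search([1.0, 0.0])        # closest to report.txt
```

The agent code only talks to one object, while each kind of data lands in the backend suited to it.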

Setting Up Agent Storage Step by Step

Here is a practical walkthrough for setting up persistent storage for your agents.

Step 1: Create an Agent Workspace

Start by giving your agent its own workspace. On Fast.io, agents sign up like human users and get a free tier with 50 GB storage, 5,000 monthly credits, and no credit card requirement.

The agent creates an organization and workspace programmatically:

    # Pseudocode: each call maps to an MCP tool or a REST API request
    auth.signup(email="agent@yourco.com", password="...")
    org.create(name="Research Team", domain="research-team")
    workspace = org.create_workspace(name="Q1 Analysis")

Step 2: Configure File Access

Choose your integration method based on your agent framework:

- MCP: Point your client at `/storage-for-agents/` (works with Claude, Cursor, VS Code, and others)

- OpenClaw: Run `clawhub install dbalve/fast-io` for zero-config setup with any LLM

- REST API: Use bearer tokens for direct HTTP access

Step 3: Implement Persistence Logic

Add checkpointing to your agent's workflow:

- Save intermediate results after each major step

- Load context from storage at session start

- Use file locks to prevent conflicts when multiple agents access the same file

- Version files so you can roll back if something goes wrong
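The versioning item in the list above can be sketched as follows, assuming a local directory stands in for the workspace. The numbered-suffix naming scheme (`draft.md.v1`, `draft.md.v2`) and all function names are hypothetical; a storage service with built-in versioning would replace them.

```python
import tempfile
from pathlib import Path


def versions(directory: Path, name: str) -> list[int]:
    """Return the version numbers saved for `name`, lowest first."""
    prefix = f"{name}.v"
    return sorted(int(p.name[len(prefix):]) for p in directory.glob(f"{prefix}*"))


def save_version(directory: Path, name: str, content: str) -> int:
    """Write `content` as a new version and return its number."""
    found = versions(directory, name)
    number = found[-1] + 1 if found else 1
    (directory / f"{name}.v{number}").write_text(content)
    return number


def load_latest(directory: Path, name: str) -> str:
    """Read the highest-numbered version of `name`."""
    return (directory / f"{name}.v{versions(directory, name)[-1]}").read_text()


def roll_back(directory: Path, name: str) -> int:
    """Delete the latest version and return the number that is now current."""
    found = versions(directory, name)
    if len(found) < 2:
        raise ValueError("no earlier version to roll back to")
    (directory / f"{name}.v{found[-1]}").unlink()
    return found[-2]


workdir = Path(tempfile.mkdtemp())  # local stand-in for a workspace
save_version(workdir, "draft.md", "first draft")
save_version(workdir, "draft.md", "bad edit")
current = roll_back(workdir, "draft.md")     # discard the bad edit
restored = load_latest(workdir, "draft.md")  # back to the first draft
```

Because every save creates a new file instead of overwriting, a bad write never destroys the previous good state.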

Step 4: Enable Intelligence Mode

Toggle Intelligence Mode on your workspace to activate built-in RAG. Once enabled, every file uploaded is automatically indexed. Your agent can then:

- Ask questions across all workspace files with cited answers

- Search files by meaning, not just filename

- Get automatic document summaries

- Extract structured metadata from unstructured files

Step 5: Connect Human Collaboration

Set up shared access so humans can review agent work:

- Create branded shares for client delivery

- Use contextual comments for feedback on specific files

- Configure webhooks to notify agents when humans upload new files

- Transfer ownership to humans when the project is complete
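The webhook item above might be handled along these lines. The payload shape is an assumption made purely for illustration: the `event` and `path` fields and the `file.uploaded` event name are hypothetical, so check the provider's webhook documentation for the real schema.

```python
import json


def handle_webhook(body: bytes, queue: list) -> bool:
    """Queue newly uploaded files for the agent; ignore every other event."""
    event = json.loads(body)
    if event.get("event") != "file.uploaded":  # hypothetical event name
        return False
    queue.append(event["path"])                # hypothetical field
    return True


pending = []  # files waiting for the agent to process
accepted = handle_webhook(
    b'{"event": "file.uploaded", "path": "input/brief.pdf"}', pending
)
ignored = handle_webhook(
    b'{"event": "file.deleted", "path": "input/old.pdf"}', pending
)
```

Keeping the handler this small means the HTTP endpoint can acknowledge quickly and leave the actual processing to the agent's own loop.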

Data Points: Why Storage Architecture Matters

Storage choices directly affect what agents can accomplish.

Market Growth

According to Gartner, 40% of enterprise applications will embed AI agents by the end of 2026, up from less than 5% in 2025. This rapid adoption is driving demand for infrastructure purpose-built for agent workflows, including persistent storage.

The broader agentic AI market is projected to grow from $7.8 billion to over $52 billion by 2030, according to industry analysts at SoftClouds.

Memory Architecture Impact

Research from The New Stack on production agent deployments shows that agents with structured persistent memory produce more coherent outputs across sessions and reduce redundant API calls. The improvement comes from eliminating repeated context-building that stateless agents must perform at every session start.

The Multi-Database Anti-Pattern

According to Oracle's developer blog on agent memory management, many teams fall into a "polyglot persistence" trap: one vector DB for embeddings, one NoSQL DB for JSON, one graph DB for relationships, and one relational DB for transactions. Each database adds a failure point, a security surface, and operational overhead.

Agent-native storage reduces this complexity. A single workspace with Intelligence Mode enabled provides file storage, semantic search, and RAG capabilities without managing separate vector and file systems.

Performance Comparison

| Capability | Generic Cloud Storage | Agent-Native Workspace |
|------------|----------------------|----------------------|
| Programmatic file access | API only | API + 251 MCP tools |
| Semantic search | Requires separate vector DB | Built-in with Intelligence Mode |
| Human collaboration | Limited sharing links | Full workspace with presence |
| Agent-to-agent sharing | Manual coordination | Shared workspace access |
| Event-driven workflows | Not supported | Webhooks on file events |

Best Practices for Production Agent Storage

Teams running agents in production have developed patterns that prevent common failures.

Checkpoint Early and Often

Save agent state after every significant step, not just at the end. If an agent crashes mid-workflow, checkpoints let it resume from the last saved state instead of starting over.
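One detail worth getting right is what happens if the agent crashes in the middle of writing a checkpoint. A minimal sketch, assuming checkpoints are JSON files on a local filesystem: write to a temporary file first, then swap it into place with `os.replace`, so a reader sees either the old state or the new one, never a partial file. The `state.json` name and the state fields are hypothetical.

```python
import json
import os
import tempfile
from pathlib import Path


def write_checkpoint(path: Path, state: dict) -> None:
    """Write `state` so that readers never see a half-written file."""
    # Write to a temporary file in the same directory first.
    fd, tmp_name = tempfile.mkstemp(dir=path.parent, suffix=".tmp")
    with os.fdopen(fd, "w") as tmp:
        json.dump(state, tmp)
        tmp.flush()
        os.fsync(tmp.fileno())  # push the bytes to disk before the rename
    # os.replace swaps the file into place in a single step.
    os.replace(tmp_name, path)


def read_checkpoint(path: Path) -> dict:
    """Return the last saved state, or an initial state on the first run."""
    return json.loads(path.read_text()) if path.exists() else {"step": 0}


checkpoint = Path(tempfile.mkdtemp()) / "state.json"
state = read_checkpoint(checkpoint)        # first run: {"step": 0}
for step in range(state["step"] + 1, 4):   # run steps 1 through 3
    state = {"step": step, "note": f"finished step {step}"}
    write_checkpoint(checkpoint, state)    # checkpoint after every step
resumed = read_checkpoint(checkpoint)
```

If the process were restarted at any point, `read_checkpoint` would return the last completed step and the loop would continue from the one after it.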

Use File Locks for Multi-Agent Access

When multiple agents write to the same workspace, use file locks to prevent conflicts. Fast.io provides acquire/release lock operations that prevent two agents from overwriting each other's changes.
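To illustrate the acquire/release idea, here is a local analog that uses an exclusive lock file. It is a sketch under the assumption that agents share a filesystem, not the Fast.io lock API; the `file_lock` helper and `LockHeld` exception are hypothetical names.

```python
import os
import tempfile
from contextlib import contextmanager
from pathlib import Path


class LockHeld(Exception):
    """Raised when another agent already holds the lock."""


@contextmanager
def file_lock(target: Path, owner: str):
    """Hold an exclusive lock on `target` for the duration of the block."""
    lock_path = target.with_name(target.name + ".lock")
    try:
        # O_EXCL makes creation fail if the lock file already exists.
        fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        holder = lock_path.read_text()
        raise LockHeld(f"{target.name} is locked by {holder}") from None
    with os.fdopen(fd, "w") as handle:
        handle.write(owner)  # record who holds the lock
    try:
        yield
    finally:
        lock_path.unlink()  # release the lock


report = Path(tempfile.mkdtemp()) / "report.md"
blocked = False
with file_lock(report, "agent-a"):
    report.write_text("draft by agent-a")
    try:
        with file_lock(report, "agent-b"):  # second agent tries to write
            report.write_text("overwritten by agent-b")
    except LockHeld:
        blocked = True  # agent-b must wait or retry later
```

The second agent is refused while the first holds the lock, so the first agent's draft is never overwritten.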

Separate Working Files from Deliverables

Organize workspaces with clear boundaries:

- `/working/` for intermediate files the agent creates during processing

- `/output/` for finished deliverables ready for human review

- `/input/` for source materials the agent needs to process
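A short sketch of this layout in use, assuming a local temporary directory stands in for the workspace. The file names and contents are made up for illustration.

```python
import shutil
import tempfile
from pathlib import Path

workspace = Path(tempfile.mkdtemp())  # local stand-in for a workspace
for folder in ("input", "working", "output"):
    (workspace / folder).mkdir()

# Source material arrives in input/.
(workspace / "input" / "survey.csv").write_text("q1,q2\n4,5\n")

# The agent writes scratch files to working/ while it processes.
draft = workspace / "working" / "summary.md"
draft.write_text("Average score: 4.5")

# Only a finished file is promoted to output/ for human review.
final = workspace / "output" / "summary.md"
shutil.move(str(draft), str(final))
```

Reviewers then only ever need to look in `output/`, and anything left in `working/` can be cleaned up without risk.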

Set Up Ownership Transfer Early

If your agent builds workspaces for clients, configure ownership transfer from the start. The agent creates the organization, builds out workspaces and shares, then transfers ownership to the client. The agent keeps admin access for ongoing maintenance.

Monitor Storage Credits

The free agent tier provides 5,000 credits per month. Credits cover storage (100/GB), bandwidth (212/GB), AI tokens (1/100 tokens), and document ingestion (10/page). Track usage to avoid hitting limits during critical workflows.
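As a rough budgeting aid, the rates quoted above can be turned into a small estimator. This is a sketch that assumes the four categories simply add up; how the provider rounds or meters each category is not stated here, and the usage figures are hypothetical.

```python
# Credit rates as quoted in this article.
STORAGE_PER_GB = 100
BANDWIDTH_PER_GB = 212
TOKENS_PER_CREDIT = 100   # 1 credit per 100 AI tokens
INGESTION_PER_PAGE = 10
MONTHLY_CREDITS = 5_000


def estimate_credits(storage_gb, bandwidth_gb, ai_tokens, pages):
    """Estimate monthly credit use from the four billed categories."""
    return (
        storage_gb * STORAGE_PER_GB
        + bandwidth_gb * BANDWIDTH_PER_GB
        + ai_tokens / TOKENS_PER_CREDIT
        + pages * INGESTION_PER_PAGE
    )


# Hypothetical month: 10 GB stored, 5 GB transferred,
# 100,000 AI tokens, 150 pages ingested.
used = estimate_credits(storage_gb=10, bandwidth_gb=5, ai_tokens=100_000, pages=150)
remaining = MONTHLY_CREDITS - used
```

In this example the month costs 1,000 + 1,060 + 1,000 + 1,500 = 4,560 credits, leaving 440 of the 5,000 available.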

Design for LLM Portability

Build your storage integration to work across LLM providers. MCP-based storage works with Claude, GPT-4o, Gemini, LLaMA, and local models. Avoid vendor-specific file APIs (like OpenAI's Files API) that lock you into a single provider.
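One way to keep agent code portable is to hide storage behind a narrow interface, so that switching providers means swapping a backend rather than rewriting the agent. A minimal sketch follows; the `FileStore` protocol and both backends are hypothetical, and a real backend would wrap MCP tool calls or REST requests.

```python
import tempfile
from pathlib import Path
from typing import Protocol


class FileStore(Protocol):
    """The only storage surface the agent code is allowed to use."""

    def put(self, name: str, data: bytes) -> None: ...
    def get(self, name: str) -> bytes: ...


class LocalStore:
    """Backend that keeps files in a local directory."""

    def __init__(self, root: Path):
        self.root = root

    def put(self, name: str, data: bytes) -> None:
        (self.root / name).write_bytes(data)

    def get(self, name: str) -> bytes:
        return (self.root / name).read_bytes()


class MemoryStore:
    """Backend that keeps files in a dict, handy for tests."""

    def __init__(self):
        self.files = {}

    def put(self, name: str, data: bytes) -> None:
        self.files[name] = data

    def get(self, name: str) -> bytes:
        return self.files[name]


def save_report(store: FileStore, text: str) -> None:
    """Agent-side code: knows the interface, not the backend."""
    store.put("report.md", text.encode())


backends = [LocalStore(Path(tempfile.mkdtemp())), MemoryStore()]
for backend in backends:
    save_report(backend, "findings")
results = [backend.get("report.md") for backend in backends]
```

The same `save_report` function runs unchanged against both backends, which is the property to preserve when adding a provider-specific one.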

Frequently Asked Questions

How do AI agents store data between sessions?

AI agents store data between sessions by writing to external storage systems. Since LLMs are stateless, agents save intermediate results, context, and outputs to file storage, databases, or agent-native workspaces. At the start of each session, the agent loads its saved state and resumes work. MCP-native storage like Fast.io provides 251 tools for file operations, making this process straightforward across different LLM providers.

What storage do autonomous agents need?

Autonomous agents need storage with four properties: persistence (data survives session ends), programmatic access (API or MCP tools, not just a UI), collaboration support (humans and other agents can access the same files), and event notifications (webhooks when files change). File storage complements vector databases by handling the actual documents, reports, and media that agents produce and share.

How do agents remember between sessions?

Agents remember between sessions by saving state to external persistent storage. Before a session ends, the agent writes its progress, intermediate results, and context to a workspace or file system. When a new session starts, the agent reads this saved state and continues from where it left off. This is the agent equivalent of long-term memory, separate from the LLM's context window which only provides short-term working memory.

What is the difference between vector databases and file storage for agents?

Vector databases store numerical embeddings and support similarity search, making them ideal for semantic memory and RAG pipelines. File storage handles actual documents, images, code, and media with version control, sharing, and collaboration features. Most production agents use both: vector DBs for finding relevant context by meaning, and file storage for saving outputs and collaborating with humans.

What are MCP tools for agent storage?

MCP (Model Context Protocol) tools are standardized operations that any MCP-compatible AI agent can call. For storage, MCP tools let agents create workspaces, upload and download files, manage permissions, search by content, and share with humans. Fast.io provides 251 MCP tools via Streamable HTTP and SSE, letting agents perform any file operation without custom API integration code.

How much does agent storage cost?

Fast.io offers a free agent tier with 50 GB storage, 1 GB max file size, and 5,000 monthly credits. No credit card, no trial period, no expiration. Credits cover storage, bandwidth, AI tokens, and document ingestion. For most prototyping and small production workloads, the free tier is sufficient. Scale to paid plans only when you need more storage or higher credit limits.
