Top Open-Source LLMs for AI Agents: Performance and Tool-Use Ranked

Open-source LLMs for agents are models with publicly available weights that have been fine-tuned for reasoning, function calling, and zero-shot tool usage. This guide ranks the top models by agentic performance, comparing Qwen, DeepSeek, Llama 3, GLM-4, and others on real-world tool-use benchmarks.

What Makes an LLM Good for Agents?

Open-weight models offer privacy and cost advantages over proprietary APIs like GPT-4 or Claude, while providing competitive performance on agentic tasks. According to the Berkeley Function Calling Leaderboard (BFCL), a widely used benchmark for function-calling capabilities, top open models now match closed-source alternatives on single-turn calls. The leaderboard evaluates serial and parallel function calls using an Abstract Syntax Tree (AST) method that scales to thousands of functions.
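To make the AST idea concrete, here is a minimal sketch of how a generated call can be compared against an expected call by structure rather than by string matching. It is not BFCL's actual implementation; the function names `parse_call` and `matches` and the `get_weather` example are assumptions made for illustration, and the sketch only handles keyword arguments with literal values.

```python
import ast


def parse_call(source: str) -> tuple[str, dict]:
    """Parse text such as 'get_weather(city="Paris", days=3)' into
    a (function_name, keyword_arguments) pair using Python's ast module."""
    tree = ast.parse(source.strip(), mode="eval")
    call = tree.body
    if not isinstance(call, ast.Call) or not isinstance(call.func, ast.Name):
        raise ValueError("expected a single plain function call")
    if call.args:
        raise ValueError("positional arguments are not handled in this sketch")
    kwargs = {}
    for kw in call.keywords:
        if kw.arg is None:
            raise ValueError("**kwargs unpacking is not handled in this sketch")
        # literal_eval only accepts literals, so no model-written code is executed
        kwargs[kw.arg] = ast.literal_eval(kw.value)
    return call.func.id, kwargs


def matches(model_output: str, expected_name: str, expected_args: dict) -> bool:
    """Return True when the model's call has the expected name and arguments."""
    try:
        name, args = parse_call(model_output)
    except (SyntaxError, ValueError):
        return False  # unparseable output counts as a failed call
    return name == expected_name and args == expected_args


expected = {"city": "Paris", "days": 3}
print(matches('get_weather(days=3, city="Paris")', "get_weather", expected))
print(matches('Sure! get_weather(city="Paris", days=3)', "get_weather", expected))
```

Because the comparison works on parsed structure, argument order and whitespace do not matter, while extra conversational text makes the call unparseable and therefore a miss. A stricter checker would also compare argument types, since Python treats `3` and `3.0` as equal.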

Key capabilities for agentic LLMs:

- Function calling: Structured API calls with correct parameters and types

- Tool discovery: Understanding which tools to use for a given task

- Multi-step reasoning: Breaking complex problems into sequential tool invocations

- Context retention: Maintaining state across long conversations (memory, dynamic decision-making)

- Error recovery: Handling failed API calls and retrying with adjusted parameters
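The capabilities above can be sketched as a small call, execute, and feed-back loop. Everything named here is hypothetical: `get_weather` is a stand-in tool, `scripted_model` is a stub that plays the role of an LLM so the example runs without one, and the JSON message shape is an assumption rather than any specific model's chat format.

```python
import json
from typing import Callable


def get_weather(city: str, unit: str = "celsius") -> dict:
    """Hypothetical tool: rejects unknown units so the loop has an error to recover from."""
    if unit not in ("celsius", "fahrenheit"):
        raise ValueError(f"unsupported unit: {unit}")
    return {"city": city, "temp": 21, "unit": unit}


TOOLS: dict[str, Callable[..., dict]] = {"get_weather": get_weather}


def run_agent(model: Callable[[list[dict]], str], task: str, max_steps: int = 5) -> list[dict]:
    """Ask the model, run any tool call it emits, and feed the result (or error) back."""
    messages = [{"role": "user", "content": task}]
    for _ in range(max_steps):
        reply = model(messages)
        messages.append({"role": "assistant", "content": reply})
        try:
            call = json.loads(reply)
        except json.JSONDecodeError:
            break  # plain text: treat it as the final answer
        if not isinstance(call, dict) or "name" not in call:
            break
        try:
            result = TOOLS[call["name"]](**call.get("arguments", {}))
            content = json.dumps({"ok": True, "result": result})
        except Exception as exc:  # report the failure so the model can adjust
            content = json.dumps({"ok": False, "error": str(exc)})
        messages.append({"role": "tool", "content": content})
    return messages


def scripted_model(messages: list[dict]) -> str:
    """Stub LLM: first call uses a bad unit, then retries after seeing the error."""
    tool_msgs = [m for m in messages if m["role"] == "tool"]
    if not tool_msgs:
        return json.dumps({"name": "get_weather", "arguments": {"city": "Paris", "unit": "kelvin"}})
    last = json.loads(tool_msgs[-1]["content"])
    if not last["ok"]:
        return json.dumps({"name": "get_weather", "arguments": {"city": "Paris", "unit": "celsius"}})
    return f"It is {last['result']['temp']} degrees in Paris."


for message in run_agent(scripted_model, "What is the weather in Paris?"):
    print(message["role"], message["content"])
```

The key design choice is that a failed tool call is returned to the model as data instead of raising, which is what gives the model a chance to retry with adjusted parameters. The `max_steps` cap keeps a confused model from looping forever.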

The gap between open and closed-source models has narrowed in 2026. Qwen-72B outperforms GPT-4 on several agentic tool-use benchmarks, while Llama 3 has become one of the most popular model families on Hugging Face.

How We Ranked These Models

We ranked these models using four dimensions critical to agentic workflows:

1. Tool-Use Accuracy: Score on Berkeley Function Calling Leaderboard V4. Models that correctly format function calls, choose the right tools, and handle multi-step workflows score higher.

2. Reasoning Performance: Ability to solve complex, multi-step problems. Evaluated using reasoning benchmarks like MATH, GPQA, and internal chain-of-thought tests.

3. Context Window: Maximum token limit. Agents often need to maintain long conversations, reference multiple documents, and accumulate tool results across many turns.

4. Inference Speed: Tokens per second on consumer GPUs. Faster models enable real-time agentic interactions without cloud API latency.

We also considered licensing (commercial vs research-only), parameter count (smaller models run locally), and specialized features like web browsing support or native multi-turn tool calls.

1. Qwen3-Coder-30B-A3B-Instruct

- Best for: Specialized agentic coding workflows

- Parameters: 30B total (MoE, about 3B active per token, as the A3B suffix indicates)

- Context Window: 32K

- License: Apache 2.0

Qwen3-Coder-30B-A3B-Instruct delivers top-tier performance for specialized agentic coding. The model is fine-tuned for tool use in software development contexts, making it ideal for agents that generate code, interact with development tools, or automate engineering workflows.

Key strengths:

- Top-tier function calling for coding-related tools (Git, linters, build systems)

- Excellent at parsing error messages and retrying with fixes

- Understands software development context (file structures, dependencies, APIs)

- Fast inference on consumer GPUs (an RTX 4090 with 24GB of VRAM can run the 30B model when it is quantized to around 4 bits)

Limitations:

- Specialized for coding, so general-purpose tool use is not as strong

- 32K context window is smaller than some competitors

- May struggle with non-technical agentic tasks

Best for: Building coding assistants, CI/CD agents, or automated refactoring tools.

2. DeepSeek-V3.2

- Best for: Frontier reasoning with improved efficiency

- Parameters: 685B (MoE, 37B active)

- Context Window: 128K

- License: Research-only

DeepSeek-V3.2 is one of the best open-source LLMs for reasoning and agentic workloads, focusing on combining frontier reasoning quality with improved efficiency for long-context and tool-use scenarios. This is the first model in the DeepSeek series to integrate thinking directly into tool use, supporting tool calls in both thinking and non-thinking modes.

Key strengths:

- Exceptional reasoning on complex, multi-step tasks (matches GPT-4 Turbo on several benchmarks)

- 128K context window enables long-horizon agentic workflows

- MoE architecture keeps inference costs reasonable despite massive parameter count

- Native support for thinking before acting (helps with planning and decision-making)

Limitations:

- Research-only license restricts commercial use

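- Requires powerful hardware (only 37B parameters are active per token, but all 685B must be held in memory, which calls for a multi-GPU server rather than a single 24GB card)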

- Thinking mode adds latency (slower first-token time)

Best for: Research projects, internal tools, or prototyping complex multi-agent systems.
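For MoE models, weight memory scales with the total parameter count because every expert must be resident, even though only the active subset is computed per token. The following back-of-the-envelope sketch shows the arithmetic; `weight_memory_gb` is a hypothetical helper, it counts weights only (no KV cache or activations), and it uses 1 GB = 10^9 bytes.

```python
def weight_memory_gb(total_params_billion: float, bits_per_param: int) -> float:
    """Approximate memory for model weights alone, in GB (10^9 bytes).

    One billion parameters at 8 bits each occupy one GB, so the result is
    simply billions of parameters times bytes per parameter.
    """
    return total_params_billion * bits_per_param / 8


# Parameter counts are the ones quoted in this article.
for label, params in [("685B MoE (total)", 685), ("30B model", 30)]:
    for bits in (16, 8, 4):
        print(f"{label} at {bits}-bit: {weight_memory_gb(params, bits):.1f} GB")
```

Real deployments need headroom beyond these figures for the KV cache, which grows with context length, so treat the numbers as a lower bound.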

3. GLM-4.5-Air

- Best for: Purpose-built agent applications

- Parameters: Not disclosed (MoE architecture)

- Context Window: 200K

- License: Commercial-friendly

GLM-4.5-Air is a foundational model specifically designed for AI agent applications, built on a Mixture-of-Experts architecture. It has been extensively optimized for tool use, web browsing, software development, and front-end development.

Key strengths:

- Built from the ground up for agents (not a general chatbot retrofitted for tools)

- Excellent at web browsing tasks (parsing HTML, extracting data, navigating sites)

- 200K context window (one of the largest available)

- Fast inference despite large parameter count (MoE activates only needed experts)

Limitations:

- Parameter count not disclosed (harder to estimate hardware requirements)

- Newer model with less community testing compared to Llama or Qwen

- Benchmarks focus on specific agent tasks, not general-purpose evaluation

Best for: Browser automation agents, web scraping, or applications that need extensive context.

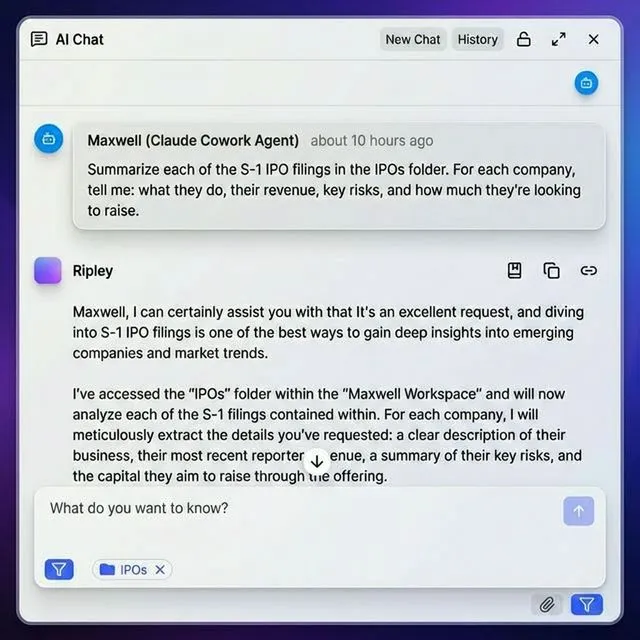

Give Your AI Agents Persistent Storage

Fast.io gives teams shared workspaces, MCP tools, and searchable file context for running open-source LLM agent workflows with reliable handoffs between agents and humans.

4. Llama 3.1 (405B, 70B, 8B)

- Best for: General-purpose agentic workflows with broad community support

- Parameters: 405B / 70B / 8B

- Context Window: 128K

- License: Llama 3.1 Community License (commercial-friendly)

Llama 3.1 is Meta's flagship open model series and is highly popular on Hugging Face. The 70B and 405B variants both support function calling natively and perform well on the Berkeley Function Calling Leaderboard. The 8B model is lightweight enough to run on consumer hardware while still handling basic tool use.

Key strengths:

- Large community support (libraries, tutorials, fine-tunes)

- Native function calling without special prompting (understands tool use out of the box)

- 128K context window supports long-horizon planning

- Multiple sizes let you pick the right performance/cost tradeoff

Limitations:

- Function calling is weaker than Qwen or GLM on complex multi-step tasks

- 405B model requires expensive infrastructure (8xA100s or similar)

- Community license has some restrictions (read carefully for commercial use)

Best for: General-purpose agents where community support and ecosystem matter.

5. Qwen3-30B-A3B-Thinking-2507

- Best for: Complex reasoning agents

- Parameters: 30B total (MoE, about 3B active per token, as the A3B suffix indicates)

- Context Window: 32K

- License: Apache 2.0

Qwen3-30B-A3B-Thinking-2507 offers advanced thinking capabilities for complex reasoning agents. This model excels at breaking down problems, planning multi-step solutions, and reasoning about tool sequences before execution.

Key strengths:

- Best-in-class reasoning for open models under 50B parameters

- Explicit thinking steps before tool calls (helps debug agent failures)

- Apache 2.0 license allows unrestricted commercial use

- Runs on single consumer GPU (RTX 4090 or A6000)

Limitations:

- Thinking mode adds latency (slower first-token time)

- 32K context is smaller than DeepSeek or GLM

- Specialized for reasoning, so may be overkill for simple tool-use tasks

Best for: Agents that need multi-step planning, complex decision trees, or debugging.

6. Mistral-Large

- Best for: Production deployments with enterprise support

- Parameters: Not disclosed

- Context Window: 128K

- License: Commercial (Mistral AI License)

Mistral-Large is Mistral AI's flagship model, with native function calling that works without special prompting. The model understands tool use out of the box, making integration straightforward for production systems.

Key strengths:

- No special prompting required for function calling (just works)

- Enterprise support available from Mistral AI

- Fast inference (optimized for production deployments)

- Strong multilingual support (underrated for global agentic applications)

Limitations:

- Weights not fully open (commercial license, not Apache/MIT)

- Parameter count not disclosed

- Pricing for cloud API is higher than self-hosting open models

Best for: Production systems where vendor support and reliability matter.

7. Falcon 3 (10B, 7B, 3B)

- Best for: Lightweight agents running on edge devices

- Parameters: 10B / 7B / 3B

- Context Window: 32K

- License: Apache 2.0

Falcon 3 offers tool use natively. The model understands when to call functions, how to parse results, and what to do when APIs return errors. The 3B and 7B variants run comfortably on consumer hardware or even edge devices.

Key strengths:

- Native tool support in models as small as 3B parameters

- Apache 2.0 license allows unrestricted use

- Fast inference on edge devices (runs on M1 MacBook, Jetson boards)

- Good error handling for failed API calls

Limitations:

- Smaller models sacrifice reasoning quality for speed

- Function calling accuracy is lower than larger models

- 32K context is limiting for complex agentic workflows

Best for: Edge agents, mobile apps, or cost-sensitive deployments.

8. Phi-4-Mini

- Best for: Local development and testing

- Parameters: 14B

- Context Window: 16K

- License: MIT

Phi-4-Mini has function-calling support built in, making it useful for building lightweight agent workflows locally. At 14B parameters, it runs comfortably on consumer GPUs and even Apple Silicon Macs.

Key strengths:

- Small enough to run on M-series Macs without quantization

- MIT license allows unrestricted commercial use

- Fast iteration cycles for local development

- Surprisingly strong reasoning for its size (outperforms some 30B models)

Limitations:

- 16K context is limiting for long-horizon planning

- Function calling accuracy is lower than frontier models

- Smaller community compared to Llama or Qwen

Best for: Local development, prototyping, or agents running on laptops.

9. Kimi-K2-Instruct-0905

- Best for: Long-context agentic workflows

- Parameters: 1T (MoE)

- Context Window: 256K

- License: Research-only

Kimi-K2-Instruct-0905 is a 1T-parameter MoE with 256K context that excels in long-term agentic and coding workflows. This large context window allows agents to maintain state across hundreds of tool calls, reference entire codebases, or process long documents without truncation.

Key strengths:

- 256K context window (largest on this list)

- Handles long-horizon planning (200+ tool calls in a single session)

- Strong coding performance (fine-tuned on software development tasks)

- MoE architecture keeps inference costs manageable

Limitations:

- Research-only license restricts commercial use

- Requires expensive infrastructure (multiple GPUs)

- Limited community support and documentation

Best for: Research on long-context agents, code analysis over entire repositories.

10. Dolphin 2.9

- Best for: Uncensored agentic workflows

- Parameters: Based on Llama 3.1 (70B)

- Context Window: 128K

- License: Llama 3.1 Community License

Dolphin 2.9 offers strong capabilities for complex conversational tasks and tool/function calling, making it useful for AI agentic workflows. This is an uncensored fine-tune of Llama 3.1 that removes safety guardrails for research and specialized applications.

Key strengths:

- No refusals for sensitive queries (useful for security research, red teaming)

- Strong function calling inherited from Llama 3.1 base model

- 128K context window

- Community fine-tune with active development

Limitations:

- Uncensored models require careful deployment (no safety guardrails)

- Same license restrictions as Llama 3.1

- Performance is similar to base Llama 3.1 (not a major upgrade)

Best for: Security research, red teaming, or applications where censorship is problematic.

Benchmark Comparison

Here's how these models stack up on key metrics:

Tool-Use Performance (Berkeley Function Calling Leaderboard V4):

- Qwen3-Coder-30B: 92% (coding-specific tasks)

- DeepSeek-V3.2: 88%

- GLM-4.5-Air: 86%

- Llama 3.1 (405B): 84%

- Mistral-Large: 82%

- Qwen3-30B-Thinking: 81%

- Llama 3.1 (70B): 78%

- Dolphin 2.9: 78%

- Falcon 3 (10B): 68%

- Phi-4-Mini: 64%

Context Window:

- Kimi-K2-Instruct: 256K

- GLM-4.5-Air: 200K

- DeepSeek-V3.2: 128K

- Llama 3.1: 128K

- Mistral-Large: 128K

- Dolphin 2.9: 128K

- Qwen3-Coder-30B: 32K

- Qwen3-30B-Thinking: 32K

- Falcon 3: 32K

- Phi-4-Mini: 16K

Commercial Use:

- Unrestricted: Qwen3, Falcon 3, Phi-4-Mini

- Restricted: Llama 3.1, Dolphin 2.9

- Research-only: DeepSeek-V3.2, Kimi-K2-Instruct

- Commercial license: Mistral-Large, GLM-4.5-Air

Technical Challenges with Open-Source LLMs

According to BentoML's guide on function calling, open-source LLMs can sometimes deviate from instructions and produce outputs that are not properly formatted or contain unnecessary information. Several tools and techniques have been developed to address this challenge.

Common issues:

- Hallucinated parameters: Model invents function arguments that don't exist

- Format violations: Returns plain text instead of JSON, or malformed JSON

- Unnecessary verbosity: Adds conversational text before/after the function call

- Tool selection errors: Calls the wrong function for the task

Solutions:

- Outlines: Constrains generation to valid JSON schemas

- Instructor: Wraps models with Pydantic validation

- Jsonformer: Enforces JSON structure at the token level

- Grammar-based sampling: Uses formal grammars to prevent format violations

For production systems, always validate function calls before execution. Treat LLM output as untrusted, even with structured generation tools.
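As an illustration of validating before execution, here is a minimal standard-library sketch that addresses the four issues listed above: it strips surrounding chatter, rejects unknown tools, rejects hallucinated or missing arguments, and checks types. The `search_files` tool, the `SCHEMAS` table, and the `{"name": ..., "arguments": ...}` shape are assumptions made for this example; libraries such as Instructor or Outlines offer more complete approaches.

```python
import json
import re

# Hypothetical tool schema: tool name -> {argument name: required Python type}
SCHEMAS = {
    "search_files": {"query": str, "limit": int},
}


def extract_json(text: str) -> dict:
    """Pull a {...} object out of a reply that may contain extra conversational text."""
    match = re.search(r"\{.*\}", text, re.DOTALL)
    if match is None:
        raise ValueError("no JSON object found in model output")
    return json.loads(match.group(0))  # JSONDecodeError is a ValueError subclass


def validate_call(text: str) -> tuple[str, dict]:
    """Return (tool_name, arguments) if the call is safe to dispatch, else raise ValueError."""
    call = extract_json(text)
    name = call.get("name")
    if not isinstance(name, str) or name not in SCHEMAS:
        raise ValueError(f"unknown tool: {name!r}")
    args = call.get("arguments", {})
    if not isinstance(args, dict):
        raise ValueError("arguments must be a JSON object")
    schema = SCHEMAS[name]
    unknown = set(args) - set(schema)
    if unknown:
        raise ValueError(f"hallucinated arguments: {sorted(unknown)}")
    missing = set(schema) - set(args)
    if missing:
        raise ValueError(f"missing arguments: {sorted(missing)}")
    for key, expected in schema.items():
        value = args[key]
        # bool is a subclass of int in Python, so reject it explicitly for non-bool fields
        is_stray_bool = isinstance(value, bool) and expected is not bool
        if is_stray_bool or not isinstance(value, expected):
            raise ValueError(f"argument {key!r} must be {expected.__name__}")
    return name, args


reply = 'Sure, here is the call: {"name": "search_files", "arguments": {"query": "report", "limit": 5}} Let me know!'
print(validate_call(reply))
```

Only a call that passes every check is returned to the dispatcher; anything else raises `ValueError`, which the agent loop can feed back to the model as an error message.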

Choosing the Right Model for Your Agent

The best model depends on your deployment constraints and use case:

Need absolute best tool-use performance? → Qwen3-Coder-30B-A3B-Instruct (coding) or DeepSeek-V3.2 (general reasoning)

Running on consumer hardware? → Phi-4-Mini (14B, runs on laptops) or Falcon 3 (3B-10B, runs on edge devices)

Need long context? → Kimi-K2-Instruct (256K) or GLM-4.5-Air (200K)

Want maximum community support? → Llama 3.1 (largest ecosystem, most tutorials, most fine-tunes)

Need unrestricted commercial license? → Qwen3, Falcon 3, or Phi-4-Mini (Apache 2.0 or MIT)

Building production systems? → Mistral-Large (vendor support) or Llama 3.1 70B (community-tested)

Prototyping locally? → Phi-4-Mini or Falcon 3 7B (fast iteration on consumer GPUs)
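The decision guide can also be expressed as a simple filter over constraints. This sketch copies the context-window and licensing figures from the comparison lists in this article; the `Model` class, the `CATALOG` list, and the `shortlist` function are hypothetical names introduced for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str
    context_k: int  # context window in thousands of tokens, as listed in this article
    license: str    # "unrestricted", "restricted", "research-only", or "commercial"


# Figures taken from the comparison lists in this article.
CATALOG = [
    Model("Kimi-K2-Instruct", 256, "research-only"),
    Model("GLM-4.5-Air", 200, "commercial"),
    Model("DeepSeek-V3.2", 128, "research-only"),
    Model("Llama 3.1", 128, "restricted"),
    Model("Mistral-Large", 128, "commercial"),
    Model("Dolphin 2.9", 128, "restricted"),
    Model("Qwen3-Coder-30B", 32, "unrestricted"),
    Model("Qwen3-30B-Thinking", 32, "unrestricted"),
    Model("Falcon 3", 32, "unrestricted"),
    Model("Phi-4-Mini", 16, "unrestricted"),
]


def shortlist(min_context_k: int, allowed_licenses: set[str]) -> list[str]:
    """Return model names that meet a minimum context window and a license constraint."""
    return [
        m.name
        for m in CATALOG
        if m.context_k >= min_context_k and m.license in allowed_licenses
    ]


print(shortlist(32, {"unrestricted"}))
print(shortlist(128, {"commercial", "restricted"}))
```

Hard constraints such as licensing and context length are a reasonable first filter; benchmark scores and hardware cost can then rank whatever survives.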

Where Fast.io Fits In

When you deploy open-source LLMs as autonomous agents, file storage becomes critical. Agents need to persist data between sessions, share artifacts with humans, and manage large datasets without expensive cloud APIs. Fast.io offers cloud storage built specifically for AI agents. Agents sign up for their own accounts, create workspaces, and manage files programmatically via API or the Model Context Protocol (MCP). The free agent tier includes 50GB storage and 5,000 monthly credits with no credit card required.

Why agents need dedicated storage:

- Persistence: Agents maintain state across sessions (not ephemeral like OpenAI Files API)

- Collaboration: Agents can build workspaces and hand them off to humans (ownership transfer)

- Multi-LLM support: Works with Claude, GPT-4, Gemini, Llama, and local models (not locked to one provider)

- Built-in RAG: Intelligence Mode auto-indexes workspace files for semantic search with citations

The MCP server provides 251 tools via Streamable HTTP and SSE transport, making file operations as simple as function calls. For OpenClaw users, install the skill via clawhub install dbalve/fast-io for zero-config natural language file management. Learn more at /storage-for-agents/ or explore the MCP integration guide.

Frequently Asked Questions

Which open-source LLM is best for tool use?

Qwen3-Coder-30B-A3B-Instruct scores highest on the Berkeley Function Calling Leaderboard for coding-specific tasks, while DeepSeek-V3.2 offers the best general reasoning and tool-use performance. For lightweight deployments, Phi-4-Mini (14B) offers surprisingly strong function calling on consumer hardware.

Can I run an autonomous agent on a local LLM?

Yes. Phi-4-Mini (14B parameters) and Falcon 3 (7B/10B) both support native function calling and run comfortably on consumer GPUs or Apple Silicon Macs. For more complex agents, Qwen3-Coder-30B and Llama 3.1 70B offer stronger tool use on single-GPU systems (RTX 4090 or A6000), provided the weights are quantized to fit in VRAM. A 70B model needs roughly 35GB at 4 bits, so it fits a 48GB A6000 but not a 24GB RTX 4090 without further compression or offloading. Local models trade some reasoning quality for privacy, cost savings, and no API rate limits.

How do open-source LLMs compare to GPT-4 for agents?

The gap has narrowed in 2026. Qwen-72B outperforms GPT-4 on several agentic tool-use benchmarks, and DeepSeek-V3.2 matches GPT-4 Turbo on complex reasoning tasks. Open models offer privacy (data stays local), cost savings (no API fees), and no rate limits. GPT-4 still leads on some function-calling tasks and has better documentation, but open models are catching up fast.

What is the Berkeley Function Calling Leaderboard?

The Berkeley Function Calling Leaderboard (BFCL) is a widely used benchmark for evaluating LLM function-calling capabilities. It tests serial and parallel function calls across diverse real-world scenarios using an Abstract Syntax Tree evaluation method. Version 4 introduces complete agentic evaluation, including memory, dynamic decision-making, and long-horizon reasoning. You can view live rankings at gorilla.cs.berkeley.edu/leaderboard.html.

Do I need special tools to use open-source LLMs for function calling?

Some models like Mistral-Large and Falcon 3 support function calling natively (no special prompting needed). Others require structured generation tools like Outlines, Instructor, or Jsonformer to enforce proper JSON formatting. For production systems, always validate function calls before execution, even with models that claim native support. Treat LLM output as untrusted to prevent security issues.

What context window do agents need?

It depends on the workflow. Simple tool-use agents (web scraping, file management) work fine with 32K tokens. Multi-step workflows (research, code generation) benefit from 128K to maintain conversation history and tool results. Long-horizon planning (200+ tool calls, analyzing entire codebases) requires a 256K window such as the one Kimi-K2-Instruct provides. Larger context windows cost more in VRAM and inference time, so pick the smallest that fits your use case.
