Top 10 AI Data Scraping Tools for RAG Pipelines

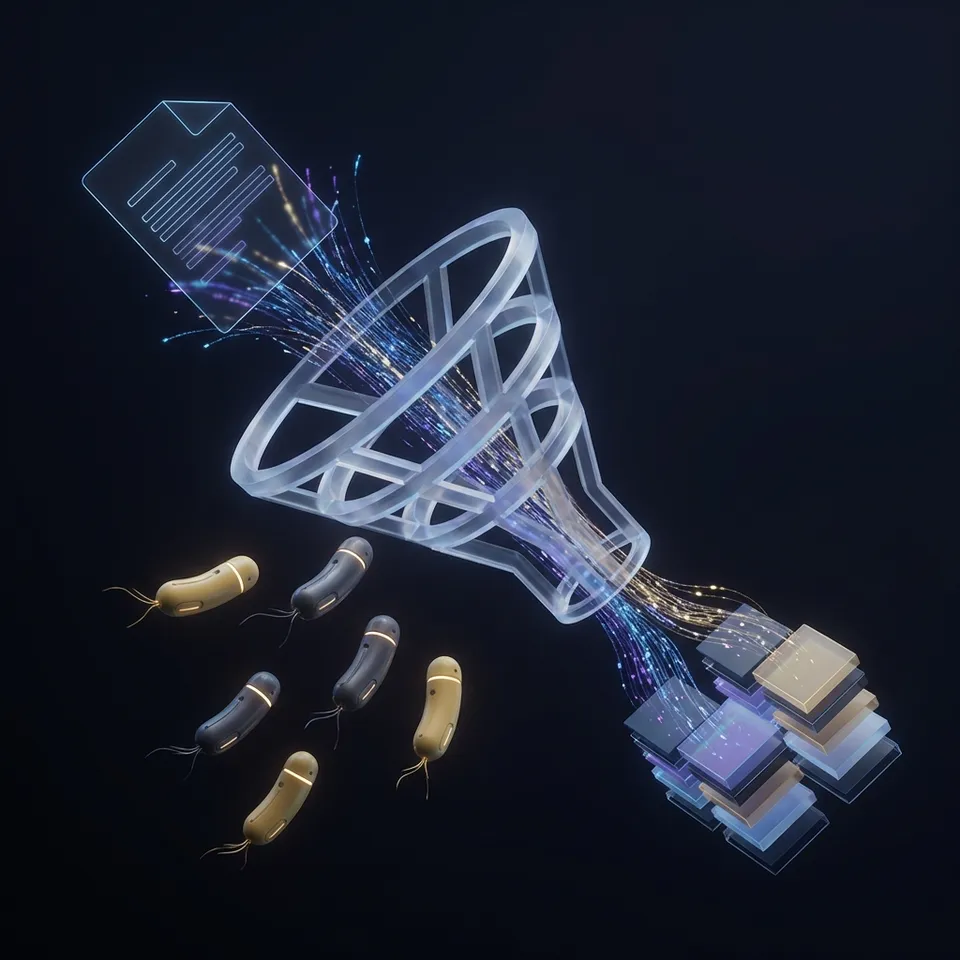

Building a reliable RAG pipeline requires more than just a basic web scraper. The best AI data scraping tools convert raw HTML into clean, semantic Markdown or JSON that LLMs can actually understand. This guide ranks the top 10 tools for scraping data for AI, comparing output quality, anti-bot handling, and integration ease.

Why Traditional Scrapers Fail at RAG

If you have ever tried feeding raw HTML into a Large Language Model (LLM), you know the problem. Token limits explode, and the model gets distracted by navigation menus, footers, and tracking scripts. Traditional tools like BeautifulSoup or Selenium work well for extracting specific fields, but they struggle to generate the clean context needed for Retrieval-Augmented Generation (RAG).

AI scraping tools convert web pages into clean Markdown or JSON for LLM ingestion. They handle dynamic JavaScript rendering, manage anti-bot challenges (CAPTCHAs), and strip away the "noise" of the web to leave only the signal. According to industry benchmarks, using clean Markdown instead of raw HTML can reduce RAG token usage by up to 60% while improving retrieval accuracy. The tools below are selected based on their ability to produce this high-quality, LLM-ready output.
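To make the "strip the noise, keep the signal" idea concrete, here is a minimal sketch using only Python's standard library. It is not how any of the tools below work internally; the set of tags treated as noise and the function name `html_to_markdown` are assumptions chosen for illustration, and it only handles headings, paragraphs, and list items.

```python
from html.parser import HTMLParser

# Tags whose entire contents are treated as noise (an assumption; tune per site).
NOISE_TAGS = {"script", "style", "nav", "footer", "aside", "noscript"}
HEADING_PREFIX = {"h1": "# ", "h2": "## ", "h3": "### "}
BLOCK_TAGS = {"p", "li", *HEADING_PREFIX}


class MarkdownExtractor(HTMLParser):
    """Collects headings, paragraphs, and list items; skips noise subtrees."""

    def __init__(self):
        super().__init__()
        self.blocks = []       # finished Markdown blocks
        self._buf = []         # text of the block being built
        self._prefix = ""      # Markdown prefix for the current block
        self._skip_depth = 0   # > 0 while inside a noise subtree

    def handle_starttag(self, tag, attrs):
        if tag in NOISE_TAGS:
            self._skip_depth += 1
        elif self._skip_depth == 0 and tag in BLOCK_TAGS:
            self._flush()
            self._prefix = HEADING_PREFIX.get(tag, "- " if tag == "li" else "")

    def handle_endtag(self, tag):
        if tag in NOISE_TAGS:
            self._skip_depth = max(0, self._skip_depth - 1)
        elif self._skip_depth == 0 and tag in BLOCK_TAGS:
            self._flush()

    def handle_data(self, data):
        if self._skip_depth == 0:
            self._buf.append(data)

    def _flush(self):
        # Collapse whitespace and emit the block only if it has text.
        text = " ".join("".join(self._buf).split())
        if text:
            self.blocks.append(self._prefix + text)
        self._buf, self._prefix = [], ""


def html_to_markdown(html: str) -> str:
    parser = MarkdownExtractor()
    parser.feed(html)
    parser.close()
    parser._flush()  # emit any trailing text
    return "\n\n".join(parser.blocks)
```

Calling `html_to_markdown(page_html)` on a page with a navigation bar, a tracking script, and a footer returns only the headings, paragraphs, and list items. Real pages need far more care (tables, links, code blocks, malformed markup), which is the gap the tools in this list aim to fill.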

Comparison of Top AI Scraping Tools

Here is a quick overview of how the top contenders stack up. We evaluated them based on output quality, ease of use, and specific features for RAG pipelines.

Give Your AI Agents Persistent Storage

Don't just scrape data; store it. Use Fast.io to give your agents 50GB of free, persistent, auto-indexed storage.

1. Firecrawl

Firecrawl has rapidly become the standard for RAG-focused scraping. Unlike general-purpose scrapers, it is built explicitly to turn websites into LLM-ready data. It handles the crawling of subpages automatically and returns clean Markdown that preserves heading hierarchies, which is critical for the "chunking" phase of RAG.
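To show why preserved heading hierarchies matter for chunking, here is a small sketch of heading-aware splitting in plain Python. It is independent of Firecrawl; the function name `chunk_by_heading` and the `path`/`text` keys are assumptions made for this example.

```python
import re

HEADING_RE = re.compile(r"^(#{1,6})\s+(.*)$")


def chunk_by_heading(markdown: str) -> list[dict]:
    """Split Markdown into chunks, one per heading, tagged with its heading path."""
    chunks = []
    path = []    # stack of (level, title) for the enclosing headings
    body = []    # lines collected under the current heading

    def flush():
        text = "\n".join(body).strip()
        if text:
            chunks.append({"path": " > ".join(t for _, t in path), "text": text})
        body.clear()

    for line in markdown.splitlines():
        match = HEADING_RE.match(line)
        if match:
            flush()
            level = len(match.group(1))
            # Drop headings at the same or deeper level before pushing this one.
            while path and path[-1][0] >= level:
                path.pop()
            path.append((level, match.group(2).strip()))
        else:
            body.append(line)
    flush()
    return chunks
```

Each chunk carries a breadcrumb such as `Guide > Install`, which can be stored as metadata next to the embedding so retrieved passages keep their context. This sketch does not special-case `#` lines inside fenced code blocks, so a production splitter would need to track fences as well.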

Key Strengths:

- Optimized Markdown: Strips clutter automatically, returning text that maps perfectly to vector embeddings.

- Crawl Mode: Can traverse an entire documentation site with a single API call.

- Smart Caching: Reduces costs by caching pages you have already scraped.

Limitations:

- Cost: Can get expensive for large-scale crawls compared to self-hosted options.

- Focus: Less flexible for extracting specific structured fields (e.g., e-commerce prices) compared to traditional scrapers.

Best For: Developers building chat-with-docs applications who need a "set it and forget it" solution.

Pricing

Free tier available; paid plans offer increased limits (see the published pricing).

2. Jina Reader

Jina AI Reader is one of the fastest ways to turn a URL into LLM context. It works by prepending `r.jina.ai/` to any URL. This simplicity makes it a favorite for lightweight agents or quick queries where you don't need a full crawl.
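The URL-prefix approach can be sketched in a few lines of Python. This assumes the prefix is used in its full `https://r.jina.ai/` form and that the target is passed as a complete URL; the helper names and the `User-Agent` string are hypothetical, and the fetch function makes a real network request subject to the rate limits mentioned below.

```python
from urllib.request import Request, urlopen

# Assumed full form of the prefix described in the text.
READER_PREFIX = "https://r.jina.ai/"


def reader_url(target_url: str) -> str:
    """Prepend the Reader prefix to a full target URL."""
    return READER_PREFIX + target_url


def fetch_as_markdown(target_url: str, timeout: float = 30.0) -> str:
    """Fetch the Reader's text rendering of a page (performs a network request)."""
    # The User-Agent value is a placeholder for this example.
    request = Request(reader_url(target_url), headers={"User-Agent": "rag-demo/0.1"})
    with urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")
```

For example, `reader_url("https://example.com/docs")` yields `https://r.jina.ai/https://example.com/docs`, which can also be opened directly in a browser.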

Key Strengths:

- Zero Setup: No API key required for basic usage; just modify the URL.

- Standard Grounding: Widely used by LLMs to "read" the web in real-time.

- Search Integration: Can be combined with Jina's search API to find and read pages dynamically.

Limitations:

- Rate Limits: The free tier is generous but has strict rate limits.

- Depth: Primarily a "reader" for single pages, not a crawler for entire sites.

Best For: Real-time web browsing agents and quick context fetching.

3. Fast.io

While not a scraper itself, Fast.io is the missing piece in the scraping pipeline: persistent storage. Most scrapers are ephemeral; they grab data and vanish. Fast.io provides a file system for your AI agents to store, organize, and retain that scraped data. More importantly, its "Intelligence Mode" automatically indexes stored files (Markdown, PDF, JSON) for RAG without needing a separate vector database.

Key Strengths:

- Persistent Memory: Agents can save scraped content to a real file system (50GB free).

- Built-in RAG: Files are auto-indexed. You can query your scraped library via the API immediately.

- MCP Support: Connects directly to Claude or other LLMs via the Model Context Protocol (MCP).

Limitations:

- Not a Scraper: You need to pair it with a tool like Firecrawl or Jina to get the data first.

- Focus: Designed for storage and retrieval, not the act of crawling.

Best For: Storing scraped datasets and giving agents long-term memory.

4. Crawl4AI

For those who prefer open-source and self-hosting, Crawl4AI is a powerful option. It is a lightweight, high-performance crawler that you run on your own infrastructure. It is built for speed, using asynchronous operations to scrape many pages concurrently.
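The benefit of asynchronous scraping can be illustrated with plain `asyncio`. This is a generic sketch of bounded concurrent fetching, not Crawl4AI's API; `crawl_all`, `fake_fetch`, and the `max_concurrency` parameter are names invented for this example, and the fetcher is a stand-in you would replace with a real one.

```python
import asyncio
from typing import Awaitable, Callable

Fetcher = Callable[[str], Awaitable[str]]


async def crawl_all(urls: list[str], fetch: Fetcher, max_concurrency: int = 5) -> dict[str, str]:
    """Fetch every URL concurrently, never running more than max_concurrency at once."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def bounded_fetch(url: str) -> tuple[str, str]:
        async with semaphore:
            return url, await fetch(url)

    pairs = await asyncio.gather(*(bounded_fetch(u) for u in urls))
    return dict(pairs)


async def fake_fetch(url: str) -> str:
    """Stand-in fetcher that simulates network latency."""
    await asyncio.sleep(0.01)
    return f"# Content of {url}"
```

Running `asyncio.run(crawl_all(urls, fake_fetch, max_concurrency=3))` returns a mapping from each URL to its content. The semaphore is what keeps a self-hosted crawler from overwhelming either the target site or your own proxies.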

Key Strengths:

- Speed: Highly optimized for performance.

- Cost Control: Free to use (you pay for your own compute/proxies).

- Control: Full access to the configuration and customization of the crawling logic.

Limitations:

- Maintenance: You are responsible for managing the infrastructure and proxies.

- Setup: Requires more technical setup than a managed API.

Best For: Engineering teams who want full control over their scraping infrastructure.

5. Apify

Apify is the heavyweight champion of web scraping. It offers a massive marketplace of "Actors," which are pre-built serverless functions for almost any scraping task. For RAG, the "Website Content Crawler" actor is the go-to tool. It is scalable and handles the complexities of modern web scraping (like infinite scroll and dynamic loading) with ease.

Key Strengths:

- Ecosystem: Thousands of ready-made scrapers for specific sites (Instagram, Google Maps, etc.).

- Scalability: Built to handle enterprise-level scraping loads.

- Integrations: Native integrations with LangChain, Pinecone, and other vector databases.

Limitations:

- Complexity: The sheer number of options can be overwhelming for simple tasks.

- Pricing: Usage-based pricing can scale up quickly for heavy users.

Best For: Enterprise-grade scraping and scraping specific platforms (social media, maps).

6. ScrapeGraphAI

ScrapeGraphAI takes a unique approach by using LLMs to define the scraping logic. Instead of writing CSS selectors, you describe what you want in natural language, and the library builds a graph-based scraping pipeline. This makes it resilient to website layout changes.
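The idea of replacing selectors with a natural-language request can be sketched as a tiny node pipeline. This is a toy illustration and not ScrapeGraphAI's API: the pipeline is a linear chain (the simplest possible graph), and `run_pipeline`, `make_extract_node`, the state keys, and the `llm` callable are all hypothetical names. The model is injected as a plain function so the sketch runs without any provider.

```python
import json
from typing import Callable

State = dict
Node = Callable[[State], State]


def run_pipeline(nodes: list[Node], state: State) -> State:
    """Run nodes in order; each reads the shared state and returns an updated copy."""
    for node in nodes:
        state = node(state)
    return state


def make_extract_node(llm: Callable[[str], str]) -> Node:
    """Build a node that asks the model for JSON matching the user's request."""
    def extract(state: State) -> State:
        prompt = (
            f"Extract the following from the page as JSON: {state['request']}\n\n"
            f"Page content:\n{state['page_text']}"
        )
        # Assumes the model replies with valid JSON; real code should validate.
        return {**state, "result": json.loads(llm(prompt))}
    return extract
```

Because the extraction step sees page text and a description of the goal rather than a CSS path, a layout change that keeps the same content leaves the pipeline untouched. The trade-off is one model call per page, which is the cost and speed limitation noted below.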

Key Strengths:

- Resilience: Adapts to UI changes automatically because it understands the content, not just the DOM structure.

- Flexibility: Can extract complex, structured relationships that regex or selectors miss.

- Open Source: Python library available for free.

Limitations:

- Cost/Speed: Using LLMs for the extraction logic is slower and more expensive (token costs) than traditional parsing.

- Experimental: Strong technology but can be overkill for simple static sites.

Best For: Scraping websites that change frequently or have complex, non-standard layouts.

7. Bright Data

Bright Data is known for its proxy networks, but its "Scraping Browser" and "Web Scraper IDE" are powerful tools for AI data collection. Their main selling point is unblockability. If you are scraping targets that aggressively block bots, Bright Data provides the infrastructure to get through.

Key Strengths:

- Unblocking: Industry-leading proxy network and browser fingerprint management.

- Compliance: Strong focus on legal compliance and ethical scraping.

- Reliability: High availability guarantees for enterprise needs.

Limitations:

- Price: Premium pricing reflects the enterprise capabilities.

- Learning Curve: Professional tools require some learning to master.

Best For: Scraping difficult, highly protected websites at scale.

Consider how this fits into your broader workflow and what matters most for your team. The right choice depends on your specific requirements: file types, team size, security needs, and how you collaborate with external partners. Testing with a free account is the fastest way to know if a tool works for you.

8. Unstructured.io

The web isn't just HTML; it is full of PDFs, PowerPoints, and Word docs. Unstructured.io specializes in ingesting these non-HTML formats. For a RAG pipeline that needs to include whitepapers, annual reports, or slide decks found on the web, Unstructured is essential.

Key Strengths:

- Format Support: Handles PDF, PPTX, DOCX, and images with OCR.

- Cleaning: Removes headers, footers, and boilerplate from documents.

- Chunking: Smart splitting of documents for vector databases.

Limitations:

- Speed: OCR and complex document parsing take time.

- Setup: Can be run locally (Docker) or via API, but requires resources.

Best For: RAG pipelines that rely heavily on documents (PDFs) rather than just web pages.

9. LlamaParse

Created by the team behind LlamaIndex, LlamaParse solves one specific, painful problem: tables in PDFs. Traditional parsers mangle tables, destroying the context. LlamaParse uses a vision-based approach to understand document layout, ensuring that charts and tables are preserved in a format LLMs can understand.

Key Strengths:

- Complex Layouts: Excellent at handling multi-column PDFs and embedded tables.

- Integration: Native integration with LlamaIndex pipelines.

- Accuracy: High-fidelity conversion for financial or technical documents.

Limitations:

- Specificity: Primarily for documents, not general web crawling.

- Cost: Paid service (though with a generous free tier).

Best For: Financial and technical RAG applications involving complex PDF data.

10. LangChain Document Loaders

While not a standalone "tool" in the same sense, LangChain's library of document loaders is the glue that holds many RAG pipelines together. It provides wrappers for almost all the tools mentioned above (Firecrawl, Apify, Unstructured), allowing developers to switch between scraping backends easily.
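The value of a loader abstraction can be shown without any framework. The sketch below is plain Python, not LangChain's API: `Document`, `Loader`, `StaticLoader`, and `ingest` are hypothetical names that mirror the general shape of the pattern, with a canned backend standing in for a real scraper.

```python
from dataclasses import dataclass, field
from typing import Protocol


@dataclass
class Document:
    text: str
    metadata: dict = field(default_factory=dict)


class Loader(Protocol):
    def load(self, url: str) -> list[Document]: ...


class StaticLoader:
    """Hypothetical backend that serves canned pages; stands in for a real scraper."""

    def __init__(self, pages: dict[str, str]):
        self.pages = pages

    def load(self, url: str) -> list[Document]:
        return [Document(self.pages[url], {"source": url, "backend": "static"})]


def ingest(urls: list[str], loader: Loader) -> list[Document]:
    """Application code depends only on the Loader interface, not on a backend."""
    docs = []
    for url in urls:
        docs.extend(loader.load(url))
    return docs
```

Swapping providers then means writing one new class with a `load` method and passing it to `ingest`; the chunking, embedding, and retrieval code downstream does not change.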

Key Strengths:

- Abstraction: Switch scraping providers without rewriting your application logic.

- Post-processing: Built-in utilities for splitting, cleaning, and metadata tagging.

- Community: The standard for Python/JS AI development.

Limitations:

- Dependency: It is a framework, not the engine itself (you still need the underlying API keys).

Best For: Developers who want flexibility to change scraping providers later.

How to Choose the Right Tool

Selecting the right scraper depends on your specific RAG requirements.

- For pure "Chat with Website" apps: Use Firecrawl or Jina Reader. They give you the cleanest Markdown with the least effort.

- For archiving and long-term agent memory: Pair any scraper with Fast.io. Scrapers get the data; Fast.io remembers it and keeps it searchable.

- For complex/protected sites: Use Bright Data or Apify to handle proxies and browser fingerprinting.

- For PDFs and Docs: Unstructured or LlamaParse are essential for preserving document structure.

Start with the end in mind: what does your LLM need to see? If it is clean text, use Markdown output. If it is precise data points, look for structured JSON capability.

Frequently Asked Questions

What is the best output format for RAG scraping?

Markdown is the best format for RAG. It preserves the semantic structure of the content (headers, lists, emphasis), which helps the LLM understand the hierarchy and importance of information. Raw HTML, by contrast, is full of noise.

How does AI scraping differ from traditional web scraping?

Traditional scraping relies on rigid selectors (CSS/XPath) to extract specific data points. AI scraping focuses on capturing the full 'meaning' of a page, often using LLMs or advanced heuristics to clean content, handle dynamic JavaScript, and adapt to layout changes automatically.

Can I scrape websites for my custom GPT?

Yes, you can use tools like Firecrawl or Jina Reader to convert website content into files (Markdown/txt/PDF) and then upload them to your custom GPT's knowledge base. Alternatively, you can use Fast.io to host the files and connect them via an API action.

Is web scraping for LLMs legal?

Web scraping legality is complex and depends on your jurisdiction and the site's Terms of Service. Generally, scraping publicly available data for analysis is often permitted, but you should respect `robots.txt` files and avoid scraping behind login walls without permission. Always consult legal counsel for your specific use case.
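Respecting `robots.txt` can be automated with Python's standard library. The sketch below uses `urllib.robotparser` to check a URL against rules supplied as text; the wrapper name `is_allowed` and the `rag-bot` user agent are assumptions for this example. It covers only the `robots.txt` check, not Terms of Service or other legal questions.

```python
from urllib.robotparser import RobotFileParser


def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt text permits user_agent to fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)


# Example rules: everything is allowed except paths under /private/.
rules = "User-agent: *\nDisallow: /private/\n"
print(is_allowed(rules, "rag-bot", "https://example.com/docs/intro"))
print(is_allowed(rules, "rag-bot", "https://example.com/private/report"))
```

In a live crawler you would typically call `set_url()` with the site's `robots.txt` address and then `read()` to download the rules, instead of passing the text in directly.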
