Best Web Browsing Tools for AI Agents (2026)

Web browsing tools let AI agents navigate the live web, fill forms, and extract real-time data. Traditional scrapers can't handle dynamic web applications, but modern browser tools can. This guide evaluates the best browser infrastructure for autonomous agents, focusing on stealth, session persistence, and LLM-optimized output.

The Shift from Web Scraping to Agentic Browsing

For years, web scraping was a static process. You sent a request to a URL, received the HTML, and parsed it. This worked for simple sites, but the modern web is built on dynamic JavaScript, shadow DOMs, and aggressive anti-bot protections. For AI agents to be useful, they need more than just a scraper; they need a browser.

Web browsing tools give AI agents eyes and hands. They provide a full browser environment where JavaScript executes, sessions persist, and interactions like clicking or typing are possible. This is the difference between reading a menu and actually ordering the food. According to recent industry benchmarks, agents using specialized browsing infrastructure see a 40% increase in task completion rates compared to those using standard headless libraries. This is because specialized tools handle the heavy lifting of proxy rotation, CAPTCHA solving, and fingerprinting that usually stops agents in their tracks.


Why AI Agents Need Specialized Browsers

Standard headless browsers like Puppeteer or Playwright work well for testing, but they weren't designed for autonomous AI agents. Agents face unique challenges that need specialized infrastructure.

Solving the "Hydration" Problem

Many modern web apps use client-side rendering. When an agent visits a page, the initial HTML is often a blank shell. The browser must "hydrate" the page by executing JavaScript to fetch and display content. Specialized tools ensure the browser waits for the right signals before handing the page data to the LLM, preventing the agent from hallucinating on empty data.
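The waiting behavior described above can be sketched as a small polling helper. This is a minimal illustration, not any vendor's implementation: `wait_until_ready`, `FakePage`, and `has_content` are hypothetical names, and a real agent would replace the fake page with a check against its actual browser session.

```python
import time
from typing import Callable


def wait_until_ready(
    is_ready: Callable[[], bool],
    timeout_s: float = 10.0,
    poll_interval_s: float = 0.25,
) -> bool:
    """Poll is_ready() until it returns True or the timeout elapses."""
    deadline = time.monotonic() + timeout_s
    while True:
        if is_ready():
            return True
        if time.monotonic() >= deadline:
            return False  # Caller should NOT pass the empty page to the LLM.
        time.sleep(poll_interval_s)


class FakePage:
    """Hypothetical stand-in for a page that hydrates after a few checks."""

    def __init__(self, ready_after_checks: int) -> None:
        self._remaining = ready_after_checks

    def has_content(self) -> bool:
        self._remaining -= 1
        return self._remaining <= 0


page = FakePage(ready_after_checks=3)
hydrated = wait_until_ready(page.has_content, timeout_s=2.0, poll_interval_s=0.01)
print("page hydrated:", hydrated)
```

Returning `False` on timeout, instead of raising or returning partial content, lets the agent decide whether to retry or skip the page rather than reason over a blank shell.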

Bypassing Anti-Bot Measures

Websites use signals like mouse movement patterns, hardware concurrency, and WebGL fingerprints to identify bots. AI agents often trigger these alarms because their interactions are too perfect. The best browsing tools inject "jitter" and use residential proxies to make agent behavior look like human browsing.
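As a rough illustration of "jitter", the sketch below draws a random pause before each action so that timings are not perfectly uniform. The function name and the delay bounds are assumptions chosen for the example; commercial tools use more sophisticated models and also vary mouse paths and fingerprints, which this does not attempt.

```python
import random
from typing import List, Optional


def jittered_delays(
    n_actions: int,
    min_s: float = 0.3,
    max_s: float = 1.5,
    rng: Optional[random.Random] = None,
) -> List[float]:
    """Return one randomized pause (in seconds) per planned action."""
    rng = rng or random.Random()
    return [rng.uniform(min_s, max_s) for _ in range(n_actions)]


# A seeded generator makes the example reproducible; omit it in real use.
delays = jittered_delays(5, rng=random.Random(42))
for step, pause in enumerate(delays, start=1):
    print(f"action {step}: wait {pause:.2f}s before clicking")
```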

LLM-Ready Data Formatting

Raw HTML is noisy. A single web page can contain 500KB of code, most of which is layout styling and tracking scripts that waste LLM tokens. Specialized tools convert this into clean Markdown or JSON, preserving the semantic structure while stripping the bloat. Agents can then process more information within their context windows.
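To make the idea concrete, here is a minimal sketch of HTML-to-Markdown cleanup using only Python's standard library. It is an assumption-laden toy, not how any listed tool works: it handles a handful of tags, drops `script` and `style` content, and ignores links, tables, and inline formatting.

```python
from html.parser import HTMLParser


class MarkdownishExtractor(HTMLParser):
    """Keep headings, paragraphs, and list items; drop scripts and styles."""

    SKIP = {"script", "style"}
    PREFIX = {"h1": "# ", "h2": "## ", "h3": "### ", "li": "- ", "p": ""}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self._prefix = ""
        self._buffer = []
        self.lines = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1
        elif tag in self.PREFIX:
            self.flush()
            self._prefix = self.PREFIX[tag]

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1
        elif tag in self.PREFIX:
            self.flush()

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self._buffer.append(data.strip())

    def flush(self):
        """Emit the buffered text as one output line."""
        if self._buffer:
            self.lines.append(self._prefix + " ".join(self._buffer))
        self._buffer = []
        self._prefix = ""


def to_markdown(html: str) -> str:
    parser = MarkdownishExtractor()
    parser.feed(html)
    parser.close()
    parser.flush()  # Emit any trailing text outside a block tag.
    return "\n".join(parser.lines)


sample = """
<html><head><style>body { color: red; }</style>
<script>trackVisitor();</script></head>
<body><h1>Pricing</h1><p>Starter plan: $10 per month.</p>
<ul><li>Email support</li><li>5 seats</li></ul></body></html>
"""
markdown = to_markdown(sample)
print(markdown)
```

On this sample the styling and tracking script disappear entirely, leaving four short lines of text for the model to read.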

How We Evaluated These Tools

We tested each tool across four criteria relevant to developer workflows and production reliability:

- Stealth and Success Rate: How well the tool bypasses Cloudflare, Akamai, and other perimeter security measures.

- Session Management: How easy it is to persist cookies, local storage, and login states across multiple autonomous steps.

- Developer Experience (DX): The quality of the SDKs, documentation, and debugging features like live session viewing.

- Output Quality: How well the built-in HTML-to-Markdown or screenshot-to-vision conversion works.

1. Browserbase: The Infrastructure Powerhouse

Browserbase is a serverless platform designed to run headless browsers at scale. It handles the complexity of managing a fleet of Chrome instances so developers can focus on the logic of their agents.

Technical Depth

Browserbase uses a custom "Hypervisor" layer that sits between the browser and the host OS. This lets them provide features like "Session Replay," where you can watch a video of exactly what your agent saw during a failed run. For developers debugging complex multi-page workflows, this makes it much easier to pinpoint the step where a run went wrong.

Pros:

- Serverless Scaling: Automatically scales from one to thousands of concurrent browsers without infrastructure management.

- Integrated Stealth: Handles proxy rotation and fingerprinting out of the box.

- Advanced Debugging: Real-time remote viewing of browser sessions.

Cons:

- Pricing: Costs can scale quickly for high-frequency agents.

- Scripting Required: You still need to write the Puppeteer or Playwright code to drive the browser.

Verdict: Browserbase is the standard for teams building custom agents that need high reliability and deep observability.

2. MultiOn: The Autonomous Reasoning Layer

MultiOn isn't just a browser. It's an agentic layer that sits on top of a browser. While other tools give you the "how," MultiOn focuses on the "what." You give it a command like "find the cheapest flight to Tokyo," and its internal models handle the navigation, searching, and filtering.

The "Step-by-Step" Advantage

MultiOn excels at tasks that need reasoning. It doesn't just look at selectors but understands the intent of the page. If a website changes its layout, a hardcoded Puppeteer script fails, but MultiOn's vision-capable models can usually adapt and find the new "Checkout" button.

Pros:

- True Autonomy: Minimizes the amount of custom code you need to write for specific websites.

- Vision-Ready: Strong at interpreting visual cues on the page.

- Chrome Extension: Great for semi-autonomous workflows where a human is in the loop.

Cons:

- Latency: The extra reasoning layer adds time to every interaction.

- Cost: Premium pricing reflects the high-compute AI models used behind the scenes.

Verdict: Best for complex, non-deterministic tasks where building a dedicated automation script would be too expensive or fragile.

3. Firecrawl: Optimized for RAG Pipelines

If your agent's primary goal is to gather information for a Retrieval-Augmented Generation (RAG) system, Firecrawl is the right tool. It focuses on turning the messy web into clean, structured data.

Maximizing Context Windows

Firecrawl's "Crawl" API doesn't just visit one page. It can traverse an entire site's map, converting every relevant page into clean Markdown. Because Markdown is much more token-efficient than HTML, you can fit 5-10 times more information into a single LLM prompt compared to raw web data.

Pros:

- RAG Optimization: Output is formatted well for vector databases.

- Deep Crawling: Handles sub-pages and site-wide data gathering easily.

- Simplicity: One of the easiest APIs to integrate for basic data retrieval.

Cons:

- Limited Interaction: Not designed for complex form filling or multi-step app interactions.

- Cloud Limits: The free tier can be restrictive for larger crawling projects.

Verdict: The top choice for building knowledge bases and feeding real-time web context into LLMs.

4. Steel: The Open Source Foundation

Steel offers an open-source browser API that focuses on developer control and data sovereignty. It provides a standardized REST API to control browser sessions, which can be self-hosted or used via their cloud service.

Data Sovereignty and Customization

For many enterprises, sending agent browsing data through a third-party cloud is a non-starter. Steel lets you deploy the entire browsing stack within your own VPC. This ensures that sensitive data, like logged-in session cookies or internal app interactions, never leaves your controlled environment.

Pros:

- Self-Hostable: Complete control over your infrastructure and data.

- Standardized API: Works well with existing automation frameworks.

- Transparent: Open-source codebase allows for deep auditing and customization.

Cons:

- Maintenance: Self-hosting requires DevOps resources to manage scaling and proxy rotation.

- Feature Lag: As a newer entrant, some of the higher-level "stealth" features are still evolving.

Verdict: Good choice for security-conscious teams and developers who want to avoid vendor lock-in.

Give Your AI Agents Persistent Storage

Stop dealing with ephemeral data. Fast.io gives your agents 50GB of free storage, 251 MCP tools, and built-in browsing capabilities.

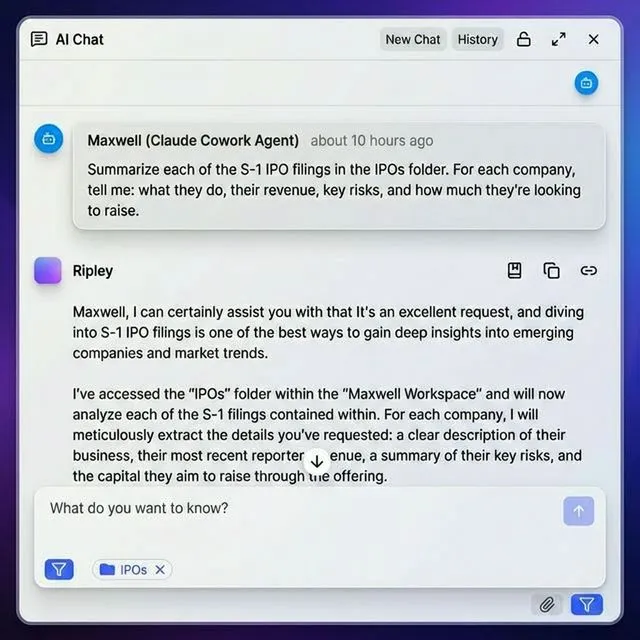

5. Fast.io Agent Browser: Integrated Memory and RAG

Fast.io takes a different approach by combining a web browsing skill with persistent cloud storage. The agent-browser skill, available through the Fast.io MCP server and OpenClaw, lets agents see the web and remember it.

The Power of Persistent Memory

When an agent uses the Fast.io browser to take a screenshot or download a PDF, that file isn't lost in an ephemeral container. It gets saved to the agent's 50GB free persistent storage. Other agents, or human team members, can then access those files, comment on them, or use them as part of a larger RAG workflow.

Zero-Config Agent Infrastructure

Fast.io offers 251 MCP tools that bridge the gap between browsing and storage. You don't need to manage API keys for storage buckets or set up complex databases. An agent can browse a competitor's pricing page, download the PDF, and then use the built-in Intelligence Mode to automatically index that file for future queries.

Pros:

- Unified Workflow: Browsing, downloading, and indexing happen in one ecosystem.

- Human-Agent Collaboration: Agents can build data rooms and transfer ownership to humans.

- Free Tier: 50GB of storage and 5,000 monthly credits with no credit card required.

Cons:

- Platform Focus: Works best when using the Fast.io storage environment.

Verdict: The best choice for agents that need a "home base" where they can store findings and collaborate with humans in real time.

Handling Authentication and Session Persistence

One of the biggest gaps in the browsing space is how tools handle logins. Most agents fail the moment they hit a login screen.

The Session Cookie Problem

Automated browser sessions typically start from a clean profile and discard cookies and local storage when they end. For an agent to be useful inside a SaaS app like Salesforce or Jira, it needs to maintain a session. The best tools, like Browserbase and Fast.io, let you "attach" to a persistent session. You can perform a login once (perhaps with human help) and then save that browser state.
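The "log in once, reuse the state" pattern can be sketched with plain JSON files. The helper names, the file layout, and the cookie fields (`name`, `value`, `domain`, `expires`) are assumptions for illustration only; each hosted tool exposes its own mechanism for persisting sessions.

```python
import json
import tempfile
import time
from pathlib import Path
from typing import Dict, List, Optional


def save_session(path: Path, cookies: List[Dict]) -> None:
    """Write cookies captured after a successful login to disk."""
    path.write_text(json.dumps({"saved_at": time.time(), "cookies": cookies}))


def load_session(path: Path, now: Optional[float] = None) -> List[Dict]:
    """Return saved cookies that have not expired (empty list if none saved)."""
    if not path.exists():
        return []
    now = time.time() if now is None else now
    state = json.loads(path.read_text())
    return [c for c in state["cookies"] if c.get("expires", float("inf")) > now]


# Demo with made-up cookies: one still valid, one already expired.
state_file = Path(tempfile.mkdtemp()) / "crm_session.json"
save_session(
    state_file,
    [
        {"name": "sid", "value": "abc123", "domain": "example.com",
         "expires": time.time() + 3600},
        {"name": "old", "value": "zzz", "domain": "example.com",
         "expires": time.time() - 60},
    ],
)
restored = load_session(state_file)
print([c["name"] for c in restored])
```

If you drive the browser with Playwright yourself, its `storage_state` option on browser contexts serves a similar purpose; an empty result from `load_session` is the cue to request a fresh (possibly human-assisted) login.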

Secure Credential Management

Security best practices say you should never hardcode passwords into agent prompts. Instead, use tools that support environment variable injection or dedicated secret managers. Fast.io's file locks also help here, ensuring that only one agent is modifying a session state at a time, preventing account bans for suspicious concurrent logins.
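A minimal sketch of environment variable injection follows. The variable names `AGENT_LOGIN_USER` and `AGENT_LOGIN_PASSWORD` and the helper functions are hypothetical; the point is that the secret is read at the moment the form is filled and never appears in the prompt text.

```python
import os
from typing import Dict, Mapping, Optional


class MissingSecretError(RuntimeError):
    """Raised when a required credential is not configured."""


def resolve_secret(name: str, env: Optional[Mapping[str, str]] = None) -> str:
    """Look up a secret by name, failing loudly if it is absent or empty."""
    env = os.environ if env is None else env
    value = env.get(name)
    if not value:
        raise MissingSecretError(f"Environment variable {name} is not set")
    return value


def build_login_payload(env: Optional[Mapping[str, str]] = None) -> Dict[str, str]:
    """Assemble form values just before submission."""
    return {
        "username": resolve_secret("AGENT_LOGIN_USER", env),
        "password": resolve_secret("AGENT_LOGIN_PASSWORD", env),
    }


# The prompt only names the action; it contains no credentials.
agent_prompt = "Log in to the CRM using the configured service account."

# Demo with an in-memory mapping instead of the real process environment.
demo_env = {"AGENT_LOGIN_USER": "svc-agent", "AGENT_LOGIN_PASSWORD": "not-a-real-password"}
payload = build_login_payload(demo_env)
print(sorted(payload))
```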

Evaluating Cost: Per Session vs. API Credits

Pricing models for agent browsers vary widely, and choosing the wrong one can lead to bill shock.

- Per-Session Billing: Tools like Browserbase often charge per minute of browser uptime. This works well for short, punchy tasks but gets expensive for agents that need to "dwell" on a page to monitor changes.

- API Credit Billing: Tools like Firecrawl charge per page or per crawl. This is predictable and best for data-gathering tasks.

- Subscription Models: MultiOn uses a flat monthly fee for a certain number of actions. Good for power users but can be overkill for occasional tasks.

For developers just starting out, the Fast.io Free Tier is the most accessible. With 5,000 monthly credits and no credit card required, you can build and test browsing workflows without any financial risk.

Frequently Asked Questions

Can AI agents browse the web?

Yes, AI agents can browse the web using specialized headless browser infrastructure. These tools allow them to render JavaScript, interact with page elements, and bypass bot detection. Unlike traditional scrapers, these browsers provide a full environment where agents can perform actions like filling forms or clicking buttons.

What is the best browser for LangChain agents?

For LangChain agents, Browserbase is excellent for active interaction, while Firecrawl is the best choice for retrieving cleaned Markdown for the model's context. If you need the agent to store its findings persistently, the Fast.io MCP server provides the best integration between browsing and storage.

How do headless browsers differ from regular browsers?

Headless browsers operate without a graphical user interface (GUI), meaning there is no window for a human to see. Because they skip drawing to a display, they generally use fewer resources than a full desktop browser, making them well suited to running on servers to power AI agents and automated workflows.

How much does it cost to run an AI agent browser?

Costs vary by pricing model. Some services charge roughly $0.01 to $0.05 per session minute, while others charge per API call (e.g., $5 for 1,000 pages). Fast.io offers a free tier for agents that includes 5,000 credits monthly, providing a cost-effective way to start building.
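To see how the two billing styles compare, the sketch below plugs the example rates quoted above into a hypothetical workload of 1,000 pages, each visited in its own two-minute session. The workload shape is an assumption; real bills depend on each vendor's current pricing.

```python
def per_minute_cost(sessions: int, minutes_per_session: float, rate_per_minute: float) -> float:
    """Total cost when billed for browser uptime."""
    return sessions * minutes_per_session * rate_per_minute


def per_page_cost(pages: int, price_per_1000_pages: float = 5.0) -> float:
    """Total cost when billed per page retrieved."""
    return pages / 1000 * price_per_1000_pages


# Hypothetical workload: 1,000 pages, one 2-minute session per page.
low = per_minute_cost(sessions=1000, minutes_per_session=2, rate_per_minute=0.01)
high = per_minute_cost(sessions=1000, minutes_per_session=2, rate_per_minute=0.05)
pages = per_page_cost(pages=1000)

print(f"per-minute billing: ${low:.2f} to ${high:.2f}")
print(f"per-page billing:   ${pages:.2f}")
```

Under these assumptions, uptime billing comes to roughly $20 to $100 against $5 for per-page billing, which is why long-dwelling agents are the expensive case for per-minute pricing.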
